Context-Aware Correlation Filter Tracking

Matthias Mueller, Neil Smith, Bernard Ghanem

King Abdullah University of Science and Technology (KAUST), Thuwal, Saudi Arabia

{matthias.mueller.2, neil.smith, bernard.ghanem}@kaust.edu.sa

Abstract

Correlation filter (CF) based trackers have recently

gained a lot of popularity due to their impressive per-

formance on benchmark datasets, while maintaining high

frame rates. A significant amount of recent research focuses

on the incorporation of stronger features for a richer repre-

sentation of the tracking target. However, this only helps

to discriminate the target from background within a small

neighborhood. In this paper, we present a framework that

allows the explicit incorporation of global context within

CF trackers. We reformulate the original optimization prob-

lem and provide a closed form solution for single and multi-

dimensional features in the primal and dual domain. Ex-

tensive experiments demonstrate that this framework signif-

icantly improves the performance of many CF trackers with

only a modest impact on frame rate.

1. Introduction

Object tracking remains a core problem in computer

vision with numerous applications, such as surveillance,

human-machine interaction, and robotics. Large new

datasets and benchmarks such as OTB-50 [26], OTB-100

[27], TC-128 [19], ALOV300++ [24] and UAV123 [23],

as well as tracking challenges such as the visual object

tracking (VOT) challenge and multi-object tracking (MOT)

challenge have sparked the interest of many researchers and

helped advance the field significantly. Despite substantial

progress in recent years, visual object tracking remains a

challenging problem in computer vision.

In this paper, we address the problem of single-object

tracking, which is commonly approached as a tracking-by-

detection problem. Currently, most research focuses on

model-free generic object trackers, where no prior assump-

tions regarding the object appearance are made. The generic

nature of this problem makes it challenging, since there are

very few constraints on object appearance, and the object

can undergo a variety of unpredictable transformations in

consecutive frames (e.g. aspect ratio change, illumination

variation, in/out-of-plane rotation, and occlusion).

The tracking problem can be divided into two main chal-

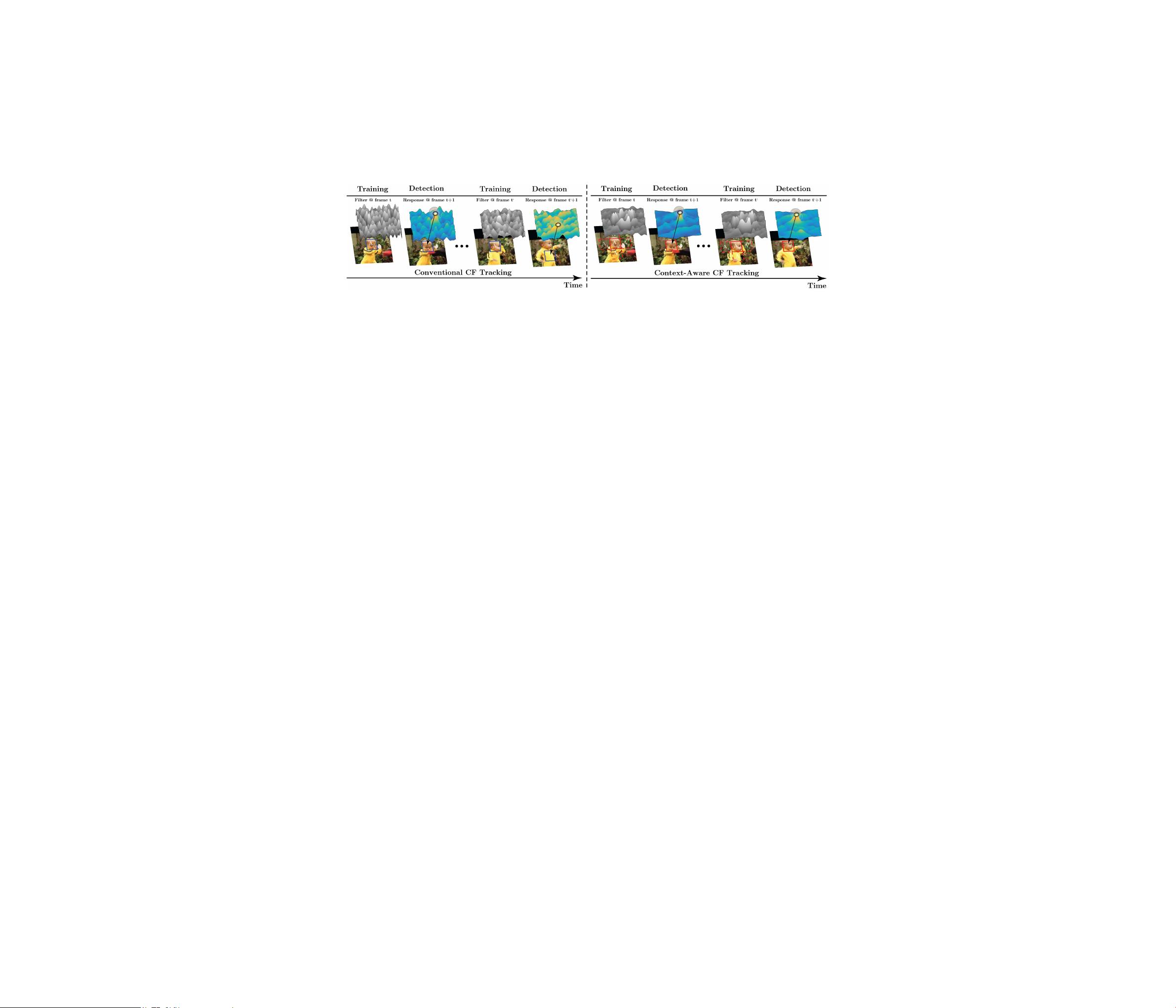

Figure 1: Tracking results of our context-aware adaptation

of the baseline SAMF tracker, denoted as SAMF

CA

, and a

comparison with recent state-of-the-art tracking algorithms

on the Box and Jump sequences from OTB-100.

lenges, object representation and sampling for detection.

Recently, most successful single-object tracking algorithms

use a discriminative object representation with either strong

hand-crafted features, such as HOG and Colornames, or

learned ones. Recent work has integrated deep features [21]

trained on a large dataset, such as ImageNet, to represent the

tracked object. Sampling, on the other hand, is a trade-off between computation time and precise scanning of the region

of interest for the target.

Lately, CF trackers have sparked a lot of interest due to their high accuracy while running at high frame rates [4, 6, 10, 11, 14]. In general, CF trackers learn a correlation filter online to localize the object in consecutive frames.

The learned filter is applied to the region of interest in the

next frame and the location of the maximum response cor-

responds to the object location. The filter is then updated

by using the new object location. The major reasons behind the success of this tracking paradigm are the approximate dense sampling performed by circularly shifting the

training samples and the computational efficiency of learn-

ing the correlation filter in the Fourier domain. Provided

that the background is homogeneous and the object does

not move much, these circular shifts are equivalent to actual

translations in the image and this framework works well.
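The learn, detect, and update loop described above can be illustrated with a minimal sketch. It assumes a generic single-channel ridge-regression filter in the style of MOSSE, not the context-aware formulation proposed in this paper. The function names, the Gaussian label width `sigma`, the regularizer `lam`, and the update `rate` are all illustrative assumptions. Target motion is simulated with a circular shift, which is exactly the idealized case in which circular shifts equal true translations.

```python
import numpy as np


def gaussian_label(shape, sigma=2.0):
    """Desired response: a Gaussian peak at index (0, 0), wrapping circularly."""
    h, w = shape
    ys = np.minimum(np.arange(h), h - np.arange(h))  # circular distance to row 0
    xs = np.minimum(np.arange(w), w - np.arange(w))  # circular distance to col 0
    return np.exp(-(ys[:, None] ** 2 + xs[None, :] ** 2) / (2.0 * sigma ** 2))


def train_filter(patch, label, lam=1e-2):
    """Closed-form ridge regression over all circular shifts of `patch`."""
    X = np.fft.fft2(patch)
    Y = np.fft.fft2(label)
    # Element-wise division replaces the matrix inverse of the spatial solution.
    return (np.conj(X) * Y) / (np.conj(X) * X + lam)


def detect(filter_hat, patch):
    """Apply the filter to a new patch and return the (dy, dx) of the peak."""
    response = np.real(np.fft.ifft2(np.fft.fft2(patch) * filter_hat))
    dy, dx = np.unravel_index(np.argmax(response), response.shape)
    h, w = response.shape
    # Map wrapped peak indices to signed displacements.
    if dy > h // 2:
        dy -= h
    if dx > w // 2:
        dx -= w
    return int(dy), int(dx)


def update_filter(old_hat, new_hat, rate=0.02):
    """Linear interpolation, a common online update; `rate` is an assumed value."""
    return (1.0 - rate) * old_hat + rate * new_hat


rng = np.random.default_rng(0)
frame0 = rng.standard_normal((32, 32))  # stand-in for a feature patch
filt = train_filter(frame0, gaussian_label(frame0.shape))

# Simulate target motion: 3 rows down and 5 columns left, with wrap-around.
frame1 = np.roll(frame0, shift=(3, -5), axis=(0, 1))
shift = detect(filt, frame1)
print(shift)  # (3, -5)

# Retrain at the new location and blend with the previous filter.
filt = update_filter(filt, train_filter(frame1, gaussian_label(frame1.shape)))
```

In this sketch the only costly operations are FFTs and element-wise products, which is where the efficiency of the Fourier-domain formulation comes from. On real video the patch boundary does not wrap around, so the shifted samples only approximate true translations.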

However, since these assumptions are not always valid,

CF trackers have several drawbacks. One major drawback is

2017 IEEE Conference on Computer Vision and Pattern Recognition

1063-6919/17 $31.00 © 2017 IEEE

DOI 10.1109/CVPR.2017.152
