Matrix Theory in Signal Processing (English)

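220 pages

Matrix Computations for Signal Processing, English edition. For anyone who wants to study signal processing algorithms in depth, some background in matrix theory is well worth having.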

EE731 Lecture Notes: Matrix Computations for Signal Processing

James P. Reilly ©

Department of Electrical and Computer Engineering

McMaster University

February 2, 2000

0 Preface

This collection of ten chapters of notes will give the reader an introduction to the fundamental principles of linear algebra for application in many disciplines of modern engineering and science, including signal processing, control theory, process control, applied statistics, robotics, etc. We assume the reader has a background equivalent to a freshman course in linear algebra, some introduction to probability and statistics, and a basic knowledge of the Fourier transform.

In the first chapter, some fundamental ideas required for the remaining portion of the course are established. First, we look at some fundamental ideas of linear algebra such as linear independence, subspaces, rank, nullspace, range, etc., and how these concepts are interrelated. The idea of autocorrelation, and the covariance matrix of a signal, are then discussed and interpreted.

In chapter 2, the most basic matrix decomposition, the so-called eigendecomposition, is presented. The focus of the presentation is to give an intuitive insight into what this decomposition accomplishes. We illustrate how the eigendecomposition can be applied through the Karhunen-Loève transform. In this way, the reader is made familiar with the important properties of this decomposition. The Karhunen-Loève transform is then generalized to the broader idea of transform coding.

In chapter 3, we develop the singular value decomposition (SVD), which is closely related to the eigendecomposition of a matrix. We develop the relationships between these two decompositions and explore various properties of the SVD.

Chapter 4, etc.

1 Fundamental Concepts

The purpose of this lecture is to review important fundamental concepts in linear algebra, as a foundation for the rest of the course. We first discuss the fundamental building blocks, such as an overview of matrix multiplication from a "big block" perspective, linear independence, subspaces and related ideas, rank, etc., upon which the rigour of linear algebra rests. We then discuss vector norms, and various interpretations of the matrix multiplication operation. We close the chapter with a discussion on determinants.

1.1 Notation

Throughout this course, we shall indicate that a matrix $\mathbf{A}$ is of dimension $m \times n$, with elements taken from the set of real numbers, by the notation $\mathbf{A} \in \mathbb{R}^{m \times n}$. This means that the matrix $\mathbf{A}$ belongs to the Cartesian product of the real numbers, taken $mn$ times, one for each element of $\mathbf{A}$. In a similar way, the notation $\mathbf{A} \in \mathbb{C}^{m \times n}$ means the matrix is of dimension $m \times n$, and the elements are taken from the set of complex numbers. By the matrix dimension "$m \times n$", we mean $\mathbf{A}$ consists of $m$ rows and $n$ columns.

Similarly, the notation $\mathbf{a} \in \mathbb{R}^{m}$ ($\mathbb{C}^{m}$) implies a vector of dimension $m$ whose elements are taken from the set of real (complex) numbers. By "dimension of a vector", we mean its length, i.e., that it consists of $m$ elements.

Also, we shall indicate that a scalar $a$ is from the set of real (complex) numbers by the notation $a \in \mathbb{R}$ ($\mathbb{C}$). Thus, an upper case bold character denotes a matrix, a lower case bold character denotes a vector, and a lower case non-bold character denotes a scalar.

By convention, a vector by default is taken to be a column vector. Further, for a matrix $\mathbf{A}$, we imply that its $i$th column is $\mathbf{a}_i$. We also imply that its $j$th row is $\mathbf{a}_j^T$, even though this notation may be ambiguous, since it may also be taken to mean the transpose of the $j$th column. The context of the discussion will help to resolve the ambiguity.

1.2 "Bigger-Block" Interpretations of Matrix Multiplication

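Let us define the matrix product $\mathbf{C}$ as

$$\underset{m \times n}{\mathbf{C}} = \underset{m \times k}{\mathbf{A}} \; \underset{k \times n}{\mathbf{B}} \tag{1}$$

The three interpretations of this operation now follow: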

1.2.1 Inner-Product Representation

If $\mathbf{a}$ and $\mathbf{b}$ are column vectors of the same length, then the scalar quantity $\mathbf{a}^T \mathbf{b}$ is referred to as the inner product of $\mathbf{a}$ and $\mathbf{b}$. If we define $\mathbf{a}_i^T \in \mathbb{R}^{k}$ as the $i$th row of $\mathbf{A}$ and $\mathbf{b}_j \in \mathbb{R}^{k}$ as the $j$th column of $\mathbf{B}$, then the element $c_{ij}$ of $\mathbf{C}$ is defined as the inner product $\mathbf{a}_i^T \mathbf{b}_j$. This is the conventional small-block representation of matrix multiplication.
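The inner-product view can be sketched in a few lines of plain Python. This is only an illustration, not code from the notes: the helper names `inner` and `matmul_inner` and the small test matrices are assumptions chosen for the example, with matrices stored as lists of rows.

```python
# Inner-product view of C = A B: each scalar c_ij is the inner product
# of row i of A with column j of B. Helper names are illustrative only.

def inner(a, b):
    """Inner product a^T b of two equal-length vectors."""
    assert len(a) == len(b)
    return sum(x * y for x, y in zip(a, b))

def matmul_inner(A, B):
    """Build the m x n product one element at a time."""
    m, k, n = len(A), len(B), len(B[0])
    assert all(len(row) == k for row in A)  # conformable dimensions
    # Extract the columns b_j of B once, so each c_ij is a plain inner product.
    cols_B = [[B[r][j] for r in range(k)] for j in range(n)]
    return [[inner(A[i], cols_B[j]) for j in range(n)] for i in range(m)]

A = [[1, 2, 3],
     [4, 5, 6]]      # 2 x 3
B = [[7, 8],
     [9, 10],
     [11, 12]]       # 3 x 2
C = matmul_inner(A, B)
print(C)
```

Here every entry of `C` costs one pass over a row of `A` and a column of `B`, which is why this is the smallest-block way of organizing the computation.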

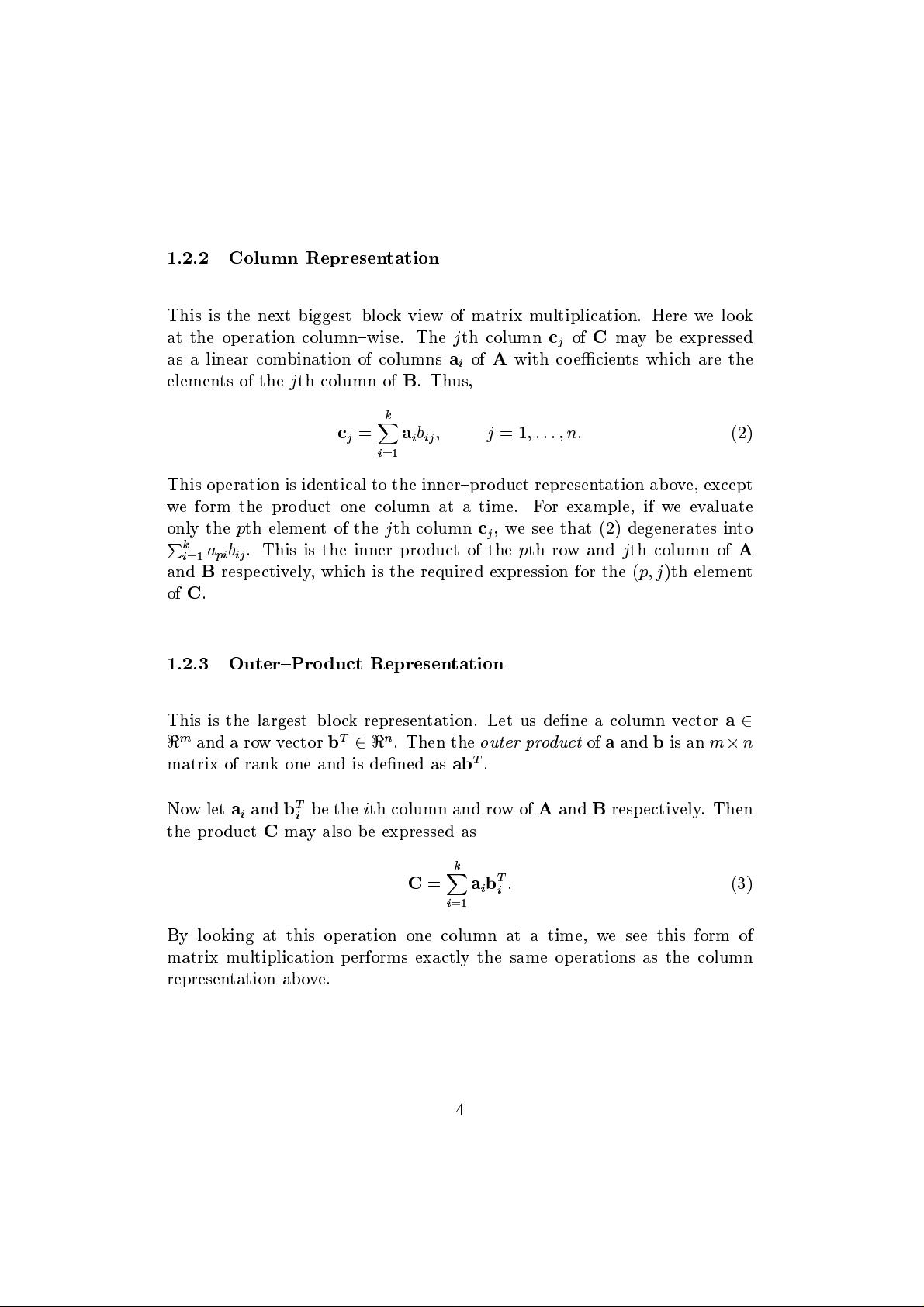

1.2.2 Column Representation

This is the next biggest-block view of matrix multiplication. Here we look at the operation column-wise. The $j$th column $\mathbf{c}_j$ of $\mathbf{C}$ may be expressed as a linear combination of columns $\mathbf{a}_i$ of $\mathbf{A}$ with coefficients which are the elements of the $j$th column of $\mathbf{B}$. Thus,

$$\mathbf{c}_j = \sum_{i=1}^{k} \mathbf{a}_i \, b_{ij}, \qquad j = 1, \ldots, n. \tag{2}$$

This operation is identical to the inner-product representation above, except we form the product one column at a time. For example, if we evaluate only the $p$th element of the $j$th column $\mathbf{c}_j$, we see that (2) degenerates into $\sum_{i=1}^{k} a_{pi} b_{ij}$. This is the inner product of the $p$th row and $j$th column of $\mathbf{A}$ and $\mathbf{B}$ respectively, which is the required expression for the $(p, j)$th element of $\mathbf{C}$.
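As a rough sketch of the column-wise view, the following plain-Python function assembles each column of the product as a weighted sum of the columns of the left-hand matrix. The function name `matmul_columns` and the test matrices are assumptions made for illustration; matrices are stored as lists of rows.

```python
# Column view of C = A B: column j of C is a linear combination of the
# columns a_i of A, weighted by the entries b_ij of column j of B.

def matmul_columns(A, B):
    m, k, n = len(A), len(B), len(B[0])
    cols_A = [[A[r][i] for r in range(m)] for i in range(k)]  # a_1 ... a_k
    cols_C = []
    for j in range(n):
        c_j = [0] * m
        for i in range(k):
            # Accumulate a_i scaled by the coefficient b_ij.
            c_j = [c + a * B[i][j] for c, a in zip(c_j, cols_A[i])]
        cols_C.append(c_j)
    # Reassemble the columns into a list-of-rows matrix.
    return [[cols_C[j][p] for j in range(n)] for p in range(m)]

A = [[1, 2, 3],
     [4, 5, 6]]      # 2 x 3
B = [[7, 8],
     [9, 10],
     [11, 12]]       # 3 x 2
C_col = matmul_columns(A, B)
print(C_col)
```

The inner loop works on whole columns rather than single scalars, yet the arithmetic performed is the same as in the element-by-element definition.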

1.2.3 Outer-Product Representation

This is the largest-block representation. Let us define a column vector $\mathbf{a} \in \mathbb{R}^{m}$ and a row vector $\mathbf{b}^T \in \mathbb{R}^{n}$. Then the outer product of $\mathbf{a}$ and $\mathbf{b}$ is an $m \times n$ matrix of rank one and is defined as $\mathbf{a}\mathbf{b}^T$.

Now let $\mathbf{a}_i$ and $\mathbf{b}_i^T$ be the $i$th column and row of $\mathbf{A}$ and $\mathbf{B}$ respectively. Then the product $\mathbf{C}$ may also be expressed as

$$\mathbf{C} = \sum_{i=1}^{k} \mathbf{a}_i \mathbf{b}_i^T. \tag{3}$$

By looking at this operation one column at a time, we see this form of matrix multiplication performs exactly the same operations as the column representation above.
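The sum of rank-one terms in (3) can be illustrated with a short plain-Python sketch. The names `outer` and `matmul_outer` and the test matrices are assumptions for this example, and matrices are stored as lists of rows.

```python
# Outer-product view of C = A B: C is the sum of k rank-one matrices,
# each formed from column i of A and row i of B.

def outer(a, b):
    """Outer product a b^T: an len(a) x len(b) matrix."""
    return [[x * y for y in b] for x in a]

def matmul_outer(A, B):
    m, k, n = len(A), len(B), len(B[0])
    C = [[0] * n for _ in range(m)]
    for i in range(k):
        a_i = [A[r][i] for r in range(m)]   # i-th column of A
        b_i = B[i]                          # i-th row of B
        term = outer(a_i, b_i)
        # Add the rank-one term to the running sum, entry by entry.
        C = [[c + t for c, t in zip(c_row, t_row)]
             for c_row, t_row in zip(C, term)]
    return C

A = [[1, 2, 3],
     [4, 5, 6]]      # 2 x 3
B = [[7, 8],
     [9, 10],
     [11, 12]]       # 3 x 2
C_outer = matmul_outer(A, B)
print(C_outer)
```

Each pass of the loop updates the whole $m \times n$ result at once, which is the sense in which this is the largest-block organization of the product.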

1.2.4 Matrix Pre- and Post-Multiplication

Let us now look at some fundamental ideas distinguishing matrix pre- and post-multiplication. In this respect, consider a matrix $\mathbf{A}$ pre-multiplied by $\mathbf{B}$ to give $\mathbf{Y} = \mathbf{B}\mathbf{A}$. (All matrices are assumed to have conformable dimensions.) Then we can interpret this multiplication as $\mathbf{B}$ operating on the columns of $\mathbf{A}$ to give the columns of the product. This follows because each column $\mathbf{y}_i$ of the product is a transformed version of the corresponding column of $\mathbf{A}$, i.e., $\mathbf{y}_i = \mathbf{B}\mathbf{a}_i$. Likewise, let's consider $\mathbf{A}$ post-multiplied by a matrix $\mathbf{C}$ to give $\mathbf{X} = \mathbf{A}\mathbf{C}$. Then, we interpret this multiplication as $\mathbf{C}$ operating on the rows of $\mathbf{A}$, because each row $\mathbf{x}_i^T$ of the product is a transformed version of the corresponding row of $\mathbf{A}$, i.e., $\mathbf{x}_i^T = \mathbf{a}_i^T \mathbf{C}$, where we define $\mathbf{a}_i^T$ as the $i$th row of $\mathbf{A}$.
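A small plain-Python check may help make the column and row pictures concrete. The helper names (`matmul`, `column`, `matvec`, `vecmat`) and the particular matrices are assumptions chosen for the illustration; the pre-multiplier here swaps the entries of each column, and the post-multiplier scales the entries of each row.

```python
# Pre-multiplication acts on columns, post-multiplication acts on rows.

def matmul(X, Y):
    """Ordinary matrix product of two list-of-rows matrices."""
    return [[sum(X[i][r] * Y[r][j] for r in range(len(Y)))
             for j in range(len(Y[0]))]
            for i in range(len(X))]

def column(M, j):
    return [row[j] for row in M]

def matvec(M, v):
    """M v for a column vector v."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def vecmat(v, M):
    """v^T M for a row vector v^T."""
    return [sum(v[r] * M[r][j] for r in range(len(M)))
            for j in range(len(M[0]))]

A = [[1, 2],
     [3, 4]]
B = [[0, 1],
     [1, 0]]     # swaps the two entries of any column it acts on
C = [[2, 0],
     [0, 3]]     # scales the two entries of any row it acts on

Y = matmul(B, A)   # pre-multiplication
X = matmul(A, C)   # post-multiplication

# Column i of Y equals B applied to column i of A.
cols_ok = all(column(Y, i) == matvec(B, column(A, i)) for i in range(2))
# Row i of X equals row i of A applied to C.
rows_ok = all(X[i] == vecmat(A[i], C) for i in range(2))
print(Y, X, cols_ok, rows_ok)
```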

Example:

Consider an orthonormal matrix $\mathbf{Q}$ of appropriate dimension. We know that multiplication by an orthonormal matrix results in a rotation operation. The operation $\mathbf{Q}\mathbf{A}$ rotates each column of $\mathbf{A}$. The operation $\mathbf{A}\mathbf{Q}$ rotates each row.
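To illustrate the example numerically, the sketch below uses a 90-degree rotation in the plane, chosen because its entries are integers and the arithmetic is exact. The matrix `Q`, the matrix `A`, and the helper names are assumptions for this illustration. A rotation preserves length, so the squared norms of the columns of $\mathbf{Q}\mathbf{A}$ and of the rows of $\mathbf{A}\mathbf{Q}$ should match those of $\mathbf{A}$.

```python
# A 90-degree rotation Q applied on the left rotates columns of A;
# applied on the right it rotates rows of A. Lengths are preserved.

def matmul(X, Y):
    return [[sum(X[i][r] * Y[r][j] for r in range(len(Y)))
             for j in range(len(Y[0]))]
            for i in range(len(X))]

def transpose(M):
    return [list(col) for col in zip(*M)]

def sq_norm(v):
    """Squared Euclidean length of a vector."""
    return sum(x * x for x in v)

Q = [[0, -1],
     [1, 0]]     # rotation by 90 degrees; Q^T Q = I
A = [[3, 1],
     [4, 2]]

QA = matmul(Q, A)
AQ = matmul(A, Q)

is_orthonormal = matmul(transpose(Q), Q) == [[1, 0], [0, 1]]
col_norms_A = [sq_norm(c) for c in transpose(A)]
col_norms_QA = [sq_norm(c) for c in transpose(QA)]
row_norms_A = [sq_norm(r) for r in A]
row_norms_AQ = [sq_norm(r) for r in AQ]
print(QA, AQ, col_norms_A, col_norms_QA, row_norms_A, row_norms_AQ)
```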

There is another way to interpret pre- and post-multiplication. Again consider the matrix $\mathbf{A}$ pre-multiplied by $\mathbf{B}$ to give $\mathbf{Y} = \mathbf{B}\mathbf{A}$. Then according to (2), the $j$th column $\mathbf{y}_j$ of $\mathbf{Y}$ is a linear combination of the columns of $\mathbf{B}$, whose coefficients are the elements of the $j$th column of $\mathbf{A}$. Likewise, for $\mathbf{X} = \mathbf{A}\mathbf{B}$, we can say that the $i$th row $\mathbf{x}_i^T$ of $\mathbf{X}$ is a linear combination of the rows of $\mathbf{B}$, whose coefficients are the elements of the $i$th row of $\mathbf{A}$.
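The linear-combination reading can also be checked directly. In the plain-Python sketch below, the helper `lincomb` and the test matrices are assumptions made for the illustration: columns of $\mathbf{B}\mathbf{A}$ are rebuilt from the columns of $\mathbf{B}$, and rows of $\mathbf{A}\mathbf{B}$ from the rows of $\mathbf{B}$.

```python
# Columns of Y = B A are combinations of the columns of B;
# rows of X = A B are combinations of the rows of B.

def matmul(X, Y):
    return [[sum(X[i][r] * Y[r][j] for r in range(len(Y)))
             for j in range(len(Y[0]))]
            for i in range(len(X))]

def column(M, j):
    return [row[j] for row in M]

def lincomb(coeffs, vectors):
    """Return sum_i coeffs[i] * vectors[i] for equal-length vectors."""
    length = len(vectors[0])
    return [sum(c * v[p] for c, v in zip(coeffs, vectors))
            for p in range(length)]

A = [[1, 2],
     [3, 4]]
B = [[5, 6],
     [7, 8]]

Y = matmul(B, A)
X = matmul(A, B)

cols_B = [column(B, i) for i in range(2)]
# Column j of Y: columns of B weighted by column j of A.
col_check = all(column(Y, j) == lincomb(column(A, j), cols_B)
                for j in range(2))
# Row i of X: rows of B weighted by row i of A.
row_check = all(X[i] == lincomb(A[i], B) for i in range(2))
print(Y, X, col_check, row_check)
```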

Either of these interpretations is equally valid. Being comfortable with the representations of this section is a big step in mastering the field of linear algebra.

Resource reviews

- zjaixy, 2013-05-26: The theoretical demands are fairly high, and since it is in English, good English is required as well.
- linuxluo2011, 2011-10-05: Pure mathematics; you need a foundation in advanced mathematics and matrix theory.
- crazy31415926, 2016-03-31: A very professional reference book, and it is also helpful for improving English reading ability.
- caicailoving, 2013-09-28: A good resource, though rather hard to follow.
