LORA: LOW-RANK ADAPTATION OF LARGE LAN-

GUAGE MODELS

Edward Hu

∗

Yelong Shen

∗

Phillip Wallis Zeyuan Allen-Zhu

Yuanzhi Li Shean Wang Lu Wang Weizhu Chen

Microsoft Corporation

{edwardhu, yeshe, phwallis, zeyuana,

yuanzhil, swang, luw, wzchen}@microsoft.com

yuanzhil@andrew.cmu.edu

(Version 2)

ABSTRACT

An important paradigm of natural language processing consists of large-scale pre-

training on general domain data and adaptation to particular tasks or domains. As

we pre-train larger models, full fine-tuning, which retrains all model parameters,

becomes less feasible. Using GPT-3 175B as an example – deploying indepen-

dent instances of fine-tuned models, each with 175B parameters, is prohibitively

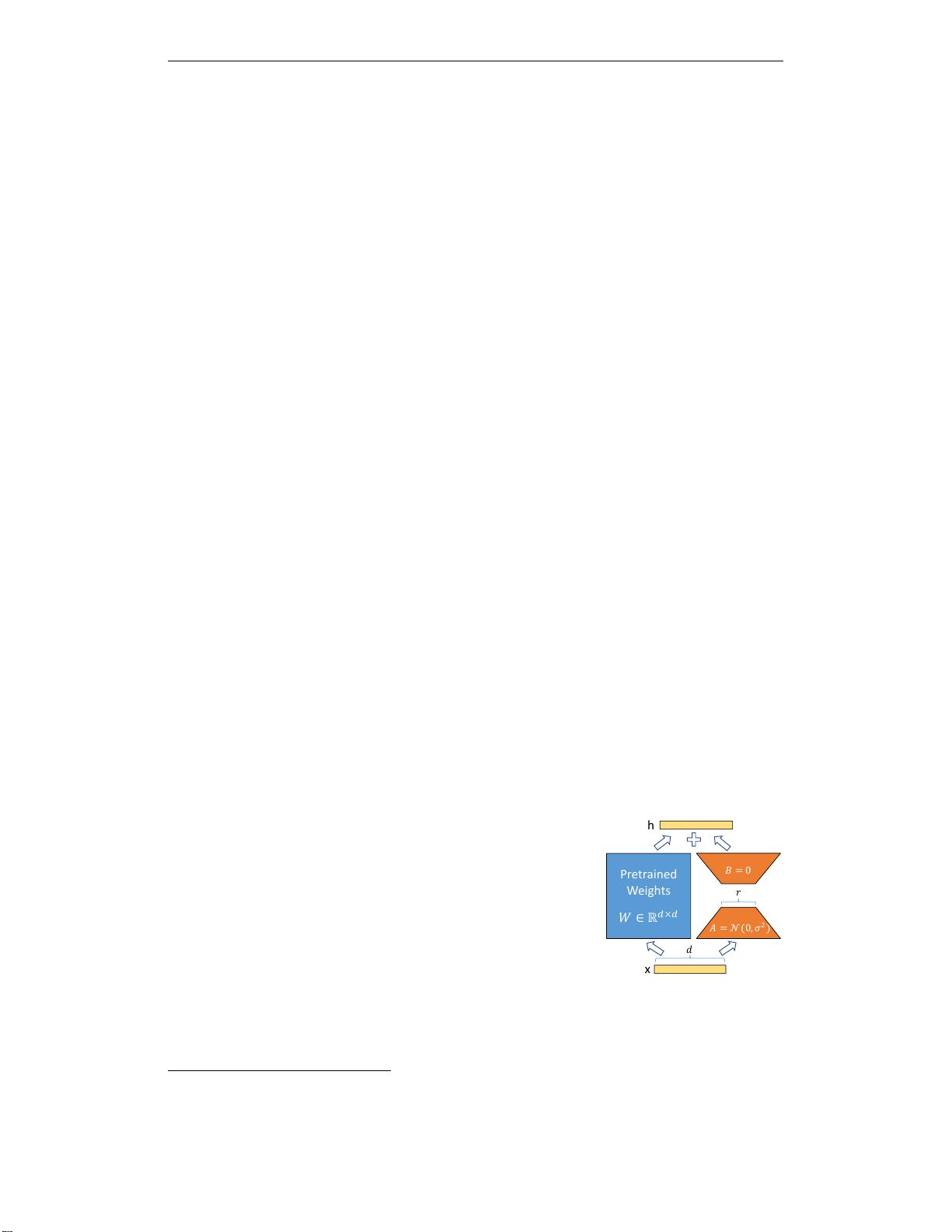

expensive. We propose Low-Rank Adaptation, or LoRA, which freezes the pre-

trained model weights and injects trainable rank decomposition matrices into each

layer of the Transformer architecture, greatly reducing the number of trainable pa-

rameters for downstream tasks. Compared to GPT-3 175B fine-tuned with Adam,

LoRA can reduce the number of trainable parameters by 10,000 times and the

GPU memory requirement by 3 times. LoRA performs on-par or better than fine-

tuning in model quality on RoBERTa, DeBERTa, GPT-2, and GPT-3, despite hav-

ing fewer trainable parameters, a higher training throughput, and, unlike adapters,

no additional inference latency. We also provide an empirical investigation into

rank-deficiency in language model adaptation, which sheds light on the efficacy of

LoRA. We release a package that facilitates the integration of LoRA with PyTorch

models and provide our implementations and model checkpoints for RoBERTa,

DeBERTa, and GPT-2 at https://github.com/microsoft/LoRA.

1 INTRODUCTION

Pretrained

Weights

x

h

Pretrained

Weights

x

f(x)

Figure 1: Our reparametriza-

tion. We only train A and B.

Many applications in natural language processing rely on adapt-

ing one large-scale, pre-trained language model to multiple down-

stream applications. Such adaptation is usually done via fine-tuning,

which updates all the parameters of the pre-trained model. The ma-

jor downside of fine-tuning is that the new model contains as many

parameters as in the original model. As larger models are trained

every few months, this changes from a mere “inconvenience” for

GPT-2 (Radford et al., b) or RoBERTa large (Liu et al., 2019) to a

critical deployment challenge for GPT-3 (Brown et al., 2020) with

175 billion trainable parameters.

1

Many sought to mitigate this by adapting only some parameters or

learning external modules for new tasks. This way, we only need

to store and load a small number of task-specific parameters in ad-

dition to the pre-trained model for each task, greatly boosting the

operational efficiency when deployed. However, existing techniques

∗

Equal contribution.

0

Compared to V1, this draft includes better baselines, experiments on GLUE, and more on adapter latency.

1

While GPT-3 175B achieves non-trivial performance with few-shot learning, fine-tuning boosts its perfor-

mance significantly as shown in Appendix A.

1

arXiv:2106.09685v2 [cs.CL] 16 Oct 2021