Optimizing CNN Model Inference on CPUs

Yizhi Liu∗, Yao Wang∗, Ruofei Yu, Mu Li, Vin Sharma, Yida Wang

Amazon Web Services

{yizhiliu, wayao, yuruofei, mli, vinarm, wangyida}@amazon.com

Abstract

The popularity of Convolutional Neural Network (CNN) mod-

els and the ubiquity of CPUs imply that better performance of

CNN model inference on CPUs can deliver significant gain

to a large number of users. To improve the performance of

CNN inference on CPUs, current approaches that treat the

model as a graph mostly rely on the use of high-performance

libraries such as Intel MKL-DNN and some basic graph-level

optimizations, which is restrictive and misses the opportu-

nity to optimize the end-to-end inference pipeline as a whole.

This paper presents a more comprehensive approach of CNN

model inference on CPUs that employs a full-stack and sys-

tematic scheme of optimizations. The proposed solution op-

timizes the operations as templates, which enables further

improvement of the performance via operation- and graph-

level joint optimization. Experiments show that the proposed

solution achieves up to 3.45× lower latency for CNN model

inference than the current state-of-the-art implementations on

various kinds of popular CPUs.

1 Introduction

The growing use of Convolutional Neural Network (CNN)

models in computer vision applications makes this model

architecture a natural focus for performance optimization

efforts. Similarly, the widespread deployment of CPUs in

servers, clients, and edge devices makes this hardware plat-

form an attractive target. Therefore, performing CNN model

inference efficiently on CPUs is of critical interest to many

users.

The performance of CNN model inference on CPUs leaves

significant room for improvement. Performing a CNN model

inference is essentially executing a computation graph con-

sisting of operations. In practice, people normally use high-

performance kernel libraries (e.g. Intel MKL-DNN [25] and

OpenBLAS [47]) to obtain decent performance for CNN operations. While these libraries are carefully tuned for common operations with typical input data shapes (e.g. 2D convolutions), they focus only on individual operations (mostly convolutions) and miss the opportunity to further optimize end-to-end model inference at the graph level. Graph-level optimization is often handled by the deep learning frameworks, e.g. TensorFlow [5] and MXNet [8].

∗ Equal contribution

However, the graph-level optimization that a framework can do, such as operation fusion and data layout planning, is limited

because the operation implementation is already predefined

in the third-party libraries. Therefore, the optimizations in the

frameworks do not work in concert with the optimizations in

the kernel library, which leaves significant performance gains

unrealized in practice. Furthermore, different CPU architectures rely on different high-performance libraries, and integrating a library into a deep learning framework requires error-prone and time-consuming engineering effort. For instance, integrating MKL-DNN into MXNet involved 9732 lines of additions and 8234 lines of deletions over a period of 6 months¹, not counting a number of follow-up patches. Lastly, although those libraries are highly optimized, they are integrated as third-party plug-ins, which may introduce contention issues with other libraries in the framework. As an

example, TensorFlow originally used the Eigen library [4]

to handle computation on CPUs. Later on, MKL-DNN was

also introduced. As a consequence, at runtime MKL-DNN

threads compete with Eigen threads, resulting in performance

loss. In summary, this kind of framework-specific approach

for CNN model inference on CPUs is inflexible, cumbersome,

and sub-optimal.

Because of the constraint imposed by the framework, opti-

mizing the performance of CNN model inference end-to-end

without involving a framework (i.e. a framework-agnostic

method) is of obvious interest to many deep learning prac-

titioners. Recently, Intel launched a universal CNN model

inference engine called OpenVINO Toolkit [16]. This toolkit

optimizes CNN models in the computer vision domain on

Intel processors (mostly x86 CPUs) and claims to achieve

better performance than the deep learning frameworks alone.

¹ https://github.com/apache/incubator-mxnet/pull/8302

arXiv:1809.02697v2 [cs.DC] 11 Jan 2019

Yet, OpenVINO provides limited graph-level optimization

(e.g. operation fusion as implemented in ngraph [15]). Also,

OpenVINO relies upon MKL-DNN to deliver performance

gains for the carefully-tuned operations. Therefore, the opti-

mization done by OpenVINO is still not sufficient for most

of the CNN models.

Based on these observations, we argue that flexible end-to-end optimization is the key to getting the best CNN model inference performance out of CPUs. In this paper, we propose a comprehensive

approach to optimize CNN models for efficient inference on

CPUs. Our approach is full-stack and systematic, which in-

cludes operation-level and graph-level joint optimizations and

does not rely on any third-party high-performance libraries.

At the operation level, we follow well-studied techniques to optimize the most computationally intensive operations, such as convolution (CONV), in a template that is applicable to different workloads on multiple CPU architectures and enables flexible graph-level optimization. At the graph

level, we coordinate the individual operation optimizations

by manipulating the data layout flowing through the entire

model for the best end-to-end performance, in addition to the

common techniques such as operation fusion and inference

simplification. In summary, our approach does the end-to-end

optimization in a flexible and automatic fashion, while the

existing works rely on third-party libraries and lack compre-

hensive performance tuning.

Our approach is built upon a deep learning compiler stack

named TVM [9] with a number of enhancements. There exist other deep learning compilers such as Tensor Comprehensions [43] and Glow [37]. However, they either do not target CPUs or do not optimize CPU performance well. Therefore, we do not use those works as baselines.

Specifically, this paper makes the following contributions:

• Provides an operation- and graph-level joint optimization scheme to obtain the best-ever CNN model inference performance on different popular CPUs, including Intel, AMD and ARM;

• Constructs a template to achieve decent performance of convolutions, which is flexible enough to apply to various convolution workloads on multiple CPU architectures (x86 and ARM);

• Designs a global scheme to look for the best layout combination across the operations of a CNN model, which minimizes the data layout transformation overhead between operations while maintaining the decent performance of individual operations;

• Improves the runtime performance by employing a customized thread pool for multi-thread parallelization.

To the best of our knowledge, this work achieves unprecedented performance for CNN model inference on various kinds of popular CPUs. Table 1 summarizes the features of our solution compared to others. This paper primarily deals with direct convolution computation, while the proposed ideas are applicable to other optimization works on the computationally intensive kernels, e.g. CONVs via Winograd [7, 27] or FFT [48].

| | Op-level opt | Graph-level opt | Joint opt | Open-source |
|---|---|---|---|---|
| Our solution | ✓ | ✓ | ✓ | ✓ |
| MXNet [8]/TensorFlow [5] | 3rd party | limited | ✗ | ✓ |
| OpenVINO [16] | 3rd party | limited | ? | ✗ |
| Glow [37] | single core | ✓ | ✗ | ✓ |

Table 1: Side-by-side comparison between our solution and existing works on CNN model inference

We evaluated our solution on CPUs of both x86 and ARM architectures. In general, our solution delivers the best performance for 13 out of 15 popular networks on Intel Skylake CPUs, 14 out of 15 on AMD EPYC CPUs, and all 15 models on ARM Cortex A72 CPUs. It is worth noting that the baselines on x86 CPUs were more carefully tuned by the chip vendor (Intel MKL-DNN), whereas the baselines on ARM CPUs were less optimized. While the selected framework-specific (MXNet and

TensorFlow) and framework-agnostic (OpenVINO) solutions

may perform well on one case and less favorably on the other

case, our solution runs efficiently across models on different

architectures.

In addition, our approach produces a standalone module

with minimal size that does not depend on either the frame-

works or the high-performance kernel libraries, which enables

easy deployment to multiple platforms. Our solution is in pro-

duction use in several applications that employ CNN models

for inference on several types of platforms. All source code

has been released to the open source TVM project².

The rest of this paper is organized as follows: Section 2

reviews the background of modern CPUs as well as the typical

CNN models; Section 3 elaborates the optimization ideas

that we propose and how we implement them, followed by

evaluations in Section 4. We list the related works in Section 5

and summarize the paper in Section 6.

2 Background

2.1 Modern CPUs

Although accelerators like GPUs and TPUs demonstrate outstanding performance on deep learning workloads, in practice a lot of deep learning computation, especially model inference, still takes place on general-purpose CPUs due to their high availability. Currently, most of the CPUs in PCs and servers are manufactured by Intel or AMD with the x86 architecture [1], while CPUs with the ARM architecture occupy the majority of the embedded/mobile device market [2].

² https://github.com/dmlc/tvm

Modern CPUs use thread-level parallelism via multi-core [20] to improve overall processor performance, given the diminishing growth of the transistor budget available for building larger and more complex uniprocessors. It is critical to avoid interference among threads running on the same processor and to minimize their synchronization cost in order to achieve decent scalability on multi-core processors. Within the processor, a single physical core achieves its peak performance via the SIMD (single-instruction-multiple-data) technique. SIMD loads multiple values into wide vector registers and processes them together. For example, Intel introduced the 512-bit Advanced Vector Extensions instruction set (AVX-512), which handles up to 16 32-bit single-precision floating-point numbers (512 bits in total) per CPU cycle. The less advanced AVX2 processes data in 256-bit registers. In addition, these instruction sets utilize the Fused-Multiply-Add (FMA) technique, which executes one vectorized multiplication and then accumulates the results into another vector register in the same CPU cycle. A similar SIMD technique is embodied in ARM CPUs as NEON [3]. As shown in the experiments, our solution works on both x86 and ARM architectures.
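The lane counts quoted above follow from dividing the vector register width by the element width. The snippet below is only a sketch of that arithmetic: the helper names are hypothetical, and the 128-bit NEON register width is a fact that the text does not state.

```python
def simd_lanes(register_bits: int, element_bits: int = 32) -> int:
    """Number of elements of the given width that fit in one vector register."""
    if register_bits % element_bits != 0:
        raise ValueError("register width must be a multiple of the element width")
    return register_bits // element_bits


def flops_per_fma(register_bits: int, element_bits: int = 32) -> int:
    """One vectorized FMA performs one multiply and one add per lane."""
    return 2 * simd_lanes(register_bits, element_bits)


# Register widths: AVX-512 and AVX2 are taken from the text; NEON is an added assumption.
for name, bits in [("AVX-512", 512), ("AVX2", 256), ("NEON", 128)]:
    print(name, simd_lanes(bits), "float32 lanes,", flops_per_fma(bits), "flops per FMA")
```

These per-instruction figures are upper bounds; how many FMAs a core can issue per cycle depends on the microarchitecture and is not covered here.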

In addition, it is worth noting that modern server-side CPUs normally support hyper-threading [34] via the simultaneous multithreading (SMT) technique, in which the system can assign two virtual cores (i.e. two threads) to one physical core, aiming to improve system throughput. However, the performance improvement from hyper-threading is application-dependent [32]. In our case, we do not use hyper-threading, since one thread already fully utilizes the resources of its physical core, and adding one more thread to the same physical core normally decreases performance due to the additional context switches. We also restrict our optimization to within processors using the shared-memory programming model. The Non-Uniform Memory Access (NUMA) pattern that occurs when multiple processors share the same motherboard is beyond the scope of this paper.

2.2 Convolutional neural networks

Convolutional neural networks (CNNs) are commonly used in computer vision workloads [22, 24, 30, 33, 38–40]. A CNN model is normally abstracted as a computation graph, essentially a Directed Acyclic Graph (DAG), in which a node represents an operation and a directed edge pointing from node X to node Y indicates that the output of operation X serves as (a part of) the input of operation Y (i.e. Y cannot be executed before X). Executing a model inference amounts to flowing the input data through the graph to get the output. Optimizing the graph (e.g. pruning unnecessary nodes and edges, pre-computing values that are independent of the input data) could potentially boost the model inference performance.
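The graph view described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not the representation used by any framework: the node names, the `(function, inputs)` encoding and the helpers `reachable`, `precompute` and `run` are all hypothetical.

```python
from graphlib import TopologicalSorter

# Toy graph: node -> (function, names of input nodes). All names are illustrative.
graph = {
    "x": (None, []),                           # model input, supplied at run time
    "w": (lambda: 3.0, []),                    # constant
    "b": (lambda: 1.0, []),                    # constant
    "wb": (lambda w, b: w + b, ["w", "b"]),    # depends only on constants
    "y": (lambda x, wb: x * wb, ["x", "wb"]),  # model output
    "unused": (lambda x: x - 1.0, ["x"]),      # does not feed the output
}


def reachable(graph, output):
    """Nodes the output depends on; every other node can be pruned."""
    seen, stack = set(), [output]
    while stack:
        node = stack.pop()
        if node not in seen:
            seen.add(node)
            stack.extend(graph[node][1])
    return seen


def precompute(graph, output, input_names):
    """Evaluate, once, every kept node that is independent of the run-time inputs."""
    deps = {n: graph[n][1] for n in reachable(graph, output)}
    const = {}
    for node in TopologicalSorter(deps).static_order():
        fn, inputs = graph[node]
        if node not in input_names and all(i in const for i in inputs):
            const[node] = fn(*(const[i] for i in inputs))
    return const


def run(graph, output, values):
    """Execute the kept nodes in topological order, so X always runs before Y."""
    deps = {n: graph[n][1] for n in reachable(graph, output)}
    values = dict(values)
    for node in TopologicalSorter(deps).static_order():
        if node not in values:
            fn, inputs = graph[node]
            values[node] = fn(*(values[i] for i in inputs))
    return values[output]


constants = precompute(graph, "y", {"x"})
result = run(graph, "y", {**constants, "x": 2.0})
```

Here `unused` is never executed and `wb` is evaluated once ahead of time, which mirrors the pruning and pre-computation mentioned in the text.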

Most of the computation in CNN model inference is attributable to convolutions (CONVs). These operations are essentially a series of multiplications and accumulations, which by design can fully utilize the parallelization, vectorization and FMA features of modern CPUs. The challenge is how to manage the data flowing through these operations to achieve full utilization.

The rest of the CNN workloads are mostly memory-bound operations associated with CONVs (e.g. batch normalization, pooling, activation, element-wise addition, etc.). The common practice [9] is to fuse them into CONVs so as to increase the overall arithmetic intensity of the workload and consequently boost the performance. The challenge is how to handle these operations so that the data layout optimized for CONVs is also applicable to them.

3 Optimizations

This section describes our optimization ideas and implementations in detail. The solution presented in this paper is end-to-end for CNN model inference, and it is generic enough to work for many popular models, as we will show in the evaluation. The basic idea of our approach is to view the optimization as an end-to-end problem and search for a globally best optimization. That is, unlike many previous works, we do not merely pursue the locally optimal performance of a single operation. To achieve this, we first present how we optimized the computationally intensive convolution operations at a low level using a configurable template (Section 3.1). This makes it flexible to search for the best implementation of a specific convolution workload on a particular CPU architecture, and to optimize the entire computation graph by choosing proper data layouts between operations to eliminate unnecessary data layout transformation overhead (presented in Sections 3.2 and 3.3).

We implemented the optimization based on the TVM stack [9] by adding a number of new features to the compiling pass, operation scheduling and runtime components. The original TVM stack already performs several generic graph-level optimizations, including operation fusion, pre-computing, and simplifying inference for batch-norm and dropout [9], which are inherited by our solution but will not be covered in this paper.
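As a reminder of what simplifying inference for batch-norm can look like, the sketch below folds the fixed inference-time statistics into one scale and one shift per channel. This is the standard algebraic identity, not a description of how TVM implements the pass; the function names and the `eps` default are assumptions.

```python
import math


def fold_batch_norm(gamma, beta, mean, var, eps=1e-5):
    """Return per-channel (scale, shift) such that BN(x) == scale * x + shift."""
    scale = [g / math.sqrt(v + eps) for g, v in zip(gamma, var)]
    shift = [b - m * s for b, m, s in zip(beta, mean, scale)]
    return scale, shift


def batch_norm(x, gamma, beta, mean, var, eps=1e-5):
    """Reference inference-time batch norm, one value per channel."""
    return [g * (xi - m) / math.sqrt(v + eps) + b
            for xi, g, b, m, v in zip(x, gamma, beta, mean, var)]


# Two channels with made-up parameters.
gamma, beta = [2.0, 0.5], [1.0, 0.25]
mean, var = [3.0, 0.0], [4.0, 1.0]
x = [5.0, 2.0]

scale, shift = fold_batch_norm(gamma, beta, mean, var)
folded = [s * xi + t for s, xi, t in zip(scale, x, shift)]
reference = batch_norm(x, gamma, beta, mean, var)
```

Because `scale` and `shift` depend only on trained parameters, they can be pre-computed once, leaving a single multiply-add per element that is cheap to fuse into the preceding CONV.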

3.1 Operation optimization

Optimizing convolution operations is critical to the overall performance of a CNN workload, as they account for the majority of the computation. This is a well-studied problem, but previous works normally go down to the assembly code level for decent performance [23, 25]. In this subsection, we show how to take advantage of the latest CPU features (SIMD, FMA, parallelization, etc.) to optimize a single CONV without resorting to tedious assembly code or C++ intrinsics. Because the implementation is managed at a high level instead, it is then easy to extend our optimization from a single operation to the entire computation graph.


3.1.1 Single thread optimization

We started from optimizing CONV within one thread. CONV

is computationally-intensive which traverses its operands mul-

tiple times for computation. Therefore, it is critical to man-

age the layout of the data fed to the CONV to reduce the

memory access overhead. We first revisit the computation

of CONV to illustrate our memory management scheme. A

2D CONV in CNN takes a 3D feature map (height

×

width

×

channels) and a number of 3D convolution kernels (nor-

mally smaller height and width but the same number of chan-

nels) to convolve to output another 3D tensor. The calculation

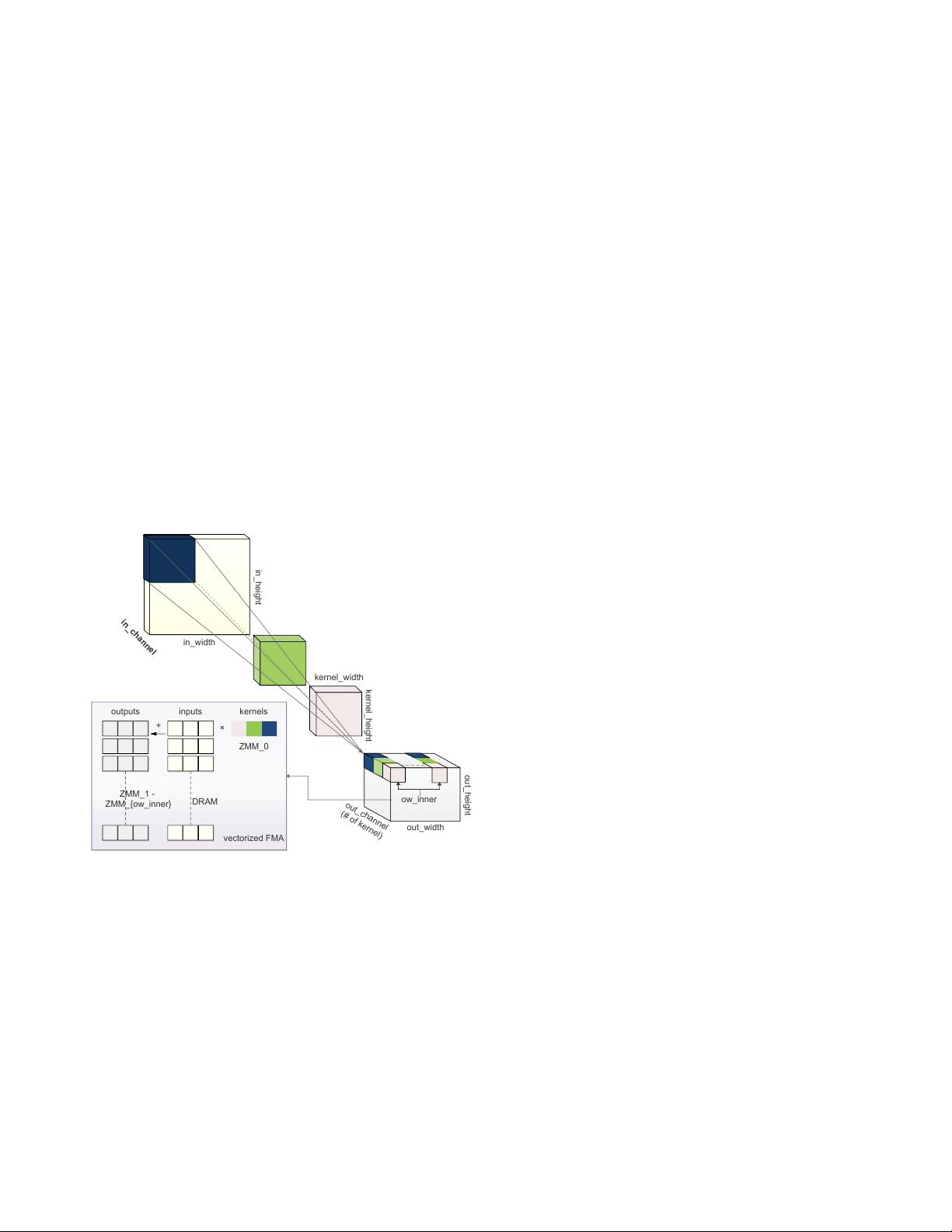

is illustrated in Figure 1, which implies loops of 6 dimen-

sions: in_channel, kernel_height, kernel_width, out_channel,

out_height and out_width. Each kernel slides over the input

feature map along the height and width dimensions, does

element-wise product and accumulates the values to produce

the corresponding element in the output feature map, which

can naturally leverage FMA. The number of kernels forms

out_channel. Note that three of the dimensions (in_channel,

kernel_height and kernel_width) are reduction axes that can-

not be embarrassingly parallelized.

Figure 1: The illustration of CONV and the efficient implementation in AVX-512 instructions as an example. There are three kernels depicted in dark blue, green and light pink. To do efficient FMA, multiple kernel values are packed into one ZMM register and reused to multiply with different input values and accumulate to output values in different ZMM registers. (Figure labels: in_height, in_width, in_channel, kernel_height, kernel_width, out_height, out_width, out_channel (# of kernels), ow_inner; inputs, kernels, outputs; ZMM_0, ZMM_1 to ZMM_{ow_inner}; DRAM; vectorized FMA.)
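The six-dimensional loop nest described above can be written out directly. The sketch below is a plain reference version for a single image with stride 1 and no padding, both of which are simplifying assumptions; the function name is hypothetical. As is usual in deep learning, the kernel is not flipped.

```python
def conv2d_direct(data, kernel):
    """Direct 2D convolution.

    data[in_channel][in_height][in_width]
    kernel[out_channel][in_channel][kernel_height][kernel_width]
    Returns out[out_channel][out_height][out_width].
    """
    in_channel, in_height, in_width = len(data), len(data[0]), len(data[0][0])
    out_channel = len(kernel)
    kernel_height, kernel_width = len(kernel[0][0]), len(kernel[0][0][0])
    out_height = in_height - kernel_height + 1
    out_width = in_width - kernel_width + 1

    out = [[[0.0] * out_width for _ in range(out_height)] for _ in range(out_channel)]
    # The three outer loops are independent and could run in parallel.
    for oc in range(out_channel):
        for oh in range(out_height):
            for ow in range(out_width):
                acc = 0.0
                # The three inner loops are the reduction axes.
                for ic in range(in_channel):
                    for kh in range(kernel_height):
                        for kw in range(kernel_width):
                            acc += data[ic][oh + kh][ow + kw] * kernel[oc][ic][kh][kw]
                out[oc][oh][ow] = acc
    return out


# One 3x3 input channel and one 2x2 kernel that adds the two diagonal elements.
image = [[[1, 2, 3], [4, 5, 6], [7, 8, 9]]]
diag_kernel = [[[[1, 0], [0, 1]]]]
result = conv2d_direct(image, diag_kernel)
```

Every output element is an independent multiply-accumulate chain over the reduction axes, which is why only the output dimensions are natural candidates for parallelization and vectorization.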

We use the conventional notation NCHW to describe the

default data layout, which means the input and output are 4-D

tensors with batch size N, number of channels C, feature map

height H, feature map width W, where N is the outermost and

W is the innermost dimension of the data. The related layout

of kernel is KCRS, in which K, C, R, S stand for the output

channel, input channel, kernel height and kernel width.

Following the common practice [25, 42], we organized the feature map layout as NCHW[x]c for better memory access patterns, in which c is a sub-dimension split from the channel super-dimension C, and the number x indicates the split size of the sub-dimension (i.e. $\#\mathrm{channels} = \mathrm{sizeof}(C) \times \mathrm{sizeof}(c)$, where $\mathrm{sizeof}(c) = x$). The output has the same layout NCHW[y]c as the input, while the split factor can be different. Correspondingly, the convolution kernel is organized in KCRS[x]c[y]k, in which c with split size x and k with split size y are the sub-dimensions of input channel C and output channel K, respectively. It is worth noting that a significant amount of data transformation overhead needs to be paid to get the desired layout.
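To make the blocked layouts concrete, the sketch below repacks nested lists from NCHW into NCHW[x]c and from KCRS into KCRS[x]c[y]k. The function names are hypothetical, and plain Python lists stand in for contiguous tensors; the point is only the index mapping c = c_outer * x + c_inner and k = k_outer * y + k_inner.

```python
def pack_feature_map(data, x):
    """NCHW -> NCHW[x]c: data[n][c][h][w] becomes out[n][c_outer][h][w][c_inner]."""
    channels, height, width = len(data[0]), len(data[0][0]), len(data[0][0][0])
    if channels % x:
        raise ValueError("channel count must be divisible by the split size x")
    return [[[[[image[co * x + ci][h][w] for ci in range(x)]
               for w in range(width)]
              for h in range(height)]
             for co in range(channels // x)]
            for image in data]


def pack_kernel(kernel, x, y):
    """KCRS -> KCRS[x]c[y]k: kernel[k][c][r][s] becomes
    out[k_outer][c_outer][r][s][c_inner][k_inner]."""
    K, C = len(kernel), len(kernel[0])
    R, S = len(kernel[0][0]), len(kernel[0][0][0])
    if K % y or C % x:
        raise ValueError("K must be divisible by y and C by x")
    return [[[[[[kernel[ko * y + ki][co * x + ci][r][s] for ki in range(y)]
                for ci in range(x)]
               for s in range(S)]
              for r in range(R)]
             for co in range(C // x)]
            for ko in range(K // y)]


# N=1, C=4, H=1, W=2, where each value encodes its own (channel, column) position.
data = [[[[c * 10 + w for w in range(2)]] for c in range(4)]]
packed = pack_feature_map(data, x=2)

# K=4, C=2, R=S=1, where each value encodes its own (k, c) position.
kernel = [[[[k * 10 + c]] for c in range(2)] for k in range(4)]
packed_kernel = pack_kernel(kernel, x=2, y=2)
```

After packing, the x channel values of one pixel (and the y output-channel values of one kernel tap) sit next to each other in the innermost dimension, which is what lets a single vector load fetch them. The repacking itself touches every element once, which is the transformation overhead the text refers to.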

In addition to the dimension reordering, to better utilize the latest vectorization instructions (e.g. AVX-512, AVX2, NEON, etc.), we split out_width into ow_outer and ow_inner using a factor reg_n and move the loop of ow_inner inside for register blocking. For example, on a CPU featuring AVX-512, we can utilize its 32 512-bit registers ZMM_0 to ZMM_31 [26] as follows. We maintain the loop hierarchy so that one ZMM register stores the kernel data while the others store the feature map. The kernel values stored in one ZMM register (up to 512 bits, i.e. 16 output channels in float32) are multiplied with a number of input feature map values stored contiguously in DRAM via AVX-512F instructions [26], and the results are then accumulated into other ZMM registers storing the output values. Figure 1 illustrates this idea. For other vectorized instructions, the same idea applies but the split factor of out_width (i.e. reg_n) may change.

Algorithm 1 summarizes our optimization of CONV in a single thread, which essentially consists of 1) dimension ordering for friendly memory locality and 2) register blocking for good utilization of vectorization instructions, as in previous works. However, unlike others, we made it a template in a high-level language (see supplementary material), in which the block sizes (x, y), the number of utilized registers (reg_n), and the loop-unroll strategy (unroll_ker) are easily configurable. Consequently, the computing logic can be adjusted according to different CPU architectures (cache size, vector register width, etc.) as well as different workloads (feature map size, convolution kernel size, etc.). This is flexible and enables the graph-level optimization we will discuss later.
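The loop structure described in this subsection can be sketched as follows. This is reconstructed from the prose only; it is not a copy of Algorithm 1 or of the TVM template. Python lists of length y stand in for vector registers, unroll_ker is omitted, and the function name, the single-image input, stride 1 and no padding are all assumptions.

```python
def conv2d_blocked(data, kernel, reg_n):
    """Register-blocked CONV on packed layouts (batch of one).

    data[ic_outer][h][w][ic_inner]                              (CHW[x]c)
    kernel[oc_outer][ic_outer][kh][kw][ic_inner][oc_inner]      (KCRS[x]c[y]k)
    Returns out[oc_outer][oh][ow][oc_inner]                     (CHW[y]c)
    """
    x = len(data[0][0][0])
    y = len(kernel[0][0][0][0][0])
    kernel_height, kernel_width = len(kernel[0][0]), len(kernel[0][0][0])
    out_height = len(data[0]) - kernel_height + 1
    out_width = len(data[0][0]) - kernel_width + 1
    if out_width % reg_n:
        raise ValueError("this sketch requires reg_n to divide out_width")

    out = [[[None] * out_width for _ in range(out_height)] for _ in range(len(kernel))]
    for oc_outer in range(len(kernel)):
        for oh in range(out_height):
            for ow_outer in range(out_width // reg_n):
                # reg_n accumulators of y lanes each: the registers holding outputs.
                acc = [[0.0] * y for _ in range(reg_n)]
                for ic_outer in range(len(data)):
                    for kh in range(kernel_height):
                        for kw in range(kernel_width):
                            for ic_inner in range(x):
                                # One "register" of y kernel values, loaded once
                                # and reused for all reg_n output positions.
                                kvec = kernel[oc_outer][ic_outer][kh][kw][ic_inner]
                                for ow_inner in range(reg_n):
                                    ow = ow_outer * reg_n + ow_inner
                                    # Scalar input value broadcast across the lanes.
                                    v = data[ic_outer][oh + kh][ow + kw][ic_inner]
                                    lanes = acc[ow_inner]
                                    for lane in range(y):  # one vectorized FMA
                                        lanes[lane] += v * kvec[lane]
                for ow_inner in range(reg_n):
                    out[oc_outer][oh][ow_outer * reg_n + ow_inner] = acc[ow_inner]
    return out


# x = 2 input channels, y = 2 output channels, a 1x2 kernel, 1x3 input, reg_n = 2.
data = [[[[1, 2], [3, 4], [5, 6]]]]
kernel = [[[[[[1, 0], [0, 1]], [[1, 1], [2, 0]]]]]]
result = conv2d_blocked(data, kernel, reg_n=2)
```

Changing x, y and reg_n changes only loop bounds and buffer shapes, not the structure, which is what makes a single template reusable across vector widths and workloads.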

3.1.2 Thread-level parallelization

It is common practice to partition a CONV into disjoint pieces and parallelize them among the multiple cores of a modern CPU. Kernel libraries like Intel MKL-DNN usually use an off-the-shelf multi-threading solution such as OpenMP. However, we observe that the resulting scalability of the off-the-shelf parallelization solution is not desirable (Section 4.2.4).

Therefore, we implemented a customized thread pool to efficiently process this kind of embarrassingly parallel workload.
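The partitioning idea can be illustrated with a static split of the outermost output loop into disjoint, contiguous chunks, one per worker. The sketch below uses Python's standard `ThreadPoolExecutor`, which is itself an off-the-shelf pool and not the customized thread pool the paper refers to; the helper names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def split_range(total, parts):
    """Split range(total) into at most `parts` contiguous, disjoint, near-equal chunks."""
    base, extra = divmod(total, parts)
    chunks, start = [], 0
    for i in range(parts):
        size = base + (1 if i < extra else 0)
        if size:
            chunks.append(range(start, start + size))
        start += size
    return chunks


def parallel_rows(row_fn, num_rows, num_threads):
    """Compute row_fn(r) for every row; each worker owns one chunk of rows."""
    out = [None] * num_rows

    def work(chunk):
        # Chunks are disjoint, so workers never write to the same slot
        # and no locking is needed while they run.
        for r in chunk:
            out[r] = row_fn(r)

    with ThreadPoolExecutor(max_workers=num_threads) as pool:
        list(pool.map(work, split_range(num_rows, num_threads)))
    return out


chunks = split_range(10, 4)
squares = parallel_rows(lambda r: r * r, num_rows=5, num_threads=2)
```

In CPython the global interpreter lock keeps pure-Python work like this from running on several cores at once, so the snippet shows only the work assignment, not a speed-up.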
