Validation and Debugging Techniques for Embedded System Design

Embedded Systems and Software Validation (Morgan Kaufmann, 20...

“RoyChoudhury: 09-Ch05-P374230” — 2009/4/17 — 14:36 — page 181 — #1

CHAPTER 5

Functionality Validation

So far, we have discussed various aspects of validation, namely, (a) system modeling from informal requirements and validating the model, (b) validating the communication across system components, and (c) validating timing properties of embedded software. In this chapter, we discuss the functionality validation of embedded software. Some of the techniques we discuss here are generic to software validation and can be integrated with any software development life cycle. However, some of the methods are specifically useful for particular classes of software. For example, the software model checking method is particularly useful for control-intensive (and less data operation–dominated) programs that are common in controllers or device drivers.

In discussing software validation methods, we need to clarify precisely what we mean by “validation” here. A loose definition of validation is checking whether the software behaves as expected. However, such a definition also implies that the “expectation” from the software is properly documented. So, the first question we face is how to document or describe the “expected” behavior of embedded software. There are several ways to answer this question, and indeed the answer depends on what kind of validation method we are resorting to. If our validation method is software testing, the description of expected behavior consists of the expected program output for selected test cases. If our validation method is software model checking, the description of expected program behavior consists of the temporal properties being verified.

Having clarified the issue of expected behavior, we ought to differentiate software validation from model validation. After all, our discussion on model validation (Chapter 2) covered how to check properties of the models via model checking. In principle, one could validate the model and generate the implementation software from the models, automatically or semiautomatically. However, in practice, this is rarely done. The modeling mostly serves the purpose of design documentation and comprehension. The modeling activity gives the system designer a methodical way of eliciting the requirements and putting them together in the form of an initial design. Validating the model clarifies the designer’s understanding of what the system ought to be. On the other hand, software validation is a much more downstream activity where the actual implementation to be deployed is validated.

Embedded Systems and Software Validation. Copyright © 2009, Elsevier Inc. All rights reserved.

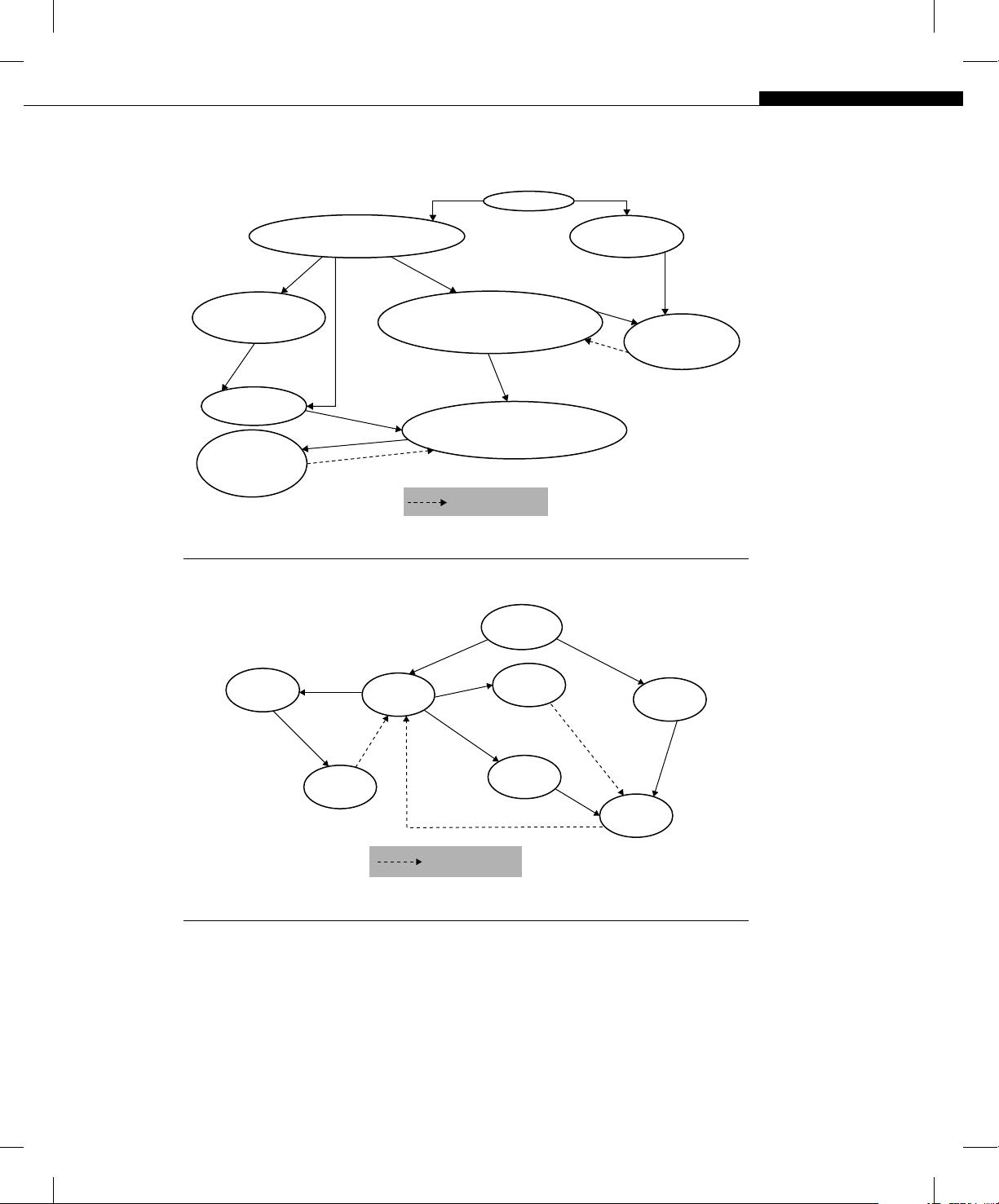

Depending on whether the software being validated is constructed from a design model (or not), the flow of software validation can be different. Figure 5.1 shows the overall software validation flow in the case where the software is written with a design model as a guide. Here we could first employ static checking on the design model to ensure that it satisfies certain important properties. Subsequently, we write code using the design model as a guidepost (certain parts of the code could even be automatically generated). This code can be subjected to dynamic checking such as testing/debugging for specific program inputs.

Figure 5.2 shows the overall software validation flow, where the software is written directly by the programmer. In this case, the software is hand-written and not generated automatically or semiautomatically from a design model. This is often the situation in industrial practice where the design model, even if one exists, is primarily used for the purposes of documentation. We note that in this case, the code is potentially subjected to three kinds of validation:

■ Dynamic checking,

■ Static checking, and

■ Static analysis.

Dynamic checking corresponds to software debugging via software testing: we run test cases, check whether the observed behavior is the same as the expected behavior, and if not, analyze the execution trace(s) for possible reasons. Static checking corresponds to checking predefined properties against a given program; a prime example of a static checking method is model checking (Section 2.8). Recall that model checking verifies a temporal property (a property about the sequence of events in system execution) against a finite-state model of the implementation. In this case, because we wrote the software without any model, the model needs to be extracted from the software, as shown in Figure 5.2. The final kind of validation illustrated in Figure 5.2 is static analysis. Unlike static checking, here we do not have a property to verify; instead, we attempt to infer properties of the program by analyzing it. Typically, we may use static analysis to infer invariants about specific program locations; for example, whenever control flow reaches line 70, the variable v must be 0. These properties can then be exploited in static checking methods such as model checking. In other words, the role of the static analysis methods here is primarily to help methods such as model checking.
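As a rough illustration of what checking a property against a finite-state model involves, the sketch below explores every reachable state of a tiny hand-written model and returns a path to a state that violates a safety property (the simplest kind of temporal property: "a bad state is never reached"). The class `TinySafetyChecker`, the driver model, and all state names are hypothetical and chosen only for this example; real software model checkers extract the model from the code and handle much richer temporal properties.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Collections;
import java.util.Deque;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.function.Predicate;

/** Hypothetical explicit-state checker for properties of the form "no bad state is reachable". */
final class TinySafetyChecker {

    /**
     * Breadth-first search over the transition relation of the model.
     * Returns a shortest path from init to a bad state (a counterexample),
     * or an empty list if no bad state is reachable, i.e. the property holds.
     */
    static List<String> findCounterexample(Map<String, List<String>> transitions,
                                           String init,
                                           Predicate<String> isBad) {
        Map<String, String> parent = new HashMap<>(); // doubles as the visited set
        Deque<String> queue = new ArrayDeque<>();
        parent.put(init, null);
        queue.add(init);
        while (!queue.isEmpty()) {
            String state = queue.remove();
            if (isBad.test(state)) {
                // Walk the parent links back to init to rebuild the trace.
                List<String> path = new ArrayList<>();
                for (String cur = state; cur != null; cur = parent.get(cur)) {
                    path.add(cur);
                }
                Collections.reverse(path);
                return path;
            }
            for (String next : transitions.getOrDefault(state, List.of())) {
                if (!parent.containsKey(next)) {
                    parent.put(next, state);
                    queue.add(next);
                }
            }
        }
        return List.of();
    }

    /** Illustrative (assumed) model of a driver that may acquire a lock twice. */
    static Map<String, List<String>> driverModel() {
        Map<String, List<String>> t = new HashMap<>();
        t.put("idle", List.of("locked"));
        t.put("locked", List.of("writing", "idle"));
        t.put("writing", List.of("idle", "doubleLock")); // the faulty transition
        return t;
    }

    public static void main(String[] args) {
        List<String> trace =
                findCounterexample(driverModel(), "idle", s -> s.equals("doubleLock"));
        System.out.println(trace.isEmpty() ? "property holds" : "violation: " + trace);
    }
}
```

The returned path plays the role of the counterexample trace that a model checker reports when the property fails. An invariant inferred by static analysis could be used in such a search to discard states that can never occur.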

[Figure 5.1: Validation in model-driven software engineering. Diagram labels: Requirements (English); Desirable properties; User; Manual step (twice); Design model (State diagrams?); Alternate models? Sequence diag.; Static checking tools; Code; Tests; Semi-automated; Dynamic checking tools; Testing; Validation output.]

[Figure 5.2: Software engineering without a model: possible validation mechanisms. Diagram labels: Programmer; Code; Test suite; Coverage; Static analyzer; Static checker; Properties; Testing; Dynamic checker; Model; Abstract; Validation output.]

In the rest of this chapter, we elaborate on static and dynamic checking methods with illustrative examples. In the later part of the chapter, we present some hybrid methods that combine static and dynamic checking.

5.1 DYNAMIC OR TRACE-BASED CHECKING

Dynamic checking of software corresponds to checking its behavior for specific test cases. Dynamic checking goes by many other (similar-sounding) names such as run-time monitoring, dynamic analysis, or software debugging. The basic idea in these methods is to run the program against specific tests and compare the observed program behavior against expected program behavior. The tests may have been generated during model validation (Section 2.7), or they could be generated from the program itself through some coverage criterion (such as covering all statements in the program).

If the observed program behavior is different from the expected behavior, the corresponding test case is considered to have failed, and the execution trace for the test case is examined, automatically or manually, to find the cause of failure. It is important to note here that the “observed” and “expected” behavior may not necessarily be given by output variable values. For example, the observed behavior of a program for a given test case may be that the program crashes, and the expected behavior may be the absence of a crash.
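The comparison of observed and expected behavior can be sketched as a very small test harness. This is only an illustrative sketch: the class `DynamicCheckDemo`, the method `runTest`, the program under test (plain integer division), and the expected values (taken from an imagined specification that rounds up and returns 0 for a zero divisor) are all assumptions made for this example. Note that a crash is reported as a failure even though no output value exists to compare.

```java
import java.util.function.IntBinaryOperator;

/** Hypothetical mini harness: run a program on test inputs and compare observed with expected behavior. */
final class DynamicCheckDemo {

    /**
     * Runs one test case and returns a verdict string.
     * An uncaught runtime exception (a crash) counts as a failed test.
     */
    static String runTest(IntBinaryOperator program, int a, int b, int expected) {
        try {
            int observed = program.applyAsInt(a, b);
            if (observed == expected) {
                return "PASS";
            }
            return "FAIL: expected " + expected + ", observed " + observed;
        } catch (RuntimeException e) {
            return "FAIL: crashed with " + e.getClass().getSimpleName();
        }
    }

    public static void main(String[] args) {
        // Program under test (an assumption for this sketch): truncating integer division.
        IntBinaryOperator divide = (a, b) -> a / b;

        // Each row is {input a, input b, expected output} from the imagined specification.
        int[][] tests = { {6, 3, 2}, {7, 2, 4}, {1, 0, 0} };
        for (int[] t : tests) {
            System.out.println("divide(" + t[0] + ", " + t[1] + "): "
                    + runTest(divide, t[0], t[1], t[2]));
        }
    }
}
```

For a failed test, the next step described above is to examine the execution trace; the verdict string here only records how the failure manifested, not where the bug is.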

Economic Importance

Let us illustrate the economic issues that drive interest in software testing and debugging. A report on the “Economic Impacts of Inadequate Infrastructure for Software Testing” published in 2002 by the Research Triangle Institute and the National Institute of Standards and Technology (USA) estimates that the annual cost incurred as a result of an inadequate software testing infrastructure all over the United States amounts to $59.5 billion, about 0.6% of the $10 trillion U.S. GDP.

Industrial studies on quality control of software have indicated high defect densities. Ebnau, in an ACM Crosstalk article (see http://www.stsc.hill.af.mil/crosstalk/1994/06/xt94d06e.asp), reports case studies where on average 13 major errors per 1000 lines of code were reported. These errors were observed via slow code inspection (at 195 lines per hour) by humans. So, in reality, we can expect many more major errors. Nevertheless, let us conservatively fix the defect density at 13 major errors per 1000 lines of code. Now consider a software project with 5 million lines of code (the Windows Vista operating system is 50 million lines of code, so 5 million lines of code is by no means an astronomical figure). Even assuming a linear scaling up of defect counts, this amounts to at least (13 × 5,000,000/1000) = 65,000 major errors. Even if we assume that the average time saved in fixing one error by using an automated debugging tool as opposed to manual debugging is 1 hour (this is a very modest estimate; often, fixing a bug takes a day or two), the time saved is 65,000 man-hours = 65,000/44 ≈ 1477 work weeks = 1477/50 ≈ 30 man-years. Clearly, this is a huge amount of time that a company can save, leading to more productive use of its manpower and to direct cost savings. Assuming an employee salary of $40,000 per year, the foregoing translates to $1.2 million in salary savings simply from using better debugging tools. A much bigger saving, moreover, comes from customer satisfaction. By using automated debugging tools, a software development team can find more bugs than via manual debugging, leading to increased customer confidence and an enhanced reputation for the company’s products. Finally, manual approaches are error-prone, and bugs left undetected can have catastrophic effects in safety-critical systems.
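The estimate above can be reproduced step by step. The sketch below uses only the figures quoted in the text; the 44-hour work week and the 50 work weeks per year are the divisors the text implies, and the class and method names are hypothetical. Work weeks are truncated and man-years are rounded to the nearest whole number, which matches the figures 1477 and 30 in the text.

```java
/** Hypothetical helper that reproduces the back-of-the-envelope savings estimate. */
final class DebuggingSavingsEstimate {

    /** Number of major errors for a code base, assuming defects scale linearly with size. */
    static long majorErrors(long linesOfCode, long errorsPerThousandLines) {
        return errorsPerThousandLines * linesOfCode / 1000;
    }

    /** Whole work weeks contained in the given number of hours (truncating). */
    static long workWeeks(long hours, long hoursPerWeek) {
        return hours / hoursPerWeek;
    }

    /** Man-years for the given number of work weeks, rounded to the nearest whole year. */
    static long manYears(long weeks, long weeksPerYear) {
        return Math.round((double) weeks / weeksPerYear);
    }

    public static void main(String[] args) {
        long errors = majorErrors(5_000_000L, 13);   // 13 major errors per 1000 lines
        long hoursSaved = errors * 1;                // 1 hour saved per error
        long weeks = workWeeks(hoursSaved, 44);      // 44-hour work week
        long years = manYears(weeks, 50);            // 50 work weeks per year
        long salarySavings = years * 40_000L;        // $40,000 salary per year

        System.out.println("major errors:   " + errors);
        System.out.println("hours saved:    " + hoursSaved);
        System.out.println("work weeks:     " + weeks);
        System.out.println("man-years:      " + years);
        System.out.println("salary savings: $" + salarySavings);
    }
}
```

Because every step is linear, changing any single assumption (for example, a day saved per bug rather than an hour) scales the final figure proportionally.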

Related Terminology

To clarify the terminology related to dynamic checking methods, let us start with the “folklore” definition of software bug in Wikipedia:

A software bug (or just “bug”) is an error, flaw, mistake, “undocumented feature,” failure, or fault in a computer program that prevents it from behaving as intended (e.g., producing an incorrect result). Most bugs arise from mistakes and errors made by people in either a program’s source code or its design, and a few are caused by compilers producing incorrect code. A program that contains a large number of bugs, and/or bugs that seriously interfere with its functionality, is said to be buggy. Reports detailing bugs in a program are commonly known as bug reports, fault reports, problem reports, trouble reports, change requests, and so forth.

The conventional notion of a software bug is an error in the program that gets introduced during the software construction. It is worthwhile to note that the manifestation of a bug may be very different from the bug itself. Thus, the main task in software debugging is to trace back to the software bug from the manifestation of it. A good bug report will be able to take in a manifestation of a bug and locate the bug. In case this sounds unclear, let us consider the following program fragment marked with line numbers, written in Java style:

    1. void setRunningVersion(boolean runningVersion)
    2.   if( runningVersion ) {
    3.     savedValue = value;
         }
         else{
    4.     savedValue = "";
         }
