# Code of [Detecting Visual Relationships with Deep Relational Networks](https://arxiv.org/abs/1704.03114)

The code is written in Python, and all networks are implemented using [Caffe](https://github.com/BVLC/caffe).

## Datasets

* [VRD](http://cs.stanford.edu/people/ranjaykrishna/vrd/dataset.zip)

* sVG: subset of [Visual Genome](https://visualgenome.org/)
  - [Link](https://drive.google.com/file/d/0B5RJWjAhdT04SXRfVHBKZ0dOTzQ/view?usp=sharing&resourcekey=0-bW_W0QVJOfaNs5NyGjDjbQ)
  - Images can be downloaded from the Visual Genome website.
  - Remarks: I did not find time to clean this subset further. It has a manually cleaned list of relationship predicates. The list of objects may need further cleaning, although Faster-RCNN can reach a recall@20 of around 50%.
  - Using our method, you can get the corresponding results reported in the paper on this dataset.

## Networks

This repo contains three kinds of networks. All of them compute a raw response for the predicate from both appearance cues and spatial cues, then refine it according to the responses of the subject, the object, and the predicate.

The networks are designed for the task of predicate recognition, where ground-truth labels of the subject and the object are provided as inputs. In these networks, the responses of the subject and the object are therefore replaced with indicator vectors, and only the response of the predicate is refined.

In these networks, the subnet for appearance cues is VGG16, and the subnet for spatial cues consists of three conv layers. The outputs of both subnets are combined via a customized concatenate layer, followed by two fc layers that generate the raw response for the predicate.

The customized concatenate layer combines the output of an fc layer with the channels of the output of a conv layer. It can be replaced with Caffe's Concat layer if the last conv layer in the spatial subnet (conv3_p) is replaced with an equivalent fc layer.
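As a rough illustration of what such a concatenation does, here is a minimal NumPy sketch. It is not the code in lib/customize_layers; the function name, the feature sizes, and the choice to flatten the conv output per sample are all assumptions made for the example.

```python
import numpy as np

def concat_fc_and_conv(fc_out, conv_out):
    """Join an fc output (N, C_fc) with a conv output (N, C_conv, H, W).

    Assumption: the conv feature map is flattened per sample before joining.
    """
    n = fc_out.shape[0]
    if conv_out.shape[0] != n:
        raise ValueError("batch sizes differ")
    conv_flat = conv_out.reshape(n, -1)          # (N, C_conv * H * W)
    return np.concatenate([fc_out, conv_flat], axis=1)

# Hypothetical sizes: a 4096-d appearance feature and a 64-channel 1x1 spatial map.
fc_out = np.zeros((2, 4096), dtype=np.float32)
conv_out = np.ones((2, 64, 1, 1), dtype=np.float32)
joined = concat_fc_and_conv(fc_out, conv_out)
print(joined.shape)
```

When the conv output is already 1x1 spatially, this reduces to a plain channel-wise concatenation, which is why an fc replacement for the last conv layer would let a standard Concat layer do the same job.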

The details of these networks are:

* drnet_8units_softmax: it has 8 inference units with softmax as the activation function.

* drnet_8units_linear_shareweight: it has 8 inference units with no activation function, and the weights are shared across units.

* drnet_8units_relu_shareweight: it has 8 inference units with ReLU as the activation function, and the weights are shared across units.
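The difference between the three variants can be sketched in NumPy as a chain of inference units that repeatedly refine the predicate response. The update rule below (raw response plus linear terms from the subject indicator, the object indicator, and the previous predicate response) is an assumption made for illustration; the exact form is defined in the prototxts. All names and sizes are hypothetical.

```python
import numpy as np

def softmax(x):
    e = np.exp(x - np.max(x))
    return e / e.sum()

def relu(x):
    return np.maximum(x, 0.0)

def refine_predicate(raw, subj, obj, units, activation=None):
    """Refine a raw predicate response through a chain of inference units.

    raw: (P,) raw predicate response; subj, obj: (C,) one-hot indicator vectors.
    units: list of (W_rs, W_ro, W_rr) tuples, one per unit.
    Passing the same tuple repeatedly corresponds to sharing weights across units.
    """
    q = raw
    for W_rs, W_ro, W_rr in units:
        z = raw + W_rs @ subj + W_ro @ obj + W_rr @ q   # assumed update rule
        q = z if activation is None else activation(z)
    return q

rng = np.random.default_rng(0)
P, C = 5, 3  # hypothetical numbers of predicate and object classes

def random_unit():
    return (rng.normal(size=(P, C)), rng.normal(size=(P, C)), 0.1 * rng.normal(size=(P, P)))

raw = rng.normal(size=P)
subj, obj = np.eye(C)[0], np.eye(C)[2]

# softmax variant: 8 units, each with its own weights
q_soft = refine_predicate(raw, subj, obj, [random_unit() for _ in range(8)], softmax)
# linear and ReLU variants: 8 units sharing one set of weights
shared = random_unit()
q_lin = refine_predicate(raw, subj, obj, [shared] * 8)
q_relu = refine_predicate(raw, subj, obj, [shared] * 8, relu)
```

Because the subject and the object enter only as fixed indicator vectors, only the predicate vector `q` changes from unit to unit.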

### Training

Training proceeds component by component. A network usually contains three components: the subnet for appearance (A), the subnet for spatial cues (S), and the drnet for statistical dependencies (D). We train the network as follows:

* train A in isolation

* train S in isolation

* train A + S together (without D), with weights initialized from the previous steps

* train A + S + D jointly, with weights initialized from the previous steps
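The schedule above can be written down as a small stage table. The sketch below is plain Python with hypothetical stage names; it only works out which earlier stage each component's weights would come from and does not call Caffe.

```python
# Hypothetical stage table mirroring the four training steps.
STAGES = [
    {"name": "A", "parts": {"A"}},
    {"name": "S", "parts": {"S"}},
    {"name": "A+S", "parts": {"A", "S"}},
    {"name": "A+S+D", "parts": {"A", "S", "D"}},
]

def init_sources(stages):
    """For each stage, map each component to the latest earlier stage that trained it."""
    latest = {}  # component -> name of the last stage that trained it
    plan = {}
    for stage in stages:
        plan[stage["name"]] = {
            part: latest[part] for part in sorted(stage["parts"]) if part in latest
        }
        for part in stage["parts"]:
            latest[part] = stage["name"]
    return plan

plan = init_sources(STAGES)
for name, sources in plan.items():
    print(name, "<-", sources or "no earlier stage in this procedure")
```

In pycaffe, this kind of initialization is typically done by calling `copy_from` on the solver's net with the snapshot of the earlier stage, which copies weights for layers with matching names.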

Every step uses the same loss, and we use dropout to reduce overfitting.

### Recalls on Predicate Recognition

| Networks | Recall@50 | Recall@100 |
| --- | :---: | :---: |
| drnet_8units_softmax | 75.22 | 77.55 |
| drnet_8units_linear_shareweight | 78.57 | 79.94 |
| drnet_8units_relu_shareweight | 80.86 | 81.83 |
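For readers unfamiliar with the metric, the following is a minimal sketch of how Recall@K is commonly computed for this task. It is not the evaluation code in tools/; the data layout (scored predictions per image, ground truth as a set of pair/predicate tuples) is an assumption made for the example.

```python
def recall_at_k(predictions, ground_truth, k):
    """Percentage of ground-truth relationships found among the top-k predictions per image.

    predictions: {image_id: [(score, pair_id, predicate), ...]}
    ground_truth: {image_id: set of (pair_id, predicate)}
    """
    hits = total = 0
    for image_id, gt in ground_truth.items():
        ranked = sorted(predictions.get(image_id, []), key=lambda p: p[0], reverse=True)
        top = {(pair_id, predicate) for _, pair_id, predicate in ranked[:k]}
        hits += len(gt & top)
        total += len(gt)
    return 100.0 * hits / total if total else 0.0

# Toy data: one image with two object pairs (ids 0 and 1).
preds = {"img1": [(0.9, 0, "on"), (0.8, 1, "next to"), (0.3, 0, "under"), (0.2, 1, "behind")]}
gt = {"img1": {(0, "on"), (1, "behind")}}
r2 = recall_at_k(preds, gt, 2)
r4 = recall_at_k(preds, gt, 4)
```

In this toy case only one of the two ground-truth relationships is among the two highest-scoring predictions, while all of them are covered once four predictions are kept.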

## Codes

* lib/: Python layers, as well as auxiliary files for evaluation

* prototxts/: training and testing prototxts

* tools/: Python scripts for preparing data and evaluation

* snapshots/: pretrained models

## Finetune or Evaluate

1. Download the dataset [VRD](https://github.com/Prof-Lu-Cewu/Visual-Relationship-Detection)

2. Preprocess the dataset using tools/prepare_data.py

3. Download one of the pretrained models in snapshots/

4. Finetune or evaluate using the corresponding prototxts in prototxts/

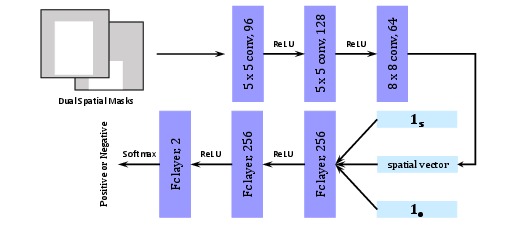

## Pair Filter

### Structure

### Training

To train this network, we randomly sample pairs of bounding boxes (with labels) from each training image. Pairs that have an IoU of 0.5 or above with any ground-truth pair carrying the same labels are treated as positive samples, and the rest as negative samples.
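The labeling rule can be sketched in plain Python as follows. One point is an assumption here: a sampled pair is taken to match a ground-truth pair when both its subject box and its object box each reach the IoU threshold against the corresponding ground-truth box. The tuple layout and function names are hypothetical.

```python
def iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2)."""
    iw = min(a[2], b[2]) - max(a[0], b[0])
    ih = min(a[3], b[3]) - max(a[1], b[1])
    if iw <= 0 or ih <= 0:
        return 0.0
    inter = iw * ih
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    return inter / (area_a + area_b - inter)

def is_positive(pair, gt_pairs, thresh=0.5):
    """pair and each ground-truth pair: (subj_label, subj_box, obj_label, obj_box)."""
    s_lab, s_box, o_lab, o_box = pair
    for g_s_lab, g_s_box, g_o_lab, g_o_box in gt_pairs:
        if (s_lab, o_lab) != (g_s_lab, g_o_lab):
            continue  # labels must agree with the ground-truth pair
        # Assumption: both boxes must overlap their ground-truth counterparts.
        if iou(s_box, g_s_box) >= thresh and iou(o_box, g_o_box) >= thresh:
            return True
    return False

gt_pairs = [("person", (0, 0, 10, 10), "horse", (5, 5, 20, 20))]
good = ("person", (1, 1, 10, 10), "horse", (5, 5, 19, 20))
bad = ("person", (6, 6, 10, 10), "horse", (5, 5, 20, 20))
labels = [is_positive(p, gt_pairs) for p in (good, bad)]
```

Here `good` overlaps both ground-truth boxes well and is labeled positive, while `bad` has a subject box that covers too little of the ground-truth subject and is labeled negative.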

## Citation

If you use this code, please cite the following paper(s):

@inproceedings{dai2017detecting,
  title={Detecting Visual Relationships with Deep Relational Networks},
  author={Dai, Bo and Zhang, Yuqi and Lin, Dahua},
  booktitle={Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition},
  year={2017}
}

## License

This code is for research use only. See LICENSE for details.
