248

• 2023 IEEE International Solid-State Circuits Conference

ISSCC 2023 / SESSION 16 / EFFICIENT COMPUTE-IN-MEMORY BASED PROCESSORS FOR ML / 16.1

16.1 MulTCIM: A 28nm 2.24μJ/Token Attention-Token-Bit Hybrid

Sparse Digital CIM-Based Accelerator for Multimodal

Transformers

Fengbin Tu, Zihan Wu, Yiqi Wang, Weiwei Wu, Leibo Liu, Yang Hu,

Shaojun Wei, Shouyi Yin

Tsinghua University, Beijing, China

Human perception is multimodal and able to comprehend a mixture of vision, natural

language, speech, etc. Multimodal Transformer (MulT, Fig. 16.1.1) models introduce a

cross-modal attention mechanism to vanilla transformers to learn from different

modalities, achieving excellent results on multimodal AI tasks like video question

answering and multilingual image retrieval. Transformers require specialized hardware

for efficient inference [1]. Prior work demonstrates that a Compute-In-Memory (CIM)

accelerator with attention sparsity can efficiently process vanilla transformers [2].

Multimodal signals like video and audio exhibit diverse token significance, providing new

opportunities for token sparsity via runtime pruning [3]. Additionally, activation functions

like GELU and softmax produce many near-zero values that expose bit sparsity in the

most-significant bits (MSB). In utilizing attention-token-bit hybrid sparsity, there are

three challenges: 1) For attention sparsity, irregular patterns result in long reuse distance,

which requires CIM to hold infrequently used weights, lowering CIM utilization. 2)

Although token sparsity reduces computation, MulT’s cross-modal attention processes

tokens from two modalities with different token lengths (N) and embedding

dimensionality (d

m

), causing high latency in cross-modal switch. 3) At the bit level, since

token sparsity reduces value locality, a CIM macro has more variance in effective bitwidth

for the same group of inputs. In a conventional CIM’s bit-serial MAC scheme,

computation time is defined by the longest bitwidth.

We propose a digital CIM-based MulT model accelerator MulTCIM, with three features

to tackle the hybrid sparsity challenges: 1) Instead of generating Q, K, V tokens in order,

we implement a Long Reuse Elimination Scheduler (LRES) to dynamically reshape the

attention matrix like a global+local sparse pattern. With this approach, weights stored in

the CIM can be reused more frequently to improve CIM utilization. 2) We design a

Runtime Token Pruner (RTP) to remove insignificant tokens and a Modal-Adaptive CIM

Network (MACN) to dynamically divide all CIM cores into two pipeline stages, StageS

for static matrix multiplication (MM) in Q, K and V generation, and StageD for dynamic

MM of attention computation. In cross-modal switch, MACN further exploits modal

symmetry to overlap Q, K generation for lower latency. 3) An Effective Bitwidth Balanced

CIM (EBB-CIM) architecture is designed to balance input bits across in-memory MACs

by performing effective bitwidth detection and bit equalization, reducing the computation

time.

Figure 16.1.2 shows the MulTCIM accelerator, comprising a MACN with 16 CIM cores,

an RTP, an LRES, a 64KB input buffer (IB), a 128KB global buffer (GB), a SIMD core and

a top controller. CIM cores are connected by the MACN’s pipeline bus, and each core

has 8 EBB-CIM macros. The controller configures the MACN with current layer

parameters. For fully-connected layers, all CIM cores store layer weights and work in

parallel. For attention layers (QK

T

-MM, A’V-MM), CIM cores work in pipeline mode similar

to [2] for less off-chip access. Take QK

T

-MM for example. RTP first prunes insignificant

input tokens. LRES then configures sparse token and attention scheduling for IB and

MACN’s StageD. The cores in StageS store weight matrices (W

Q

, W

K

), and load inputs

from IB to generate Q, K. The output q, k vectors are merged in the StageS adder and

streamed to StageD. LRES controls the StageS output’s destination core in StageD for

weight writing or input feed. Specifically for cross-modal attention, MACN utilizes the

modal workload allocator (MWA) for adjusting the StageS workload during cross-modal

switch. The MACN outputs are finally stored in the GB with activation functions performed

in the SIMD core.

Figure 16.1.3 illustrates LRES that optimizes for attention sparsity. LRES comprises an

attention sparsity manager, a local attention sorter and a reshaped attention generator.

The manager stores the sparse attention pattern obtained by training. After runtime token

pruning by the RTP, the manager compares the pattern’s row/column-wise sums to

select global-like attention. The corresponding q or k vectors are reused as weights in

StageD’s CIM for a long time. The local attention sorter forms a local-like pattern for the

rest of the attention with k as weights and q as inputs in StageD’s CIM. Local-like means

k is frequently consumed and dynamically replaced by new k from StageS. The pattern

is first row-wise reordered via the similarity-based q-sorter to decide the input feed

sequence with minimum reuse distance for the current k. Then, the difference-based k-

sorter reorders the pattern column-wise to decide the weight writing sequence. The

reshaped attention generator sends configurations to the IB for token feed and StageD

for workload assignment. In the example QK

T

-MM, LRES achieves 4.16× higher CIM

utilization for StageD and 2.39× total speedup over the conventional in-order computing

approach.

Figure 16.1.4 depicts the RTP and MACN blocks that optimize for token sparsity. Since

the class (CLS) token characterizes other tokens’ significance [3], the RTP receives the

previous layer’s CLS score and selects the current layer’s top-n most-significant tokens.

The MACN comprises an MWA, 16 CIM cores and a pipeline bus. The MWA updates

pruning information for the current layer and allocates the StageS workload. Initially, the

MWA divides CIM cores into StageS and StageD, and pre-distributes weights for StageS

based on the allocation table. In cross-modal attention, conventional methods compute

one modality after another. Different modal parameters lead to many idle CIM macros in

cross-modal switch. The MWA exploits modal symmetry to overlap multimodal Q, K

generation. The CIM’s 4:1 activation structure stores multimodal weights in one macro

and switches modality by time multiplexing. Core1 stores W

QX

and W

QY

in the example.

At cycle N

X

, the MACN switches to Phase2 and Core1 activates W

QY

to generate Q

Y

instead

of staying idle. Modal symmetry makes Q

Y

, K

X

generation conclude at the same time with

better CIM utilization. The RTP reduces latency by 2.13× and 1.58× for single- and cross-

modal attention, and symmetric modal overlap offers an extra 1.69× speedup for

cross-modal attention.

Figure 16.1.5 shows the EBB-CIM macro that optimizes for bit sparsity. It comprises 32

EBB-CIM arrays, an effective bitwidth detector, a bit equalizer and a bit-balanced feeder.

Each EBB-CIM array has 4×64 6T-SRAM bitcells (8 banks) and a cross-shift MAC tree.

We exploit a full-digital CIM architecture with 4 rows of time-sharing CIM logic (4:1

activation) to achieve high computing accuracy at INT16, while maintaining memory

density [4]. The detector receives inputs and detects effective bitwidth (EB) at runtime.

The bit equalizer calculates the average EB, assigns the long-EB data’s bits to the short-

EB data, producing a bit-balanced input sequence. The bit-balanced feeder fetches the

sequence and generates cross-shift configurations. The example shows INT8

multiplication of 8-element input and weight vectors. The MAC tree cross-shifts 8 weights

in a row to multiply 8 input bits at the correct position. At cycle 0, the cross-shift MAC

computes I

0

[3]×W

0

+I

3

[6]×(W

3

<<3)+I

3

[4]×(W

3

<<1)+I

3

[3]×W

3

+...+I

6

[4]×(W

6

<<1) by

balancing long-EB W

3

and W

6

, thereby avoiding wasting in-memory MACs. The bit

equalizer limits weight shifting within 4 bits for lower cost in memory. The EBB-CIM is

reconfigurable for INT16 by fusing every two INT8 operations. Compared to conventional

bit-serial CIM, the EBB-CIM reduces latency by 2.38×, 2.20×, and 1.58× for softmax-

MM, GELU-MM, and the entire encoder, respectively, with only 5.1% power and 4.6%

area overhead.

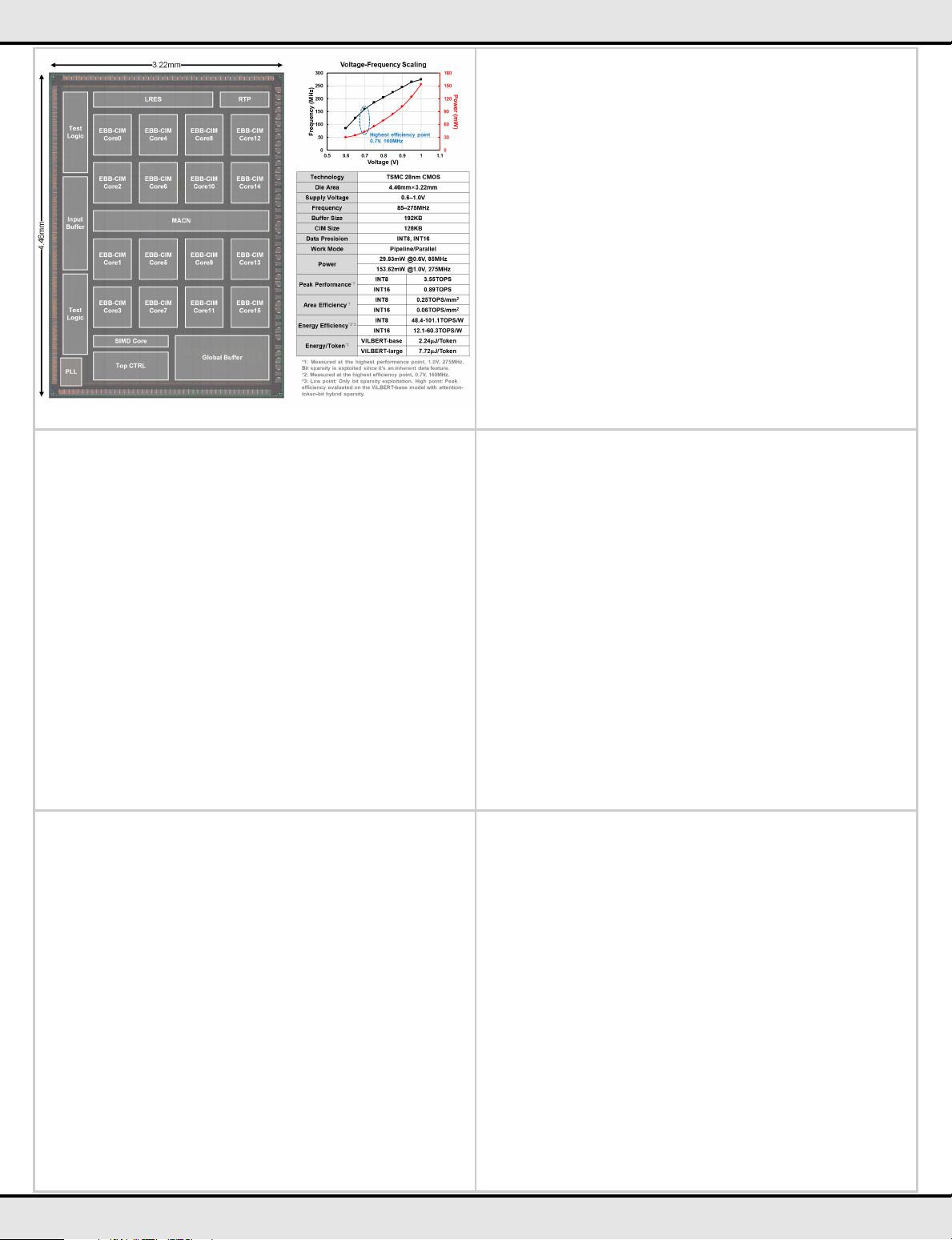

Figure 16.1.6 shows the measurement results for the 28nm MulTCIM accelerator. The

chip works at 0.6-1.0V supply, corresponding to 85-275MHz. MulTCIM supports general

transformer models with special optimizations for MulT. Experiments are conducted on

2 typical MulT models, ViLBERT-base and ViLBERT-large [5], with the Visual Question

Answering v2.0 Dataset. Hybrid sparse techniques obtain 9.47× speedup and 8.11×

energy savings on ViLBERT-base’s attention layers, achieving 6.54× speedup and 5.61×

energy savings on the entire model. The negligible accuracy loss mainly comes from

INT8/16 quantization. The peak energy efficiency is 101.1TOPS/W for INT8 and

60.3TOPS/W for INT16 at 0.7V, 160MHz. Compared with prior transformer and digital

CIM accelerators, MulTCIM consumes 2.24μJ/Token for the ViLBERT-base model – an

energy reduction of 5.91× over [1] and 5.61× over [2]. Owing to the hybrid sparsity

exploitation, MulTCIM achieves 2.50× higher efficiency than the more advanced 5nm

digital CIM architecture [4]. MulTCIM’s die photo, voltage-frequency scaling curves and

summary table are shown in Fig. 16.1.7.

Acknowledgement:

This work was supported in part by NSFC Grant 62125403, Grant U19B2041, and Grant

92164301; in part by the National Key Research and Development Program under Grant

2021ZD0114400; in part by Beijing National Research Center for Information Science

and Technology; and, in part by the Beijing Advanced Innovation Center for Integrated

Circuits. The corresponding author of this paper is Shouyi Yin (yinsy@tsinghua.edu.cn).

References:

[1] Y. Wang et al., “A 28nm 27.5TOPS/W Approximate-Computing-Based Transformer

Processor with Asymptotic Sparsity Speculating and Out-of-Order Computing,” ISSCC,

pp. 464-465, 2022.

[2] F. Tu et al., “A 28nm 15.59μJ/Token Full-Digital Bitline-Transpose CIM-Based Sparse

Transformer Accelerator with Pipeline/Parallel Reconfigurable Modes,” ISSCC, pp. 466-

467, 2022.

[3] Y. Xu et al., “Evo-ViT: Slow-Fast Token Evolution for Dynamic Vision Transformer,”

AAAI, pp, 2964-2972, 2022.

[4] H. Fujiwara et al., “A 5-nm 254-TOPS/W 221-TOPS/mm

2

Fully-Digital Computing-in-

Memory Macro Supporting Wide-Range Dynamic-Voltage-Frequency Scaling and

Simultaneous MAC and Write Operations,” ISSCC, pp. 186-187, 2022.

[5] J. Lu et al., “ViLBERT: Pretraining Task-Agnostic Visiolinguistic Representations for

Vision-and-Language Tasks,” Conf. on Neural Information Processing, 2019.

978-1-6654-9016-0/23/$31.00 ©2023 IEEE

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

前往需求广场,查看用户热搜

前往需求广场,查看用户热搜 信息提交成功

信息提交成功