
# RobMOTS Official Evaluation Code

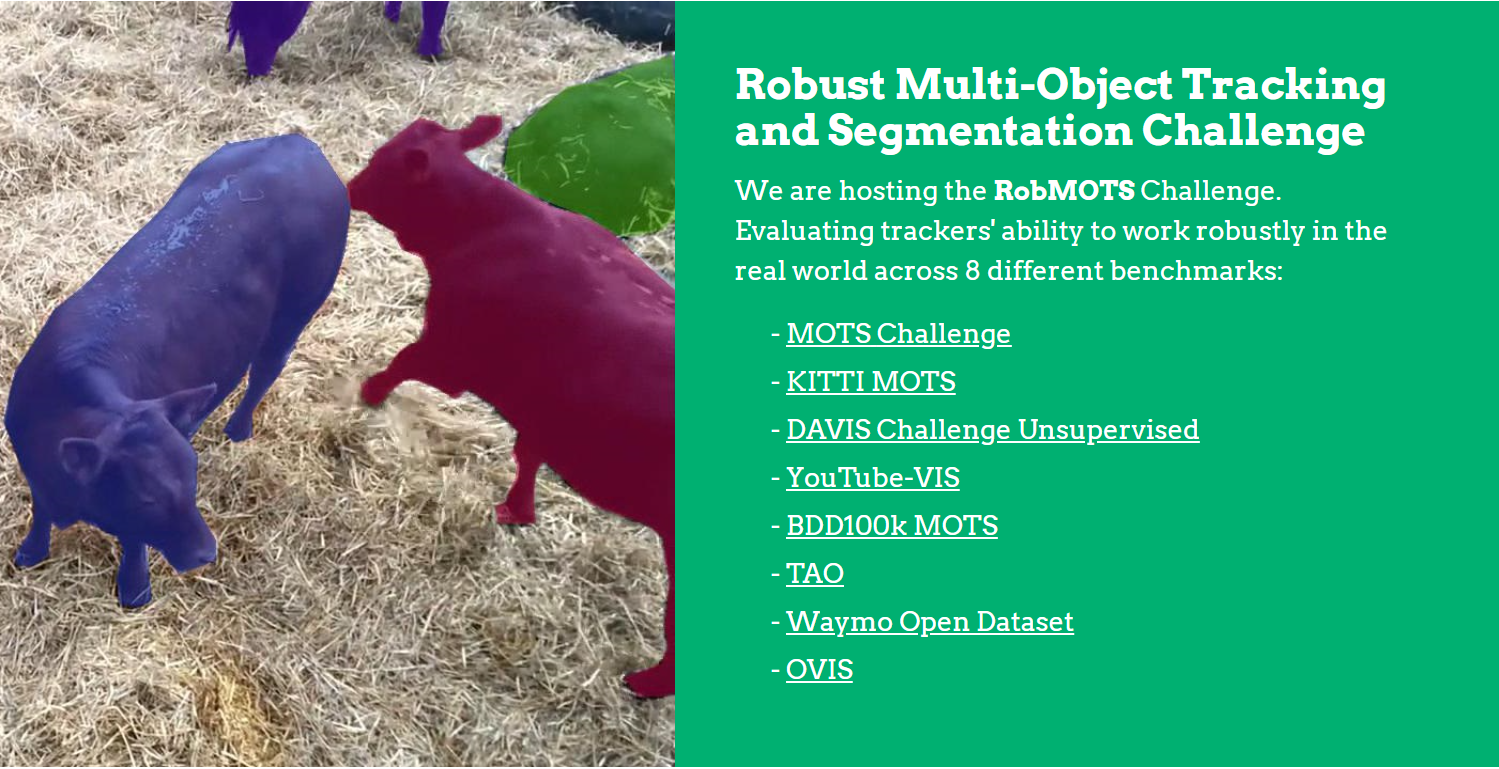

### NEWS: [RobMOTS Challenge](https://eval.vision.rwth-aachen.de/rvsu-workshop21/?page_id=110) for the [RVSU CVPR'21 Workshop](https://eval.vision.rwth-aachen.de/rvsu-workshop21/) is now live! Challenge deadline: June 15.

### NEWS: [Call for short papers](https://eval.vision.rwth-aachen.de/rvsu-workshop21/?page_id=74) (4 pages) on tracking and other video topics for the [RVSU CVPR'21 Workshop](https://eval.vision.rwth-aachen.de/rvsu-workshop21/)! Paper deadline: June 4.

TrackEval is now the Official Evaluation Kit for the RobMOTS Challenge.

This repository contains the official evaluation code for the challenges available at the [RobMOTS Website](https://eval.vision.rwth-aachen.de/rvsu-workshop21/?page_id=110).

The RobMOTS Challenge tests trackers' ability to work robustly across 8 different benchmarks, while tracking the [80 categories of objects from COCO](https://cocodataset.org/#explore).

The following benchmarks are included:

| Benchmark | Website |
|----- | ----------- |
| MOTS Challenge | https://motchallenge.net/results/MOTS/ |
| KITTI-MOTS | http://www.cvlibs.net/datasets/kitti/eval_mots.php |
| DAVIS Challenge Unsupervised | https://davischallenge.org/challenge2020/unsupervised.html |
| YouTube-VIS | https://youtube-vos.org/dataset/vis/ |
| BDD100k MOTS | https://bdd-data.berkeley.edu/ |
| TAO | https://taodataset.org/ |
| Waymo Open Dataset | https://waymo.com/open/ |
| OVIS | http://songbai.site/ovis/ |

## Installing, obtaining the data, and running

Simply follow the code snippet below to install the evaluation code, download the train groundtruth data and an example tracker, and run the evaluation code on the sample tracker.

Note that the code requires Python 3.5 or higher.

```
# Download the TrackEval repo
git clone https://github.com/JonathonLuiten/TrackEval.git

# Move to repo folder
cd TrackEval

# Create a virtual env in the repo for evaluation
python3 -m venv ./venv

# Activate the virtual env
source venv/bin/activate

# Update pip to the latest version
pip install --upgrade pip

# Install the required packages
pip install -r requirements.txt

# Download the train gt data
wget https://omnomnom.vision.rwth-aachen.de/data/RobMOTS/train_gt.zip

# Unzip the train gt data you just downloaded
unzip train_gt.zip

# Download the example tracker
wget https://omnomnom.vision.rwth-aachen.de/data/RobMOTS/example_tracker.zip

# Unzip the example tracker you just downloaded
unzip example_tracker.zip

# Run the evaluation on the provided example tracker on the train split (using 4 cores in parallel)
python scripts/run_rob_mots.py --ROBMOTS_SPLIT train --TRACKERS_TO_EVAL STP --USE_PARALLEL True --NUM_PARALLEL_CORES 4
```

You can also download the raw sequence images and the supplied detections (as well as the train GT data and example tracker) via the ```Data Download``` link here:

[RobMOTS Challenge Info](https://eval.vision.rwth-aachen.de/rvsu-workshop21/?page_id=110)

## Accessing tracking evaluation results

You will find the results of the evaluation (for the supplied tracker STP) in the folder ```TrackEval/data/trackers/rob_mots/train/STP/```.

The overall summary of the results is in ```./final_results.csv```, while more detailed per-sequence and per-class results, as well as result plots, can be found under ```./results/*```.

The ```final_results.csv``` file is most easily read by opening it in Excel or similar. The prefixes ```c```, ```d``` and ```f``` on the metric names stand for ```class averaged```, ```detection averaged (class agnostic)``` and ```final``` respectively, where the final score is the geometric mean of the class-averaged and detection-averaged scores.
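As a small illustration of how the three prefixes relate, the sketch below decodes a prefixed metric name and recomputes a final score from its class-averaged and detection-averaged counterparts. The helper names are hypothetical, and the assumption that a column is named exactly as prefix plus metric name (e.g. a made-up `fMETRIC`) should be checked against your own `final_results.csv`.

```python
import math
from typing import Tuple

# Prefix meanings as described above (the column naming itself is an assumption).
PREFIXES = {
    "c": "class averaged",
    "d": "detection averaged (class agnostic)",
    "f": "final",
}


def split_metric_name(name: str) -> Tuple[str, str]:
    """Split a prefixed metric name such as 'fMETRIC' into ('final', 'METRIC')."""
    prefix, base = name[:1], name[1:]
    if prefix not in PREFIXES or not base:
        raise ValueError("unrecognised metric name: %r" % name)
    return PREFIXES[prefix], base


def final_score(class_avg: float, det_avg: float) -> float:
    """Final score: geometric mean of the class- and detection-averaged scores."""
    if class_avg < 0 or det_avg < 0:
        raise ValueError("scores must be non-negative")
    return math.sqrt(class_avg * det_avg)
```

For example, a class-averaged score of 0.64 and a detection-averaged score of 0.25 give a final score of approximately 0.4. Using a geometric rather than an arithmetic mean means a tracker cannot compensate for a very low score on one axis with a high score on the other.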

## Supplied Detections

To make creating your own tracker particularly easy, we supply a set of strong detections.

These detections come from the Detectron2 Mask R-CNN X152 model (the model at the very bottom of this [page](https://github.com/facebookresearch/detectron2/blob/master/MODEL_ZOO.md)), which achieves a COCO detection mAP of 50.2.

We then obtain segmentation masks for these detections using the Box2Seg Network (also called Refinement Net), which results in far more accurate masks than the default Mask R-CNN masks. The code for this can be found [here](https://github.com/JonathonLuiten/PReMVOS/tree/master/code/refinement_net).

We supply two different sets of detections. The first is the ```raw_supplied``` detections: all 1000 detections output by the Mask R-CNN, with only those whose maximum class score is below 0.02 removed (no non-maximum suppression, NMS, is run here). These can be downloaded [here](https://eval.vision.rwth-aachen.de/rvsu-workshop21/?page_id=110).
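The score filter behind ```raw_supplied``` can be sketched as follows. This assumes, hypothetically, that each detection comes with a list of per-class scores; the actual file format of the supplied detections is not described here, so see the Tracker Example for how they are really read. `keep_raw_detections` is an illustrative name only.

```python
from typing import List, Sequence

# Score threshold used for the raw_supplied detections, as described above.
RAW_SCORE_THRESHOLD = 0.02


def keep_raw_detections(class_scores: Sequence[Sequence[float]],
                        threshold: float = RAW_SCORE_THRESHOLD) -> List[int]:
    """Return indices of detections whose maximum class score is >= threshold.

    class_scores[i] holds the per-class scores of detection i (hypothetical layout).
    No non-maximum suppression is applied at this stage.
    """
    return [i for i, scores in enumerate(class_scores)
            if scores and max(scores) >= threshold]
```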

The second is the ```non_overlap_supplied``` detections. These are the same detections as above, with two further processing steps applied. First, we perform Non-Maximum Suppression (NMS) with a threshold of 0.5, removing any mask that has an IoU of 0.5 or more with another mask that has a higher score. Second, we run a Non-Overlap algorithm which forces all of the masks in a single image to be non-overlapping. It does this by stacking the masks 'on top of' each other in order of score, so that a lower-scoring mask is partially removed wherever a higher-scoring mask overlaps it. Note that these detections are still only thresholded at a score of 0.02; in general, we recommend thresholding them further at a higher value to get a good balance of precision and recall.
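To make the two processing steps concrete, here is a minimal sketch in plain Python. It assumes a hypothetical mask representation (a frozenset of `(row, col)` foreground pixels) rather than the encoding used in the real data, and it uses standard greedy NMS, which compares each mask only against masks that have already been kept. The linked `non_overlap.py` is the reference implementation and may differ in details.

```python
from typing import FrozenSet, List, Sequence, Tuple

Mask = FrozenSet[Tuple[int, int]]  # hypothetical: a mask as a set of (row, col) pixels


def mask_iou(a: Mask, b: Mask) -> float:
    """Intersection over union of two masks."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def mask_nms(masks: Sequence[Mask], scores: Sequence[float],
             iou_threshold: float = 0.5) -> List[int]:
    """Greedy NMS: visit masks by descending score and drop any mask whose IoU
    with an already-kept mask is >= iou_threshold. Returns the kept indices."""
    order = sorted(range(len(masks)), key=lambda i: scores[i], reverse=True)
    kept = []
    for i in order:
        if all(mask_iou(masks[i], masks[j]) < iou_threshold for j in kept):
            kept.append(i)
    return kept


def make_non_overlapping(masks: Sequence[Mask],
                         scores: Sequence[float]) -> List[Mask]:
    """Stack masks by descending score so that each pixel is kept only by the
    highest-scoring mask covering it. Output is in the input order."""
    order = sorted(range(len(masks)), key=lambda i: scores[i], reverse=True)
    taken = set()  # pixels already claimed by higher-scoring masks
    result = [frozenset()] * len(masks)
    for i in order:
        result[i] = frozenset(masks[i] - taken)  # keep only unclaimed pixels
        taken |= masks[i]
    return result
```

In this sketch the Non-Overlap step would be run on the masks that survive NMS, e.g. `make_non_overlapping([masks[i] for i in kept], [scores[i] for i in kept])`.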

Code for this NMS and Non-Overlap algorithm can be found here:

[Non-Overlap Code](https://github.com/JonathonLuiten/TrackEval/blob/master/trackeval/baselines/non_overlap.py).

Note that for RobMOTS evaluation the final tracking results must be non-overlapping, so we recommend using the ```non_overlap_supplied``` detections; however, you may use the ```raw_supplied``` detections, your own, or any other detections as you like.
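Before evaluating, you may want to verify that your own results satisfy the non-overlap requirement. A minimal per-frame check, again assuming a hypothetical frozenset-of-pixels mask representation, could look like this:

```python
from typing import FrozenSet, Iterable, Tuple

Mask = FrozenSet[Tuple[int, int]]  # hypothetical: a mask as a set of (row, col) pixels


def is_non_overlapping(frame_masks: Iterable[Mask]) -> bool:
    """Return True if no pixel of this frame is covered by more than one mask."""
    seen = set()  # union of all masks checked so far
    for mask in frame_masks:
        if not seen.isdisjoint(mask):
            return False  # this mask shares at least one pixel with an earlier one
        seen |= mask
    return True
```

Accumulating a running union keeps the check linear in the total number of mask pixels, instead of comparing every pair of masks.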

Supplied detections (both raw and non-overlapping) are available for the train, val and test sets.

Example code for reading in these detections and using them can be found here:

[Tracker Example](https://github.com/JonathonLuiten/TrackEval/blob/master/trackeval/baselines/stp.py).

## Creating your own tracker

We provide sample code ([Tracker Example](https://github.com/JonathonLuiten/TrackEval/blob/master/trackeval/baselines/stp.py)) for our STP tracker (Simplest Tracker Possible), which walks through how to create tracking results in the required RobMOTS format.

This includes code for reading in the supplied detections and writing out the tracking results in the required format, plus many other useful functions (IoU calculation, etc.).
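For orientation only, here is a hypothetical sketch of the core of a very simple tracker: masks in consecutive frames are linked greedily by mask IoU, and any unmatched mask starts a new track. This is not claimed to be how STP works (see the Tracker Example for that); the function names, the 0.5 threshold and the frozenset-of-pixels mask representation are all assumptions.

```python
from typing import FrozenSet, List, Sequence, Tuple

Mask = FrozenSet[Tuple[int, int]]  # hypothetical: a mask as a set of (row, col) pixels


def mask_iou(a: Mask, b: Mask) -> float:
    """Intersection over union of two masks."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def assign_track_ids(frames: Sequence[Sequence[Mask]],
                     iou_threshold: float = 0.5) -> List[List[int]]:
    """Greedily link masks across consecutive frames by IoU.

    Returns, for each frame, the track ID of each mask (in the frame's mask order).
    Masks not matched to a mask in the previous frame start a new track.
    """
    next_id = 0
    prev = []  # (mask, track_id) pairs from the previous frame
    all_ids = []
    for masks in frames:
        # All (iou, current index, previous index) candidates, best IoU first.
        pairs = sorted(((mask_iou(m, pm), i, j)
                        for i, m in enumerate(masks)
                        for j, (pm, _) in enumerate(prev)),
                       reverse=True)
        ids = [-1] * len(masks)
        used_prev = set()
        for iou, i, j in pairs:
            if iou < iou_threshold:
                break  # all remaining pairs have even lower IoU
            if ids[i] == -1 and j not in used_prev:
                ids[i] = prev[j][1]  # continue the previous track
                used_prev.add(j)
        for i in range(len(masks)):
            if ids[i] == -1:
                ids[i] = next_id  # unmatched: start a new track
                next_id += 1
        prev = list(zip(masks, ids))
        all_ids.append(ids)
    return all_ids
```

Greedy matching is used here only for brevity; an optimal assignment (e.g. the Hungarian algorithm) is a common alternative.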

## Evaluating your own tracker

To evaluate your tracker, put its results in a folder named after your tracker (e.g. YOUR_TRACKER) inside ```TrackEval/data/trackers/rob_mots/train/```, alongside the folder of the supplied tracker STP.

You can then run the evaluation code on your tracker like this:

```
python scripts/run_rob_mots.py --ROBMOTS_SPLIT train --TRACKERS_TO_EVAL YOUR_TRACKER --USE_PARALLEL True --NUM_PARALLEL_CORES 4
```

## Data format

For RobMOTS, trackers must submit their results in the following folder format:

```
|—— <Benchmark01>
    |—— <Benchmark01SeqName01>.txt
    |—— <Benchmark01SeqName02>.txt
    |—— <Benchmark01SeqName03>.txt
|—— <Benchmark02>
    |—— <Benchmark02SeqName01>.txt
    |—— <Benchmark02SeqName02>.txt
    |—— <Benchmark02SeqName03>.txt
```
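The layout above can be checked with a few lines of Python. This sketch assumes, as the tree suggests, that the benchmark folders sit directly inside your tracker folder; compare it against the supplied STP results before relying on it. `sequence_file` and `list_sequences` are hypothetical helper names.

```python
import os
from typing import Dict, List


def sequence_file(tracker_dir: str, benchmark: str, seq_name: str) -> str:
    """Path of the results file for one sequence: <tracker_dir>/<benchmark>/<seq_name>.txt."""
    return os.path.join(tracker_dir, benchmark, seq_name + ".txt")


def list_sequences(tracker_dir: str) -> Dict[str, List[str]]:
    """Map each benchmark folder under tracker_dir to the sorted sequence names it contains."""
    layout = {}
    for benchmark in sorted(os.listdir(tracker_dir)):
        bench_dir = os.path.join(tracker_dir, benchmark)
        if not os.path.isdir(bench_dir):
            continue  # ignore stray files at the top level
        layout[benchmark] = sorted(
            os.path.splitext(f)[0] for f in os.listdir(bench_dir) if f.endswith(".txt"))
    return layout
```

Comparing the output of `list_sequences` with the sequence names in the ground truth is a quick way to spot missing or misnamed result files.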

See the supplied STP tracker results (in the Train Data linked above) for an example.

Thus there is one .txt file for each sequence. This file has one row per detection (object mask in one frame). Each row must have 7 values and has the following format:

```
<Timestep>(int),
<Track ID>(int),
```
