没有合适的资源?快使用搜索试试~ 我知道了~

oversubscribed command queues in gpus

需积分: 5 0 下载量 73 浏览量

2023-09-20

16:27:41

上传

评论

收藏 1.16MB PDF 举报

温馨提示

试读

11页

as gpus become larger and provide an increasing number of parallel execution units, a single kernel is no longer sufficient to utilize all avalliable resources. as a result gpu applications are beginning to use fine grain asynchronous kernels, which are executed in parallel and expose more concurrency. currently the HSA and copute unified device architecture specifications support concurrent kernel launches with the help of multiple command queues.

资源推荐

资源详情

资源评论

1

Permission to make digital or hard copies of all or part of this work for personal or

classroom use is granted without fee provided that copies are not made or distributed

for profit or commercial advantage and that copies bear this notice and the full citation

on the first page. Copyrights for components of this work owned by others than ACM

must be honored. Abstracting with credit is permitted. To copy otherwise, or republish,

to post on servers or to redistribute to lists, requires prior specific permission and/or a

fee. Request permissions from Permissions@acm.org.

GPGPU-11, February 24–28, 2018, Vienna, Austria

© 2018 Association for Computing Machinery.

ACM ISBN 978-1-4503-5647-3/18/02…$15.00

https://doi.org/10.1145/3180270.3180271

Oversubscribed Command Queues in GPUs

Sooraj Puthoor

AMD Research

Sooraj.Puthoor@amd.com

Xulong Tang

Penn State

xzt102@cse.psu.edu

Joseph Gross

AMD Research

Joe.Gross@amd.com

Bradford M. Beckmann

AMD Research

Brad.Beckmann@amd.com

Abstract

As GPUs become larger and provide an increasing number of

parallel execution units, a single kernel is no longer sufficient to

utilize all available resources. As a result, GPU applications are

beginning to use fine-grain asynchronous kernels, which are

executed in parallel and expose more concurrency. Currently, the

Heterogeneous System Architecture (HSA) and Compute Unified

Device Architecture (CUDA) specifications support concurrent

kernel launches with the help of multiple command queues (a.k.a.

HSA queues and CUDA streams, respectively). In conjunction,

GPU hardware has decreased launch overheads making fine-grain

kernels more attractive.

Although increasing the number of command queues is good for

kernel concurrency, the GPU hardware can only monitor a fixed

number of queues at any given time. Therefore, if the number of

command queues exceeds hardware’s monitoring capability, the

queues become oversubscribed and hardware has to service some

of these queues sequentially. This mapping process periodically

swaps between all allocated queues and limits the available

concurrency to the ready kernels in the currently mapped queues.

In this paper, we bring to attention the queue oversubscription

challenge and demonstrate one solution, queue prioritization,

which provides up to 45x speedup for NW benchmark against the

baseline that swaps queues in a round-robin fashion.

CCS CONCEPTS

• Computer systems organization~Single instruction, multiple

data • Software and its engineering~Scheduling

ACM Reference format:

S. Puthoor, X. Tang, J. Gross and B. Beckmann. In GPGPU-11: In

GPGPU-11: General Purpose GPUs, February 24–28, 2018, Vienna,

Austria. ACM, New York, NY, USA, 11 pages.

DOI: https://doi.org/10.1145/3180270.3180271

1. Introduction

GPUs are continually increasing their computational resources with

the latest offerings from AMD and NVIDIA boasting impressive

performance improvements over their previous generations [1][41].

With this increase in hardware resources, there is a demand to run

a more diverse set of applications, such as machine learning, cloud

computing, graph analytics, and high performance computing

(HPC) [42][45][46][51]. While some of these workloads can

launch a single kernel large enough to completely consume the

entire GPU, many others rely on concurrent kernel launches to

utilize all available resources.

For graphics workloads, the benefits of using concurrent

kernels has been well documented [1][60]. By running multiple

rendering tasks (a.k.a. kernels) concurrently, these tasks can share

the available resources and increase utilization, leading to faster

frame rate within the same power budget. Complementary, there

have been previous works in the HPC domain that have also

increased GPU utilization by running multiple kernels concurrently

[52][56].

To run multiple kernels simultaneously, existing bulk-

synchronous applications are often refactored to use asynchronous

tasks. For these task-based implementations, their execution is

typically represented as a Directed Acyclic Graph (DAG) with

nodes of the DAG representing tasks and the edges representing

dependencies between tasks. From the perspective of a GPU, a task

is an instance of a GPU kernel with its associated arguments. As

the task size decreases, GPU utilization usually increases because

smaller tasks are ready to launch when a smaller fraction of data

and resources become available versus larger tasks. The idea is

similar to filling an hour glass with sand versus marbles. With sand,

the hour glass empties faster because the fine-grain particles

occupy the available free space at the narrow neck more quickly.

Of course, the benefit from running fine-grain tasks depends on

amortizing the launch overhead. This can be done by both reducing

the latency for an individual kernel launch and by allowing multiple

kernel launches simultaneously. To reduce kernel launch latency,

the Heterogeneous System Architecture (HSA) allows applications

to directly enqueue work into user-mode queues and avoids heavy

weight operating system (OS) or GPU driver involvement

[28][47]. To allow simultaneous kernel launches, HSA allows

applications to allocate multiple user-mode queues which hardware

can service simultaneously. Compute Unified Device Architecture

(CUDA) supports a similar feature by allowing applications to

allocate multiple streams [17]. In the extreme case, the concurrency

exposed to hardware is only limited by the virtual memory

available to queue allocation.

While hypothetically more queues expose more concurrency, it

is not feasible to build a hardware that can simultaneously monitor

many queues. Instead, when the queues created by the application

exceed the number of queues can be monitored by the hardware,

the queues are oversubscribed and the GPU must employ a

procedure to ensure all queues are eventually serviced. For

instance, periodically swapping between all queues at regular

intervals is one obvious solution.

Processing task graphs using this queue swapping mechanism

can cause performance challenges. For example, when tasks in

mapped queues are waiting for tasks in unmapped queues, GPU

utilization can suffer significantly. While hardware will eventually

map the queues with ready tasks ensuring forward progress, the

delay caused by unintelligent queue mapping leads to significant

performance degradation and the degradation becomes worse with

more complex task graphs.

GPGPU’18, Vosendorf, Austria

S.Puthoor et al.

2

In this paper, we bring to attention this overlooked queue

oversubscription challenge and show that a simple queue

scheduling policy guided by prioritization can effectively mitigate

oversubscription and improve the GPU performance.

Specifically, the contributions from this paper are:

To the best of our knowledge, we are the first to highlight the

largely underappreciated scheduling challenge with

oversubscribed GPU command queues and the first to detail

their scheduling mechanisms.

We show that a simple priority-based scheduling policy can

reduce the underutilization caused by command queue

oversubscription and increase performance by 91x versus a

naïve round-robin policy and 45x and 43x versus more

optimized policies that do not map empty queues and

immediately unmap queues when they become empty.

We show that the proposed priority-based scheduling is

particularly beneficial for fine-grain tasks and they achieved

up to 11% speedup versus coarse-grain or medium-grain tasks.

The rest of the paper is organized as follows. Section 2 discusses

the background on GPU command queue oversubscription and

Section 3 introduces the command queue organization. Priority-

based queue scheduling schemes are discussed in detail in Section

4. Section 5 discusses simulation methodology and Section 6

evaluates different queue scheduling policies. Finally, Section 7

reviews related work and Section 8 concludes the paper.

2. Background

Programmers define the parallel portions of applications as

kernels and offload them to GPUs using command queues. Then

GPUs monitor these command queues and execute the submitted

kernels. As GPUs integrate more compute resources, concurrently

executing multiple kernels can more efficiently utilize those

available resources [1][17]. While CUDA requires multiple streams

to achieve kernel concurrency, HSA can launch simultaneously

executing kernels from a single queue [17][28]. HSA can further

increase kernel concurrency with multiple queues by avoiding the

sequential processing overhead of launching kernels from same

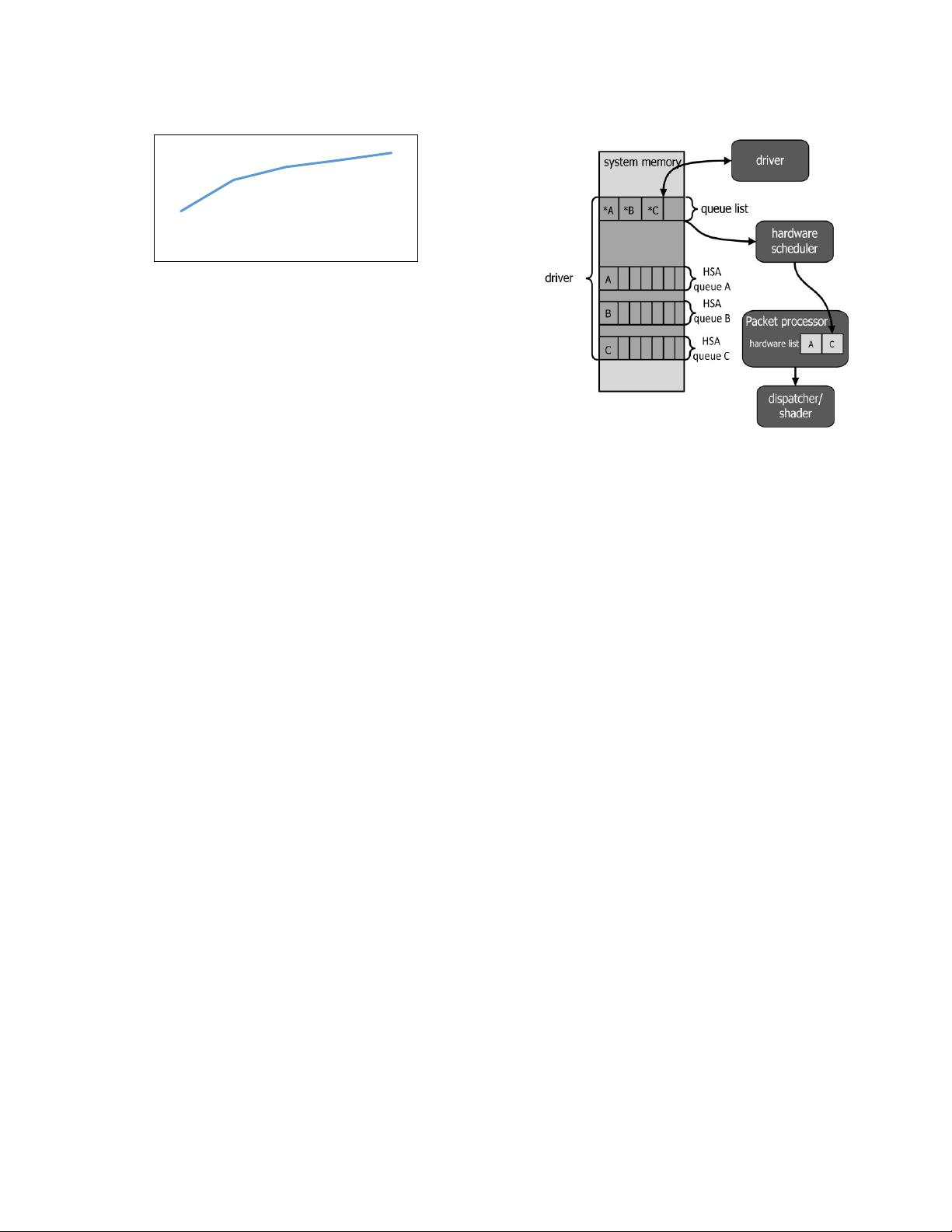

queue [47]. Figure 1 shows the speedup of NW application with

multiple HSA queues relative to a single HSA queue. The fine-

grain version of NW has 64 tasks and even for this simple task

graph, multiple HSA queues provide speedup.

While multiple command queues can increase the concurrency

exposed to GPUs, traditional applications use bulk synchronization

that lack multi-kernel concurrency. Meanwhile, structuring

applications as tasks represented by a DAG has been proposed to

increase concurrency [14]. In the DAG-based approach, the entire

computation is represented by a task graph with nodes in the DAG

representing tasks and edges representing the dependencies

between these tasks. Once the application is represented in its DAG

form, programming APIs allow it to be directly exposed to

hardware.

HSA provides features to expose and process task graphs

[27][28][29]. HSA-compatible systems, including commercially

available AMD APUs [10][23], support hardware features, such as

shared coherent virtual memory between different agents (CPU or

GPU devices), and provide a low-level runtime API with extensive

tasking capabilities. The tasking capabilities of HSA-compatible

hardware have been evaluated in the past, highlighting its

performance improvements [47]. HSA provides kernel dispatch

packets that can be used to launch kernels on HSA agents and

barrier packets that can be used to enforce dependencies between

these kernels. Any task graph can be represented with these two

packets by replacing tasks with kernel dispatch packets and edges

with barrier packets. These packets are then enqueued to HSA

queues. Once a kernel finishes execution, the corresponding barrier

packets are processed and the dependent kernels can be launched.

Additionally, since HSA queues are created in user space, there is

no expensive OS involvement while processing packets, which

significantly reduces task dispatch latency. As a result, fine-grain

tasks, whose reduced resource requirement is easier to schedule,

can be launched efficiently.

Historically, CPU task scheduling is managed by software,

either the OS or user-level runtime, and benefits from a higher

level, global view of the system. In general, the task scheduling

problem is known to be NP-complete [55]. Thus, the goal of a task

scheduler should be to find a good heuristic algorithm and make a

best-effort schedule based on application and system knowledge.

For instance, CPU software schedulers manage all active tasks in

the system and schedulers optimized for high-throughput

heterogeneous system can be enhanced with prioritization [15]. The

application or user-level runtime may assign priorities to tasks to

identify those with the earliest finishing time or those on the

critical-path of a data-dependent execution [7], [15], [32].

In contrast to CPUs, GPU task scheduling is exclusively

managed in hardware and current GPU hardware schedulers are

handicapped by minimal knowledge of the kernels. With HSA user

mode queuing, an application can create multiple user mode queues

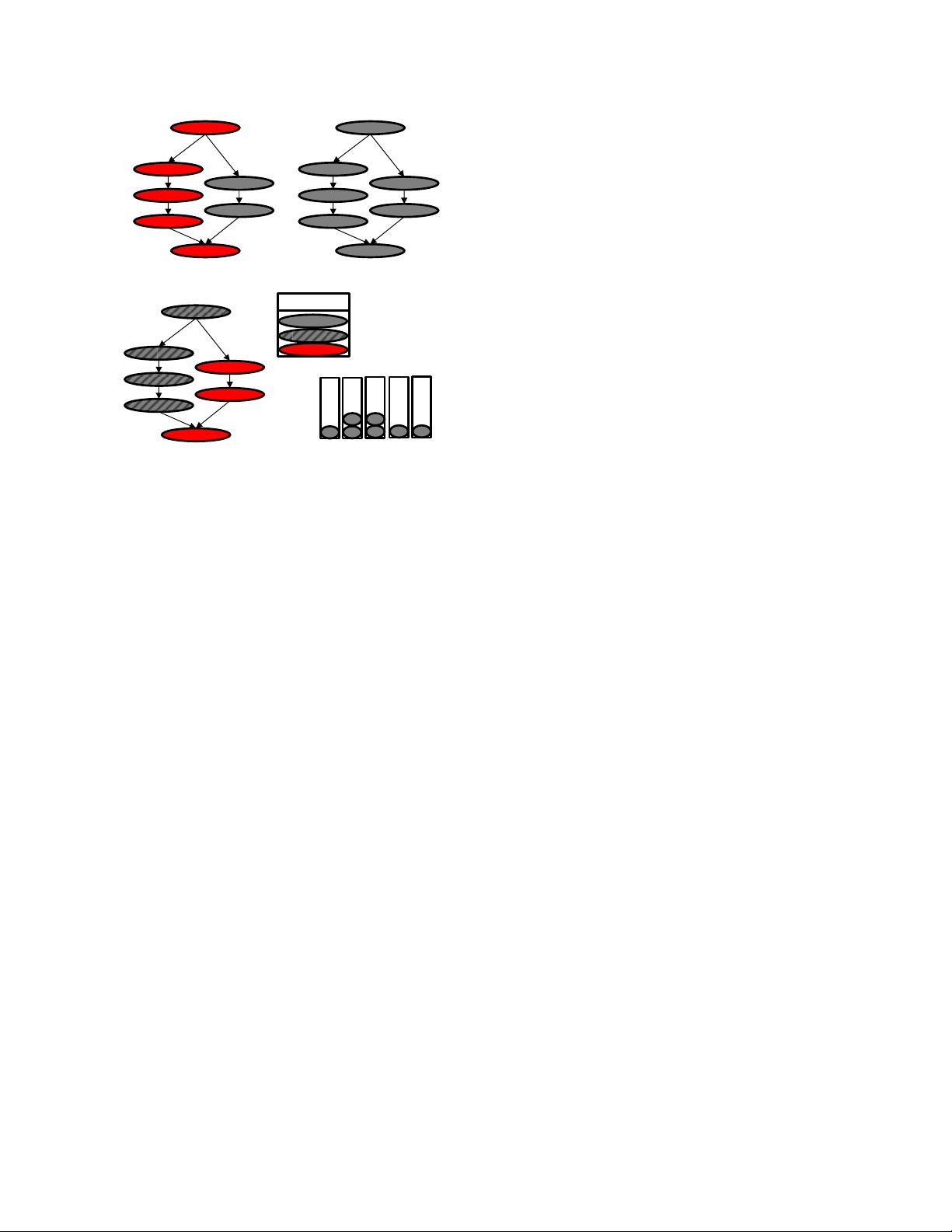

Figure 2. GPU queue and task scheduling hardware.

Figure 1. NW application speedup with multiple HSA

queues relative to single HSA queue.

0.9

0.95

1

1.05

1.1

1.15

1 2 4 8 16

Normalized speedup

Number of HSA queues

Oversubscribed Command Queues in GPUs

GPGPU’18, Vosendorf, Austria

3

(HSA queues) to launch kernels. However, it is challenging for the

GPU to locate all outstanding works on these queues when it can

only monitor a fixed number of queues at a time. Therefore, the

GPU periodically swaps different queues into the available

hardware queue slots (hereafter referred as hardware queues) and

processes ready tasks on the monitored queues. To ensure all

queues are eventually serviced, the hardware will swap in and out

queues at regular intervals, called the scheduling quantum.

When the number of allocated HSA queues is greater than the

number of hardware queues, the GPU wastes significant time

rotating between all allocated queues in search of ready tasks.

Furthermore, barrier packets that handle task dependencies can

block queues while waiting for prior tasks to complete. In this

situation, it is important for the GPU to choose a scheduling policy

that prioritizes queues having ready tasks. In this work, combining

round-robin and priority-based scheduling with various queue

mapping and unmapping criteria, we evaluate five different

scheduling policies (three round-robin and two priority-based).

3. GPU Command Queue Organization

Figure 2 shows the GPU queue scheduling and task dispatching

mechanism used in this paper. The GPU device driver is

responsible for creating queues in the system memory. The driver

also creates a queue list that contains the queue descriptors of all

the queues created by the application. Queue descriptors have all

the necessary information to access HSA queues, such as the

pointer to the base address of a queue. This queue list is passed to

the GPU hardware scheduler during initialization phase. Although

the queue list is read by the hardware scheduler, it is modified only

by the device driver. The hardware scheduler maps and unmaps

queues from the queue list to the hardware list stored inside the

packet processor at each scheduling quantum. The number of

entries in the hardware list is limited and each entry in the hardware

list corresponds to the HSA queue mapped to a hardware queue.

The packet processor monitors these hardware queues, processes

both kernel dispatch and barrier HSA packets, resolves

dependencies expressed by barrier packets and forwards ready

tasks to the dispatcher. The dispatcher then launches these tasks on

the shaders in work-group granularity.

When the HSA queues are oversubscribed, the hardware

scheduler unmaps a queue from the hardware list and maps a new

queue from the queue list. Our baseline hardware scheduler selects

a new queue to be mapped at each scheduling quantum based on a

round-robin policy. However, the newly selected queue may not

have ready tasks, resulting in idling GPU resources. Priority-based

queue scheduling techniques tries to reduce idling by intelligently

mapping a queue with ready tasks. The next section discusses this

priority-based queue scheduling in detail.

4. Priority-Based Queue Scheduling

While HSA can expose more task level concurrency to the GPU, it

shifts the burden of task scheduling to the hardware. In a complex

task graph with hundreds of interdependent tasks, many task queues

can become unblocked at any given time. From a data dependency

standpoint, the task scheduler is free to launch any ready task.

However, a naïve policy may not be able to achieve efficient use of

resources and may leave tasks blocked for long periods of time.

Figure 3 explains the deficiency of the naïve scheduler. Tasks

on the initial critical path of execution are marked in red in Figure

3 (a). After task A is executed, both tasks B and C are ready to

execute. A naïve scheduler could pick either of these two tasks, but

executing task B first is the better scheduling decision because task

B is on the critical path. Task C can be delayed, but Figure 3 (b)

shows delaying the execution of task C until tasks B, D, and F

complete, puts task C on the critical path. A more informed

scheduler would have executed task C before task F.

To make better decisions, a task priority-aware scheduler is

needed. Many techniques have been proposed in literature to

prioritize tasks [15][54]. One such technique is the Heterogeneous

Earliest-Finish-Time (HEFT) algorithm proposed by Topçuoğlu et

al. [54] that computes upward ranks of the tasks and selects the task

with highest upward rank for scheduling. A task’s upward rank is

its distance from an exit node. Figure 3 (c) shows the same task

graph from Figure 3 (a) with each task annotated with their upward

rank. The ranking uses HEFT algorithm but assumes equal

computation time for all tasks. Since the tasks are now annotated

with priorities, a scheduler that is aware of the priorities can make

informed scheduling decisions. For example, after task A is

completed, priority-based scheduler will choose task B to be

scheduled because the rank of task B (rank 4) is higher than task C

(rank 3). In this paper, we use this HEFT algorithm to determine

the priorities of each task.

4.1 Priority-based Hardware Scheduling in HSA

There are three challenges to implement a priority-based task

scheduler, (a) the tasks need to be annotated by a ranking algorithm,

(b) these annotations should be exposed to the scheduler, and (c)

the scheduler should be able to use these rankings when making

scheduling decisions.

Since HSA relies on queues to expose concurrency and schedule

tasks, we modified the HSA queue creation API [Figure 4], to

include a priority field. A queue priority (or rank) is a positive

integer value that can be specified at the time of queue creation and

Figure 3. Task graph execution and priorities.

Barrie

r

C

A

B

D

F

G

E

C (3)

A (5)

B (4)

D (3)

F (2)

G (1)

E (2)

C

A

B

D

F

G

E

(a)

(b)

(c)

completed

to be run

Legend

critical path

G

E

C

B

A

F

D

(d)

Q1

Q2

Q3 Q4

Q5

hsa_queue_create(gpu_agent, queue_size,

HSA_QUEUE_TYPE_SINGLE, NULL, NULL,

UINT32_MAX, UINT32_MAX, q_ptr, priority);

Figure 4. HSA API modifications. priority is added to HSA

queue create API.

剩余10页未读,继续阅读

资源评论

泰勒朗斯

- 粉丝: 2069

- 资源: 12

上传资源 快速赚钱

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

前往需求广场,查看用户热搜

前往需求广场,查看用户热搜安全验证

文档复制为VIP权益,开通VIP直接复制

信息提交成功

信息提交成功