AutoAugment: Learning Augmentation Strategies from Data

Ekin D. Cubuk∗, Barret Zoph∗, Dandelion Mané, Vijay Vasudevan, Quoc V. Le

Google Brain

Abstract

Data augmentation is an effective technique for improving the accuracy of modern image classifiers. However, current data augmentation implementations are manually designed. In this paper, we describe a simple procedure called AutoAugment to automatically search for improved data augmentation policies. In our implementation, we have designed a search space where a policy consists of many sub-policies, one of which is randomly chosen for each image in each mini-batch. A sub-policy consists of two operations, each operation being an image processing function such as translation, rotation, or shearing, and the probabilities and magnitudes with which the functions are applied. We use a search algorithm to find the best policy such that the neural network yields the highest validation accuracy on a target dataset. Our method achieves state-of-the-art accuracy on CIFAR-10, CIFAR-100, SVHN, and ImageNet (without additional data). On ImageNet, we attain a Top-1 accuracy of 83.5%, which is 0.4% better than the previous record of 83.1%. On CIFAR-10, we achieve an error rate of 1.5%, which is 0.6% better than the previous state-of-the-art. Augmentation policies we find are transferable between datasets. The policy learned on ImageNet transfers well to achieve significant improvements on other datasets, such as Oxford Flowers, Caltech-101, Oxford-IIIT Pets, FGVC Aircraft, and Stanford Cars.

1. Introduction

Deep neural nets are powerful machine learning systems that tend to work well when trained on massive amounts of data. Data augmentation is an effective technique to increase both the amount and diversity of data by randomly “augmenting” it [3, 54, 29]; in the image domain, common augmentations include translating the image by a few pixels, or flipping the image horizontally. Intuitively, data augmentation is used to teach a model about invariances in the data domain: classifying an object is often insensitive to horizontal flips or translation. Network architectures can also be used to hardcode invariances: convolutional networks bake in translation invariance [16, 32, 25, 29]. However, using data augmentation to incorporate potential invariances can be easier than hardcoding invariances into the model architecture directly.

∗ Equal contribution.

| Dataset | GPU hours | Best published results | Our results |
|---|---|---|---|
| CIFAR-10 | 5000 | 2.1 | 1.5 |
| CIFAR-100 | 0 | 12.2 | 10.7 |
| SVHN | 1000 | 1.3 | 1.0 |
| Stanford Cars | 0 | 5.9 | 5.2 |
| ImageNet | 15000 | 3.9 | 3.5 |

Table 1. Error rates (%) from this paper compared to the best results so far on five datasets (Top-5 for ImageNet, Top-1 for the others). Previous best result on Stanford Cars fine-tuned weights originally trained on a larger dataset [66], whereas we use a randomly initialized network. Previous best results on other datasets only include models that were not trained on additional data, for a single evaluation (without ensembling). See Tables 2, 3, and 4 for more detailed comparison. GPU hours are estimated for an NVIDIA Tesla P100.

Yet a large focus of the machine learning and computer vision community has been to engineer better network architectures (e.g., [55, 59, 20, 58, 64, 19, 72, 23, 48]). Less attention has been paid to finding better data augmentation methods that incorporate more invariances. For instance, on ImageNet, the data augmentation approach by [29], introduced in 2012, remains the standard with small changes. Even when augmentation improvements have been found for a particular dataset, they often do not transfer to other datasets as effectively. For example, horizontal flipping of images during training is an effective data augmentation method on CIFAR-10, but not on MNIST, due to the different symmetries present in these datasets. The need for automatically learned data-augmentation has been raised recently as an important unsolved problem [57].

In this paper, we aim to automate the process of finding an effective data augmentation policy for a target dataset. In our implementation (Section 3), each policy expresses several choices and orders of possible augmentation operations, where each operation is an image processing function (e.g., translation, rotation, or color normalization), the probabilities of applying the function, and the magnitudes with which they are applied. We use a search algorithm to find the best choices and orders of these operations such that training a neural network yields the best validation accuracy. In our experiments, we use Reinforcement Learning [71] as the search algorithm, but we believe the results can be further improved if better algorithms are used [48, 39].

arXiv:1805.09501v3 [cs.CV] 11 Apr 2019

Our extensive experiments show that AutoAugment achieves excellent improvements in two use cases: 1) AutoAugment can be applied directly on the dataset of interest to find the best augmentation policy (AutoAugment-direct) and 2) learned policies can be transferred to new datasets (AutoAugment-transfer). Firstly, for direct application, our method achieves state-of-the-art accuracy on datasets such as CIFAR-10, reduced CIFAR-10, CIFAR-100, SVHN, reduced SVHN, and ImageNet (without additional data). On CIFAR-10, we achieve an error rate of 1.5%, which is 0.6% better than the previous state-of-the-art [48]. On SVHN, we improve the state-of-the-art error rate from 1.3% [12] to 1.0%. On reduced datasets, our method achieves performance comparable to semi-supervised methods without using any unlabeled data. On ImageNet, we achieve a Top-1 accuracy of 83.5%, which is 0.4% better than the previous record of 83.1%. Secondly, if direct application is too expensive, transferring an augmentation policy can be a good alternative. For transferring an augmentation policy, we show that policies found on one task can generalize well across different models and datasets. For example, the policy found on ImageNet leads to significant improvements on a variety of FGVC datasets. Even on datasets for which fine-tuning weights pre-trained on ImageNet does not help significantly [26], e.g. Stanford Cars [27] and FGVC Aircraft [38], training with the ImageNet policy reduces test set error by 1.2% and 1.8%, respectively. This result suggests that transferring data augmentation policies offers an alternative method for standard weight transfer learning. A summary of our results is shown in Table 1.

2. Related Work

Common data augmentation methods for image recognition have been designed manually and the best augmentation strategies are dataset-specific. For example, on MNIST, most top-ranked models use elastic distortions, scale, translation, and rotation [54, 8, 62, 52]. On natural image datasets, such as CIFAR-10 and ImageNet, random cropping, image mirroring and color shifting / whitening are more common [29]. As these methods are designed manually, they require expert knowledge and time. Our approach of learning data augmentation policies from data in principle can be used for any dataset, not just one.

This paper introduces an automated approach to find data augmentation policies from data. Our approach is inspired by recent advances in architecture search, where reinforcement learning and evolution have been used to discover model architectures from data [71, 4, 72, 7, 35, 13, 34, 46, 49, 63, 48, 9]. Although these methods have improved upon human-designed architectures, it has not been possible to beat the 2% error-rate barrier on CIFAR-10 using architecture search alone.

Previous attempts at learned data augmentations include Smart Augmentation, which proposed a network that automatically generates augmented data by merging two or more samples from the same class [33]. Tran et al. used a Bayesian approach to generate data based on the distribution learned from the training set [61]. DeVries and Taylor used simple transformations in the learned feature space to augment data [11].

Generative adversarial networks have also been used for the purpose of generating additional data (e.g., [45, 41, 70, 2, 56]). The key difference between our method and generative models is that our method generates symbolic transformation operations, whereas generative models, such as GANs, generate the augmented data directly. An exception is work by Ratner et al., who used GANs to generate sequences that describe data augmentation strategies [47].

3. AutoAugment: Searching for best Augmen-

tation policies Directly on the Dataset of In-

terest

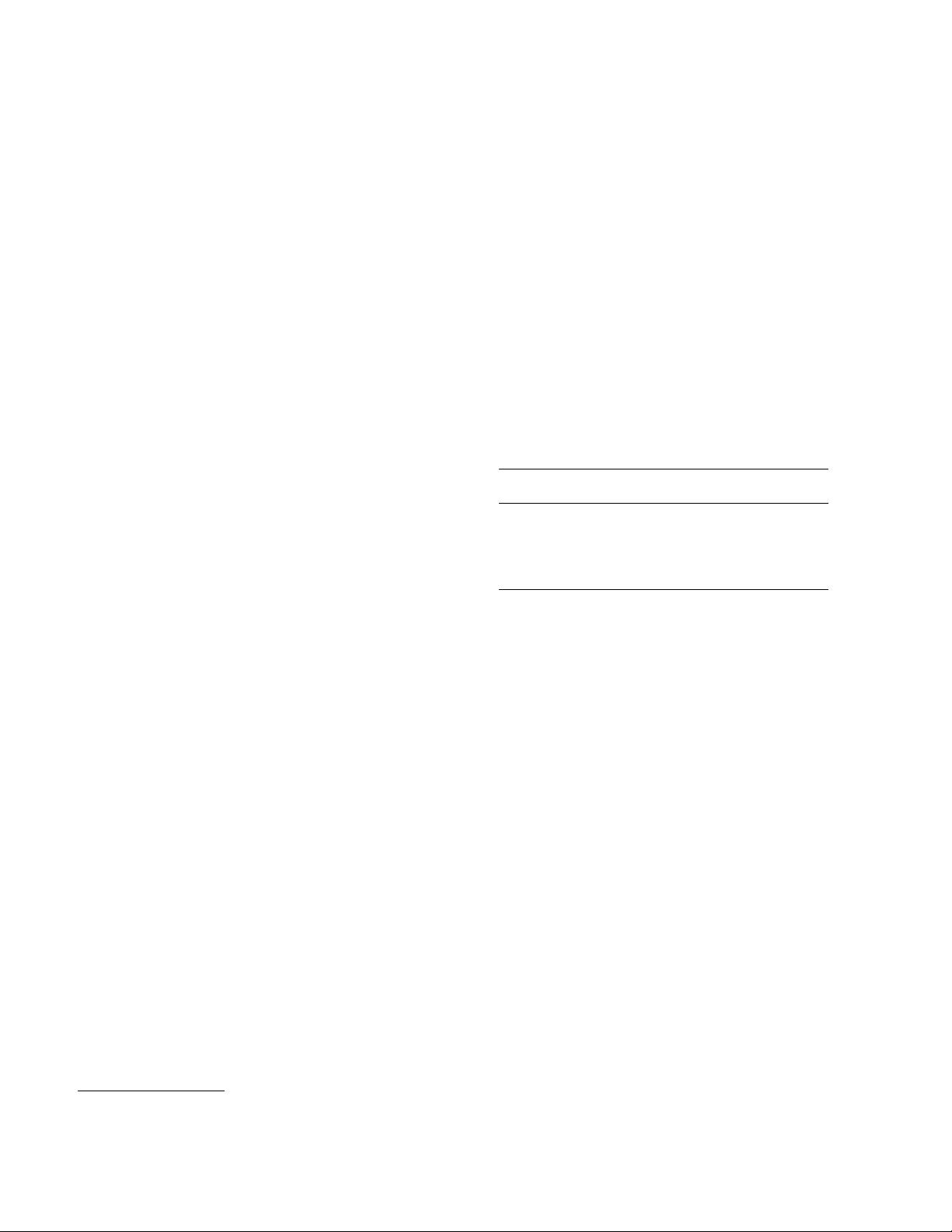

We formulate the problem of finding the best augmentation policy as a discrete search problem (see Figure 1). Our method consists of two components: A search algorithm and a search space. At a high level, the search algorithm (implemented as a controller RNN) samples a data augmentation policy S, which has information about what image processing operation to use, the probability of using the operation in each batch, and the magnitude of the operation. Key to our method is the fact that the policy S will be used to train a neural network with a fixed architecture, whose validation accuracy R will be sent back to update the controller. Since R is not differentiable, the controller will be updated by policy gradient methods. In the following section we will describe the two components in detail.

Search space details: In our search space, a policy consists of 5 sub-policies with each sub-policy consisting of two image operations to be applied in sequence. Additionally, each operation is also associated with two hyperparameters: 1) the probability of applying the operation, and 2) the magnitude of the operation.

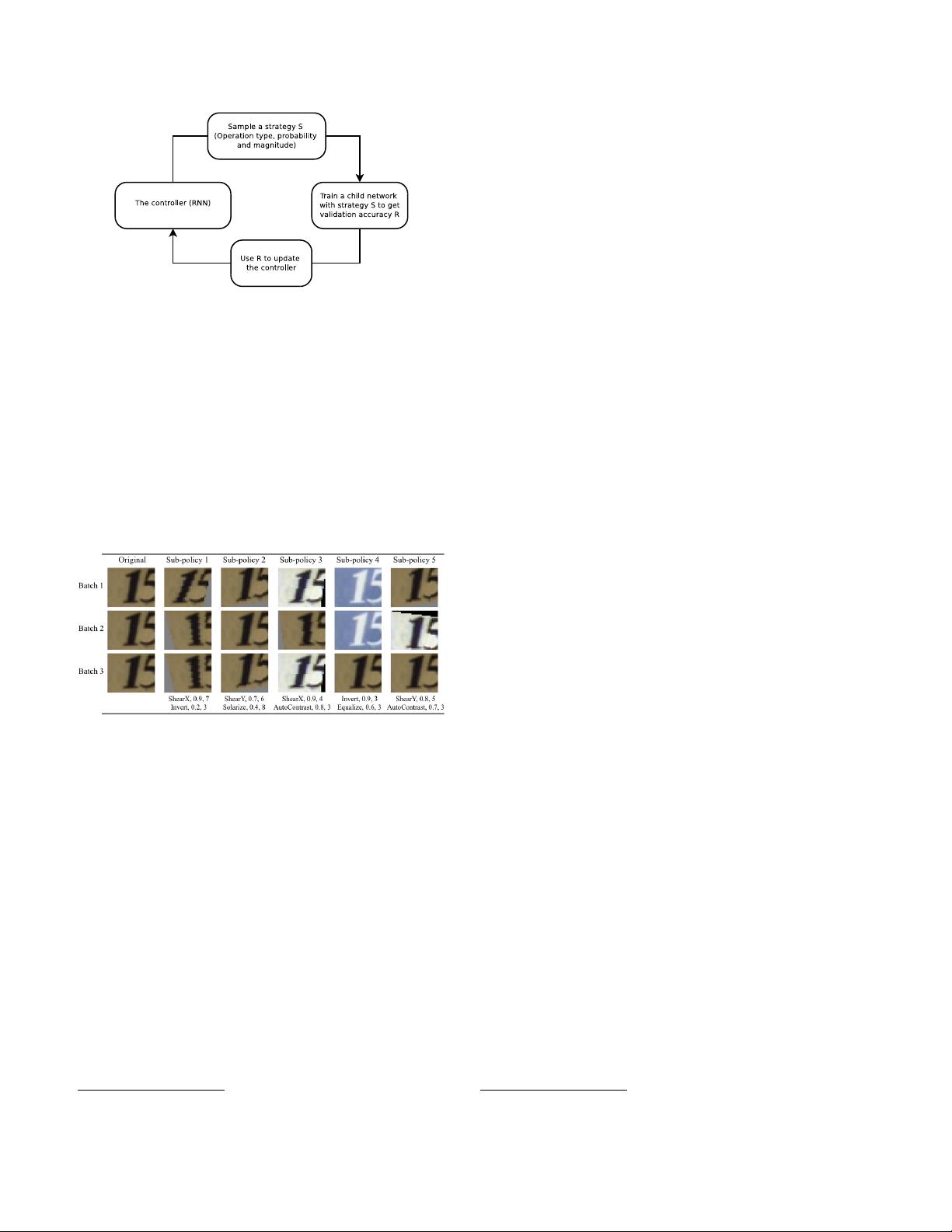

Figure 2 shows an example of a policy with 5-sub-

policies in our search space. The first sub-policy specifies

a sequential application of ShearX followed by Invert. The

Figure 1. Overview of our framework of using a search method

(e.g., Reinforcement Learning) to search for better data augmen-

tation policies. A controller RNN predicts an augmentation policy

from the search space. A child network with a fixed architecture

is trained to convergence achieving accuracy R. The reward R will

be used with the policy gradient method to update the controller

so that it can generate better policies over time.

probability of applying ShearX is 0.9, and when applied,

has a magnitude of 7 out of 10. We then apply Invert with

probability of 0.8. The Invert operation does not use the

magnitude information. We emphasize that these operations

are applied in the specified order.

Figure 2. One of the policies found on SVHN, and how it can be

used to generate augmented data given an original image used to

train a neural network. The policy has 5 sub-policies. For every

image in a mini-batch, we choose a sub-policy uniformly at ran-

dom to generate a transformed image to train the neural network.

Each sub-policy consists of 2 operations, each operation is associ-

ated with two numerical values: the probability of calling the op-

eration, and the magnitude of the operation. There is a probability

of calling an operation, so the operation may not be applied in that

mini-batch. However, if applied, it is applied with the fixed mag-

nitude. We highlight the stochasticity in applying the sub-policies

by showing how one image can be transformed differently in dif-

ferent mini-batches, even with the same sub-policy. As explained

in the text, on SVHN, geometric transformations are picked more

often by AutoAugment. It can be seen why Invert is a commonly

selected operation on SVHN, since the numbers in the image are

invariant to that transformation.
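The per-image sampling described in the Figure 2 caption can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: images are stood in for by nested lists of grayscale pixel values, `invert` and `translate_x` are toy stand-ins for the PIL-based operations, the magnitude index is used directly as a pixel shift, and every value in `POLICY` is made up for illustration.

```python
import random
from typing import Callable, Dict, List, Tuple

Image = List[List[int]]                    # toy grayscale image, pixel values 0..255
Operation = Tuple[str, float, int]         # (name, probability, magnitude index)
SubPolicy = Tuple[Operation, Operation]    # two operations, applied in order


def invert(img: Image, magnitude: int) -> Image:
    """Invert every pixel; the magnitude is ignored, as the text notes for Invert."""
    return [[255 - p for p in row] for row in img]


def translate_x(img: Image, magnitude: int) -> Image:
    """Toy stand-in for TranslateX: shift rows right by `magnitude` pixels, zero-filled."""
    return [([0] * magnitude + row)[: len(row)] for row in img]


OPS: Dict[str, Callable[[Image, int], Image]] = {"Invert": invert, "TranslateX": translate_x}

# Hypothetical policy with 5 sub-policies; the values are made up for illustration.
POLICY: List[SubPolicy] = [
    (("TranslateX", 0.9, 7), ("Invert", 0.8, 0)),
    (("Invert", 0.2, 0), ("TranslateX", 0.6, 2)),
    (("TranslateX", 0.5, 1), ("TranslateX", 0.3, 4)),
    (("Invert", 0.0, 0), ("Invert", 0.0, 0)),      # probability 0 twice: identity
    (("TranslateX", 1.0, 3), ("Invert", 0.1, 0)),
]


def apply_sub_policy(img: Image, sub_policy: SubPolicy, rng: random.Random) -> Image:
    """Apply each operation in order, each with its own probability and fixed magnitude."""
    for name, prob, magnitude in sub_policy:
        if rng.random() < prob:
            img = OPS[name](img, magnitude)
    return img


def augment_batch(batch: List[Image], policy: List[SubPolicy], rng: random.Random) -> List[Image]:
    """Pick one sub-policy uniformly at random for every image in the mini-batch."""
    return [apply_sub_policy(img, rng.choice(policy), rng) for img in batch]


rng = random.Random(0)
image = [[0, 10, 20], [30, 40, 50]]
augmented = augment_batch([image] * 4, POLICY, rng)
```

Because both the sub-policy choice and each operation's application are random, the same input image can come out differently on every call, which is the stochasticity the caption highlights.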

The operations we used in our experiments are from PIL, a popular Python image library.¹ For generality, we considered all functions in PIL that accept an image as input and output an image. We additionally used two other promising augmentation techniques: Cutout [12] and SamplePairing [24]. The operations we searched over are ShearX/Y, TranslateX/Y, Rotate, AutoContrast, Invert, Equalize, Solarize, Posterize, Contrast, Color, Brightness, Sharpness, Cutout [12], Sample Pairing [24].² In total, we have 16 operations in our search space. Each operation also comes with a default range of magnitudes, which will be described in more detail in Section 4. We discretize the range of magnitudes into 10 values (uniform spacing) so that we can use a discrete search algorithm to find them. Similarly, we also discretize the probability of applying that operation into 11 values (uniform spacing). Finding each sub-policy becomes a search problem in a space of $(16 \times 10 \times 11)^2$ possibilities. Our goal, however, is to find 5 such sub-policies concurrently in order to increase diversity. The search space with 5 sub-policies then has roughly $(16 \times 10 \times 11)^{10} \approx 2.9 \times 10^{32}$ possibilities.

¹ https://pillow.readthedocs.io/en/5.1.x/
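The search-space counts quoted above can be checked with a few lines of arithmetic. The constant names are ours, chosen for readability; the numbers are the ones given in the text.

```python
NUM_OPERATIONS = 16           # operation types in the search space
NUM_MAGNITUDES = 10           # discretized magnitude values
NUM_PROBABILITIES = 11        # discretized probability values
OPS_PER_SUB_POLICY = 2
SUB_POLICIES_PER_POLICY = 5

# One operation slot: choose a type, a magnitude, and a probability.
choices_per_operation = NUM_OPERATIONS * NUM_MAGNITUDES * NUM_PROBABILITIES

# A sub-policy has two such slots; a policy has five sub-policies.
sub_policy_space = choices_per_operation ** OPS_PER_SUB_POLICY
policy_space = sub_policy_space ** SUB_POLICIES_PER_POLICY

print(choices_per_operation)       # 1760
print(sub_policy_space)            # 3097600
print(f"{policy_space:.1e}")       # 2.9e+32
```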

The 16 operations we used and their default range of values are shown in Table 1 in the Appendix. Notice that there is no explicit “Identity” operation in our search space; this operation is implicit, and can be achieved by calling an operation with probability set to be 0.
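The uniform discretization and the implicit identity can be illustrated with a small sketch. Two assumptions are ours: the 11 probability values are taken to span 0 to 1 inclusive, and the magnitude range of -30 to 30 is a made-up example (the actual per-operation ranges are in the Appendix table, which is not reproduced here). The helper names `uniform_grid` and `is_identity` are hypothetical.

```python
def uniform_grid(low, high, num_values):
    """Return `num_values` evenly spaced values from `low` to `high`, inclusive."""
    step = (high - low) / (num_values - 1)
    return [low + i * step for i in range(num_values)]


# Assumption: the 11 probability values span [0, 1] inclusive.
PROBABILITIES = uniform_grid(0.0, 1.0, 11)

# Made-up magnitude range for illustration only.
EXAMPLE_MAGNITUDES = uniform_grid(-30.0, 30.0, 10)


def is_identity(probability_index):
    """An operation whose probability is 0 is never applied."""
    return PROBABILITIES[probability_index] == 0.0
```

Under these assumptions, probability index 0 maps to 0.0, so any operation sampled with that index acts as the identity, and a sub-policy with both operations at index 0 leaves the image untouched.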

Search algorithm details: The search algorithm that we used in our experiment uses Reinforcement Learning, inspired by [71, 4, 72, 5]. The search algorithm has two components: a controller, which is a recurrent neural network, and the training algorithm, which is the Proximal Policy Optimization algorithm [53]. At each step, the controller predicts a decision produced by a softmax; the prediction is then fed into the next step as an embedding. In total the controller has 30 softmax predictions in order to predict 5 sub-policies, each with 2 operations, and each operation requiring an operation type, magnitude and probability.
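To make the count of 30 decisions concrete, the sketch below decodes a flat list of 30 integer decisions into 5 sub-policies. It is only an illustration: the uniform sampler stands in for the controller RNN's softmaxes, the decision order (type, magnitude, probability) follows the order in which the text lists them, and the mapping of a probability index to `index / 10` is our assumption.

```python
import random

NUM_SUB_POLICIES = 5
OPS_PER_SUB_POLICY = 2
OPERATIONS = [
    "ShearX", "ShearY", "TranslateX", "TranslateY", "Rotate", "AutoContrast",
    "Invert", "Equalize", "Solarize", "Posterize", "Contrast", "Color",
    "Brightness", "Sharpness", "Cutout", "SamplePairing",
]
# Number of classes for the three decisions of one operation:
# operation type, magnitude, probability.
DECISION_SIZES = [len(OPERATIONS), 10, 11]


def sample_decisions(rng):
    """Stand-in for the controller: draw each decision uniformly, not from a softmax."""
    num_ops = NUM_SUB_POLICIES * OPS_PER_SUB_POLICY
    return [rng.randrange(size) for _ in range(num_ops) for size in DECISION_SIZES]


def decode_policy(decisions):
    """Turn 30 integer decisions into 5 sub-policies of 2 (name, probability, magnitude) ops."""
    assert len(decisions) == NUM_SUB_POLICIES * OPS_PER_SUB_POLICY * len(DECISION_SIZES)
    ops = []
    for i in range(0, len(decisions), 3):
        op_idx, mag_idx, prob_idx = decisions[i:i + 3]
        ops.append((OPERATIONS[op_idx], prob_idx / 10, mag_idx))
    return [tuple(ops[j:j + OPS_PER_SUB_POLICY]) for j in range(0, len(ops), OPS_PER_SUB_POLICY)]


decisions = sample_decisions(random.Random(0))
policy = decode_policy(decisions)
```

For example, the decisions `[0, 7, 9, 6, 0, 8]` decode to ShearX with probability 0.9 and magnitude 7, followed by Invert with probability 0.8, which matches the first sub-policy described for Figure 2.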

The training of controller RNN: The controller is trained with a reward signal, which is how good the policy is in improving the generalization of a “child model” (a neural network trained as part of the search process). In our experiments, we set aside a validation set to measure the generalization of a child model. A child model is trained with augmented data generated by applying the 5 sub-policies on the training set (that does not contain the validation set). For each example in the mini-batch, one of the 5 sub-policies is chosen randomly to augment the image. The child model is then evaluated on the validation set to measure the accuracy, which is used as the reward signal to train the recurrent network controller. On each dataset, the controller samples about 15,000 policies.
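The sample, evaluate, update loop described above can be sketched in a few dozen lines. This is a deliberately simplified stand-in, not the paper's setup: it uses plain REINFORCE with a moving-average baseline instead of Proximal Policy Optimization, independent softmax logits instead of a recurrent controller, and a hypothetical `toy_reward` in place of training a child model and measuring its validation accuracy.

```python
import math
import random

# 30 decisions: (operation type, magnitude, probability) x 2 operations x 5 sub-policies
DECISION_SIZES = [16, 10, 11] * 10


def softmax(logits):
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def toy_reward(decisions):
    """Hypothetical stand-in for child-model validation accuracy, always in [0, 1]."""
    return sum(1 for d in decisions if d == 0) / len(decisions)


def search(num_policies, learning_rate=0.5, seed=0):
    rng = random.Random(seed)
    logits = [[0.0] * size for size in DECISION_SIZES]
    baseline, best_reward, best_decisions = 0.0, -1.0, None
    for _ in range(num_policies):
        probs = [softmax(row) for row in logits]
        decisions = [rng.choices(range(len(p)), weights=p)[0] for p in probs]
        reward = toy_reward(decisions)      # real search: train a child model here
        if reward > best_reward:
            best_reward, best_decisions = reward, decisions
        advantage = reward - baseline
        baseline = 0.9 * baseline + 0.1 * reward
        # REINFORCE: d log p(a) / d logit_k = 1[k == a] - p_k
        for row, p, action in zip(logits, probs, decisions):
            for k in range(len(row)):
                row[k] += learning_rate * advantage * ((1.0 if k == action else 0.0) - p[k])
    return logits, best_reward, best_decisions


logits, best_reward, best_decisions = search(num_policies=200)
```

The reward is treated as a black box, which is why a policy-gradient update is used: only the sampled decisions and the scalar reward are needed, not a gradient through child-model training.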

Architecture of controller RNN and training hyper-

parameters: We follow the training procedure and hyper-

parameters from [72] for training the controller. More con-

² Details about these operations are listed in Table 1 in the Appendix.
