IEEE SIGNAL PROCESSING LETTERS, VOL. 4, NO. 12, DECEMBER 1997 325

A Fast Encoding Algorithm for Vector Quantization

SeongJoon Baek, BumKi Jeon, and Koeng-Mo Sung, Member, IEEE

Abstract—In this letter, we present a fast encoding algorithm

for vector quantization that uses two characteristics of a vector,

mean, and variance. Although a similar method using these fea-

tures was already proposed, it handles these features separately.

On the other hand, the proposed algorithm utilizes these features

simultaneously to save computation time all the more. Since the

proposed algorithm rejects those codewords that are impossible

to be the nearest codeword, it produces the same output as

conventional full search algorithm. The simulation results confirm

the effectiveness of the proposed algorithm.

I. INTRODUCTION

V

ECTOR quantization (VQ) is a very efficient approach

to low bit rate image compression [1], [2]. VQ has the

potential to achieve coding performance close to the rate-

distortion limit with increasing vector dimension. However,

the utilization of VQ is severely limited by its encoding

complexity which increases exponentially with dimension.

Thus, it is important to reduce the computational complexity

of VQ for realizing its full potential.

Many researchers have looked into a fast encoding al-

gorithm to accelerate the VQ process. These works can be

classified into two groups. The first group does not solve the

nearest-neighbor problem itself but instead seeks a suboptimal

solution that is almost as good in the sense of mean squared

error (MSE). Usually they rely on the use of data structures

that facilitate fast search of the codebook such as TSVQ or

K-d tree [3], [4].

The second group addresses an exact solution of the nearest-

neighbor encoding problem. A very simple but effective

method is the partial distortion search (PDS) method reported

by Bei and Gray [5]. It is important to note that this method

requires no memory overhead, but provides only moderate

acceleration.

With some memory overhead, the fast nearest-neighbor

search (FNNS) algorithm [6], [7] and the projection method

saves great deal of computation time. FNNS algorithm uses

the triangle inequality and can reject a great many unlikely

codewords. However, this algorithm requires an additional

memory of size

to store the distances of all pairs

of codewords, where

is a codebook size. When is large,

the memory requirement can be a serious problem.

The projection method such as equal-average nearest neigh-

bor search (ENNS) algorithm [8], [9] uses the mean of an input

vector to cancel the unlikely codeword. This method shows

Manuscript received April 14, 1997. This work was supported by Korea

Telecom. The associate editor coordinating the review of this manuscript and

approving it for publication was Prof. R. Ansari.

The authors are with the School of Electrical Engineering, Seoul National

Publisher Item Identifier S 1070-9908(97)08973-6.

a great deal of computation time savings over conventional

full-search algorithm with only

additional memory. The

improved algorithm using the variance as well as the mean

of an input vector—we will call it the equal-average equal-

variance nearest-neighbor search (EENNS) algorithm—shows

more computation time savings with

additional memory

[10].

In this letter, we will examine ENNS and EENNS, algo-

rithm and present a new fast encoding algorithm. Though the

proposed algorithm uses the mean and the variance of an input

vector like EENNS, it develops a new inequality between these

features and the distance. Since the codeword searching area is

reduced with this inequality, the proposed algorithm requires

less computation time than EENNS algorithm.

II. T

HE PROPOSED ALGORITHM

Before describing the proposed algorithm, we will give a

definition and review ENNS and EENNS algorithm.

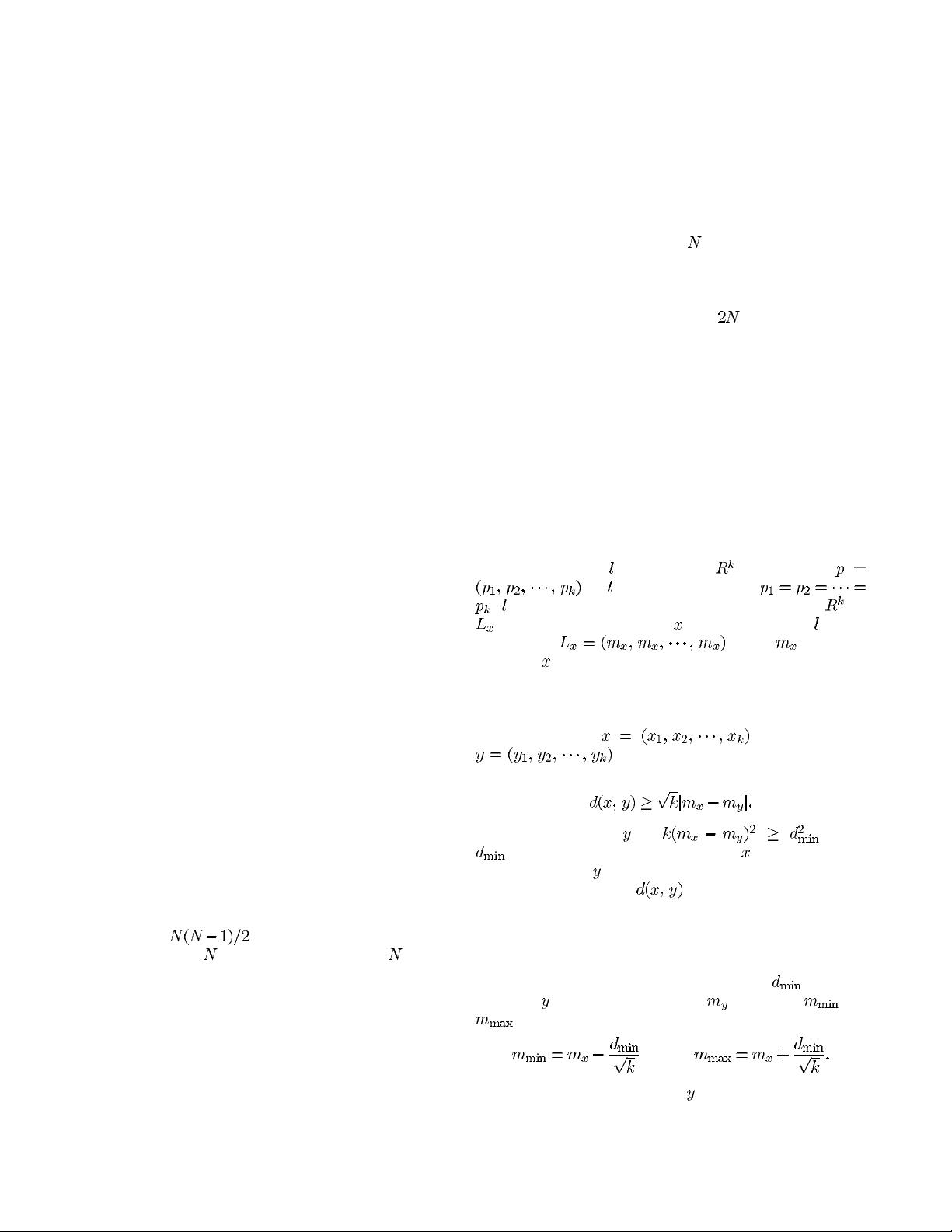

Definition 1: Let

be a line on . If any point

on satisfies the condition

, is called the central line or the central axis of .If

be the projection point of on the central line , it can

be seen that

where is a mean

of vector

.

ENNS algorithm uses the mean of a vector to reject many

unlikely codewords. The main logic of ENNS can be stated

as follows.

Theorem 1: Let

be a vector and

be a codeword. If the distortion is the

Euclidean distance, then

For any codeword ,if where

is a current minimum distance of represented by a

certain codeword,

can not be the nearest codeword and it is

unnecessary to calculate

[8], [9].

With the above theorem in hand, we now turn to describe

the ENNS algorithm. For an input vector, the algorithm

first calculates its mean value. Then the algorithm finds the

codeword that has the minimum mean difference to an input

vector and sets the current minimum distance

. For each

codeword

, the algorithm checks if is between and

, where

and

If the answer is no, codeword is rejected. Otherwise, the

distance from the input vector to the codeword is computed.

Since the vectors in the codebook are already sorted according

1070–9908/97$10.00 1997 IEEE