This article has been accepted for inclusion in a future issue of this journal. Content is final as presented, with the exception of pagination.

IEEE TRANSACTIONS ON CYBERNETICS 1

Feature Correlation Hypergraph: Exploiting

High-order Potentials for Multimodal Recognition

Luming Zhang, Yue Gao, Chaoqun Hong, Yinfu Feng, Jianke Zhu, Member, IEEE, and Deng Cai

Abstract—In computer vision and multimedia analysis, it is

common to use multiple features (or multimodal features) to

represent an object. For example, to well characterize a natural

scene image, we typically extract a set of visual features to

represent its color, texture, and shape. However, it is challeng-

ing to integrate multimodal features optimally. Since they are

usually high-order correlated, e.g., the histogram of gradient

(HOG), bag of scale invariant feature transform descriptors,

and wavelets are closely related because they collaboratively

reflect the image texture. Nevertheless, the existing algorithms

fail to capture the high-order correlation among multimodal

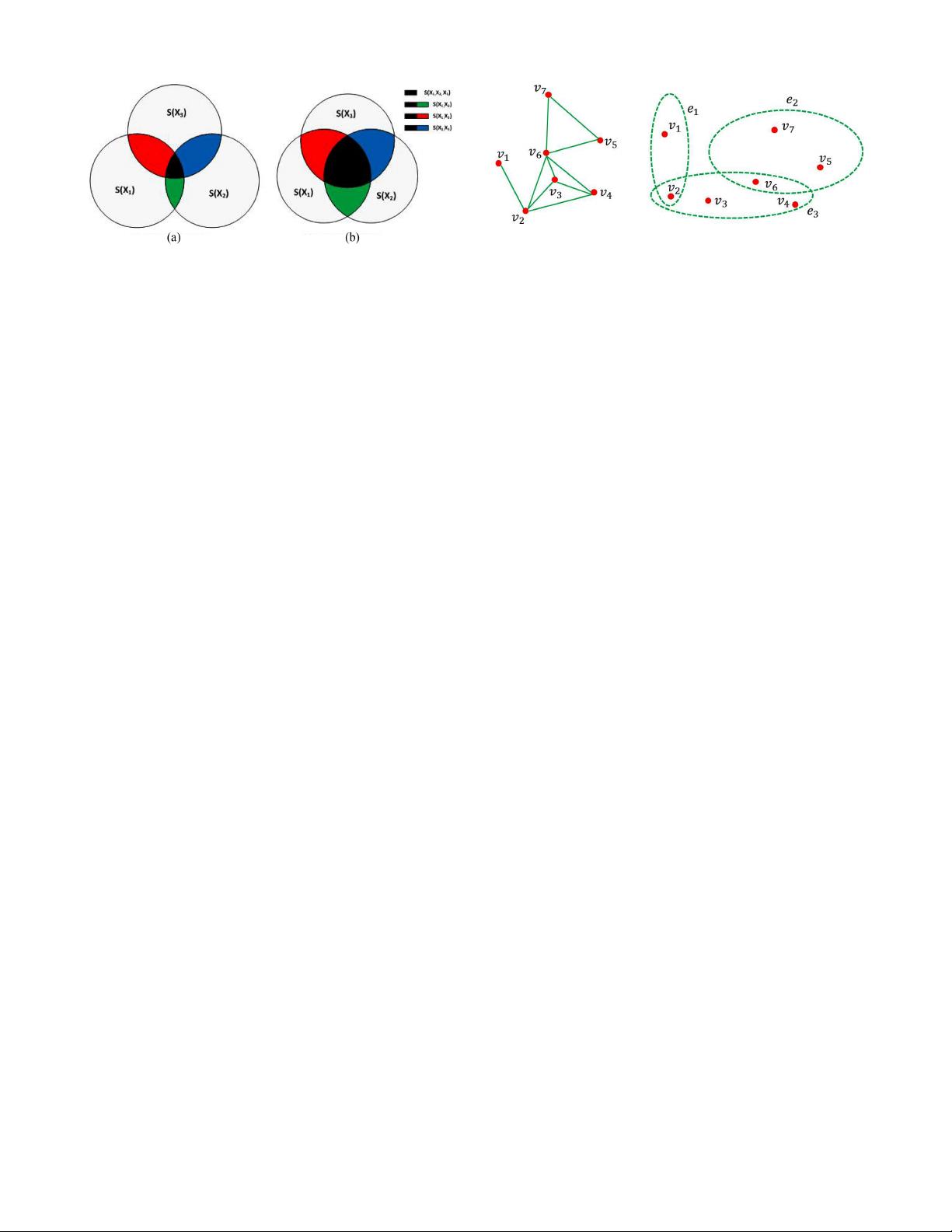

features. To solve this problem, we present a new multimodal

feature integration framework. Particularly, we first define a

new measure to capture the high-order correlation among the

multimodal features, which can be deemed as a direct extension of

the previous binary correlation. Therefore, we construct a feature

correlation hypergraph (FCH) to model the high-order relations

among multimodal features. Finally, a clustering algorithm is

performed on FCH to group the original multimodal features

into a set of partitions. Moreover, a multiclass boosting strategy

is developed to obtain a strong classifier by combining the weak

classifiers learned from each partition. The experimental results

on seven popular datasets show the effectiveness of our approach.

Index Terms—Feature correlation hypergraph, high-order re-

lations, multimodal features.

I. Introduction

T

O BETTER recognize objects, the human cognitive sys-

tem usually combines different types of features. For

example, it is difficult to separate the pear from the banana

by using color information alone because both are of yellow.

Similarly, it is difficult to separate apple from pear by using the

shape information alone. However, by combining both color

and shape into a more discriminative feature and employing it

Manuscript received December 13, 2012; revised June 7, 2013; accepted

September 22, 2013. This work was supported in part by the Singapore Na-

tional Research Foundation under its International Research Centre Singapore

Funding Initiative and administered by the IDM Programme Office, and in

part by the Natural Science Foundation of China under Grant 61202145. This

paper was recommended by Associate Editor H. Zhang.

L. Zhang and Y. Gao are with the School of Computing, Na-

tional University of Singapore, Singapore (e-mail: zglumg@zju.edu.cn;

kevin.gaoy@gmail.com).

C. Hong is with the Department of Computer Science, Xiamen University

of Technology, Xiamen, China (e-mail: cqhong@xmut.edu.cn).

Y. Feng and J. Zhu are with the College of Computer Sci-

ence, Zhejiang University, Zhejiang, China (e-mail: yinfufeng@zju.edu.cn;

jianke11@zju.edu.cn).

D. Cai is with the State Key Laboratory of CAD & CG, College of Computer

Science, Zhejiang University, Zhejiang, China (e-mail: dengcaii@zju.edu.cn).

Color versions of one or more of the figures in this paper are available

online at http://ieeexplore.ieee.org.

Digital Object Identifier 10.1109/TCYB.2013.2285219

for recognition, these fruits can be recognized more easily and

accurately. Motivated by this instance, researchers managed to

improve the recognition accuracy by integrating multimodal

features in the recognition process. In contrast to the con-

ventional single modal approaches, the multimodal features

contain the richer cues, and if such different modalities of

features are integrated optimally, a great enhancement can be

obtained in the process of patternrecognition.

Our work closely relates to three research topics: multicue

integration, modality identification integration, and hypergraph

learning methods.

A. Multicue-Based Integration

Multicue integration treats each multimodal feature as a

modality. In the literature, a series of multicue integration

methods have been proposed. In [1], each multimodal feature

corresponds to a subclassifier and recognition accuracy of

the subclassifier with the highest accuracy is treated as the

final decision. Kittler et al. [2], [40] proposed a theoretical

framework to combine the decisions from subclassifiers though

several classifier combining strategies, such as product rule or

sum rule are derived and analyzed. Greene et al. [3] proposed

a supervised algorithm for multimodal features combination,

wherein the combination is performed by applying matrix fac-

torization to group the related clusters produced on individual

views. Zhao et al. [4] proposed a multimodal feature selection

method by adjusting the covariance matrix obtained from the

multimodal features. Multiple kernel learning (MKL) [5] lin-

early combines kernels from the different multimodal features

into a more expressive one. As a generalized version of MKL,

LPBoost [6] adopts a boosting strategy to integrate multimodal

features. By exploring the complementary property of differ-

ent multimodal features, Multiview spectral embedding [7]

and Multiview stochastic neighbor embedding(m-SNE) [8]

obtains a physical meaningful embedding of the multimodal

features. It is noticeable that, all the above multicue integration

methods treat each multimodal as one modality, which is

heuristic-based one, since the interdependency between dif-

ferent features is left unexplored. Foresti and Snidaro [53],

Yang et al. [54] for detecting and tracking people, and

Wang et al. [55] and Kankanhalli et al. [57] for video surveil-

lance and traffic monitoring.

B. Modality Identification-Based Integration

In order to overcome the limitation of multicue integration,

modality identification integration is proposed by rearranging

2168-2267

c

2013 IEEE