SUPPORT VECTOR MACHINES

Introduction

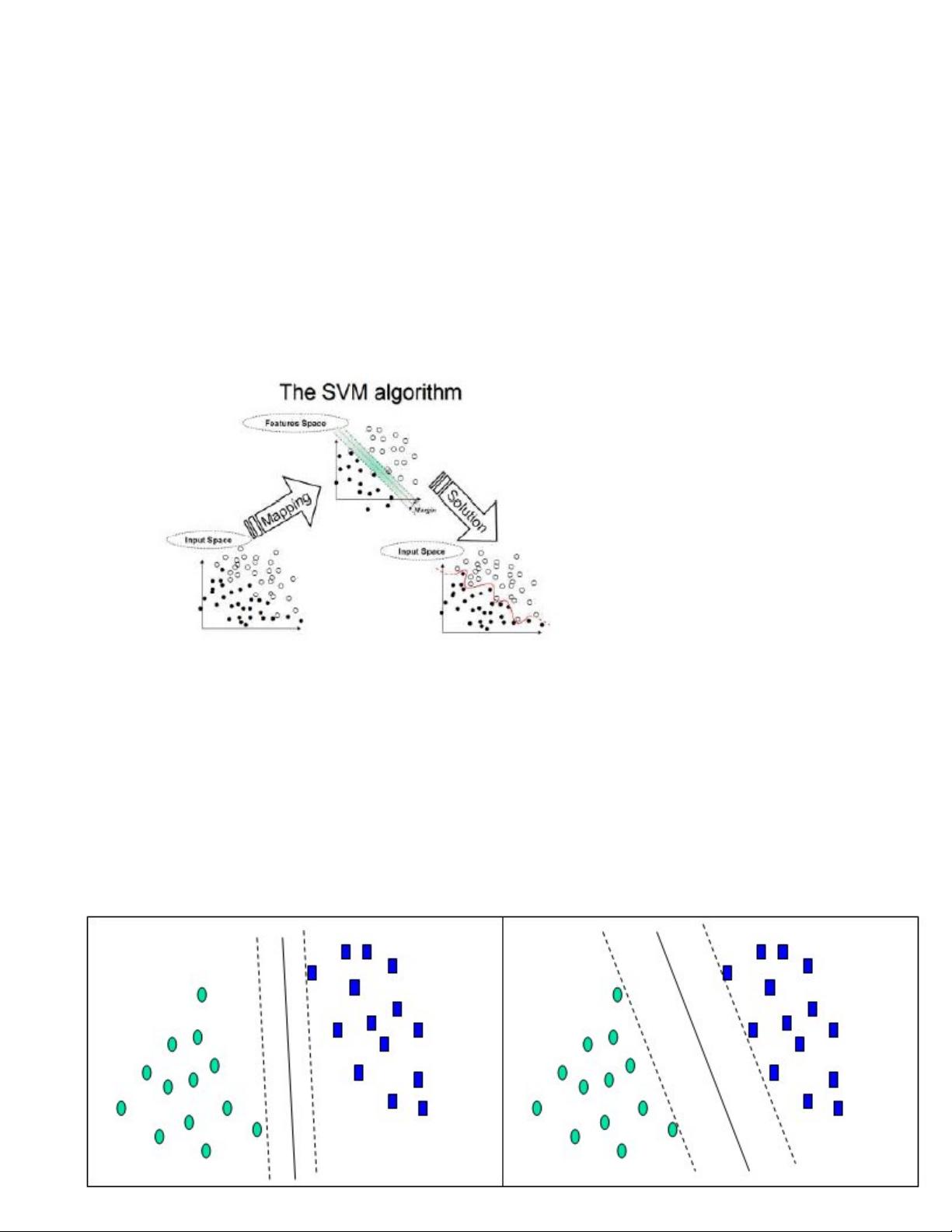

Support vector machines (SVMs) are a set of related supervised learning methods that analyze data and recognize patterns, and are used for classification and regression analysis. In its basic form an SVM is a binary classifier: given a set of training examples, each marked as belonging to one of two categories, an SVM training algorithm builds a model that predicts whether a new example falls into one category or the other. Intuitively, an SVM model is a representation of the examples as points in space, mapped so that the examples of the separate categories are divided by a clear gap that is as wide as possible. New examples are then mapped into that same space and predicted to belong to a category based on which side of the gap they fall on.
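
The "widest gap" idea can be illustrated in one dimension, where the best separating point is simply the midpoint between the two closest examples of opposite classes. The sketch below is only an illustration under strong assumptions: the data are one-dimensional and separable, every negative example lies to the left of every positive one, and the names `fit_threshold` and `predict` are hypothetical helpers rather than part of any library.

```python
def fit_threshold(negatives, positives):
    """Return (threshold, gap_width) for 1-D separable data.

    Assumes every negative example lies strictly left of every positive one.
    """
    left = max(negatives)    # negative example closest to the gap
    right = min(positives)   # positive example closest to the gap
    if left >= right:
        raise ValueError("classes overlap; no separating gap exists")
    # The midpoint keeps the boundary as far as possible from both classes.
    return (left + right) / 2, right - left


def predict(x, threshold):
    """Assign a category by the side of the threshold that x falls on."""
    return 1 if x > threshold else -1


negatives = [1.0, 2.0, 3.0]
positives = [6.0, 7.0, 9.0]
threshold, gap = fit_threshold(negatives, positives)
print("threshold:", threshold, "gap width:", gap)
print("new examples:", predict(4.0, threshold), predict(5.0, threshold))
```

Only the two examples bordering the gap (3.0 and 6.0 here) determine the threshold; moving any other example without crossing them leaves the model unchanged.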

More formally, a support vector machine constructs a hyperplane or set of hyperplanes in a high- or infinite-dimensional space, which can be used for classification, regression, or other tasks. Intuitively, a good separation is achieved by the hyperplane that has the largest distance to the nearest training data points of any class (the so-called margin), since in general the larger the margin, the lower the generalization error of the classifier.
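
To make the margin concrete, the following sketch compares two candidate hyperplanes on a toy two-dimensional data set by measuring the distance from each hyperplane to its nearest training point. The data, the two hyperplanes, and the helper name `margin` are all assumptions chosen for illustration; the distance formula |w·x + b| / ‖w‖ is the standard point-to-hyperplane distance.

```python
import math


def margin(w, b, points):
    """Smallest distance from the hyperplane w.x + b = 0 to any point."""
    norm = math.hypot(*w)
    return min(
        abs(sum(wi * xi for wi, xi in zip(w, x)) + b) / norm
        for x in points
    )


# Toy training set (hypothetical): two well-separated clusters.
negatives = [(1.0, 1.0), (2.0, 1.0), (1.0, 2.0)]
positives = [(4.0, 4.0), (5.0, 4.0), (4.0, 5.0)]
points = negatives + positives

# Both lines separate the classes, but they sit at different positions.
centered = ((1.0, 1.0), -5.5)   # x + y = 5.5, midway between the clusters
off_center = ((1.0, 1.0), -4.0)  # x + y = 4.0, close to the negative cluster

margin_centered = margin(*centered, points)
margin_off_center = margin(*off_center, points)
print("centered margin:  ", margin_centered)
print("off-center margin:", margin_off_center)
```

The centered line has the larger margin, so under the argument above it would be the preferred separator of the two.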

Whereas the original problem may be stated in a finite-dimensional space, it often happens that in that space the sets to be discriminated are not linearly separable. For this reason it was proposed that the original finite-dimensional space be mapped into a much higher-dimensional space, presumably making the separation easier in that space. SVM schemes use a mapping into a larger space chosen so that dot products may be computed easily in terms of the variables in the original space, which keeps the computational load reasonable. The dot products in the larger space are defined in terms of a kernel function K(x,y), which can be selected to suit the problem. The hyperplanes in the larger space are defined as the set of points whose dot product with a vector in that space is constant.
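
A small numerical check can show why a kernel keeps the computation cheap. The sketch below assumes one particular kernel, the homogeneous quadratic kernel K(x,y) = (x·y)² on two-dimensional inputs, whose explicit mapping into the larger space is φ(x) = (x₁², √2·x₁x₂, x₂²). The names `phi`, `dot`, and `kernel` are illustrative choices, not something prescribed by the text.

```python
import math


def dot(u, v):
    """Ordinary dot product of two equal-length vectors."""
    return sum(ui * vi for ui, vi in zip(u, v))


def phi(x):
    """Explicit map from 2-D input space into a 3-D feature space."""
    x1, x2 = x
    return (x1 * x1, math.sqrt(2) * x1 * x2, x2 * x2)


def kernel(x, y):
    """Quadratic kernel: evaluated entirely in the original 2-D space."""
    return dot(x, y) ** 2


x, y = (1.0, 2.0), (3.0, 4.0)
explicit = dot(phi(x), phi(y))  # dot product computed in the larger space
implicit = kernel(x, y)         # same value, without ever building phi
print(explicit, implicit)
```

Both routes give the same number up to floating-point rounding, but the kernel route never constructs the higher-dimensional vectors, which matters when the larger space is very large or infinite-dimensional.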

The vectors defining the hyperplanes can be chosen to be linear combinations, with parameters $\alpha_i$, of the images of feature vectors that occur in the database. With this choice of hyperplane, the points x in the feature space that are mapped into the hyperplane are defined by the relation:

$$\sum_i \alpha_i K(x_i, x) = \text{constant}$$
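
As a sketch of how such a relation can be evaluated, assume it takes the standard form Σᵢ αᵢ K(xᵢ, x) = constant, with the constant taken to be 0. The example below uses four hypothetical stored feature vectors arranged in an XOR pattern, hand-picked signed coefficients αᵢ (not the output of any training procedure), and the quadratic kernel K(x,y) = (x·y)²; all of these are assumptions made for illustration.

```python
def kernel(x, y):
    """Quadratic kernel K(x, y) = (x . y)^2, an assumed choice."""
    return sum(xi * yi for xi, yi in zip(x, y)) ** 2


# Hypothetical stored feature vectors and their signed coefficients alpha_i.
stored = [(1.0, 1.0), (-1.0, -1.0), (1.0, -1.0), (-1.0, 1.0)]
alphas = [0.25, 0.25, -0.25, -0.25]


def decision(x, constant=0.0):
    """Value of sum_i alpha_i * K(x_i, x) minus the chosen constant."""
    return sum(a * kernel(xi, x) for a, xi in zip(alphas, stored)) - constant


def classify(x):
    """Side of the hyperplane (in the larger space) that x maps to."""
    return 1 if decision(x) > 0 else -1


print(decision((1.0, 1.0)), decision((1.0, -1.0)))
print(decision((0.0, 3.0)))  # a point whose image lies on the hyperplane
```

With these particular values the sum simplifies to 2·x₁·x₂, so the points satisfying the relation are the two coordinate axes: a set that is a hyperplane in the larger space but not a straight line in the original one.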
