# PoolFormer: [MetaFormer is Actually What You Need for Vision](https://arxiv.org/abs/2111.11418) (CVPR 2022 Oral)

<p align="center">

<a href="https://arxiv.org/abs/2111.11418" alt="arXiv">

<img src="https://img.shields.io/badge/arXiv-2111.11418-b31b1b.svg?style=flat" /></a>

<a href="https://huggingface.co/spaces/akhaliq/poolformer" alt="Hugging Face Spaces">

<img src="https://img.shields.io/badge/%F0%9F%A4%97%20Hugging%20Face-Spaces-blue" /></a>

<a href="https://colab.research.google.com/github/sail-sg/poolformer/blob/main/misc/poolformer_demo.ipynb" alt="Colab">

<img src="https://colab.research.google.com/assets/colab-badge.svg" /></a>

</p>

This is a PyTorch implementation of **PoolFormer** proposed by our paper "[MetaFormer is Actually What You Need for Vision](https://arxiv.org/abs/2111.11418)" (CVPR 2022 Oral).

**Note**: Instead of designing complicated token mixers to achieve SOTA performance, this work aims to demonstrate that the competence of transformer models largely stems from the general architecture MetaFormer. Pooling and PoolFormer are just tools to support this claim.

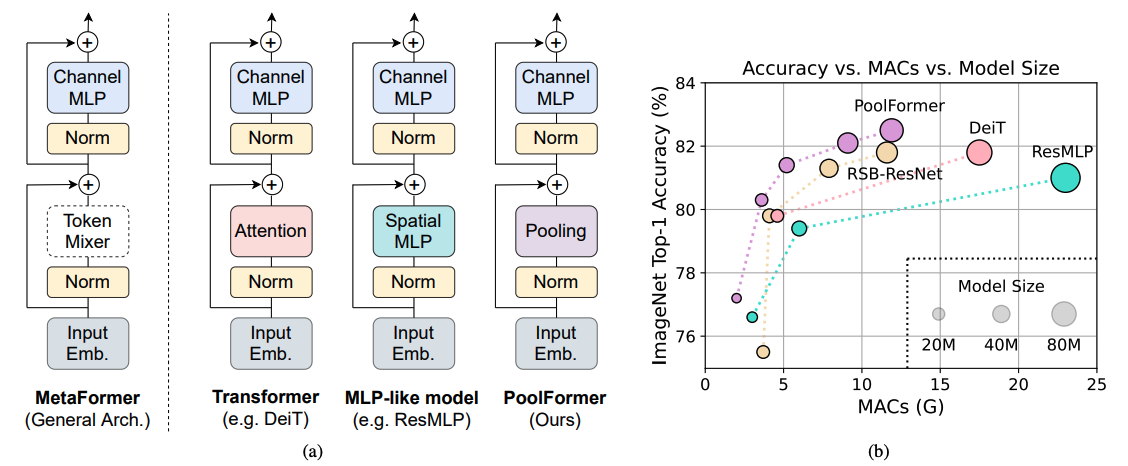

Figure 1: **MetaFormer and performance of MetaFormer-based models on ImageNet-1K validation set.**

We argue that the competence of transformer/MLP-like models primarily stems from the general architecture MetaFormer rather than from the specific token mixers they are equipped with.

To demonstrate this, we use an embarrassingly simple non-parametric operator, pooling, to perform only the most basic token mixing.
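The pooling operator can be sketched in a few lines. This NumPy version is an illustration under assumptions, not the repository's PyTorch code: it works on a single (C, H, W) feature map, uses an odd window (3 by default) with stride 1 and padding that keeps the spatial size, leaves padded positions out of each average, and returns the pooled map minus its input, which is our reading of the paper (the block's residual connection adds the input back). The name `pool_token_mixer` is hypothetical.

```python
import numpy as np


def pool_token_mixer(x: np.ndarray, pool_size: int = 3) -> np.ndarray:
    """Average-pool each channel of a (C, H, W) map and subtract the input.

    Hypothetical sketch: stride 1, 'same' zero padding, and padded positions
    are excluded from each window's average (like count_include_pad=False).
    """
    if pool_size % 2 != 1:
        raise ValueError("this sketch only supports odd pool sizes")
    channels, h, w = x.shape
    pad = pool_size // 2
    # Zero-pad the spatial dims only; channels are never mixed with each other.
    padded = np.pad(x.astype(float), ((0, 0), (pad, pad), (pad, pad)))
    # The same padding applied to a mask of ones counts the real pixels per window.
    mask = np.pad(np.ones((h, w)), pad)
    window_sum = np.zeros((channels, h, w))
    window_count = np.zeros((h, w))
    for di in range(pool_size):
        for dj in range(pool_size):
            window_sum += padded[:, di:di + h, dj:dj + w]
            window_count += mask[di:di + h, dj:dj + w]
    pooled = window_sum / window_count  # (C, H, W) / (H, W) broadcasts per channel
    return pooled - x


x = np.arange(9, dtype=float).reshape(1, 3, 3)
out = pool_token_mixer(x)
print(out[0, 1, 1], out[0, 0, 0])  # 0.0 2.0
```

Because each output is a local average minus the centre value, a constant feature map is mapped to zeros, and the operator has no learnable parameters, consistent with its description as non-parametric.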

Surprisingly, the resulting model, PoolFormer, consistently outperforms DeiT and ResMLP, as shown in (b), which strongly supports the claim that MetaFormer is actually what we need to achieve competitive performance. RSB-ResNet in (b) denotes results from “ResNet Strikes Back”, where ResNet is trained with an improved training procedure for 300 epochs.

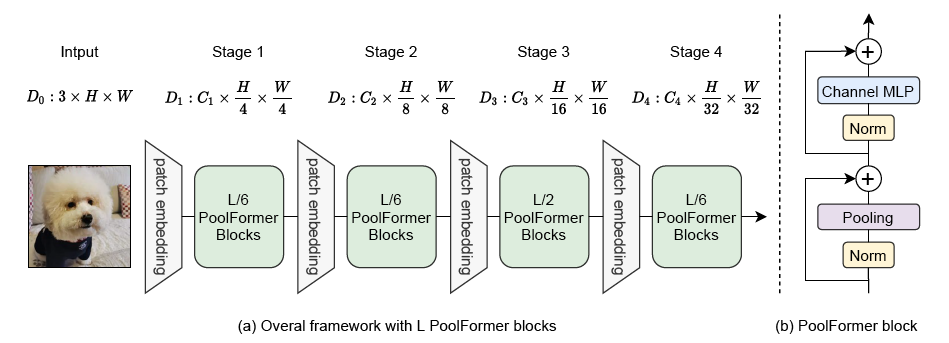

Figure 2: (a) **The overall framework of PoolFormer.** (b) **The architecture of the PoolFormer block.** Compared with the transformer block, it replaces attention with an extremely simple non-parametric operator, pooling, to perform only basic token mixing.
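Following Figure 2(b), a block applies a normalization, the token mixer and a residual add, then a normalization, a channel MLP and a second residual add. The NumPy sketch below is an illustration under assumptions, not the released implementation: the normalization is assumed to be GroupNorm with one group and no affine parameters, the MLP is assumed to be two channel-wise linear layers with a tanh-approximated GELU, extras such as drop path (configured in the training section) are omitted, and the names `metaformer_block`, `no_mixer`, `w1` and `w2` are hypothetical. The token mixer is passed in as a function, reflecting the MetaFormer view that the surrounding block is general while the mixer is interchangeable.

```python
from typing import Callable

import numpy as np


def group_norm_one_group(x: np.ndarray, eps: float = 1e-5) -> np.ndarray:
    # Normalize over all of (C, H, W) at once: GroupNorm with a single group, no affine.
    return (x - x.mean()) / np.sqrt(x.var() + eps)


def gelu(x: np.ndarray) -> np.ndarray:
    # tanh approximation of GELU
    return 0.5 * x * (1.0 + np.tanh(np.sqrt(2.0 / np.pi) * (x + 0.044715 * x**3)))


def channel_mlp(x: np.ndarray, w1: np.ndarray, w2: np.ndarray) -> np.ndarray:
    # Two per-position linear layers (equivalent to 1x1 convs): C -> hidden -> C.
    hidden_act = gelu(np.einsum("hc,cij->hij", w1, x))
    return np.einsum("ch,hij->cij", w2, hidden_act)


def metaformer_block(
    x: np.ndarray,
    token_mixer: Callable[[np.ndarray], np.ndarray],
    w1: np.ndarray,
    w2: np.ndarray,
) -> np.ndarray:
    # Sub-block 1: norm -> token mixer (e.g. pooling) -> residual add.
    x = x + token_mixer(group_norm_one_group(x))
    # Sub-block 2: norm -> channel MLP -> residual add.
    x = x + channel_mlp(group_norm_one_group(x), w1, w2)
    return x


def no_mixer(t: np.ndarray) -> np.ndarray:
    # Stand-in mixer that contributes nothing; swap in a pooling mixer here.
    return np.zeros_like(t)


rng = np.random.default_rng(0)
c, hidden = 4, 16  # hidden = 4 * C is an assumed MLP ratio for this sketch
x = rng.standard_normal((c, 7, 7))
w1 = 0.02 * rng.standard_normal((hidden, c))
w2 = 0.02 * rng.standard_normal((c, hidden))
y = metaformer_block(x, no_mixer, w1, w2)
print(y.shape)  # (4, 7, 7)
```

Replacing `no_mixer` with a pooling function (or attention, or a spatial MLP) changes only the token-mixing step, which is exactly the comparison the MetaFormer argument rests on.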

## Bibtex

```
@article{yu2021metaformer,
  title={MetaFormer is Actually What You Need for Vision},
  author={Yu, Weihao and Luo, Mi and Zhou, Pan and Si, Chenyang and Zhou, Yichen and Wang, Xinchao and Feng, Jiashi and Yan, Shuicheng},
  journal={arXiv preprint arXiv:2111.11418},
  year={2021}
}
```

**Detection and instance segmentation on COCO** configs and trained models are [here](detection/).

**Semantic segmentation on ADE20K** configs and trained models are [here](segmentation/).

## Image Classification

### 1. Requirements

torch>=1.7.0; torchvision>=0.8.0; pyyaml; [apex-amp](https://github.com/NVIDIA/apex) (if you want to use fp16); [timm](https://github.com/rwightman/pytorch-image-models) (`pip install git+https://github.com/rwightman/pytorch-image-models.git@9d6aad44f8fd32e89e5cca503efe3ada5071cc2a`)

Data preparation: ImageNet with the following folder structure. You can extract ImageNet with this [script](https://gist.github.com/BIGBALLON/8a71d225eff18d88e469e6ea9b39cef4).

```
│imagenet/
├──train/
│  ├── n01440764
│  │   ├── n01440764_10026.JPEG
│  │   ├── n01440764_10027.JPEG
│  │   ├── ......
│  ├── ......
├──val/
│  ├── n01440764
│  │   ├── ILSVRC2012_val_00000293.JPEG
│  │   ├── ILSVRC2012_val_00002138.JPEG
│  │   ├── ......
│  ├── ......
```
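Before training, it can help to sanity-check that the extracted dataset matches the layout above. A minimal sketch using only the Python standard library; the function name `check_imagenet_layout` is hypothetical, and the check only looks for `train/` and `val/` folders that each contain class folders with `.JPEG` files, as in the tree shown.

```python
from pathlib import Path


def check_imagenet_layout(root: str) -> dict:
    """Count class folders and .JPEG files under root/train and root/val.

    Raises FileNotFoundError if a split folder is missing or has no class folders.
    """
    summary = {}
    for split in ("train", "val"):
        split_dir = Path(root) / split
        if not split_dir.is_dir():
            raise FileNotFoundError(f"missing split folder: {split_dir}")
        class_dirs = sorted(p for p in split_dir.iterdir() if p.is_dir())
        if not class_dirs:
            raise FileNotFoundError(f"no class folders in {split_dir}")
        # Suffix match is case-sensitive, matching the .JPEG names in the tree.
        num_images = sum(
            1 for d in class_dirs for f in d.iterdir() if f.suffix == ".JPEG"
        )
        summary[split] = {"classes": len(class_dirs), "images": num_images}
    return summary
```

For the standard ILSVRC-2012 data, `check_imagenet_layout("/path/to/imagenet")` should report 1,000 class folders in each split, with roughly 1.28M training and 50,000 validation images.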

### 2. PoolFormer Models

| Model | #Params | Image resolution | Top-1 Acc (%) | Download |
| :--- | :---: | :---: | :---: | :---: |
| poolformer_s12 | 12M | 224 | 77.2 | [here](https://github.com/sail-sg/poolformer/releases/download/v1.0/poolformer_s12.pth.tar) |
| poolformer_s24 | 21M | 224 | 80.3 | [here](https://github.com/sail-sg/poolformer/releases/download/v1.0/poolformer_s24.pth.tar) |
| poolformer_s36 | 31M | 224 | 81.4 | [here](https://github.com/sail-sg/poolformer/releases/download/v1.0/poolformer_s36.pth.tar) |
| poolformer_m36 | 56M | 224 | 82.1 | [here](https://github.com/sail-sg/poolformer/releases/download/v1.0/poolformer_m36.pth.tar) |
| poolformer_m48 | 73M | 224 | 82.5 | [here](https://github.com/sail-sg/poolformer/releases/download/v1.0/poolformer_m48.pth.tar) |

All the pretrained models can also be downloaded from [BaiDu Yun](https://pan.baidu.com/s/1HSaJtxgCkUlawurQLq87wQ) (password: esac).

#### Comparison with improved ResNet scores

#### Web Demo

Integrated into [Hugging Face Spaces 🤗](https://huggingface.co/spaces) using [Gradio](https://github.com/gradio-app/gradio). Try out the web demo: [PoolFormer on Hugging Face Spaces](https://huggingface.co/spaces/akhaliq/poolformer)

#### Usage

We also provide a Colab notebook that runs the inference steps with PoolFormer: [Open in Colab](https://colab.research.google.com/github/sail-sg/poolformer/blob/main/misc/poolformer_demo.ipynb)

### 3. Validation

To evaluate our PoolFormer models, run:

```bash
MODEL=poolformer_s12 # poolformer_{s12, s24, s36, m36, m48}
python3 validate.py /path/to/imagenet --model $MODEL \
  --checkpoint /path/to/checkpoint -b 128
```
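The model table reports top-1 accuracy on the ImageNet-1K validation set, in percent: a prediction counts as correct when the highest-scoring class matches the label. As a reminder of that definition (this is not the code inside `validate.py`), here is a minimal pure-Python sketch; `top_k_accuracy` is a hypothetical helper.

```python
from typing import Sequence


def top_k_accuracy(
    logits: Sequence[Sequence[float]], labels: Sequence[int], k: int = 1
) -> float:
    """Percentage of samples whose true label is among the k highest-scoring classes."""
    correct = 0
    for scores, label in zip(logits, labels):
        # Indices of the k largest scores for this sample.
        top_k = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
        correct += int(label in top_k)
    return 100.0 * correct / len(labels)


logits = [[0.1, 2.0, 0.3], [1.5, 0.2, 0.9], [0.0, 0.4, 0.5]]
labels = [1, 2, 1]
print(top_k_accuracy(logits, labels))       # about 33.3: only the first sample is right
print(top_k_accuracy(logits, labels, k=2))  # 100.0
```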

### 4. Train

We show how to train PoolFormers on 8 GPUs. The learning rate scales linearly with the batch size: `lr = bs/1024 * 1e-3`, where `bs` is the total batch size across all GPUs (8 × 128 = 1024 in the command below).

For convenience, the command assumes a total batch size of 1024, so the learning rate is set to 1e-3 (for a batch size of 1024, a learning rate of 2e-3 sometimes gives better performance).
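The scaling rule above is linear in the total batch size, which is the per-GPU `-b` value times the number of GPUs. A minimal sketch; `scaled_lr` is a hypothetical helper, not part of the repository.

```python
def scaled_lr(
    per_gpu_batch: int, num_gpus: int, base_lr: float = 1e-3, base_batch: int = 1024
) -> float:
    """lr = total_batch / 1024 * 1e-3, following the rule stated in this README."""
    total_batch = per_gpu_batch * num_gpus
    return total_batch / base_batch * base_lr


print(scaled_lr(128, 8))  # 0.001, matching -b 128 on 8 GPUs with --lr 1e-3
print(scaled_lr(64, 8))   # 0.0005
```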

```bash
MODEL=poolformer_s12 # poolformer_{s12, s24, s36, m36, m48}
DROP_PATH=0.1 # drop path rates [0.1, 0.1, 0.2, 0.3, 0.4] corresponding to models [s12, s24, s36, m36, m48]
CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 ./distributed_train.sh 8 /path/to/imagenet \
  --model $MODEL -b 128 --lr 1e-3 --drop-path $DROP_PATH --apex-amp
```
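When switching models, `MODEL` and `DROP_PATH` have to change together. The pairing from the comment in the training command above can be kept in one place with a small helper that prints the matching command; this is a hypothetical convenience sketch (`train_command` and `DROP_PATH` are illustrative names) that reuses only the flags shown above.

```python
import shlex

# Drop path rate per model, as listed in the comment of the training command.
DROP_PATH = {
    "poolformer_s12": 0.1,
    "poolformer_s24": 0.1,
    "poolformer_s36": 0.2,
    "poolformer_m36": 0.3,
    "poolformer_m48": 0.4,
}


def train_command(
    model: str,
    data_dir: str = "/path/to/imagenet",
    num_gpus: int = 8,
    per_gpu_batch: int = 128,
    lr: str = "1e-3",
) -> str:
    """Build the distributed_train.sh command line for one PoolFormer variant."""
    if model not in DROP_PATH:
        raise ValueError(f"unknown model {model!r}; expected one of {sorted(DROP_PATH)}")
    devices = ",".join(str(i) for i in range(num_gpus))
    args = [
        "./distributed_train.sh", str(num_gpus), data_dir,
        "--model", model, "-b", str(per_gpu_batch), "--lr", lr,
        "--drop-path", str(DROP_PATH[model]), "--apex-amp",
    ]
    # shlex.join quotes any argument that would otherwise be split by the shell.
    return f"CUDA_VISIBLE_DEVICES={devices} " + shlex.join(args)


print(train_command("poolformer_m36"))
```

If you change `num_gpus` or `per_gpu_batch`, adjust `lr` as well so that it still follows the batch-size rule given earlier in this section.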

## Acknowledgment

Our implementation is mainly based on the following codebases. We gratefully thank the authors for their wonderful work.

[pytorch-image-models](https://github.com/rwightman/pytorch-image-models), [mmdetection](https://github.com/open-mmlab/mmdetection), [mmsegmentation](https://github.com/open-mmlab/mmsegmentation).

In addition, Weihao Yu would like to thank the TPU Research Cloud (TRC) program for supporting part of the computational resources.
