
AIGC video generation model: StreamingT2V, compatible with SVD and AnimateDiff

Uploaded 2024-04-16 14:21:58 · PDF, 16.25 MB, 22 pages

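Ultra-long video generation!
1. Picsart AI Research and other teams have released StreamingT2V, an open-source AI video model that generates videos up to 2 minutes (1200 frames) long, exceeding the 60-second length of Sora.
2. StreamingT2V is compatible with models such as SVD and AnimateDiff, which helps the open-source ecosystem grow; in theory, it can generate infinitely long videos.
3. The model uses techniques such as a conditional attention module and an appearance preservation module to ensure temporal consistency and high image quality.
4. Application scenarios include film, games, and training environments for virtual worlds. The open-source code and demo of StreamingT2V are freely available on GitHub and Hugging Face.
Demo: https://huggingface.co/spaces/PAIR/StreamingT2V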


StreamingT2V: Consistent, Dynamic, and Extendable Long Video Generation from Text

Roberto Henschel¹*, Levon Khachatryan¹*, Daniil Hayrapetyan¹*, Hayk Poghosyan¹, Vahram Tadevosyan¹, Zhangyang Wang¹,², Shant Navasardyan¹, Humphrey Shi¹,³

¹Picsart AI Research (PAIR)   ²UT Austin   ³SHI Labs @ Georgia Tech, Oregon & UIUC

https://github.com/Picsart-AI-Research/StreamingT2V

Figure 1. StreamingT2V is an advanced autoregressive technique that enables the creation of long videos featuring rich motion dynamics without any stagnation. It ensures temporal consistency throughout the video, aligns closely with the descriptive text, and maintains high frame-level image quality. Our demonstrations include successful examples of videos up to 1200 frames, spanning 2 minutes, and the approach can be extended to even longer durations. Importantly, the effectiveness of StreamingT2V is not limited by the specific Text2Video model used, indicating that improvements in base models could yield even higher-quality videos.

Abstract

Text-to-video diffusion models enable the generation of high-quality videos that follow text instructions, making it easy to create diverse and individual content. However, existing approaches mostly focus on high-quality short video generation (typically 16 or 24 frames), ending up with hard cuts when naively extended to long video synthesis. To overcome these limitations, we introduce StreamingT2V, an autoregressive approach for long video generation of 80, 240, 600, 1200 or more frames with smooth transitions. The key components are: (i) a short-term memory block called conditional attention module (CAM), which conditions the current generation on the features extracted from the previous chunk via an attentional mechanism, leading to consistent chunk transitions, (ii) a long-term memory block called appearance preservation module, which extracts high-level scene and object features from the first video chunk to prevent the model from forgetting the initial scene, and (iii) a randomized blending approach that makes it possible to apply a video enhancer autoregressively to infinitely long videos without inconsistencies between chunks. Experiments show that StreamingT2V generates a high amount of motion. In contrast, all competing image-to-video methods are prone to video stagnation when applied naively in an autoregressive manner. Thus, with StreamingT2V we propose a high-quality, seamless text-to-long-video generator that outperforms competitors in consistency and motion.

*Equal contribution.

arXiv:2403.14773v1 [cs.CV] 21 Mar 2024

1. Introduction

In recent years, with the rise of Diffusion Models [15, 26, 28, 34], the task of text-guided image synthesis and manipulation has gained enormous attention from the community. The huge success in image generation led to the further extension of diffusion models to generate videos conditioned on textual prompts [4, 5, 7, 11–13, 17, 18, 20, 32, 37, 39, 45]. Despite the impressive generation quality and text alignment, most existing approaches, such as [4, 5, 17, 39, 45], focus on generating short frame sequences (typically 16 or 24 frames). However, short videos are of limited use in real-world applications such as ad making, storytelling, etc.

The naïve approach of simply training existing methods on long videos (e.g. ≥ 64 frames) is normally infeasible. Even for generating short sequences, very expensive training (e.g. more than 260K steps with a batch size of 4500 [39]) is typically required. Without training on longer videos, video quality commonly degrades when short video generators are made to output long videos (see appendix).

Existing approaches, such as [5, 17, 23], thus extend the baselines to autoregressively generate short video chunks conditioned on the last frame(s) of the previous chunk. However, the straightforward long-video generation approach of simply concatenating the noisy latents of a video chunk with the last frame(s) of the previous chunk leads to poor conditioning with inconsistent scene transitions (see Sec. 5.3). Some works [4, 8, 40, 43, 48] also incorporate CLIP [25] image embeddings of the last frame of the previous chunk, achieving slightly better consistency, but they are still prone to inconsistent global motion across chunks (see Fig. 5), as the CLIP image encoder loses information important for perfectly reconstructing the conditional frames. The concurrent work SparseCtrl [12] utilizes a more sophisticated conditioning mechanism based on a sparse encoder. Its architecture requires concatenating additional zero-filled frames to the conditioning frames before they are plugged into the sparse encoder. However, this inconsistency in the input leads to inconsistencies in the output (see Sec. 5.4). Moreover, we observed that all image-to-video methods that we evaluated in our experiments (see Sec. 5.4) eventually lead to video stagnation when applied autoregressively by conditioning on the last frame of the previous chunk.

To overcome the weaknesses and limitations of current works, we propose StreamingT2V, an autoregressive text-to-video method equipped with long/short-term memory blocks that generates long videos without temporal inconsistencies.

To this end, we propose the Conditional Attention Module (CAM) which, due to its attentional nature, effectively borrows the content information from the previous frames to generate new ones, while not restricting their motion by the previous structures/shapes. Thanks to CAM, our results are smooth, with artifact-free video chunk transitions.

Existing approaches are not only prone to temporal inconsistencies and video stagnation, but they also suffer from object appearance/characteristic changes and video quality degradation over time (see e.g., SVD [4] in Fig. 7). The reason is that, due to conditioning only on the last frame(s) of the previous chunk, they overlook the long-term dependencies of the autoregressive process. To address this issue, we design an Appearance Preservation Module (APM) that extracts object or global scene appearance information from an initial image (anchor frame) and conditions the video generation process of all chunks with that information, which helps to keep object and scene features across the autoregressive process.

To further improve the quality and resolution of our long video generation, we adapt a video enhancement model for autoregressive generation. For this purpose, we choose a high-resolution text-to-video model and utilize the SDEdit [22] approach for enhancing consecutive 24-frame chunks of our video that overlap by 8 frames. To make the chunk enhancement transitions smooth, we design a randomized blending approach for seamless blending of overlapping enhanced chunks.
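The chunking described above (24-frame chunks that overlap by 8 frames, i.e. a stride of 16 frames) can be sketched as follows. This is a minimal Python sketch under our own assumptions: the function name `chunk_starts` is hypothetical, and the section does not say how a leftover tail is handled; here the last chunk is simply shifted back so that it ends at the final frame, which enlarges its overlap with the previous chunk.

```python
def chunk_starts(num_frames: int, chunk_len: int = 24, overlap: int = 8) -> list:
    """0-based start indices of overlapping chunks that cover num_frames frames.

    Consecutive chunks share `overlap` frames, so the regular stride is
    chunk_len - overlap (24 - 8 = 16 with the defaults).
    """
    if not 0 <= overlap < chunk_len:
        raise ValueError("overlap must be in [0, chunk_len)")
    if num_frames <= chunk_len:
        return [0]  # a single (possibly shorter) chunk covers everything
    stride = chunk_len - overlap
    starts = list(range(0, num_frames - chunk_len + 1, stride))
    if starts[-1] + chunk_len < num_frames:
        # Assumption: cover the leftover tail with one extra chunk aligned to
        # the last frame; its overlap with the previous chunk grows accordingly.
        starts.append(num_frames - chunk_len)
    return starts


print(chunk_starts(80))  # [0, 16, 32, 48, 56]
```

For an 80-frame video this gives five chunks; every frame is covered and neighbouring chunks always share at least 8 frames, which is what the blending step needs to work with.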

Experiments show that StreamingT2V successfully generates long and temporally consistent videos from text without video stagnation. To summarize, our contributions are three-fold:

• We introduce StreamingT2V, an autoregressive approach for seamless synthesis of extended video content using short- and long-term dependencies.

• Our Conditional Attention Module (CAM) and Appearance Preservation Module (APM) ensure the natural continuity of the global scene and object characteristics of generated videos.

• We seamlessly enhance generated long videos by introducing our randomized blending approach for consecutive overlapping chunks.

2. Related Work

Text-Guided Video Diffusion Models. Generating videos from textual instructions using Diffusion Models [15, 33] is a recently established yet very active field of research introduced by Video Diffusion Models (VDM) [17]. The approach requires massive training resources, can generate only low-resolution videos (up to 128x128), and is severely limited to at most 16 frames (without autoregression). Also, text-to-video models are usually trained on large datasets such as WebVid-10M [3] or InternVid [41]. Several methods employ video enhancement in the form of spatial/temporal upsampling [5, 16, 17, 32], using cascades with up to 7 enhancer modules [16]. Such an approach produces high-resolution and long videos. Yet, the generated content is still limited by the key frames.

Towards generating longer videos (i.e. more keyframes), Text-To-Video-Zero (T2V0) [18] and ART-V [42] employ a text-to-image diffusion model. Therefore, they can generate only simple motions. T2V0 conditions on its first frame via cross-frame attention and ART-V on an anchor frame. Due to the lack of global reasoning, this leads to unnatural or repetitive motions. MTVG [23] turns a text-to-video model into an autoregressive method with a training-free approach. It employs strong consistency priors between and among video chunks, which leads to a very low amount of motion and mostly near-static backgrounds. FreeNoise [24] samples a small set of noise vectors and re-uses them for the generation of all frames, while temporal attention is performed on local windows. As the employed temporal attentions are invariant to such frame shuffling, this leads to high similarity between frames, almost always static global motion, and near-constant videos. Gen-L [38] generates overlapping short videos and aggregates them via temporal co-denoising, which can lead to quality degradation with video stagnation.

Image-Guided Video Diffusion Models as Long Video Generators. Several works condition the video generation on a driving image or video [4, 6–8, 10, 12, 21, 27, 40, 43, 44, 48]. They can thus be turned into an autoregressive method by conditioning on the frame(s) of the previous chunk.

VideoDrafter [21] uses a text-to-image model to obtain an anchor frame. A video diffusion model is conditioned on the driving anchor to independently generate multiple videos that share the same high-level context. However, no consistency among the video chunks is enforced, leading to drastic scene cuts. Several works [7, 8, 44] concatenate the (encoded) conditionings with an additional mask (which indicates which frame is provided) to the input of the video diffusion model.
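To make this concatenation-based conditioning concrete, here is a toy Python sketch. It is illustrative only and assumes details the text leaves open: each frame latent is a flat list of `c` channels, the conditioning latent has the same channel count, frames without conditioning are zero-filled, and the mask is a single extra channel. The helper name `concat_conditioning` is hypothetical, and the cited works [7, 8, 44] differ in their exact layouts.

```python
from typing import Dict, List

Latent = List[float]  # one frame's latent, flattened to c channels (toy stand-in)


def concat_conditioning(noisy: List[Latent], provided: Dict[int, Latent]) -> List[Latent]:
    """Channel-wise concatenation [noisy | conditioning | mask] for every frame.

    `provided` maps a 0-based frame index to its encoded conditioning latent.
    Frames without conditioning get zero-filled channels and mask 0.
    """
    c = len(noisy[0])
    out = []
    for f, x in enumerate(noisy):
        if f in provided:
            cond, mask = list(provided[f]), 1.0
            if len(cond) != c:
                raise ValueError("conditioning latent must have c channels")
        else:
            cond, mask = [0.0] * c, 0.0
        out.append(list(x) + cond + [mask])  # 2c + 1 channels per frame
    return out


# Condition a 3-frame chunk on frame 0, e.g. the last frame of the previous chunk.
inp = concat_conditioning([[0.5, 0.5]] * 3, {0: [1.0, 2.0]})
print(inp[0], inp[1])  # [0.5, 0.5, 1.0, 2.0, 1.0] [0.5, 0.5, 0.0, 0.0, 0.0]
```

The zero-filled channels of the unconditioned frames are exactly the kind of input inconsistency that the text later attributes to SparseCtrl's design.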

In addition to concatenating the conditioning to the input of the diffusion model, several works [4, 40, 48] replace the text embeddings in the cross-attentions of the diffusion model by CLIP [25] image embeddings of the conditional frames. However, according to our experiments, their applicability for long video generation is limited. SVD [4] shows severe quality degradation over time (see Fig. 7), and both I2VGen-XL [48] and SVD [4] often generate inconsistencies between chunks, indicating that the conditioning mechanism is still too weak.

Some works [6, 43], such as DynamiCrafter-XL [43], thus add an image cross-attention to each text cross-attention, which leads to better quality, but still to frequent inconsistencies between chunks.

The concurrent work SparseCtrl [12] adds a ControlNet [46]-like branch to the model, which takes the conditional frames and a frame-indicating mask as input. By design, it requires appending additional frames consisting of black pixels to the conditional frames. This inconsistency is difficult for the model to compensate for, leading to frequent and severe scene cuts between frames.

Overall, only a small number of keyframes can currently be generated at once with high quality. While in-between frames can be interpolated, this does not lead to new content. Also, while image-to-video methods can be used autoregressively, their conditioning mechanisms either lead to inconsistencies, or the method suffers from video stagnation. We conclude that existing works are not suitable for high-quality and consistent long video generation without video stagnation.

3. Preliminaries

Diffusion Models. Our text-to-video model, which we term StreamingT2V, is a diffusion model that operates in the latent space of the VQ-GAN [9, 35] autoencoder $D(E(\cdot))$, where $E$ and $D$ are the corresponding encoder and decoder, respectively. Given a video $V \in \mathbb{R}^{F \times H \times W \times 3}$, composed of $F$ frames with spatial resolution $H \times W$, its latent code $x_0 \in \mathbb{R}^{F \times h \times w \times c}$ is obtained through frame-by-frame application of the encoder. More precisely, by identifying each tensor $x \in \mathbb{R}^{F \times \hat{h} \times \hat{w} \times \hat{c}}$ as a sequence $(x^f)_{f=1}^{F}$ with $x^f \in \mathbb{R}^{\hat{h} \times \hat{w} \times \hat{c}}$, we obtain the latent code via $x_0^f := E(V^f)$, for all $f = 1, \dots, F$. The diffusion forward process gradually adds Gaussian noise $\epsilon \sim \mathcal{N}(0, I)$ to the signal $x_0$:

$$q(x_t \mid x_{t-1}) = \mathcal{N}\left(x_t;\ \sqrt{1-\beta_t}\, x_{t-1},\ \beta_t I\right), \quad t = 1, \dots, T \qquad (1)$$

where $q(x_t \mid x_{t-1})$ is the conditional density of $x_t$ given $x_{t-1}$, and $\{\beta_t\}_{t=1}^{T}$ are hyperparameters. A high value for $T$ is chosen such that the forward process completely destroys the initial signal $x_0$, resulting in $x_T \sim \mathcal{N}(0, I)$. The goal of a diffusion model is then to learn a backward process

$$p_\theta(x_{t-1} \mid x_t) = \mathcal{N}\left(x_{t-1};\ \mu_\theta(x_t, t),\ \Sigma_\theta(x_t, t)\right) \qquad (2)$$

for $t = T, \dots, 1$ (see DDPM [15]), which allows generating a valid signal $x_0$ from standard Gaussian noise $x_T$. Once $x_0$ is obtained from $x_T$, we obtain the generated video through frame-wise application of the decoder: $\tilde{V}^f := D(x_0^f)$, for all $f = 1, \dots, F$. Yet, instead of learning a predictor for mean and variance in Eq. (2), we learn a model $\epsilon_\theta(x_t, t)$ to predict the Gaussian noise $\epsilon$ that was used to form $x_t$ from the input signal $x_0$ (which is a common reparametrization [15]).

Figure 2. The overall pipeline of StreamingT2V: In the Initialization Stage, the first 16-frame chunk is synthesized by a text-to-video model (e.g. Modelscope [39]). In the Streaming T2V Stage, the new content for further frames is autoregressively generated. Finally, in the Streaming Refinement Stage, the generated long video (600, 1200 frames or more) is autoregressively enhanced by applying a high-resolution text-to-short-video model (e.g. MS-Vid2Vid-XL [48]) equipped with our randomized blending approach.

To guide the video generation by a textual prompt $\tau$, we use a noise predictor $\epsilon_\theta(x_t, t, \tau)$ that is conditioned on $\tau$. We model $\epsilon_\theta(x_t, t, \tau)$ as a neural network with learnable weights $\theta$ and train it on the denoising task:

$$\min_\theta\ \mathbb{E}_{t,\,(x_0,\tau)\sim p_{\text{data}},\,\epsilon\sim\mathcal{N}(0,I)}\ \left\lVert \epsilon - \epsilon_\theta(x_t, t, \tau) \right\rVert_2^2, \qquad (3)$$

using the data distribution $p_{\text{data}}$. To simplify notation, we will denote by $x_t^{r:s} = (x_t^j)_{j=r}^{s}$ the latent sequence of $x_t$ from frame $r$ to frame $s$, for all $r, t, s \in \mathbb{N}$.
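Eqs. (1) and (3) can be made concrete with a small stdlib-only Python sketch. Iterating Eq. (1) gives the standard DDPM closed form $x_t = \sqrt{\bar\alpha_t}\,x_0 + \sqrt{1-\bar\alpha_t}\,\epsilon$ with $\bar\alpha_t = \prod_{s=1}^{t}(1-\beta_s)$, which is how $x_t$ is formed from $x_0$ and $\epsilon$ when evaluating the loss in Eq. (3). The linear schedule from $10^{-4}$ to $0.02$ over $T = 1000$ steps is an assumption (the common DDPM default), not a value stated here; latents are flattened toy lists, and all function names are hypothetical.

```python
import math
import random
from typing import List, Sequence


def linear_betas(T: int = 1000, beta_start: float = 1e-4, beta_end: float = 0.02) -> List[float]:
    """Assumed linear schedule beta_1..beta_T (DDPM default, not given in the text)."""
    return [beta_start + (beta_end - beta_start) * i / (T - 1) for i in range(T)]


def alpha_bars(betas: Sequence[float]) -> List[float]:
    """alpha_bar_t = prod_{s=1}^{t} (1 - beta_s); entry t-1 holds alpha_bar_t."""
    out, prod = [], 1.0
    for b in betas:
        prod *= 1.0 - b
        out.append(prod)
    return out


def q_sample(x0: Sequence[float], t: int, abar: Sequence[float], eps: Sequence[float]) -> List[float]:
    """Closed form of Eq. (1) applied t times: x_t = sqrt(abar_t) x_0 + sqrt(1 - abar_t) eps."""
    a = abar[t - 1]  # t is 1-indexed, as in the text
    return [math.sqrt(a) * xi + math.sqrt(1.0 - a) * ei for xi, ei in zip(x0, eps)]


def denoising_loss(eps: Sequence[float], eps_pred: Sequence[float]) -> float:
    """Squared L2 error ||eps - eps_theta(x_t, t, tau)||_2^2 from Eq. (3), one sample."""
    return sum((e - p) ** 2 for e, p in zip(eps, eps_pred))


def frames(x: Sequence, r: int, s: int) -> list:
    """x^{r:s}: frames r..s inclusive, using the text's 1-based frame indices."""
    return list(x[r - 1:s])


random.seed(0)
abar = alpha_bars(linear_betas())
x0 = [1.0] * 8                                 # toy flattened latent
eps = [random.gauss(0.0, 1.0) for _ in x0]      # eps ~ N(0, I)
t = random.randint(1, len(abar))               # t ~ Uniform{1, ..., T}
xt = q_sample(x0, t, abar, eps)                # noisy input for eps_theta(x_t, t, tau)
print(abar[-1] < 1e-3)                         # True: x_T is essentially pure noise
print(denoising_loss(eps, [0.0] * len(eps)))   # loss of a predictor that always outputs 0
```

The tiny value of $\bar\alpha_T$ is the numerical counterpart of the statement that a large $T$ "completely destroys" $x_0$.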

Text-To-Video Models. Most text-to-video models [5, 11, 16, 32, 39] extend pre-trained text-to-image models [26, 28] by inserting new layers that operate on the temporal axis. Modelscope (MS) [39] follows this approach by extending the UNet-like [29] architecture of Stable Diffusion [28] with temporal convolutional and attentional layers. It was trained in a large-scale setup to generate videos with 3 FPS@256x256 and 16 frames. The quadratic growth in memory and compute of the temporal attention layers (used in recent text-to-video models), together with very high training costs, limits the ability of current text-to-video models to generate long sequences. In this paper, we demonstrate our StreamingT2V method by taking MS as a basis and turning it into an autoregressive model suitable for long video generation with high motion dynamics and consistency.
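The quadratic growth mentioned above can be quantified with a back-of-the-envelope Python calculation. Assuming plain (non-memory-efficient) temporal self-attention, each head at each spatial position forms an $F \times F$ score matrix, so going from Modelscope's 16 frames to 1200 frames multiplies the size of that matrix by $(1200/16)^2 = 5625$. The function name is hypothetical, and all activations other than the score matrices are ignored.

```python
def temporal_attention_scores(num_frames: int, spatial_positions: int = 1, heads: int = 1) -> int:
    """Number of entries in the F x F temporal attention score matrices.

    Temporal attention attends along the frame axis independently at each
    spatial position, so there is one F x F matrix per position and per head.
    """
    return num_frames * num_frames * spatial_positions * heads


short = temporal_attention_scores(16)
long_ = temporal_attention_scores(1200)
print(short, long_, long_ // short)  # 256 1440000 5625
```

This is one reason the method keeps the base model at short chunks and reaches long durations autoregressively instead of attending over all frames at once.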

4. Method

In this section, we introduce our method for high-resolution text-to-long video generation. We first generate 256 × 256 resolution long videos for 5 seconds (16fps), then enhance them to higher resolution (720 × 720). The overview of the whole pipeline is provided in Fig. 2. The long video generation part consists of (Initialization Stage) synthesizing the first 16-frame chunk with a pre-trained text-to-video model (for example, one may take Modelscope [39]), and (Streaming T2V Stage) autoregressively generating the new content for further frames. For the autoregression (see Fig. 3), we propose our conditional attention module (CAM), which leverages short-term information from the last $F_{\text{cond}} = 8$ frames of the previous chunk to enable seamless transitions between chunks. Furthermore, we leverage our appearance preservation module (APM), which extracts long-term information from a fixed anchor frame, making the autoregression process robust against losing object appearance or scene details in the generation.
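The Initialization and Streaming T2V stages can be summarized as a simple loop: the last $F_{\text{cond}} = 8$ frames act as short-term memory for CAM, and a fixed anchor frame acts as long-term memory for APM. The Python sketch below only tracks frame bookkeeping. `init_model` and `chunk_model` are hypothetical stand-ins for the pre-trained text-to-video model and the CAM/APM-conditioned Video-LDM; taking the first frame as the anchor and producing 8 new frames per step are our assumptions, and no actual denoising happens here.

```python
from typing import Callable, List

Frame = str  # hypothetical stand-in for a frame (or its latent)


def generate_long_video(
    prompt: str,
    init_model: Callable[[str, int], List[Frame]],
    chunk_model: Callable[[str, List[Frame], Frame, int], List[Frame]],
    total_frames: int,
    first_chunk: int = 16,
    f_cond: int = 8,
    new_per_chunk: int = 8,  # assumption: not specified in this section
) -> List[Frame]:
    # Initialization Stage: first 16-frame chunk from a pre-trained text-to-video model.
    video = init_model(prompt, first_chunk)
    # Long-term memory (APM): one fixed anchor frame; taking frame 0 is an assumption.
    anchor = video[0]
    # Streaming T2V Stage: extend autoregressively until the target length is reached.
    while len(video) < total_frames:
        cond = video[-f_cond:]  # short-term memory (CAM): last F_cond frames
        n = min(new_per_chunk, total_frames - len(video))
        new = chunk_model(prompt, cond, anchor, n)
        if not new:
            raise RuntimeError("chunk_model returned no frames")
        video.extend(new)
    return video


# Toy stand-ins that only produce frame labels, so the bookkeeping can be checked.
calls = []


def toy_init(prompt: str, n: int) -> List[Frame]:
    return [f"f{i}" for i in range(n)]


def toy_chunk(prompt: str, cond: List[Frame], anchor: Frame, n: int) -> List[Frame]:
    calls.append((tuple(cond), anchor))
    start = int(cond[-1][1:]) + 1
    return [f"f{start + i}" for i in range(n)]


video = generate_long_video("a cat surfing a wave", toy_init, toy_chunk, total_frames=80)
print(len(video), len(calls))  # 80 8
```

The design point the loop makes visible is that the conditioning window slides with the video while the anchor never changes, so every chunk sees the same long-term reference.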

After a long video (80, 240, 600, 1200 frames or more) has been generated, we apply the Streaming Refinement Stage, which enhances the video by autoregressively applying a high-resolution text-to-short-video model (for example, one may take MS-Vid2Vid-XL [48]) equipped with our randomized blending approach for seamless chunk processing. The latter step requires no additional training, which makes our approach affordable with lower computational costs.
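A simplified, hypothetical version of the randomized blending idea for two overlapping enhanced chunks is sketched below in Python. This section does not spell out the exact formulation, so the sketch assumes only the core idea suggested by the name: within the shared frames, a cut position is drawn at random, frames before the cut come from the previous chunk and frames after it from the current one, so that the seam does not always fall at the same frame. Frames are opaque labels here; in a real pipeline they would be latents, and the function name `blend_overlap` is ours.

```python
import random
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def blend_overlap(prev: Sequence[T], cur: Sequence[T], overlap: int, rng: random.Random) -> List[T]:
    """Merge two chunks whose last/first `overlap` frames show the same time steps.

    A cut k is drawn uniformly from {0, ..., overlap}: the first k shared frames
    are taken from `prev`, the remaining shared frames (and everything after)
    from `cur`. Simplified illustration, not the paper's exact formulation.
    """
    if not 0 <= overlap <= min(len(prev), len(cur)):
        raise ValueError("overlap must fit inside both chunks")
    k = rng.randint(0, overlap)  # randint includes both endpoints
    return list(prev[: len(prev) - overlap + k]) + list(cur[k:])


# Two 24-frame enhanced chunks covering frames 0-23 and 16-39 (8 shared frames).
prev = [("prev", i) for i in range(24)]
cur = [("cur", i) for i in range(16, 40)]
merged = blend_overlap(prev, cur, overlap=8, rng=random.Random(0))
print([i for _, i in merged] == list(range(40)))  # True for every random cut
```

Whatever cut is drawn, the merged sequence covers each time step exactly once; only the source of the shared frames changes from draw to draw.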

4

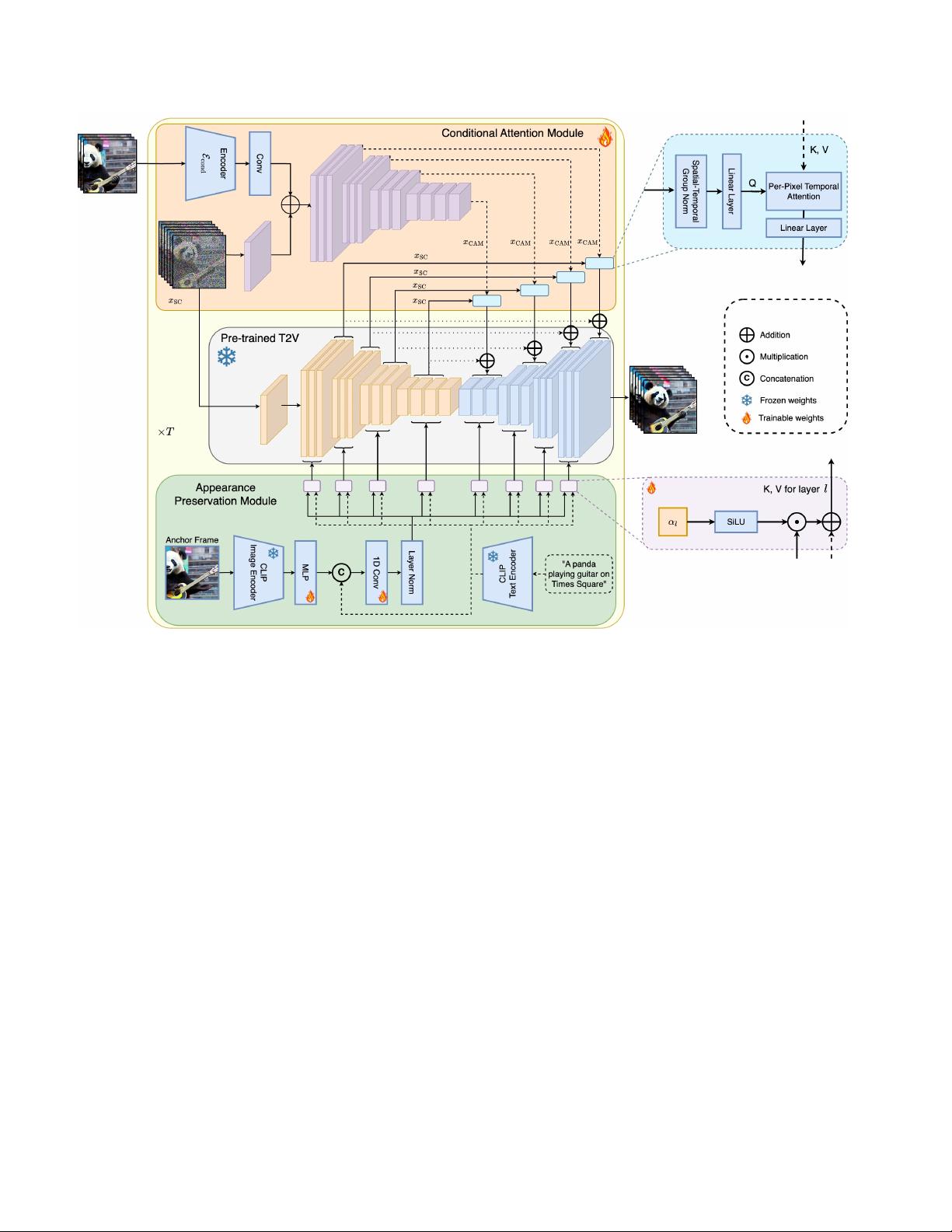

Figure 3. Method overview: StreamingT2V extends a video diffusion model (VDM) by the conditional attention module (CAM) as short-term memory, and the appearance preservation module (APM) as long-term memory. CAM conditions the VDM on the previous chunk using a frame encoder $E_{\text{cond}}$. The attentional mechanism of CAM leads to smooth transitions between chunks and, at the same time, to videos with a high amount of motion. APM extracts high-level image features from an anchor frame and injects them into the text cross-attentions of the VDM. APM helps to preserve object/scene features across the autoregressive video generation.

4.1. Conditional Attention Module

To train a conditional network for our Streaming T2V stage, we leverage the pre-trained power of a text-to-video model (e.g. Modelscope [39]) as a prior for long video generation in an autoregressive manner. In the following, we will refer to this pre-trained text-to-(short)video model as Video-LDM. To autoregressively condition Video-LDM on short-term information from the previous chunk (see Fig. 2, mid), we propose the Conditional Attention Module (CAM), which consists of a feature extractor and a feature injector into the Video-LDM UNet, inspired by ControlNet [46]. The feature extractor utilizes a frame-wise image encoder $E_{\text{cond}}$, followed by the same encoder layers that the Video-LDM UNet uses up to its middle layer (and initialized with the UNet's weights). For the feature injection, we let each long-range skip connection in the UNet attend to the corresponding features generated by CAM via cross-attention.
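The temporal cross-attention used for feature injection can be illustrated with a single-head, stdlib-only Python sketch: for one spatial position, the $F$ skip-connection frames provide the queries and the $F_{\text{cond}}$ CAM frames provide the keys and values, so every output frame is a softmax-weighted mix of projected CAM frames. The helper names are hypothetical; multi-head splitting, normalization, any output projection and the fusion with the skip connection are omitted, and `reshape_to_positions` only illustrates turning a $b \times F \times h \times w \times c$ tensor into one $F \times c$ sequence per spatial position.

```python
import math
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """(n x k) @ (k x m) for nested lists."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def softmax(row: List[float]) -> List[float]:
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def temporal_cross_attention(x_sc: Matrix, x_cam: Matrix, p_q: Matrix, p_k: Matrix, p_v: Matrix) -> Matrix:
    """Single-head attention along time for ONE spatial position.

    x_sc:  F x c       skip-connection features -> queries
    x_cam: F_cond x c  CAM features            -> keys and values
    Returns an F x d matrix (d = number of columns of p_v).
    """
    q, k, v = matmul(x_sc, p_q), matmul(x_cam, p_k), matmul(x_cam, p_v)
    scale = 1.0 / math.sqrt(len(p_k[0]))
    out = []
    for qi in q:
        weights = softmax([scale * sum(a * b for a, b in zip(qi, kj)) for kj in k])
        out.append([sum(w * vj[d] for w, vj in zip(weights, v)) for d in range(len(v[0]))])
    return out


def reshape_to_positions(x: list) -> List[Matrix]:
    """b x F x h x w x c nested lists -> (b*h*w) sequences of shape F x c.

    The position order (b, h, w) is arbitrary: positions are processed independently.
    """
    b, F, h, w = len(x), len(x[0]), len(x[0][0]), len(x[0][0][0])
    return [[list(x[bi][f][i][j]) for f in range(F)] for bi in range(b) for i in range(h) for j in range(w)]


I2 = [[1.0, 0.0], [0.0, 1.0]]  # identity projections, just for the demo
out = temporal_cross_attention([[0.3, -1.0], [2.0, 0.5], [0.0, 0.0]], [[1.0, 2.0], [1.0, 2.0]], I2, I2, I2)
print(out)  # every frame becomes [1.0, 2.0]: both CAM frames are identical
```

Because the queries come from the frames being generated while keys and values come from the previous chunk, each new frame can pick which conditioning frames to borrow content from instead of being tied to their exact layout.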

Let $x$ denote the output of $E_{\text{cond}}$ after zero-convolution. We use addition to fuse $x$ with the output of the first temporal transformer block of CAM. For the injection of CAM's features into the Video-LDM UNet, we consider the UNet's skip-connection features $x_{\text{SC}} \in \mathbb{R}^{b \times F \times h \times w \times c}$ (see Fig. 3) with batch size $b$. We apply spatio-temporal group norm and a linear projection $P_{\text{in}}$ on $x_{\text{SC}}$. Let $x'_{\text{SC}} \in \mathbb{R}^{(b \cdot w \cdot h) \times F \times c}$ be the resulting tensor after reshaping. We condition $x'_{\text{SC}}$ on the corresponding CAM feature $x_{\text{CAM}} \in \mathbb{R}^{(b \cdot w \cdot h) \times F_{\text{cond}} \times c}$ (see Fig. 3), where $F_{\text{cond}}$ is the number of conditioning frames, via temporal multi-head attention (T-MHA) [36], i.e. independently for each spatial position (and batch). Using learnable linear maps $P_Q$, $P_K$, $P_V$ for queries, keys, and values, we apply T-MHA using keys and values from $x_{\text{CAM}}$ and queries from
