没有合适的资源?快使用搜索试试~ 我知道了~

补充材料-隐马尔科夫模型1

需积分: 0 0 下载量 30 浏览量

2022-08-03

14:47:03

上传

评论

收藏 2.62MB PDF 举报

温馨提示

试读

30页

补充材料-隐马尔科夫模型1

资源详情

资源评论

资源推荐

A Tutorial on Hidden Markov Models and

Selected Applications in Speech Recognition

LAWRENCE

R.

RABINER,

FELLOW,

IEEE

Although initially introduced and studied in the late 1960s and

early 1970s, statistical methods of Markov source or hidden Markov

modeling have become increasingly popular in the last several

years. There are two strong reasons why this has occurred. First the

models are very rich in mathematical structure and hence can form

the theoretical basis for use in a wide range of applications. Sec-

ond the models, when applied properly, work very well in practice

for several important applications. In this paper we attempt

to

care-

fully and methodically review the theoretical aspects of this type

of statistical modeling and show how they have been applied to

selected problems in machine recognition of speech.

I.

INTRODUCTION

Real-world processes generally produce observable out-

puts which can be characterized as signals. The signals can

bediscrete in

nature(e.g.,charactersfrom

afinitealphabet,

quantized vectors from a codebook, etc.), or continuous in

nature (e.g., speech samples, temperature measurements,

music, etc.). The signal source can be stationary (i.e., its sta-

tistical properties do not vary with time), or nonstationary

(i.e., the signal properties vary over time). The signals can

be pure (i.e., coming strictly from a single source), or can

be corrupted from other signal sources (e.g., noise) or by

transmission distortions, reverberation, etc.

A

problem of fundamental interest

is

characterizing such

real-world signals in terms of signal models. There are sev-

eral reasons why one

is

interested in applying signal models.

First of

all,

a

signal model can provide the basis for a the-

oretical description of a signal processing system which can

be used to process the signal

so

as to provide a desired out-

put. For example if we are interested in enhancing a speech

signal corrupted by noise and transmission distortion, we

can use the signal model to design a system which will opti-

mally remove the noise and undo the transmission distor-

tion.

A

second reason why signal models are important

is

that they are potentially capable of letting

us

learn a great

deal about the signal source (i.e., the real-world process

which produced the signal) without having to have the

sourceavailable. This property

is

especially important when

the cost of getting signals from the actual source

is

high.

Manuscript received January 15,1988; revised October 4,1988.

The author

is

with AT&T Bell Laboratories, Murray Hill,

NJ

07974-

IEEE

Log

Number 8825949.

2070, USA.

In this case, with a good signal model, we can simulate the

source and learn

as

much

as

possible via simulations.

Finally, the most important reason why signal models are

important

is

that they often workextremelywell in practice,

and enable

us

to realize important practical systems-e.g.,

prediction systems, recognition systems, identification sys-

tems, etc., in

a

very efficient manner.

These are several possible choices for what type of signal

model

is

used for characterizing the properties of a given

signal. Broadly one can dichotomize the types

of

signal

models into the class of deterministic models, and the class

of statistical models. Deterministic models generally exploit

some known specific properties of the signal, e.g., that the

signal

is

a

sine wave, or a sum

of

exponentials, etc. In these

cases, specification of the signal model

is

generally straight-

forward;all that

is

required

istodetermine(estimate)values

of the parameters

of

the signal model (e.g., amplitude, fre-

quency, phase of a sine wave, amplitudes and rates of expo-

nentials, etc.). The second broad class of signal models

is

the set

of

statistical models in which one tries to charac-

terize only the statistical properties of the signal. Examples

of such statistical models include Gaussian processes, Pois-

son processes, Markov processes, and hidden Markov pro-

cesses, among others. The underlying assumption of the

statistical model

is

that the signal can be well characterized

as

a

parametric random process, and that the parameters

of the stochastic process can be determined (estimated) in

a precise, well-defined manner.

For the applications of interest, namely speech process-

ing, both deterministic and stochastic signal models have

had good success. In this paper we will concern ourselves

strictlywith one typeof stochastic signal model, namelythe

hidden Markov model (HMM). (These models are referred

to

as

Markov sources or probabilistic functions of Markov

chains in the communications literature.) We will first

review the theory of Markov chains and then extend the

ideas to the class of hidden Markov models using several

simple examples. We will then focus our attention on the

three fundamental problems' for HMM design, namely: the

'The idea of characterizing the theoretical aspects of hidden

Markov modeling in terms of solving three fundamental problems

is

due to Jack Ferguson of IDA (Institute for Defense Analysis) who

introduced it in lectures and writing.

0018-9219/89/02000257$01.00

0

1989

IEEE

PROCEEDINGS OF THE IEEE,

VOL.

77,

NO.

2,

FEBRUARY

1989

257

evaluation of the probability (or likelihood) of

a

sequence

of observations given a specific HMM; the determination

of

a

best sequence of model states; and the adjustment of

model parameters

so

as to best account for the observed

signal. We will show that once these three fundamental

problems are solved, we can apply HMMs to selected prob-

lems in speech recognition.

Neither the theory of hidden Markov models nor its

applications to speech recognition

is

new. The basic theory

was published in

a

series of classic papers by Baum and his

colleagues

[I]-[5]

in the late

1960s

and early

1970s

and was

implemented for speech processing applications by Baker

161

at CMU, and by Jelinek and his colleagues at IBM

[7-[13]

in the

1970s.

However, widespread understanding and

application of the theory of HMMs to speech processing

has occurred only within the past several years. There are

several reasons why this has been the case. First, the basic

theory of hidden Markov models was published in math-

ematical journals which were not generally read by engi-

neers working on problems in speech processing. The sec-

ond reason was that the original applications of the theory

to speech processing did not provide sufficient tutorial

material for most readers to understand the theory and to

be able to apply it to their own research.

As

a

result, several

tutorial papers were written which provided

a

sufficient

level of detail for

a

number of research labs to begin work

using HMMs in individual speech processing applications

[14]-[19].

This tutorial

is

intended to provide an overview

of the basic theory of HMMs (as originated by Baum and

his colleagues), provide practical details on methods of

implementation of the theory, and describe a couple

of

selected applications of the theory to distinct problems in

speech recognition. The paper combines results from a

number of original sources and hopefully provides

a

single

source for acquiring the background required to pursue

further this fascinating area of research.

The organization of this paper

is

as

follows. In Section I1

we review the theory of discrete Markov chains and show

how the concept of hidden states, where the observation

is

a

probabilistic function of the state, can be used effec-

tively. We illustrate the theory with two simple examples,

namely coin-tossing, and the classic balls-in-urns system.

In Section Ill we discuss the three fundamental problems

of HMMs, and give several practical techniques for solving

these problems. In Section IV we discuss the various types

of HMMs that have been studied including ergodic

as

well

as

left-right models. In this section we also discuss the var-

ious model features including the form of the observation

density function, the state duration density, and the opti-

mization criterion for choosing optimal HMM parameter

values. In Section Vwe discuss the issues that arise in imple-

menting HMMs including the topics of scaling, initial

parameter estimates, model size, model form, missingdata,

and multiple observation sequences. In Section

VI

we

describean isolated word speech recognizer, implemented

with HMM ideas, and show how it performs

as

compared

to alternative implementations. In Section

VI1

we extend

the ideas presented in Section VI to the problem of recog-

nizing a string of spoken words based on concatenating

individual HMMsofeachword in thevocabulary. In Section

Vlll

we briefly outline how the ideas of HMM have been

applied to

a

largevocabulary speech recognizer, and in Sec-

tion

IX

we summarize the ideas discussed throughout the

paper.

11.

DISCRETE

MARKOV

PROCESSES~

Consider a system which may be described at any time

as

being in one of

a

set of

N

distinct states,

S1,

SzI

. . .

,

SN,

as illustrated in Fig.

1

(where

N

=

5

for simplicity). At reg-

Fig.

1.

A

Markov chain with

5

states (labeled

S,

to

S,)

with

selected state transitions.

ularlyspaced discrete times, the system undergoesachange

of state (possibly back to the same state) according to

a

set

of probabilities associated with the state. We denote the

time instants associated with state changes as

t

=

1,

2,

. . .

,

and we denote the actual state at time

t

as

qr.

A

full

probabilistic description of the above system would, in gen-

eral, require specification of the current state (at time

t),

as

well

as

all

the predecessor states. For the special case of a

discrete, first order, Markov chain, this probabilistic

description

is

truncated to just the current and the pre-

decessor state, i.e.,

99,

=

qqt-1

=

SI,

qt-2

=

Skr

. .

.I

=

9s:

=

S&:

=

SJ.

(1

)

Furthermoreweonlyconsider

those processes in which the

right-hand side of

(1)

is

independent of time, thereby lead-

ing to the set of state transition probabilities

a,,

of the form

(2)

with the state transition coefficients having the properties

a,,

=

99,

=

S,(q,-,

=

S,],

1

5

i,j

5

N

a,,

2

0

C

a,,

=

I

N

/=1

(3a)

(3b)

since they obey standard stochastic constraints.

The above stochastic process could be called an observ-

able Markov model since the output of the process

is

the

set of states at each instant of time, where each state cor-

responds to

a

physical (observable) event.

To

set ideas, con-

sider

a

simple 3-state Markov model of the weather. We

assume that once

a

day (e.g., at noon), the weather

is

'A

good overview

of

discrete Markov processes is in [20, ch.

51.

258

PROCEEDINGS

OF

THE

IEEE,

VOL. 77,

NO.

2,

FEBRUARY

1989

observed as being one of the following:

State

1:

rain or (snow)

State 2: cloudy

State

3:

sunny.

We postulate that the weather on day tis characterized by

a single one of the three states above, and that the matrix

A

of state transition probabilities

is

0.4

0.3 0.3

LO.1

0.1

0.81

Given that the weather on day

1

(t

=

1)

is

sunny (state

3),

we can ask the question: What

is

the probability (according

to the model) that the weather for the next 7 days will be

"sun-sun-rain-rain-sun-cloudy-sun

* *

a"?

Stated more for-

mally, we define the observation sequence

0

as

0

=

{S3,

S3,

S3,

S1, S1,

S3,

Sz,

S3} corresponding to

t

=

1,

2,

. .

.

,

8,

and we wish to determine the probability of

0,

given the

model. This probability can be expressed (and evaluated)

as

P(0IModel)

=

RS,,

S3,

S3,

S1, S1,

S3,

Sz,

S31Modell

=

SS31

.

RS3lS3l

SS3lS3l

RSlIS31

=

7r3

=

1

.

(0.8)(0.8)(0.1)(0.4)(0.3)(0.1)(0.2)

=

1.536

X

a33

*

a33

.

a31

*

all

.

a13

.

a32

.

aZ3

where we use the notation

K,

=

491

=

S;],

1

5

i

5

N

(4)

to denote the initial state probabilities.

Another interesting question we can ask (and answer

using the model)

is:

Given that the model

is

in a known state,

what

is

the probabilityit stays in that stateforexactlyddays?

This probability can be evaluated as the probability of the

observation sequence

0

=

{Si,

Si, Si,

.

*

*

,

S.

s

#

S;},

123

d'

dkl

given the model, which

is

P(OIMode1, ql

=

S;)

=

(aJd-'(l

-

a;;)

=

p,(d).

(5)

The quantityp;(d)

is

the (discrete) probability density func-

tion of duration din state

i.

This exponential duration den-

sity

is

characteristic of the state duration in a Markovchain.

Based on pi(d), we can readily calculate the expected num-

ber of observations (duration) in

a

state, conditioned on

starting in that state as

m

-

d;

=

c

dpi(d)

d=l

(6a)

m

(6b)

1

=

c

d(ajJd-'(1

-

a;,)

=

-.

d=l

1

-

ai;

Thus the expected number of consecutive days of sunny

weather, according to the model,

is

140.2)

=

5;

for cloudy

it

is

2.5; for rain it

is

1.67.

A.

Extension to Hidden Markov Models

So far we have considered Markov models in which each

state corresponded to an observable (physical) event. This

model

is

too restrictive to be applicable to many problems

of interest. In this section we extend the concept of Markov

models to include the case where the observation

is

a

prob-

abilistic function of the state-i.e., the resulting model

(which iscalled a hidden Markovmodel) isadoublyembed-

ded stochastic process with an underlying stochastic pro-

cess that

is

not observable (it

is

hidden), but can only be

observed through another set of stochastic processes that

produce the sequence of observations.

To

fix ideas, con-

sider the following model of some simple coin tossing

experiments.

Coin

Toss

Models: Assume the following scenario. You

are in a room with

a

barrier (e.g.,

a

curtain) through which

you cannot see what

is

happening. On the other side

of

the

barrier

is

another person who

is

performing a coin (or mul-

tiplecoin) tossing experiment. Theother person will not tell

you anything about what he

is

doing exactly; he will only

tell you the result of each coin flip. Thus

a

sequence of

hid-

den coin tossing experiments

is

performed, with the obser-

vation sequence consisting of a series of heads and tails;

e.g., a typical observation sequence would be

0

=

O1

O2

O3

. . .

OT

=

x

x333x

33

x

...

x

where

X

stands for heads and

3

stands for tails.

Given the above scenario, the problem of interest

is

how

do we build an HMM to explain (model) the observed

sequence of heads and tails. The first problem one faces

is

deciding what the states in the model correspond to, and

then deciding how many states should be in the model. One

possiblechoicewould

betoassumethatonlyasingle

biased

coin was being tossed. In this case we could model the sit-

uation with a 2-state model where each state corresponds

to a side of the coin (i.e., heads or tails). This model

is

depicted in Fig. 2(a).3 In this case the Markov model

is

observable, and the only issue for complete specification

of the model would be to decide on the best value for the

bias (i.e., the probability of, say, heads). Interestingly, an

equivalent HMM to that of Fig. 2(a) would be

a

degenerate

I-state model, where the state corresponds to the single

biased coin, and the unknown parameter

is

the bias of the

coin.

A second form of HMM for explaining the observed

sequence of coin toss outcome

is

given in Fig. 2(b). In this

case there are 2 states in the model and each state corre-

sponds to a different, biased, coin being tossed. Each state

is

characterized by a probability distribution of heads and

tails, and transitions between states are characterized by a

state transition matrix. The physical mechanism which

accounts for how state transitions are selected could itself

be

a

set of independent coin tosses, or some other prob-

abilistic event.

A

third form of HMM for explaining the observed

sequence of coin toss outcomes

is

given in Fig. 2(c). This

model corresponds to using

3

biased coins, and choosing

from among the three, based on some probabilistic event.

3The model

of

Fig.

2(a)

is

a memoryless process and thus

is

a

degenerate case

of

a Markov model.

RABINER: HIDDEN MARKOV MODELS

259

P(H1

4

-

P(HI

HEADS TAILS

0

33

STATE

t23

P(H1 PI Pp P3

P(T) I-P, i-Pp I-P3

---

0-HHTTHTHHTTH

...

S=l122421122i

...

0

=HHTTHTHHTTH

...

S=21I22212212

...

O=

HHTTHTHHTTH

...

s=3123314

231

3...

Fig.

2.

Three possible Markov models which can account

for the resultsof hidden coin tossing experiments.

(a)

I-coin

model. (b) 2-coins model.

(c)

3-coins model.

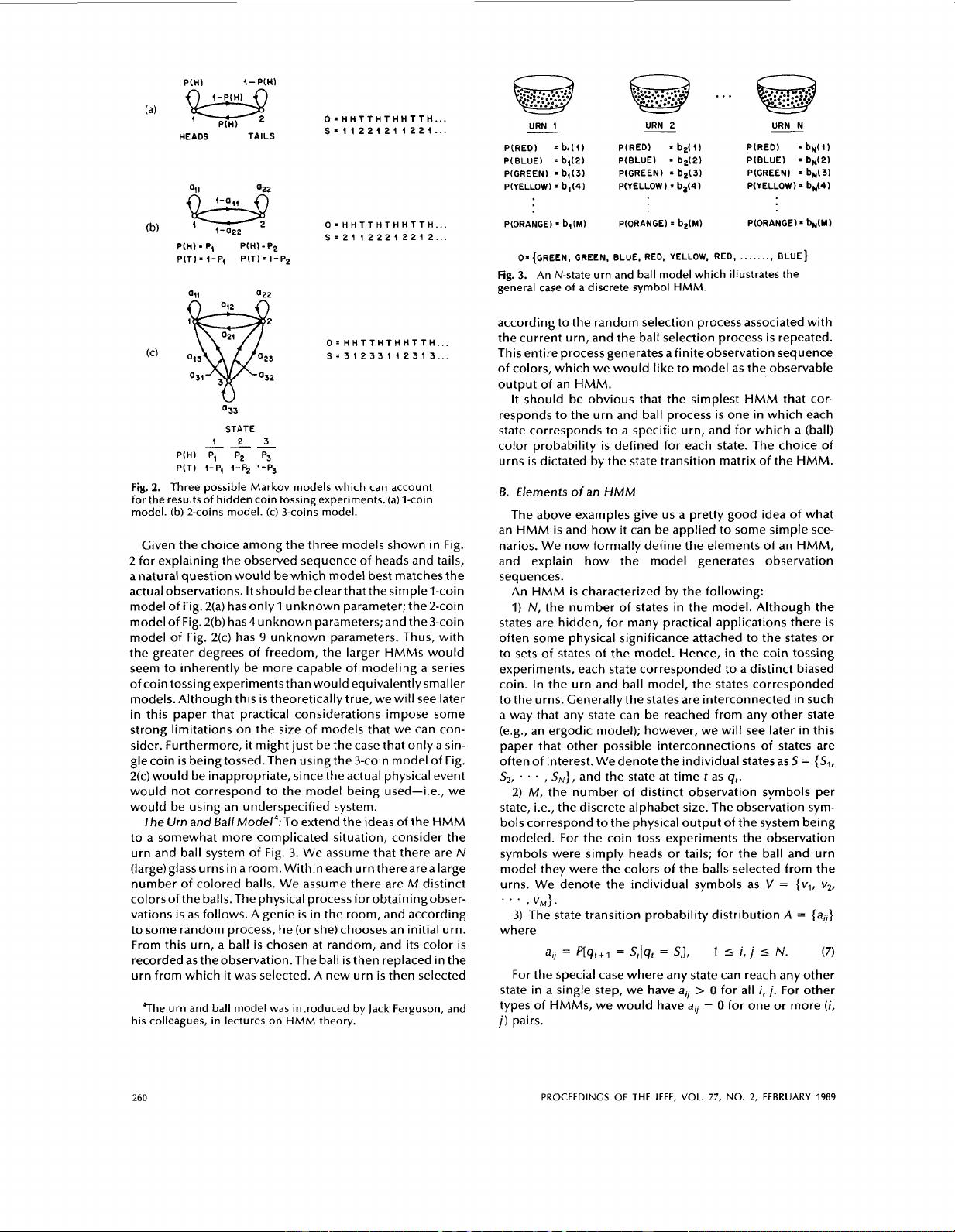

Given the choice among the three models shown in Fig.

2 for explaining the observed sequence of heads and tails,

a natural question would bewhich model best matches the

actual observations. It should beclearthat the simple I-coin

model of Fig. 2(a) has only

1

unknown parameter; the 2-coin

model of Fig. 2(b) has4 un known parameters; and the 3-coin

model of Fig. 2(c) has

9

unknown parameters. Thus, with

the greater degrees of freedom, the larger

HMMs

would

seem to inherently be more capable of modeling

a

series

of coin tossing experiments than would equivalently smaller

models. Although this

is

theoretically true, we will see later

in this paper that practical considerations impose some

strong limitations on the size of models that we can con-

sider. Furthermore, it might just be the case that only

a

sin-

glecoin

is

being tossed. Then using the 3-coin model of Fig.

2(c) would be inappropriate, since the actual physical event

would not correspond to the model being used-i.e., we

would be using an underspecified system.

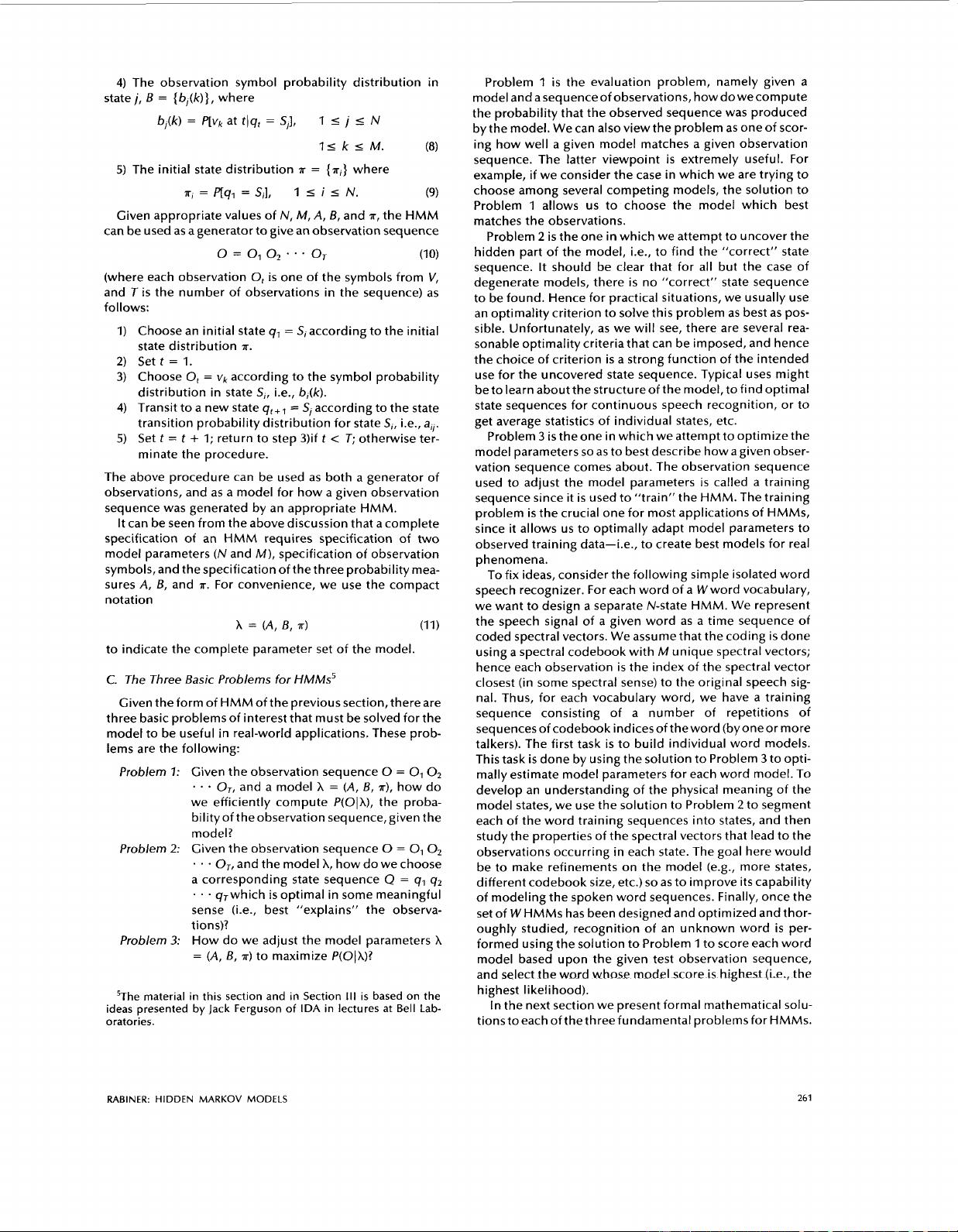

The

Urn

and

BallMode14:To extend the ideas of the HMM

to a somewhat more complicated situation, consider the

urn and ball system of Fig.

3.

We assume that there are

N

(1arge)glassurnsin aroom. Withineach urntherearealarge

number of colored balls. We assume there are

M

distinct

colorsofthe balls. The physical

processforobtainingobser-

vations

is

as follows. A genie

is

in the room, and according

to some random process, he (or she) chooses an initial urn.

From this urn, a ball

is

chosen at random, and

its

color

is

recorded as theobservation.The ball

is

then replaced in the

urn from which it was selected. A new urn

is

then selected

4The urn and ball model

was

introduced by

Jack

Ferguson, and

his colleagues,

in

lectures

on HMM theory.

os

{GREEN,

GREEN,

BLUE,

RED,

YELLOW,

RED,

..

. . .

...

BLUE}

Fig.

3.

An

N-state urn and ball model which illustrates the

general

case

of

a

discrete symbol HMM.

according to the random selection process associated with

the current urn, and the ball selection process

is

repeated.

This entire process generates afinite observation sequence

of colors, which we would like to model as the observable

output of an

HMM.

It should be obvious that the simplest

HMM

that cor-

responds to the urn and ball process

is

one in which each

state corresponds to a specific urn, and for which a (ball)

color probability

is

defined for each state. The choice of

urns

is

dictated by the state transition matrix of the

HMM.

5.

Elements

of

an

HMM

The above examples give us a pretty good idea of what

an

HMM

is

and how

it

can be applied to some simple sce-

narios. We now formally define the elements of an

HMM,

and explain how the model generates observation

sequences.

An

HMM

is

characterized by the following:

1)

N,

the number of states in the model. Although the

states are hidden, for many practical applications there

is

often some physical significance attached to the states or

to sets of states of the model. Hence,

in

the coin tossing

experiments, each state corresponded to a distinct biased

coin. In the urn and ball model, the states corresponded

to the urns. Generally the states are interconnected in such

a way that any state can be reached from any other state

(e.g., an ergodic model); however, we will see later in this

paper that other possible interconnections of states are

often of interest. We denote the individual states as

S

=

{Sl,

S2,

. . .

,

SN},

and the state at time

t

as

g,.

2)

M,

the number of distinct observation symbols per

state, i.e., the discrete alphabet size. The observation sym-

bols

correspond to the physical output

of

the system being

modeled. For the coin toss experiments the observation

symbols were simply heads or tails; for the ball and urn

model they were the colors of the balls selected from the

urns. We denote the individual symbols as

V

=

{vl,

v,,

3)

The state transition probability distribution

A

=

{a,}

(7)

For the special case where any state can reach any other

state in a single step, we have

a,

>

0

for all

i,

j.

For other

types of

HMMs,

we would have

a,]

=

0

for one or more

(i,

j)

pairs.

*.

*

,

VM).

where

a,,

=

p[q,+l

=

S,lq,

=

S,],

1

5

i,

j

I

N.

260

PROCEEDINGS

OF

THE

IEEE,

VOL.

77,

NO.

2,

FEBRUARY

1989

4)

The observation symbol probability distribution in

statej,

B

=

{b,(k)}, where

b,(k)

=

p[vk

at

t)qt

=

S,],

1

I

j

5

N

IikiM.

(8)

5)

The initial state distribution

T

=

{T~}

where

T,

=

p[ql

=

SI],

1

i

i

i

N.

(9)

Given appropriate values of

N,

M,

A,

B,

and

ir,

the HMM

can be used as a generator to give an observation sequence

0=01O~~~~oJ

(10)

(where each observation

0,

is

one of the symbols from

V,

and Tis the number of observations

in

the sequence) as

follows:

1)

Choose an initial state

q,

=

SI

according to the initial

state distribution

T.

2)

Set

t

=

1.

3) Choose

0,

=

vk

according to the symbol probability

distribution in state

SI,

i.e., b,(k).

4)

Transit to a new state

q,,,

=

S,

according to the state

transition probability distribution for state

S,,

i.e.,

a,.

5)

Set

t

=

t

+

1;

return to step 3)if

t

<

T;

otherwise ter-

minate the procedure.

The above procedure can be used as both a generator

of

observations, and as a model for how a given observation

sequence was generated by an appropriate HMM.

It can be seen from the above discussion that a complete

specification of an HMM requires specification of two

model parameters

(N

and

M),

specification of observation

symbols, and the specification of the three probability mea-

sures

A,

B,

and

T.

For convenience, we use the compact

notation

A

=

(A,

6,

T)

(11)

to indicate the complete parameter set of the model.

C.

The Three Basic Problems

for

HMMs5

Given the form of HMM of the previous section, there are

three basic problems of interest that must be solved for the

model to be useful in real-world applications. These prob-

lems are the following:

Problem

7:

Given the observation sequence

0

=

O1

O2

. .

*

Or,

and a model

A

=

(A,

6,

ir),

how do

we efficiently compute P(OIA), the proba-

bilityof theobservation sequence,given the

model?

Problem

2:

Given the observation sequence

0

=

0,

O2

. . .

Or,

and the model

A,

how do we choose

a corresponding state sequence

Q

=

q1

q2

. . .

qJwhich

is

optimal in some meaningful

sense (i.e., best “explains” the observa-

t

ion

s)?

Problem

3:

How do we adjust the model parameters

A

=

(A,

B,

T)

to maximize P(OJA)?

5The material in this section and in Section

Ill

is

based on the

ideas presented by Jack Ferguson

of

IDA

in lectures at

Bell

Lab-

oratories.

Problem

1

is

the evaluation problem, namely given a

model and asequenceof observations, how dowecompute

the probability that the observed sequence was produced

by the model. We can also view the problem as one of scor-

ing how well a given model matches a given observation

sequence. The latter viewpoint

is

extremely useful. For

example, if we consider the case in which we are trying to

choose among several competing models, the solution to

Problem

1

allows

us

to choose the model which best

matches the observations.

Problem

2

is

the one

in

which we attempt to uncover the

hidden part of the model, i.e., to find the “correct” state

sequence. It should be clear that for all but the case of

degenerate models, there

is

no “correct” state sequence

to be found. Hence for practical situations, we usually use

an optimality criterion to solve this problem as best as pos-

sible. Unfortunately, as we will see, there are several rea-

sonable optimality criteria that can be imposed, and hence

the choice of criterion

is

a strong function of the intended

use for the uncovered state sequence. Typical uses might

be to learn about the structure of the model, to find optimal

state sequences for continuous speech recognition, or to

get average statistics of individual states, etc.

Problem

3

is

the one in which we attempt to optimize the

model parameters

so

as to best describe how a given obser-

vation sequence comes about. The observation sequence

used to adjust the model parameters

is

called a training

sequence since

it

is

used to “train” the HMM. The training

problem

is

the crucial one for most applications of HMMs,

since

it

allows us to optimally adapt model parameters to

observed training data-i.e., to create best models for real

phenomena.

To fix ideas, consider the following simple isolated word

speech recognizer. For each word of a Wword vocabulary,

we want to design a separate N-state HMM. We represent

the speech signal of

a

given word as a time sequence of

coded spectral vectors. We assume that the coding

is

done

using

a

spectral codebook with

M

unique spectral vectors;

hence each observation

is

the index of the spectral vector

closest (in some spectral sense) to the original speech sig-

nal. Thus, for each vocabulary word, we have a training

sequence consisting of a number of repetitions of

sequencesofcodebook indicesoftheword (byoneor more

talkers). The first task

is

to build individual word models.

This task

is

done by using the solution to Problem

3

to opti-

mally estimate model parameters for each word model. To

develop an understanding of the physical meaning of the

model states, we use the solution to Problem

2

to segment

each of the word training sequences into states, and then

study the properties of the spectral vectors that lead to the

observations occurring in each state. The goal here would

be to make refinements on the model (e.g., more states,

different codebook size, etc.)

so

as to improve its capability

of modeling the spoken word sequences. Finally, once the

set of

W

HMMs has been designed and optimized and thor-

oughly studied, recognition of an unknown word

is

per-

formed using the solution to Problem

1

to score each word

model based upon the given test observation sequence,

and select the word

whose

modelscore

is

highe5t

[k,?

the

highest

I

i

kel

i

hood).

In the next section we present formal mathematical solu-

tionstoeachofthethreefundamental

problemsfor HMMs.

RABINER:

HIDDEN

MARKOV MODELS

261

剩余29页未读,继续阅读

本本纲目

- 粉丝: 27

- 资源: 293

上传资源 快速赚钱

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

前往需求广场,查看用户热搜

前往需求广场,查看用户热搜最新资源

- 2331308JS课堂案例.zip

- STM32H750VBT6单片机最小系统开发板AD设计硬件(原理图+PCB+3D封装库)工程文件.zip

- 基于74LS161+ 74LS192芯片实现倒计时定时器Multisim仿真源文件,Multisim10以上版本可打开运行

- 科大讯飞语音引擎 jar包 demo,科大讯飞语音合成引擎3.0,支持4.0系统以上,文字转语音输出.zip

- Java架构面试笔试专题资料及经验(含答案)SpringBoot面试Linux面试专题及答案 合集.zip

- 头歌c语言实验答案tion-model-for-ne开发笔记

- docker配置使用-model-for-networK开发demo

- docker配置使用vaWeb-mas笔记

- c语言连接两个字符串-mas开发笔记

- 俄罗斯引擎yandex进入x-master 笔记

资源上传下载、课程学习等过程中有任何疑问或建议,欢迎提出宝贵意见哦~我们会及时处理!

点击此处反馈

安全验证

文档复制为VIP权益,开通VIP直接复制

信息提交成功

信息提交成功

评论0