
Machine Learning Series (4)

Improving Deep Network Performance: Optimization Algorithms

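Training a deep network means finding the parameters (such as W and b) that minimize the cost function. Backpropagation computes the gradients, and an optimization algorithm uses them to update the parameters. How well the cost converges corresponds to the network's accuracy requirement, and how fast it converges corresponds to its speed requirement. A good optimization algorithm is therefore essential and can greatly improve the efficiency of a whole team. This article discusses the optimization algorithms used together with backpropagation.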

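Optimization algorithms:

Gradient descent

Mini-batch gradient descent

Stochastic gradient descent

Gradient descent with momentum

RMSprop

Adam

Learning rate decay

AdamW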

Python implementation:

See the article body.

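Statement

The explanations of the principles and the formula derivations in this article were written by LSayhi for learning and reference, and may be freely shared. The framework of the code implementation was provided by Coursera and completed by LSayhi. The full data and code are available on GitHub.

https://github.com/LSayhi/DeepLearning

WeChat official account: AI有点可ai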

Optimization Algorithms

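1. Batch Gradient Descent

Batch gradient descent uses the gradient descent method from optimization theory to search for the optimal parameters W and b. Every step operates on the entire training set (all m examples) at once. The parameters of every layer l = 1, ..., L are updated with update rules (1) and (2).

Here L is the number of layers and α is the learning rate (learning_rate).

"Batch" refers to vectorizing all m examples, which avoids an explicit for-loop over the examples. The number of arithmetic operations stays the same, but vectorized operations run much faster, so the wall-clock time of gradient descent drops sharply. A process that would otherwise take days may take only a few hours.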
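The sketch below makes the loop-versus-vectorization point concrete. It deliberately uses a linear-regression model with cost J = 1/(2m) · Σ(wᵀx + b − y)² instead of a deep network, and all function names and data are hypothetical. The two gradient functions compute the same values. Batch gradient descent then uses the vectorized one on all m examples at every step.

```python
import numpy as np

# Hypothetical toy problem: noiseless linear targets, one column per example.
rng = np.random.default_rng(0)
m = 200
X = rng.normal(size=(3, m))
true_w = np.array([[2.0], [-1.0], [0.5]])
Y = true_w.T @ X + 0.3                       # shape (1, m)

def grads_loop(w, b, X, Y):
    """Gradient of J = 1/(2m) * sum((w^T x + b - y)^2), one example at a time."""
    m = X.shape[1]
    dw, db = np.zeros_like(w), 0.0
    for i in range(m):
        err = (w.T @ X[:, i:i + 1]).item() + b - Y[0, i]
        dw += err * X[:, i:i + 1]
        db += err
    return dw / m, db / m

def grads_vectorized(w, b, X, Y):
    """The same gradient for all m examples at once, with no Python loop."""
    m = X.shape[1]
    err = w.T @ X + b - Y                    # shape (1, m)
    return (X @ err.T) / m, float(np.sum(err)) / m

def batch_gradient_descent(X, Y, learning_rate=0.1, num_iterations=500):
    w, b = np.zeros((X.shape[0], 1)), 0.0
    for _ in range(num_iterations):
        dw, db = grads_vectorized(w, b, X, Y)  # every step uses the whole training set
        w -= learning_rate * dw
        b -= learning_rate * db
    return w, b

w, b = batch_gradient_descent(X, Y)
print(w.ravel(), b)  # close to [2, -1, 0.5] and 0.3
```

On large m, `grads_vectorized` is typically far faster than `grads_loop` even though both perform the same arithmetic.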

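2. Mini-batch Gradient Descent

Mini-batch gradient descent splits the m training examples into several small subsets, each called a mini-batch, and applies a gradient descent step to each one in turn. Vectorization already makes batch gradient descent much faster, but it is still slow when the training set is very large. Every parameter update must process all training examples before the next iteration can begin. Mini-batch gradient descent instead processes one subset, updates the parameters, then processes the next subset and updates again. One pass over the data (an epoch) therefore yields many updates instead of one, which makes the algorithm faster.

Mini-batch gradient descent is faster than batch gradient descent. Because each step uses only part of the training set, however, the optimization path oscillates more, and the cost fluctuates around the minimum instead of decreasing smoothly.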
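The following is a minimal sketch of the two steps described above: shuffling and partitioning the m examples, then one parameter update per mini-batch. It assumes X has shape (n_x, m) with one example per column and uses a linear model so it stays self-contained. `make_mini_batches` and `mini_batch_gd` are hypothetical names, not the article's code.

```python
import numpy as np

def make_mini_batches(X, Y, mini_batch_size=64, seed=0):
    """Shuffle the m examples (columns) and split them into mini-batches.

    If m is not a multiple of mini_batch_size, the last mini-batch is smaller.
    """
    rng = np.random.default_rng(seed)
    m = X.shape[1]
    perm = rng.permutation(m)                # shuffle X and Y with the same permutation
    X_shuf, Y_shuf = X[:, perm], Y[:, perm]
    return [(X_shuf[:, k:k + mini_batch_size], Y_shuf[:, k:k + mini_batch_size])
            for k in range(0, m, mini_batch_size)]

def mini_batch_gd(X, Y, learning_rate=0.05, mini_batch_size=32, num_epochs=50):
    """Mini-batch gradient descent for a linear model with cost 1/(2n) * sum(err^2)."""
    w, b = np.zeros((X.shape[0], 1)), 0.0
    for epoch in range(num_epochs):
        # a fresh shuffle each epoch, then one parameter update per mini-batch
        for X_mb, Y_mb in make_mini_batches(X, Y, mini_batch_size, seed=epoch):
            n = X_mb.shape[1]
            err = w.T @ X_mb + b - Y_mb
            w -= learning_rate * (X_mb @ err.T) / n
            b -= learning_rate * float(np.sum(err)) / n
    return w, b

# Hypothetical data: noiseless linear targets.
rng = np.random.default_rng(1)
X = rng.normal(size=(3, 1000))
Y = np.array([[2.0, -1.0, 0.5]]) @ X - 0.2
w, b = mini_batch_gd(X, Y)
```

With m = 1000 and a mini-batch size of 32, each epoch performs 32 updates (31 full mini-batches plus one of 8 examples), where batch gradient descent would perform one.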

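3. Stochastic Gradient Descent

Stochastic gradient descent (SGD) can be seen as mini-batch gradient descent with a mini-batch size of 1. Its optimization path oscillates even more than that of mini-batch gradient descent. In exchange, each individual update is cheaper to compute than a mini-batch update. It does give up the speedup of vectorization, so a full pass over the data is not necessarily faster.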

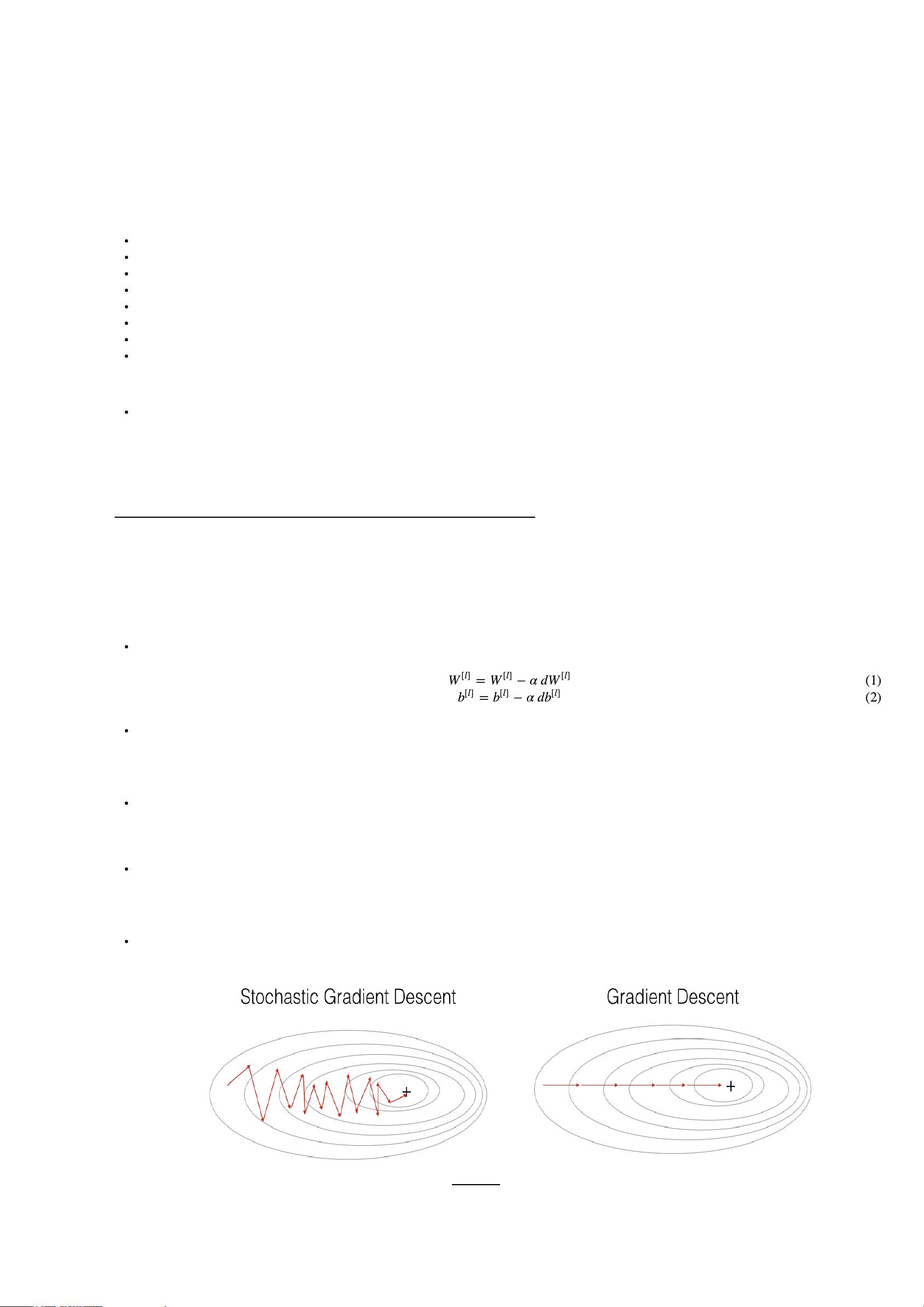

Figure 1 : SGD vs GD

"+" denotes a minimum of the cost. SGD leads to many oscillations to reach convergence. But each step is a lot faster to compute for SGD than for GD, as it uses only one training example (vs. the whole batch for GD).
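Here is a minimal SGD sketch, again on a hypothetical linear model rather than a deep network. Each parameter update uses exactly one training example, visited in a freshly shuffled order every epoch. The name `sgd` and the hyperparameter values are assumptions for illustration.

```python
import numpy as np

def sgd(X, Y, learning_rate=0.01, num_epochs=20, seed=0):
    """Stochastic gradient descent for a linear model: one example per update."""
    rng = np.random.default_rng(seed)
    n_x, m = X.shape
    w, b = np.zeros((n_x, 1)), 0.0
    for _ in range(num_epochs):
        for i in rng.permutation(m):                  # visit the examples in a random order
            x_i = X[:, i:i + 1]
            err = (w.T @ x_i).item() + b - Y[0, i]    # error on this single example
            w -= learning_rate * err * x_i            # gradient of err**2 / 2 with respect to w
            b -= learning_rate * err
    return w, b

# Hypothetical data: noiseless linear targets.
rng = np.random.default_rng(2)
X = rng.normal(size=(3, 500))
Y = np.array([[2.0, -1.0, 0.5]]) @ X + 0.3
w, b = sgd(X, Y)
```

The inner loop runs m times per epoch in Python. This is why SGD steps are individually cheap but lose the benefit of vectorization.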

Gradient descent update rule, for l = 1, ..., L:

$$ W^{[l]} = W^{[l]} - \alpha \, dW^{[l]} \tag{1} $$

$$ b^{[l]} = b^{[l]} - \alpha \, db^{[l]} \tag{2} $$

Figure 2 : SGD vs Mini-Batch GD

"+" denotes a minimum of the cost. Using mini-batches in your optimization algorithm often leads to faster optimization.

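4. Momentum

Gradient descent with momentum can speed up all three variants above. Take the most commonly used one, mini-batch gradient descent. While it minimizes the cost function, the updates keep oscillating along the vertical axis (in the usual two-parameter picture). To converge faster you could raise the learning rate, but that makes the cost oscillate more widely around the minimum. If you lower the learning rate, convergence slows down. How can convergence be accelerated without hurting its precision? Gradient descent with momentum solves exactly this problem with a new way of updating the parameters: it steps along an exponentially weighted average of past gradients. In that average, the alternating vertical components largely cancel out while the consistent horizontal components accumulate. Vertical oscillation shrinks, horizontal speed grows, and convergence is faster.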

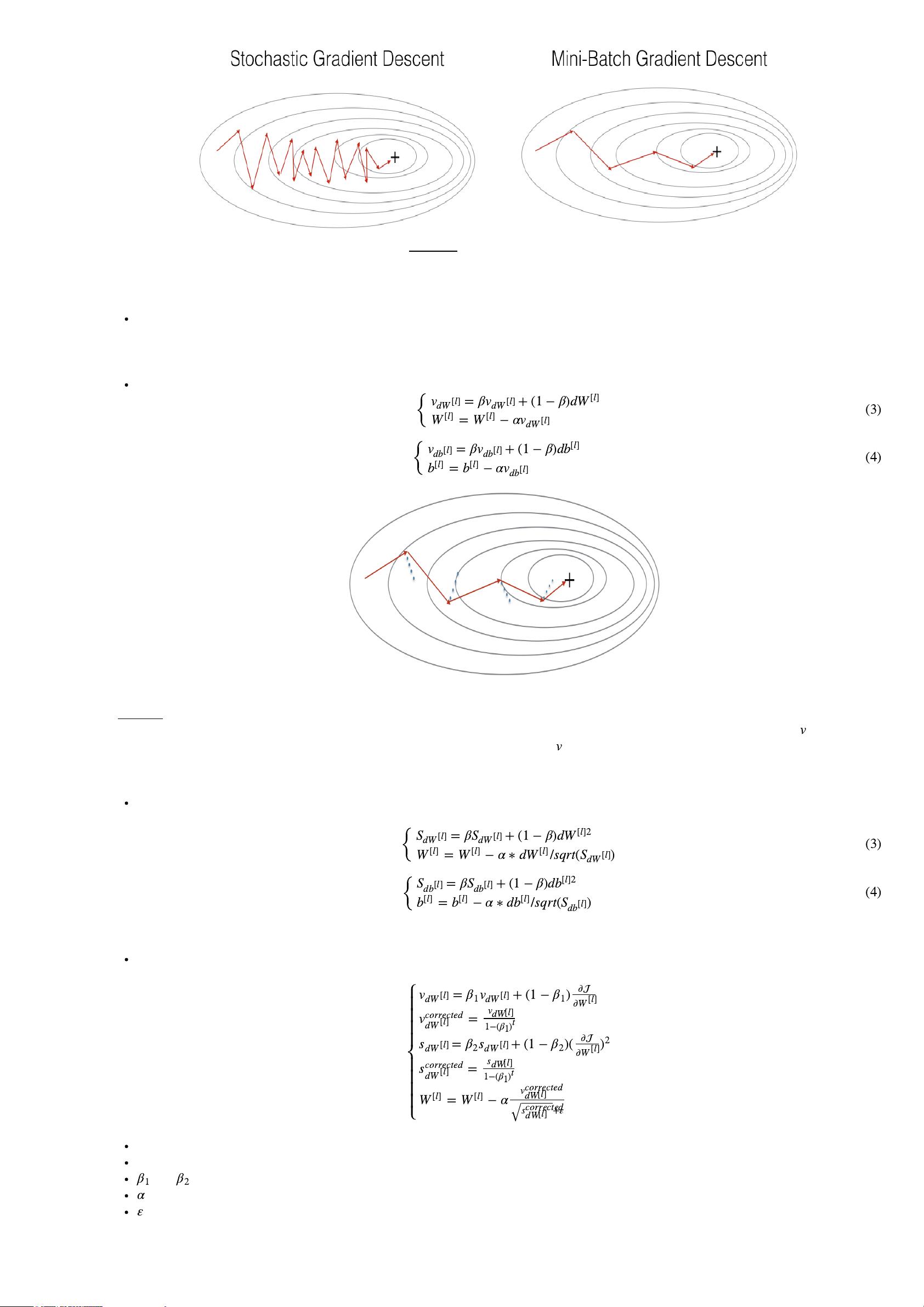

Gradient descent with momentum is implemented with update rules (3) and (4), where β is the momentum and α is the learning rate.

Figure 3: The red arrows show the direction taken by one step of mini-batch gradient descent with momentum. The blue points show the direction of the gradient (with respect to the current mini-batch) on each step. Rather than just following the gradient, we let the gradient influence v and then take a step in the direction of v.
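Below is a minimal NumPy sketch of update rules (3) and (4). It assumes parameters and gradients are stored in dictionaries keyed "W1", "b1", ... and "dW1", "db1", ...; that key convention and the function names are hypothetical. β = 0.9 is a commonly used default.

```python
import numpy as np

def initialize_velocity(parameters):
    """One zero-filled velocity array per parameter, keyed "dW1", "db1", ..."""
    return {"d" + name: np.zeros_like(value) for name, value in parameters.items()}

def update_parameters_with_momentum(parameters, grads, v, beta=0.9, learning_rate=0.01):
    """Apply update rules (3) and (4) to every W and b in `parameters`."""
    for name in parameters:
        g = "d" + name                                   # e.g. "W1" -> "dW1"
        v[g] = beta * v[g] + (1 - beta) * grads[g]       # exponentially weighted average of gradients
        parameters[name] = parameters[name] - learning_rate * v[g]
    return parameters, v

params = {"W1": np.array([[1.0, 2.0]]), "b1": np.array([[0.5]])}
grads = {"dW1": np.array([[1.0, -1.0]]), "db1": np.array([[2.0]])}
v = initialize_velocity(params)
params, v = update_parameters_with_momentum(params, grads, v, beta=0.9, learning_rate=0.1)
```

Because v starts at zero, the first step moves only (1 − β)·α·dW, here 0.01 per weight instead of 0.1. The bias correction in Adam addresses exactly this start-up effect.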

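5. RMSprop

RMSprop can also speed up gradient descent. Momentum keeps an exponentially weighted average of dW and db. RMSprop instead keeps an exponentially weighted average of their element-wise squares, and its parameter update differs as well: each gradient is divided by the square root of that average (see the RMSprop update equations). Directions with consistently large gradients are divided by a large value, and directions with small gradients by a small one. In the two-dimensional example this slows movement along the vertical axis and speeds it up along the horizontal axis. In higher-dimensional spaces RMSprop likewise damps oscillations and speeds up convergence.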
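This minimal sketch of the RMSprop update uses the same hypothetical dictionary-key convention as the momentum sketch. The small `epsilon` in the denominator does not appear in the RMSprop equations of this article. It is an assumption added, as is common in practice, to avoid dividing by zero. β = 0.9 is likewise an illustrative choice. The example feeds one steep and one shallow gradient component to show the damping effect.

```python
import numpy as np

def initialize_rmsprop(parameters):
    """Zero-filled running averages of squared gradients, keyed "dW1", "db1", ..."""
    return {"d" + name: np.zeros_like(value) for name, value in parameters.items()}

def update_parameters_with_rmsprop(parameters, grads, S, beta=0.9, learning_rate=0.01, epsilon=1e-8):
    for name in parameters:
        g = "d" + name
        S[g] = beta * S[g] + (1 - beta) * grads[g] ** 2   # element-wise squares
        # large-gradient directions are divided by a large sqrt(S), small ones by a small sqrt(S)
        parameters[name] = parameters[name] - learning_rate * grads[g] / (np.sqrt(S[g]) + epsilon)
    return parameters, S

params = {"W1": np.array([[0.0, 0.0]])}
grads = {"dW1": np.array([[100.0, 0.01]])}    # steep "vertical" vs. shallow "horizontal" direction
S = initialize_rmsprop(params)
params, S = update_parameters_with_rmsprop(params, grads, S)
print(params["W1"])  # both coordinates move by about the same amount
```

The gradients differ by a factor of 10⁴, yet both coordinates take almost the same step (about α/√(1 − β)). This is the equalizing effect described above.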

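6. Adam

Adam stands for Adaptive Moment Estimation. Many optimization algorithms have been proposed over the history of deep learning, and many of them work only in limited settings. Momentum and RMSprop are two that have stood the test of time, and Adam combines them. It is an extremely widely used algorithm and has been shown empirically to work well across many different neural network architectures.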
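The next block is a minimal sketch of one Adam step, combining the momentum-style and RMSprop-style averages with bias correction as in the Adam update equations of this article. It assumes the same hypothetical dictionary keys as the earlier sketches. The defaults β1 = 0.9, β2 = 0.999, ε = 1e-8 and α = 0.001 are the commonly recommended values, not values taken from the source.

```python
import numpy as np

def initialize_adam(parameters):
    """Zero-filled first (v) and second (s) moment estimates, keyed "dW1", "db1", ..."""
    v = {"d" + name: np.zeros_like(value) for name, value in parameters.items()}
    s = {"d" + name: np.zeros_like(value) for name, value in parameters.items()}
    return v, s

def update_parameters_with_adam(parameters, grads, v, s, t, learning_rate=0.001,
                                beta1=0.9, beta2=0.999, epsilon=1e-8):
    """One Adam step; t is the 1-based step counter used for bias correction."""
    for name in parameters:
        g = "d" + name
        v[g] = beta1 * v[g] + (1 - beta1) * grads[g]          # momentum part
        s[g] = beta2 * s[g] + (1 - beta2) * grads[g] ** 2     # RMSprop part
        v_hat = v[g] / (1 - beta1 ** t)                       # bias-corrected estimates
        s_hat = s[g] / (1 - beta2 ** t)
        parameters[name] = parameters[name] - learning_rate * v_hat / (np.sqrt(s_hat) + epsilon)
    return parameters, v, s

params = {"W1": np.array([[1.0, 1.0]]), "b1": np.array([[0.0]])}
grads = {"dW1": np.array([[3.0, -0.5]]), "db1": np.array([[0.2]])}
v, s = initialize_adam(params)
params, v, s = update_parameters_with_adam(params, grads, v, s, t=1, learning_rate=0.01)
```

On the first step the bias correction makes v_hat equal the gradient and s_hat its square. Each parameter therefore moves by almost exactly α in the direction opposite its gradient's sign, whatever the gradient's size.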

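Update equations for the methods above, applied to every layer l = 1, ..., L (squares and square roots are element-wise):

Momentum:

$$
\begin{cases}
v_{dW^{[l]}} = \beta \, v_{dW^{[l]}} + (1 - \beta) \, dW^{[l]} \\
W^{[l]} = W^{[l]} - \alpha \, v_{dW^{[l]}}
\end{cases}
\tag{3}
$$

$$
\begin{cases}
v_{db^{[l]}} = \beta \, v_{db^{[l]}} + (1 - \beta) \, db^{[l]} \\
b^{[l]} = b^{[l]} - \alpha \, v_{db^{[l]}}
\end{cases}
\tag{4}
$$

RMSprop (in practice a small ε is also added to the denominator to avoid dividing by zero):

$$
\begin{cases}
S_{dW^{[l]}} = \beta \, S_{dW^{[l]}} + (1 - \beta) \, (dW^{[l]})^2 \\
W^{[l]} = W^{[l]} - \alpha \, dW^{[l]} / \sqrt{S_{dW^{[l]}}}
\end{cases}
\tag{5}
$$

$$
\begin{cases}
S_{db^{[l]}} = \beta \, S_{db^{[l]}} + (1 - \beta) \, (db^{[l]})^2 \\
b^{[l]} = b^{[l]} - \alpha \, db^{[l]} / \sqrt{S_{db^{[l]}}}
\end{cases}
\tag{6}
$$

Adam (the updates of b^[l] are analogous, with db^[l] in place of dW^[l]):

$$
\begin{cases}
v_{dW^{[l]}} = \beta_1 v_{dW^{[l]}} + (1 - \beta_1) \dfrac{\partial J}{\partial W^{[l]}} \\
v^{corrected}_{dW^{[l]}} = \dfrac{v_{dW^{[l]}}}{1 - (\beta_1)^t} \\
s_{dW^{[l]}} = \beta_2 s_{dW^{[l]}} + (1 - \beta_2) \left( \dfrac{\partial J}{\partial W^{[l]}} \right)^2 \\
s^{corrected}_{dW^{[l]}} = \dfrac{s_{dW^{[l]}}}{1 - (\beta_2)^t} \\
W^{[l]} = W^{[l]} - \alpha \dfrac{v^{corrected}_{dW^{[l]}}}{\sqrt{s^{corrected}_{dW^{[l]}}} + \varepsilon}
\end{cases}
$$

where:

t counts the number of steps taken by Adam

L is the number of layers

β1 and β2 are hyperparameters that control the two exponentially weighted averages

α is the learning rate

ε is a very small number to avoid dividing by zero

7. Learning Rate Decay

Learning rate decay gradually reduces the learning rate over time. Early in training, a larger learning rate speeds up convergence. As the parameters approach a minimum, a smaller learning rate reduces the oscillation around it and improves the precision of convergence.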
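The article gives no specific decay formula. The sketch below shows two commonly used schedules as an illustration: inverse-time decay α = α0 / (1 + decay_rate · epoch_num) and exponential decay α = α0 · base^epoch_num. Here an epoch is one full pass through the training set. The function names and default values are assumptions.

```python
def inverse_time_decay(alpha0, epoch_num, decay_rate=1.0):
    """alpha = alpha0 / (1 + decay_rate * epoch_num)"""
    return alpha0 / (1 + decay_rate * epoch_num)

def exponential_decay(alpha0, epoch_num, base=0.95):
    """alpha = alpha0 * base ** epoch_num"""
    return alpha0 * base ** epoch_num

schedule = [inverse_time_decay(0.2, epoch) for epoch in range(4)]
print(schedule)  # about [0.2, 0.1, 0.067, 0.05]
```

In a training loop, the schedule is evaluated once per epoch and its value is passed as the learning rate to whichever update rule is in use.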

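Statement

The explanations of the principles and the formula derivations in this article were written by LSayhi for learning and reference, and may be freely shared. The framework of the code implementation was provided by Coursera and completed by LSayhi. The full data and code are available on GitHub. Please do not use them to game Coursera grades.

https://github.com/LSayhi/DeepLearning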

Optimization Methods

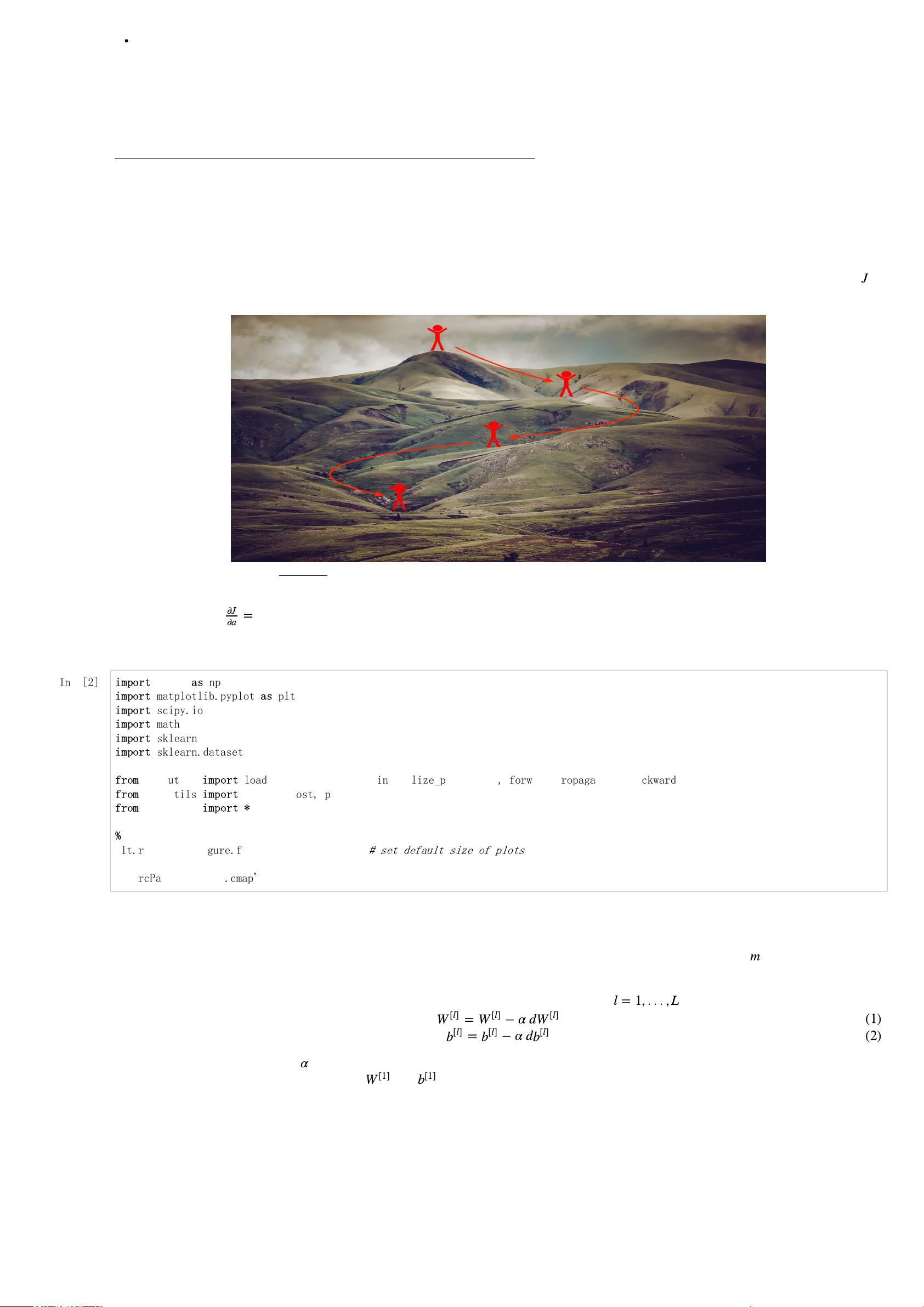

Until now, you've always used Gradient Descent to update the parameters and minimize the cost. In this notebook, you will learn more advanced optimization methods that can speed up learning and perhaps even get you to a better final value for the cost function. Having a good optimization algorithm can be the difference between waiting days vs. just a few hours to get a good result. Gradient descent goes "downhill" on a cost function J.

Think of it as trying to do this:

Figure 1 : Minimizing the cost is like finding the lowest point in a hilly landscape

At each step of the training, you update your parameters following a certain direction to try to get to the lowest possible point.

Notations: As usual, $\frac{\partial J}{\partial a} = da$ for any variable a.

To get started, run the following code to import the libraries you will need.

```python
import numpy as np
import matplotlib.pyplot as plt
import scipy.io
import math
import sklearn
import sklearn.datasets

from opt_utils import load_params_and_grads, initialize_parameters, forward_propagation, backward_propagation
from opt_utils import compute_cost, predict, predict_dec, plot_decision_boundary, load_dataset
from testCases import *

%matplotlib inline
plt.rcParams['figure.figsize'] = (7.0, 4.0) # set default size of plots
plt.rcParams['image.interpolation'] = 'nearest'
plt.rcParams['image.cmap'] = 'gray'
```

1 - Gradient Descent

A simple optimization method in machine learning is gradient descent (GD). When you take gradient steps with respect to all m examples on each step, it is also called Batch Gradient Descent.

Warm-up exercise: Implement the gradient descent update rule. The gradient descent rule is, for l = 1, ..., L:

$$ W^{[l]} = W^{[l]} - \alpha \, dW^{[l]} \tag{1} $$

$$ b^{[l]} = b^{[l]} - \alpha \, db^{[l]} \tag{2} $$

where L is the number of layers and α is the learning rate. All parameters should be stored in the parameters dictionary. Note that the iterator l starts at 0 in the for loop while the first parameters are W^[1] and b^[1]. You need to shift l to l+1 when coding.
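This is a minimal sketch of the warm-up exercise. It assumes the dictionaries use the keys "W1", "b1", ..., "WL", "bL" and "dW1", "db1", ...; the text only says the parameters live in the parameters dictionary, so the key format is an assumption. The function name is hypothetical and this is not the course's reference solution. The `l + 1` shift is the one described in the exercise.

```python
import numpy as np

def update_parameters_with_gd(parameters, grads, learning_rate):
    """One gradient descent step, rules (1) and (2), on every layer."""
    L = len(parameters) // 2                 # each layer has one W and one b
    for l in range(L):
        # range() starts at 0, but layer numbering starts at 1, hence l + 1
        parameters["W" + str(l + 1)] = parameters["W" + str(l + 1)] - learning_rate * grads["dW" + str(l + 1)]
        parameters["b" + str(l + 1)] = parameters["b" + str(l + 1)] - learning_rate * grads["db" + str(l + 1)]
    return parameters

parameters = {"W1": np.ones((2, 3)), "b1": np.zeros((2, 1)),
              "W2": np.ones((1, 2)), "b2": np.zeros((1, 1))}
grads = {"dW1": np.full((2, 3), 0.5), "db1": np.ones((2, 1)),
         "dW2": np.full((1, 2), 2.0), "db2": np.ones((1, 1))}
parameters = update_parameters_with_gd(parameters, grads, learning_rate=0.1)
```

Looping over `range(L)` and indexing with `l + 1` keeps the dictionary keys aligned with the 1-based layer notation W^[1], ..., W^[L].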
