
Joint Entity and Event Extraction with Generative Adversarial Imitation Learning

Tongtao Zhang†, Heng Ji† and Avirup Sil∗

†Computer Science Department, Rensselaer Polytechnic Institute

∗IBM Research AI

Abstract

We propose a new framework for entity and event extraction based on generative adversarial imitation learning, an inverse reinforcement learning method using a generative adversarial network (GAN). We assume that instances and labels vary in difficulty, so the gains and penalties (rewards) are expected to be diverse. We use discriminators to estimate proper rewards according to the difference between the labels committed by the ground truth (expert) and the extractor (agent). Our experiments demonstrate that the proposed framework outperforms state-of-the-art methods.

1 Introduction

Event extraction (EE) is a crucial information extraction (IE) task that focuses on extracting structured information (i.e., a structure of event trigger and arguments: “what is happening” and “who or what is involved”) from unstructured text. For example, in the sentence “Masih’s alleged comments of blasphemy are punishable by death under Pakistan Penal Code” shown in Figure 1, there is a Sentence event (“punishable”) and an Execute event (“death”) involving the person entity “Masih”. Most event extraction research has been in the context of the 2005 NIST Automatic Content Extraction (ACE) sentence-level event mention task (Walker et al., 2006), which also provides the standard corpus. The annotation guideline of the ACE program defines an event as a specific occurrence of something that happens involving participants, often described as a change of state. More recently, the TAC KBP community has introduced document-level event argument extraction shared tasks for 2014 and 2015 (KBP EA).

In the last five years, many event extraction approaches have brought forth encouraging results by retrieving additional related text documents (Song et al., 2015), introducing rich features of multiple categories (Li et al., 2013; Zhang et al., 2017b), incorporating relevant information within or beyond context (Yang and Mitchell, 2016; Judea and Strube, 2016; Yang and Mitchell, 2017; Duan et al., 2017) and adopting neural network frameworks (Chen et al., 2015; Nguyen and Grishman, 2015; Feng et al., 2016; Nguyen et al., 2016; Huang et al., 2016; Nguyen and Grishman, 2018; Sha et al., 2018; Huang et al., 2018; Hong et al., 2018; Zhao et al., 2018; Nguyen and Nguyen, 2018).

However, challenging cases remain. For example, in the sentences “Masih’s alleged comments of blasphemy are punishable by death under Pakistan Penal Code” and “Scott is charged with first-degree homicide for the death of an infant.”, the word death can trigger an Execute event in the former and a Die event in the latter. With similar local information (word embeddings) or contextual features (both sentences include legal events), supervised models pursue the probability distribution that resembles the one in the training set (e.g., there are overwhelmingly more Die annotations on death than Execute annotations), and will label both as a Die event, causing an error in the former instance.

Such mistake is due to the lack of a mecha-

nism that explicitly deals with wrong and confus-

ing labels. Many multi-classification approaches

utilize cross-entropy loss, which aims at boost-

ing the probability of the correct labels and usu-

ally treat wrong labels equally and merely inhibits

them indirectly. Models are trained to capture fea-

tures and weights to pursue correct labels, but will

become vulnerable and unable to avoid mistakes

when facing ambiguous instances, where the prob-

abilities of the confusing and wrong labels are not

sufficiently “suppressed”. Therefore, exploring in-

formation from wrong labels is a key to make the

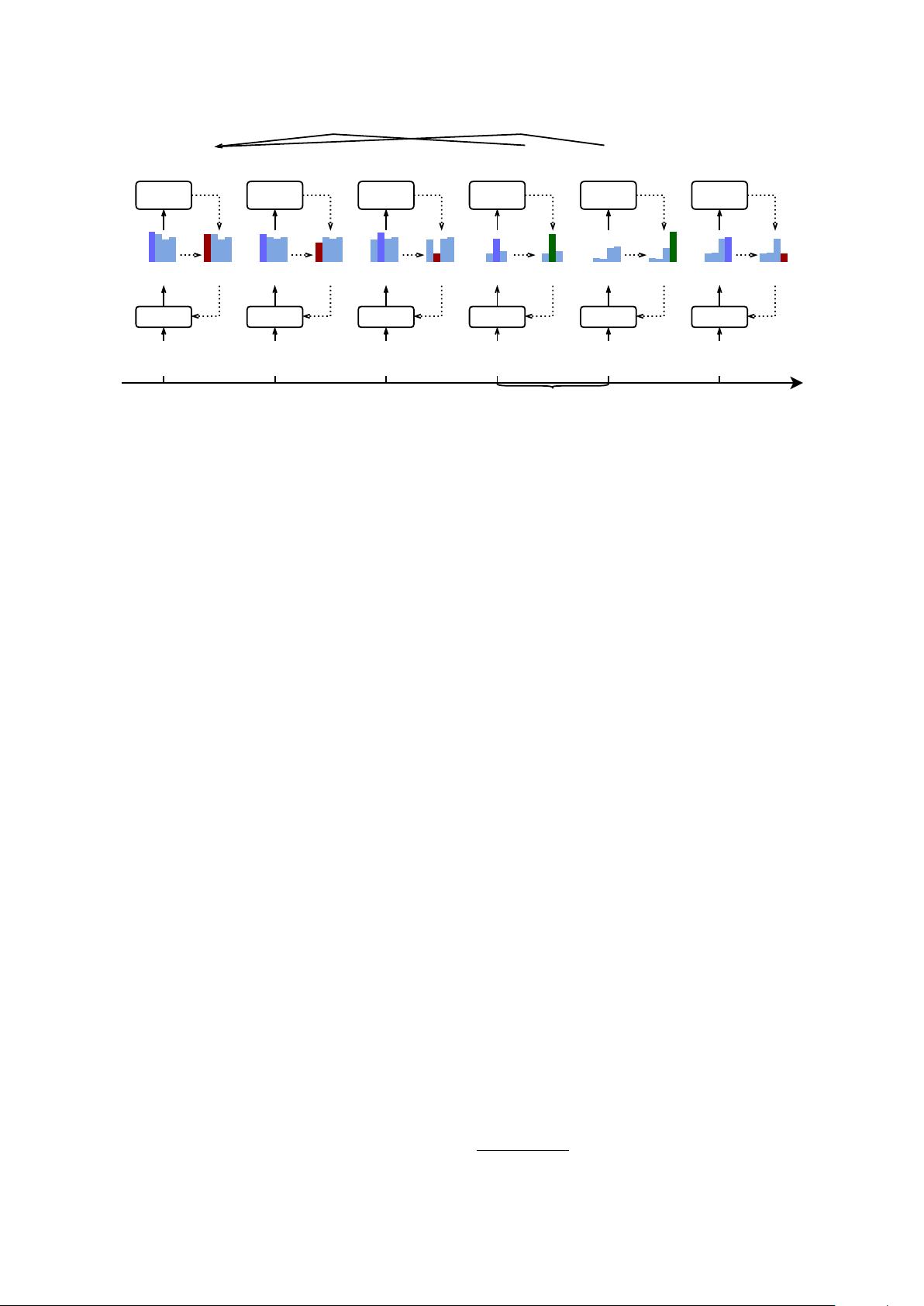

Masih'sallegedcommentsofblasphemyarepunishablebydeathunder...

Epoch 1 Epoch 10 Epoch 20

Epoch 30

Epoch 40

Reward

Estimator

Model

Sentence ExecutePER

Person

-0.9

Execute

O

Die

PER

Execute

O

Die

PER

Defendant

Reward

Estimator

Model

Execute

O

Die

PER

Execute

O

Die

PER

-2.3

Reward

Estimator

Model

Masih's

...death...

-5.7

Reward

Estimator

Model

Execute

O

Die

PER

Execute

O

Die

PER

6.4

Reward

Estimator

Model

...are

punishableby

...

Execute

O

Sentence

Execute

O

Sentence

1.7

Reward

Estimator

Model

Execute

O

Die

PER

Execute

O

Die

PER

-5.5

...bydeath

under...

...bydeath

under...

...bydeath

under...

...bydeath

under...

Place

N/A

Person

Agent

Place

N/A

Person

Agent

Figure 1: Our framework includes a reward estimator based on GAN to issue dynamic rewards with regard to the

labels (actions) committed by event extractor (agent). The reward estimator is trained upon the difference between

the labels from ground truth (expert) and extractor (agent). If the extractor repeatedly misses Execute label

for “death”, the penalty (negative reward values) is strengthened; if the extractor make surprising mistakes: label

“death” as Person or label Person “Masih” as Place role in Sentence event, the penalty is also strong.

For cases where extractor is correct, simpler cases such as Sentence on “death” will take a smaller gain while

difficult cases Execute on “death” will be awarded with larger reward values.

models robust.

In this paper, to combat the problems of previous approaches to this task, we propose a dynamic mechanism, inverse reinforcement learning, to directly assess correct and wrong labels on instances in entity and event extraction. We assign explicit scores to cases, or rewards in terms of Reinforcement Learning (RL). We adopt discriminators from generative adversarial networks (GAN) to estimate the reward values. Discriminators ensure the highest reward for the ground truth (expert), and the extractor attempts to imitate the expert by pursuing the highest rewards. For challenging cases, if the extractor continues selecting wrong labels, the GAN keeps expanding the margins between rewards for ground-truth labels and (wrong) extractor labels and eventually steers the extractor away from wrong labels.

The main contributions of this paper can be summarized as follows:

• We apply a reinforcement learning framework to event extraction tasks, and the proposed framework is an end-to-end and pipelined approach that extracts entities and event triggers and determines the argument roles for detected entities.

• With inverse reinforcement learning propelled by GAN, we demonstrate that a dynamic reward function ensures better performance in a complicated RL task.

2 Task and Term Preliminaries

In this paper we follow the schema of Automatic Content Extraction (ACE) (Walker et al., 2006) to detect the following elements from unstructured natural language data:

• Entity: a word or phrase that describes a real-world object such as a person (“Masih” as PER in Figure 1). The ACE schema defines 7 types of entities.

• Event Trigger: the word that most clearly expresses an event (interaction or change of status). The ACE schema defines 33 types of events such as Sentence (“punishable” in Figure 1) and Execute (“death”).

• Event argument: an entity that serves as a participant or attribute with a specific role in an event mention, e.g., in Figure 1 a PER “Masih” serves as a Defendant in a Sentence event triggered by “punishable”.

The ACE schema also comes with a data set, ACE2005¹, which has been used as a benchmark for information extraction frameworks; we introduce this data set in Section 6.

¹ https://catalog.ldc.upenn.edu/LDC2006T06

For broader readers who might not be familiar with reinforcement learning, we briefly introduce the RL terms through their counterparts or equivalent concepts in supervised models, with the RL terms in parentheses: our goal is to train an extractor (agent A) to label entities, event triggers and argument roles (actions a) in text (environment e); to commit correct labels, the extractor consumes features (state s) and follows the ground truth (expert E); a reward R is issued to the extractor according to whether its label differs from the ground truth and how serious the difference is (as shown in Figure 1, a repeated mistake is definitely more serious); and the extractor improves the extraction model (policy π) by pursuing maximized rewards.

Our framework can be briefly described as follows: given a sentence, our extractor scans the sentence and determines the boundaries and types of entities and event triggers using Q-Learning (Section 3.1); meanwhile, the extractor determines the relations between triggers and entities, i.e., argument roles, with policy gradient (Section 3.2). During the training epochs, GANs estimate rewards which stimulate the extractor to pursue the optimal joint model (Section 4).

3 Framework and Approach

3.1 Q-Learning for Entities and Triggers

Entity and trigger detection is often modeled as a sequence labeling problem, where long-term dependency is a core characteristic, and reinforcement learning is a well-suited method (Maes et al., 2007).

From the RL perspective, our extractor (agent A) explores the environment, i.e., unstructured natural language sentences, as it goes through the sequences and commits labels (actions a) for the tokens. When the extractor arrives at the t-th token in the sentence, it observes information from the environment and its previous action $a_{t-1}$ as its current state $s_t$; when the extractor commits a current action $a_t$ and moves to the next token, it has a new state $s_{t+1}$. The information from the environment is the token’s context embedding $v_t$, which is usually acquired from Bi-LSTM (Hochreiter and Schmidhuber, 1997) outputs; the previous action $a_{t-1}$ may impose some constraint on the current action $a_t$, e.g., I-ORG does not follow B-PER². With the aforementioned notations, we have

$$s_t = \langle v_t, a_{t-1} \rangle. \tag{1}$$

² In this work, we use BIO, e.g., “B-Meet” indicates the token is the beginning of a Meet trigger, “I-ORG” means that the token is inside an organization phrase, and “O” denotes null.

To determine the current action $a_t$, we generate a series of Q-tables with

$$Q_{sl}(s_t, a_t) = f_{sl}(s_t \mid s_{t-1}, s_{t-2}, \ldots, a_{t-1}, a_{t-2}, \ldots), \tag{2}$$

where $f_{sl}(\cdot)$ denotes a function that determines the Q-values using the current state as well as previous states and actions. Then we obtain

$$\hat{a}_t = \arg\max_{a_t} Q_{sl}(s_t, a_t). \tag{3}$$

Equations 2 and 3 suggest that an RNN-based framework which consumes the current input and previous inputs and outputs can be adopted, and we use a unidirectional LSTM as in Bakker (2002). We have a full pipeline as illustrated in Figure 2.
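As a rough illustration of the greedy selection in Equation 3 combined with the BIO constraint mentioned earlier, the sketch below picks the highest-scoring label among those that may legally follow the previous action. The function names, the example Q-values, and the choice to enforce the constraint by masking are all assumptions made for illustration; the paper does not state how the constraint is applied.

```python
def is_valid_transition(prev_label, label):
    """Return True if `label` may follow `prev_label` under the BIO scheme."""
    if label.startswith("I-"):
        # An inside tag must continue a span of the same type.
        span_type = label[2:]
        return prev_label in ("B-" + span_type, "I-" + span_type)
    return True  # "O" and any "B-*" tag may follow any label


def greedy_action(q_values, prev_label):
    """Pick argmax_a Q(s_t, a) over labels allowed after `prev_label`.

    `q_values` is a hypothetical dict mapping each label to its Q-value
    for the current token (one row of a Q-table).
    """
    allowed = {lab: q for lab, q in q_values.items()
               if is_valid_transition(prev_label, lab)}
    return max(allowed, key=allowed.get)


# Made-up Q-values for one token; I-ORG scores highest but cannot follow B-PER.
q_t = {"O": 0.1, "B-Die": 0.7, "I-ORG": 0.9}
print(greedy_action(q_t, prev_label="B-PER"))
```

In this toy case the masked argmax falls back to the best legal label rather than the globally highest Q-value.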

For each label (action $a_t$) with regard to $s_t$, a reward $r_t = r(s_t, a_t)$ is assigned to the extractor (agent). We use Q-learning to pursue the optimal sequence labeling model (policy π) by maximizing the expected value of the sum of future rewards $E(R_t)$, where $R_t$ represents the sum of discounted future rewards $r_t + \gamma r_{t+1} + \gamma^2 r_{t+2} + \ldots$ with a discount factor γ, which determines the influence between current and next states.
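To make the discounted sum $R_t$ concrete, here is a minimal sketch that accumulates rewards from the last token backwards. The function name and the reward values are hypothetical and chosen only for illustration.

```python
def discounted_return(rewards, gamma):
    """Compute r_t + gamma * r_{t+1} + gamma^2 * r_{t+2} + ... for a reward list.

    Iterating from the end lets each step reuse the tail sum:
    R_t = r_t + gamma * R_{t+1}.
    """
    total = 0.0
    for r in reversed(rewards):
        total = r + gamma * total
    return total


# Made-up rewards for three consecutive tokens.
print(discounted_return([1.0, 2.0, 3.0], gamma=0.5))  # 1 + 0.5*2 + 0.25*3 = 2.75
```

With γ = 0 only the immediate reward counts; values closer to 1 give later tokens more influence on the current decision.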

We use the Bellman equation to update the Q-value with regard to the currently assigned label to approximate an optimal model (policy $\pi^*$):

$$Q^{\pi^*}_{sl}(s_t, a_t) = r_t + \gamma \max_{a_{t+1}} Q_{sl}(s_{t+1}, a_{t+1}). \tag{4}$$
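The update target in Equation 4 can be sketched in a few lines. The function name, the dict representation of Q-values, and the handling of the last token (using the reward alone when there is no next state) are assumptions for illustration, not details given in the text.

```python
def bellman_target(reward, next_q_values, gamma):
    """Return r_t + gamma * max_a Q(s_{t+1}, a) as in Equation 4.

    `next_q_values` is a hypothetical dict of label -> Q-value for the
    next token. An empty dict marks the end of the sentence, in which
    case the target is assumed to be the reward alone.
    """
    if not next_q_values:
        return reward
    return reward + gamma * max(next_q_values.values())


# Made-up numbers: a penalty of -2.0 now, best next-step Q-value of 4.0.
print(bellman_target(-2.0, {"O": 1.0, "B-Die": 4.0}, gamma=0.5))  # -2 + 0.5*4 = 0.0
```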

As illustrated in Figure 3, when the extractor assigns a wrong label to the “death” token because the Q-value of Die ranks first, Equation 4 penalizes the Q-value of the wrong label; in later epochs, if the extractor commits the correct label Execute, the Q-value is boosted and the decision is reinforced.

We minimize the loss in terms of mean squared error between the original and updated Q-values, the latter denoted as $Q'_{sl}(s_t, a_t)$:

$$L_{sl} = \frac{1}{n} \sum_{t}^{n} \sum_{a} \left( Q'_{sl}(s_t, a_t) - Q_{sl}(s_t, a_t) \right)^2 \tag{5}$$

and apply back propagation to optimize the parameters in the neural network.
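A plain-Python sketch of the loss in Equation 5 follows, assuming the Q-values for each token are stored as a dict from label to value. The function name and the data layout are hypothetical; an actual implementation would compute this on tensors inside the network's training loop so that gradients can flow.

```python
def q_loss(updated_q, current_q):
    """Squared differences summed over tokens and labels, divided by n tokens.

    `updated_q` and `current_q` are lists (one entry per token) of
    dicts mapping label -> Q-value, standing in for Q' and Q in Equation 5.
    """
    n = len(current_q)
    total = 0.0
    for upd, cur in zip(updated_q, current_q):
        for label in cur:
            total += (upd[label] - cur[label]) ** 2
    return total / n


# Made-up values for a single token with two candidate labels.
updated = [{"O": 1.0, "B-Die": 0.0}]
current = [{"O": 0.0, "B-Die": 2.0}]
print(q_loss(updated, current))  # (1^2 + 2^2) / 1 = 5.0
```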
