没有合适的资源?快使用搜索试试~ 我知道了~

[2016].DenseCap.(李飞飞)1

需积分: 0 2 下载量 48 浏览量

2022-08-03

19:08:23

上传

评论

收藏 7.9MB PDF 举报

温馨提示

试读

10页

[2016].DenseCap.(李飞飞)1

资源详情

资源评论

资源推荐

DenseCap: Fully Convolutional Localization Networks for Dense Captioning

Justin Johnson

∗

Andrej Karpathy

∗

Li Fei-Fei

Department of Computer Science, Stanford University

{jcjohns,karpathy,feifeili}@cs.stanford.edu

Abstract

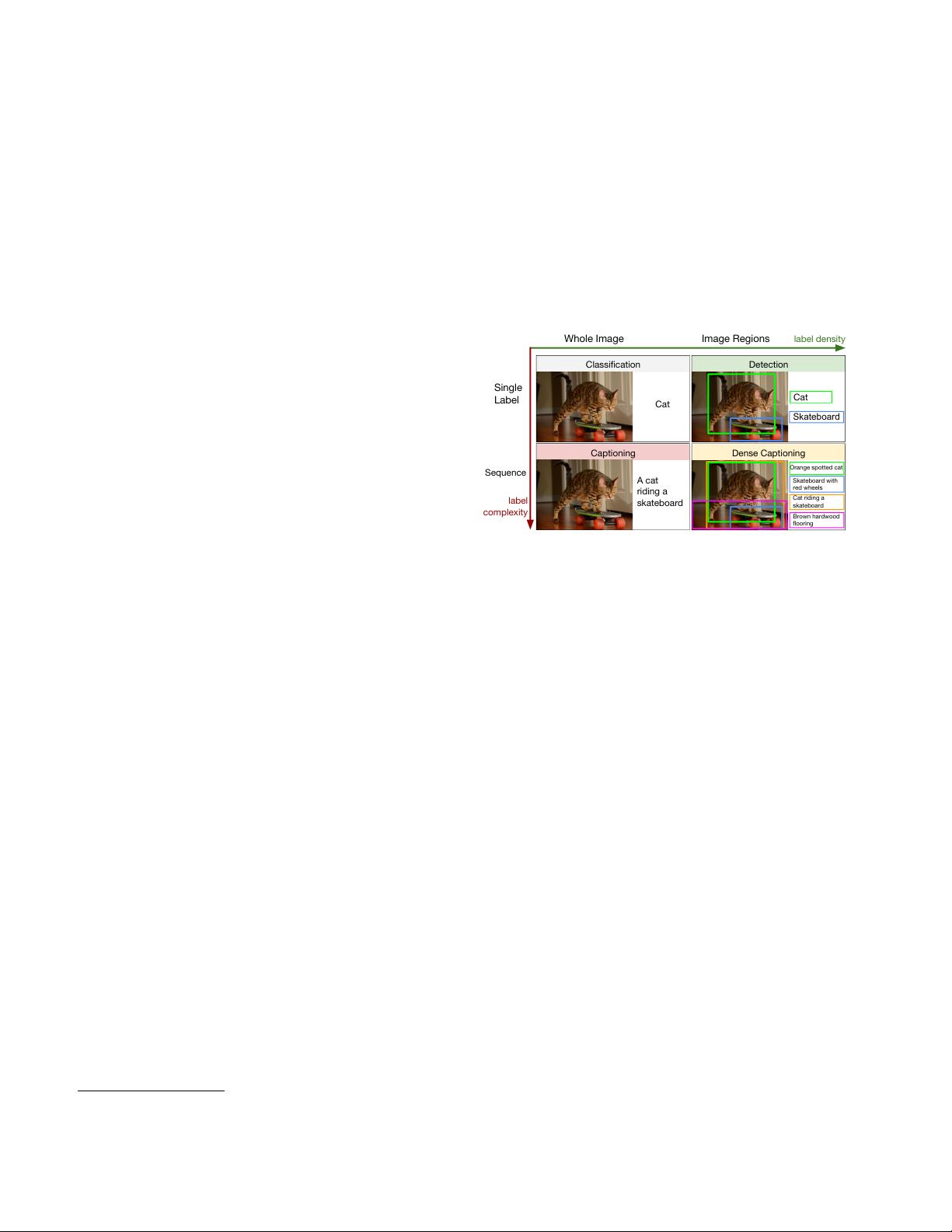

We introduce the dense captioning task, which requires a

computer vision system to both localize and describe salient

regions in images in natural language. The dense caption-

ing task generalizes object detection when the descriptions

consist of a single word, and Image Captioning when one

predicted region covers the full image. To address the local-

ization and description task jointly we propose a Fully Con-

volutional Localization Network (FCLN) architecture that

processes an image with a single, efficient forward pass, re-

quires no external regions proposals, and can be trained

end-to-end with a single round of optimization. The archi-

tecture is composed of a Convolutional Network, a novel

dense localization layer, and Recurrent Neural Network

language model that generates the label sequences. We

evaluate our network on the Visual Genome dataset, which

comprises 94,000 images and 4,100,000 region-grounded

captions. We observe both speed and accuracy improve-

ments over baselines based on current state of the art ap-

proaches in both generation and retrieval settings.

1. Introduction

Our ability to effortlessly point out and describe all aspects

of an image relies on a strong semantic understanding of a

visual scene and all of its elements. However, despite nu-

merous potential applications, this ability remains a chal-

lenge for our state of the art visual recognition systems.

In the last few years there has been significant progress

in image classification [39, 26, 53, 45], where the task is

to assign one label to an image. Further work has pushed

these advances along two orthogonal directions: First, rapid

progress in object detection [40, 14, 46] has identified mod-

els that efficiently identify and label multiple salient regions

of an image. Second, recent advances in image captioning

[3, 32, 21, 49, 51, 8, 4] have expanded the complexity of

the label space from a fixed set of categories to sequence of

words able to express significantly richer concepts.

However, despite encouraging progress along the label

density and label complexity axes, these two directions have

∗

Both authors contributed equally to this work.

Classification

Cat

Captioning

A cat

riding a

skateboard

Detection

Cat

Skateboard

Dense Captioning

Orange spotted cat

Skateboard with

red wheels

Cat riding a

skateboard

Brown hardwood

flooring

label density

Whole Image Image Regions

label

complexity

Single

Label

Sequence

Figure 1. We address the Dense Captioning task (bottom right)

with a model that jointly generates both dense and rich annotations

in a single forward pass.

remained separate. In this work we take a step towards uni-

fying these two inter-connected tasks into one joint frame-

work. First, we introduce the dense captioning task (see

Figure 1), which requires a model to predict a set of descrip-

tions across regions of an image. Object detection is hence

recovered as a special case when the target labels consist

of one word, and image captioning is recovered when all

images consist of one region that spans the full image.

Additionally, we develop a Fully Convolutional Local-

ization Network (FCLN) for the dense captioning task.

Our model is inspired by recent work in image captioning

[49, 21, 32, 8, 4] in that it is composed of a Convolutional

Neural Network and a Recurrent Neural Network language

model. However, drawing on work in object detection [38],

our second core contribution is to introduce a new dense lo-

calization layer. This layer is fully differentiable and can

be inserted into any neural network that processes images

to enable region-level training and predictions. Internally,

the localization layer predicts a set of regions of interest in

the image and then uses bilinear interpolation [19, 16] to

smoothly crop the activations in each region.

We evaluate the model on the large-scale Visual Genome

dataset, which contains 94,000 images and 4,100,000 region

captions. Our results show both performance and speed im-

provements over approaches based on previous state of the

art. We make our code and data publicly available to sup-

port further progress on the dense captioning task.

1

郑瑜伊

- 粉丝: 19

- 资源: 318

上传资源 快速赚钱

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

前往需求广场,查看用户热搜

前往需求广场,查看用户热搜安全验证

文档复制为VIP权益,开通VIP直接复制

信息提交成功

信息提交成功

评论0