
Published as a conference paper at ICLR 2019

DETERMINISTIC VARIATIONAL INFERENCE FOR

ROBUST BAYESIAN NEURAL NETWORKS

Anqi Wu¹∗, Sebastian Nowozin²†, Edward Meeds⁴, Richard E. Turner³,⁴, José Miguel Hernández-Lobato³,⁴ & Alexander L. Gaunt⁴

¹ Princeton Neuroscience Institute, Princeton University
² Google AI Berlin
³ Department of Engineering, University of Cambridge
⁴ Microsoft Research, Cambridge

anqiw@princeton.edu, nowozin@google.com, {ret26,jmh233}@cam.ac.uk, {ted.meeds, algaunt}@microsoft.com

ABSTRACT

Bayesian neural networks (BNNs) hold great promise as a flexible and principled

solution to deal with uncertainty when learning from finite data. Among approaches

to realize probabilistic inference in deep neural networks, variational Bayes (VB)

is theoretically grounded, generally applicable, and computationally efficient. With

wide recognition of potential advantages, why is it that variational Bayes has seen

very limited practical use for BNNs in real applications? We argue that variational

inference in neural networks is fragile: successful implementations require careful

initialization and tuning of prior variances, as well as controlling the variance of

Monte Carlo gradient estimates. We provide two innovations that aim to turn VB

into a robust inference tool for Bayesian neural networks: first, we introduce a novel

deterministic method to approximate moments in neural networks, eliminating

gradient variance; second, we introduce a hierarchical prior for parameters and

a novel Empirical Bayes procedure for automatically selecting prior variances.

Combining these two innovations, the resulting method is highly efficient and

robust. On the application of heteroscedastic regression we demonstrate good

predictive performance over alternative approaches.

1 INTRODUCTION

Bayesian approaches to neural network training marry the representational flexibility of deep neural

networks with principled parameter estimation in probabilistic models. Compared to “standard”

parameter estimation by maximum likelihood, the Bayesian framework promises to bring key

advantages such as better uncertainty estimates on predictions and automatic model regularization

(MacKay, 1992; Graves, 2011). These features are often crucial for informing downstream decision

tasks and reducing overfitting, particularly on small datasets. However, despite potential advantages,

such Bayesian neural networks (BNNs) are often overlooked due to two limitations: First, posterior

inference in deep neural networks is analytically intractable and approximate inference with Monte

Carlo (MC) techniques can suffer from crippling variance given only a reasonable computation

budget (Kingma et al., 2015; Molchanov et al., 2017; Miller et al., 2017; Zhu et al., 2018). Second,

performance of the Bayesian approach is sensitive to the choice of prior (Neal, 1993), and although

we may have a priori knowledge concerning the function represented by a neural network, it is

generally difficult to translate this into a meaningful prior on neural network weights. Sensitivity to

priors and initialization makes BNNs non-robust and thus often irrelevant in practice.

In this paper, we describe a novel approach for inference in feed-forward BNNs that is simple to

implement and aims to solve these two limitations. We adopt the paradigm of variational Bayes (VB)

for BNNs (Hinton & van Camp, 1993; MacKay, 1995c) which is normally deployed using Monte Carlo variational inference (MCVI) (Graves, 2011; Blundell et al., 2015). Within this paradigm we address the two shortcomings of current practice outlined above: First, we address the issue of high variance in MCVI, by reducing this variance to zero through novel deterministic approximations to variational inference in neural networks. Second, we derive a general and robust Empirical Bayes (EB) approach to prior choice using hierarchical priors. By exploiting conjugacy we derive data-adaptive closed-form variance priors for neural network weights, which we experimentally demonstrate to be remarkably effective.

∗ Work done during an internship at Microsoft Research, Cambridge.
† Work done while at Microsoft Research, Cambridge.

Combining these two novel ingredients gives us a performant and robust BNN inference scheme

that we refer to as “deterministic variational inference” (DVI). We demonstrate robustness and

improved predictive performance in the context of non-linear regression models, deriving novel

closed-form results for expected log-likelihoods in homoscedastic and heteroscedastic regression

(similar derivations for classification can be found in the appendix).

Experiments on standard regression datasets from the UCI repository (Dheeru & Karra Taniskidou, 2017) show that for identical models DVI converges to local optima with better predictive log-likelihoods than existing methods based on MCVI. In direct comparisons, we show that our Empirical Bayes formulation automatically provides test performance better than or comparable to manual tuning of the prior, and that heteroscedastic models consistently outperform the homoscedastic models.

Concretely, our contributions are:

• Development of a deterministic procedure for propagating uncertain activations through neural networks with uncertain weights and ReLU or Heaviside activation functions.

• Development of an EB method for principled tuning of weight priors during BNN training.

• Experimental results showing the accuracy and efficiency of our method and applicability to heteroscedastic and homoscedastic regression on real datasets.

2 VARIATIONAL INFERENCE IN BAYESIAN NEURAL NETWORKS

We start by describing the inference task that our method must solve to successfully train a BNN.

Given a model $\mathcal{M}$ parameterized by weights $w$ and a dataset $\mathcal{D} = (x, y)$, the inference task is to discover the posterior distribution $p(w|x, y)$. A variational approach acknowledges that this posterior generally does not have an analytic form, and introduces a variational distribution $q(w; \theta)$ parameterized by $\theta$ to approximate $p(w|x, y)$. The approximation is considered optimal within the variational family for $\theta^*$ that minimizes the Kullback-Leibler (KL) divergence between $q$ and the true posterior.

$$\theta^* = \operatorname*{argmin}_{\theta} D_{\mathrm{KL}}\left[q(w; \theta)\,\|\,p(w|x, y)\right].$$

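Introducing a prior $p(w)$ and applying Bayes rule allows us to rewrite this as optimization of the quantity known as the evidence lower bound (ELBO):

$$\theta^* = \operatorname*{argmax}_{\theta} \left\{ \mathbb{E}_{w \sim q}\left[\log p(y|w, x)\right] - D_{\mathrm{KL}}\left[q(w; \theta)\,\|\,p(w)\right] \right\}. \tag{1}$$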

Analytic results exist for the KL term in the ELBO for careful choice of prior and variational distributions (e.g. Gaussian families). However, when $\mathcal{M}$ is a non-linear neural network, the first term in equation 1 (referred to as the reconstruction term) cannot be computed exactly: this is where MC approximations with finite sample size $S$ are typically employed:

$$\mathbb{E}_{w \sim q}\left[\log p(y|w, x)\right] \approx \frac{1}{S} \sum_{s=1}^{S} \log p(y|w^{(s)}, x), \qquad w^{(s)} \sim q(w; \theta). \tag{2}$$
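To make the two terms of equation 1 concrete, the following is a minimal sketch, not taken from the paper. It assumes a hypothetical one-weight model $y = wx + \text{noise}$, a Gaussian $q(w)$ and a zero-mean Gaussian prior; the function names, the noise variance and the data are all illustrative choices. The KL term is evaluated in closed form, while the reconstruction term is estimated with $S$ samples as in equation 2, so repeated evaluations of the ELBO scatter around their mean.

```python
import math
import random


def kl_gaussian(mu_q, var_q, mu_p, var_p):
    """Closed-form KL[q || p] between two univariate Gaussians."""
    return 0.5 * (math.log(var_p / var_q) + (var_q + (mu_q - mu_p) ** 2) / var_p - 1.0)


def log_likelihood(w, xs, ys, noise_var):
    """log p(y | w, x) for the toy model y = w * x + Gaussian noise (an assumption)."""
    total = 0.0
    for x, y in zip(xs, ys):
        total += -0.5 * math.log(2.0 * math.pi * noise_var)
        total += -0.5 * (y - w * x) ** 2 / noise_var
    return total


def mc_elbo(mu, var, xs, ys, num_samples, rng, noise_var=0.1, prior_var=1.0):
    """Equation 1, with the reconstruction term estimated by sampling as in equation 2."""
    draws = [rng.gauss(mu, math.sqrt(var)) for _ in range(num_samples)]
    reconstruction = sum(log_likelihood(w, xs, ys, noise_var) for w in draws) / num_samples
    return reconstruction - kl_gaussian(mu, var, 0.0, prior_var)


def std_dev(values):
    """Population standard deviation of a list of floats."""
    mean = sum(values) / len(values)
    return math.sqrt(sum((v - mean) ** 2 for v in values) / len(values))


rng = random.Random(0)
xs = [0.1 * i for i in range(10)]
ys = [0.5 * x for x in xs]

# Re-evaluate the same ELBO 200 times to expose the sampling noise.
spread_s1 = std_dev([mc_elbo(0.5, 0.2, xs, ys, 1, rng) for _ in range(200)])
spread_s100 = std_dev([mc_elbo(0.5, 0.2, xs, ys, 100, rng) for _ in range(200)])
print(f"ELBO std with S=1: {spread_s1:.3f}, with S=100: {spread_s100:.3f}")
```

The spread shrinks roughly as $1/\sqrt{S}$, which is the noise that a deterministic evaluation of the reconstruction term would remove entirely.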

Our goal in the next section is to develop an explicit and accurate approximation for this expectation,

which provides a deterministic, closed-form expectation calculation, stabilizing BNN training by

removing all stochasticity due to Monte Carlo sampling.

3 DETERMINISTIC VARIATIONAL APPROXIMATION

Figure 1 shows the architecture of the computation of $\mathbb{E}_{w \sim q}[\log p(\mathcal{D}|w)]$ for a feed-forward neural network. The computation can be divided into two parts: first, propagation of activations through parameterized layers and second, evaluation of an unparameterized log-likelihood function ($\mathcal{L}$). In this section, we describe how each of these stages is handled in our deterministic framework.

Figure 1: Architecture of a Bayesian neural network. Computation is divided into (a) propagation of activations ($a$) from an input $x$ and (b) computation of a log-likelihood function $\mathcal{L}$ for outputs $y$. Weights are represented as high dimensional variational distributions (blue) that induce distributions over activations (yellow). MCVI computes using samples (dots); our method propagates a full distribution.

3.1 MOMENT PROPAGATION

We begin by considering activation propagation (figure 1(a)), with the aim of deriving the form of an approximation $\tilde{q}(a^L)$ to the final layer activation distribution $q(a^L)$ that will be passed to the likelihood computation. We compute $a^L$ by sequentially computing the distributions for the activations in the preceding layers. Concretely, we define the action of the $l$th layer that maps $a^{(l-1)}$ to $a^l$ as follows:

$$h^l = f(a^{(l-1)}), \qquad a^l = h^l W^l + b^l,$$

where $f$ is a non-linearity and $\{W^l, b^l\} \subset w$ are random variables representing the weights and biases of the $l$th layer that are assumed independent from weights in other layers. For notational clarity, in the following we will suppress the explicit layer index $l$, and use primed symbols to denote variables from the $(l-1)$th layer, e.g. $a' = a^{(l-1)}$. Note that we have made the non-conventional choice to draw the boundaries of the layers such that the linear transform is applied after the non-linearity. This is to emphasize that $a^l$ is constructed by linear combination of many distinct elements of $h^l$, and in the limit of vanishing correlation between terms in this combination, we can appeal to the central limit theorem (CLT). Under the CLT, for a large enough hidden dimension and for variational distributions with finite first and second moments, elements $a_i$ will be normally distributed regardless of the potentially complicated distribution for $h_j$ induced by $f$¹. We empirically observe that this claim is approximately valid even when (weak) correlations appear between the elements of $h$ during training (see section 3.1.1).
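The central limit argument can be checked numerically in an idealized setting. The sketch below is not from the paper: it assumes independent inputs $z_j \sim \mathcal{N}(0, 1)$, a ReLU non-linearity and independent weights $W_j \sim \mathcal{N}(0, 1/d)$ (all illustrative choices), draws samples of a single pre-activation $a = \sum_j h_j W_j$, and measures the excess kurtosis, which is zero for an exactly Gaussian variable.

```python
import math
import random


def sample_preactivation(d, rng):
    """One draw of a = sum_j h_j W_j with h_j = ReLU(z_j), z_j ~ N(0, 1), W_j ~ N(0, 1/d)."""
    scale = 1.0 / math.sqrt(d)
    total = 0.0
    for _ in range(d):
        h = max(0.0, rng.gauss(0.0, 1.0))
        total += h * rng.gauss(0.0, scale)
    return total


def excess_kurtosis(samples):
    """Sample excess kurtosis (zero for a Gaussian)."""
    n = len(samples)
    mean = sum(samples) / n
    m2 = sum((s - mean) ** 2 for s in samples) / n
    m4 = sum((s - mean) ** 4 for s in samples) / n
    return m4 / (m2 * m2) - 3.0


rng = random.Random(0)
narrow = excess_kurtosis([sample_preactivation(1, rng) for _ in range(4000)])
wide = excess_kurtosis([sample_preactivation(200, rng) for _ in range(4000)])
print(f"excess kurtosis: d=1 -> {narrow:.2f}, d=200 -> {wide:.2f}")
```

With a single hidden unit the product $h_j W_j$ is strongly non-Gaussian, while for $d = 200$ the excess kurtosis is close to zero. This only probes the independent case; the correlated regime that arises during training is what section 3.1.1 examines empirically.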

Having argued that $a$ adopts a Gaussian form, it remains to compute the first and second moments. In general, these cannot be computed exactly, so we develop an approximate expression. An overview of this derivation is presented here with more details in appendix A. First, we model $W$, $b$ and $h$ as independent random variables, allowing us to write:

$$\langle a_i \rangle = \langle h_j \rangle \langle W_{ji} \rangle + \langle b_i \rangle,$$

$$\mathrm{Cov}(a_i, a_k) = \langle h_j h_l \rangle\, \mathrm{Cov}(W_{ji}, W_{lk}) + \langle W_{ji} \rangle\, \mathrm{Cov}(h_j, h_l)\, \langle W_{lk} \rangle + \mathrm{Cov}(b_i, b_k), \tag{3}$$

where we have employed the Einstein summation convention and used angle brackets to indicate expectation over $q$. If we choose a variational family with analytic forms for weight means and covariances (e.g. Gaussian with variational parameters $\langle W_{ji} \rangle$ and $\mathrm{Cov}(W_{ji}, W_{lk})$), then the only difficult terms are the moments of $h$:

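$$\langle h_j \rangle \propto \int f(\alpha_j) \exp\left[-\frac{(\alpha_j - \langle a'_j \rangle)^2}{2\Sigma'_{jj}}\right] d\alpha_j, \tag{4}$$

$$\langle h_j h_l \rangle \propto \int f(\alpha_j) f(\alpha_l) \exp\left[-\frac{1}{2} \begin{pmatrix} \alpha_j - \langle a'_j \rangle \\ \alpha_l - \langle a'_l \rangle \end{pmatrix}^{\top} \begin{pmatrix} \Sigma'_{jj} & \Sigma'_{jl} \\ \Sigma'_{lj} & \Sigma'_{ll} \end{pmatrix}^{-1} \begin{pmatrix} \alpha_j - \langle a'_j \rangle \\ \alpha_l - \langle a'_l \rangle \end{pmatrix}\right] d\alpha_j\, d\alpha_l, \tag{5}$$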

¹ We are also required to choose a Gaussian variational approximation for $b$ to preserve the Gaussian distribution of $a$.

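| | $A(\mu_1, \mu_2, \rho)$ | $Q(\mu_1, \mu_2, \rho)$ |
|---|---|---|
| Heaviside | $\Phi(\mu_1)\Phi(\mu_2)$ | $-\log\left(\frac{g_h}{2\pi}\right) + \frac{\rho}{2 g_h \bar\rho}\left[\mu_1^2 + \mu_2^2 - \frac{2\rho}{1+\bar\rho}\mu_1\mu_2\right] + O(\mu^4)$ |
| ReLU | $\mathrm{SR}(\mu_1)\mathrm{SR}(\mu_2) + \rho\,\Phi(\mu_1)\Phi(\mu_2)$ | $-\log\left(\frac{g_r}{2\pi}\right) + \left[\frac{\rho}{2 g_r (1+\bar\rho)}\left(\mu_1^2 + \mu_2^2\right) - \frac{\arcsin\rho - \rho}{g_r \rho}\mu_1\mu_2\right] + O(\mu^4)$ |

Table 1: Forms for the components of the approximation in equation 6 for Heaviside and ReLU non-linearities. $\Phi$ is the CDF of a standard Gaussian, SR is a “soft ReLU” that we define as $\mathrm{SR}(x) = \phi(x) + x\Phi(x)$ where $\phi$ is a standard Gaussian, $\bar\rho = \sqrt{1 - \rho^2}$, $g_h = \arcsin\rho$ and $g_r = g_h + \frac{\rho}{1+\bar\rho}$.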

where we have used the Gaussian form of

a

0

parameterized by mean

ha

0

i

and covariance

Σ

0

, and

for brevity we have omitted the normalizing constants. Closed form solutions for the integral in

equation 4 exist for Heaviside or ReLU choices of non-linearity

f

(see appendix A). Furthermore, for

these non-linearities, the

a

0

j

→ ±∞

and

ha

0

l

i → ±∞

asymptotes of the integral in equation 5 have

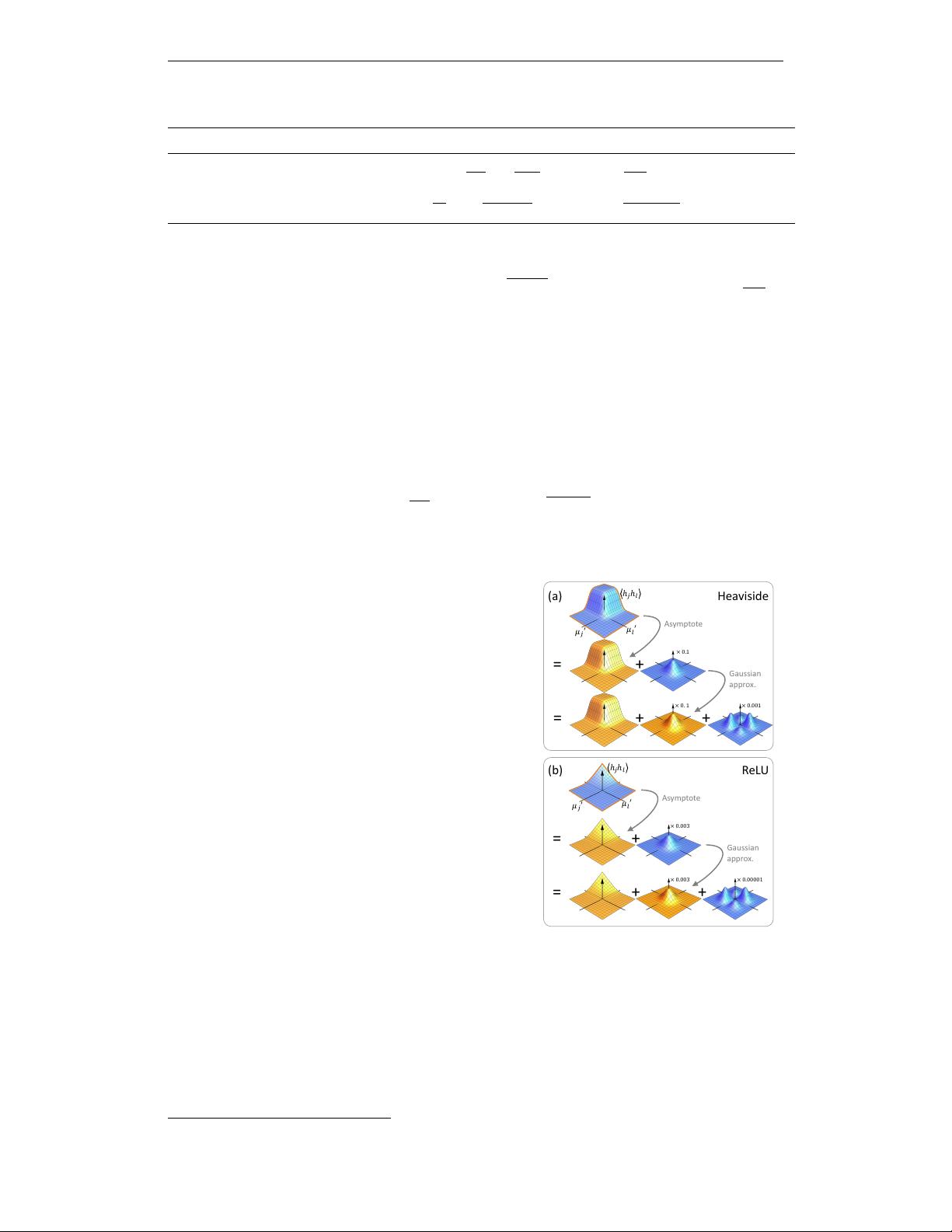

closed form. Figure 2 shows schematically how these asymptotes can be used as a first approximation

for equation 5. This approximation is improved by considering that (by definition) the residual decays

to zero far from the origin in the

(

a

0

j

, ha

0

l

i)

plane, and so is well modelled by a decaying function

exp[−Q(

a

0

j

, ha

0

l

i, Σ

0

)]

, where

Q

is a polynomial in

ha

0

i

with a dominant positive even term. In

practice we truncate

Q

at the quadratic term, and calculate the polynomial coefficients by matching

the moments of the resulting Gaussian with the analytic moments of the residual. Specifically, using

dimensionless variables

µ

0

i

= ha

0

i

i/

p

Σ

0

ii

and

ρ

0

ij

= Σ

0

ij

/

q

Σ

0

ii

Σ

0

jj

, this improved approximation

takes the form

hh

j

h

l

i = S

0

jl

A(µ

0

j

, µ

0

l

, ρ

0

jl

) + exp

−Q(µ

0

j

, µ

0

l

, ρ

0

jl

)

, (6)

= +

× 0.1

= + +

× 0.001× 0. 1

Asymptote

Gaussian

approx.

Heaviside(a)

𝜇

𝑗

′

𝜇

𝑙

′

ℎ

𝑗

ℎ

𝑙

= +

= + +

× 0.003

× 0.003 × 0.00001

Asymptote

Gaussian

approx.

ℎ

𝑗

ℎ

𝑙

ReLU(b)

𝜇

𝑗

′

𝜇

𝑙

′

Figure 2: Approximation of

hh

j

h

l

i

using

an asymptote and Gaussian correction for

(a) Heaviside and (b) ReLU non-linearities.

Yellow functions have closed-forms, and blue

indicates residuals. The examples are plotted

for

−6 < µ

0

< 6

and

ρ

0

jl

= 0.5

, and the

relative magnitude of each correction term is

indicated on the vertical axis.

where the expressions for the dimensionless asymptote

A

and quadratic

Q

are given in table table 1 and derived in

appendix A.2.1 and A.2.2. The dimensionful scale fac-

tor

S

0

jl

is 1 for a Heaviside non-linearity or

Σ

0

jl

/ρ

0

jl

for

ReLU. Using equation 6 in equation 3 gives a closed form

approximation for the moments of

a

as a function of mo-

ments of

a

0

. Since

a

is approximately normally distributed

by the CLT, this is sufficient information to sequentially

propagate moments all the way through the network to

compute the mean and covariances of ˜q(a

L

), our explicit

multivariate Gaussian approximation to

q(a

L

)

. Any deep

learning framework supporting special functions

arcsin

and

Φ

will immediately support backpropagation through

the deterministic expressions we have presented. Below

we briefly empirically verify the presented approximation,

and in section 3.2 we will show how it is used to compute

an approximate log-likelihood and posterior predictive

distribution for regression and classification tasks.
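The structure of equation 6 can be illustrated with a small sketch built from the entries of Table 1. It is not the authors' implementation: the function names are hypothetical, only the Heaviside correction is implemented, and the correction is written as $\frac{g_h}{2\pi}\exp(-\text{quadratic})$, which equals $\exp(-Q)$ for $\rho > 0$ and is assumed here to extend to $\rho < 0$. The code requires $|\rho| < 1$.

```python
import math


def phi(x):
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def Phi(x):
    """Standard normal CDF."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def soft_relu(x):
    """SR(x) = phi(x) + x * Phi(x), as defined in the caption of Table 1."""
    return phi(x) + x * Phi(x)


def asymptote(mu1, mu2, rho, kind):
    """Dimensionless asymptote A(mu1, mu2, rho) from Table 1."""
    if kind == "heaviside":
        return Phi(mu1) * Phi(mu2)
    if kind == "relu":
        return soft_relu(mu1) * soft_relu(mu2) + rho * Phi(mu1) * Phi(mu2)
    raise ValueError(f"unknown non-linearity: {kind}")


def heaviside_correction(mu1, mu2, rho):
    """exp(-Q) for the Heaviside case, with Q truncated at the quadratic term."""
    if rho == 0.0:
        return 0.0  # independent units: the asymptote is already exact
    rho_bar = math.sqrt(1.0 - rho * rho)
    g_h = math.asin(rho)
    quadratic = rho / (2.0 * g_h * rho_bar) * (
        mu1 * mu1 + mu2 * mu2 - 2.0 * rho / (1.0 + rho_bar) * mu1 * mu2
    )
    return g_h / (2.0 * math.pi) * math.exp(-quadratic)


def heaviside_second_moment(mu1, mu2, rho):
    """Equation 6 with S' = 1: approximate <h_j h_l> for Heaviside units."""
    return asymptote(mu1, mu2, rho, "heaviside") + heaviside_correction(mu1, mu2, rho)


print(heaviside_second_moment(0.0, 0.0, 0.5))
print(asymptote(8.0, 8.0, 0.5, "relu"))
```

Two limits serve as sanity checks. At zero mean the Heaviside result reduces to the Gaussian orthant probability $\frac{1}{4} + \frac{\arcsin\rho}{2\pi}$, and for large positive means the ReLU asymptote tends to $\mu_1\mu_2 + \rho$, the second moment of the untruncated Gaussian in dimensionless units.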

3.1.1 EMPIRICAL VERIFICATION

Approximation accuracy

The approximation derived

above relies on three assumptions. First, that some form of

CLT holds for the hidden units during training where the

iid assumption of the classic CLT is not strictly enforced;

second, that a quadratic truncation of

Q

is sufficient

2

; and

third that there are only weak correlation between layers

so that they can be represented using independent vari-

ables in the variational distribution. To provide evidence

that these assumptions hold in practice, we train a small

ReLU network with two hidden layers each of 128 units

2

Additional Taylor expansion terms can be computed if this assumption fails.

4

Published as a conference paper at ICLR 2019

0

Data samples

Data 1-std

Model 1-std

MC

ours

Before Training

After Training

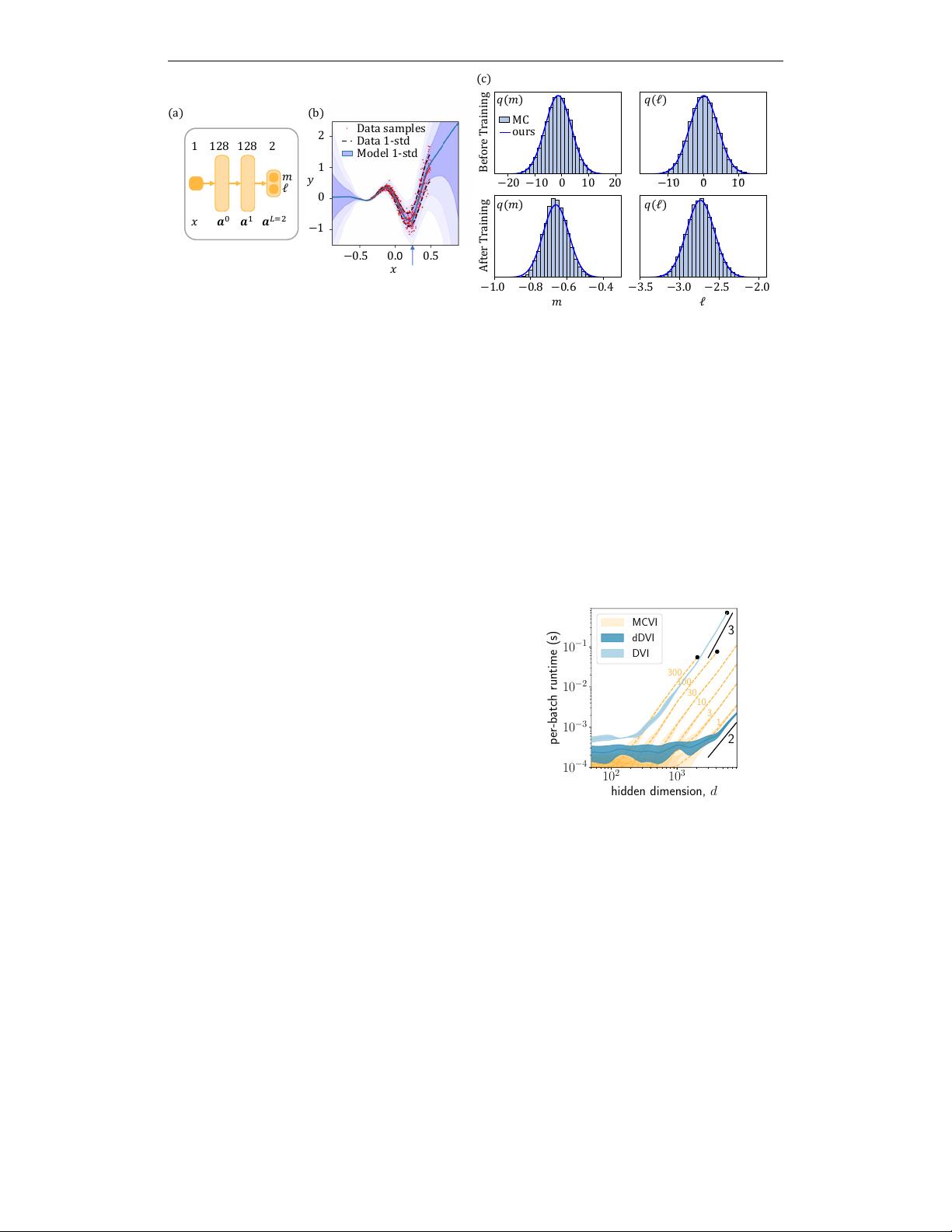

Figure 3: Empirical accuracy of our approximation on toy 1-dimensional data. (a) We train a 2 layer ReLU

network to perform heteroscedastic regression on the dataset shown in (b) and obtain the fit shown in blue. (c)

The output distributions for the activation units

m

and

`

evaluated at

x = 0.25

are in excellent agreement with

Monte Carlo (MC) integration with a large number (20k) of samples both before and after training.

to perform 1D heteroscedastic regression on a toy dataset of 500 points drawn from the distribution

shown in figure 3(b). Deeper networks and skip connections are considered in appendix C. The

training objective is taken from section 4, and the only detail required here is that

a

L

is a 2-element

vector where the elements are labelled as

(m, `)

. We use a diagonal Gaussian variational family to

represent the weights, but we preserve the full covariance of

a

during propagation. Using an input

x = 0.25

(see arrow, Figure 3(b)) we compute the distributions for

m

and

`

both at the start of training

(where we expect the iid assumption to hold) and at convergence (where iid does not necessarily

hold). Figure 3(c) shows the comparison between

a

L

distributions reported by our deterministic

approximation and MC evaluation using 20k samples from

q(w; θ)

. This comparison is qualitatively

excellent for all cases considered.

[Figure 4: log-log plot of per-batch runtime (s) against hidden dimension $d$ for MCVI (curves labelled by sample count: 1, 3, 10, 30, 100, 300), dDVI and DVI.]

Figure 4: Runtime performance of VI methods. We show the time to propagate a batch of 10 activation vectors through a single $d \times d$ layer. For MCVI we label curves with the number of samples used, and we show quadratic and cubic scaling guides-to-the-eye (black). Black dots indicate where our implementation runs out of memory (16GB).

Computational efficiency. In traditional MCVI, propagation of $S$ samples of $d$-dimensional activations through a layer containing a $d \times d$-dimensional transformation requires $O(Sd^2)$ compute and $O(Sd)$ memory. Our DVI method approximates the $S \to \infty$ limit, while only demanding $O(d^3)$ compute and $O(d^2)$ memory (the additional factor of $d$ arises from manipulation of the quadratically large covariance matrix $\mathrm{Cov}[h_j, h_l]$). Whereas MCVI can always trade accuracy for lower compute and memory by choosing a small value for $S$, the inherent scaling of DVI with $d$ could potentially limit its practical use for networks with large hidden size. To avoid this limitation, we also consider the case where only the diagonal entries $\mathrm{Cov}(h_j, h_j)$ are computed and stored at each layer. We refer to this method as “diagonal-DVI” (dDVI), and in section 6 we show the surprising result that the strong test performance of DVI is largely retained by dDVI across a range of datasets. Figure 4 shows the time required to propagate activations through a single layer using the MCVI, DVI and dDVI methods on a Tesla V100 GPU. As a rough rule of thumb (on this hardware), for layer sizes of practical relevance, we see that absolute DVI runtimes roughly equate to MCVI with $S = 300$ and dDVI runtime equates to $S = 1$.
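One way to read the diagonal variant is as equation 3 restricted to its diagonal entries. The sketch below is an illustration under that reading, not the authors' code: it assumes a ReLU non-linearity, a diagonal Gaussian family for the weights and biases, and uses the standard closed forms for the mean and second moment of a rectified Gaussian. The function names and the nested-list layout `w_mean[j][i]` are hypothetical.

```python
import math


def phi(x):
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def Phi(x):
    """Standard normal CDF."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def relu_moments(mean, var):
    """Mean and variance of ReLU(a) for a ~ N(mean, var), with var > 0."""
    std = math.sqrt(var)
    mu = mean / std
    first = std * (phi(mu) + mu * Phi(mu))
    second = var * ((1.0 + mu * mu) * Phi(mu) + mu * phi(mu))
    return first, max(second - first * first, 0.0)


def diagonal_layer(a_mean, a_var, w_mean, w_var, b_mean, b_var):
    """Propagate per-unit means and variances through h = ReLU(a'), a = h W + b.

    Only diagonal covariances are tracked, so a d x d layer costs O(d^2).
    """
    h_stats = [relu_moments(m, v) for m, v in zip(a_mean, a_var)]
    out_mean, out_var = [], []
    for i in range(len(b_mean)):
        mean_i, var_i = b_mean[i], b_var[i]
        for j, (h_mean, h_var) in enumerate(h_stats):
            second_moment = h_var + h_mean * h_mean  # <h_j^2>
            mean_i += h_mean * w_mean[j][i]
            var_i += second_moment * w_var[j][i] + w_mean[j][i] ** 2 * h_var
        out_mean.append(mean_i)
        out_var.append(var_i)
    return out_mean, out_var


# One input unit with a' ~ N(0, 1), weight ~ N(2, 0.25), bias ~ N(1, 0.1).
mean, var = diagonal_layer([0.0], [1.0], [[2.0]], [[0.25]], [1.0], [0.1])
print(mean, var)
```

The double loop visits each weight once, which matches the $O(d^2)$ cost of a single sample pass and is consistent with the observation that dDVI runtime is comparable to MCVI with $S = 1$. What this sketch discards are the off-diagonal terms $\mathrm{Cov}(h_j, h_l)$ that full DVI retains.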

3.2 LOG-LIKELIHOOD EVALUATION

To use the moment propagation procedure derived above for training BNNs, we need to build a function $\mathcal{L}$ that maps final layer activations $a^L$ to the expected log-likelihood term in equation 1 (see figure 1(b)). In appendix B.1 we show the intuitive result that this expected log-likelihood over $q(w)$
