
Sequoia: Enabling Quality-of-Service in Serverless Computing

Ali Tariq

University of Colorado Boulder

Boulder, Colorado

ali.tariq@colorado.edu

Austin Pahl

University of Colorado Boulder

Boulder, Colorado

austin.pahl@colorado.edu

Sharat Nimmagadda

University of Colorado Boulder

Boulder, Colorado

sharat.nimmagadda@colorado.edu

Eric Rozner

University of Colorado Boulder

Boulder, Colorado

eric.rozner@colorado.edu

Siddharth Lanka

University of Colorado Boulder

Boulder, Colorado

sai.lanka@colorado.edu

ABSTRACT

Serverless computing is a rapidly growing paradigm that easily harnesses the power of the cloud. With serverless computing, developers simply provide an event-driven function to cloud providers, and the provider seamlessly scales function invocations to meet demands as event triggers occur. As current and future serverless offerings support a wide variety of serverless applications, effective techniques to manage serverless workloads become an important issue. This work examines current management and scheduling practices in cloud providers, uncovering many issues including inflated application run times, function drops, inefficient allocations, and other undocumented and unexpected behavior. To fix these issues, a new quality-of-service function scheduling and allocation framework, called Sequoia, is designed. Sequoia allows developers or administrators to easily define how serverless functions and applications should be deployed, capped, prioritized, or altered based on easily configured, flexible policies. Results with controlled and realistic workloads show Sequoia seamlessly adapts to policies, eliminates mid-chain drops, reduces queuing times by up to 6.4×, enforces tight chain-level fairness, and improves run-time performance by up to 25×.

Permission to make digital or hard copies of all or part of this work for personal or classroom use is granted without fee provided that copies are not made or distributed for profit or commercial advantage and that copies bear this notice and the full citation on the first page. Copyrights for components of this work owned by others than ACM must be honored. Abstracting with credit is permitted. To copy otherwise, or republish, to post on servers or to redistribute to lists, requires prior specific permission and/or a fee. Request permissions from permissions@acm.org.

SoCC ’20, October 19–21, 2020, Virtual Event, USA

© 2020 Association for Computing Machinery.

ACM ISBN 978-1-4503-8137-6/20/10...$15.00

https://doi.org/10.1145/3419111.3421306

CCS CONCEPTS

• Computer systems organization → Cloud computing; n-tier architectures.

KEYWORDS

Serverless Computing, Quality-of-Service, Measurement

ACM Reference Format:

Ali Tariq, Austin Pahl, Sharat Nimmagadda, Eric Rozner, and Siddharth Lanka. 2020. Sequoia: Enabling Quality-of-Service in Serverless Computing. In ACM Symposium on Cloud Computing (SoCC ’20), October 19–21, 2020, Virtual Event, USA. ACM, New York, NY, USA, 17 pages. https://doi.org/10.1145/3419111.3421306

1 INTRODUCTION

In serverless computing, also referred to as Functions-as-a-Service (FaaS), application developers provide an event-driven function to cloud providers, and the cloud provider is responsible for seamlessly scaling function invocations to meet demands as event triggers occur. Serverless is powerful and expressive, with applications designed for video processing [29, 41], HPC and scientific computing [36, 51, 89, 93], machine learning [35, 39, 50], data analytics [44, 55], chatbots [103], backends [31, 67], IoT [69, 102], and even general applications [40, 92]. Indeed, a recent study of a production serverless offering indicates applications range from single functions to hundreds of functions in size, with function execution times ranging from less than a second to the order of minutes [88]. Therefore, the future promises a fast-growing serverless-native ecosystem [71], in which diverse serverless function chains, where serverless functions call subsequent serverless functions to create compositions, must be supported over a common infrastructure.

As serverless function chains become more common, complex, and relied upon, tools must be provided to ensure administrators and serverless developers can effectively manage these new workloads. Better manageability will more


easily enable serverless applications to achieve service-level agreements (SLAs) by ensuring predictable and efficient cloud performance and hence maximizing revenue [27, 37, 60, 95]. Beyond SLAs, management is important to developers or administrators for a variety of reasons. For example, managing where functions or chains can run (e.g., public or private cloud) is important for privacy and regulatory reasons. Management determines how multiple applications, or functions within applications, consume resources, ensuring important workloads or functions are prioritized when needed. Additionally, controlling consumption simplifies budgeting for operational expenditures.

As shown in this paper, the current state of serverless function chain management leaves much to be desired. Policies to manage serverless functions and function chains are relatively simple: scheduling policies today typically implement basic first-come-first-served algorithms. When limits are imposed on serverless workloads running in parallel, either as hard concurrency limits enforced by the provider or as soft concurrency limits that arise from inefficient resource allocation, little flexibility remains to dictate how serverless applications should be managed under challenging conditions. Our measurements (Section 2) show current management practices can lead to a variety of issues with serverless performance, including inconsistent and incorrect limitations, inefficient resource allocation, inflated run times, mid-chain function drops, concurrency collapse, and undocumented function prioritization.

To help alleviate these problems, as well as provide a more mature deployment ecosystem, we introduce a Quality-of-Service (QoS) scheduler for serverless functions and chains. Our framework, called Sequoia, allows policies to dictate how or where function chains, or functions within chains, should be prioritized, scheduled, or queued. Our QoS scheduler is implemented as a drop-in frontend so its performance can be analyzed across five different commercial and open-source serverless offerings. Sequoia’s design enables flexible policies to be easily defined and realized without changes to the serverless functions themselves. We show how management policies can avoid performance issues and enable rich scheduling techniques such as seamlessly scheduling over a hybrid private-public cloud or managing performance at a chain (i.e., application) level. In short, we aim to make QoS a first-class citizen in serverless deployments. The contributions of our work are as follows:

• A measurement study showing the current state of QoS scheduling for serverless function chains over five major providers. The measurements show how current techniques can adversely affect function chain performance, leading to drops, inflated completion times, and unexpected behavior.

• A new drop-in QoS function chain scheduler that alleviates problems uncovered in the measurement study. The scheduler can accurately realize a variety of flexible policies to make serverless function chain management more effective. Our code is published at: https://github.com/CU-BISON-LAB/sequoia.

• Evaluation of controlled and realistic workloads showing Sequoia eliminates mid-chain drops, reduces queuing times by up to 6.4×, enforces tight chain-level fairness, and improves run-time performance by up to 25×.

2 BACKGROUND

This section first details how function chains are supported across serverless platforms and then presents a QoS-related measurement study.

Serverless Function Chains

Serverless function chains, consisting of one or more serverless functions, can be realized via three main invocation mechanisms. First, with synchronous function calls, developers call a serverless function directly from within the current function. Examples include an HTTP request or an output from a load balancer. Second, in asynchronous function calls, a serverless function outputs some event, which then triggers a subsequent function call. Examples include adding elements to a storage service or using a pub/sub system. Last, a special case of synchronous chains exists with composition frameworks, such as AWS Step Functions [2], Azure Durable Functions [6], or IBM Composer [14]. In composition frameworks, developers specify a call graph, and the provider ensures functions are called accordingly.
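The three invocation mechanisms can be contrasted with a minimal, in-process Python sketch. Everything in it is hypothetical: `resize` and `tag` stand in for deployed functions, a `deque` stands in for the storage or pub/sub trigger, and `run_composition` stands in for a composition framework's orchestrator. None of it reflects any provider's actual API.

```python
from collections import deque
from typing import Callable, Deque, Dict, List, Tuple

# Hypothetical stand-ins for deployed functions: each takes a payload and
# returns a result. Real platforms invoke these over HTTP or via triggers.
Func = Callable[[str], str]

def resize(x: str) -> str:
    return x + "|resized"

def tag(x: str) -> str:
    return x + "|tagged"

# 1. Synchronous chaining: the caller invokes the next function directly
#    and waits for its result (e.g., an HTTP request).
def resize_then_tag_sync(x: str) -> str:
    return tag(resize(x))

# 2. Asynchronous chaining: a function emits an event (e.g., a storage write
#    or pub/sub message) and a trigger later invokes the subscribed function.
event_queue: Deque[Tuple[str, str]] = deque()
subscriptions: Dict[str, Func] = {"resized": tag}

def resize_async(x: str) -> None:
    event_queue.append(("resized", resize(x)))  # returns without waiting

def drain_events() -> List[str]:
    results = []
    while event_queue:
        topic, payload = event_queue.popleft()
        results.append(subscriptions[topic](payload))
    return results

# 3. Composition framework: the developer declares the call graph (a linear
#    one here, for brevity) and an orchestrator invokes each step in order.
def run_composition(steps: List[Func], x: str) -> str:
    for step in steps:
        x = step(x)
    return x
```

Calling `resize_then_tag_sync("img")` and `run_composition([resize, tag], "img")` both return `"img|resized|tagged"`. The asynchronous path yields the same result only once `drain_events()` delivers the queued event, which is what decouples the caller from the callee.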

2.1 QoS in Serverless Offerings

While many FaaS offerings exist [3, 4, 10, 12, 15, 17, 18, 20], relatively basic techniques to manage serverless function invocations are provided today. As function requests are received, the cloud provider schedules functions, mostly in a first-in-first-out manner. Opportunities to invoke a more informed scheduling policy are missed, however, in challenging scenarios such as when incoming demands cannot be satisfied by currently available resources. Such scenarios occur when providers cannot accommodate a rise in invocations due to cold starts or inefficient resource allocation, or alternatively when function invocation limits are imposed. Function invocation limits bound the number of functions running either instantaneously or over a time period. Because serverless technologies automatically scale to meet demands, function invocation limits ensure a bug or misconfiguration in tenant workloads does not inappropriately scale. In addition, limits help developers manage costs and better


understand expected workload characteristics. Changing limits requires out-of-band approval from support centers [5, 7].
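The two kinds of invocation limit described above, a bound on functions running at one instant and a bound over a time period, can be sketched as two small admission checks. This is a minimal illustration under stated assumptions, not any provider's implementation: the class names are hypothetical, and the sliding window is one possible way to count invocations "over a time period".

```python
from collections import deque

class ConcurrencyLimit:
    """Bounds how many functions run at the same instant (hypothetical)."""

    def __init__(self, max_running: int):
        self.max_running = max_running
        self.running = 0

    def try_start(self) -> bool:
        if self.running >= self.max_running:
            return False  # caller must queue, drop, or return an error
        self.running += 1
        return True

    def finish(self) -> None:
        self.running -= 1  # frees a slot for the next invocation

class WindowLimit:
    """Bounds how many invocations start within any sliding window (hypothetical)."""

    def __init__(self, max_starts: int, window_s: float):
        self.max_starts = max_starts
        self.window_s = window_s
        self.starts: deque = deque()  # start times of accepted invocations

    def try_start(self, now: float) -> bool:
        # Forget invocations that started a full window or more ago.
        while self.starts and now - self.starts[0] >= self.window_s:
            self.starts.popleft()
        if len(self.starts) >= self.max_starts:
            return False
        self.starts.append(now)
        return True
```

The difference matters for chains: a concurrency limit frees capacity as soon as a function finishes, while a window limit frees capacity only as time passes, regardless of how quickly functions complete.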

To better understand issues with serverless QoS, five major serverless providers are detailed below.

AWS Lambda

AWS Lambda provides users with a total concurrency threshold shared by all serverless functions. Individual serverless functions can further be configured to use a dedicated concurrency share, which is deducted from the total concurrency threshold. For synchronous traffic, AWS Lambda does not provide any queuing mechanism, and therefore any invocations above the concurrency limit are dropped or return an error. For asynchronous workloads, AWS Lambda can queue when concurrency limits are exceeded, running queued functions when current concurrency levels drop below the threshold. Every function is run in isolation (its own micro-VM [9, 65]), although the same VM can later be reused for another instance of the same function. Existing VMs are destroyed automatically after a timeout period of up to a few hours [30].
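The pool model described above can be sketched as a toy Python class. The class name and its methods are hypothetical, and one behavior is an explicit assumption not stated in the text: a function with a dedicated share runs only within that share, while all other functions draw from whatever remains of the total. Synchronous invocations over the limit return an error; asynchronous ones wait in a queue.

```python
from collections import deque
from typing import Deque, Dict

class LambdaStylePool:
    """Toy shared concurrency pool with per-function dedicated shares (hypothetical)."""

    def __init__(self, total: int, reserved: Dict[str, int]):
        self.reserved = dict(reserved)
        # Dedicated shares are deducted from the total threshold.
        self.unreserved = total - sum(reserved.values())
        self.running: Dict[str, int] = {}
        self.shared_in_use = 0
        self.async_queue: Deque[str] = deque()

    def _has_capacity(self, fn: str) -> bool:
        if fn in self.reserved:  # assumption: confined to its own share
            return self.running.get(fn, 0) < self.reserved[fn]
        return self.shared_in_use < self.unreserved

    def _start(self, fn: str) -> None:
        self.running[fn] = self.running.get(fn, 0) + 1
        if fn not in self.reserved:
            self.shared_in_use += 1

    def invoke(self, fn: str, synchronous: bool) -> str:
        if self._has_capacity(fn):
            self._start(fn)
            return "running"
        if synchronous:
            return "error"           # no queuing for synchronous traffic
        self.async_queue.append(fn)  # asynchronous traffic waits
        return "queued"

    def complete(self, fn: str) -> None:
        self.running[fn] -= 1
        if fn not in self.reserved:
            self.shared_in_use -= 1
        # Scan queued asynchronous invocations in arrival order and start any
        # that now fit; the rest keep their relative order.
        for _ in range(len(self.async_queue)):
            queued = self.async_queue.popleft()
            if self._has_capacity(queued):
                self._start(queued)
            else:
                self.async_queue.append(queued)
```

For example, with `LambdaStylePool(total=3, reserved={"a": 1})`, a second synchronous call to `a` returns `"error"` even though the shared pool still has room, and a queued asynchronous call to `b` starts as soon as another `b` completes.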

IBM Cloud Functions

IBM Cloud Functions follows a total concurrency threshold model similar to AWS. According to official documentation [13], a 1,000 concurrency limit is enforced across all running functions. As with AWS, IBM enables queuing of asynchronous functions when concurrency crosses the threshold.

Apache OpenWhisk

Apache OpenWhisk is an open-source serverless platform very similar to IBM Cloud Functions, as both share a similar design. OpenWhisk follows a total concurrency pool model.

Google Cloud Functions (GCF)

GCF divides serverless functions into HTTP functions and background (i.e., asynchronous) functions [11]. GCF enforces concurrency limits on individual functions as opposed to a total concurrency pool. For HTTP functions, no concurrency limit is documented; in practice, however, we observe a varying concurrency limit between 1,000 and 2,000 (Section 2.2). All requests beyond this are queued and run in turn. For background functions, a strict concurrency limit is enforced per function. Unlike the previous providers, GCF provides various configuration options for its users to limit resource usage, with limits available on total CPU or memory usage over all functions. GCF uses a 100-second interval for assessing and enforcing resource limits. GCF handles synchronous workloads in a best-effort fashion: it seems to perform queuing but does not ensure zero drops (Section 2.2). For asynchronous workloads, GCF provides queuing just as other platforms do.

Azure Function Apps

Azure Function Apps group functions into “Function Apps,” which automatically add VMs, or “instances,” to match the current load on all of the functions within the app. A single function app may have up to 200 VMs allocated at once, and each VM can host multiple functions running in parallel based on the resource demand of each function [8]. Users have the option to configure various other quotas as well, such as HTTP function concurrency, outstanding function queue sizes, and timeouts for long-running functions. If the Function App does not have enough instances allocated to support a sudden burst of function invocations, we found some of the invocations will be enqueued or dropped.
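The burst behavior described above can be reduced to simple arithmetic in a toy model. The function name is hypothetical, and `per_instance` is an assumed single number standing in for how many functions one instance can host; the text says Azure decides this from each function's resource demand, which the sketch does not model.

```python
import math
from typing import Tuple

def function_app_burst(demand: int, allocated: int, per_instance: int,
                       max_instances: int = 200) -> Tuple[int, int, int]:
    """Toy model of a burst arriving at an Azure-style Function App.

    Returns (served_now, overflow, target_instances). All parameters are
    illustrative; `per_instance` is a hypothetical fixed packing density.
    """
    # Instances that are already allocated can absorb part of the burst at once.
    served_now = min(demand, allocated * per_instance)
    # The remainder would be enqueued or dropped while the app scales out.
    overflow = demand - served_now
    # Instances the app would scale toward, bounded by the documented per-app cap.
    target_instances = min(math.ceil(demand / per_instance), max_instances)
    return served_now, overflow, target_instances
```

With 10 instances allocated and an assumed 8 functions per instance, a burst of 1,000 invocations gives `(80, 920, 125)`: most of the burst must wait or drop until the app scales out. The cap encodes documentation only; the measurements in Section 2.2.1 observed up to 440 instances in practice.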

2.2 Measurement Study

1

2 3

1

2 3

3

3

2

1

Fan-2

Linear-3 Combo

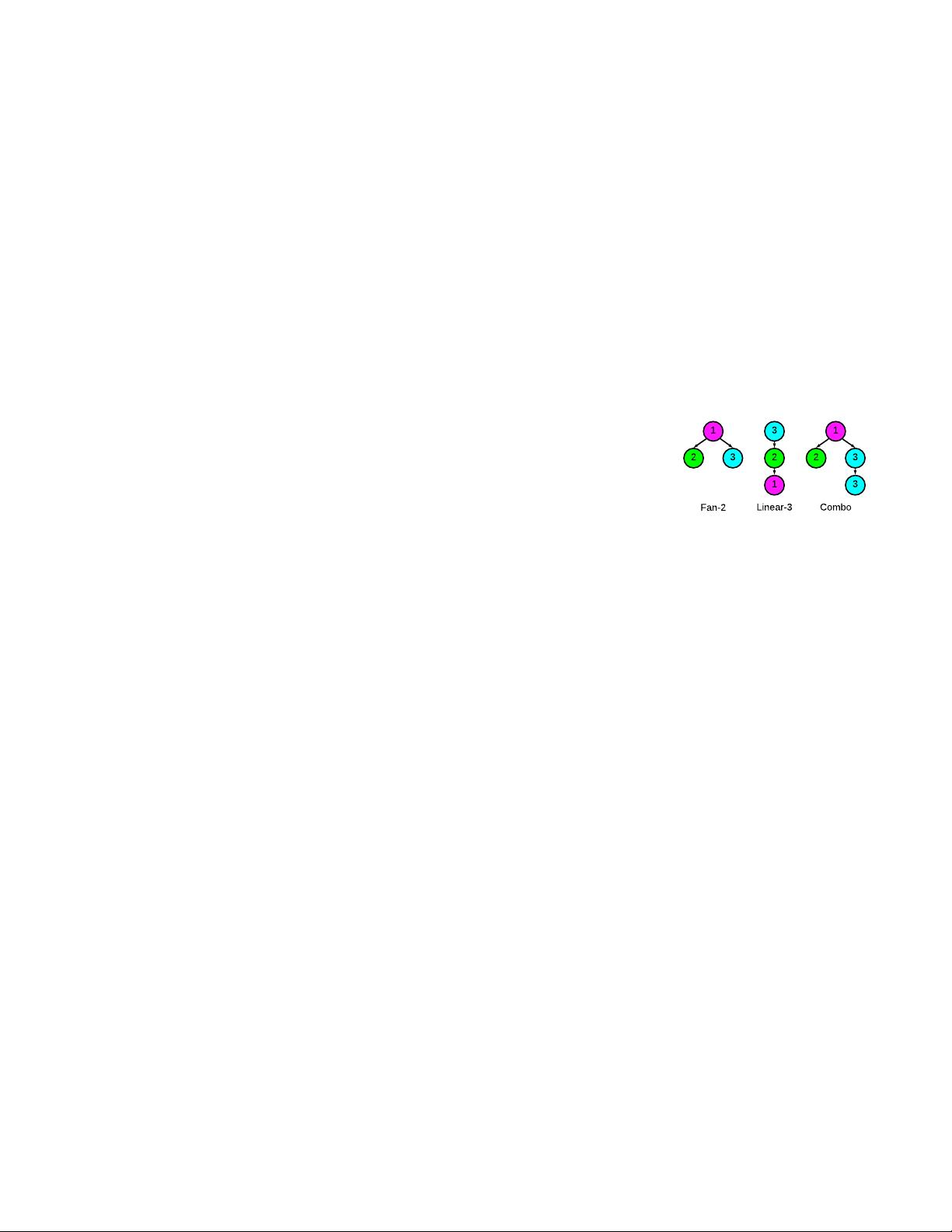

Figure 1: Example function

chains in study

This section presents a measurement study to better understand the current state of QoS in today’s serverless platforms. Our study consists of results from November 2019 to May 2020. Figure 1 shows the tested workloads. Functions in the workload allocate 256 MB memory and sleep for 15 seconds. The workloads are as follows: Single: This is the simplest workload, consisting of individual independent requests. Linear-N: A serverless chain where every serverless function invokes up to one new serverless function. Fan-N: Another chain where multiple tasks depend on a previous function’s completion. Single, Linear-N, and Fan-N are important to study because they serve as building blocks for more complex applications. Combo: This chain includes combinations of Single, Linear-N, and Fan-N (unless otherwise stated, Combo refers to the chain in Figure 1). MixedChain: A workload in which the chains in Figure 1 are run simultaneously. Note the chains share similar functions, e.g., function 1 is run in all three chains and function 3 is run twice in Combo.
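The Linear-N and Fan-N shapes can be written as small call graphs to show how chain shape drives concurrency. This is a minimal sketch with hypothetical helper names; it numbers functions from 1, assumes tree-shaped chains (no shared children), and assumes every chain in a burst advances in lockstep. Combo is omitted because its exact structure is only given in Figure 1.

```python
from typing import Dict, List

# Function id -> ids of the functions it invokes when it completes.
Chain = Dict[int, List[int]]

def linear(n: int) -> Chain:
    """Linear-N: function i invokes function i+1 (ids 1..n)."""
    return {i: ([i + 1] if i < n else []) for i in range(1, n + 1)}

def fan(n: int) -> Chain:
    """Fan-N: function 1 invokes n functions (ids 2..n+1) after it completes."""
    chain: Chain = {1: list(range(2, n + 2))}
    chain.update({i: [] for i in range(2, n + 2)})
    return chain

def stage_widths(chain: Chain, root: int = 1) -> List[int]:
    """Number of functions at each depth, i.e., how many run side by side."""
    widths: List[int] = []
    frontier = [root]
    while frontier:
        widths.append(len(frontier))
        frontier = [child for f in frontier for child in chain[f]]
    return widths

def peak_concurrency(chain: Chain, burst: int) -> int:
    """Peak concurrent functions if `burst` identical chains run in lockstep."""
    return burst * max(stage_widths(chain))
```

Under these assumptions, `stage_widths(fan(2))` is `[1, 2]`, so a burst of 1,000 Fan-2 chains peaks at 2,000 concurrent functions, while a burst of 1,000 Linear-3 chains never exceeds 1,000.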

The above workloads are run under different demands. Burst-N sends a burst of N simultaneous requests at once. Some initial studies have shown burst workloads to be common in serverless applications [40, 52, 56]. Continuous-N sends a constant N requests per second. Last, requests can follow an open-loop Poisson process, which has been extensively used in serverless evaluations [25, 63, 70, 87, 91] and approximates large-scale, web-driven workloads [86]. Cold start issues are mitigated by running all results multiple times in succession and verifying that trends hold.
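The three demand patterns can be expressed as generators of request send times. This is a sketch with hypothetical function names; spacing the N requests evenly within each second for Continuous-N is an assumption, since the text only specifies N requests per second. The Poisson generator is open-loop because send times never depend on whether earlier requests have finished.

```python
import random
from typing import List

def burst(n: int, at: float = 0.0) -> List[float]:
    """Burst-N: n requests sent at the same instant."""
    return [at] * n

def continuous(n_per_sec: int, duration_s: int) -> List[float]:
    """Continuous-N: n requests per second (assumed evenly spaced within each second)."""
    return [s + i / n_per_sec for s in range(duration_s) for i in range(n_per_sec)]

def poisson(rate_per_sec: float, duration_s: float, seed: int = 0) -> List[float]:
    """Open-loop Poisson arrivals: exponentially distributed gaps between sends."""
    rng = random.Random(seed)
    t, times = 0.0, []
    while True:
        t += rng.expovariate(rate_per_sec)  # mean gap is 1 / rate_per_sec
        if t >= duration_s:
            return times
        times.append(t)
```

For example, `continuous(2, 2)` yields `[0.0, 0.5, 1.0, 1.5]`, and `poisson(50.0, 100.0)` yields roughly 5,000 send times with irregular gaps, which is what distinguishes it from Continuous-50.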

We conduct a series of experiments in which functions and chains are assigned identifiers and function start and end times are logged to enable reverse engineering of provider


queuing policies. Although results are omitted due to space, we find (i) scheduling across frameworks follows a simple FIFO queuing model and (ii) scheduling is performed on a per-function basis (instead of other policies like per-chain).
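One way to test logs for the per-function FIFO finding is to check that, within each function, invocations started in the order they arrived. This sketch is an illustration, not the authors' analysis code: the record layout is hypothetical, and it assumes each record carries the time the request was sent in addition to the logged start time.

```python
from typing import Dict, List, Tuple

# Hypothetical log record: (function_name, invocation_id, arrival_time, start_time).
Record = Tuple[str, str, float, float]

def is_per_function_fifo(log: List[Record]) -> bool:
    """True if, within every function, invocations started in arrival order."""
    by_fn: Dict[str, List[Record]] = {}
    for rec in log:
        by_fn.setdefault(rec[0], []).append(rec)
    for recs in by_fn.values():
        recs.sort(key=lambda r: r[2])  # order by arrival time
        starts = [r[3] for r in recs]
        if any(a > b for a, b in zip(starts, starts[1:])):
            return False  # a later arrival started before an earlier one
    return True
```

Grouping by chain identifier instead of function name would test a per-chain FIFO hypothesis in the same way; the study reports that the per-function grouping is the one that matches provider behavior.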

2.2.1 Limitations. This section shows limitations in existing serverless offerings and how these impact QoS for incoming requests and overall performance. Specifically, it is shown that inconsistent and incorrect concurrency limits are prevalent, mid-chain function drops occur, workloads such as bursts are not easily supported, HTTP functions are prioritized without documentation, inefficient resource allocation is common, and concurrency collapses under certain conditions.

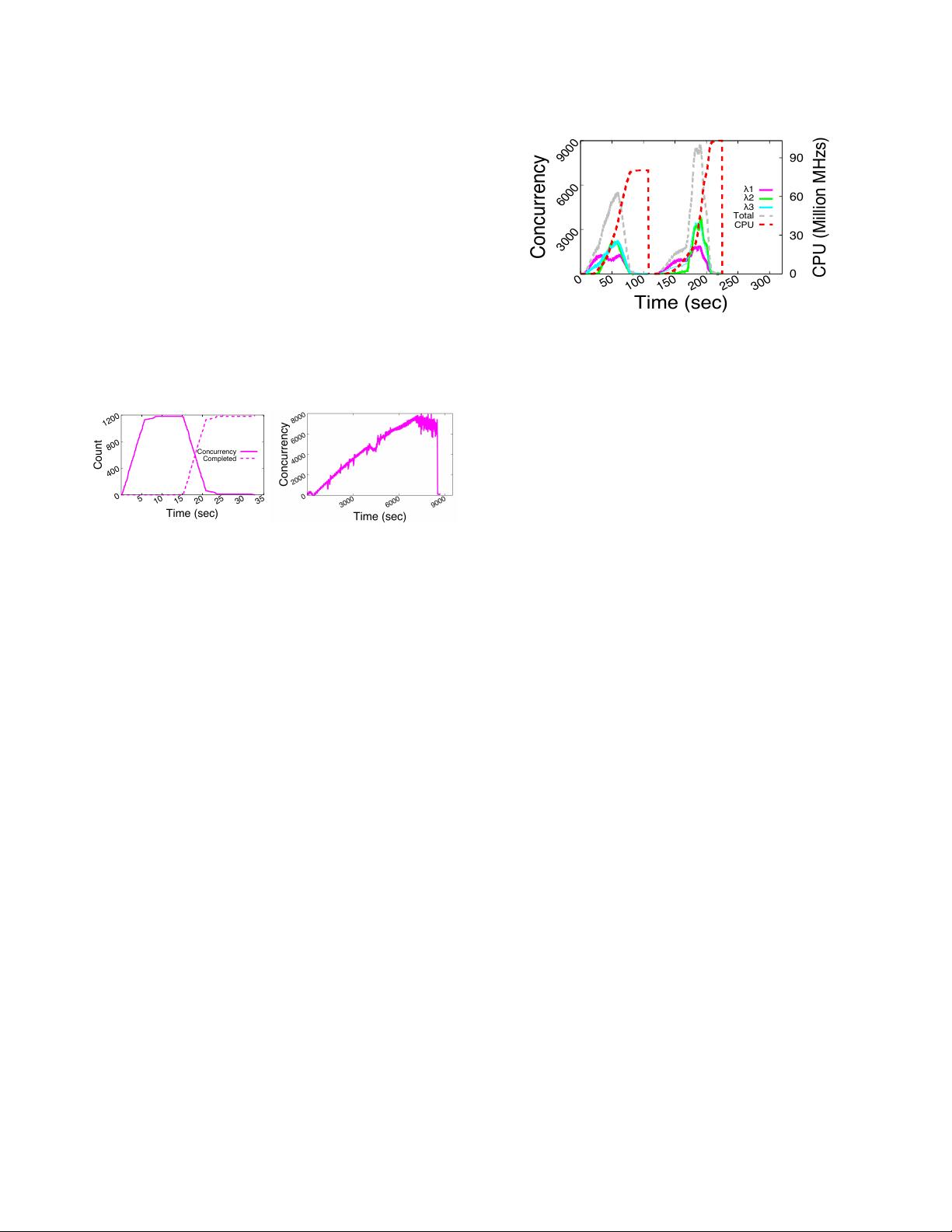

0

400

800

1200

5

10

15

20

25

30

35

Count

Time (sec)

Concurrency

Completed

(a) IBM Cloud Functions (b) Azure Functions

Figure 2: Incorrect concurrency limits

Inconsistent and incorrect concurrency limits

We find numerous issues with concurrency limits on serverless platforms. IBM suffers from a simple issue: default concurrency limits are documented to be 1,000, but up to 1,200 concurrent functions are run in parallel. Figure 2a shows a burst of 1,200 Single functions. The x-axis is time, the y-axis is the number of concurrently running functions, the dotted line tracks completions, and the solid line shows up to 1,200 functions running simultaneously.
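A concurrency curve like the one in Figure 2a can be recovered from logged start and end times with a sweep over sorted events. This is a generic sketch (the function name is hypothetical): each start adds one running function, each end removes one, and the running maximum is the observed peak to compare against a documented limit.

```python
from typing import List, Tuple

def observed_peak(intervals: List[Tuple[float, float]]) -> int:
    """Maximum number of functions running at once, given (start, end) times."""
    events = []
    for start, end in intervals:
        events.append((start, 1))
        events.append((end, -1))
    # At equal timestamps, -1 sorts before +1, so a function that ends exactly
    # when another starts is not counted as overlapping with it.
    events.sort()
    running = peak = 0
    for _, delta in events:
        running += delta
        peak = max(peak, running)
    return peak
```

For a burst of 1,200 fifteen-second Single functions that all start together, `observed_peak([(0, 15)] * 1200)` is 1,200, which would exceed a documented limit of 1,000.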

In the worst case, no enforcement can occur in Azure. A workload is created in which demand is slowly ramped up over time. Azure does not limit the number of concurrent HTTP functions, which was configured to 1,000, or the number of instances, which is 200 by default. During the test, the Function App’s Live Metrics Stream reported up to 440 instances allocated to the Function App with up to 8,000 concurrent requests run at a time, as shown in Figure 2b.

Last, GCF does not limit total CPU consumption in a tight manner. GCF caps total CPU usage over all functions to a specified threshold over a 100-second period. CPU consumption is tracked during the period, and when the threshold is reached, no new functions are invoked. We find two issues, however. First, any outstanding functions are able to complete after the limit is reached, violating CPU limits. Second, a slow trickle of invocations still occurs after the CPU limit is reached. Figure 3 shows CPU usage is more than doubled in the MixedChain workload: CPU limits were set to 40M MHz/s, but over 90M MHz/s consumption was encountered (dotted red line). Concurrency for each function (1, 2, and 3) and total concurrency, the sum of all concurrencies, are also shown.

Figure 3: GCF: MixedChain workload CPU usage. Axes: time (sec) vs. concurrency and CPU (million MHz); series: functions 1, 2, 3, Total, CPU.
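The first issue, outstanding functions completing after the cap is reached, follows from enforcing a windowed cap only at admission time. The discrete-time sketch below reproduces that effect; it is a toy model, not GCF's mechanism. The function name, the one-second time step, and the generic "CPU units" are assumptions, and the second issue (a trickle of new invocations after the cap) is not modeled.

```python
from typing import List

def simulate_cpu_cap(arrivals_per_sec: int, fn_duration_s: int, fn_cpu: float,
                     cap_per_window: float, window_s: int = 100,
                     windows: int = 2) -> List[float]:
    """Toy windowed CPU cap that only blocks new admissions (no preemption).

    Returns the CPU consumed in each window.
    """
    remaining: List[int] = []  # seconds of work left for each running function
    per_window: List[float] = []
    used = 0.0                 # CPU consumed so far in the current window
    for t in range(windows * window_s):
        if t > 0 and t % window_s == 0:  # new window: record and reset
            per_window.append(used)
            used = 0.0
        if used < cap_per_window:        # admission check only
            remaining.extend([fn_duration_s] * arrivals_per_sec)
        used += fn_cpu * len(remaining)  # every running function burns CPU this second
        remaining = [r - 1 for r in remaining if r > 1]
    per_window.append(used)
    return per_window
```

With 10 arrivals per second, 15-second functions, 1 CPU unit per function-second, and a cap of 100, admissions stop after four seconds, yet the 40 admitted functions run to completion and each window consumes 600 units, six times the cap.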

The above findings indicate concurrency limits are often inconsistent or incorrect, placing additional burden on serverless developers. When limits are under intended values, workloads may unexpectedly encounter poor performance or increased drops. Dealing with such issues increases serverless application complexity. When limits are over intended values, developers may incur higher costs than budgeted for. And when limits are inconsistent, developers can have difficulty managing and reasoning about serverless performance.

Mid-chain drops

Some serverless platforms provide a hard concurrency limit (AWS and IBM) beyond which all subsequent requests are dropped. When demand rises above a specified function invocation limit, functions can be queued (up to 4 days in the case of AWS [81]), silently dropped [82], or returned with an error (in the synchronous case only). This is problematic for several reasons. First, developers may rely on function chain completion, and when function chains drop mid-chain, incorrectness may arise. Second, developers can instead solve the problem at the application layer, but this increases complexity and developer burden, two problems serverless aims to solve. Third, drops mid-chain result in inefficiency because the resources spent running functions before the drop are wasted and could have been better used to finish some other outstanding function chain. And last, if providers queue requests mid-chain, then the variance of total function chain running time can be significantly increased, impacting SLAs or otherwise negatively affecting performance.
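The wasted-work argument can be made concrete with a toy model of a Fan-2 burst under a hard limit that drops rather than queues. The model is hypothetical and rests on simplifying assumptions stated in the comments: all first functions finish together and release their slots before their children are invoked, and children are admitted chain by chain. Real providers need not behave this way.

```python
from typing import Dict

def fan2_burst_with_drops(burst: int, limit: int) -> Dict[str, int]:
    """Toy Fan-2 burst against a hard concurrency limit with drops (hypothetical)."""
    # Stage 1: first functions beyond the limit are dropped before doing any work.
    stage1_run = min(burst, limit)
    dropped_at_entry = burst - stage1_run
    # Stage 2 (assumption): every stage-1 function finishes at once and frees its
    # slot, then requests two children; children beyond the limit are dropped.
    children_run = min(2 * stage1_run, limit)
    # Assumption: children are admitted chain by chain, so whole chains finish first.
    completed = children_run // 2
    dropped_mid_chain = stage1_run - completed
    # Work spent on chains that never finish: their first function, plus a lone
    # child that ran when its sibling was dropped.
    wasted_runs = dropped_mid_chain + children_run % 2
    return {"completed": completed, "dropped_at_entry": dropped_at_entry,
            "dropped_mid_chain": dropped_mid_chain, "wasted_runs": wasted_runs}
```

With a burst equal to a 1,000-function limit, the model completes only 500 chains and drops 500 mid-chain, so 500 first-stage executions do work that never contributes to a finished chain. A burst of 400 fits entirely and wastes nothing.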

To assess the impact of mid-chain drops, a Fan-2 Burst workload is run on AWS Step Functions and IBM Cloud Functions, where the burst is the size of the concurrency limit. Note the “fan” portion of Fan-2 invokes twice as many functions after function 1 completes, meaning a burst of 1,000 Fan-2’s will ultimately result in 2,000 concurrent functions (i.e., functions 2 and 3) and a violation of concurrency limits. Figure 4 shows
