
This paper is included in the Proceedings of the 14th USENIX Symposium on Operating Systems Design and Implementation

November 4–6, 2020

978-1-939133-19-9

Open access to the Proceedings of the 14th USENIX Symposium on Operating Systems Design and Implementation is sponsored by USENIX

FIRM: An Intelligent Fine-grained Resource Management Framework for SLO-Oriented Microservices

Haoran Qiu, Subho S. Banerjee, Saurabh Jha, Zbigniew T. Kalbarczyk, and Ravishankar K. Iyer, University of Illinois at Urbana–Champaign

https://www.usenix.org/conference/osdi20/presentation/qiu

FIRM: An Intelligent Fine-Grained Resource Management Framework for SLO-Oriented Microservices

Haoran Qiu (1), Subho S. Banerjee (1), Saurabh Jha (1), Zbigniew T. Kalbarczyk (2), Ravishankar K. Iyer (1,2)

(1) Department of Computer Science, (2) Department of Electrical and Computer Engineering

University of Illinois at Urbana-Champaign

Abstract

User-facing latency-sensitive web services include numerous distributed, intercommunicating microservices that promise to simplify software development and operation. However, multiplexing of compute resources across microservices is still challenging in production because contention for shared resources can cause latency spikes that violate the service-level objectives (SLOs) of user requests. This paper presents FIRM, an intelligent fine-grained resource management framework for predictable sharing of resources across microservices to drive up overall utilization. FIRM leverages online telemetry data and machine-learning methods to adaptively (a) detect/localize microservices that cause SLO violations, (b) identify low-level resources in contention, and (c) take actions to mitigate SLO violations via dynamic reprovisioning. Experiments across four microservice benchmarks demonstrate that FIRM reduces SLO violations by up to 16× while reducing the overall requested CPU limit by up to 62%. Moreover, FIRM improves performance predictability by reducing tail latencies by up to 11×.

1 Introduction

User-facing latency-sensitive web services, like those at Netflix [68], Google [77], and Amazon [89], are increasingly built as microservices that execute on shared/multi-tenant compute resources either as virtual machines (VMs) or as containers (with containers gaining significant popularity of late). These microservices must handle diverse load characteristics while efficiently multiplexing shared resources in order to maintain service-level objectives (SLOs) like end-to-end latency. SLO violations occur when one or more “critical” microservice instances (defined in §2) experience load spikes (due to diurnal or unpredictable workload patterns) or shared-resource contention, both of which lead to longer than expected times to process requests, i.e., latency spikes [4, 11, 22, 30, 35, 44, 53, 69, 98, 99]. Thus, it is critical to efficiently multiplex shared resources among microservices to reduce SLO violations.

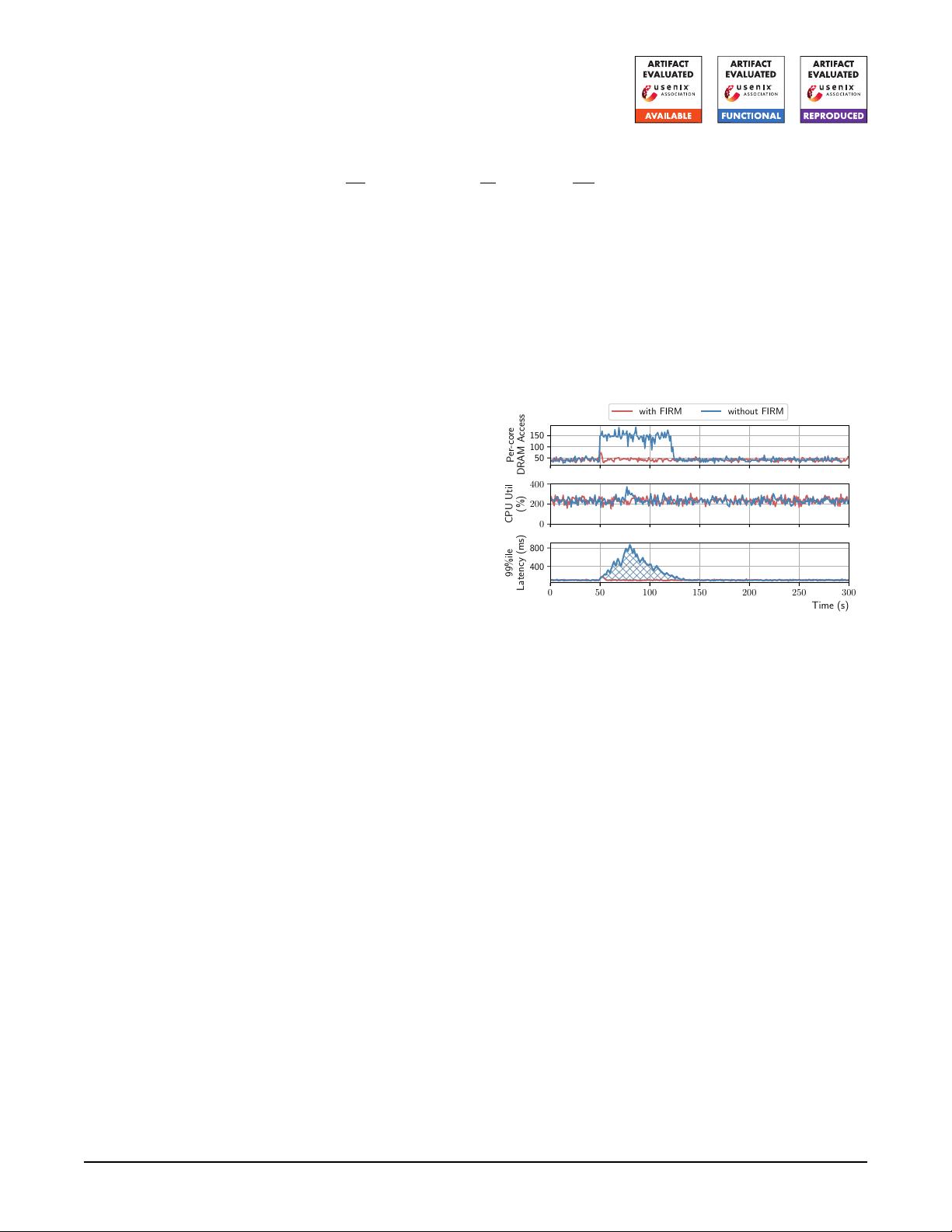

Figure 1: Latency spikes on microservices due to low-level resource contention. [Plot: 99th-percentile latency (ms), CPU utilization (%), and per-core DRAM access over time (s), with FIRM and without FIRM.]

Traditional approaches (e.g., overprovisioning [36, 87], recurrent provisioning [54, 66], and autoscaling [39, 56, 65, 81, 84, 88, 127]) reduce SLO violations by allocating more CPUs and memory to microservice instances by using performance models, handcrafted heuristics (i.e., static policies), or machine-learning algorithms.

Unfortunately, these approaches suffer from two main problems. First, they fail to efficiently multiplex resources, such as caches, memory, I/O channels, and network links, at fine granularity, and thus may not reduce SLO violations. For example, in Fig. 1, the Kubernetes container-orchestration system [20] is unable to reduce the tail latency spikes arising from contention for a shared resource like memory bandwidth, as its autoscaling algorithms were built using heuristics that only monitor CPU utilization, which does not change much during the latency spike. Second, significant human effort and training are needed to build high-fidelity performance models (and related scheduling heuristics) of large-scale microservice deployments (e.g., queuing systems [27, 39]) that can capture low-level resource contention. Further, frequent microservice updates and migrations can lead to recurring human-expert-driven engineering effort for model reconstruction.
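To illustrate why a CPU-only signal can miss such a spike, the following minimal sketch applies the replica-count rule documented for the Kubernetes Horizontal Pod Autoscaler, desired = ceil(current replicas × current metric / target metric), with its default tolerance of 0.1. The utilization readings are hypothetical and are not taken from Fig. 1; the function name `desired_replicas` is ours.

```python
import math


def desired_replicas(current_replicas, current_util, target_util, tolerance=0.1):
    """Replica count suggested by a CPU-utilization-based autoscaler.

    Uses the documented HPA rule
        desired = ceil(current_replicas * current_util / target_util)
    and leaves the count unchanged when the ratio is within `tolerance` of 1.0.
    """
    ratio = current_util / target_util
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    return math.ceil(current_replicas * ratio)


# Hypothetical readings (percent CPU utilization, target 50%).
# Steady state: utilization equals the target, so nothing changes.
before = desired_replicas(current_replicas=2, current_util=50.0, target_util=50.0)
# Memory-bandwidth contention: tail latency spikes, but CPU utilization
# barely moves, so the ratio stays inside the tolerance band.
during_spike = desired_replicas(current_replicas=2, current_util=52.0, target_util=50.0)
# A genuinely CPU-bound overload, for contrast, does trigger scaling.
cpu_bound = desired_replicas(current_replicas=2, current_util=90.0, target_util=50.0)

print(before, during_spike, cpu_bound)
```

Under these assumed numbers the autoscaler reacts only to the CPU-bound case, which matches the behavior described for Fig. 1: contention on a resource that the heuristic does not observe produces no scaling decision.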

FIRM Framework. This paper addresses the above problems by presenting FIRM, a multilevel machine learning (ML) based resource management (RM) framework to manage shared resources among microservices at finer granularity to reduce resource contention and thus increase performance isolation and resource utilization. As shown in Fig. 1, FIRM performs better than a default Kubernetes autoscaler because FIRM adaptively scales up the microservice (by adding local cores) to increase the aggregate memory bandwidth allocation, thereby effectively maintaining the per-core allocation. FIRM leverages online telemetry data (such as request-tracing data and hardware counters) to capture the system state, and ML models for resource contention estimation and mitigation. Online telemetry data and ML models enable FIRM to adapt to workload changes and alleviate the need for brittle, handcrafted heuristics. In particular, FIRM uses the following ML models:

• Support vector machine (SVM) driven detection and localization of SLO violations to individual microservice instances. FIRM first identifies the “critical paths,” and then uses per-critical-path and per-microservice-instance performance variability metrics (e.g., sojourn time [1]) to output a binary decision on whether or not a microservice instance is responsible for SLO violations.

• Reinforcement learning (RL) driven mitigation of SLO violations that reduces contention on shared resources. FIRM then uses resource utilization, workload characteristics, and performance metrics to make dynamic reprovisioning decisions, which include (a) increasing or reducing the partition portion or limit for a resource type, (b) scaling up/down, i.e., adding or reducing the amount of resources attached to a container, and (c) scaling out/in, i.e., scaling the number of replicas for services. By continuing to learn mitigation policies through reinforcement, FIRM can optimize for dynamic workload-specific characteristics.
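The three kinds of reprovisioning decisions listed above can be pictured as a small action space acting on a per-service resource state. The sketch below is only an illustration of that idea: the class names, the fields (`cpu_cores`, `llc_share`, `replicas`), and the clamping rules are hypothetical and do not reflect FIRM's actual state or action encoding.

```python
from dataclasses import dataclass, replace
from enum import Enum


class ActionKind(Enum):
    PARTITION = "partition"  # (a) change the partition portion/limit of a resource
    SCALE_UP = "scale_up"    # (b) change the resources attached to a container
    SCALE_OUT = "scale_out"  # (c) change the number of replicas


@dataclass(frozen=True)
class ServiceState:
    cpu_cores: int    # cores attached to each container (hypothetical field)
    llc_share: float  # fraction of last-level cache, 0..1 (hypothetical field)
    replicas: int     # number of running replicas (hypothetical field)


@dataclass(frozen=True)
class Action:
    kind: ActionKind
    delta: float      # signed change; negative values scale down/in


def apply_action(state, action):
    """Return the new state after applying one reprovisioning action."""
    if action.kind is ActionKind.PARTITION:
        # Keep the cache share inside [0, 1].
        share = min(1.0, max(0.0, state.llc_share + action.delta))
        return replace(state, llc_share=share)
    if action.kind is ActionKind.SCALE_UP:
        # Never go below one core.
        return replace(state, cpu_cores=max(1, state.cpu_cores + int(action.delta)))
    if action.kind is ActionKind.SCALE_OUT:
        # Never go below one replica.
        return replace(state, replicas=max(1, state.replicas + int(action.delta)))
    raise ValueError(f"unknown action kind: {action.kind}")


start = ServiceState(cpu_cores=2, llc_share=0.25, replicas=1)
scaled_up = apply_action(start, Action(ActionKind.SCALE_UP, 2))
scaled_in = apply_action(start, Action(ActionKind.SCALE_OUT, -3))
repartitioned = apply_action(start, Action(ActionKind.PARTITION, 0.25))
print(scaled_up, scaled_in, repartitioned, sep="\n")
```

An RL agent would choose among such actions based on observed utilization, workload, and performance; the policy itself is outside the scope of this sketch.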

Online Training for FIRM. We developed a performance anomaly injection framework that can artificially create resource scarcity situations in order to both train and assess the proposed framework. The injector is capable of injecting resource contention problems at a fine granularity (such as last-level cache and network devices) to trigger SLO violations. To enable rapid (re)training of the proposed system as the underlying systems [67] and workloads [40, 42, 96, 98] change in datacenter environments, FIRM uses transfer learning. That is, FIRM leverages transfer learning to train microservice-specific RL agents based on previous RL experience.
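As a rough picture of what a configurable injection could look like, the sketch below describes one anomaly by a resource type, intensity, duration, and timing, and expands it into concrete injection windows. All names (`AnomalySpec`, `injection_windows`) and the periodic pattern are assumptions made for illustration; they are not the interface of FIRM's injector.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AnomalySpec:
    resource: str      # e.g. "llc" or "network" (hypothetical labels)
    intensity: float   # fraction of the resource to contend for, 0..1
    duration_s: float  # length of each injection
    start_s: float     # when the first injection begins
    period_s: float    # time between injection starts; 0 means one-shot


def injection_windows(spec, horizon_s):
    """List the (start, end) windows of this anomaly within [0, horizon_s)."""
    windows = []
    t = spec.start_s
    while t < horizon_s:
        # Clip the last window so it does not run past the horizon.
        windows.append((t, min(t + spec.duration_s, horizon_s)))
        if spec.period_s <= 0:
            break  # one-shot injection
        t += spec.period_s
    return windows


periodic = AnomalySpec(resource="llc", intensity=0.5,
                       duration_s=10, start_s=5, period_s=30)
one_shot = AnomalySpec(resource="network", intensity=0.8,
                       duration_s=10, start_s=5, period_s=0)
print(injection_windows(periodic, horizon_s=60))
print(injection_windows(one_shot, horizon_s=60))
```

Each window would then be paired with traces collected during that interval, so that the injected service serves as a ground-truth label.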

Contributions. To the best of our knowledge, this is the first work to provide an SLO violation mitigation framework for microservices by using fine-grained resource management in an application-architecture-agnostic way with multilevel ML models. Our main contributions are:

1. SVM-based SLO Violation Localization: We present (in §3.2 and §3.3) an efficient way of localizing the microservice instances responsible for SLO violations by extracting critical paths and detecting anomaly instances in near-real time using telemetry data.

2. RL-based SLO Violation Mitigation: We present (in §3.4) an RL-based resource contention mitigation mechanism that (a) addresses the large state space problem and (b) is capable of tuning tailored RL agents for individual microservice instances by using transfer learning.

3. Online Training & Performance Anomaly Injection: We propose (in §3.6) a comprehensive performance anomaly injection framework to artificially create resource contention situations, thereby generating the ground-truth data required for training the aforementioned ML models.

4. Implementation & Evaluation: We provide an open-source implementation of FIRM for the Kubernetes container-orchestration system [20]. We demonstrate and validate this implementation on four real-world microservice benchmarks [34, 116] (in §4).

Results. FIRM significantly outperforms state-of-the-art RM frameworks like Kubernetes autoscaling [20, 55] and additive increase multiplicative decrease (AIMD) based methods [38, 101].

• It reduces overall SLO violations by up to 16× compared with Kubernetes autoscaling, and 9× compared with the AIMD-based method, while reducing the overall requested CPU by as much as 62%.

• It outperforms the AIMD-based method by up to 9× and Kubernetes autoscaling by up to 30× in terms of the time to mitigate SLO violations.

• It improves overall performance predictability by reducing the average tail latencies up to 11×.

• It successfully localizes SLO violation root-cause microservice instances with 93% accuracy on average.

FIRM mitigates SLO violations without overprovisioning because of two main features. First, it models the dependency between low-level resources and application performance in an RL-based feedback loop to deal with uncertainty and noisy measurements. Second, it takes a two-level approach in which the online critical path analysis and the SVM model filter only those microservices that need to be considered to mitigate SLO violations, thus making the framework application-architecture-agnostic as well as enabling the RL agent to be trained faster.

2 Background & Characterization

The advent of microservices has led to the development and deployment of many web services that are composed of “micro,” loosely coupled, intercommunicating services, instead of large, monolithic designs. This increased popularity of service-oriented architectures (SOA) of web services has been made possible by the rise of containerization [21, 70, 92, 108] and container-orchestration frameworks [19, 20, 90, 119] that enable modular, low-overhead, low-cost, elastic, and high-efficiency development and production deployment of SOA microservices [8, 9, 33, 34, 46, 68, 77, 89, 104]. A deployment of such microservices can be visualized as a service dependency graph or an execution history graph. The performance of a user request, i.e., its end-to-end latency, is determined by the critical path of its execution history graph.

Figure 2: Microservices overview: (a) Service dependency graph of Social Network from the DeathStarBench [34] benchmark; (b) Execution history graph of a post-compose request in the same microservice. [Legend for (b): N = Nginx, V = video, U = userTag, I = uniqueID, T = text, C = composePost, W = writeTimeline (run in background); s = send, r = receive; CP1, CP2, and CP3 mark three critical paths, with requests issued in sequence and in parallel.]

Figure 3: Distributions of end-to-end latencies of different microservices in the DeathStarBench [34] and Train-Ticket [116] benchmarks. Dashed and solid lines correspond to the minimum and maximum critical path latencies on serving a request. [Panels: (a) Social network service; (b) Media service; (c) Hotel reservation service; (d) Train-ticket booking service. Each panel plots the CDF against latency (ms) for Max-CP and Min-CP.]

Definition 2.1. A service dependency graph captures communication-based dependencies (the edges of the graph) between microservice instances (the vertices of the graph), such as remote procedure calls (RPCs). It tells how requests are flowing among microservices by following parent-child relationship chains. Fig. 2(a) shows the service dependency graph of the Social Network microservice benchmark [34]. Each user request traverses a subset of vertices in the graph. For example, in Fig. 2(a), post-compose requests traverse only those microservices highlighted in darker yellow.

Definition 2.2. An execution history graph is the space-time diagram of the distributed execution of a user request, where a vertex is one of send_req, recv_req, and compute, and edges represent the RPC invocations corresponding to send_req and recv_req. The graph is constructed using the global view of execution provided by distributed tracing of all involved microservices. For example, Fig. 2(b) shows the execution history graph for the user request in Fig. 2(a).

Definition 2.3. The critical path (CP) to a microservice m in the execution history graph of a request is the path of maximal duration that starts with the client request and ends with m [64, 125]. When we mention CP alone without the target microservice m, it means the critical path of the “Service Response” to the client (see Fig. 2(b)), i.e., end-to-end latency.
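Read as a graph problem, Definition 2.3 asks for a maximal-duration path in a directed acyclic graph. The sketch below is one simple way to compute it with memoized depth-first search. It is a simplification, not FIRM's extraction algorithm: vertices are whole services with a single duration rather than send_req/recv_req/compute events, and the graph shape and durations are hypothetical (they only borrow the service letters from Fig. 2).

```python
from functools import lru_cache


def critical_path(durations, successors, source, target):
    """Return (total_duration, path) for the longest source -> target path.

    durations:  node -> time spent in that node (ms)
    successors: node -> nodes that start after it completes (must form a DAG)
    Returns None when target is unreachable from source.
    """
    @lru_cache(maxsize=None)
    def best_from(node):
        if node == target:
            return durations[node], (node,)
        best = None
        for nxt in successors.get(node, ()):
            sub = best_from(nxt)
            if sub is not None and (best is None or sub[0] > best[0]):
                best = sub
        if best is None:
            return None  # no path from this node to the target
        return durations[node] + best[0], (node,) + best[1]

    return best_from(source)


# Hypothetical request: N fans out to V, U, and T; U calls I; all join at C.
successors = {"N": ["V", "U", "T"], "U": ["I"], "V": ["C"], "I": ["C"], "T": ["C"]}
durations = {"N": 10, "V": 100, "U": 40, "I": 20, "T": 70, "C": 30}

baseline = critical_path(durations, successors, "N", "C")

# Contention on T inflates its duration and moves the critical path.
contended = dict(durations, T=150)
shifted = critical_path(contended, successors, "N", "C")

print(baseline)
print(shifted)
```

The second call shows that the critical path depends on per-service durations and not only on the graph structure, so it has to be recomputed as those durations change.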

To understand SLO violation characteristics and study the relationship between runtime performance and the underlying resource contention, we have run extensive performance anomaly injection experiments on widely used microservice benchmarks (i.e., DeathStarBench [34] and Train-Ticket [116]) and collected around 2 TB of raw tracing data (over 4.1 × 10^7 traces). Our key insights are as follows.

Insight 1: Dynamic Behavior of CPs. In microservices, the latency of the CP limits the overall latency of a user request in a microservice. However, CPs do not remain static over the execution of requests in microservices, but rather change dynamically based on the performance of individual service instances because of underlying shared-resource contention and their sensitivity to this interference. Though other causes may also lead to CP evolution in real time (e.g., distributed rate limiting [86] and cacheability of requested data [2]), CP evolution can still be used as an efficient manifestation of resource interference.

For example, in Fig. 2(b), we show the existence of three different CPs (i.e., CP1–CP3) depending on which microservice (i.e., V, U, T) encounters resource contention. We artificially create resource contention by using performance anomaly injections (see footnote 1). Table 1 lists the changes observed in the latencies of individual microservices, as well as end-to-end latency. We observe as much as 1.2–2× variation in end-to-end latency across the three CPs. Such dynamic behavior exists across all our benchmark microservices. Fig. 3 illustrates the latency distributions of CPs with minimum and maximum latency in each microservice benchmark, where we observe as much as 1.6× difference in median latency and 2.5× difference in 99th percentile tail latency across these CPs.

Table 1: CP changes in Fig. 2(b) under performance anomaly injection. Each case is represented by a <service,CP> pair. N, V, U, I, T, and C are microservices from Fig. 2. Columns N through C give the average individual latency (ms).

| Case | N | V | U | I | T | C | Total (ms) |
|---|---|---|---|---|---|---|---|
| <V,CP1> | 13 | 603 | 166 | 33 | 71 | 68 | 614 ± 106 |
| <U,CP2> | 14 | 237 | 537 | 39 | 62 | 89 | 580 ± 113 |
| <T,CP3> | 13 | 243 | 180 | 35 | 414 | 80 | 507 ± 75 |

Figure 4: Improvement of end-to-end latency by scaling “highest-variance” and “highest-median” microservices. [Left: CDF of individual latency (ms) for Text and Compose. Right: CDF of total latency (ms) for Before, Text, and Compose.]

Recent approaches (e.g., [3, 47]) have explored static identification of CPs based on historic data (profiling) and have built heuristics (e.g., application placement, level of parallelism) to enable autoscaling to minimize CP latency. However, our experiment shows that this by itself is not sufficient. The requirement is to adaptively capture changes in the CPs, in addition to changing resource allocations to microservice instances on the identified CPs to mitigate tail latency spikes.

Insight 2: Microservices with Larger Latency Are Not Necessarily Root Causes of SLO Violations. It is important to find the microservices responsible for SLO violations to mitigate them. While it is clear that such microservices will always lie on the CP, it is less clear which individual service on the CP is the culprit. A common heuristic is to pick the one with the highest latency. However, we find that that rarely leads to the optimal solution. Consider Fig. 4. The left side shows the CDF of the latencies of two services (i.e., composePost and text) on the CP of the post-compose request in the Social Network benchmark. The composePost service has a higher median/mean latency while the text service has a higher variance. Now, although the composePost service contributes a larger portion of the total latency, it does not benefit from scaling (i.e., getting more resources), as it does not have resource contention. That phenomenon is shown on the right side of Fig. 4, which shows the end-to-end latency for the original configuration (labeled “Before”) and after the two microservices were scaled from a single to two containers each (labeled “Text” and “Compose”). Hence, scaling microservices with higher variances provides better performance gain.

Figure 5: Dynamic behavior of mitigation strategies: Social Network (top); Train-Ticket Booking (bottom). Error bars show 95% confidence intervals on median latencies. [Plot: end-to-end latency (us) against load (# requests/s); legend entries: Scale Up, Scale Out, CPU, Memory.]

Footnote 1: Performance anomaly injections (§3.6) are used to trigger SLO violations by generating fine-grained resource contention with configurable resource types, intensity, duration, timing, and patterns, which helps with both our characterization (§2) and ML model training (§3.4).
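The contrast between the two selection heuristics can be made concrete with a few lines of code. The sketch below ranks services on a critical path either by median latency or by latency variance. The sample values are invented to mimic the qualitative shape of Fig. 4 (composePost: higher median, low spread; text: lower median, high spread), and the ranking is a plain heuristic for illustration, not FIRM's SVM-based localization.

```python
from statistics import median, pvariance


def rank_candidates(samples, key):
    """Order services from most to least suspicious under one statistic.

    samples: service name -> list of per-request latencies (ms)
    key:     "median" or "variance"
    """
    stat = {"median": median, "variance": pvariance}[key]
    return sorted(samples, key=lambda name: stat(samples[name]), reverse=True)


# Hypothetical per-request latencies (ms) for two services on the same CP.
samples = {
    "composePost": [78, 80, 81, 79, 82, 80],  # slow but stable
    "text": [45, 95, 50, 100, 48, 90],        # faster on median, highly variable
}

by_median = rank_candidates(samples, "median")
by_variance = rank_candidates(samples, "variance")

print("highest-median first:  ", by_median)
print("highest-variance first:", by_variance)
```

With these assumed samples the two heuristics disagree on which service to scale first, which is exactly the situation in which picking the highest-latency service would spend resources on a service that is not contended.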

Insight 3: Mitigation Policies Vary with User Load and Resource in Contention. The only way to mitigate the effects of dynamically changing CPs, which in turn cause dynamically changing latencies and tail behaviors, is to efficiently identify microservice instances on the CP that are resource-starved or contending for resources and then provide them with more of the resources. Two common ways of doing so are (a) to scale out by spinning up a new instance of the container on another node of the compute cluster, or (b) to scale up by providing more resources to the container via either explicitly partitioning resources (e.g., in the case of memory bandwidth or last-level cache) or granting more resources to an already deployed container of the microservice (e.g., in the case of CPU cores).

As described before, recent approaches [23, 38, 39, 56, 65, 84, 94, 101, 127] address the problem by building static policies (e.g., AIMD for controlling resource limits [38, 101], and rule/heuristics-based scaling relying on profiling of historic data about a workload [23, 94]), or modeling performance [39, 56]. However, we found in our experiments with the four microservice benchmarks that such static policies are not well-suited for dealing with latency-critical workloads because the optimal policy must incorporate dynamic contextual information. That is, information about the type of user requests, and load (in requests per second), as well as the critical resource bottlenecks (i.e., the resource being contended for), must be jointly analyzed to make optimal decisions. For example, in Fig. 5 (top), we observe that the trade-off between
