# Deep Learning for Multi-Label Text Classification

[](https://www.python.org/downloads/) [](https://travis-ci.org/RandolphVI/Multi-Label-Text-Classification) [](https://www.codacy.com/app/chinawolfman/Multi-Label-Text-Classification?utm_source=github.com&utm_medium=referral&utm_content=RandolphVI/Multi-Label-Text-Classification&utm_campaign=Badge_Grade) [](https://www.apache.org/licenses/LICENSE-2.0) [](https://github.com/RandolphVI/Multi-Label-Text-Classification/issues)

This repository is my research project, and it is also a study of TensorFlow, Deep Learning (Fasttext, CNN, LSTM, etc.).

The main objective of the project is to solve the multi-label text classification problem based on Deep Neural Networks. Thus, the format of the data label is like [0, 1, 0, ..., 1, 1] according to the characteristics of such a problem.

## Requirements

- Python 3.6

- Tensorflow 1.15.0

- Tensorboard 1.15.0

- Sklearn 0.19.1

- Numpy 1.16.2

- Gensim 3.8.3

- Tqdm 4.49.0

## Project

The project structure is below:

```text

.

âââ Model

â  âââ test_model.py

â  âââ text_model.py

â  âââ train_model.py

âââ data

â  âââ word2vec_100.model.* [Need Download]

â  âââ Test_sample.json

â  âââ Train_sample.json

â  âââ Validation_sample.json

âââ utils

â  âââ checkmate.py

â  âââ data_helpers.py

â  âââ param_parser.py

âââ LICENSE

âââ README.md

âââ requirements.txt

```

## Innovation

### Data part

1. Make the data support **Chinese** and English (Can use `jieba` or `nltk` ).

2. Can use **your pre-trained word vectors** (Can use `gensim`).

3. Add embedding visualization based on the **tensorboard** (Need to create `metadata.tsv` first).

### Model part

1. Add the correct **L2 loss** calculation operation.

2. Add **gradients clip** operation to prevent gradient explosion.

3. Add **learning rate decay** with exponential decay.

4. Add a new **Highway Layer** (Which is useful according to the model performance).

5. Add **Batch Normalization Layer**.

### Code part

1. Can choose to **train** the model directly or **restore** the model from the checkpoint in `train.py`.

2. Can predict the labels via **threshold** and **top-K** in `train.py` and `test.py`.

3. Can calculate the evaluation metrics --- **AUC** & **AUPRC**.

4. Can create the prediction file which including the predicted values and predicted labels of the Testset data in `test.py`.

5. Add other useful data preprocess functions in `data_helpers.py`.

6. Use `logging` for helping to record the whole info (including **parameters display**, **model training info**, etc.).

7. Provide the ability to save the best n checkpoints in `checkmate.py`, whereas the `tf.train.Saver` can only save the last n checkpoints.

## Data

See data format in `/data` folder which including the data sample files. For example:

```json

{"testid": "3935745", "features_content": ["pore", "water", "pressure", "metering", "device", "incorporating", "pressure", "meter", "force", "meter", "influenced", "pressure", "meter", "device", "includes", "power", "member", "arranged", "control", "pressure", "exerted", "pressure", "meter", "force", "meter", "applying", "overriding", "force", "pressure", "meter", "stop", "influence", "force", "meter", "removing", "overriding", "force", "pressure", "meter", "influence", "force", "meter", "resumed"], "labels_index": [526, 534, 411], "labels_num": 3}

```

- **"testid"**: just the id.

- **"features_content"**: the word segment (after removing the stopwords)

- **"labels_index"**: The label index of the data records.

- **"labels_num"**: The number of labels.

### Text Segment

1. You can use `nltk` package if you are going to deal with the English text data.

2. You can use `jieba` package if you are going to deal with the Chinese text data.

### Data Format

This repository can be used in other datasets (text classification) in two ways:

1. Modify your datasets into the same format of [the sample](https://github.com/RandolphVI/Multi-Label-Text-Classification/blob/master/data).

2. Modify the data preprocessing code in `data_helpers.py`.

Anyway, it should depend on what your data and task are.

**ð¤Before you open the new issue about the data format, please check the `data_sample.json` and read the other open issues first, because someone maybe ask me the same question already. For example:**

- [è¾å

¥æ件çæ ¼å¼æ¯ä»ä¹æ ·åçï¼](https://github.com/RandolphVI/Multi-Label-Text-Classification/issues/1)

- [Where is the dataset for training?](https://github.com/RandolphVI/Multi-Label-Text-Classification/issues/7)

- [å¨ data_helpers.py ä¸ç content.txt ä¸ metadata.tsv æ¯ä»ä¹ï¼å

·ä½æ ¼å¼æ¯ä»ä¹ï¼è½å¦æä¾ä¸ä¸ªæ ·ä¾ï¼](https://github.com/RandolphVI/Multi-Label-Text-Classification/issues/12)

### Pre-trained Word Vectors

**You can download the [Word2vec model file](https://drive.google.com/file/d/1S33iejwuQOIaNQfXW7fA_6zBwHHClT--/view?usp=sharing) (dim=100). Make sure they are unzipped and under the `/data` folder.**

You can pre-training your word vectors (based on your corpus) in many ways:

- Use `gensim` package to pre-train data.

- Use `glove` tools to pre-train data.

- Even can use a **fasttext** network to pre-train data.

## Usage

See [Usage](https://github.com/RandolphVI/Multi-Label-Text-Classification/blob/master/Usage.md).

## Network Structure

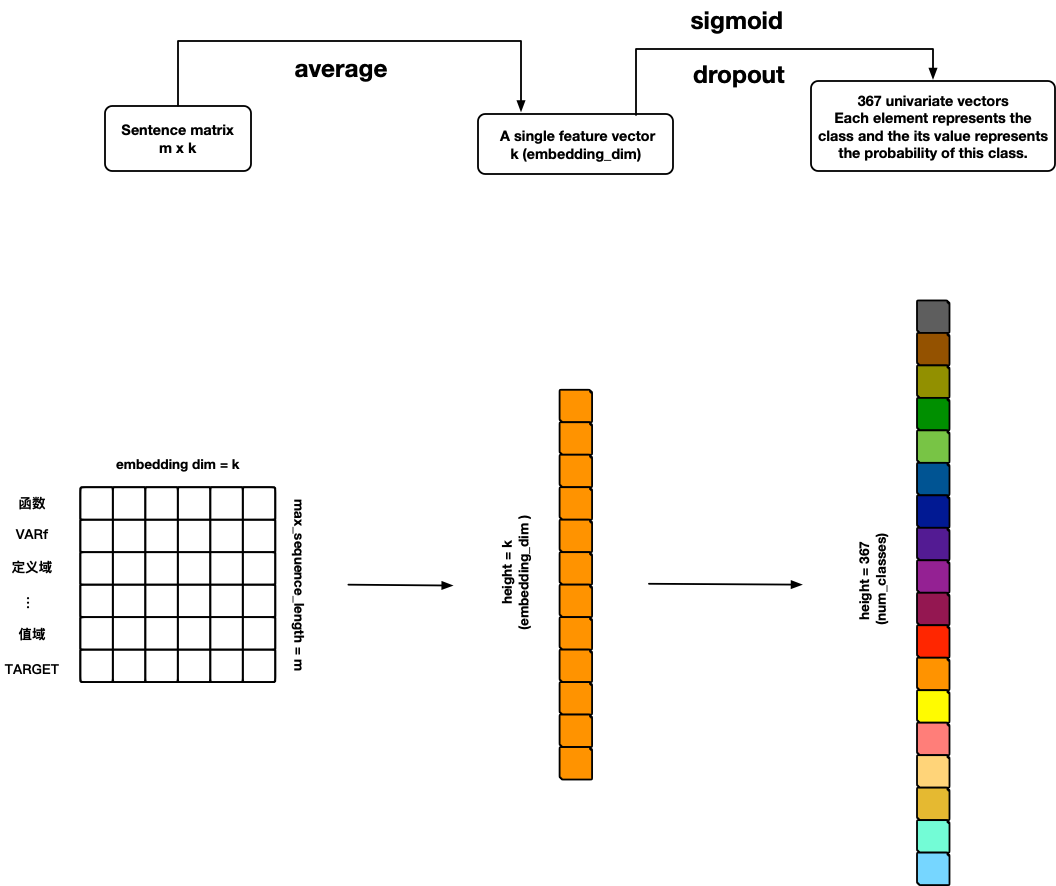

### FastText

References:

- [Bag of Tricks for Efficient Text Classification](https://arxiv.org/pdf/1607.01759.pdf)

---

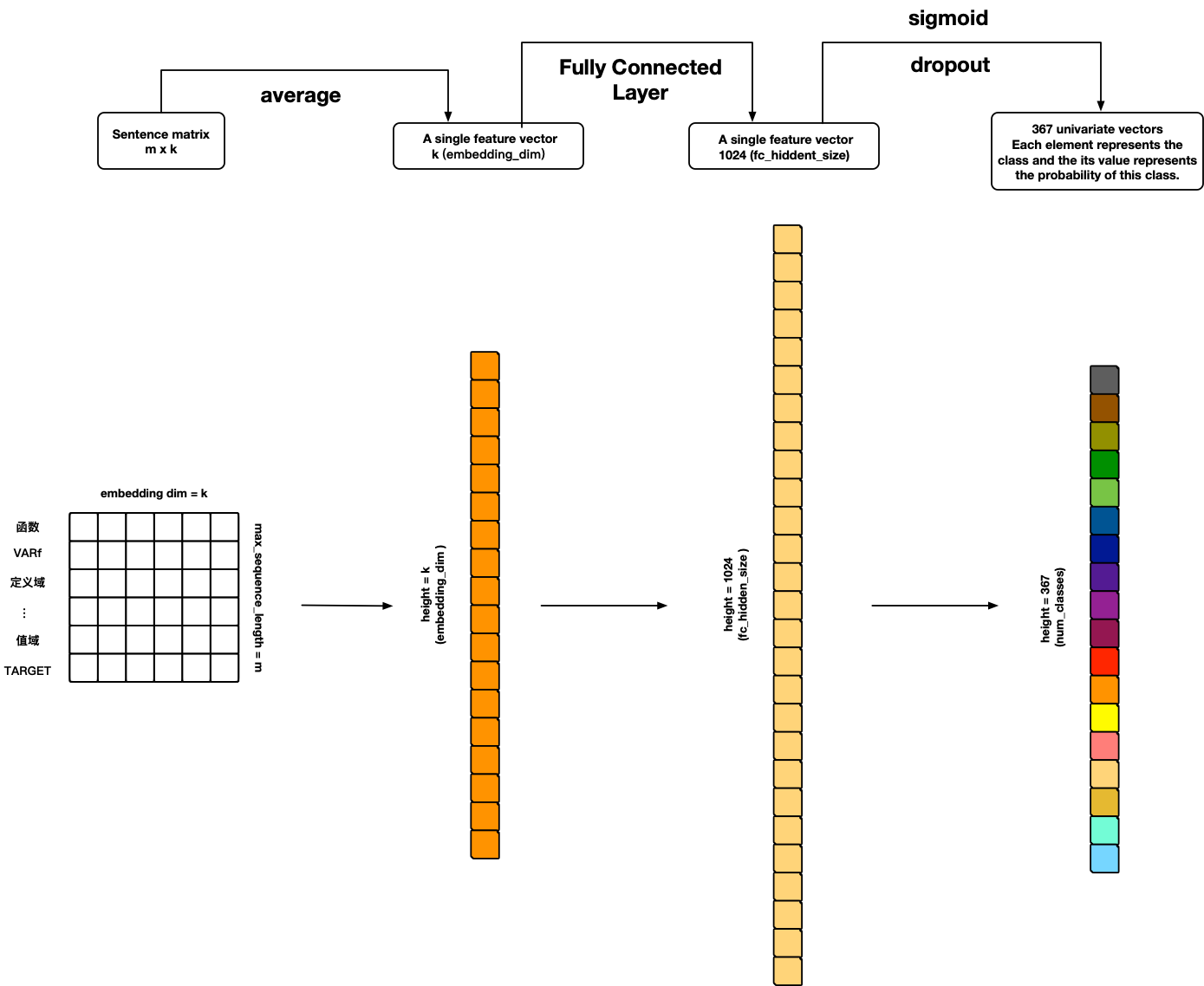

### TextANN

References:

- **Personal ideas ð**

---

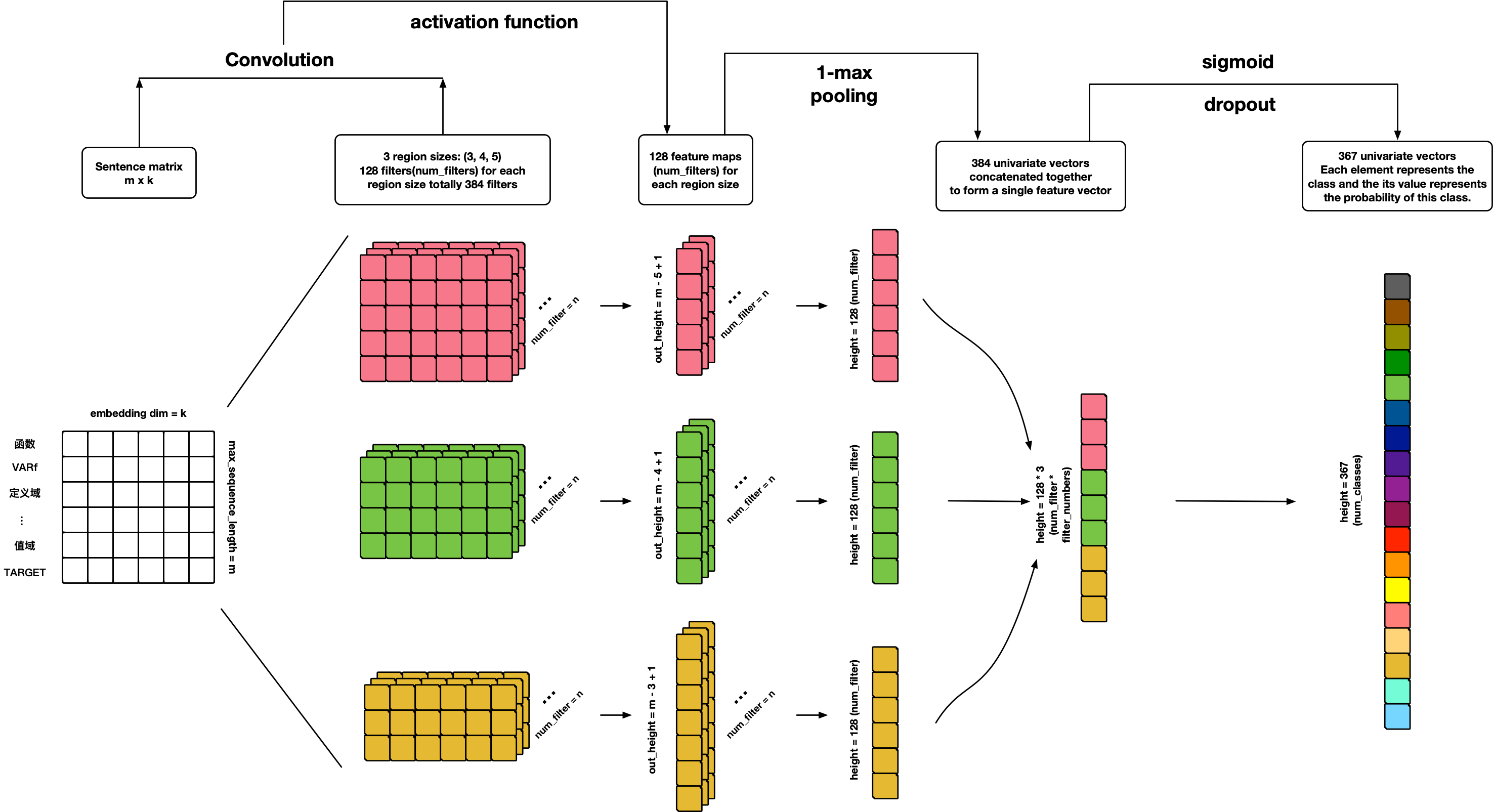

### TextCNN

References:

- [Convolutional Neural Networks for Sentence Classification](http://arxiv.org/abs/1408.5882)

- [A Sensitivity Analysis of (and Practitioners' Guide to) Convolutional Neural Networks for Sentence Classification](http://arxiv.org/abs/1510.03820)

---

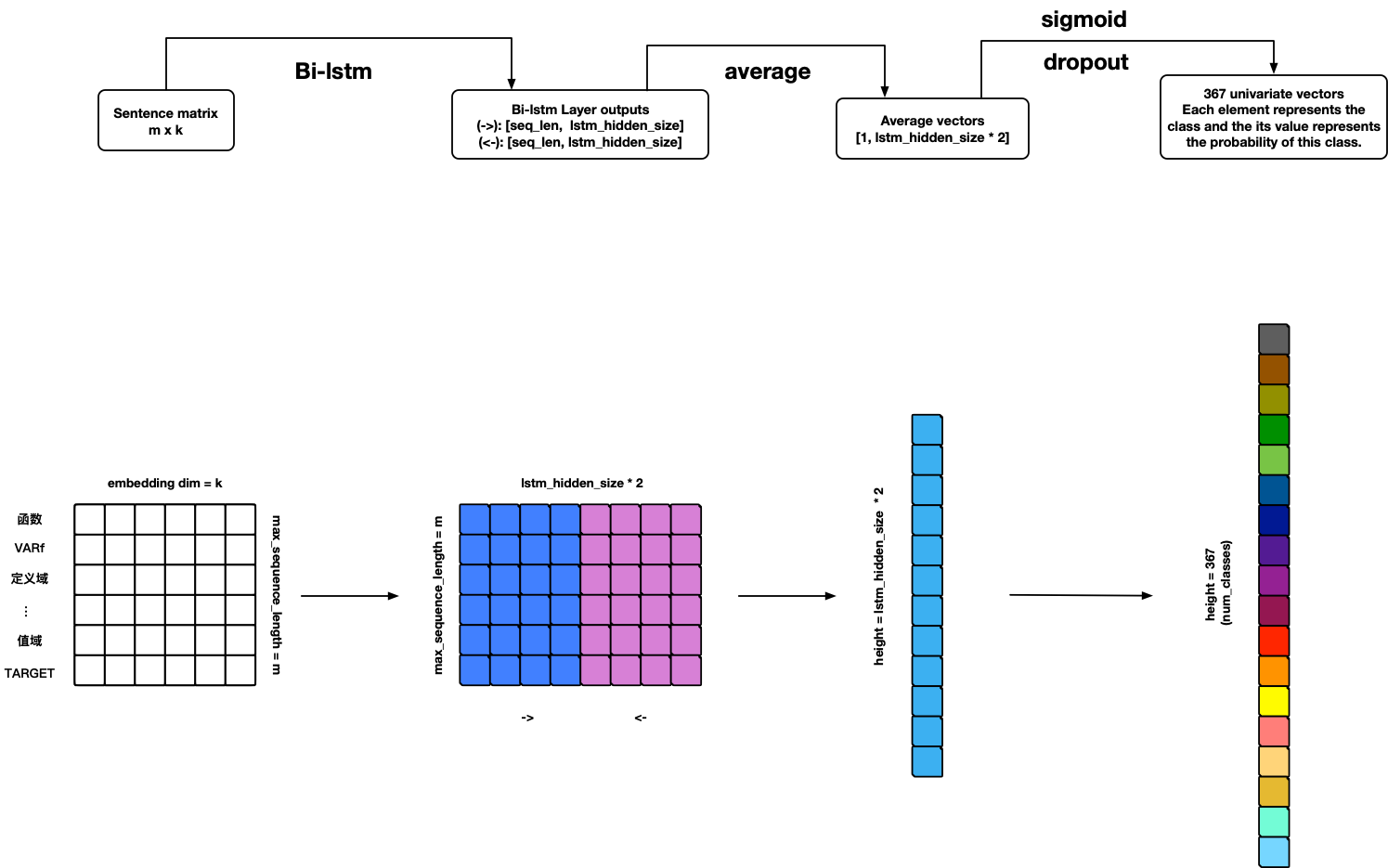

### TextRNN

**Warning: Model can use but not finished yet ð¤ª!**

#### TODO

1. Add BN-LSTM cell unit.

2. Add attention.

References:

- [Recurrent Neural Network for Text Classification with Multi-Task Learning](http://www.aaai.org/ocs/index.php/AAAI/AAAI15/paper/download/9745/9552)

---

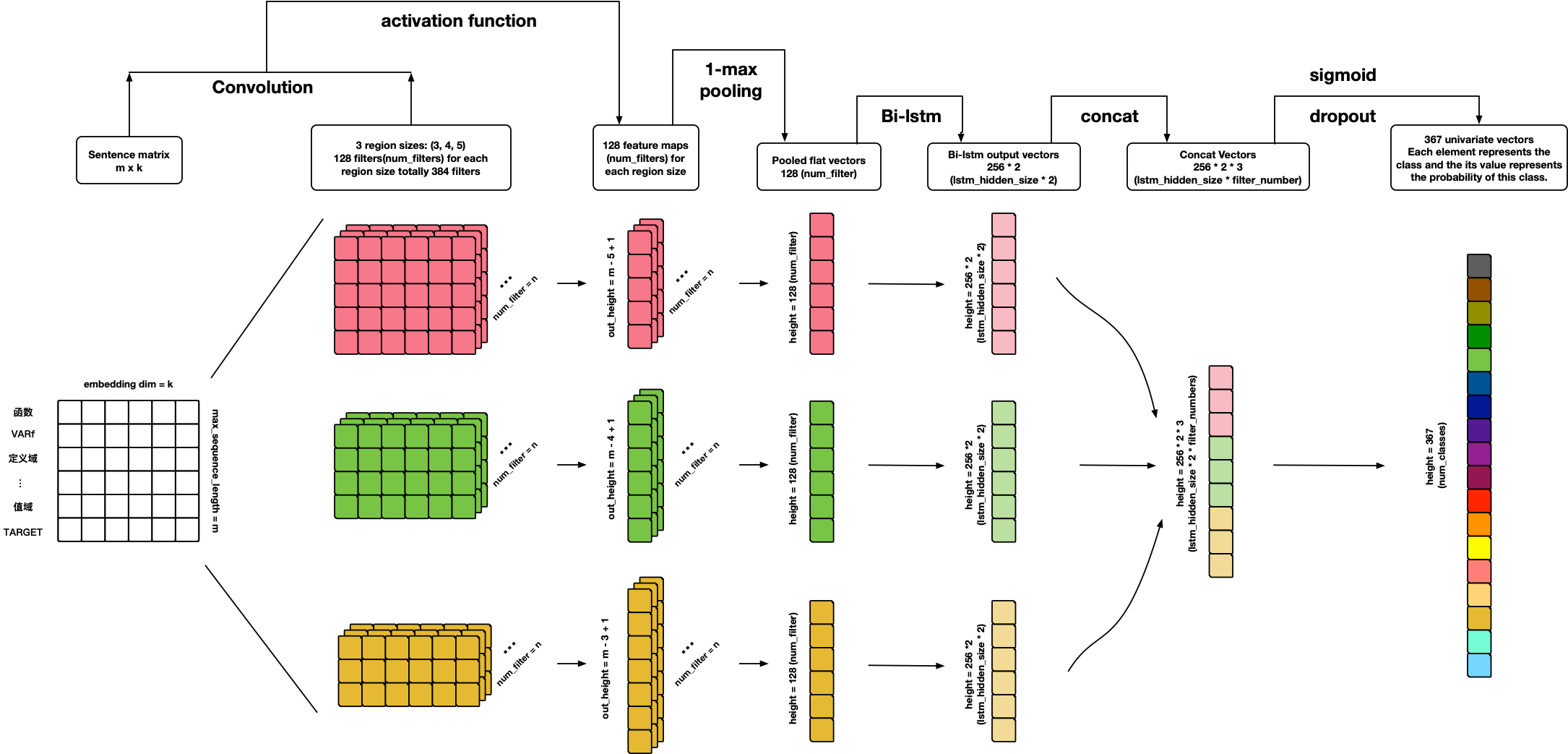

### TextCRNN

References:

- **Personal ideas ð**

---

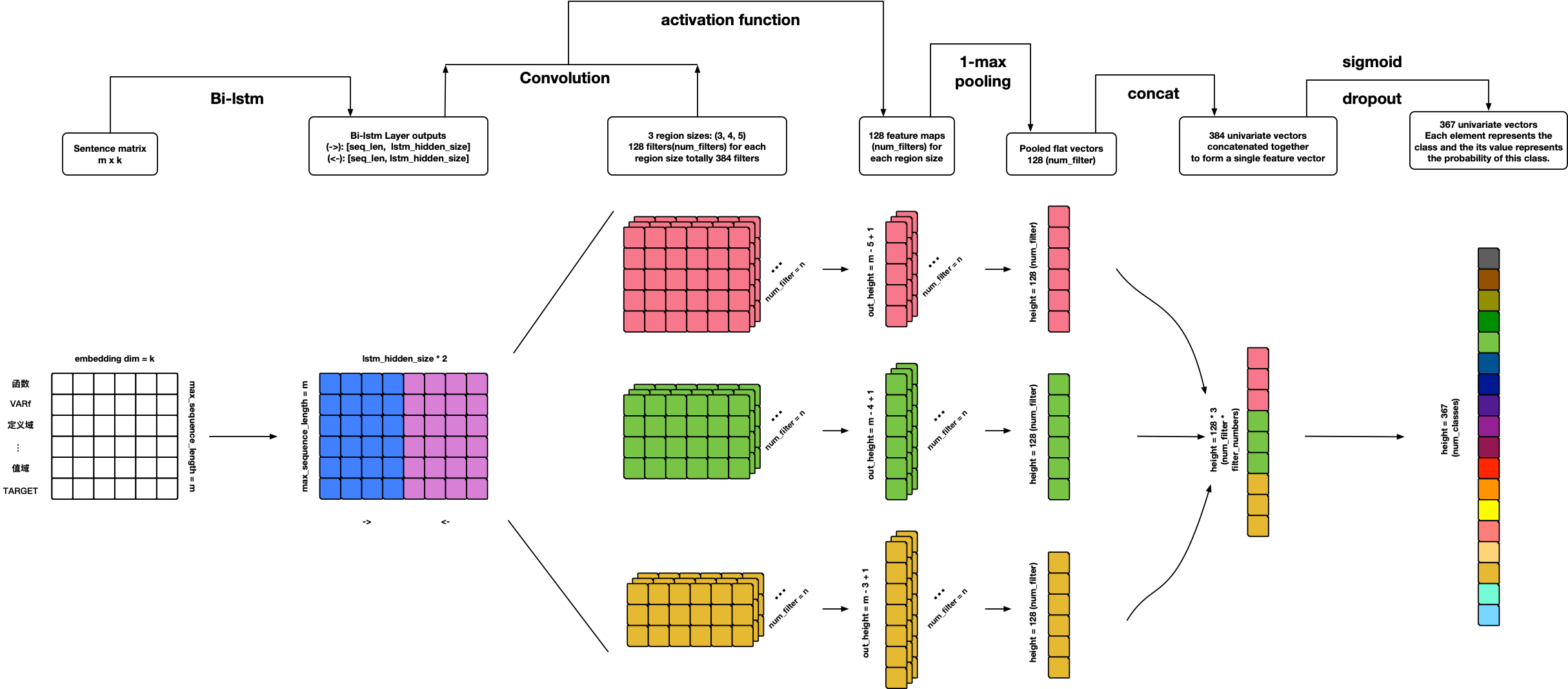

### TextRCNN

References:

- **Personal ideas ð**

---

### TextHAN

References:

- [Hierarchical Attention Networks for Document Classification](https://www.cs.cmu.edu/~diyiy/docs/naacl16.pdf)

---

### TextSANN

**Warning: Model can use but not finished yet ð¤ª!**

#### TODO

1. Add attention penalization loss.

2. Add visualization.

References:

- [A STRUCTURED SELF-ATTENTIVE SENTENCE EMBEDDING](https://arxiv.org/pdf/1703.03130.pdf)

---

## About Me

é»å¨ï¼Randolph

SCU SE Bachelor; USTC CS Ph.D.

Email: chinawolfman@hotmail.com

My Blog: [randolph.pro](http://randolph.pro)

LinkedIn: [randolph's linkedin](https://www.linkedin.com/in/randolph-%E9%BB%84%E5%A8%81/)

基于fasttext的文本多分类算法.zip

版权申诉

188 浏览量

2023-12-20

11:53:01

上传

评论

收藏 97KB ZIP 举报

小黑码蚁

- 粉丝: 2414

- 资源: 2082

最新资源

- 论文(最终)_20240430235101.pdf

- 基于python编写的Keras深度学习框架开发,利用卷积神经网络CNN,快速识别图片并进行分类

- 最全空间计量实证方法(空间杜宾模型和检验以及结果解释文档).txt

- 5uonly.apk

- 蓝桥杯Python组的历年真题

- 2023-04-06-项目笔记 - 第一百十九阶段 - 4.4.2.117全局变量的作用域-117 -2024.04.30

- 2023-04-06-项目笔记 - 第一百十九阶段 - 4.4.2.117全局变量的作用域-117 -2024.04.30

- 前端开发技术实验报告:内含4四实验&实验报告

- Highlight Plus v20.0.1

- 林周瑜-论文.docx

资源上传下载、课程学习等过程中有任何疑问或建议,欢迎提出宝贵意见哦~我们会及时处理!

点击此处反馈