ZHANG AND LI: MOEA/D: A MULTIOBJECTIVE EVOLUTIONARY ALGORITHM BASED ON DECOMPOSITION 713

originally designed for scalar optimization, cannot be directly

used in nondecomposition MOEAs. Arguably, if there is a

fitness assignment scheme for assigning an individual solution

a relative fitness value to reflect its utility for selection, then

scalar optimization EAs can be readily extended for dealing

with MOPs, although other techniques such as mating restric-

tion [20], diversity maintaining [21], some properties of MOPs

[22], and external populations [23] may also be needed for

enhancing the performances of these extended algorithms. For

this reason, fitness assignment has been a major issue in current

MOEA research. The popular fitness assignment strategies

include alternating objectives-based fitness assignment such

as the vector evaluation genetic algorithm (VEGA) [24], and

domination-based fitness assignment such as Pareto archived

evolutionary strategy (PAES) [14], strength Pareto evolutionary

algorithm II (SPEA-II) [15], and nondominated sorting genetic

algorithm II (NSGA-II) [16].

The idea of decomposition has been used to a certain extent

in several metaheuristics for MOPs [25]–[29]. For example, the

two-phase local search (TPLS) [25] considers a set of scalar op-

timization problems, in which the objectives are aggregations

of the objectives in the MOP under consideration, a scalar opti-

mization algorithm is applied to these scalar optimization prob-

lems in a sequence based on aggregation coefficients, a solution

obtained in the previous problem is set as a starting point for

solving the next problem since its aggregation objective is just

slightly different from that in the previous one. The multiob-

jective genetic local search (MOGLS) aims at simultaneous op-

timization of all aggregations constructed by the weighted sum

approach or Tchebycheff approach [29]. At each iteration, it op-

timizes a randomly generated aggregation objective.

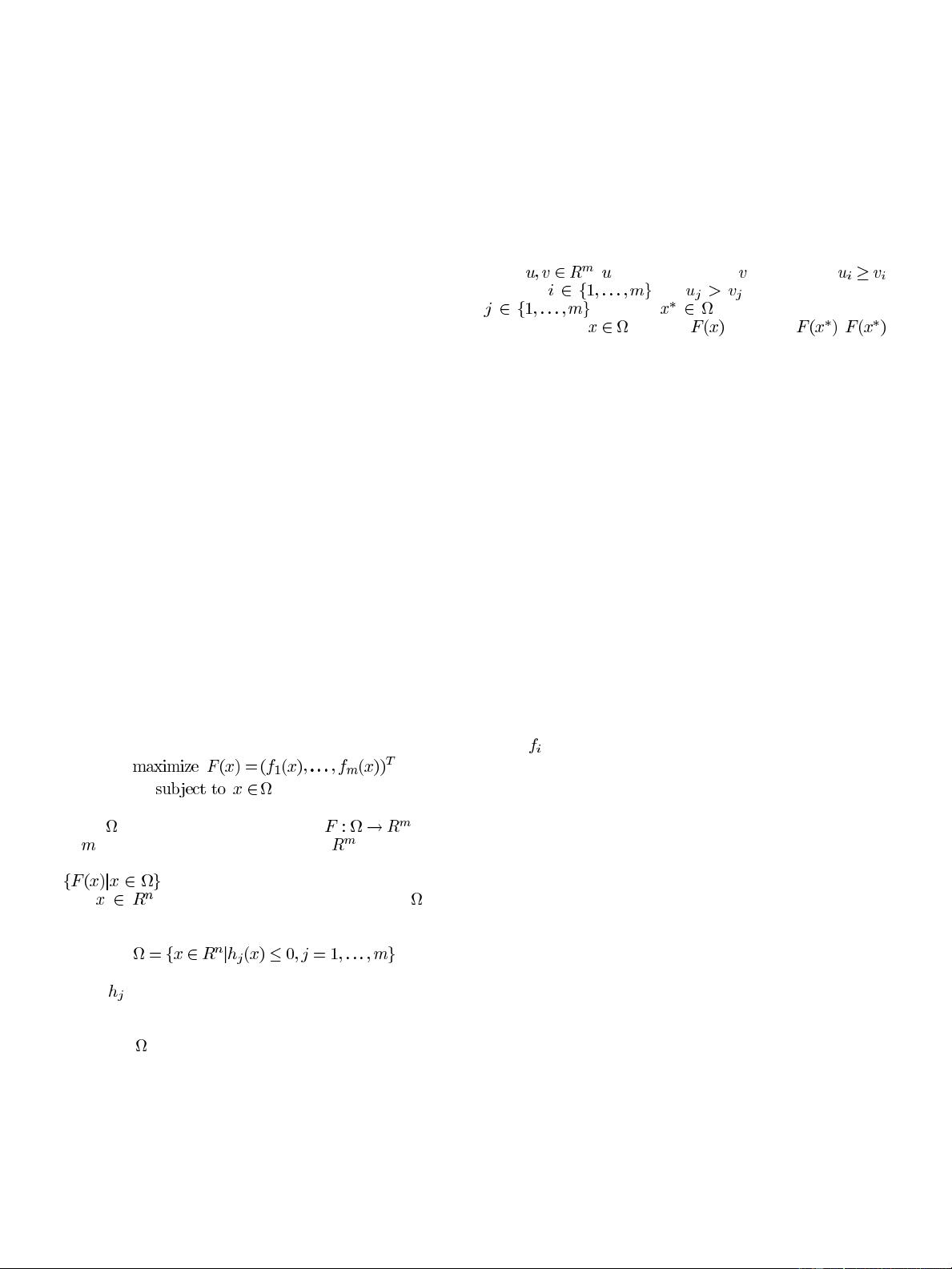

In this paper, we propose a newmultiobjective evolutionary al-

gorithm based on decomposition (MOEA/D). MOEA/D explic-

itlydecomposestheMOP(1) into

scalaroptimization subprob-

lems. It solves these subproblems simultaneously by evolving

a population of solutions. At each generation, the population is

composed of the best solution found so far (i.e. since the start of

the run of the algorithm) for each subproblem. The neighborhood

relations among these subproblems are defined based on the dis-

tances between their aggregation coefficientvectors. The optimal

solutions to twoneighboring subproblems should be very similar.

Each subproblem (i.e., scalar aggregation function) is optimized

in MOEA/D by using information only from its neighboring sub-

problems. MOEA/D has the following features.

• MOEA/D provides a simple yet efficient way of intro-

ducing decomposition approaches into multiobjective evo-

lutionary computation. A decomposition approach, often

developed in the community of mathematical program-

ming, can be readily incorporated into EAs in the frame-

work MOEA/D for solving MOPs.

• Since MOEA/D optimizes

scalar optimization problems

rather than directly solving a MOP as a whole, issues such

as fitness assignment and diversity maintenance that cause

difficulties for nondecomposition MOEAS could become

easier to handle in the framework of MOEA/D.

• MOEA/D has lower computational complexity at each

generation than NSGA-II and MOGLS. Overall, MOEA/D

outperforms, in terms of solution quality, MOGLS on 0–1

multiobjective knapsack test instances when both algo-

rithms use the same decomposition approach. MOEA/D

with the Tchebycheff decomposition approach performs

similarly to NSGA-II on a set of continuous MOP test

instances. MOEA/D with an advanced decomposition

approach performs much better than NSGA-II on 3-ob-

jective continuous test instances. MOEA/D using a small

population is able to produce a small number of very

evenly distributed solutions.

• Objective normalization techniques can be incorporated

into MOEA/D for dealing with disparately scaled objec-

tives.

• It is very natural to use scalar optimization methods in

MOEA/D since each solution is associated with a scalar

optimization problem. In contrast, one of the major short-

comings of nondecomposition MOEAs is that there is no

easy way for them to take the advantage of scalar optimiza-

tion methods.

This paper is organized as follows. Section II introduces

three decomposition approaches for MOPs. Section III presents

MOEA/D. Sections IV and V compare MOEA/D with MOGLS

and NSGA-II and show that MOEA/D outperforms or performs

similarly to MOGLS and NSGA-II. Section VI presents more

experimental studies on MOEA/D. Section VII concludes this

paper.

II. D

ECOMPOSITION OF

MULTIOBJECTIVE OPTIMIZATION

There are several approaches for converting the problem of

approximation of the PF into a number of scalar optimization

problems. In the following, we introduce three approaches,

which are used in our experimental studies.

A. Weighted Sum Approach [1]

This approach considers a convex combination of the dif-

ferent objectives. Let

be a weight vector,

i.e.,

for all and . Then, the

optimal solution to the following scalar optimization problem:

(2)

is a Pareto optimal point to (1),

2

where we use

to em-

phasize that

is a coefficient vector in this objective function,

while

is the variables to be optimized. To generate a set of

different Pareto optimal vectors, one can use different weight

vectors

in the above scalar optimization problem. If the PF

is concave (convex in the case of minimization), this approach

could work well. However, not every Pareto optimal vector can

be obtained by this approach in the case of nonconcave PFs.

To overcome these shortcomings, some effort has been made to

incorporate other techniques such as

-constraint into this ap-

proach, more details can be found in [1].

B. Tchebycheff Approach [1]

In this approach, the scalar optimization problem is in the

form

(3)

where

is the reference point, i.e.,

3

for each . For each Pareto

2

If (1) is for minimization, “maximize” in (2) should be changed to “mini-

mize.”

3

In the case when the goal of (1) is minimization,

z

=min

f

f

(

x

)

j

x

2

g

.

Authorized licensed use limited to: Northeastern University. Downloaded on November 02,2020 at 14:30:56 UTC from IEEE Xplore. Restrictions apply.

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

前往需求广场,查看用户热搜

前往需求广场,查看用户热搜

信息提交成功

信息提交成功