[Readme in Chinese](https://github.com/code4craft/webmagic/tree/master/README-zh.md)

[Build Status](https://travis-ci.org/code4craft/webmagic)

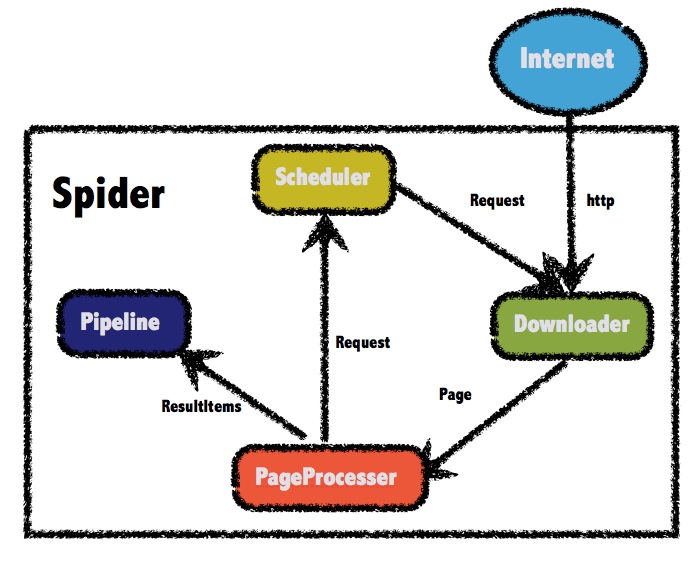

> A scalable crawler framework. It covers the whole lifecycle of a crawler: downloading, URL management, content extraction, and persistence. It simplifies the development of a specific crawler.

## Features:

* Simple core with high flexibility.

* Simple API for HTML extraction.

* Customize a crawler with annotated POJOs; no configuration required.

* Multi-threading and distributed crawling support.

* Easy to integrate.

## Install:

Add dependencies to your pom.xml:

```xml
<dependency>
    <groupId>us.codecraft</groupId>
    <artifactId>webmagic-core</artifactId>
    <version>0.6.1</version>
</dependency>
<dependency>
    <groupId>us.codecraft</groupId>
    <artifactId>webmagic-extension</artifactId>
    <version>0.6.1</version>
</dependency>
```

WebMagic uses slf4j with the slf4j-log4j12 implementation. If you use your own slf4j implementation, exclude slf4j-log4j12 by adding the following inside the WebMagic `<dependency>` element:

```xml
<exclusions>
    <exclusion>
        <groupId>org.slf4j</groupId>
        <artifactId>slf4j-log4j12</artifactId>
    </exclusion>
</exclusions>
```

## Get Started:

### First crawler:

Write a class that implements `PageProcessor`. For example, here is a crawler that collects GitHub repository information.

```java
import us.codecraft.webmagic.Page;
import us.codecraft.webmagic.Site;
import us.codecraft.webmagic.Spider;
import us.codecraft.webmagic.processor.PageProcessor;

public class GithubRepoPageProcessor implements PageProcessor {

    // Retry failed downloads up to 3 times and wait 1000 ms between requests.
    private Site site = Site.me().setRetryTimes(3).setSleepTime(1000);

    @Override
    public void process(Page page) {
        // Queue every link that looks like a repository page.
        page.addTargetRequests(page.getHtml().links().regex("(https://github\\.com/\\w+/\\w+)").all());
        // Extract the fields to store for this page.
        page.putField("author", page.getUrl().regex("https://github\\.com/(\\w+)/.*").toString());
        page.putField("name", page.getHtml().xpath("//h1[@class='public']/strong/a/text()").toString());
        if (page.getResultItems().get("name") == null) {
            // Not a repository page: skip it.
            page.setSkip(true);
        }
        page.putField("readme", page.getHtml().xpath("//div[@id='readme']/tidyText()"));
    }

    @Override
    public Site getSite() {
        return site;
    }

    public static void main(String[] args) {
        // Start from one profile page and crawl with 5 threads.
        Spider.create(new GithubRepoPageProcessor()).addUrl("https://github.com/code4craft").thread(5).run();
    }
}
```

* `page.addTargetRequests(links)`

    Adds URLs to the crawl queue.
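
The two regular expressions in `process` do different jobs: one selects repository links to queue, the other captures the owner name from the page URL. The sketch below checks them with plain `java.util.regex`, independent of WebMagic. The class name `GithubUrlPatterns`, its helper methods, and the sample URLs are made up for illustration, and WebMagic's own selectors may differ in detail.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class GithubUrlPatterns {

    // Same pattern the processor passes to links().regex(...).
    static final Pattern REPO_LINK = Pattern.compile("(https://github\\.com/\\w+/\\w+)");

    // Same pattern the processor uses to fill the "author" field.
    static final Pattern AUTHOR = Pattern.compile("https://github\\.com/(\\w+)/.*");

    // Keep only the links that contain an owner/repository path.
    static List<String> repoLinks(List<String> links) {
        List<String> result = new ArrayList<>();
        for (String link : links) {
            Matcher m = REPO_LINK.matcher(link);
            if (m.find()) {
                result.add(m.group(1));
            }
        }
        return result;
    }

    // Return the first path segment of a repository URL, or null if there is none.
    static String author(String url) {
        Matcher m = AUTHOR.matcher(url);
        return m.find() ? m.group(1) : null;
    }

    public static void main(String[] args) {
        List<String> links = Arrays.asList(
                "https://github.com/code4craft/webmagic",
                "https://github.com/code4craft",
                "https://example.com/a/b");
        System.out.println(repoLinks(links));
        System.out.println(author("https://github.com/code4craft/webmagic"));
    }
}
```

Only the first sample link survives the filter, because the profile URL has no repository segment. Note that `\w` does not match hyphens, so owner or repository names containing `-` would need a wider character class.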

You can also define the same crawler with annotations:

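```java
import us.codecraft.webmagic.Site;
import us.codecraft.webmagic.model.ConsolePageModelPipeline;
import us.codecraft.webmagic.model.OOSpider;
import us.codecraft.webmagic.model.annotation.ExtractBy;
import us.codecraft.webmagic.model.annotation.ExtractByUrl;
import us.codecraft.webmagic.model.annotation.HelpUrl;
import us.codecraft.webmagic.model.annotation.TargetUrl;

// TargetUrl: pages to extract from. HelpUrl: pages followed only to discover more links.
@TargetUrl("https://github.com/\\w+/\\w+")
@HelpUrl("https://github.com/\\w+")
public class GithubRepo {

    // notNull = true: a page without this field is discarded.
    @ExtractBy(value = "//h1[@class='public']/strong/a/text()", notNull = true)
    private String name;

    @ExtractByUrl("https://github\\.com/(\\w+)/.*")
    private String author;

    @ExtractBy("//div[@id='readme']/tidyText()")
    private String readme;

    public static void main(String[] args) {
        OOSpider.create(Site.me().setSleepTime(1000),
                        new ConsolePageModelPipeline(), GithubRepo.class)
                .addUrl("https://github.com/code4craft").thread(5).run();
    }
}
```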
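
To give a feel for how annotation-driven extraction can work in principle, here is a minimal reflection sketch in plain Java. It is not WebMagic's implementation: the `FromUrl` annotation, the `Repo` model, and the `bind` method are hypothetical stand-ins that only mimic the idea behind `@ExtractByUrl`, which is to read a rule from a field's annotation and write the matched value into that field.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.lang.reflect.Field;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class AnnotationBindingSketch {

    // Hypothetical annotation: a regex whose first group becomes the field value.
    @Retention(RetentionPolicy.RUNTIME)
    @Target(ElementType.FIELD)
    @interface FromUrl {
        String value();
    }

    // Hypothetical model class, loosely shaped like GithubRepo above.
    static class Repo {
        @FromUrl("https://github\\.com/(\\w+)/.*")
        String author;

        @FromUrl("https://github\\.com/\\w+/(\\w+)")
        String name;
    }

    // Create a model instance and fill each annotated field from the URL.
    static <T> T bind(Class<T> type, String url) throws ReflectiveOperationException {
        T model = type.getDeclaredConstructor().newInstance();
        for (Field field : type.getDeclaredFields()) {
            FromUrl rule = field.getAnnotation(FromUrl.class);
            if (rule == null) {
                continue; // field has no extraction rule
            }
            Matcher m = Pattern.compile(rule.value()).matcher(url);
            if (m.find()) {
                field.setAccessible(true);
                field.set(model, m.group(1));
            }
        }
        return model;
    }

    public static void main(String[] args) throws ReflectiveOperationException {
        Repo repo = bind(Repo.class, "https://github.com/code4craft/webmagic");
        System.out.println(repo.author + "/" + repo.name); // prints code4craft/webmagic
    }
}
```

The appeal of this style is that the extraction rules live next to the fields they fill, so the model class doubles as the crawler definition.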

### Docs and samples:

Documents: [http://webmagic.io/docs/](http://webmagic.io/docs/)

The architecture of WebMagic is modeled on [Scrapy](http://scrapy.org/).

There are more examples in the `webmagic-samples` package.
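
As a rough picture of the lifecycle this README describes (downloading, URL management, content extraction, persistence), the toy loop below runs all four stages against an in-memory map instead of the network. It is a sketch under stated assumptions, not WebMagic's code: the class `CrawlLoopSketch`, the fake `WEB` map, and the `http://site/...` URLs are invented for illustration.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Queue;
import java.util.Set;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class CrawlLoopSketch {

    // Fake "web": URL -> page body, so the sketch needs no network access.
    static final Map<String, String> WEB = new HashMap<>();
    static {
        WEB.put("http://site/a", "A links to http://site/b and http://site/c");
        WEB.put("http://site/b", "B links back to http://site/a");
        WEB.put("http://site/c", "C has no links");
    }

    static final Pattern LINK = Pattern.compile("http://site/\\w+");

    static List<String> crawl(String startUrl) {
        Queue<String> queue = new ArrayDeque<>(); // URL management: pending URLs
        Set<String> seen = new HashSet<>();       // URL management: duplicate removal
        List<String> stored = new ArrayList<>();  // persistence: collected results

        queue.add(startUrl);
        seen.add(startUrl);
        while (!queue.isEmpty()) {
            String url = queue.poll();
            String body = WEB.get(url);           // downloading
            if (body == null) {
                continue;                         // unknown URL: nothing to process
            }
            Matcher m = LINK.matcher(body);       // extraction: discover new links
            while (m.find()) {
                if (seen.add(m.group())) {
                    queue.add(m.group());
                }
            }
            stored.add(url + " -> " + body.length() + " chars"); // persistence
        }
        return stored;
    }

    public static void main(String[] args) {
        for (String line : crawl("http://site/a")) {
            System.out.println(line);
        }
    }
}
```

Each page is visited once even though page B links back to page A, because the `seen` set rejects URLs that were already queued.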

### License:

Licensed under the [Apache 2.0 license](http://opensource.org/licenses/Apache-2.0)

### Thanks:

In writing WebMagic, I referred to the projects below:

* **Scrapy**

A crawler framework in Python.

[http://scrapy.org/](http://scrapy.org/)

* **Spiderman**

Another crawler framework in Java.

[http://git.oschina.net/l-weiwei/spiderman](http://git.oschina.net/l-weiwei/spiderman)

### Mailing list:

[https://groups.google.com/forum/#!forum/webmagic-java](https://groups.google.com/forum/#!forum/webmagic-java)

[http://list.qq.com/cgi-bin/qf_invite?id=023a01f505246785f77c5a5a9aff4e57ab20fcdde871e988](http://list.qq.com/cgi-bin/qf_invite?id=023a01f505246785f77c5a5a9aff4e57ab20fcdde871e988)

QQ Group: 373225642

### Related Project

* <a href="https://github.com/gsh199449/spider" target="_blank">Gather Platform</a>

A web console based on WebMagic for Spider configuration and management.


