consistencies, bilinearly pooling them to identify tampered regions. Second, we show that the two streams are complementary for detecting different tampering techniques, leading to improved performance on four image manipulation datasets compared to state-of-the-art methods.

2. Related Work

Research on image forensics consists of various approaches to detect the low-level tampering artifacts within a tampered image, including double JPEG compression [4], CFA color array analysis [19] and local noise analysis [7]. Specifically, Bianchi et al. [4] propose a probabilistic model to estimate the DCT coefficients and quantization factors for different regions. CFA-based methods analyze low-level statistics introduced by the camera internal filter patterns under the assumption that the tampered regions disturb these patterns. Goljan et al. [19] propose a Gaussian Mixture Model (GMM) to classify CFA-present regions (authentic regions) and CFA-absent regions (tampered regions).

Recently, methods based on local noise features, such as the steganalysis rich model (SRM) [15], have shown promising performance in image forensics tasks. These methods extract local noise features from adjacent pixels, capturing the inconsistency between tampered regions and authentic regions. Cozzolino et al. [7] explore and demonstrate the performance of SRM features in distinguishing tampered and authentic regions. They also combine SRM features with a Convolutional Neural Network (CNN), by including the quantization and truncation operations, to perform manipulation localization [8]. Rao et al. [27] use an SRM filter kernel as initialization for a CNN to boost the detection accuracy. Most of these methods focus on specific tampering artifacts and are limited to specific tampering techniques. We also use these SRM filter kernels to extract low-level noise that is used as the input to a Faster R-CNN network, and learn to capture tampering traces from the noise features. Moreover, a parallel RGB stream is trained jointly to model mid- and high-level visual tampering artifacts.
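To make the idea of a noise residual concrete, the following is a minimal sketch of a high-pass filter applied to a grayscale image. The kernel is an assumed second-order horizontal difference in the spirit of SRM residuals; the specific SRM kernels used by the network are not given in this section, and the names `KERNEL` and `noise_residual` are hypothetical.

```python
# Assumed illustrative kernel: second-order horizontal difference.
# It is NOT claimed to be one of the SRM kernels used in the paper.
KERNEL = [
    [0.0, 0.0, 0.0],
    [0.5, -1.0, 0.5],
    [0.0, 0.0, 0.0],
]


def noise_residual(image, kernel=KERNEL):
    """Correlate a 2-D grayscale image (list of rows) with `kernel`.

    Only "valid" positions are computed, so the output shrinks by
    kernel_size - 1 in each dimension.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    residual = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            acc = 0.0
            for i in range(kh):
                for j in range(kw):
                    acc += kernel[i][j] * image[r + i][c + j]
            row.append(acc)
        residual.append(row)
    return residual


# A smooth horizontal ramp: every pixel is predictable from its neighbours.
smooth = [[float(c) for c in range(6)] for _ in range(5)]
# The same ramp with one pixel altered, mimicking a local inconsistency.
altered = [row[:] for row in smooth]
altered[2][3] += 4.0

print(noise_residual(smooth)[1])   # residual vanishes on smooth content
print(noise_residual(altered)[1])  # residual is non-zero near the altered pixel
```

The residual suppresses smooth image content and responds only where a pixel deviates from what its neighbours predict, which is why such features expose local inconsistencies rather than semantic content.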

With the success of deep learning techniques in various computer vision and image processing tasks, a number of recent techniques have also employed deep learning to address image manipulation detection. Chen et al. [5] add a low pass filter layer before a CNN to detect median filtering tampering techniques. Bayar et al. [3] change the low pass filter layer to an adaptive kernel layer to learn the filtering kernel used in tampered regions. Beyond filter learning, Zhang et al. [34] propose a stacked autoencoder to learn context features for image manipulation detection. Cozzolino et al. [9] treat this problem as an anomaly detection task and use an autoencoder based on extracted features to distinguish those regions that are difficult to reconstruct as tampered regions. Salloum et al. [29] use a Fully Convolutional Network (FCN) framework to directly predict the tampering mask given an image. They also learn a boundary mask to guide the FCN to look at tampered edges, which helps them achieve better performance on various image manipulation datasets. Bappy et al. [2] propose an LSTM based network applied to small image patches to find the tampering artifacts on the boundaries between tampered patches and image patches. They jointly train this network with pixel level segmentation to improve the performance and show results under different tampering techniques. However, focusing only on nearby boundaries provides limited success in different scenarios, e.g., removing the whole object might leave no boundary evidence for detection. Instead, we use global visual tampering artifacts as well as the local noise features to model richer tampering artifacts. We use a two-stream network built on Faster R-CNN to learn rich features for image manipulation detection. The network shows robustness to splicing, copy-move and removal. In addition, the network enables us to classify the suspected tampering technique.

3. Proposed Method

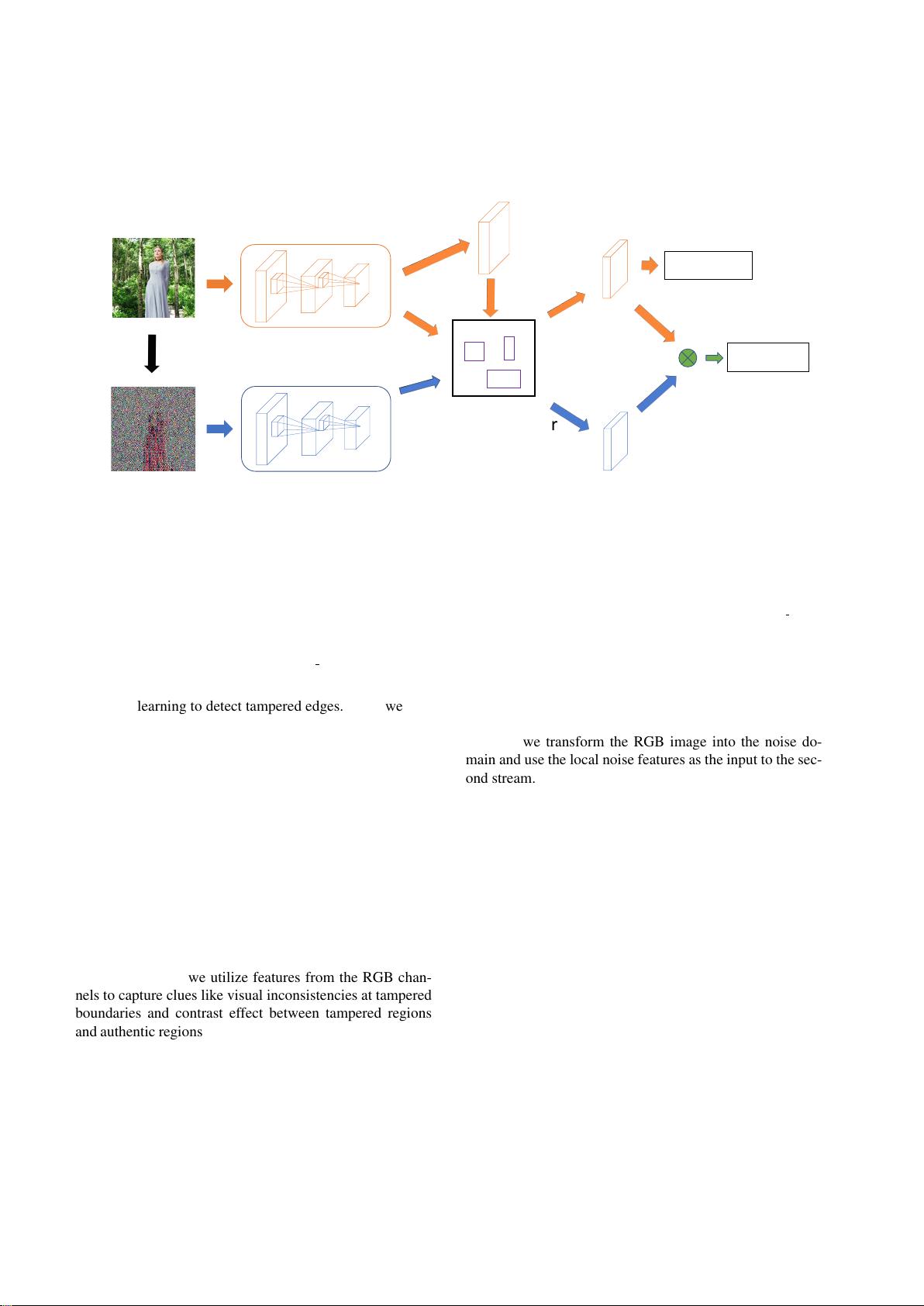

We employ a multi-task framework that simultaneously performs manipulation classification and bounding box regression. RGB images are fed to the RGB stream (the top stream in Figure 2), and SRM images to the noise stream (the bottom stream in Figure 2). We fuse the two streams through bilinear pooling before a fully connected layer for manipulation classification. The RPN uses the RGB stream to localize tampered regions.
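The fusion step can be illustrated with a minimal sketch of plain bilinear pooling: at each spatial location the outer product of the two streams' feature vectors is taken, and the results are summed over locations. This is the generic form of the operation and an assumption on our part; any normalization or compact approximation applied on top of it is omitted, and the function name `bilinear_pool` is hypothetical.

```python
def bilinear_pool(rgb_feats, noise_feats):
    """Sum the outer product of the two feature vectors over all locations.

    rgb_feats:   list of per-location vectors, each of length C_rgb
    noise_feats: list of per-location vectors, each of length C_noise
    Returns a flattened vector of length C_rgb * C_noise.
    """
    if len(rgb_feats) != len(noise_feats):
        raise ValueError("both streams must cover the same locations")
    c_rgb, c_noise = len(rgb_feats[0]), len(noise_feats[0])
    pooled = [[0.0] * c_noise for _ in range(c_rgb)]
    for f_rgb, f_noise in zip(rgb_feats, noise_feats):
        for a in range(c_rgb):
            for b in range(c_noise):
                pooled[a][b] += f_rgb[a] * f_noise[b]
    # Flatten row by row so the result can feed a fully connected layer.
    return [v for row in pooled for v in row]


# Toy RoI with 2 spatial locations (made-up values).
rgb = [[1.0, 2.0], [0.0, 1.0]]               # 2 channels per location
noise = [[1.0, 0.0, 1.0], [2.0, 1.0, 0.0]]   # 3 channels per location
fused = bilinear_pool(rgb, noise)
print(len(fused), fused)
```

Because every RGB channel is multiplied with every noise channel, the fused vector captures pairwise interactions between the two streams, at the cost of an output size that grows with the product of the channel counts.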

3.1. RGB Stream

The RGB stream is a single Faster R-CNN network and is used both for bounding box regression and manipulation classification. We use a ResNet-101 network [20] to learn features from the input RGB image. The output features of the last convolutional layer of ResNet are used for manipulation classification.

The RPN in the RGB stream uses these features to propose regions of interest (RoIs) for bounding box regression. Formally, the loss for the RPN is defined as

$$
L_{RPN}(g_i, f_i) = \frac{1}{N_{cls}} \sum_i L_{cls}(g_i, g_i^{\star}) + \lambda \frac{1}{N_{reg}} \sum_i g_i^{\star} L_{reg}(f_i, f_i^{\star}), \tag{1}
$$

where $g_i$ denotes the probability of anchor $i$ being a potential manipulated region in a mini batch, and $g_i^{\star}$ denotes the ground-truth label for anchor $i$ to be positive. The terms $f_i$, $f_i^{\star}$ are the 4 dimensional bounding box coordinates for anchor $i$ and the ground-truth, respectively. $L_{cls}$ denotes cross entropy loss for RPN network and $L_{reg}$ denotes smooth $L_1$
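As a rough illustration of Equation (1), the sketch below evaluates the two terms for a handful of anchors. It assumes the standard smooth L1 definition (quadratic for absolute errors below 1, linear otherwise) and treats box coordinates as plain 4-vectors; the values of `n_cls`, `n_reg` and `lam` in the example are arbitrary placeholders, and all function names are hypothetical.

```python
import math


def smooth_l1(x):
    """Smooth L1: quadratic near zero, linear elsewhere."""
    ax = abs(x)
    return 0.5 * x * x if ax < 1.0 else ax - 0.5


def binary_cross_entropy(p, label, eps=1e-12):
    """Cross entropy between a predicted probability p and a 0/1 label."""
    p = min(max(p, eps), 1.0 - eps)  # avoid log(0)
    return -(label * math.log(p) + (1 - label) * math.log(1.0 - p))


def rpn_loss(probs, labels, boxes, gt_boxes, n_cls, n_reg, lam):
    """Classification term plus lam-weighted regression term, as in Eq. (1).

    The regression term is multiplied by the ground-truth label, so only
    positive anchors contribute to it.
    """
    cls_term = sum(
        binary_cross_entropy(g, g_star) for g, g_star in zip(probs, labels)
    ) / n_cls
    reg_term = 0.0
    for g_star, f, f_star in zip(labels, boxes, gt_boxes):
        reg_term += g_star * sum(smooth_l1(a - b) for a, b in zip(f, f_star))
    return cls_term + lam * reg_term / n_reg


# Two anchors: one positive, one negative (made-up numbers).
probs = [0.9, 0.2]
labels = [1, 0]
boxes = [[0.1, 0.2, 0.0, 0.0], [1.0, 1.0, 1.0, 1.0]]
gt_boxes = [[0.0, 0.0, 0.0, 0.0], [0.0, 0.0, 0.0, 0.0]]
loss = rpn_loss(probs, labels, boxes, gt_boxes, n_cls=2, n_reg=2, lam=1.0)
print(round(loss, 6))
```

Note that the second anchor's box error is large but does not affect the loss, since its ground-truth label is 0.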
