databricks-spark-knowledge-base.pdf

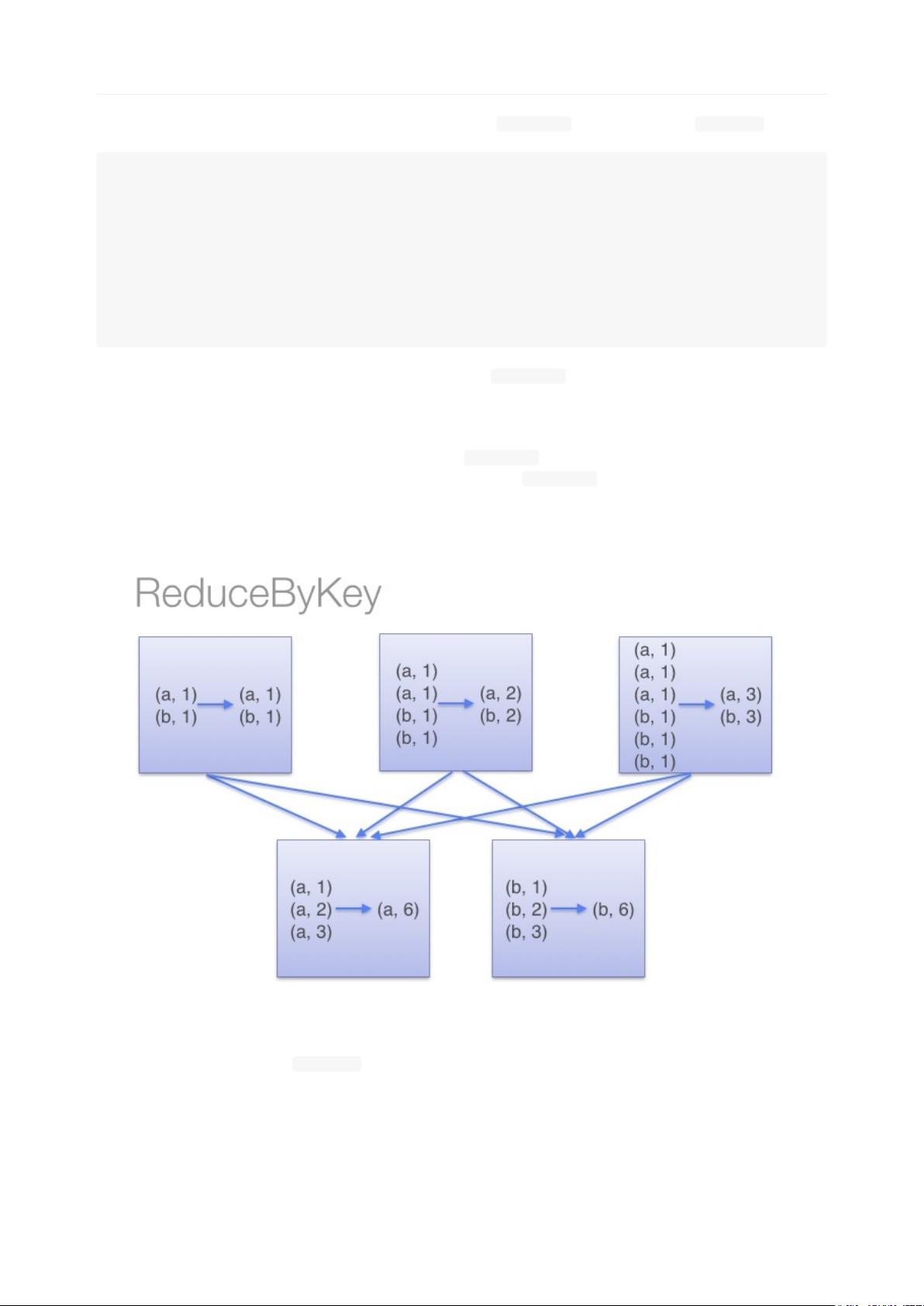

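Apache Spark is an open-source distributed computing system. It provides a fast, general-purpose compute engine for many workloads, such as batch processing, iterative algorithms, interactive queries, and stream processing. Spark is known for its speed, ease of use, and compatibility with many data sources. It has an advanced DAG (directed acyclic graph) execution engine and supports in-memory computing. Databricks is a service and cloud platform built around Apache Spark.

This summary is based on the file "databricks-spark-knowledge-base.pdf". The key points covered in the file are described below.

1. Best practices:
   - a. Avoid `groupByKey`: `groupByKey` shuffles every key-value pair across the network so that all values for the same key end up in the same partition. This causes heavy network traffic and can produce unevenly sized partitions. Prefer `reduceByKey`, which first combines the values for each key within each partition and only then shuffles the partial results.
   - b. Don't copy all elements of a large RDD to the driver: the driver schedules the whole Spark job and collects its results. Pulling every element of a large RDD into the driver (for example with `collect()`) can cause out-of-memory errors or a performance bottleneck.
   - c. Handle bad input data gracefully: when reading external data sources, clean and validate the input so that a small number of malformed records does not make the whole job fail.
2. General troubleshooting:
   - a. Job aborted due to stage failure: this is often a serialization problem. An object used by a task cannot be serialized for transfer over the network ("Task not serializable").
   - b. Missing dependencies in JAR files: when running Spark jobs on a cluster, make sure every required dependency is packaged into the application JAR.
   - c. "Connection refused" when running start-all.sh: this usually points to a network connectivity problem or to a service that is not running. start-all.sh starts the workers over SSH, so an SSH server that is not running or not reachable on the target host is a common cause.
   - d. Network connectivity problems between Spark components: make sure every node in the cluster can reach the others and that there are no network configuration errors.
3. Performance:
   - a. Number of RDD partitions: tuning the partition count controls parallelism. Too many tiny partitions increase task-scheduling overhead. Too few large partitions limit parallelism and can cause out-of-memory errors.
   - b. Data locality: data locality is how close the data is to the node that processes it. Running computation on the node that already holds the data avoids unnecessary data movement and can improve performance significantly.
4. Spark Streaming:
   - a. ERROR OneForOneStrategy: OneForOneStrategy is an Akka supervisor strategy that restarts only the failed child actor. This log line therefore wraps the real failure, and the cause is the exception reported with it. A typical example is a java.io.NotSerializableException: checkpointing is enabled, and a function called in `foreachRDD` references an object that is not serializable.

The document also includes a word count example that compares `reduceByKey` with `groupByKey`. With `reduceByKey`, Spark combines the values for each key inside every partition before the shuffle, so only one partial count per key per partition crosses the network. With `groupByKey`, every (word, 1) pair is shuffled so that all values for a key land in the same partition, and only then are they summed. This increases network load and the amount of data the shuffle has to process. On large datasets, `reduceByKey` therefore usually performs better.

This material helps you understand how to use Spark for common big data processing problems, tune performance, and quickly locate and fix failures. These best practices and troubleshooting tips are key knowledge for working with Spark and help you write more efficient and robust Spark applications.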
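
To make best practice 1a and the word count comparison concrete, here is a small pure-Python model of the two strategies. It is not Spark code:

- Each inner list stands in for one RDD partition.
- The two functions (hypothetical names) only compute the word counts and how many records would cross the shuffle.
- The PySpark calls in the comments are the usual way to write the two versions of word count.

```python
from collections import Counter, defaultdict

# PySpark equivalents, for reference only (not executed here):
#   pairs = words.map(lambda w: (w, 1))
#   pairs.groupByKey().mapValues(sum)       # shuffles every (w, 1) pair
#   pairs.reduceByKey(lambda a, b: a + b)   # combines within each partition first


def group_by_key_count(partitions: list[list[str]]) -> tuple[dict[str, int], int]:
    """Model groupByKey: ship every (word, 1) pair, then sum per key."""
    grouped = defaultdict(list)
    shuffled = 0
    for part in partitions:
        for word in part:
            grouped[word].append(1)  # every occurrence becomes a shuffle record
            shuffled += 1
    return {word: sum(ones) for word, ones in grouped.items()}, shuffled


def reduce_by_key_count(partitions: list[list[str]]) -> tuple[dict[str, int], int]:
    """Model reduceByKey: pre-aggregate inside each partition, ship partial sums."""
    totals: Counter = Counter()
    shuffled = 0
    for part in partitions:
        partial = Counter(part)   # map-side combine within one partition
        shuffled += len(partial)  # one record per distinct word per partition
        totals.update(partial)    # final reduce after the shuffle
    return dict(totals), shuffled
```

Take the partitions `[["one", "two", "two", "three", "three", "three"], ["one", "two", "three", "three"]]`. Both functions return `{"one": 2, "two": 3, "three": 5}`. However, the groupByKey model shuffles 10 records, while the reduceByKey model shuffles 6. The gap grows with the number of repeated keys per partition.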
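
For best practice 1b, the point of using `take(n)` instead of `collect()` is that the driver's memory use is bounded by `n` rather than by the size of the RDD. The sketch below models this with plain Python iterables standing in for partitions; the `take` function here is a hypothetical stand-in, not Spark's implementation. It reads one partition at a time and stops as soon as it has `n` elements. That is a simplification: Spark's own `take` scans a growing number of partitions per round.

```python
from itertools import islice
from typing import Iterable

# PySpark, for reference only:
#   rdd.collect()  -> every element is copied into the driver
#   rdd.take(n)    -> at most n elements are copied into the driver


def take(partitions: Iterable[Iterable[int]], n: int) -> tuple[list[int], int]:
    """Return at most n elements and the number of partitions that were read."""
    result: list[int] = []
    scanned = 0
    for part in partitions:
        if len(result) >= n:
            break  # enough elements: do not touch the remaining partitions
        scanned += 1
        result.extend(islice(part, n - len(result)))  # read only what is missing
    return result, scanned
```

To inspect a large RDD, prefer `take(n)` for a quick look, or write the full result to storage (for example with `saveAsTextFile`) instead of calling `collect()`.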
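
Best practice 1c is often implemented with a parse function that returns zero or one results, so that a `flatMap` drops records it cannot repair. The sketch rests on a few assumptions:

- The input uses a hypothetical `name,age` format.
- `parse_record` and `flat_map` are illustrative names.
- `flat_map` only mimics what `rdd.flatMap(parse_record)` would do with the records of a partition.

```python
from typing import Callable, Iterable


def parse_record(line: str) -> list[tuple[str, int]]:
    """Parse a hypothetical 'name,age' line; return [] for input that can't be fixed."""
    parts = line.split(",")
    if len(parts) != 2:
        return []                      # wrong number of fields: drop
    name, age_text = parts[0].strip(), parts[1].strip()  # repair stray whitespace
    try:
        age = int(age_text)
    except ValueError:
        return []                      # non-numeric age: drop
    if not name or age < 0:
        return []                      # missing name or impossible age: drop
    return [(name, age)]


def flat_map(func: Callable[[str], list], records: Iterable[str]) -> list:
    """Local stand-in for rdd.flatMap(func): concatenate func's outputs."""
    return [out for rec in records for out in func(rec)]
```

The same function can try to fix a record before giving up, which is why a `flatMap` fits better here than a plain `filter`.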
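
Items 2a and 4a both come down to serialization. Spark must serialize a task's function, and every object that function references, in order to send it to the executors. With Spark Streaming checkpointing, the functions are also serialized into the checkpoint. PySpark does this with cloudpickle. The sketch below uses the standard `pickle` module as a stand-in to show:

- one common failure: an object that holds a `threading.Lock`;
- one fix: leave the lock out of the pickled state and recreate it after deserialization.

`BrokenLookup`, `FixedLookup`, and `can_ship` are hypothetical names.

```python
import pickle
import threading


class BrokenLookup:
    """Holds a lock, so any task that captures it cannot be serialized."""

    def __init__(self, table: dict):
        self.table = table
        self.lock = threading.Lock()

    def lookup(self, key):
        with self.lock:
            return self.table.get(key)


class FixedLookup:
    """Excludes the lock from its pickled state and rebuilds it on load."""

    def __init__(self, table: dict):
        self.table = table
        self.lock = threading.Lock()

    def __getstate__(self):
        state = self.__dict__.copy()
        del state["lock"]              # the lock is not serializable: leave it out
        return state

    def __setstate__(self, state):
        self.__dict__.update(state)
        self.lock = threading.Lock()   # recreate the lock where the object is loaded

    def lookup(self, key):
        with self.lock:
            return self.table.get(key)


def can_ship(obj) -> bool:
    """Mimic shipping an object inside a task: succeed only if it pickles."""
    try:
        pickle.dumps(obj)
        return True
    except (TypeError, pickle.PicklingError):
        return False
```

Another common fix is to create the non-serializable resource inside the task itself (for example once per partition), so that it never has to travel from the driver.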
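
For items 2c and 2d, a quick first check is whether the relevant TCP port on each node accepts connections at all. The helper below is a hypothetical diagnostic, not part of Spark. It returns False for a refused connection, a timeout, or a name-resolution failure. Ports to check:

- 22: the usual SSH port, which start-all.sh relies on to start workers.
- 7077: the default port of a standalone Spark master.

Your cluster may be configured with different ports.

```python
import socket


def port_reachable(host: str, port: int, timeout_s: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout_s):
            return True
    except OSError:  # covers ConnectionRefusedError, timeouts and DNS errors
        return False
```

Run it from every node against every other node, for example `port_reachable("worker-1", 22)` (the hostname is hypothetical). A single failing pair often explains errors that appear only on some executors.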
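
Item 3a can be turned into a rough starting point for the partition count. The heuristic below is an assumption, not a Spark rule. It computes two candidate counts and returns the larger:

- one that keeps each partition at about 128 MB;
- one that gives at least two tasks per CPU core. Spark's tuning guide suggests 2 to 3 tasks per core.

In PySpark you check the current count with `rdd.getNumPartitions()`. To change it, use `rdd.repartition(n)`, or `rdd.coalesce(n)` to reduce the count without a full shuffle.

```python
import math


def suggest_num_partitions(total_bytes: int, total_cores: int,
                           target_partition_bytes: int = 128 * 1024 * 1024,
                           tasks_per_core: int = 2) -> int:
    """Hypothetical heuristic: enough partitions for both data size and cores."""
    by_size = math.ceil(total_bytes / target_partition_bytes)  # keep partitions ~128 MB
    by_cores = total_cores * tasks_per_core                     # keep every core busy
    return max(1, by_size, by_cores)
```

For 10 GiB of input on 16 cores, this suggests 80 partitions. For 1 GiB on the same cluster, the core-based floor of 32 wins.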
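
Spark's scheduler implements item 3b with delay scheduling. It waits briefly for a free slot on a node that holds the task's data before running the task elsewhere. The function below is a heavily simplified model with only two levels, "local" and "anywhere". The real scheduler works as follows:

- It has several locality levels: PROCESS_LOCAL, NODE_LOCAL, NO_PREF, RACK_LOCAL, and ANY.
- The wait is controlled by `spark.locality.wait`, which defaults to 3 seconds.

`pick_executor` and its arguments are hypothetical.

```python
from typing import Optional


def pick_executor(preferred_hosts: set[str],
                  free_executors: dict[str, str],
                  waited_s: float,
                  locality_wait_s: float = 3.0) -> Optional[str]:
    """Choose a free executor for a task, preferring hosts that hold its data.

    free_executors maps executor id -> host name.
    Returns None when the task should keep waiting for a local slot.
    """
    for exec_id, host in free_executors.items():
        if host in preferred_hosts:
            return exec_id                    # data-local: no transfer needed
    if free_executors and waited_s >= locality_wait_s:
        return next(iter(free_executors))     # waited long enough: run anywhere
    return None                               # keep waiting for locality
```

Raising the wait favours locality at the cost of idle slots. Lowering it starts tasks sooner but moves more data over the network.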
