MiniGPT-4: Enhancing Vision-Language Understanding with Advanced Large Language Models

Deyao Zhu∗, Jun Chen∗, Xiaoqian Shen, Xiang Li, Mohamed Elhoseiny

King Abdullah University of Science and Technology

{deyao.zhu, jun.chen, xiaoqian.shen, xiang.li.1, mohamed.elhoseiny}@kaust.edu.sa

Abstract

The recent GPT-4 has demonstrated extraordinary multi-modal abilities, such as directly generating websites from handwritten text and identifying humorous elements within images. These features are rarely observed in previous vision-language models. We believe the primary reason for GPT-4’s advanced multi-modal generation capabilities lies in the utilization of a more advanced large language model (LLM). To examine this phenomenon, we present MiniGPT-4, which aligns a frozen visual encoder with a frozen LLM, Vicuna, using just one projection layer. Our findings reveal that MiniGPT-4 possesses many capabilities similar to those exhibited by GPT-4, such as detailed image description generation and website creation from hand-written drafts. Furthermore, we observe other emerging capabilities in MiniGPT-4, including writing stories and poems inspired by given images, providing solutions to problems shown in images, and teaching users how to cook based on food photos. In our experiments, we found that pretraining on raw image-text pairs alone could produce unnatural language outputs that lack coherency, including repetition and fragmented sentences. To address this problem, we curate a high-quality, well-aligned dataset in the second stage to finetune our model using a conversational template. This step proved crucial for augmenting the model’s generation reliability and overall usability. Notably, our model is highly computationally efficient, as we only train a projection layer utilizing approximately 5 million aligned image-text pairs. Our code, pre-trained model, and collected dataset are available at https://minigpt-4.github.io/.

1 Introduction

In recent years, large language models (LLMs) have experienced rapid advancements [21, 18, 4, 24, 32, 9, 14]. With exceptional language understanding capabilities, these models can perform a variety of intricate linguistic tasks in a zero-shot manner. Notably, GPT-4 [19], a large-scale multimodal model, has recently been introduced and demonstrates many impressive capabilities. For example, GPT-4 can produce very detailed and accurate image descriptions, explain unusual visual phenomena, and even construct websites based on handwritten text instructions.

Although GPT-4 has exhibited remarkable capabilities, the methods behind its exceptional abilities are still a mystery [19]. We believe that these superior skills may stem from the utilization of a more advanced large language model (LLM). LLMs have demonstrated various emergent abilities, as evidenced in GPT-3’s few-shot prompting setup [4] and the findings of Wei et al. (2022) [34]. Such emergent properties are hard to find in smaller-scale models. It is conjectured that these emergent abilities are also applicable to multi-modal models, which could be the foundation of GPT-4’s impressive visual description capabilities.

∗ Equal contribution

Preprint. Work in progress.

To substantiate our hypothesis, we present a novel model named MiniGPT-4. It uses Vicuna [8] as the language decoder; Vicuna is an advanced large language model (LLM) built upon LLaMA [32] and reported to achieve 90% of ChatGPT’s quality as per GPT-4’s evaluation. In terms of visual perception, we employ the same pretrained vision component of BLIP-2 [16] that consists of a ViT-G/14 from EVA-CLIP [13] and a Q-Former. MiniGPT-4 adds a single projection layer to align the encoded visual features with the Vicuna language model and freezes all the other vision and language components. MiniGPT-4 is initially trained for 20k steps using a batch size of 256 on 4 A100 GPUs, leveraging a combined dataset that includes images from LAION [26], Conceptual Captions [5, 27], and SBU [20] to align visual features with the Vicuna language model. However, simply aligning the visual features with the LLM is insufficient to train a high-performing model with chatbot-like visual conversation abilities, and the noise in the raw image-text pairs may result in incoherent language output. Therefore, we collect another 3,500 high-quality aligned image-text pairs to further fine-tune the model with a designed conversational template in order to improve the naturalness of the generated language and its usability.

In our experiments, we discovered that MiniGPT-4 possesses numerous capabilities similar to those demonstrated by GPT-4. For instance, MiniGPT-4 can generate intricate image descriptions, create websites based on handwritten text instructions, and explain unusual visual phenomena. Furthermore, our findings revealed that MiniGPT-4 also has a variety of other intriguing abilities not showcased in the GPT-4 demonstrations. For example, MiniGPT-4 can directly generate detailed recipes by observing appetizing food photos, craft stories or rap songs inspired by images, write advertisements for products in images, distinguish problems shown in photos and provide corresponding solutions, and retrieve rich facts about people, movies, or art directly from images, among other capabilities. These abilities are absent in previous vision-language models like Kosmos-1 [15] and BLIP-2 [16], which do not apply a stronger language model such as Vicuna. This contrast validates that integrating visual features with an advanced language model can yield emergent vision-language abilities.

We present a summary of our key findings:

• Our research reveals that by aligning visual features with the advanced large language model, Vicuna, we can achieve emergent vision-language capabilities. We demonstrate that our MiniGPT-4 can possess abilities similar to those showcased in the GPT-4 demonstrations.

• By utilizing a pretrained vision encoder and a large language model, MiniGPT-4 achieves greater computational efficiency. Our findings suggest that training merely one projection layer can effectively align the visual features with the large language model. Our MiniGPT-4 only requires approximately 10 hours of training on 4 A100 GPUs.

• We discovered that simply aligning visual features with large language models using raw image-text pairs from public datasets is not sufficient for developing a well-performing MiniGPT-4 model. It may produce unnatural language outputs that lack coherency, including repetition and fragmented sentences. Addressing this limitation requires training with a high-quality, well-aligned dataset, which significantly improves its usability.

2 Related Works

Large language models have experienced tremendous success in recent years due to the scaling

up of training data and an increase in the number of parameters. Early models, such as BERT [

11

],

GPT-2 [

22

], and T5 [

23

], laid the foundation for this progress. Subsequently, GPT-3 [

4

], with a

massive scale of 175 billion parameters, was introduced, demonstrating significant breakthroughs

across numerous language benchmarks. This development inspired the creation of various other

large language models, including Megatron-Turing NLG [

28

], Chinchilla [

14

], PaLM [

9

], OPT [

38

],

BLOOM [

25

], and LLaMA [

32

], among others. Wei et al. [

34

] further discovered several emergent

abilities, which appear exclusively in large models. The emergence of these abilities underscores

the importance of scaling up in the development of large language models. Moreover, by aligning

the pre-trained large language model GPT-3 with human intent, instructions and human feedback,

InstructGPT [

21

] and ChatGPT [

18

] enable conversational interactions with humans and can answer

a wide range of diverse and complex questions. More recently, several open-sourced models, such

2

The logo design is simple and minimalistic, with a pink line drawing of a flamingo

standing on one leg in the water. The design is clean and easy to recognize, making it

suitable for use in various contexts such as a logo for a beach resort or a flamingo-

themed event. The use of a flamingo as a symbol adds a touch of whimsy and fun to

the design, making it memorable and eye-catching. Overall, it's a well-designed logo

that effectively communicates the brand's message.

### Human:

What do you think of this

logo design? ### Assistant:

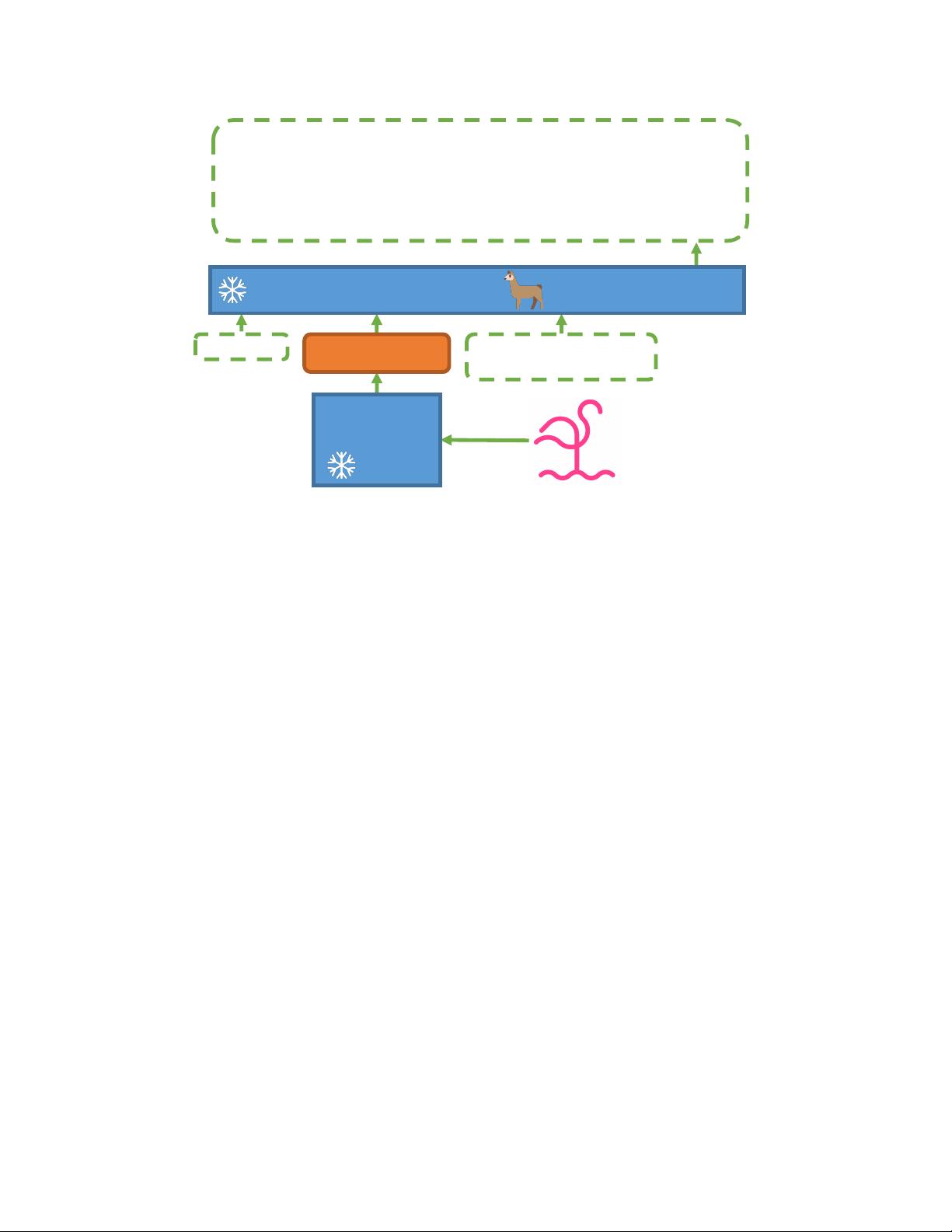

Linear Layer

Q-Former

&

ViT

Vicuna

Figure 1: The architecture of MiniGPT-4. It consists of a vision encoder with a pretrained ViT and

Q-Former, a single linear projection layer, and an advanced Vicuna large language model. MiniGPT-4

only requires training the linear projection layer to align the visual features with the Vicuna.

as Alpaca [

30

] and Vicuna [

8

], have been developed based on LLaMA [

32

] and also exhibit similar

performance.

Leveraging Pre-trained LLMs in Vision-Language Tasks. In recent years, the trend of using autoregressive language models as decoders in vision-language tasks has gained significant traction [6, 15, 36, 31, 2, 16, 17, 12]. This approach takes advantage of cross-modal transfer, allowing knowledge to be shared between language and multimodal domains. Pioneering studies like VisualGPT [6] and Frozen [33] have demonstrated the benefits of employing a pre-trained language model as a vision-language model decoder. Flamingo [2] was then developed to align a pre-trained vision encoder and language model using gated cross-attention, and was trained on billions of image-text pairs, showcasing impressive in-context few-shot learning capabilities. Following that, BLIP-2 [16] was introduced, employing a Flan-T5 [10] with a Q-Former to efficiently align visual features with the language model. Most recently, PaLM-E [12], featuring 562 billion parameters, has been developed to integrate real-world continuous sensor modalities into an LLM, thereby establishing a connection between real-world perceptions and human languages. GPT-4 [19] has also been recently released, showcasing more powerful visual understanding and reasoning abilities after pre-training on a vast collection of aligned image-text data.

LLMs, such as ChatGPT, have proven to be powerful tools in enhancing the performance of vision-language tasks by collaborating with other specialized models. For instance, Visual ChatGPT [35] and MM-REACT [37] showcase how ChatGPT can act as a coordinator, integrating with diverse visual foundation models and facilitating their collaboration to tackle more complex challenges. ChatCaptioner [39] treats ChatGPT as a questioner, prompting diverse questions for BLIP-2 to answer. Through multi-round conversations, ChatGPT extracts visual information from BLIP-2 and effectively summarizes the image content. Video ChatCaptioner [7] extends this approach, applying it to video spatiotemporal understanding. ViperGPT [29] demonstrates the potential of combining an LLM with different vision models to address complex visual queries programmatically. In contrast, MiniGPT-4 directly aligns visual information with the language model to accomplish diverse vision-language tasks without the usage of external vision models.


3 Method

MiniGPT-4 aims to align visual information from a pretrained vision encoder with an advanced large language model (LLM). Specifically, we use Vicuna [8] as our language decoder, which is constructed upon LLaMA [32] and can perform a wide range of complex linguistic tasks. For visual perception, we employ the same visual encoder as used in BLIP-2 [16], a ViT backbone [13] coupled with their pre-trained Q-Former. Both language and vision models are open-sourced. We aim to bridge the gap between the visual encoder and the LLM using a linear projection layer, with an overview of our model displayed in Fig. 1.

To achieve an effective MiniGPT-4, we propose a two-stage training approach. The initial stage involves pretraining the model on a large collection of aligned image-text pairs to acquire vision-language knowledge. In the second stage, we fine-tune the pretrained model with a smaller but high-quality image-text dataset with a designed conversational template to enhance the model’s generation reliability and usability.

3.1 First pretraining stage

During the initial pretraining stage, the model is designed to acquire vision-language knowledge from a large collection of aligned image-text pairs. We regard the output from the injected projection layer as a soft prompt for the LLM, prompting it to generate the corresponding ground-truth texts.
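The soft-prompt idea can be illustrated with a small, dependency-free sketch. It is not the authors' implementation: the dimensions are toy values chosen for readability, and `make_linear`, `project`, and the stand-in feature lists are hypothetical names introduced here. The point is only that projected visual tokens are placed in front of the text embeddings, and that the projection is the sole trainable component.

```python
import random


def make_linear(in_dim, out_dim, seed=0):
    """Create weights and bias for a toy linear layer (the only trainable part)."""
    rng = random.Random(seed)
    weight = [[rng.uniform(-0.1, 0.1) for _ in range(in_dim)] for _ in range(out_dim)]
    bias = [0.0] * out_dim
    return weight, bias


def project(tokens, weight, bias):
    """Map each visual token (length in_dim) to the LLM embedding size (out_dim)."""
    return [
        [sum(w * x for w, x in zip(row, tok)) + b for row, b in zip(weight, bias)]
        for tok in tokens
    ]


# Toy sizes for illustration only (assumed, not the paper's dimensions).
NUM_VISUAL_TOKENS, VISION_DIM, LLM_DIM = 4, 6, 8

# Stand-in for the frozen ViT + Q-Former output for one image.
visual_tokens = [[0.5] * VISION_DIM for _ in range(NUM_VISUAL_TOKENS)]
# Stand-in for the frozen LLM's embeddings of three caption tokens.
caption_embeddings = [[0.1] * LLM_DIM for _ in range(3)]

weight, bias = make_linear(VISION_DIM, LLM_DIM)
soft_prompt = project(visual_tokens, weight, bias)

# The LLM input is the soft prompt followed by the text embeddings.
llm_input = soft_prompt + caption_embeddings

# Only the projection parameters would receive gradient updates.
trainable = {"projection": True, "vision_encoder": False, "llm": False}
```

In a real training loop the loss would be computed on the caption tokens and back-propagated through the frozen LLM into `weight` and `bias` only.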

Throughout the entire pretraining process, both the pretrained vision encoder and the LLM remain frozen, with only the linear projection layer being pretrained. We use a combined dataset of Conceptual Captions [5, 27], SBU [20] and LAION [26] to train our model. Our model undergoes 20,000 training steps with a batch size of 256, covering approximately 5 million image-text pairs. The entire process takes about 10 hours to complete, utilizing 4 A100 (80GB) GPUs.
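The "approximately 5 million" figure follows directly from the step count and batch size. The short calculation below reproduces it; the per-GPU batch size and throughput are derived under the assumption (not stated in the text) that 256 is the global batch size split evenly across the 4 GPUs.

```python
steps = 20_000
batch_size = 256   # assumed to be the global batch size
num_gpus = 4
hours = 10         # "about 10 hours" in the text

pairs_seen = steps * batch_size              # samples processed during stage 1
per_gpu_batch = batch_size // num_gpus       # only valid under the global-batch assumption
steps_per_second = steps / (hours * 3600)    # rough average throughput
gpu_hours = num_gpus * hours

print(pairs_seen)  # 5120000
```

Note that `pairs_seen` counts samples processed, so it matches the number of distinct image-text pairs only if no pair is visited twice.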

Issues of the first pretraining stage. Following the first pretraining stage, our MiniGPT-4 appears to possess a wealth of knowledge and can offer reasonable responses to human inquiries. However, we have observed instances where it struggles to produce coherent linguistic output, such as generating repetitive words or sentences, fragmented sentences, or irrelevant content. These issues hinder MiniGPT-4’s ability to engage in a fluent visual conversation with humans.

We also note that GPT-3 faced similar issues. Despite being pretrained on an extensive language dataset, GPT-3 could not directly generate language outputs that are in accordance with the users’ intentions. Through a process of instruction fine-tuning and reinforcement learning from human feedback, GPT-3 evolves into GPT-3.5 [21, 18] and becomes capable of producing more human-friendly outputs. This phenomenon bears a resemblance to the current state of MiniGPT-4 following its initial pretraining stage. As such, it is not surprising that our model may struggle to generate fluent and natural human language outputs at this stage.

3.2 Curating a high-quality alignment dataset for the vision-language domain

To achieve greater naturalness in the generated language and enhance the model’s usability, a second-stage alignment process is essential. While in the realm of NLP, instruction fine-tuning datasets [30] and conversations [1] are easily accessible, no equivalent datasets exist for the vision-language domain. To address this deficiency, we carefully curated a high-quality image-text dataset, specifically tailored for alignment purposes. This dataset is subsequently utilized to fine-tune our MiniGPT-4 during the second-stage alignment process.

Initial aligned image-text generation. In the initial phase, we employ the model derived from the first pretraining stage to generate a comprehensive description of a given image. To enable our model to produce more detailed image descriptions, we have designed a prompt that adheres to the conversational format of the Vicuna [8] language model, as shown below:

###Human: <Img><ImageFeature></Img> Describe this image in detail. Give as many details as possible. Say everything you see. ###Assistant:

In this prompt, <ImageFeature> represents the visual features produced by the linear projection layer.
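One plausible way to realize such a prompt, sketched here under stated assumptions, is to split the template at the <ImageFeature> placeholder, embed the text on each side, and splice the projected visual features in between. The helper names `split_prompt`, `assemble`, and `fake_embed` are hypothetical, and `fake_embed` merely stands in for the LLM's tokenizer and embedding lookup.

```python
PLACEHOLDER = "<ImageFeature>"
TEMPLATE = (
    "###Human: <Img><ImageFeature></Img> "
    "Describe this image in detail. Give as many details as possible. "
    "Say everything you see. ###Assistant:"
)


def split_prompt(template, placeholder=PLACEHOLDER):
    """Split the template into the text before and after the image slot."""
    before, sep, after = template.partition(placeholder)
    if not sep:
        raise ValueError("template has no image placeholder")
    return before, after


def fake_embed(text):
    """Hypothetical stand-in for tokenizer + embedding lookup: one item per word."""
    return [("text", tok) for tok in text.split()]


def assemble(before_emb, image_emb, after_emb):
    """Concatenate text and image embeddings in prompt order."""
    return before_emb + image_emb + after_emb


before, after = split_prompt(TEMPLATE)
# Stand-in for four projected visual tokens from the linear layer.
image_emb = [("image", i) for i in range(4)]
sequence = assemble(fake_embed(before), image_emb, fake_embed(after))
```

Keeping the placeholder inside the <Img> and </Img> tags means the language model sees explicit textual markers on both sides of the visual tokens.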
