
RelationNet++: Bridging Visual Representations for Object Detection via Transformer Decoder

Cheng Chi∗

Institute of Automation, CAS

chicheng15@mails.ucas.ac.cn

Fangyun Wei

Microsoft Research Asia

fawe@microsoft.com

Han Hu

Microsoft Research Asia

hanhu@microsoft.com

Abstract

Existing object detection frameworks are usually built on a single format of object/part representation, i.e., anchor/proposal rectangle boxes in RetinaNet and Faster R-CNN, center points in FCOS and RepPoints, and corner points in CornerNet. While these different representations usually drive the frameworks to perform well in different aspects, e.g., better classification or finer localization, it is in general difficult to combine these representations in a single framework to make good use of each strength, due to the heterogeneous or non-grid feature extraction by different representations. This paper presents an attention-based decoder module similar to that in Transformer [31] to bridge other representations into a typical object detector built on a single representation format, in an end-to-end fashion. The other representations act as a set of key instances to strengthen the main query representation features in the vanilla detectors. Novel techniques are proposed towards efficient computation of the decoder module, including a key sampling approach and a shared location embedding approach. The proposed module is named bridging visual representations (BVR). It can be applied in-place, and we demonstrate its broad effectiveness in bridging other representations into prevalent object detection frameworks, including RetinaNet, Faster R-CNN, FCOS and ATSS, where about 1.5 ∼ 3.0 AP improvements are achieved. In particular, we improve a state-of-the-art framework with a strong backbone by about 2.0 AP, reaching 52.7 AP on COCO test-dev. The resulting network is named RelationNet++. The code is available at https://github.com/microsoft/RelationNet2.

1 Introduction

Object detection is a vital problem in computer vision that many visual applications build on. While there have been numerous approaches towards solving this problem, they usually leverage a single visual representation format. For example, most object detection frameworks [9, 8, 24, 18] utilize the rectangle box to represent object hypotheses in all intermediate stages. Recently, there have also been some frameworks adopting points to represent an object hypothesis, e.g., center point in CenterNet [38] and FCOS [29], point set in RepPoints [35, 36, 3] and PSN [34]. In contrast to representing whole objects, some keypoint-based methods, e.g., CornerNet [15], leverage part representations of corner points to compose an object. In general, different representation methods usually steer the detectors to perform well in different aspects. For example, the bounding box representation is better aligned with annotation formats for object detection. The center representation avoids the need for an anchoring design and is usually friendly to small objects. The corner representation is usually more accurate for finer localization.

It is natural to raise a question: could we combine these representations into a single framework to

make good use of each strength? Noticing that different representations and their feature extractions

∗

The work is done when Cheng Chi is an intern at Microsoft Research Asia.

34th Conference on Neural Information Processing Systems (NeurIPS 2020), Vancouver, Canada.

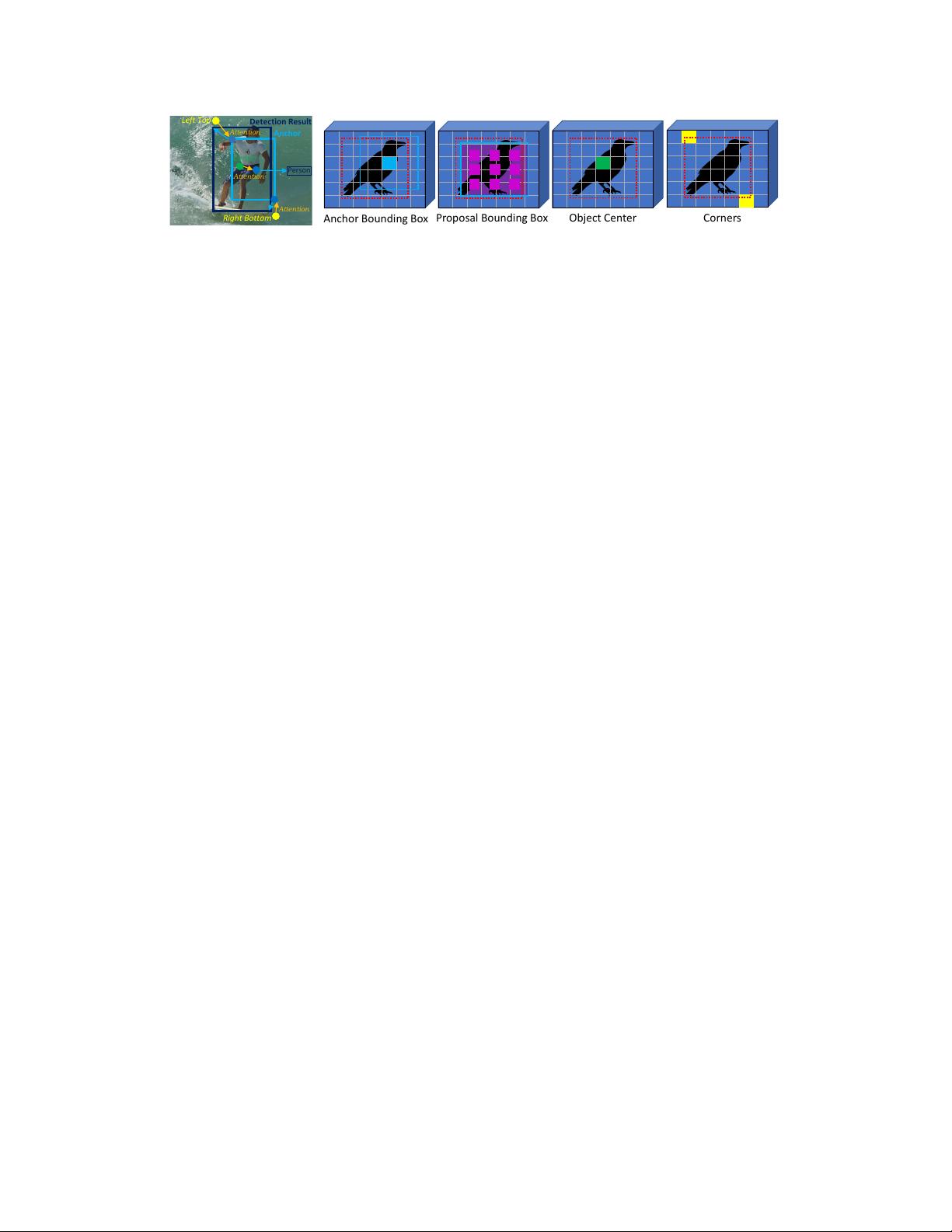

Anchor

Detection Result

Center

Left Top

Right Bottom

Attention

Attention

Attention

Person

(a) Bridge representations.

Anchor Bounding Box

Proposal Bounding Box

Object Center Corners

(b) Typical object/part representations.

Figure 1: (a) An illustration of bridging various representations, specifically leveraging corner/center

representations to enhance the anchor box features. (b) Object/part representations used in object

detection (geometric description and feature extraction). The red dashed box denotes ground-truth.

are usually heterogeneous, combination is difficult. To address this issue, we present an attention

based decoder module similar as that in Transformer [

31

], which can effectively model dependency

between heterogeneous features. The main representations in an object detector are set as the query

input, and other visual representations act as the auxiliary keys to enhance the query features by

certain interactions, where both appearance and geometry relationships are considered.

In general, all feature map points can act as corner/center key instances, which are usually too many for practical attention computation. In addition, the pairwise geometry term is expensive in both computation and memory. To address these issues, two novel techniques are proposed: a key sampling approach, and a shared location embedding approach for efficient computation of the geometry term. The proposed module is named bridging visual representations (BVR).
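The query/key roles described above can be illustrated with a minimal sketch. It is not the authors' implementation: it assumes keys are sampled by keeping the top-k highest-scoring points, that the appearance term is a scaled dot product, and that the geometry term is a simple distance penalty standing in for a learned location embedding. The names `sample_keys`, `bridge_features` and `geo_weight`, and the residual addition at the end, are hypothetical choices made only for this illustration.

```python
import math

def sample_keys(points, scores, feats, k):
    """Keep the k highest-scoring key points (assumed sampling rule)."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    return [points[i] for i in order], [feats[i] for i in order]

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def bridge_features(query_feat, query_xy, key_feats, key_xys, geo_weight=0.1):
    """Enhance one query feature by attending over the sampled keys."""
    dim = len(query_feat)
    logits = []
    for kf, kxy in zip(key_feats, key_xys):
        # Appearance term: scaled dot product between query and key features.
        appearance = sum(q * k for q, k in zip(query_feat, kf)) / math.sqrt(dim)
        # Geometry term (hypothetical stand-in): penalize distant keys.
        distance = math.hypot(query_xy[0] - kxy[0], query_xy[1] - kxy[1])
        logits.append(appearance - geo_weight * distance)
    weights = softmax(logits)
    attended = [sum(w * kf[d] for w, kf in zip(weights, key_feats)) for d in range(dim)]
    # The enhanced feature replaces the original query feature (assumed residual form).
    return [q + a for q, a in zip(query_feat, attended)], weights

# Four candidate key points (e.g. centers or corners) with scores and 2-d features.
points = [(0, 0), (5, 5), (9, 9), (2, 3)]
scores = [0.1, 0.9, 0.8, 0.3]
feats = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.5]]

key_xys, key_feats = sample_keys(points, scores, feats, k=2)
enhanced, weights = bridge_features([1.0, 0.0], (5, 5), key_feats, key_xys)
```

Sampling first keeps the attention cost proportional to k per query rather than to the number of feature map points, which is the motivation the paragraph gives for key sampling.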

Figure 1a illustrates the application of this module to bridge center and corner representations into an anchor-based object detector. The center and corner representations act as key instances to enhance the anchor box features, and the enhanced features are then used for category classification and bounding box regression to produce the detection results. The module can work in-place. Compared with the original object detector, the main change is that the input features for classification and regression are replaced by the enhanced features, and thus the strengthened detector largely maintains its convenience in use.

The proposed BVR module is general. It is applied to various prevalent object detection frameworks, including RetinaNet, Faster R-CNN, FCOS and ATSS. Extensive experiments on the COCO dataset [19] show that the BVR module substantially improves these various detectors by 1.5 ∼ 3.0 AP. In particular, we improve a strong ATSS detector by about 2.0 AP with small overhead, reaching 52.7 AP on COCO test-dev. The resulting network is named RelationNet++, which strengthens the relation modeling in [12] from bbox-to-bbox to across heterogeneous object/part representations.

The main contributions of this work are summarized as:

• A general module, named BVR, to bridge various heterogeneous visual representations and combine the strengths of each. The proposed module can be applied in-place and does not break the overall inference process by the main representations.

• Novel techniques to make the proposed bridging module efficient, including a key sampling approach and a shared location embedding approach.

• Broad effectiveness of the proposed module for four prevalent object detectors: RetinaNet, Faster R-CNN, FCOS and ATSS.

2 A Representation View for Object Detection

2.1 Object / Part Representations

Object detection aims to find all objects in a scene with their location described by rectangle bounding boxes. To discriminate object bounding boxes from background and to categorize objects, intermediate geometric object/part candidates with associated features are required. We refer to the joint geometric description and feature extraction as the representation, where typical representations used in object detection are illustrated in Figure 1b and summarized below.

Figure 2: Representation flows for several typical detection frameworks. (a) Faster R-CNN: anchor → proposal → detection. (b) RetinaNet: anchor → detection. (c) FCOS: object center → detection. (d) CornerNet: corner points → grouping.

Object bounding box representation

Object detection uses bounding boxes as the final output. Probably because of this, bounding box is now the most prevalent representation. Geometrically, a bounding box can be described by a 4-d vector, either as center-size $(x_c, y_c, w, h)$ or as opposing corners $(x_{tl}, y_{tl}, x_{br}, y_{br})$. Besides the final output, this representation is also commonly used as initial and intermediate object representations, such as anchors [24, 20, 22, 23, 18] and proposals [9, 4, 17, 11]. For bounding box representations, features are usually extracted by pooling operators within the bounding box area on an image feature map. Common pooling operators include RoIPool [8], RoIAlign [11], and Deformable RoIPool [5, 40]. There are also simplified feature extraction methods, e.g., the box center features are usually employed in the anchor box representation [24, 18].
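The two 4-d descriptions of a bounding box are interchangeable. The following is a minimal sketch of the conversion, assuming continuous coordinates in which width equals $x_{br} - x_{tl}$ (no "+1" pixel convention); the function names are hypothetical.

```python
def center_size_to_corners(xc, yc, w, h):
    """Convert (xc, yc, w, h) to (xtl, ytl, xbr, ybr)."""
    return (xc - w / 2, yc - h / 2, xc + w / 2, yc + h / 2)

def corners_to_center_size(xtl, ytl, xbr, ybr):
    """Convert (xtl, ytl, xbr, ybr) to (xc, yc, w, h)."""
    return ((xtl + xbr) / 2, (ytl + ybr) / 2, xbr - xtl, ybr - ytl)

# A box centered at (50, 40) with width 20 and height 10.
corners = center_size_to_corners(50.0, 40.0, 20.0, 10.0)
round_trip = corners_to_center_size(*corners)
```

Here `corners` is `(40.0, 35.0, 60.0, 45.0)`, and converting back recovers the original center-size vector.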

Object center representation

The 4-d vector space of a bounding box representation is at a scale of $O(H^2 \times W^2)$ for an image with resolution $H \times W$, which is too large to fully process. To reduce the representation space, some recent frameworks [29, 35, 38, 14, 32] use the center point as a simplified representation. Geometrically, a center point is described by a 2-d vector $(x_c, y_c)$, in which the hypothesis space is of the scale $O(H \times W)$, which is much more tractable. For a center point representation, the image feature on the center point is usually employed as the object feature.
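To make the gap between the two hypothesis spaces concrete, here is a small worked count. It assumes boxes whose corners lie on integer pixel positions and allows single-pixel boxes; the function names are hypothetical and the exact counting convention is an assumption, only the growth rates come from the text.

```python
def num_box_hypotheses(height, width):
    """Count boxes with xtl <= xbr and ytl <= ybr on a height x width pixel grid."""
    column_pairs = width * (width + 1) // 2   # choices of (xtl, xbr)
    row_pairs = height * (height + 1) // 2    # choices of (ytl, ybr)
    return row_pairs * column_pairs

def num_center_hypotheses(height, width):
    """Count center points: one per pixel."""
    return height * width

boxes = num_box_hypotheses(100, 100)
centers = num_center_hypotheses(100, 100)
```

For a 100 × 100 image this gives 25,502,500 box hypotheses against 10,000 center hypotheses, which matches the $O(H^2 \times W^2)$ versus $O(H \times W)$ scaling.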

Corner representation

A bounding box can be determined by two points, e.g., a top-left corner and a bottom-right corner. Some approaches [30, 15, 16, 7, 21, 39, 26] first detect these individual points and then compose bounding boxes from them. We refer to these representation methods as corner representation. The image feature at the corner location can be employed as the part feature.
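Composing boxes from detected corners can be sketched as follows. This covers only the geometric validity check, under the assumption of image coordinates with y increasing downward; corner-based detectors typically add a learned grouping step to decide which valid pairs to keep, which is not modeled here. The function name `compose_boxes` is hypothetical.

```python
def compose_boxes(top_lefts, bottom_rights):
    """Pair each top-left corner with every bottom-right corner that lies
    strictly below and to the right of it."""
    boxes = []
    for xtl, ytl in top_lefts:
        for xbr, ybr in bottom_rights:
            if xbr > xtl and ybr > ytl:
                boxes.append((xtl, ytl, xbr, ybr))
    return boxes

# Two detected top-left corners and two detected bottom-right corners.
candidates = compose_boxes([(10, 10), (50, 60)], [(40, 30), (90, 100)])
```

Three of the four pairs are geometrically valid; the pair (50, 60) with (40, 30) is rejected because the bottom-right corner lies above and to the left of the top-left corner.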

Summary and comparison

Different representation approaches usually have strengths in different aspects. For example, object based representations (bounding box and center) are better in category classification while worse in object localization than part based representations (corners). Object based representations are also more friendly for end-to-end learning because they do not require a post-processing step to compose objects from corners as in part-based representation methods. Comparing different object-based representations, while the bounding box representation enables more sophisticated feature extraction and multiple-stage processing, the center representation is attractive due to the simplified system design.

2.2 Object Detection Frameworks in a Representation View

Object detection methods can be seen as evolving intermediate object/part representations until the final bounding box outputs. The representation flows largely shape different object detectors. Several major categorizations of object detectors are based on such representation flows, such as top-down (object-based representation) vs bottom-up (part-based representation), anchor-based (bounding box based) vs anchor-free (center point based), and single-stage (one-time representation flow) vs multiple-stage (multiple-time representation flow). Figure 2 shows the representation flows of several typical object detection frameworks, as detailed below.

Faster R-CNN [24] employs bounding boxes as its intermediate object representations in all stages. At the beginning, multiple anchor boxes at each feature map position are hypothesized to coarsely cover the 4-d bounding box space in an image, i.e., 3 anchor boxes with different aspect ratios. The image feature vector at the center point is extracted to represent each anchor box, which is then used for foreground/background classification and localization refinement. After anchor box selection and localization refinement, the object representation is evolved to a set of proposal boxes, where the object features are usually extracted by an RoIAlign operator within each box area. The final bounding box outputs are obtained by localization refinement, through a small network on the proposal features.
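The anchor → proposal → detection flow amounts to refining a box twice. The sketch below assumes the commonly used offset parameterization (center shifts scaled by box size, log-scale width and height changes); this section does not state the parameterization, and the function name `refine_box` and the offset values are hypothetical.

```python
import math

def refine_box(box, deltas):
    """Apply (dx, dy, dw, dh) offsets to a center-size box (xc, yc, w, h)."""
    xc, yc, w, h = box
    dx, dy, dw, dh = deltas
    return (xc + dx * w, yc + dy * h, w * math.exp(dw), h * math.exp(dh))

anchor = (50.0, 40.0, 20.0, 10.0)
# First refinement: anchor -> proposal (offsets would come from the first-stage head).
proposal = refine_box(anchor, (0.1, -0.2, 0.0, 0.0))
# Second refinement: proposal -> detection (offsets would come from the head on proposal features).
detection = refine_box(proposal, (0.0, 0.0, math.log(1.5), 0.0))
```

In this example the first step shifts the center to roughly (52, 38) and the second widens the box from 20 to about 30, leaving the height unchanged.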

RetinaNet [18] is a one-stage object detector, which also employs bounding boxes as its intermediate representation. Due to its one-stage nature, it usually requires denser anchor hypotheses, i.e., 9 anchor boxes at each feature map position. The final bounding box outputs are also obtained by applying a localization refinement head network.
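The difference in anchor density between the two detectors can be sketched with a small anchor generator. It assumes that the 3 anchors per position come from three aspect ratios at one scale and that the 9 anchors come from three scales times three aspect ratios; the stride, base size, ratio and scale values, and the function name `make_anchors`, are illustrative assumptions rather than values given in the text.

```python
import math

def make_anchors(feat_h, feat_w, stride, base_size, ratios, scales=(1.0,)):
    """Return center-size anchors (xc, yc, w, h) for every feature map position."""
    anchors = []
    for iy in range(feat_h):
        for ix in range(feat_w):
            # Anchor center in image coordinates (center of the feature map cell).
            xc, yc = (ix + 0.5) * stride, (iy + 0.5) * stride
            for scale in scales:
                area = (base_size * scale) ** 2
                for ratio in ratios:  # ratio = h / w, area fixed per scale
                    w = math.sqrt(area / ratio)
                    h = w * ratio
                    anchors.append((xc, yc, w, h))
    return anchors

ratios = (0.5, 1.0, 2.0)
# 3 anchors per position: one scale, three aspect ratios.
three_per_pos = make_anchors(2, 3, stride=8, base_size=32, ratios=ratios)
# 9 anchors per position: three scales, three aspect ratios.
nine_per_pos = make_anchors(2, 3, stride=8, base_size=32, ratios=ratios,
                            scales=(1.0, 2 ** (1 / 3), 2 ** (2 / 3)))
```

On a 2 × 3 feature map this yields 18 and 54 anchors respectively, so the one-stage configuration covers the box space three times more densely at every position.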
