
Published as a conference paper at ICLR 2021

DEFORMABLE DETR: DEFORMABLE TRANSFORMERS FOR END-TO-END OBJECT DETECTION

Xizhou Zhu¹∗, Weijie Su²∗‡, Lewei Lu¹, Bin Li², Xiaogang Wang¹,³, Jifeng Dai¹†

¹SenseTime Research

²University of Science and Technology of China

³The Chinese University of Hong Kong

{zhuwalter,luotto,daijifeng}@sensetime.com

jackroos@mail.ustc.edu.cn, binli@ustc.edu.cn

xgwang@ee.cuhk.edu.hk

ABSTRACT

DETR has been recently proposed to eliminate the need for many hand-designed components in object detection while demonstrating good performance. However, it suffers from slow convergence and limited feature spatial resolution, due to the limitation of Transformer attention modules in processing image feature maps. To mitigate these issues, we propose Deformable DETR, whose attention modules only attend to a small set of key sampling points around a reference. Deformable DETR can achieve better performance than DETR (especially on small objects) with 10× fewer training epochs. Extensive experiments on the COCO benchmark demonstrate the effectiveness of our approach. Code is released at https://github.com/fundamentalvision/Deformable-DETR.

1 INTRODUCTION

Modern object detectors employ many hand-crafted components (Liu et al., 2020), e.g., anchor generation, rule-based training target assignment, and non-maximum suppression (NMS) post-processing. They are not fully end-to-end. Recently, Carion et al. (2020) proposed DETR to eliminate the need for such hand-crafted components, and built the first fully end-to-end object detector, achieving very competitive performance. DETR utilizes a simple architecture that combines convolutional neural networks (CNNs) and Transformer (Vaswani et al., 2017) encoder-decoders. It exploits the versatile and powerful relation modeling capability of Transformers to replace the hand-crafted rules, under properly designed training signals.

Despite its interesting design and good performance, DETR has its own issues: (1) It requires many more training epochs to converge than existing object detectors. For example, on the COCO (Lin et al., 2014) benchmark, DETR needs 500 epochs to converge, which is around 10 to 20 times slower than Faster R-CNN (Ren et al., 2015). (2) DETR delivers relatively low performance at detecting small objects. Modern object detectors usually exploit multi-scale features, where small objects are detected from high-resolution feature maps. However, high-resolution feature maps lead to unacceptable complexity for DETR. These issues can be mainly attributed to the deficit of Transformer components in processing image feature maps. At initialization, the attention modules cast nearly uniform attention weights to all the pixels in the feature maps. Long training schedules are necessary for the attention weights to learn to focus on sparse meaningful locations. On the other hand, the attention weight computation in the Transformer encoder is quadratic w.r.t. the number of pixels. Thus, processing high-resolution feature maps incurs very high computational and memory complexity.

In the image domain, deformable convolution (Dai et al., 2017) is a powerful and efficient mechanism to attend to sparse spatial locations. It naturally avoids the above-mentioned issues. However, it lacks the element relation modeling mechanism, which is the key to the success of DETR.

∗ Equal contribution.

† Corresponding author.

‡ Work is done during an internship at SenseTime Research.


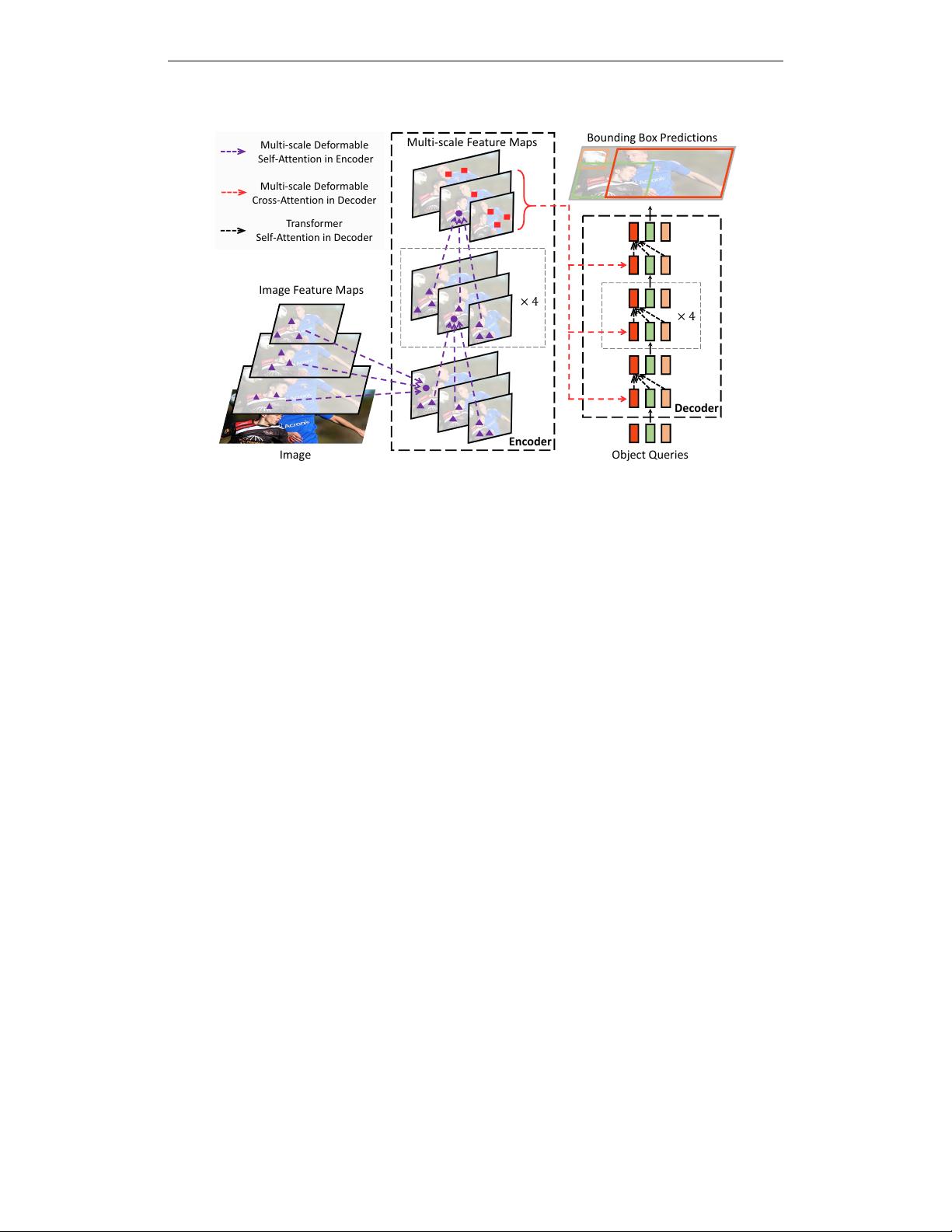

Decoder

Object Queries

Bounding Box Predictions

× 4

Multi-scale Feature Maps

Encoder

Multi-scale Deformable

Self-Attention in Encoder

Multi-scale Deformable

Cross-Attention in Decoder

Transformer

Self-Attention in Decoder

Image

Image Feature Maps

× 4

Figure 1: Illustration of the proposed Deformable DETR object detector.

In this paper, we propose Deformable DETR, which mitigates the slow convergence and high complexity issues of DETR. It combines the best of the sparse spatial sampling of deformable convolution and the relation modeling capability of Transformers. We propose the deformable attention module, which attends to a small set of sampling locations as a pre-filter for prominent key elements out of all the feature map pixels. The module can be naturally extended to aggregating multi-scale features, without the help of FPN (Lin et al., 2017a). In Deformable DETR, we utilize (multi-scale) deformable attention modules to replace the Transformer attention modules processing feature maps, as shown in Fig. 1.

Deformable DETR opens up possibilities for us to exploit variants of end-to-end object detectors, thanks to its fast convergence and its computational and memory efficiency. We explore a simple and effective iterative bounding box refinement mechanism to improve the detection performance. We also try a two-stage Deformable DETR, where the region proposals are also generated by a variant of Deformable DETR and are then fed into the decoder for iterative bounding box refinement.

Extensive experiments on the COCO (Lin et al., 2014) benchmark demonstrate the effectiveness of our approach. Compared with DETR, Deformable DETR can achieve better performance (especially on small objects) with 10× fewer training epochs. The proposed variant of two-stage Deformable DETR can further improve the performance. Code is released at https://github.com/fundamentalvision/Deformable-DETR.

2 RELATED WORK

Efficient Attention Mechanism. Transformers (Vaswani et al., 2017) involve both self-attention and cross-attention mechanisms. One of the most well-known concerns of Transformers is the high time and memory complexity at vast key element numbers, which hinders model scalability in many cases. Recently, many efforts have been made to address this problem (Tay et al., 2020b), which can be roughly divided into three categories in practice.

The first category is to use pre-defined sparse attention patterns on keys. The most straightforward paradigm is restricting the attention pattern to fixed local windows. Most works (Liu et al., 2018a; Parmar et al., 2018; Child et al., 2019; Huang et al., 2019; Ho et al., 2019; Wang et al., 2020a; Hu et al., 2019; Ramachandran et al., 2019; Qiu et al., 2019; Beltagy et al., 2020; Ainslie et al., 2020; Zaheer et al., 2020) follow this paradigm. Although restricting the attention pattern to a local neighborhood can decrease the complexity, it loses global information. To compensate, Child et al. (2019); Huang et al. (2019); Ho et al. (2019); Wang et al. (2020a) attend to key elements at fixed intervals to significantly increase the receptive field on keys. Beltagy et al. (2020); Ainslie et al. (2020); Zaheer et al. (2020) allow a small number of special tokens to access all key elements. Zaheer et al. (2020); Qiu et al. (2019) also add some pre-fixed sparse attention patterns to attend to distant key elements directly.
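As a rough illustration of the first category, the sketch below lists which keys a query may attend to under a fixed local window, optionally extended with keys at fixed intervals. It assumes a 1-D sequence of keys; the function name `local_strided_keys` and the choice of strided keys (those sharing the query's index modulo the stride) are hypothetical and are not taken from any of the cited works.

```python
def local_strided_keys(query_index, num_keys, window=2, stride=None):
    """Return the sorted key indices a query may attend to.

    Hypothetical sketch of a pre-defined sparse pattern on a 1-D sequence:
    a local window of radius `window`, optionally extended with keys at
    fixed intervals of `stride` to enlarge the receptive field.
    """
    if not 0 <= query_index < num_keys:
        raise ValueError("query_index must lie inside the key sequence")

    # Local neighbourhood around the query, clipped to the sequence bounds.
    start = max(0, query_index - window)
    stop = min(num_keys, query_index + window + 1)
    keys = set(range(start, stop))

    # Assumed strided pattern: every key whose index matches the query
    # index modulo the stride.
    if stride:
        keys.update(range(query_index % stride, num_keys, stride))

    return sorted(keys)


if __name__ == "__main__":
    print(local_strided_keys(5, 12, window=1))
    print(local_strided_keys(5, 12, window=1, stride=4))
```

The number of attended keys depends on the window size and the stride rather than growing with every added key as in dense attention, which is the source of the complexity reduction; the local-only variant also shows why global information is lost.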

The second category is to learn data-dependent sparse attention. Kitaev et al. (2020) propose a locality sensitive hashing (LSH) based attention, which hashes both the query and key elements to different bins. A similar idea is proposed by Roy et al. (2020), where k-means finds the most related keys. Tay et al. (2020a) learn block permutation for block-wise sparse attention.

The third category is to explore the low-rank property in self-attention. Wang et al. (2020b) reduce the number of key elements through a linear projection on the size dimension instead of the channel dimension. Katharopoulos et al. (2020); Choromanski et al. (2020) rewrite the calculation of self-attention through kernelization approximation.

In the image domain, the designs of efficient attention mechanisms (e.g., Parmar et al. (2018); Child et al. (2019); Huang et al. (2019); Ho et al. (2019); Wang et al. (2020a); Hu et al. (2019); Ramachandran et al. (2019)) are still limited to the first category. Despite the theoretically reduced complexity, Ramachandran et al. (2019); Hu et al. (2019) admit that such approaches are much slower in implementation than traditional convolution with the same FLOPs (at least 3× slower), due to the intrinsic limitation in memory access patterns.

On the other hand, as discussed in Zhu et al. (2019a), there are variants of convolution, such as deformable convolution (Dai et al., 2017; Zhu et al., 2019b) and dynamic convolution (Wu et al., 2019), that can also be viewed as self-attention mechanisms. In particular, deformable convolution operates much more effectively and efficiently on image recognition than Transformer self-attention. However, it lacks the element relation modeling mechanism.

Our proposed deformable attention module is inspired by deformable convolution, and belongs to the second category. It only focuses on a small fixed set of sampling points predicted from the feature of query elements. Different from Ramachandran et al. (2019); Hu et al. (2019), deformable attention is just slightly slower than traditional convolution under the same FLOPs.
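To make the idea of attending to a small set of sampling points concrete, here is a minimal single-head, single-scale sketch. It is not the module defined later in the paper: it assumes the sampling offsets and attention logits are already given (in the paper they are predicted from the query feature), uses a scalar feature map, and the helper names `bilinear_sample` and `sparse_attend` are hypothetical.

```python
import math


def bilinear_sample(feat, x, y):
    """Bilinearly sample a 2-D map (list of rows) at fractional (x, y).

    Neighbours that fall outside the map contribute zero.
    """
    height, width = len(feat), len(feat[0])
    x0, y0 = math.floor(x), math.floor(y)
    value = 0.0
    for yy in (y0, y0 + 1):
        for xx in (x0, x0 + 1):
            if 0 <= yy < height and 0 <= xx < width:
                weight = (1 - abs(x - xx)) * (1 - abs(y - yy))
                value += weight * feat[yy][xx]
    return value


def sparse_attend(feat, ref, offsets, logits):
    """Aggregate K sampled values around a reference point.

    `ref` is the (x, y) reference point, `offsets` holds K (dx, dy) pairs,
    and `logits` holds K unnormalised attention scores. Both are assumed
    inputs in this sketch.
    """
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    weights = [e / total for e in exps]  # softmax over the K points only

    ref_x, ref_y = ref
    return sum(
        w * bilinear_sample(feat, ref_x + dx, ref_y + dy)
        for w, (dx, dy) in zip(weights, offsets)
    )


if __name__ == "__main__":
    feature_map = [[float(r * 4 + c) for c in range(4)] for r in range(4)]
    print(sparse_attend(feature_map, (1.5, 1.5),
                        [(0.0, 0.0), (1.0, 0.0), (-0.5, 0.5)],
                        [0.2, 0.1, -0.3]))
```

In this sketch the cost per query depends on the number of sampling points K rather than on the number of pixels in the feature map, which is the property the paragraph above relies on.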

Multi-scale Feature Representation for Object Detection. One of the main difficulties in object detection is to effectively represent objects at vastly different scales. Modern object detectors usually exploit multi-scale features to accommodate this. As one of the pioneering works, FPN (Lin et al., 2017a) proposes a top-down path to combine multi-scale features. PANet (Liu et al., 2018b) further adds a bottom-up path on top of FPN. Kong et al. (2018) combine features from all scales by a global attention operation. Zhao et al. (2019) propose a U-shape module to fuse multi-scale features. Recently, NAS-FPN (Ghiasi et al., 2019) and Auto-FPN (Xu et al., 2019) were proposed to automatically design cross-scale connections via neural architecture search. Tan et al. (2020) propose BiFPN, which is a repeated simplified version of PANet. Our proposed multi-scale deformable attention module can naturally aggregate multi-scale feature maps via the attention mechanism, without the help of these feature pyramid networks.

3 REVISITING TRANSFORMERS AND DETR

Multi-Head Attention in Transformers. Transformers (Vaswani et al., 2017) are a network architecture based on attention mechanisms for machine translation. Given a query element (e.g., a target word in the output sentence) and a set of key elements (e.g., source words in the input sentence), the multi-head attention module adaptively aggregates the key contents according to the attention weights that measure the compatibility of query-key pairs. To allow the model to focus on contents from different representation subspaces and different positions, the outputs of different attention heads are linearly aggregated with learnable weights. Let $q \in \Omega_q$ index a query element with representation feature $z_q \in \mathbb{R}^{C}$, and $k \in \Omega_k$ index a key element with representation feature $x_k \in \mathbb{R}^{C}$, where $C$ is the feature dimension, and $\Omega_q$ and $\Omega_k$ specify the sets of query and key elements, respectively. Then the multi-head attention feature is calculated by

$$\text{MultiHeadAttn}(z_q, x) = \sum_{m=1}^{M} W_m \Big[ \sum_{k \in \Omega_k} A_{mqk} \cdot W'_m x_k \Big], \tag{1}$$


where $m$ indexes the attention head, and $W'_m \in \mathbb{R}^{C_v \times C}$ and $W_m \in \mathbb{R}^{C \times C_v}$ are learnable weights ($C_v = C/M$ by default). The attention weights $A_{mqk} \propto \exp\{\frac{z_q^T U_m^T V_m x_k}{\sqrt{C_v}}\}$ are normalized as $\sum_{k \in \Omega_k} A_{mqk} = 1$, in which $U_m, V_m \in \mathbb{R}^{C_v \times C}$ are also learnable weights. To disambiguate different spatial positions, the representation features $z_q$ and $x_k$ are usually the concatenation/summation of element contents and positional embeddings.
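The following is a minimal pure-Python sketch of Eq. 1 for a single query, written to mirror the symbols above rather than to be efficient. The dictionary keys `U`, `V`, `Wp` (standing for $W'_m$), and `W`, as well as the helpers `matvec`, `softmax`, and `make_heads`, are hypothetical names introduced for illustration; the random initialization is likewise only an assumption.

```python
import math
import random


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]


def softmax(logits):
    """Numerically stable softmax, so the weights sum to 1 over the keys."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def multi_head_attn(z_q, xs, heads):
    """Evaluate Eq. 1 for one query feature z_q over key features xs.

    Each head is a dict with U, V, Wp of shape (C_v x C) and W of
    shape (C x C_v).
    """
    out = [0.0] * len(z_q)
    for head in heads:
        c_v = len(head["U"])
        query = matvec(head["U"], z_q)                 # U_m z_q
        keys = [matvec(head["V"], x) for x in xs]      # V_m x_k
        logits = [
            sum(a * b for a, b in zip(query, key)) / math.sqrt(c_v)
            for key in keys
        ]
        attn = softmax(logits)                         # A_mqk
        values = [matvec(head["Wp"], x) for x in xs]   # W'_m x_k
        # Inner sum over keys: sum_k A_mqk * W'_m x_k
        agg = [sum(a * v[i] for a, v in zip(attn, values)) for i in range(c_v)]
        # Outer projection W_m, accumulated over heads
        out = [o + p for o, p in zip(out, matvec(head["W"], agg))]
    return out


def make_heads(channels, num_heads, rng):
    """Build random weights with C_v = C / M (illustrative initialization)."""
    c_v = channels // num_heads

    def rand(rows, cols):
        return [[rng.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]

    return [
        {"U": rand(c_v, channels), "V": rand(c_v, channels),
         "Wp": rand(c_v, channels), "W": rand(channels, c_v)}
        for _ in range(num_heads)
    ]


if __name__ == "__main__":
    rng = random.Random(0)
    heads = make_heads(channels=4, num_heads=2, rng=rng)
    keys = [[rng.gauss(0.0, 1.0) for _ in range(4)] for _ in range(6)]
    print(multi_head_attn([1.0, 0.0, -1.0, 0.5], keys, heads))
```

The loops touch every key for every head, which makes the per-query cost proportional to the number of keys.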

There are two known issues with Transformers. One is that Transformers need long training schedules before convergence. Suppose the numbers of query and key elements are $N_q$ and $N_k$, respectively. Typically, with proper parameter initialization, $U_m z_q$ and $V_m x_k$ follow a distribution with mean 0 and variance 1, which makes the attention weights $A_{mqk} \approx \frac{1}{N_k}$ when $N_k$ is large. This leads to ambiguous gradients for input features. Thus, long training schedules are required so that the attention weights can focus on specific keys. In the image domain, where the key elements are usually image pixels, $N_k$ can be very large and the convergence is tedious.
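A small numerical sketch can illustrate how diffuse the attention weights are at initialization. As a simplifying assumption, the scaled query-key scores are drawn directly from a standard normal distribution instead of being computed from $U_m z_q$ and $V_m x_k$; the function name `attention_spread` and the reported statistics are hypothetical choices made for this illustration.

```python
import math
import random


def attention_spread(num_keys, seed=0):
    """Softmax random unit-variance logits and report how spread out they are.

    Assumption: logits ~ N(0, 1) stand in for the scaled dot products
    z_q^T U_m^T V_m x_k / sqrt(C_v) at initialization.
    """
    rng = random.Random(seed)
    logits = [rng.gauss(0.0, 1.0) for _ in range(num_keys)]

    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    weights = [e / total for e in exps]

    entropy = -sum(w * math.log(w) for w in weights)
    return {
        "uniform_weight": 1.0 / num_keys,
        "max_weight": max(weights),
        # 1.0 would mean perfectly uniform attention over all keys.
        "entropy_ratio": entropy / math.log(num_keys),
    }


if __name__ == "__main__":
    for n in (100, 10_000):
        print(n, attention_spread(n))
```

Under this assumption, every individual weight stays small and the entropy stays close to that of a uniform distribution when there are many keys, so no key stands out before training.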

On the other hand, the computational and memory complexity of multi-head attention can be very high with numerous query and key elements. The computational complexity of Eq. 1 is $O(N_q C^2 + N_k C^2 + N_q N_k C)$. In the image domain, where the query and key elements are both pixels, $N_q = N_k \gg C$, and the complexity is dominated by the third term, $O(N_q N_k C)$. Thus, the multi-head attention module suffers from quadratic complexity growth with the feature map size.
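The three terms of this complexity can be compared with a few lines of arithmetic. The sketch below only counts the terms inside the big-O expression (constant factors are ignored); the function names and the channel count of 256 are illustrative assumptions.

```python
def attention_cost_terms(num_queries, num_keys, channels):
    """Return the three terms of O(N_q C^2 + N_k C^2 + N_q N_k C)."""
    return {
        "query_proj": num_queries * channels ** 2,
        "key_proj": num_keys * channels ** 2,
        "pairwise": num_queries * num_keys * channels,
    }


def pixel_self_attention_terms(height, width, channels=256):
    """Queries and keys are both the H x W pixels of one feature map."""
    num_pixels = height * width
    return attention_cost_terms(num_pixels, num_pixels, channels)


if __name__ == "__main__":
    for side in (32, 64, 128):
        terms = pixel_self_attention_terms(side, side)
        share = terms["pairwise"] / sum(terms.values())
        print(f"{side}x{side}: pairwise share of total = {share:.3f}")
```

Doubling both sides of the feature map multiplies the pixel count by 4 and the pairwise term by 16, while the two projection terms grow only by 4.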

DETR. DETR (Carion et al., 2020) is built upon the Transformer encoder-decoder architecture, combined with a set-based Hungarian loss that forces unique predictions for each ground-truth bounding box via bipartite matching. We briefly review the network architecture as follows.

Given the input feature maps $x \in \mathbb{R}^{C \times H \times W}$ extracted by a CNN backbone (e.g., ResNet (He et al., 2016)), DETR exploits a standard Transformer encoder-decoder architecture to transform the input feature maps into features of a set of object queries. A 3-layer feed-forward neural network (FFN) and a linear projection are added on top of the object query features (produced by the decoder) as the detection head. The FFN acts as the regression branch to predict the bounding box coordinates $b \in [0, 1]^4$, where $b = \{b_x, b_y, b_w, b_h\}$ encodes the normalized box center coordinates and the box width and height (relative to the image size). The linear projection acts as the classification branch to produce the classification results.
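For readers who want to see what the normalized box encoding means in practice, the sketch below converts $b = \{b_x, b_y, b_w, b_h\}$ into pixel corner coordinates. The function name `box_to_pixel_corners` and the corner output format `(x_min, y_min, x_max, y_max)` are assumptions made for illustration, not part of DETR's published interface.

```python
def box_to_pixel_corners(box, image_width, image_height):
    """Convert a normalized (b_x, b_y, b_w, b_h) box to pixel corners.

    b_x, b_y are the box center and b_w, b_h its width and height,
    all relative to the image size and therefore in [0, 1].
    """
    if any(not 0.0 <= value <= 1.0 for value in box):
        raise ValueError("normalized box values must lie in [0, 1]")

    b_x, b_y, b_w, b_h = box
    x_min = (b_x - b_w / 2) * image_width
    y_min = (b_y - b_h / 2) * image_height
    x_max = (b_x + b_w / 2) * image_width
    y_max = (b_y + b_h / 2) * image_height
    return (x_min, y_min, x_max, y_max)


if __name__ == "__main__":
    # A box centered in a 640 x 480 image covering 20% of the width
    # and 40% of the height.
    print(box_to_pixel_corners((0.5, 0.5, 0.2, 0.4), 640, 480))
```

Because the encoding is relative to the image size, the same prediction can be mapped onto any resolution by changing only `image_width` and `image_height`.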

For the Transformer encoder in DETR, both query and key elements are pixels in the feature maps. The inputs are ResNet feature maps (with encoded positional embeddings). Let $H$ and $W$ denote the feature map height and width, respectively. The computational complexity of self-attention is $O(H^2 W^2 C)$, which grows quadratically with the spatial size.

For the Transformer decoder in DETR, the input includes both feature maps from the encoder and $N$ object queries represented by learnable positional embeddings (e.g., $N = 100$). There are two types of attention modules in the decoder, namely cross-attention and self-attention modules. In the cross-attention modules, object queries extract features from the feature maps. The query elements are the object queries, and the key elements are the output feature maps from the encoder. Here, $N_q = N$, $N_k = H \times W$, and the complexity of the cross-attention is $O(HWC^2 + NHWC)$. The complexity grows linearly with the spatial size of feature maps. In the self-attention modules, object queries interact with each other, so as to capture their relations. The query and key elements are both the object queries. Here, $N_q = N_k = N$, and the complexity of the self-attention module is $O(2NC^2 + N^2 C)$. The complexity is acceptable with a moderate number of object queries.
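The different growth rates of the three attention modules in DETR can be checked by evaluating the expressions quoted in the text for two feature map sizes. This is only a counting sketch: the function name, the channel count of 256, and the example feature map sizes are assumptions, while $N = 100$ follows the example in the text.

```python
def detr_attention_costs(height, width, num_queries=100, channels=256):
    """Evaluate the complexity expressions quoted for DETR's attention.

    encoder_self  : O(H^2 W^2 C)
    decoder_cross : O(H W C^2 + N H W C)
    decoder_self  : O(2 N C^2 + N^2 C)
    """
    num_pixels = height * width
    return {
        "encoder_self": num_pixels ** 2 * channels,
        "decoder_cross": num_pixels * channels ** 2
        + num_queries * num_pixels * channels,
        "decoder_self": 2 * num_queries * channels ** 2
        + num_queries ** 2 * channels,
    }


if __name__ == "__main__":
    base = detr_attention_costs(25, 34)
    doubled = detr_attention_costs(50, 68)  # twice the height and width
    for name in base:
        print(f"{name}: grows by a factor of {doubled[name] / base[name]:.0f}")
```

Doubling the height and width multiplies the encoder self-attention count by 16 and the decoder cross-attention count by 4, and leaves the decoder self-attention count unchanged, matching the quadratic, linear, and constant dependence on spatial size described above.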

DETR is an attractive design for object detection, which removes the need for many hand-designed components. However, it also has its own issues. These issues can be mainly attributed to the deficits of Transformer attention in handling image feature maps as key elements: (1) DETR has relatively low performance in detecting small objects. Modern object detectors use high-resolution feature maps to better detect small objects. However, high-resolution feature maps would lead to an unacceptable complexity for the self-attention module in the Transformer encoder of DETR, which has a quadratic complexity with the spatial size of input feature maps. (2) Compared with modern object detectors, DETR requires many more training epochs to converge. This is mainly because the attention modules processing image features are difficult to train. For example, at initialization, the cross-attention modules apply almost average attention over the whole feature maps, whereas at the end of training the attention maps are learned to be very sparse, focusing only on the object
