
Received 17 June 2023, accepted 5 July 2023, date of publication 10 July 2023, date of current version 2 August 2023.

Digital Object Identifier 10.1109/ACCESS.2023.3293532

Recurrent DETR: Transformer-Based Object Detection for Crowded Scenes

HYEONG KYU CHOI¹, CHONG KEUN PAIK², HYUN WOO KO¹,³, MIN-CHUL PARK¹,³, AND HYUNWOO J. KIM¹

¹Department of Computer Science and Engineering, Korea University, Seoul 02841, Republic of Korea

²Samsung Electro-Mechanics, Suwon 16674, Republic of Korea

³Center for Opto-Electronic Materials and Device, Korea Institute of Science and Technology, Seoul 02792, Republic of Korea

Corresponding author: Hyunwoo J. Kim (hyunwoojkim@korea.ac.kr)

This work was supported in part by the Korea Institute of Planning and Evaluation for Technology in Food, Agriculture and Forestry (IPET) and the Korea Smart Farm Research and Development Foundation (KosFarm) through the Smart Farm Innovation Technology Development Program by the Ministry of Agriculture, Food and Rural Affairs (MAFRA), and Ministry of Science and ICT (MSIT), Rural Development Administration (RDA), under Grant 421025-04; in part by the Grant of the Korea Health Technology Research and Development Project through the Korea Health Industry Development Institute (KHIDI), funded by the Ministry of Health and Welfare, Republic of Korea, under Grant HR20C0021; and in part by the Neubla.

ABSTRACT Recent Transformer-based object detectors have achieved remarkable performance on benchmark datasets, but few have addressed the real-world challenge of object detection in crowded scenes. This limitation stems from the fixed query set size of the transformer decoder, which caps the number of predictions the model can make. To overcome this challenge, we propose Recurrent Detection Transformer (Recurrent DETR), an object detector that iterates the decoder block to render more predictions with a finite number of query tokens. Recurrent DETR adaptively controls the number of decoder block iterations based on the crowdedness or complexity of the image, resulting in a variable-size prediction set. This is enabled by our novel Pondering Hungarian Loss, which helps the model learn when additional computation is required to identify all the objects in a crowded scene. We demonstrate the effectiveness of Recurrent DETR on two datasets: COCO 2017, which represents a standard setting, and CrowdHuman, which features a crowded setting. On these datasets, Recurrent DETR improves over its base architectures by 0.8 AP and 0.4 AP, respectively. Moreover, we conduct comprehensive analyses under different query set size constraints to provide a thorough evaluation of the proposed method.

INDEX TERMS Computer vision, object detection, detection transformers, dynamic computation.

I. INTRODUCTION

Object detection is a fundamental task in computer vision that involves both recognizing and localizing objects within an image. Several methods based on convolutional neural networks (CNNs) first locate probable objects and then classify them [1], [2], [3], whereas others locate and classify objects simultaneously in a single stage [4], [5], [6]. While CNNs have achieved outstanding performance on this task, recent works have explored Transformer [7] architectures to further improve performance. The self-attention mechanism in Transformers provides a wider receptive field per layer, and the high capacity of the architecture yields strong performance.

The associate editor coordinating the review of this manuscript and approving it for publication was Varuna De Silva.

One of the main challenges with transformer-based object detectors is their high computational overhead. The burden stems from the large number of spurious tokens fed into the decoder blocks, which do not necessarily query foreground objects. Several works have attempted to address this issue by pruning tokens to improve computational efficiency [8], [9]. However, few have addressed the real-world challenge of object detection in crowded scenes, where there are too many objects to identify with a fixed number of query tokens and more computation might be required. Since simply increasing the number of query tokens may be infeasible due to resource constraints, other means of expanding the prediction set are necessary.

VOLUME 11, 2023

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 License.

For more information, see https://creativecommons.org/licenses/by-nc-nd/4.0/

78623

H. K. Choi et al.: Recurrent DETR: Transformer-Based Object Detection for Crowded Scenes

FIGURE 1. Recurrent DETR ponders. Depending on the crowdedness of the image, Recurrent DETR adaptively controls the number of iterations. In each iteration, it detects different objects at different positions.

To address this challenge, we propose Recurrent Detection Transformer (Recurrent DETR), a learning method for transformer detectors that iterates the decoder block to generate more predictions. Recurrent DETR adaptively controls the number of iterations depending on the crowdedness and difficulty of the input image, resulting in a variable-size prediction set. That is, our model ponders for more computational steps when the scene is crowded with foreground objects (Figure 1) and for fewer steps when the scene is relatively sparse. This is enabled by our novel Pondering Hungarian Loss, which helps the model learn when to halt the decoder iteration given the current token state.

To the best of our knowledge, our approach is the first transformer-based object detector that dynamically adapts its computation to sample difficulty. It can render a larger prediction set with only a fixed number of query tokens, improving the usability of previously trained detection transformers. We first evaluate the effectiveness of Recurrent DETR on the standard object detection benchmark, COCO 2017 [10]. To further verify our method's performance on crowded scenes, we provide experimental results on CrowdHuman [11] and on our self-constructed CrowdHuman++, which contains four times as many objects per image as the original CrowdHuman dataset.

Our contributions are threefold:

• We propose Recurrent DETR, an object detector specifically designed for crowded scenes that adaptively iterates the decoder block to output a variable-size prediction set.

• We formulate the Pondering Hungarian Loss, which enables dynamic iterations in transformer-based object detectors. We also introduce the Novelty Bias, which enforces sufficient content difference among iterations so that the model learns to halt dynamically.

• We improve the performance of the base architectures on the COCO 2017 and CrowdHuman benchmark datasets by significant margins. We also demonstrate Recurrent DETR's robustness under various query set sizes through extensive analyses of its learning properties.

Section II reviews the background required for Recurrent DETR. Section III describes the architecture and loss functions of our method, whose effectiveness is evidenced by the experimental results in Section IV, which also provides extensive analyses supporting the utility of Recurrent DETR.

II. RELATED WORKS

A. TRANSFORMERS FOR OBJECT DETECTION

The Transformer [7] model, which has proven successful in natural language processing, has been widely applied to various tasks, including image classification [12], [13], [14], [15], [16], [17], object detection [8], [9], [18], [19], [20], [21], [22], [23], [24], [25], human-object interaction detection [26], [27], [28], [29], [30], and efficient deep learning [31], [32], [33], [34]. In object detection in particular, DETR [18] first introduced the transformer module, significantly boosting performance, and Deformable DETR [22] provided an effective means of gathering token information via deformable attention. Its efficiency enabled the adoption of multi-scale feature maps, thereby improving detection performance on small objects. Many subsequent works have tried to further enhance detection performance [19], [20], [21], and some have addressed the high computational cost [8], [9], [23], [24], [25] by reducing the number of tokens, encoder layers, decoder layers, and so on. Several works have also studied object detection in crowded scenes with occlusions [35], [36], [37]. Beyond these benchmarks, transformers have been actively adopted in various applications, including autonomous driving [38], [39], animal detection and segmentation [40], [41], and produce detection [42], [43].

B. LEARNING TO PONDER

The required amount of computation varies not only by task

but also by sample. Relatively difficult samples may take

longer computation steps, and vice versa for easier ones.

Accordingly, methods to dynamically assign computational

graphs have long been researched. ACT [44] introduced

the pondering mechanism for RNN’s to adaptively control

computation time. Dynamic Routing Neural Networks [45]

is another branch of learning to ponder, where the network

learns to route different paths depending on the input signal.

Similar approaches have been applied for NLP transformers

78624 VOLUME 11, 2023

H. K. Choi et al.: Recurrent DETR: Transformer-Based Object Detection for Crowded Scenes

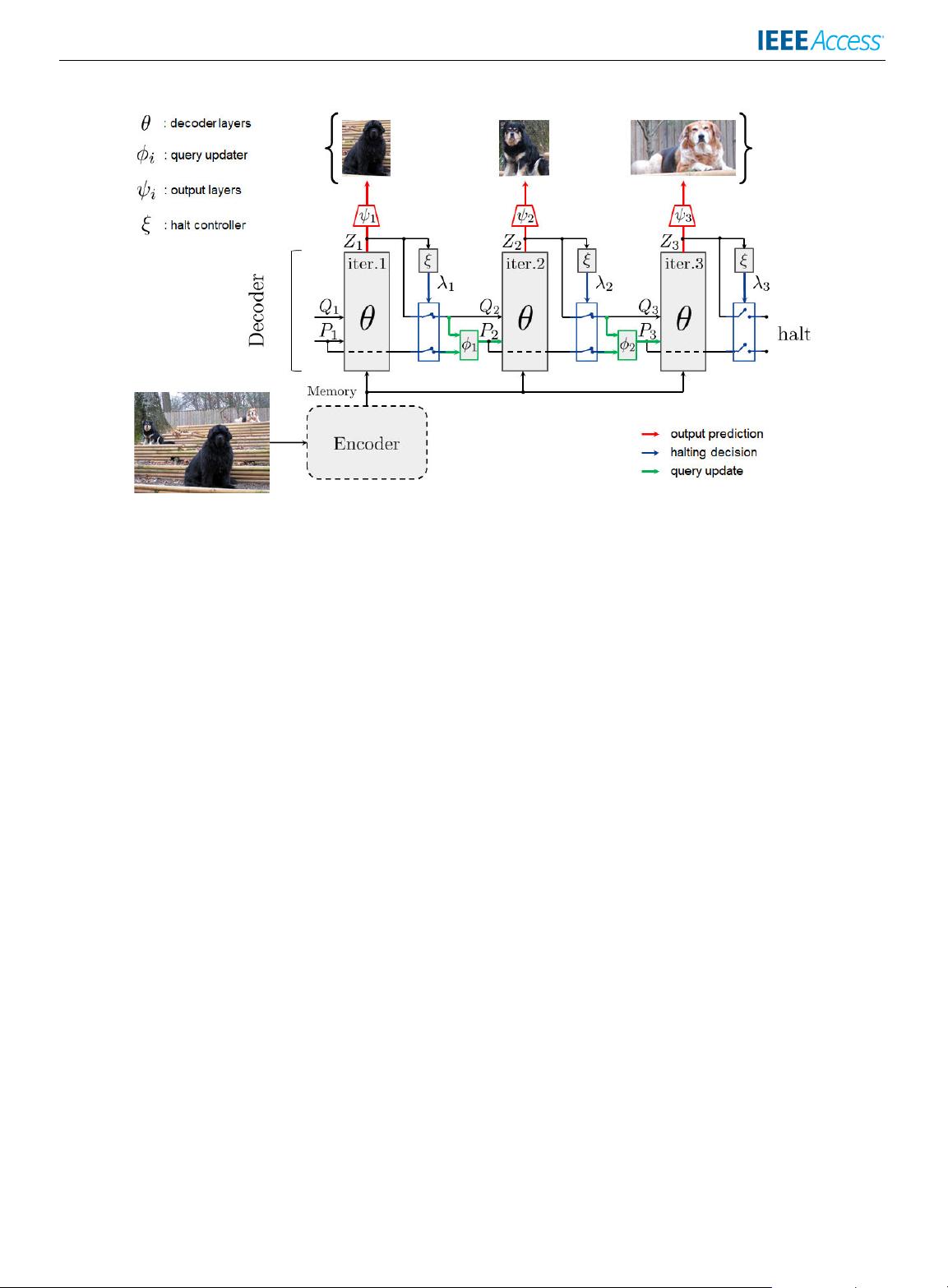

FIGURE 2. Overall Recurrent DETR architecture. The encoder extracts the input image feature, whose parameters are frozen.

The decoder (θ ) takes the image feature and initial query tokens Q

1

and P

1

to compute the output Z

1

, which is used to

localize and classify foreground objects (ψ

t

). This decoder block is iterated multiple times until the halt controller (ξ) decides

to halt, depending on the crowdedness of the scene. For each new iteration, the query updater φ

t

updates the positional

embeddings. When the iteration ends, the predictions from each computation step are finally unioned.

that adaptively iterate the encoder and decoder structure for

the prediction of word tokens [46]. Recently, PonderNet [47]

proposed a general loss form that learns to dynamically halt

when the target loss is minimized. AdaViT [48] applied these

concepts to learn to halt the processing of background tokens,

which can be redundant for image classification.

III. METHODS

In this section, we introduce Recurrent Detection Transformer (Recurrent DETR), a simple and intuitive framework that allows a variable-size prediction set. We first explain the prerequisite loss functions in Section III-A. Section III-B then introduces the architectural details, and Section III-C proposes the Pondering Hungarian Loss.

A. PRELIMINARIES

Here, we describe the two primary loss functions on which our approach builds: the Hungarian loss [49] and the Ponder loss [47].

1) HUNGARIAN LOSS

Transformer-based detection models [9], [18], [22], [23] formulate object detection as a set prediction problem. Since detection transformers output an unordered prediction set, the Hungarian loss [49] is adopted to map the permuted outputs to the ground-truth labels. Before the loss is computed, bipartite matching is performed between the prediction set $\hat{Y} = \{\hat{y}_i\}_{i=1}^{M}$ and the target set $Y$, which is padded with the no object class ($\varnothing$) so that both sets have size $M$. Hungarian matching finds the optimal permutation $\sigma^* \in S_M$ of $\hat{Y}$, where $S_M$ denotes the set of all permutations of $M$ elements:

$$\sigma^* = \arg\min_{\sigma \in S_M} \sum_{i=1}^{M} \mathcal{L}_{\text{match}}\big(\hat{Y}_{\sigma(i)}, Y_i\big), \tag{1}$$

such that

$$\mathcal{L}_{\text{match}}\big(\hat{Y}_{\sigma(i)}, Y_i\big) = \mathbb{1}_{\{c_i \neq \varnothing\}}\, \mathcal{L}_{\text{cls}}\big(\hat{Y}_{\sigma(i)}, Y_i\big) + \mathbb{1}_{\{c_i \neq \varnothing\}}\, \mathcal{L}_{\text{bbox}}\big(\hat{Y}_{\sigma(i)}, Y_i\big), \tag{2}$$

where $\sigma(i)$ maps the original index $i$ to a new permuted index with respect to $\sigma \in S_M$, $c_i$ is the ground-truth class of the target $Y_i$, and $\mathbb{1}_{\{c_i \neq \varnothing\}}$ is an indicator function that returns 1 if $c_i$ is not the no object class. The Hungarian loss $\mathcal{L}_{\text{hung}}$ is then computed as

$$\mathcal{L}_{\text{hung}} = \sum_{i=1}^{M} \Big[\mathcal{L}_{\text{cls}}\big(\hat{Y}_{\sigma^*(i)}, Y_i\big) + \mathbb{1}_{\{c_i \neq \varnothing\}}\, \mathcal{L}_{\text{bbox}}\big(\hat{Y}_{\sigma^*(i)}, Y_i\big)\Big], \tag{3}$$

where $\mathcal{L}_{\text{cls}}$ is the classification loss between the permuted prediction set $\hat{Y}_{\sigma^*}$ and the target set $Y$. Specifically, Deformable DETR [22] uses the Focal loss [6] as the classification loss. $\mathcal{L}_{\text{bbox}}$ is the bounding box loss, i.e., the sum of the L1 loss and the generalized IoU loss [50] of the predicted bounding box coordinates.
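To make Eqs. (1) to (3) concrete, the following minimal Python sketch matches a toy prediction set to a padded target set by brute force over all permutations in $S_M$ and then evaluates the Hungarian loss. It is an illustration under stated assumptions, not the authors' implementation: the pairwise tables `cls_loss` and `bbox_loss` are hypothetical precomputed values, `NO_OBJECT` (here `None`) is a hypothetical stand-in for the no object class, and practical detectors solve Eq. (1) with the polynomial-time Hungarian algorithm rather than enumeration.

```python
from itertools import permutations

NO_OBJECT = None  # hypothetical marker for the padded "no object" class


def hungarian_match(cost):
    """Brute-force Eq. (1): the sigma in S_M minimizing sum_i cost[sigma[i]][i].

    cost[p][k] is the matching cost between prediction p and target k.
    Enumerating all M! permutations is only feasible for toy sizes.
    """
    m = len(cost)
    best_sigma, best_total = None, float("inf")
    for sigma in permutations(range(m)):
        # sigma[i] is the index of the prediction assigned to target i
        total = sum(cost[sigma[i]][i] for i in range(m))
        if total < best_total:
            best_sigma, best_total = sigma, total
    return best_sigma, best_total


def hungarian_loss(cls_loss, bbox_loss, target_classes):
    """Eqs. (2) and (3) on precomputed pairwise loss tables (hypothetical inputs)."""
    m = len(target_classes)
    is_object = [c is not NO_OBJECT for c in target_classes]
    # Eq. (2): both matching terms are gated by the indicator 1{c_k != no object}
    cost = [[(cls_loss[p][k] + bbox_loss[p][k]) if is_object[k] else 0.0
             for k in range(m)] for p in range(m)]
    sigma, _ = hungarian_match(cost)
    # Eq. (3): classification loss for every pair, box loss only for real objects
    return sum(cls_loss[sigma[i]][i] + (bbox_loss[sigma[i]][i] if is_object[i] else 0.0)
               for i in range(m))
```

For example, with `target_classes = ["person", NO_OBJECT]`, the person is matched to whichever prediction has the lowest combined classification and box cost, while the padded slot contributes only a classification term to the loss.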

2) PONDER LOSS

PonderNet [47] provides a general learning scheme for dynamically adapting computation to the complexity of the given task. The loss requires each computational step to take the general form

$$\hat{y}_t,\ h_{t+1},\ \lambda_t = s(x, h_t), \tag{4}$$

where $s(\cdot, \cdot)$ is the computational step function that takes data $x$ and state $h_t$ as input and outputs the prediction $\hat{y}_t$, the next state $h_{t+1}$, and the probability $\lambda_t$ of halting computation at step $t$. The ponder loss is then defined as

$$\mathcal{L}_{\text{ponder}} = \sum_{t=1}^{T} p_t\, \mathcal{L}(\hat{y}_t, y) + \beta\, \mathrm{KL}\big(P(\xi)\,\|\,P_{\text{geom}}(\Lambda)\big), \tag{5}$$

where $\mathcal{L}$ is the task loss and $p_t$ is the probability of halting at computation step $t$, computed by

$$p_t = \lambda_t \prod_{k=1}^{t-1} (1 - \lambda_k), \tag{6}$$

i.e., the probability that computation continues through the first $t-1$ steps and halts for the first time at step $t$. $P(\xi)$ denotes the distribution of $p_t$ parameterized by the learnable parameter $\xi$, and $P_{\text{geom}}(\Lambda)$ denotes the geometric distribution with parameter $\Lambda$. The Kullback–Leibler divergence term, weighted by $\beta$, regularizes the learned distribution $P(\xi)$ to be similar to the geometric distribution parameterized by $\Lambda$.
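Eqs. (5) and (6) can be checked numerically. The plain-Python sketch below, an illustration rather than PonderNet's code, converts per-step halting probabilities $\lambda_t$ into $p_t$, builds a geometric prior, and evaluates the ponder loss. Truncating the prior at $T$ by assigning all remaining mass to the last step is an assumption made here so that both distributions sum to one; the names `lam_p` (standing for $\Lambda$) and `beta` are illustrative.

```python
import math


def halting_distribution(lambdas):
    """Eq. (6): p_t = lambda_t * prod_{k<t} (1 - lambda_k)."""
    probs, not_halted = [], 1.0
    for lam in lambdas:
        probs.append(lam * not_halted)  # halt for the first time at this step
        not_halted *= 1.0 - lam         # probability of still running afterwards
    return probs


def geometric_prior(lam_p, T):
    """Geometric prior with parameter lam_p, truncated at T (assumption:
    the leftover mass goes to step T so the prior sums to one)."""
    return halting_distribution([lam_p] * (T - 1) + [1.0])


def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) for discrete distributions; terms with p_t = 0 contribute 0."""
    return sum(pi * math.log((pi + eps) / (qi + eps)) for pi, qi in zip(p, q) if pi > 0)


def ponder_loss(lambdas, step_losses, lam_p, beta):
    """Eq. (5): expected task loss under p_t plus beta * KL(p || geometric prior)."""
    p = halting_distribution(lambdas)
    expected = sum(pt * lt for pt, lt in zip(p, step_losses))
    return expected + beta * kl_divergence(p, geometric_prior(lam_p, len(lambdas)))
```

Setting the last $\lambda_T$ to 1 forces a halt at step $T$, which is why `halting_distribution([0.5, 0.5, 1.0])` yields a proper distribution over three steps.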

Recurrent DETR builds on these two concepts, with an architecture designed to accommodate the dynamic pondering process. The architecture is described in the following section.

B. RECURRENT DETR

Here, we introduce the architectural components of Recurrent Detection Transformer (Recurrent DETR). Our method can easily be applied to any transformer-based detector by adding two components: the query updater and the halt controller. As depicted in Figure 2, both components are attached to the decoder blocks of the detection transformer. The decoder, denoted $\theta$, predicts the foreground objects with $\psi$. The halt controller $\xi$ then decides whether to halt based on the predicted probability $\lambda_t$, and, if Recurrent DETR decides to iterate further, the query updater $\phi_t$ updates the input query embedding. In this work, we specifically extend the decoder module of Deformable DETR [22].

1) HALT CONTROLLER

Recurrent DETR iterates the decoder module to render predictions in each iteration (the decoder plays the role of the step function $s$ in Eq. (4)). To adaptively control when to stop iterating, we define the halt controller network. At timestep $t$, the halt decision is sampled from a network that takes the $M$ output token embeddings $Z_t \in \mathbb{R}^{M \times D}$ as input. Global Average Pooling (GAP) is first applied to the output embeddings $Z_t$, and the pooled vector is then passed through a two-layer FFN with sigmoid activation:

$$\lambda_t = \mathrm{sigmoid}\big(\mathrm{FFN}_{\xi}(\mathrm{GAP}(Z_t))\big), \tag{7}$$

where $\lambda_t$ is the halting probability at iteration $t$ and $\xi$ denotes the parameters of the FFN layers, which are shared across iterations. At inference, the halting decision is made at the end of each step by sampling from a Bernoulli distribution parameterized by $\lambda_t$. During training, in contrast, the decoder is always iterated up to the maximal step $T$, and the predicted $\lambda_t$ ($t = 1, 2, \ldots, T$) are used for learning. The learning details are discussed in Sections III-C and IV-A.
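The halt controller of Eq. (7) and the inference-time Bernoulli decision can be sketched with plain Python lists, so that the example runs without a deep learning framework. The weights `w1`, `b1`, `w2`, `b2` are hypothetical stand-ins for $\xi$, and the ReLU between the two FFN layers is an assumption; the text specifies only a two-layer FFN followed by a sigmoid.

```python
import math
import random


def gap(tokens):
    """Global average pooling over the M output tokens Z_t (M x D) -> D-vector."""
    m = len(tokens)
    return [sum(tok[d] for tok in tokens) / m for d in range(len(tokens[0]))]


def linear(x, weight, bias):
    """Dense layer; weight has shape out_dim x in_dim."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weight, bias)]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def halt_probability(tokens, w1, b1, w2, b2):
    """Eq. (7): lambda_t = sigmoid(FFN_xi(GAP(Z_t))); the ReLU hidden layer is assumed."""
    hidden = [max(0.0, h) for h in linear(gap(tokens), w1, b1)]
    return sigmoid(linear(hidden, w2, b2)[0])


def should_halt(lam, rng=random):
    """Inference: draw one Bernoulli(lambda_t) sample; True means stop iterating."""
    return rng.random() < lam
```

During training the sample is not drawn; all $T$ steps are run and the probabilities themselves enter the loss.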

2) QUERY UPDATER

For each additional iteration, we update the query tokens. The query token embeddings are the sum of the semantic query $Q_t$ and the positional query $P_t$, both of which are learnable. Generally, the decoder module processes the input semantic query $Q_t$, while the positional query $P_t$ is used only to determine the attention values and is not updated during forward propagation. Thus, to encourage exploration of diverse image regions, we also update $P_t$ as

$$P_{t+1} = P_t + \tanh\big(\mathrm{FFN}_{\phi_t}(P_t + Z_t)\big), \tag{8}$$

where $\phi_t$ denotes the query updater parameters at iteration $t$. The FFN consists of two linear layers followed by a hyperbolic tangent activation, $\tanh$, which bounds each update and thus mitigates aggressive updates. The output tokens of the previous iteration, $Z_t$, are also fed into the query updater to provide semantic context for deciding where to explore. For the next-step semantic query $Q_{t+1}$, we directly use $Z_t$.
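The update in Eq. (8) can be sketched token by token as follows. This is an illustrative plain-Python version, not the authors' code: the weight lists stand in for $\phi_t$, the ReLU between the two linear layers is an assumption, and the final `tanh` is what keeps every coordinate of the step within $(-1, 1)$.

```python
import math


def linear(x, weight, bias):
    """Dense layer; weight has shape out_dim x in_dim."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weight, bias)]


def update_positional_query(P_t, Z_t, w1, b1, w2, b2):
    """Eq. (8): P_{t+1} = P_t + tanh(FFN_phi_t(P_t + Z_t)), applied per token.

    The ReLU between the two linear layers is an assumption.
    """
    P_next = []
    for p, z in zip(P_t, Z_t):
        x = [pi + zi for pi, zi in zip(p, z)]              # positional + semantic context
        hidden = [max(0.0, h) for h in linear(x, w1, b1)]
        step = [math.tanh(v) for v in linear(hidden, w2, b2)]  # each entry in (-1, 1)
        P_next.append([pi + si for pi, si in zip(p, step)])
    return P_next


def next_semantic_query(Z_t):
    """Q_{t+1} = Z_t: the decoder outputs are reused directly as semantic queries."""
    return [list(z) for z in Z_t]
```

Even with very large FFN outputs, each positional coordinate moves by less than 1 per iteration, which is the "mitigates aggressive updates" property described above.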

Overall, the linear layers of the halt controller and query updater account for only a small portion of the entire model, and the number of additional parameters can be further reduced by sharing the query updater parameters across iterations. Hence, without a substantial increase in model parameters, Recurrent DETR turns the original detection transformer into a recurrent structure that iteratively renders a larger prediction set. See Algorithm 1 for pseudocode.

C. PONDERING HUNGARIAN LOSS

A proper loss function is crucial for enabling dynamic computation with the architecture described in Section III-B. We thus present our Pondering Hungarian Loss, which helps Recurrent DETR dynamically determine its number of iteration steps. We first modify the original Ponder loss [47] into a suitable form and then merge it with the Hungarian loss [49].

1) MODIFIED PONDER LOSS

By omitting the KL divergence term in Eq. (5), the ponder loss with infinite iterations (i.e., without step truncation) can be expressed as

$$\mathcal{L}_{\text{ponder}} = \sum_{t=1}^{\infty} p_t\, \mathcal{L}_t, \tag{9}$$

where $\mathcal{L}_t$ is the loss value computed with the prediction outputs at step $t$. Note that the step-$t$ predictions include all outputs from steps 1 to $t$. We then relate two consecutive losses through their difference, $\mathcal{L}_{t+1} = \mathcal{L}_t + \delta_t$. Substituting this into Eq. (9) and expanding yields

$$\mathcal{L}_{\text{ponder}} = \mathcal{L}_1 + \sum_{t=1}^{\infty} \delta_t \prod_{i=1}^{t} r_i, \tag{10}$$

where $r_i = 1 - \lambda_i$ is the continuing probability at step $i$ (see Appendix D for the derivation). Eq. (10) expresses the final loss in terms of the step-wise contributions to the total loss value: each difference $\delta_t$ is weighted by $\prod_{i=1}^{t} r_i$, the probability that computation has not halted within the first $t$ steps. We find this form particularly useful in our setting, where $\mathcal{L}_{t+1}$ is computed with a prediction set that subsumes the predictions from the previous $t$ steps, i.e., $\hat{Y}_1 \subset \cdots \subset \hat{Y}_t \subset \hat{Y}_{t+1} \subset \cdots$. It is thus straightforward to consider the effect of the additional predictions at each iteration, and evaluating each timestep in this way is crucial for deciding when to halt computation.

Algorithm 1 Recurrent DETR Inference

Input: $X \in \mathbb{R}^{N \times D}$; $Q_1 \in \mathbb{R}^{M \times D}$; $P_1 \in \mathbb{R}^{M \times D}$

Output: Prediction set $S$

1: Initialize set $S \leftarrow \{\}$

2: $X \leftarrow \mathrm{Encoder}(X)$

3: for $t = 1, 2, \ldots, T$ do

4: $Z_t \leftarrow \mathrm{Decoder}_{\theta}(X, Q_t + P_t)$

5: $Y_{\text{cls}} \leftarrow \mathrm{softmax}(\mathrm{FFN}_{\text{cls}}(Z_t))$

6: $Y_{\text{bbox}} \leftarrow \mathrm{sigmoid}(\mathrm{FFN}_{\text{bbox}}(Z_t))$

7: $S \leftarrow S \cup \{(Y^{(i)}_{\text{cls}}, Y^{(i)}_{\text{bbox}})\}_{i=1,\ldots,M}$

8: $\lambda_t \leftarrow \mathrm{sigmoid}(\mathrm{FFN}_{\xi}(\mathrm{GAP}(Z_t)))$ ▷ halt controller

9: if $\mathrm{random}(0, 1) \le \lambda_t$ or $t = T$ then

10: return $S$

11: else

12: $Q_{t+1} \leftarrow Z_t$

13: $P_{t+1} \leftarrow P_t + \tanh(\mathrm{FFN}_{\phi_t}(P_t + Z_t))$ ▷ query updater

14: end if

15: end for
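Algorithm 1 can be expressed as a small Python loop in which every network is passed in as a callable. This is a sketch for illustration only: the callables `encoder`, `decoder`, `cls_head`, `box_head`, `halt_head`, and `query_updater` are hypothetical stand-ins for the encoder, $\mathrm{Decoder}_\theta$, the prediction FFNs, the halt controller of Eq. (7), and the query updater of Eq. (8), and token sets are represented as lists of lists.

```python
import random


def add_tokens(A, B):
    """Element-wise sum of two token sets, used for Q_t + P_t."""
    return [[a + b for a, b in zip(row_a, row_b)] for row_a, row_b in zip(A, B)]


def recurrent_detr_inference(encoder, decoder, cls_head, box_head, halt_head,
                             query_updater, X, Q1, P1, T, rng=random):
    """Sketch of Algorithm 1; all network arguments are hypothetical callables."""
    S = []                                       # union of predictions from every step
    X = encoder(X)                               # line 2: encode the image once
    Q, P = Q1, P1
    for t in range(1, T + 1):
        Z = decoder(X, add_tokens(Q, P))         # line 4
        S.extend(zip(cls_head(Z), box_head(Z)))  # lines 5-7: add M new predictions
        lam = halt_head(Z)                       # line 8: halting probability
        if rng.random() < lam or t == T:         # line 9: Bernoulli(lam) or budget reached
            return S
        Q, P = Z, query_updater(P, Z, t)         # lines 12-13; t allows per-step phi_t
    return S
```

With a halt head that always returns 1, only the first $M$ predictions are produced; with one that always returns 0, the loop runs the full budget and returns $TM$ predictions.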

In practice, a maximum pondering step $T$ is set to avoid unbounded computation. We simply truncate the infinite summation in Eq. (10) as

$$\mathcal{L}_{\text{ponder}} = \mathcal{L}_1 + \sum_{t=1}^{T-1} \delta_t \prod_{i=1}^{t} r_i, \tag{11}$$

on top of which we merge the Hungarian loss to derive our Pondering Hungarian loss.
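The telescoped form in Eq. (11) can be checked numerically against the truncated expectation $\sum_t p_t \mathcal{L}_t$ of Eq. (9), assuming, as the truncation implies, that computation is forced to halt at step $T$ (i.e., $\lambda_T = 1$). The loss and halting values used below are arbitrary toy numbers, not results from the paper.

```python
def ponder_loss_expectation(lambdas, losses):
    """Eq. (9) truncated at T: sum_t p_t * L_t, with a forced halt at step T."""
    T = len(losses)
    total, not_halted = 0.0, 1.0
    for t in range(T):
        lam = 1.0 if t == T - 1 else lambdas[t]  # lambda_T = 1 by truncation
        total += lam * not_halted * losses[t]
        not_halted *= 1.0 - lam
    return total


def ponder_loss_telescoped(lambdas, losses):
    """Eq. (11): L_1 + sum_{t=1}^{T-1} delta_t * prod_{i<=t} r_i, with r_i = 1 - lambda_i."""
    total, survive = losses[0], 1.0
    for t in range(len(losses) - 1):
        survive *= 1.0 - lambdas[t]          # prod_{i=1}^{t} r_i
        delta = losses[t + 1] - losses[t]    # delta_t = L_{t+1} - L_t
        total += delta * survive
    return total


lams = [0.3, 0.6, 0.2, 0.9]    # the last value is ignored (forced halt)
losses = [5.0, 3.0, 2.5, 2.4]  # toy L_1, ..., L_T
print(ponder_loss_expectation(lams, losses), ponder_loss_telescoped(lams, losses))
```

Because each $\delta_t$ is multiplied by the survival probability $\prod_{i \le t} r_i$, a negative $\delta_t$ always rewards continuing to iterate.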

2) PONDERING HUNGARIAN LOSS

A straightforward way to incorporate the Hungarian loss into Eq. (11) would be to replace every $\mathcal{L}_i$ ($i = 1, \ldots, T$) with the standard $\mathcal{L}_{\text{hung}}$ of Eq. (3). However, this approach is inappropriate, since the scale of the Hungarian loss generally decreases as the total prediction set size increases. To illustrate, the accumulated predictions at iteration $t+1$ subsume those of iteration $t$, so the Hungarian matching at step $t+1$ is at least as good as the matching at step $t$. Consequently, $\delta_t$ in Eq. (11) is generally negative, and since a negative $\delta_t$ is minimized by making $\prod_{i=1}^{t} r_i$ as large as possible (i.e., by driving every $\lambda_i$ toward 0), the halt controller is deprived of any incentive to halt. This can cause redundant computation without much gain in performance. We therefore need a way to regulate Recurrent DETR so that it halts at an adequate timestep.
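The claim that accumulating predictions can only improve the matching can be illustrated with a toy example. Under Eq. (2), padded no object targets contribute zero matching cost, so the optimum of Eq. (1) reduces to assigning each real target to a distinct prediction. The brute-force sketch below, using hypothetical cost values, shows that adding the predictions of a second iteration never increases that minimum, since every assignment available at step $t$ remains available at step $t+1$.

```python
from itertools import permutations


def min_matching_cost(cost_rows, num_targets):
    """Minimum total cost of assigning each real target to a distinct prediction.

    cost_rows[p][k] is a hypothetical matching cost between prediction p and
    target k. Padded no-object targets are omitted because Eq. (2) gives them
    zero cost. Brute force, so only for toy sizes.
    """
    return min(
        sum(cost_rows[p][k] for k, p in enumerate(assignment))
        for assignment in permutations(range(len(cost_rows)), num_targets)
    )


step1 = [[1.0, 4.0], [3.0, 2.0]]  # predictions from iteration 1 (one row each)
step2 = [[0.5, 5.0], [5.0, 0.1]]  # additional predictions from iteration 2
cost_t = min_matching_cost(step1, 2)           # matching on Y_hat_1
cost_t1 = min_matching_cost(step1 + step2, 2)  # matching on Y_hat_2, a superset
print(cost_t, cost_t1)
```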

Accordingly, we propose the Novelty Bias, $\Phi_t$. The novelty bias is added to the original $\delta_t$ in Eq. (11), redefining it as

$$\delta_t = \mathcal{L}_{t+1} - \bar{\mathcal{L}}_t + \Phi_t, \tag{12}$$

where $\bar{\mathcal{L}}_t$ is a stop-gradient copy of $\mathcal{L}_t$. The bias term sets a threshold on the loss difference between two consecutive iterations: $\delta_t$ becomes negative only when the loss at step $t+1$ decreases by more than $\Phi_t$.

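Here, the novelty bias is computed as

$$\Phi_t = \frac{1}{\tau} \cdot \frac{1}{M} \sum_{i=1}^{M} \Big[\frac{1}{t} \sum_{j=1}^{t} \bar{Z}_j^{(i)\top} \bar{Z}_{t+1}^{(i)}\Big], \tag{13}$$

where $M$ is the number of input query tokens, $\tau$ is a parameter that scales the magnitude of the novelty bias, and $\bar{Z}_t^{(i)}$ is a stop-gradient copy of the $i$th output token embedding at decoder iteration step $t$. $\Phi_t$ thus measures how similar the new output tokens are, on average, to those of all earlier iterations: an iteration that largely repeats previous outputs incurs a large bias and must reduce the loss considerably to justify continuing. Note that stopping the gradients in Eqs. (12) and (13) stabilized learning.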
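Eqs. (12) and (13) translate directly into a few lines of plain Python. In this sketch, which is an illustration rather than the authors' implementation, each $Z_j$ is a list of $M$ token vectors, the inputs are plain floats so the stop-gradient operations have no effect, and the dot product is left unnormalized as written in Eq. (13). The toy tokens show that an iteration repeating earlier outputs receives a large bias, whereas one producing orthogonal tokens receives none.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def novelty_bias(Z_history, Z_next, tau):
    """Eq. (13): Phi_t for Z_history = [Z_1, ..., Z_t] and Z_next = Z_{t+1}.

    Each Z_j is a list of M token vectors; all inputs are treated as constants
    (stop-gradient). Returns (1/tau) * mean_i [ mean_j <Z_j^(i), Z_{t+1}^(i)> ].
    """
    t, M = len(Z_history), len(Z_next)
    total = 0.0
    for i in range(M):
        # average similarity of token i with its counterparts in earlier iterations
        total += sum(dot(Z_j[i], Z_next[i]) for Z_j in Z_history) / t
    return total / (tau * M)


def delta_with_bias(loss_next, loss_prev, phi):
    """Eq. (12): delta_t = L_{t+1} - stopgrad(L_t) + Phi_t."""
    return loss_next - loss_prev + phi


Z1 = [[1.0, 0.0], [0.0, 1.0]]
repeat = [[1.0, 0.0], [0.0, 1.0]]  # same tokens as Z1: high similarity
novel = [[0.0, 1.0], [1.0, 0.0]]   # orthogonal tokens: zero similarity
print(novelty_bias([Z1], repeat, tau=2.0), novelty_bias([Z1], novel, tau=2.0))
```

In the repeated case a loss drop from 2.0 to 1.8 is not enough to make $\delta_t$ negative once the bias of 0.5 is added, so continuing is not rewarded.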

Furthermore, since Recurrent DETR is designed for crowded scenes, we apply our Pondering Hungarian loss only after the total prediction set size reaches the target set size. Specifically, if the query set size $M$ is smaller than the ground-truth target set size $K$, we begin computing the loss at step $I = \lfloor (K-1)/M \rfloor + 1$, the first step at which the accumulated $IM$ predictions are at least as many as the $K$ targets. Finally, our Pondering Hungarian loss $\mathcal{L}_{\text{PH}}$ is expressed as

$$\mathcal{L}_{\text{PH}} = \mathcal{L}_I + \sum_{t=I}^{T-1} \big(\mathcal{L}_{t+1} - \bar{\mathcal{L}}_t + \Phi_t\big) \prod_{i=1}^{t} r_i, \tag{14}$$

where $T$ is the maximum number of iterations allowed for the model.
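The start step $I$ and Eq. (14) can be evaluated on scalar stand-ins. In the sketch below, the loss, bias, and halting values are hypothetical toy numbers; the product $\prod_{i=1}^{t} r_i$ starts at $i = 1$ as written in Eq. (14); and the `max(1, ...)` guard for images without any target ($K = 0$) is an assumption not covered by the text. Note that $\lfloor (K-1)/M \rfloor + 1$ equals $\lceil K/M \rceil$ for $K \ge 1$.

```python
def start_step(K, M):
    """I = floor((K - 1) / M) + 1; the max(1, ...) guard for K = 0 is an assumption."""
    return max(1, (K - 1) // M + 1)


def pondering_hungarian_loss(losses, phis, lambdas, K, M):
    """Eq. (14) on plain floats (stop-gradients are no-ops here).

    losses[t-1] = L_t, phis[t-1] = Phi_t, lambdas[t-1] = lambda_t for t = 1..T.
    Assumes the start step I does not exceed T.
    """
    T = len(losses)
    I = start_step(K, M)
    survive = 1.0
    for i in range(1, I):                 # prod_{i=1}^{I-1} r_i
        survive *= 1.0 - lambdas[i - 1]
    total = losses[I - 1]                 # L_I
    for t in range(I, T):                 # t = I, ..., T-1
        survive *= 1.0 - lambdas[t - 1]   # now prod_{i=1}^{t} r_i
        total += (losses[t] - losses[t - 1] + phis[t - 1]) * survive
    return total
```

For instance, with $M = 100$ queries and $K = 150$ targets, $I = 2$: the first iteration alone cannot cover all targets, so the loss starts from $\mathcal{L}_2$.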

IV. EXPERIMENTS

In this section, we present experimental results and analyses of Recurrent DETR that address the following research questions:

Q1. In what cases is Recurrent DETR useful? (IV-B)

Q2. What are the effects of each component? (IV-C)

Q3. Does Recurrent DETR learn to ponder? (IV-D)

A. IMPLEMENTATION DETAILS

We first provide the implementation details of our experiments, including dataset information and training and evaluation protocols.

1) DATASETS

We evaluate Recurrent DETR on COCO 2017 [10] and CrowdHuman [11]. COCO 2017 samples are relatively sparse, whereas CrowdHuman scenes are more densely populated with target objects. We summarize the dataset statistics in Table 1.
