K-means and Hierarchical Clustering

K-means and Hierarchical Clustering: Slide 2

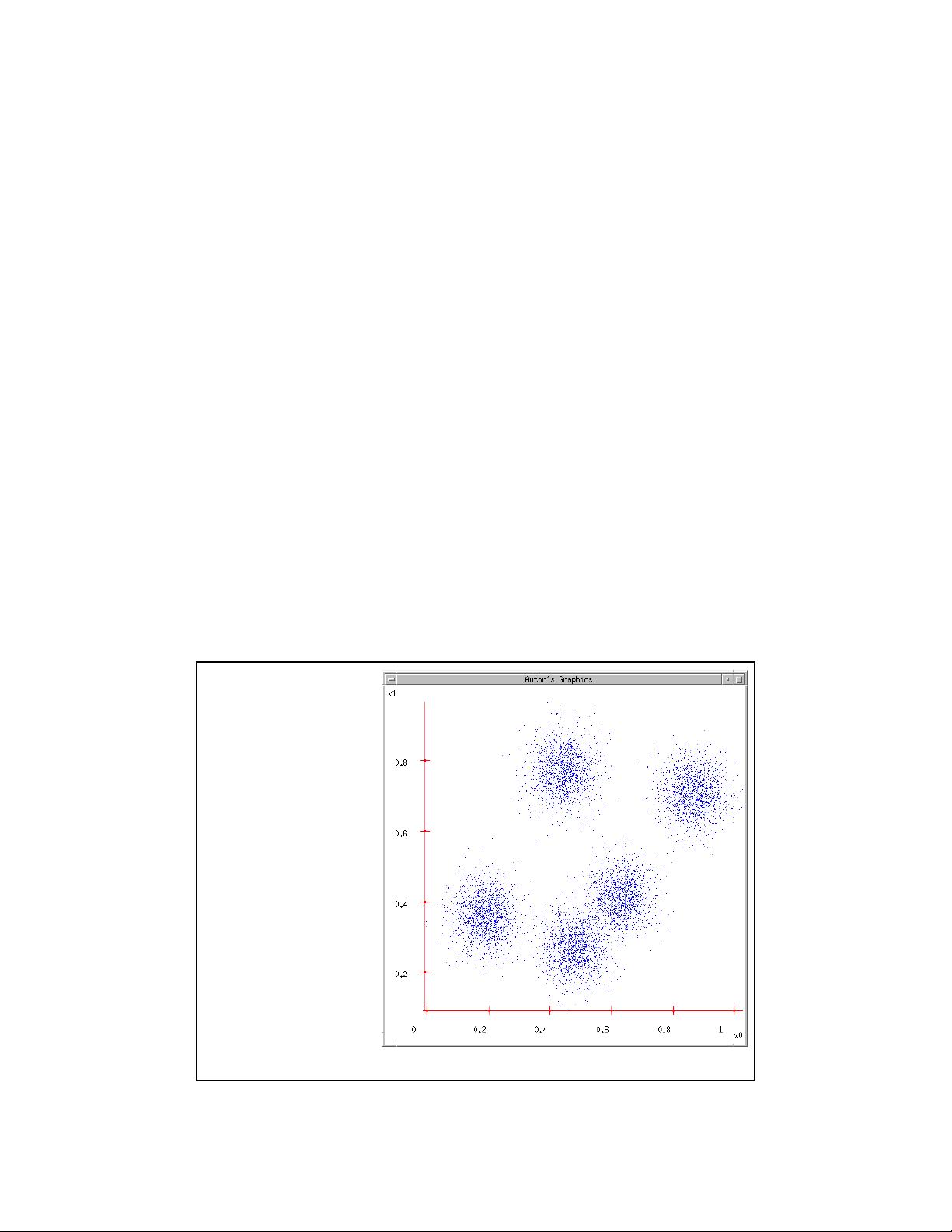

Some Data

This could easily be modeled by a Gaussian Mixture (with 5 components).

But let’s look at a satisfying, friendly and infinitely popular alternative…


K-means and Hierarchical Clustering: Slide 3

Lossy Compression

Suppose you transmit the coordinates of points drawn randomly from this dataset.

You can install decoding software at the receiver.

You’re only allowed to send two bits per point.

It’ll have to be a “lossy transmission”.

Loss = Sum Squared Error between decoded coords and original coords.

What encoder/decoder will lose the least information?
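The loss defined above can be made concrete with a small sketch. Two bits allow at most four distinct codes, so whatever the decoder does, it can output at most four distinct points. The function and parameter names below (`sum_squared_error`, `encode`, `decode`) are illustrative assumptions, not part of the slides, and the baseline codec is a deliberately naive placeholder.

```python
from typing import Callable, Iterable, Tuple

Point = Tuple[float, float]


def sum_squared_error(
    points: Iterable[Point],
    encode: Callable[[Point], int],
    decode: Callable[[int], Point],
) -> float:
    """Total squared distance between each point and its decoded version."""
    total = 0.0
    for x, y in points:
        code = encode((x, y))
        if not 0 <= code <= 3:
            # Two bits per point: only the codes 0, 1, 2, 3 can be sent.
            raise ValueError("a two-bit code must be in 0..3")
        dx, dy = decode(code)
        total += (x - dx) ** 2 + (y - dy) ** 2
    return total


# Hypothetical baseline codec: ignore the point, always decode to (0.5, 0.5).
baseline_loss = sum_squared_error(
    [(0.0, 0.0), (1.0, 1.0)],
    encode=lambda p: 0,
    decode=lambda code: (0.5, 0.5),
)
```

Any candidate encoder/decoder pair can be scored by passing it to this function on the same set of points; a lower total means less information lost.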

K-means and Hierarchical Clustering: Slide 4

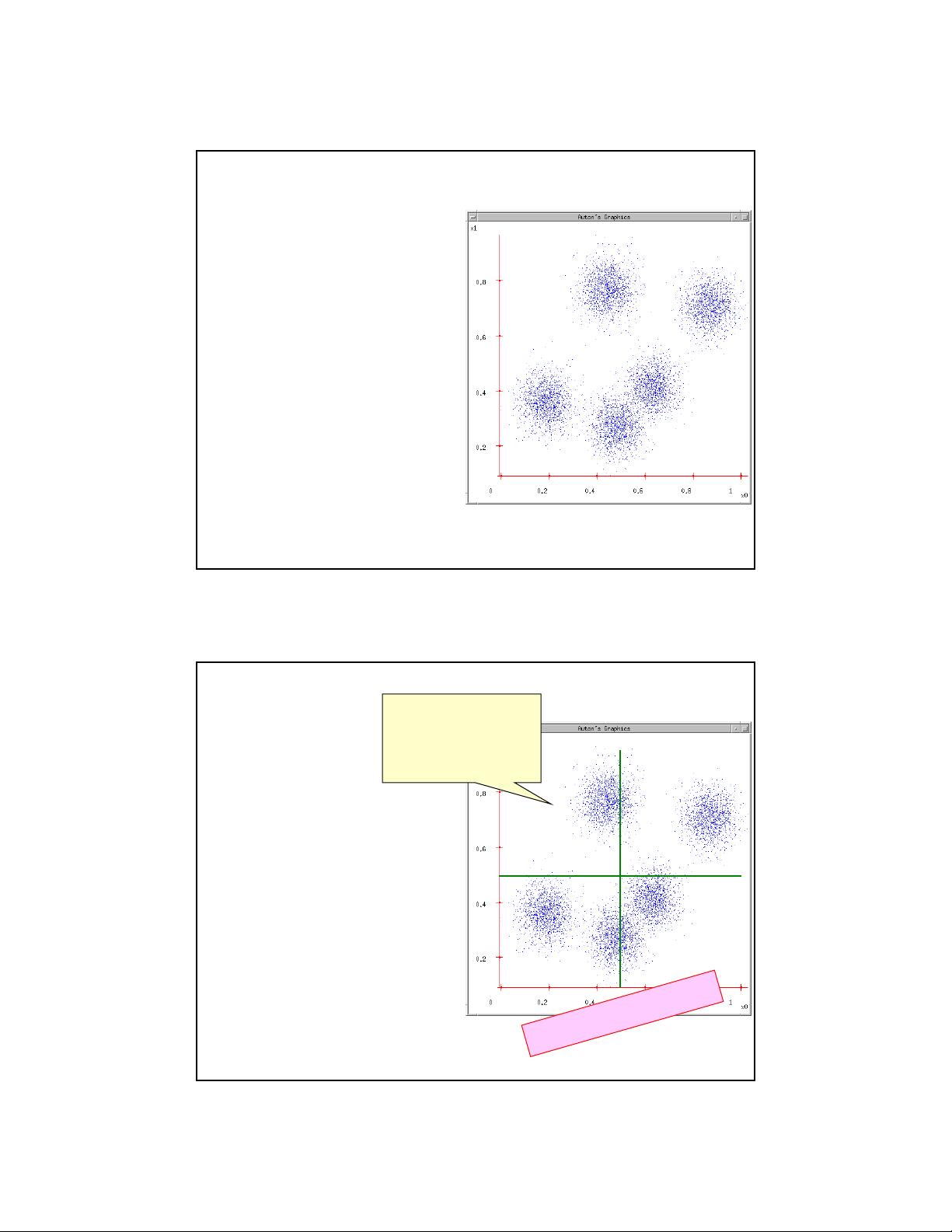


Idea One

Break into a grid, decode each bit-pair as the middle of each grid-cell.

(Figure: the grid, with its cells labeled by the bit-pairs 00, 01, 10 and 11.)

Any Better Ideas?
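A minimal sketch of Idea One, assuming the data lies in the square [lo, hi) x [lo, hi) and the grid is 2 x 2. The mapping from cells to bit-pairs below is an arbitrary choice for illustration; the labeling used in the slide's figure cannot be recovered from the text, and the function names are hypothetical.

```python
from typing import Tuple

Point = Tuple[float, float]


def encode_grid(p: Point, lo: float = 0.0, hi: float = 1.0) -> int:
    """Return a two-bit code: high bit for the x half, low bit for the y half."""
    mid = (lo + hi) / 2
    x_bit = 1 if p[0] >= mid else 0
    y_bit = 1 if p[1] >= mid else 0
    return (x_bit << 1) | y_bit


def decode_cell_middle(code: int, lo: float = 0.0, hi: float = 1.0) -> Point:
    """Decode a two-bit code as the middle of its grid cell."""
    quarter = (hi - lo) / 4
    x_bit, y_bit = code >> 1, code & 1
    return (lo + quarter * (1 + 2 * x_bit), lo + quarter * (1 + 2 * y_bit))


# A point in the upper-left cell is sent as 0b01 and decoded to that cell's middle.
code = encode_grid((0.1, 0.9))
decoded = decode_cell_middle(code)
```

The decoder here never looks at the data, which is why the cell middle can be far from where the points in that cell actually sit.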


K-means and Hierarchical Clustering: Slide 5

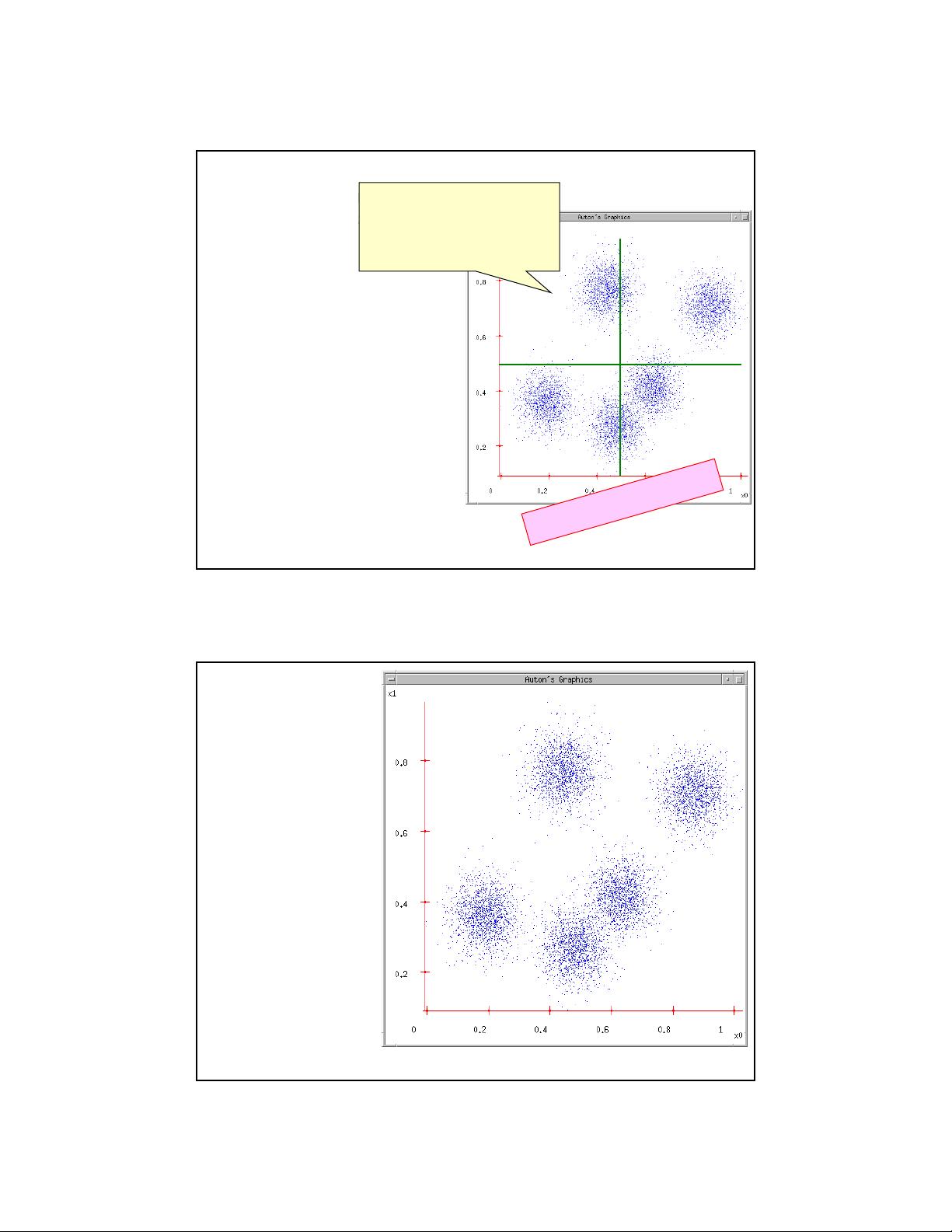


Idea Two

Break into a grid, decode each bit-pair as the centroid of all data in that grid-cell.

(Figure: the grid, with its cells labeled by the bit-pairs 00, 01, 10 and 11.)

Any Further Ideas?
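Idea Two keeps the grid encoder but fits the decoder to the data. The sketch below assumes a 2 x 2 grid over [lo, hi) x [lo, hi), an arbitrary cell-to-code mapping, and a fallback to the cell middle for empty cells; none of these details are stated in the slides, and the function names are hypothetical. The centroid is the natural choice because the mean of a set of points minimizes the sum of squared distances to them.

```python
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, float]


def encode_grid(p: Point, lo: float = 0.0, hi: float = 1.0) -> int:
    """Two-bit code: high bit for the x half, low bit for the y half."""
    mid = (lo + hi) / 2
    return ((1 if p[0] >= mid else 0) << 1) | (1 if p[1] >= mid else 0)


def cell_middle(code: int, lo: float, hi: float) -> Point:
    """Middle of the grid cell for a code; used only when a cell has no data."""
    quarter = (hi - lo) / 4
    return (lo + quarter * (1 + 2 * (code >> 1)), lo + quarter * (1 + 2 * (code & 1)))


def fit_cell_centroids(
    points: Sequence[Point], lo: float = 0.0, hi: float = 1.0
) -> Dict[int, Point]:
    """Build the decoder table: each code maps to the centroid of its cell's data."""
    members: Dict[int, List[Point]] = {code: [] for code in range(4)}
    for p in points:
        members[encode_grid(p, lo, hi)].append(p)

    codebook: Dict[int, Point] = {}
    for code, cell_points in members.items():
        if cell_points:
            n = len(cell_points)
            codebook[code] = (
                sum(x for x, _ in cell_points) / n,
                sum(y for _, y in cell_points) / n,
            )
        else:
            codebook[code] = cell_middle(code, lo, hi)  # assumed fallback
    return codebook


codebook = fit_cell_centroids([(0.125, 0.125), (0.375, 0.125), (0.875, 0.875)])
```

The receiver would be given `codebook` once, then decode each incoming two-bit code by table lookup.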

K-means and Hierarchical Clustering: Slide 6

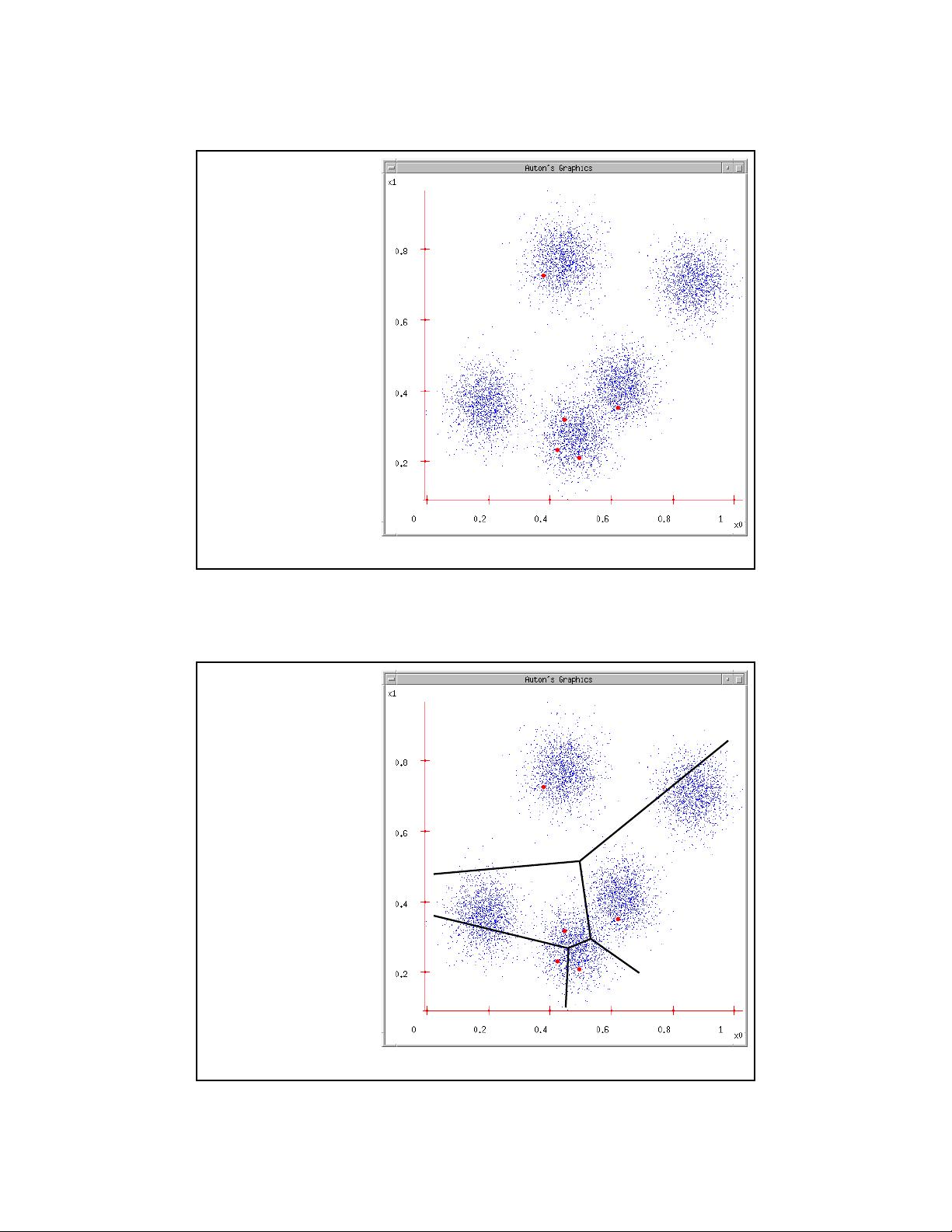

K-means

1. Ask user how many clusters they’d like (e.g. k=5).


K-means and Hierarchical Clustering: Slide 7

K-means


2. Randomly guess k cluster Center locations.

K-means and Hierarchical Clustering: Slide 8

K-means


3. Each datapoint finds out which Center it’s closest to. (Thus each Center “owns” a set of datapoints.)
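The first three steps can be sketched as follows. The slides only say to guess the Center locations randomly; picking k distinct datapoints as the initial guess is an assumption made here for illustration, as are the function names and the fixed seed. Later steps of the algorithm are not covered by this sketch.

```python
import random
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def init_centers(points: Sequence[Point], k: int, seed: int = 0) -> List[Point]:
    """Step 2: randomly guess k Center locations (assumed: k distinct datapoints)."""
    rng = random.Random(seed)
    return rng.sample(list(points), k)


def squared_distance(p: Point, q: Point) -> float:
    return (p[0] - q[0]) ** 2 + (p[1] - q[1]) ** 2


def assign_to_centers(
    points: Sequence[Point], centers: Sequence[Point]
) -> List[List[Point]]:
    """Step 3: each datapoint goes to its closest Center.

    Returns one list per Center holding the datapoints that Center "owns".
    """
    owned: List[List[Point]] = [[] for _ in centers]
    for p in points:
        nearest = min(
            range(len(centers)), key=lambda j: squared_distance(p, centers[j])
        )
        owned[nearest].append(p)
    return owned


# Step 1: the user chooses k (here k = 2 on a tiny hypothetical dataset).
data = [(1.0, 1.0), (9.0, 9.0), (0.0, 2.0)]
k = 2
guessed = init_centers(data, k)
owned = assign_to_centers(data, [(0.0, 0.0), (10.0, 10.0)])
```

In terms of the compression framing, the index of the owning Center plays the role of the transmitted code, and the Center location is what the receiver decodes it to.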
