412 Aharon Ben-Tal, Arkadi Nemirovski

Observe that most of the coefficients in (C) are “ugly reals” like −15.79081 or

−84.644257.We have many reasons to believe that coefficients of this type characterize

certain technological devices/processes, and as such they could hardly be known to high

accuracy. It is quite natural to assume that the “ugly coefficients” are in fact uncertain

– they coincide with the “true” values of the corresponding data within accuracy of 3-4

digits, not more. The only exception is the coefficient 1 of x

880

– it perhaps reflects the

structure of the problem and is therefore exact – “certain”.

Assuming that the uncertain entries of a are, say, 0.1%-accurate approximations of

unknown entries of the “true” vector of coefficients ˜a, we looked what would be the

effect of this uncertainty on the validity of the “true” constraint ˜a

T

x ≥ b at x

∗

. Here is

what we have found:

• The minimum, (over all vectors of coefficients ˜a compatible with our “0.1%-

uncertainty hypothesis”), value of ˜a

T

x

∗

− b,is< −104.9; in other words, the

violation of the constraint can be as large as 450% of the right hand side!

• Treating the above worst-case violation as “too pessimistic” (why should the true

values of all uncertain coefficients differ from the values indicated in (C) in the

“most dangerous” way?), consider a morerealistic measureofviolation.Specifically,

assume that the true values of the uncertain coefficients in (C) are obtained from

the “nominal values” (those shown in (C)) by random perturbations a

j

→˜a

j

=

(1 + ξ

j

)a

j

with independent and, say, uniformly distributed on [−0.001, 0.001]

“relative perturbations” ξ

j

. What will be a “typical” relative violation

V =

b −˜a

T

x

∗

b

× 100%

of the “true” (now random) constraint ˜a

T

x ≥ b at x

∗

? The answer is nearly as bad

as for the worst scenario:

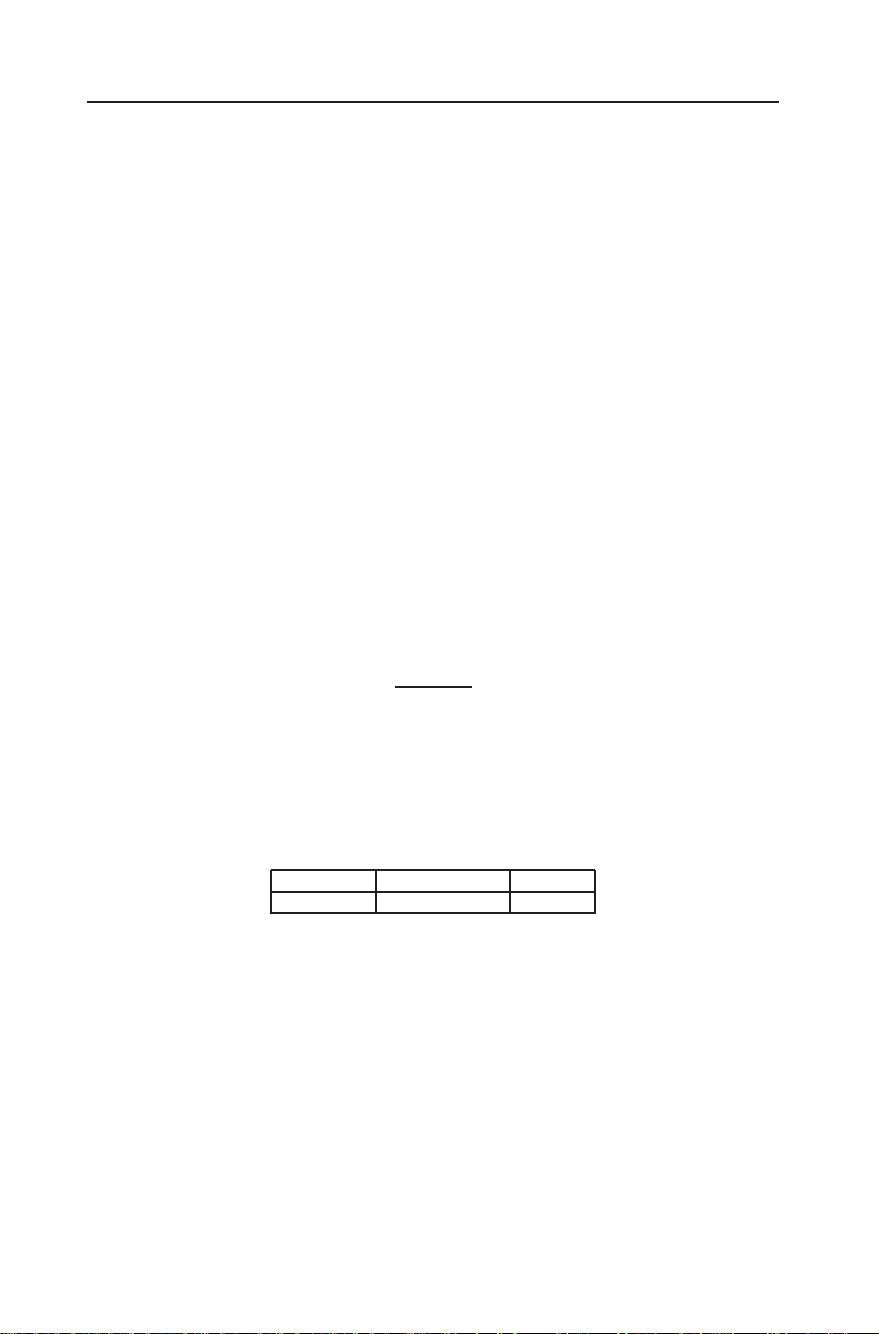

Table 1. Relative violation of constraint # 372 in PILOT4 (1,000-element sample of 0.1% perturbations of

the uncertain data)

Prob{V > 0} Prob{V > 150%} Mean(V)

0.50 0.18 125%

We see that quite small (just 0.1%) perturbations of “obviously uncertain” data co-

efficients can make the “nominal” optimal solution x

∗

heavily infeasible and thus –

practically meaningless. Our intention in this, mainly experimental, paper is to inves-

tigate how common is this phenomenon and how to struggle with it when it occurs.

Specifically, we look through the list of NETLIB problems and for every one of them

– quantify the level of infeasibility ofthe nominal solution in face of small uncertainty;

– when this infeasibility is “too large”, apply the Robust Optimization methodology

(see [1–6]), thus generating another solution which in a sense is immuned against

data perturbations, and, finally,

– look what is the price, in terms of the value of the objective function, of our

“immunization”.

评论0

最新资源