没有合适的资源?快使用搜索试试~ 我知道了~

温馨提示

试读

64页

内含有各种代码 9.1 Measure of Information - Entropy 9.2 Source Coding 9.2.1 Huffman Coding 9.2.2 Lempel-Ziv-Welch Coding 9.2.3 Source Coding vs. Channel Coding 9.3 Channel Model and Channel Capacity 9.4 Channel Coding 9.4.1 Waveform Coding 9.4.2 Linear Block Coding 9.4.3 Cyclic Coding 9.4.4 Convolutional Coding and Viterbi Decoding 9.4.5 Trellis-Coded Modulation 9.4.6 Turbo Coding 9.4.7 Low-Density Parity-Check (LDPC) Coding 9.4.8 Differential Space-Time Block Coding (DSTBC) 9.5 Coding Gain

资源推荐

资源详情

资源评论

CHAPTER 9 Information and Coding

9.1 Measure of Information - Entropy

9.2 Source Coding

9.2.1 Huffman Coding

9.2.2 Lempel-Ziv-Welch Coding

9.2.3 Source Coding vs. Channel Coding

9.3 Channel Model and Channel Capacity

9.4 Channel Coding

9.4.1 Waveform Coding

9.4.2 Linear Block Coding

9.4.3 Cyclic Coding

9.4.4 Convolutional Coding and Viterbi Decoding

9.4.5 Trellis-Coded Modulation

9.4.6 Turbo Coding

9.4.7 Low-Density Parity-Check (LDPC) Coding

9.4.8 Differential Space-Time Block Coding (DSTBC)

9.5 Coding Gain

Chapter Outline

9.1 MEASURE OF INFORMATION – ENTROPY

On the premise that the outputs from an information source such as data, speech, or audio/video

disk can be regarded as a random process, the information theory defines the self information of

an event with probability as

i

x

)(

i

xP

2 2

1

( ) log log [bits] (9.1.1)

( )

( )

i i

i

I x

P x

P x

This measure of information is large/small for an event of lower/higher probability. Why is it

defined like that? It can be intuitively justified/understood by observing the following:

- A message that there was or will be an earthquake in the area where earthquake is very rare has

a value of big news and therefore can be believed to have a lot of information. But, another

message that there will be an earthquake in the area where earthquakes are frequent is of

much

less value as a news and accordingly, can be regarded as having a little information.

- For instance, the information contained in an event which happens with probability is

computed to be zero according to this definition, which fits our intuition.

( ) 1

i

P x

Source: MATLAB/Simulink for Digital Communication by Won Y. Yang et al. © 2009 ( wyyang53@hanmail.net, http://wyyang53.com.ne.kr )

Why is it defined by using the logarithm?

Because the logarithm makes the combined information of two independent events and

(having joint probability of ) equal to the sum of the informations of the events:

i

x

j

y

( ) ( )

j

i

P x P y

(9.1.1)

2 2 2 2

1 1

( , ) log log log ) log ( ) ) ( ) (9.1.2)

( , ) ( ) ( )

( (

j

i j i i j

i j i j

I x y P P y I I

P x y P x P y

x x y

What makes the logarithm have base 2?

It is to make the information measured in bits. According to the definition (9.1.1), the

information of a bit whose value may be 0 or 1 with equal probability of 1/2 is

x

2

1

( ) log 1 (9.1.3)

1/ 2

I x

Now, suppose we have a random variable whose value is taken to be with probability

from the universe . Then, the average information that can be obtained

from observing its value is

x

} to1|{ MixX

i

i

x

( )

i

P x

(9.1.1)

2

( ) ( ) ( ) ( ) log ( ) (9.1.4)

i i

i i i i

x X x X

H P x I x P x P x

x

This is called the entropy of , which is the amount of uncertainty (mystery) contained in

before its value is known and will be lost after the value of is observed.

x

x

x

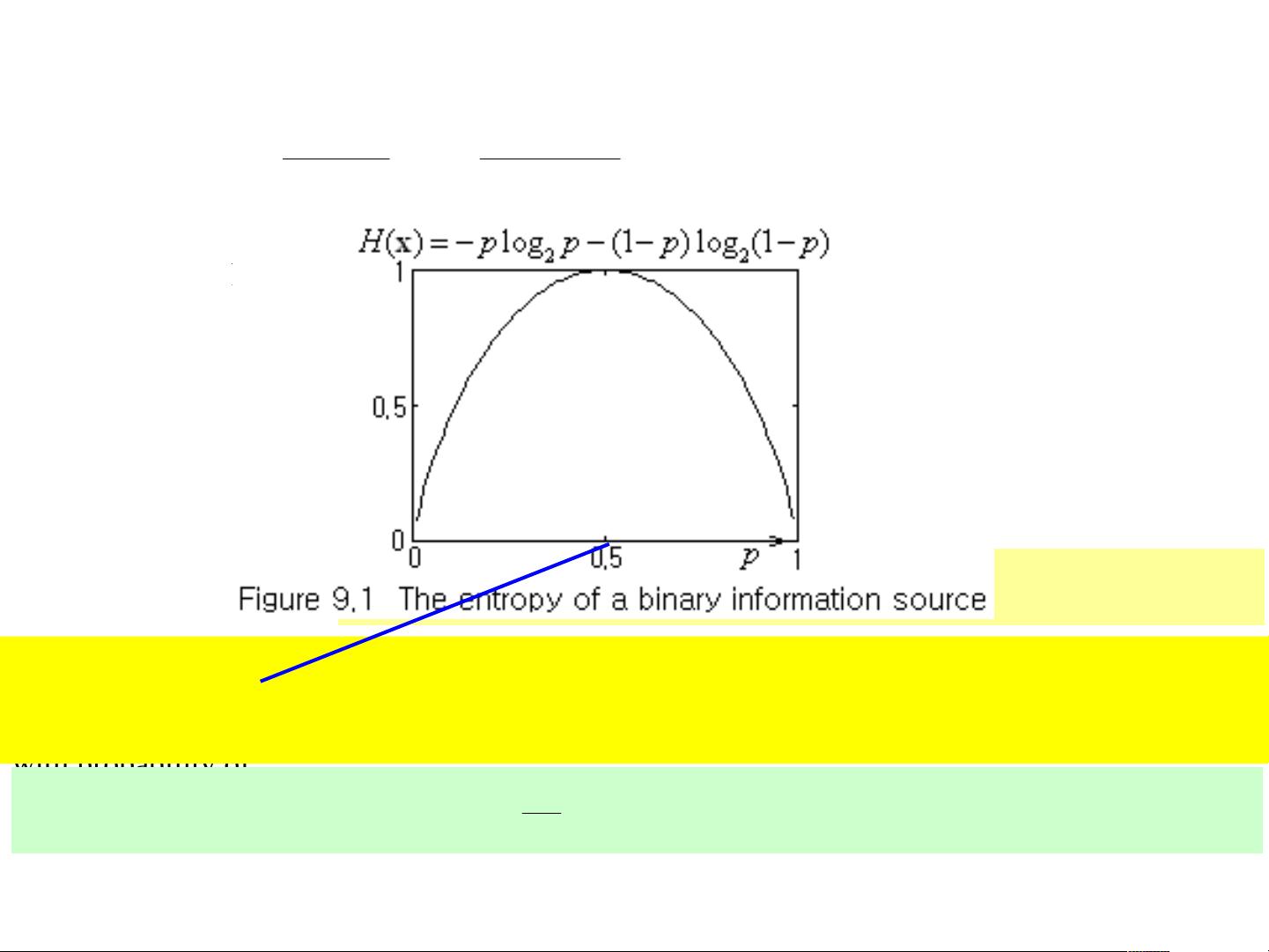

Especially in the case of a binary information source whose value is chosen from

with probability of

},{

21

xxX

1 2

( ) and ( ) 1 (9.1.5)P x p P x p

the entropy

(9.1.4)

2 2

( ) log (1 ) log (1 ) (9.1.6)H p p p p x

is depicted as a function of in Fig. 9.1.

This shows that the entropy is maximized when the two events and are equally likely so

that . More generally, the entropy defined by Eq. (9.1.4) is maximized when all

the events are equiprobable (equally probable), i.e.

1

x

2

x

2/11 pp

1

( ) for all 1 to (9.1.7)

i

P x i M

M

Problem 9.1 Entropy and Investment Value of a Date

Suppose you are dating someone, who is interested in you. When is your value maximized so

that he or she can give you the most valuable present? In connection with the concept of entropy

introduced in Sec. 9.1, choose one among the following examples:

(1) When you sit on the fence so that he or she cannot guess whether you are interested in him or

her.

(2) When you have shown him or her a negative sign.

(3) When you have shown him or her a positive sign.

In the same context, which fish would you offer the best bait to? Choose one among the

following examples:

(1) The fish you are not sure whether you can catch or not.

(2) The fish you are sure you can catch (even with no bait).

(3) The fish you are sure you cannot catch (with any bait).

Source: MATLAB/Simulink for Digital Communication by Won Y. Yang et al. © 2009

9.2 SOURCE CODING

Sometimes we want to find an efficient code using fewest bits to represent the information.

Shannon’s source coding theorem or noiseless coding theorem states that we need the number of

bits not less than the entropy in order to encode an information message in such a way that

perfect decoding is possible. In this section we examine Huffman coding and LZW coding.

9.2.1 Huffman Coding

Huffman coding is a variable length coding which has a strategy to minimize the average number

of bits required for coding a set of symbols by assigning shorter/longer length code to more/less

probable symbol. It is performed in two steps – merging and splitting as illustrated in Fig. 9.2.

(1) Merging: Repeat arranging the symbols in descending order of probability as in each column

of Table 9.1 and merging the least probable two entries into a new entry with a probability

equal to the sum of their probabilities in the next column, until only two enrties remain.

(2) Splitting: Assign 0/1 to each of the two remaining entries to make their initial parts of

codeword. Then repeat splitting back the merged entries into the two (previous) entries

and appending another 0/1 to each of their (unfinished) codewords.

The average codeword length of any code assigned to the set of symbols cannot be less than

the entropy defined by Eq. (9.1.4) and especially, the average codeword length of the

Huffman code constructed by this procedure is less than :

X

)(xH

L

1)( xH

( ) ( ) ( ) ( ) 1 (9.2.1)

i

i

x

i

x X

H L P x l c H

x x

where is the length of the codeword for each

symbol .

( )

i

x

l c

i

x

Source: MATLAB/Simulink for Digital Communication by Won Y. Yang et al. © 2009

function [h,L,H]=Huffman_code(p,opt)

% Huffman code generator gives a Huffman code matrix h,

% average codeword length L & entropy H

% for a source with probability vector p given as argin(1)

zero_one=['0'; '1'];

if nargin>1&&opt>0, zero_one=['1'; '0']; end

if abs(sum(p)-1)>1e-6

fprintf('\n The probabilities in p does not add up to 1!');

end

M=length(p); N=M-1; p=p(:); % Make p a column vector

h={zero_one(1),zero_one(2)}

if M>2

pp(:,1)=p;

for n=1:N

% To sort in descending order

[pp(1:M-n+1,n),o(1:M-n+1,n)]=sort(pp(1:M-n+1,n),1,'descend');

if n==1, ord0=o; end % Original descending order

if M-n>1, pp(1:M-n,n+1)=[pp(1:M-1-n,n); sum(pp(M-n:M-n+1,n))]; end

end

for n=N:-1:2

tmp=N-n+2; oi=o(1:tmp,n);

for i=1:tmp, h1{oi(i)}=h{i}; end

h=h1; h{tmp+1}=h{tmp};

h{tmp}=[h{tmp} zero_one(1)];

h{tmp+1}=[h{tmp+1} zero_one(2)];

end

for i=1:length(ord0), h1{ord0(i)}=h{i}; end

h=h1;

end

L=0;

for n=1:M, L=L+p(n)*length(h{n}); end % Average codeword length

H=-sum(p.*log2(p)); % Entropy by Eq.(9.1.4)

Source: MATLAB/Simulink for Digital Communication by Won Y. Yang et al. © 2009

剩余63页未读,继续阅读

qiaokuangyi

- 粉丝: 2

- 资源: 8

上传资源 快速赚钱

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

前往需求广场,查看用户热搜

前往需求广场,查看用户热搜安全验证

文档复制为VIP权益,开通VIP直接复制

信息提交成功

信息提交成功

- 1

- 2

前往页