WebGPT: Browser-assisted question-answering with human feedback

Reiichiro Nakano∗, Jacob Hilton∗, Suchir Balaji∗, Jeff Wu, Long Ouyang,

Christina Kim, Christopher Hesse, Shantanu Jain, Vineet Kosaraju,

William Saunders, Xu Jiang, Karl Cobbe, Tyna Eloundou, Gretchen Krueger,

Kevin Button, Matthew Knight, Benjamin Chess, John Schulman

OpenAI

Abstract

We fine-tune GPT-3 to answer long-form questions using a text-based web-browsing

environment, which allows the model to search and navigate the web.

By setting up the task so that it can be performed by humans, we are able to train

models on the task using imitation learning, and then optimize answer quality with

human feedback. To make human evaluation of factual accuracy easier, models

must collect references while browsing in support of their answers. We train and

evaluate our models on ELI5, a dataset of questions asked by Reddit users. Our

best model is obtained by fine-tuning GPT-3 using behavior cloning, and then

performing rejection sampling against a reward model trained to predict human

preferences. This model’s answers are preferred by humans 56% of the time to

those of our human demonstrators, and 69% of the time to the highest-voted answer

from Reddit.

1 Introduction

A rising challenge in NLP is long-form question-answering (LFQA), in which a paragraph-length

answer is generated in response to an open-ended question. LFQA systems have the potential

to become one of the main ways people learn about the world, but currently lag behind human

performance [Krishna et al., 2021]. Existing work tends to focus on two core components of the task,

information retrieval and synthesis.

In this work we leverage existing solutions to these components: we outsource document retrieval to

the Microsoft Bing Web Search API,² and utilize unsupervised pre-training to achieve high-quality

synthesis by fine-tuning GPT-3 [Brown et al., 2020]. Instead of trying to improve these ingredients,

we focus on combining them using more faithful training objectives. Following Stiennon et al. [2020],

we use human feedback to directly optimize answer quality, allowing us to achieve performance

competitive with humans.

We make two key contributions:

∗ Equal contribution, order randomized. Correspondence to: reiichiro@openai.com, jhilton@openai.com, suchir@openai.com, joschu@openai.com

² https://www.microsoft.com/en-us/bing/apis/bing-web-search-api

arXiv:2112.09332v3 [cs.CL] 1 Jun 2022

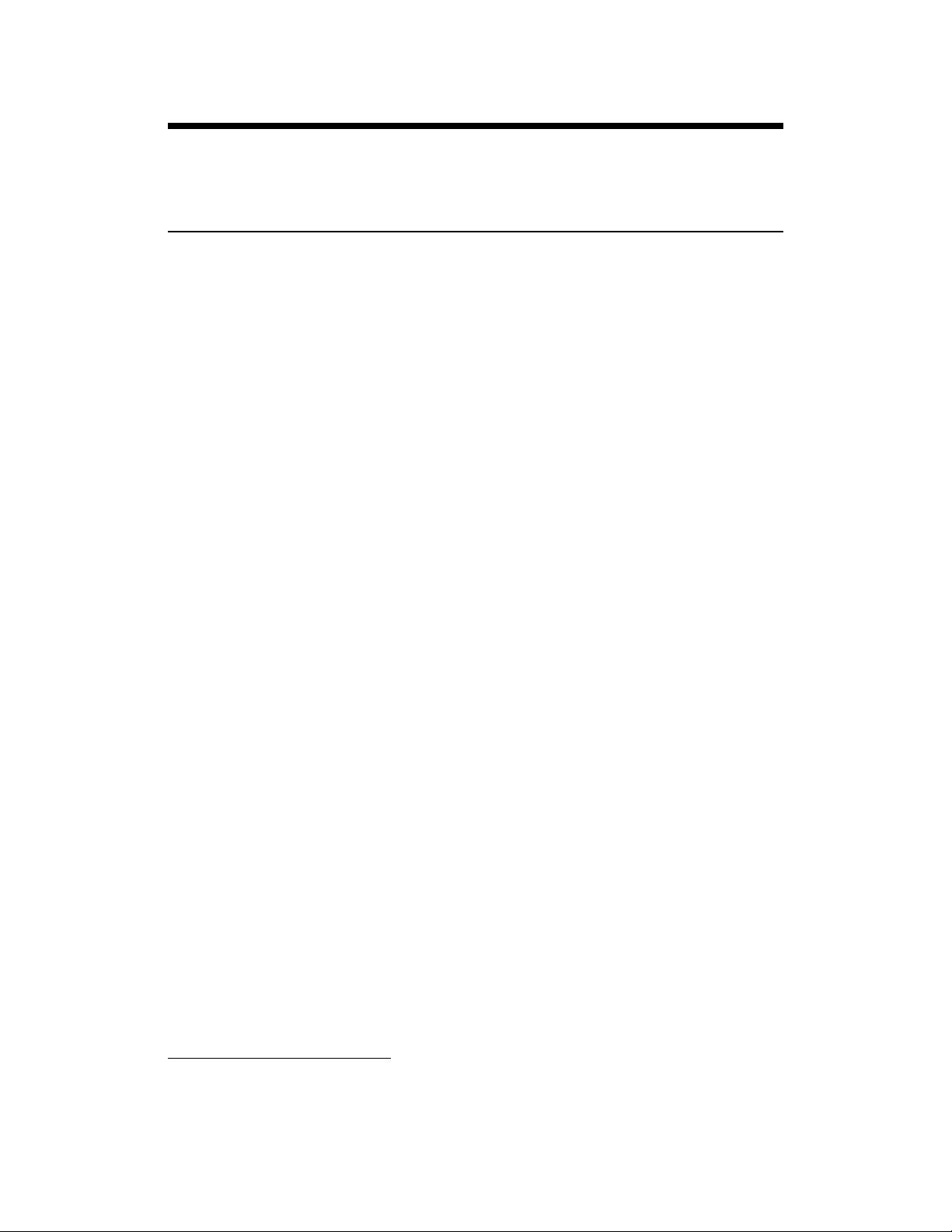

(a) Screenshot from the demonstration interface.

♦Question

How can I train the crows in my neighborhood to bring me gifts?

♦Quotes

From Gifts From Crows | Outside My Window (www.birdsoutsidemywindow.org)

> Many animals give gifts to members of their own species but crows and

other corvids are the only ones known to give gifts to humans.

♦Past actions

Search how to train crows to bring you gifts

Click Gifts From Crows | Outside My Window www.birdsoutsidemywindow.org

Quote

Back

♦Title

Search results for: how to train crows to bring you gifts

♦Scrollbar: 0 - 11

♦Text

【0†How to Make Friends With Crows - PetHelpful†pethelpful.com】

If you did this a few times, your crows would learn your new place, but

as I said, I’m not sure if they will follow or visit you there since it’s

probably not in their territory. The other option is simply to make new

crow friends with the crows that live in your new neighborhood.

【1†Gifts From Crows | Outside My Window†www.birdsoutsidemywindow.org】

The partial piece of apple may have been left behind when the crow was

startled rather than as a gift. If the crows bring bright objects you’ll

know for sure that it’s a gift because it’s not something they eat.

Brandi Williams says: May 28, 2020 at 7:19 am.

♦Actions left: 96

♦Next action

(b) Corresponding text given to the model.

Figure 1: An observation from our text-based web-browsing environment, as shown to human

demonstrators (left) and models (right). The web page text has been abridged for illustrative purposes.

• We create a text-based web-browsing environment that a fine-tuned language model can

interact with. This allows us to improve both retrieval and synthesis in an end-to-end fashion

using general methods such as imitation learning and reinforcement learning.

• We generate answers with references: passages extracted by the model from web pages

while browsing. This is crucial for allowing labelers to judge the factual accuracy of answers,

without engaging in a difficult and subjective process of independent research.

Our models are trained primarily to answer questions from ELI5 [Fan et al., 2019], a dataset of

questions taken from the “Explain Like I’m Five” subreddit. We collect two additional kinds of

data: demonstrations of humans using our web-browsing environment to answer questions, and

comparisons between two model-generated answers to the same question (each with their own set of

references). Answers are judged for their factual accuracy, coherence, and overall usefulness.

We use this data in four main ways: behavior cloning (i.e., supervised fine-tuning) using the demonstrations,

reward modeling using the comparisons, reinforcement learning against the reward model,

and rejection sampling against the reward model. Our best model uses a combination of behavior

cloning and rejection sampling. We also find reinforcement learning to provide some benefit when

inference-time compute is more limited.

We evaluate our best model in three different ways. First, we compare our model’s answers to answers

written by our human demonstrators on a held-out set of questions. Our model’s answers are preferred

56% of the time, demonstrating human-level usage of the text-based browser. Second, we compare

our model’s answers (with references stripped, for fairness) to the highest-voted answer provided

by the ELI5 dataset. Our model’s answers are preferred 69% of the time. Third, we evaluate our

model on TruthfulQA [Lin et al., 2021], an adversarial dataset of short-form questions. Our model’s

answers are true 75% of the time, and are both true and informative 54% of the time, outperforming

our base model (GPT-3), but falling short of human performance.

The remainder of the paper is structured as follows:

• In Section 2, we describe our text-based web-browsing environment and how our models

interact with it.

• In Section 3, we explain our data collection and training methods in more detail.

• In Section 4, we evaluate our best-performing models (for different inference-time compute

budgets) on ELI5 and TruthfulQA.

• In Section 5, we provide experimental results comparing our different methods and how

they scale with dataset size, parameter count, and inference-time compute.

• In Section 6, we discuss the implications of our findings for training models to answer

questions truthfully, and broader impacts.

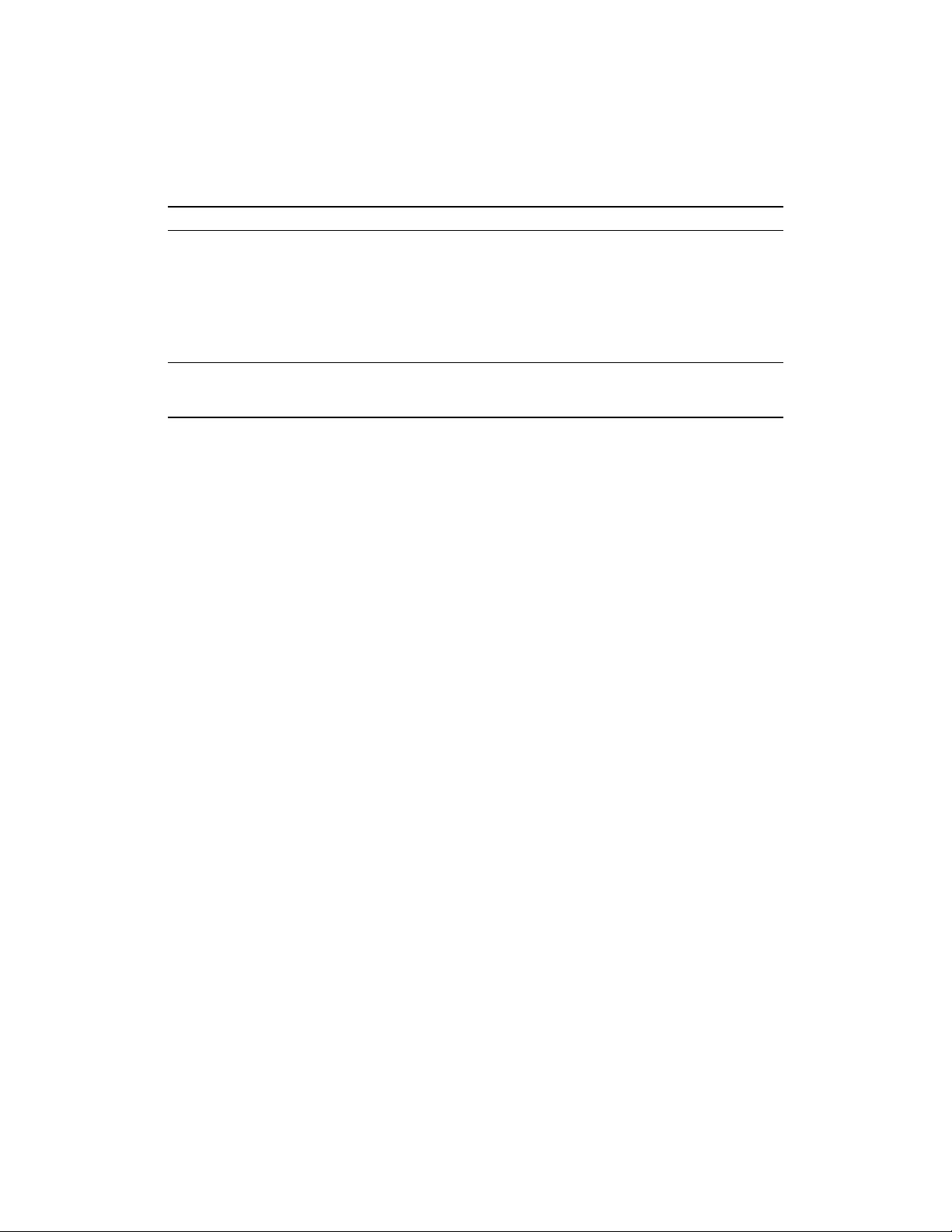

Table 1: Actions the model can take. If a model generates any other text, it is considered to be an

invalid action. Invalid actions still count towards the maximum, but are otherwise ignored.

| Command | Effect |
| --- | --- |
| `Search <query>` | Send `<query>` to the Bing API and display a search results page |
| `Clicked on link <link ID>` | Follow the link with the given ID to a new page |
| `Find in page: <text>` | Find the next occurrence of `<text>` and scroll to it |
| `Quote: <text>` | If `<text>` is found in the current page, add it as a reference |
| `Scrolled down <1, 2, 3>` | Scroll down a number of times |
| `Scrolled up <1, 2, 3>` | Scroll up a number of times |
| `Top` | Scroll to the top of the page |
| `Back` | Go to the previous page |
| `End: Answer` | End browsing and move to answering phase |
| `End: <Nonsense, Controversial>` | End browsing and skip answering phase |

2 Environment design

Previous work on question-answering such as REALM [Guu et al., 2020] and RAG [Lewis et al.,

2020a] has focused on improving document retrieval for a given query. Instead, we use a familiar

existing method for this: a modern search engine (Bing). This has two main advantages. First,

modern search engines are already very powerful, and index a large number of up-to-date documents.

Second, it allows us to focus on the higher-level task of using a search engine to answer questions,

something that humans can do well, and that a language model can mimic.

For this approach, we designed a text-based web-browsing environment. The language model is

prompted with a written summary of the current state of the environment, including the question, the

text of the current page at the current cursor location, and some other information (see Figure 1(b)).

In response to this, the model must issue one of the commands given in Table 1, which performs an

action such as running a Bing search, clicking on a link, or scrolling around. This process is then

repeated with a fresh context (hence, the only memory of previous steps is what is recorded in the

summary).
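To make the command format concrete, the following is a minimal sketch of how text generated by the model could be mapped onto the commands of Table 1. The regular expressions, the internal command names and the `parse_action` helper are illustrative assumptions rather than the implementation used in the environment; anything that does not match is treated as an invalid action, as described in the caption of Table 1.

```python
import re
from typing import Optional, Tuple

# One pattern per command in Table 1. The patterns and the short internal
# names on the left are assumptions made for this sketch.
_COMMANDS = [
    ("search", re.compile(r"Search (.+)")),
    ("click", re.compile(r"Clicked on link (\d+)")),
    ("find", re.compile(r"Find in page: (.+)")),
    ("quote", re.compile(r"Quote: (.+)", re.DOTALL)),
    ("scroll_down", re.compile(r"Scrolled down ([123])")),
    ("scroll_up", re.compile(r"Scrolled up ([123])")),
    ("top", re.compile(r"Top")),
    ("back", re.compile(r"Back")),
    ("end_answer", re.compile(r"End: Answer")),
    ("end_skip", re.compile(r"End: (Nonsense|Controversial)")),
]


def parse_action(text: str) -> Optional[Tuple[str, Optional[str]]]:
    """Return (command, argument), or None if the text is an invalid action."""
    text = text.strip()
    for name, pattern in _COMMANDS:
        match = pattern.fullmatch(text)
        if match:
            # Commands without a placeholder (Top, Back, End: Answer) have no argument.
            argument = match.group(1) if match.groups() else None
            return name, argument
    return None
```

Under these assumptions, `parse_action("Clicked on link 1")` yields `("click", "1")`, while free-form text yields `None` and would be ignored apart from counting towards the action limit.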

While the model is browsing, one of the actions it can take is to quote an extract from the current

page. When this is performed, the page title, domain name and extract are recorded to be used later

as a reference. Browsing then continues until either the model issues a command to end browsing,

the maximum number of actions has been reached, or the maximum total length of references has

been reached. At this point, as long as there is at least one reference, the model is prompted with the

question and the references, and must compose its final answer.
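The stopping conditions above can be sketched as a simple loop. This is only an illustration: the `browse` function, the `next_action` callback and the default value of `max_reference_chars` are hypothetical, page navigation is omitted, and the check that a quote actually occurs in the current page is not modelled.

```python
from typing import Callable, List, Tuple

# A policy maps (question, references so far, actions left) to an action string.
Policy = Callable[[str, List[str], int], str]


def browse(
    next_action: Policy,
    question: str,
    max_actions: int = 100,
    max_reference_chars: int = 2000,  # hypothetical limit, not taken from the paper
) -> Tuple[List[str], bool]:
    """Collect references until browsing ends; return (references, should_answer)."""
    references: List[str] = []
    skip_answer = False
    # Every generated action, valid or not, uses up one of the available actions.
    for actions_left in range(max_actions, 0, -1):
        action = next_action(question, references, actions_left)
        if action.startswith("End:"):
            # "End: Answer" moves on to answering; other End commands skip it.
            skip_answer = action != "End: Answer"
            break
        if action.startswith("Quote: "):
            references.append(action[len("Quote: "):])
            if sum(len(r) for r in references) >= max_reference_chars:
                break
        # Search, click, find and scroll commands would update the page here.
    # The answering phase requires at least one reference.
    return references, bool(references) and not skip_answer
```

The returned flag corresponds to whether the model would then be prompted with the question and the references to compose its final answer.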

Further technical details about our environment can be found in Appendix A.

3 Methods

3.1 Data collection

Guidance from humans is central to our approach. A language model pre-trained on natural language

would not be able to use our text-based browser, since it does not know the format of valid commands.

We therefore collected examples of humans using the browser to answer questions, which we call

demonstrations. However, training on demonstrations alone does not directly optimize answer quality,

and is unlikely to lead far beyond human performance [Stiennon et al., 2020]. We therefore collected

pairs of model-generated answers to the same question, and asked humans which one they preferred,

which we call comparisons.

For both demonstrations and comparisons, the vast majority of questions were taken from ELI5 [Fan

et al., 2019], a dataset of long-form questions. For diversity and experimentation, we also mixed in

a small number of questions from other sources, such as TriviaQA [Joshi et al., 2017]. In total, we

collected around 6,000 demonstrations, 92% of which were for questions from ELI5, and around

21,500 comparisons, 98% of which were for questions from ELI5. A more detailed breakdown of the

questions we used along with post-processing details can be found in Appendix B.

3

Table 2: An answer produced by our 175B best-of-64 model to a randomly-chosen question from the

ELI5 test set (not cherry-picked). The full text of the references can be found in Appendix J, along

with answers from our human demonstrators and the ELI5 dataset. Further samples are available at

https://openaipublic.blob.core.windows.net/webgpt-answer-viewer/index.html.

Question Why did we decide that certain words were "bad" and shouldn’t be used in social settings?

Answer

We have all been taught that there are certain words that should not be used in public, at least

not without good reason. But why do some words fall into this category while others do not? It

turns out that there is not a single answer to this question, as each word has a different history

and carries different meanings in different cultures and families [2]. However, there are some

general categories that "bad" words tend to fall into. "Bad" words generally relate to parts of life

that we don’t like talking about in public, like bathroom functions, or negative ways of talking

about people’s religion, skin color, ability, or gender [3]. Often, people say bad words when

they are experiencing strong emotions, and those words are linked to our emotions [3].

References (titles only)

[1, 2, 3] Why Are Some Words ‘Bad’? | Vermont Public Radio (www.vpr.org)

[4] On Words: ‘Bad’ Words and Why We Should Study Them | UVA Today (news.virginia.edu)

[5] The Science of Curse Words: Why The &@$! Do We Swear? (www.babbel.com)

To make it easier for humans to provide demonstrations, we designed a graphical user interface for

the environment (see Figure 1(a)). This displays essentially the same information as the text-based

interface and allows any valid action to be performed, but is more human-friendly. For comparisons,

we designed a similar interface, allowing auxiliary annotations as well as comparison ratings to be

provided, although only the final comparison ratings (better, worse or equally good overall) were

used in training.

For both demonstrations and comparisons, we emphasized that answers should be relevant, coherent,

and supported by trustworthy references. Further details about these criteria and other aspects of our

data collection pipeline can be found in Appendix C.

We are releasing a dataset of comparisons, the details of which can be found in Appendix K.

3.2 Training

The use of pre-trained models is crucial to our approach. Many of the underlying capabilities required

to successfully use our environment to answer questions, such as reading comprehension and answer

synthesis, emerge as zero-shot capabilities of language models [Brown et al., 2020]. We therefore

fine-tuned models from the GPT-3 model family, focusing on the 760M, 13B and 175B model sizes.

Starting from these models, we used four main training methods:

1. Behavior cloning (BC). We fine-tuned on the demonstrations using supervised learning,

with the commands issued by the human demonstrators as labels.

2. Reward modeling (RM). Starting from the BC model with the final unembedding layer

removed, we trained a model to take in a question and an answer with references, and output

a scalar reward. Following Stiennon et al. [2020], the reward represents an Elo score, scaled

such that the difference between two scores represents the logit of the probability that one

will be preferred to the other by the human labelers. The reward model is trained using a

cross-entropy loss, with the comparisons as labels. Ties are treated as soft 50% labels.

3. Reinforcement learning (RL). Once again following Stiennon et al. [2020], we fine-tuned

the BC model on our environment using PPO [Schulman et al., 2017]. For the environment

reward, we took the reward model score at the end of each episode, and added this to a KL

penalty from the BC model at each token to mitigate overoptimization of the reward model.

4. Rejection sampling (best-of-n). We sampled a fixed number of answers (4, 16 or 64) from

either the BC model or the RL model (if left unspecified, we used the BC model), and

selected the one that was ranked highest by the reward model. We used this as an alternative

method of optimizing against the reward model, which requires no additional training, but

instead uses more inference-time compute.
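The objectives in the list above can be illustrated with a short sketch operating on plain Python floats. It assumes that the reward model has already produced scalar scores; all function names, the coefficient `beta`, and the use of the per-token log-probability ratio as the KL estimate are assumptions made for this illustration and are not taken from the training code.

```python
import math
from typing import Callable, List, Sequence


def preference_probability(reward_a: float, reward_b: float) -> float:
    """Probability that A is preferred to B: the reward difference is the logit."""
    return 1.0 / (1.0 + math.exp(-(reward_a - reward_b)))


def comparison_loss(reward_a: float, reward_b: float, label: float) -> float:
    """Cross-entropy loss for one comparison.

    label is 1.0 if A was preferred, 0.0 if B was preferred, 0.5 for a tie.
    """
    p = preference_probability(reward_a, reward_b)
    return -(label * math.log(p) + (1.0 - label) * math.log(1.0 - p))


def shaped_rewards(
    rm_score: float,
    policy_logprobs: Sequence[float],
    bc_logprobs: Sequence[float],
    beta: float = 0.1,  # hypothetical KL coefficient
) -> List[float]:
    """Per-token KL penalty, with the reward model score added at the final token.

    Assumes a non-empty episode and estimates the KL term by the log-ratio.
    """
    rewards = [-beta * (lp - lb) for lp, lb in zip(policy_logprobs, bc_logprobs)]
    rewards[-1] += rm_score
    return rewards


def best_of_n(candidates: Sequence[str], reward_fn: Callable[[str], float]) -> str:
    """Rejection sampling: keep the candidate ranked highest by the reward model."""
    return max(candidates, key=reward_fn)
```

Note that a tie with equal rewards gives a loss of log 2, the minimum achievable for a 50% label, and that `best_of_n` requires no gradient updates, only n forward passes of the policy and the reward model.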

We used mutually disjoint sets of questions for each of BC, RM and RL.

For BC, we held out around 4% of the demonstrations to use as a validation set.

For RM, we sampled answers for the comparison datasets in an ad-hoc manner, using models of

various sizes (but primarily the 175B model size), trained using various combinations of methods and

hyperparameters, and combined them into a single dataset. This was for data efficiency: we collected

many comparisons for evaluation purposes, such as for tuning hyperparameters, and did not want to

waste this data. Our final reward models were trained on around 16,000 comparisons, the remaining

5,500 being used for evaluation only.

For RL, we trained on a mixture of 90% questions from ELI5 and 10% questions from TriviaQA.

To improve sample efficiency, at the end of each episode we inserted 15 additional answering-only

episodes using the same references as the previous episode. We were motivated to try this because

answering explained slightly more of the variance in reward model score than browsing despite taking

many fewer steps, and we found it to improve sample efficiency by approximately a factor of 2. We

also randomized the maximum number of browsing actions, sampling uniformly from the range

20–100 inclusive.
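As a rough illustration of this episode schedule, the sketch below generates the sequence of RL episodes implied by the description: a question source drawn from the 90%/10% mixture, a browsing limit drawn uniformly from 20 to 100 inclusive, and 15 answering-only episodes after each full episode. The generator name, the tuple layout and the seeding are hypothetical.

```python
import random
from typing import Iterator, Optional, Tuple

Episode = Tuple[str, str, Optional[int]]  # (kind, question source, max browsing actions)


def rl_episode_schedule(num_browsing_episodes: int, seed: int = 0) -> Iterator[Episode]:
    """Yield full episodes, each followed by 15 answering-only episodes."""
    rng = random.Random(seed)
    for _ in range(num_browsing_episodes):
        # 90% of questions come from ELI5 and 10% from TriviaQA.
        source = "ELI5" if rng.random() < 0.9 else "TriviaQA"
        # randint includes both endpoints, matching "20-100 inclusive".
        max_actions = rng.randint(20, 100)
        yield ("browse_and_answer", source, max_actions)
        # Answering-only episodes reuse the references of the previous episode,
        # so they need no browsing budget.
        for _ in range(15):
            yield ("answer_only", source, None)
```

Each full episode therefore contributes 16 answers to the batch while requiring only one round of browsing.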

Hyperparameters for all of our training methods can be found in Appendix E.

4 Evaluation

In evaluating our approach, we focused on three “WebGPT” models, each of which was trained with

behavior cloning followed by rejection sampling against a reward model of the same size: a 760M

best-of-4 model, a 13B best-of-16 model and a 175B best-of-64 model. As discussed in Section 5.2,

these are compute-efficient models corresponding to different inference-time compute budgets. We

excluded RL for simplicity, since it did not provide significant benefit when combined with rejection

sampling (see Figure 4).

We evaluated all WebGPT models using a sampling temperature of 0.8, which was tuned using human

evaluations, and with a maximum number of browsing actions of 100.

4.1 ELI5

We evaluated WebGPT on the ELI5 test set in two different ways:

1. We compared model-generated answers to answers written by demonstrators using our

web-browsing environment. For these comparisons, we used the same procedure as comparisons

used for reward model training. We consider this to be a fair comparison, since the

instructions for demonstrations and comparisons emphasize a very similar set of criteria.

2. We compared model-generated answers to the reference answers from the ELI5 dataset,

which are the highest-voted answers from Reddit. In this case, we were concerned about

ecological validity, since our detailed comparison criteria may not match those of real-life

users. We were also concerned about blinding, since Reddit answers do not typically include

citations. To mitigate these concerns, we stripped all citations and references from the

model-generated answers, hired new contractors who were not familiar with our detailed

instructions, and gave them a much more minimal set of instructions, which are given in

Appendix F.

In both cases, we treat ties as 50% preference ratings (rather than excluding them).
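For concreteness, a preference rate with ties counted as half a win can be computed as in the following sketch. The `preference_rate` helper and the outcome labels are hypothetical names chosen for this example.

```python
from typing import Sequence


def preference_rate(outcomes: Sequence[str]) -> float:
    """Fraction of comparisons won by the model, counting each tie as 0.5."""
    scores = {"win": 1.0, "tie": 0.5, "loss": 0.0}
    return sum(scores[outcome] for outcome in outcomes) / len(outcomes)
```

For example, two wins, one tie and one loss give a preference rate of 2.5 / 4 = 62.5%, whereas excluding the tie would give 2 / 3.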

Our results are shown in Figure 2. Our best model, the 175B best-of-64 model, produces answers

that are preferred to those written by our human demonstrators 56% of the time. This suggests that

the use of human feedback is essential, since one would not expect to exceed 50% preference by

imitating demonstrations alone (although it may still be possible, by producing a less noisy policy).

The same model produces answers that are preferred to the reference answers from the ELI5 dataset

69% of the time. This is a substantial improvement over Krishna et al. [2021], whose best model’s

answers are preferred 23% of the time to the reference answers, although they use substantially less

compute than even our smallest model.
