没有合适的资源?快使用搜索试试~ 我知道了~

Large-scale cluster management at Google with Borg

需积分: 0 13 下载量 144 浏览量

2017-09-18

12:43:25

上传

评论

收藏 793KB PDF 举报

温馨提示

试读

17页

Large-scale cluster management at Google with Borg 这是一篇关于混合负载调度的经典论文,主要介绍针对不同负载类型进行混合调度的框架和实现方法。

资源推荐

资源详情

资源评论

Large-scale cluster management at Google with Borg

Abhishek Verma

†

Luis Pedrosa

‡

Madhukar Korupolu

David Oppenheimer Eric Tune John Wilkes

Google Inc.

Abstract

Google’s Borg system is a cluster manager that runs hun-

dreds of thousands of jobs, from many thousands of differ-

ent applications, across a number of clusters each with up to

tens of thousands of machines.

It achieves high utilization by combining admission con-

trol, efficient task-packing, over-commitment, and machine

sharing with process-level performance isolation. It supports

high-availability applications with runtime features that min-

imize fault-recovery time, and scheduling policies that re-

duce the probability of correlated failures. Borg simplifies

life for its users by offering a declarative job specification

language, name service integration, real-time job monitor-

ing, and tools to analyze and simulate system behavior.

We present a summary of the Borg system architecture

and features, important design decisions, a quantitative anal-

ysis of some of its policy decisions, and a qualitative ex-

amination of lessons learned from a decade of operational

experience with it.

1. Introduction

The cluster management system we internally call Borg ad-

mits, schedules, starts, restarts, and monitors the full range

of applications that Google runs. This paper explains how.

Borg provides three main benefits: it (1) hides the details

of resource management and failure handling so its users can

focus on application development instead; (2) operates with

very high reliability and availability, and supports applica-

tions that do the same; and (3) lets us run workloads across

tens of thousands of machines effectively. Borg is not the

first system to address these issues, but it’s one of the few op-

erating at this scale, with this degree of resiliency and com-

pleteness. This paper is organized around these topics, con-

†

Work done while author was at Google.

‡

Currently at University of Southern California.

Permission to make digital or hard copies of part or all of this work for personal or

classroom use is granted without fee provided that copies are not made or distributed

for profit or commercial advantage and that copies bear this notice and the full citation

on the first page. Copyrights for third-party components of this work must be honored.

For all other uses, contact the owner/author(s).

EuroSys’15, April 21–24, 2015, Bordeaux, France.

Copyright is held by the owner/author(s).

ACM 978-1-4503-3238-5/15/04.

http://dx.doi.org/10.1145/2741948.2741964

web browsers

BorgMaster

link shard

UI shard

BorgMaster

link shard

UI shard

BorgMaster

link shard

UI shard

BorgMaster

link shard

UI shard

Cell

Scheduler

borgcfg

command-line

tools

web browsers

scheduler

Borglet Borglet Borglet Borglet

BorgMaster

link shard

read/UI

shard

config

file

persistent store

(Paxos)

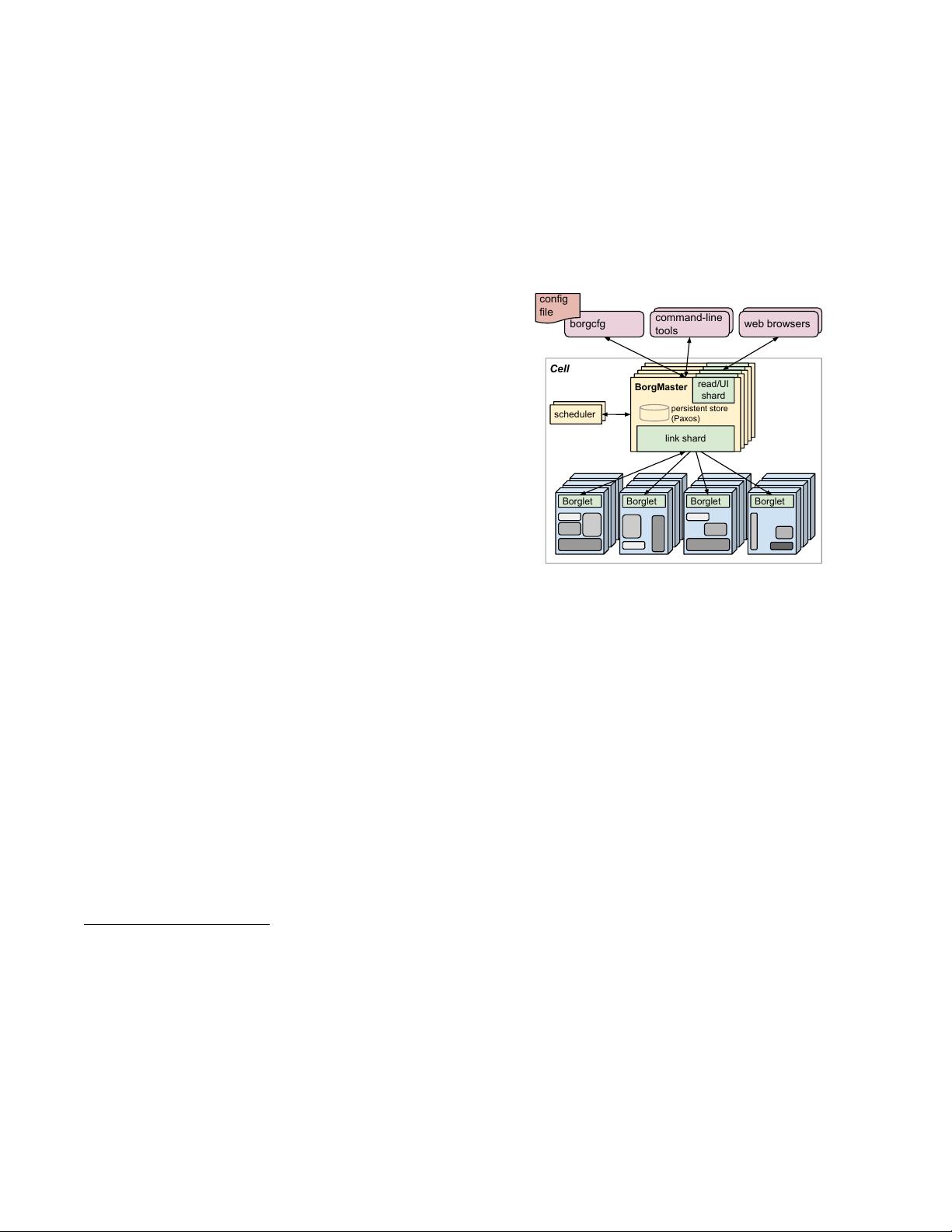

Figure 1: The high-level architecture of Borg. Only a tiny fraction

of the thousands of worker nodes are shown.

cluding with a set of qualitative observations we have made

from operating Borg in production for more than a decade.

2. The user perspective

Borg’s users are Google developers and system administra-

tors (site reliability engineers or SREs) that run Google’s

applications and services. Users submit their work to Borg

in the form of jobs, each of which consists of one or more

tasks that all run the same program (binary). Each job runs

in one Borg cell, a set of machines that are managed as a

unit. The remainder of this section describes the main fea-

tures exposed in the user view of Borg.

2.1 The workload

Borg cells run a heterogenous workload with two main parts.

The first is long-running services that should “never” go

down, and handle short-lived latency-sensitive requests (a

few µs to a few hundred ms). Such services are used for

end-user-facing products such as Gmail, Google Docs, and

web search, and for internal infrastructure services (e.g.,

BigTable). The second is batch jobs that take from a few

seconds to a few days to complete; these are much less sen-

sitive to short-term performance fluctuations. The workload

mix varies across cells, which run different mixes of applica-

tions depending on their major tenants (e.g., some cells are

quite batch-intensive), and also varies over time: batch jobs

come and go, and many end-user-facing service jobs see a

diurnal usage pattern. Borg is required to handle all these

cases equally well.

A representative Borg workload can be found in a publicly-

available month-long trace from May 2011 [80], which has

been extensively analyzed (e.g., [68] and [1, 26, 27, 57]).

Many application frameworks have been built on top of

Borg over the last few years, including our internal MapRe-

duce system [23], FlumeJava [18], Millwheel [3], and Pregel

[59]. Most of these have a controller that submits a master

job and one or more worker jobs; the first two play a similar

role to YARN’s application manager [76]. Our distributed

storage systems such as GFS [34] and its successor CFS,

Bigtable [19], and Megastore [8] all run on Borg.

For this paper, we classify higher-priority Borg jobs as

“production” (prod) ones, and the rest as “non-production”

(non-prod). Most long-running server jobs are prod; most

batch jobs are non-prod. In a representative cell, prod jobs

are allocated about 70% of the total CPU resources and rep-

resent about 60% of the total CPU usage; they are allocated

about 55% of the total memory and represent about 85% of

the total memory usage. The discrepancies between alloca-

tion and usage will prove important in §5.5.

2.2 Clusters and cells

The machines in a cell belong to a single cluster, defined by

the high-performance datacenter-scale network fabric that

connects them. A cluster lives inside a single datacenter

building, and a collection of buildings makes up a site.

1

A cluster usually hosts one large cell and may have a few

smaller-scale test or special-purpose cells. We assiduously

avoid any single point of failure.

Our median cell size is about 10 k machines after exclud-

ing test cells; some are much larger. The machines in a cell

are heterogeneous in many dimensions: sizes (CPU, RAM,

disk, network), processor type, performance, and capabili-

ties such as an external IP address or flash storage. Borg iso-

lates users from most of these differences by determining

where in a cell to run tasks, allocating their resources, in-

stalling their programs and other dependencies, monitoring

their health, and restarting them if they fail.

2.3 Jobs and tasks

A Borg job’s properties include its name, owner, and the

number of tasks it has. Jobs can have constraints to force

its tasks to run on machines with particular attributes such as

processor architecture, OS version, or an external IP address.

Constraints can be hard or soft; the latter act like preferences

rather than requirements. The start of a job can be deferred

until a prior one finishes. A job runs in just one cell.

Each task maps to a set of Linux processes running in

a container on a machine [62]. The vast majority of the

Borg workload does not run inside virtual machines (VMs),

1

There are a few exceptions for each of these relationships.

because we don’t want to pay the cost of virtualization.

Also, the system was designed at a time when we had a

considerable investment in processors with no virtualization

support in hardware.

A task has properties too, such as its resource require-

ments and the task’s index within the job. Most task proper-

ties are the same across all tasks in a job, but can be over-

ridden – e.g., to provide task-specific command-line flags.

Each resource dimension (CPU cores, RAM, disk space,

disk access rate, TCP ports,

2

etc.) is specified independently

at fine granularity; we don’t impose fixed-sized buckets or

slots (§5.4). Borg programs are statically linked to reduce

dependencies on their runtime environment, and structured

as packages of binaries and data files, whose installation is

orchestrated by Borg.

Users operate on jobs by issuing remote procedure calls

(RPCs) to Borg, most commonly from a command-line tool,

other Borg jobs, or our monitoring systems (§2.6). Most job

descriptions are written in the declarative configuration lan-

guage BCL. This is a variant of GCL [12], which gener-

ates protobuf files [67], extended with some Borg-specific

keywords. GCL provides lambda functions to allow calcula-

tions, and these are used by applications to adjust their con-

figurations to their environment; tens of thousands of BCL

files are over 1 k lines long, and we have accumulated tens

of millions of lines of BCL. Borg job configurations have

similarities to Aurora configuration files [6].

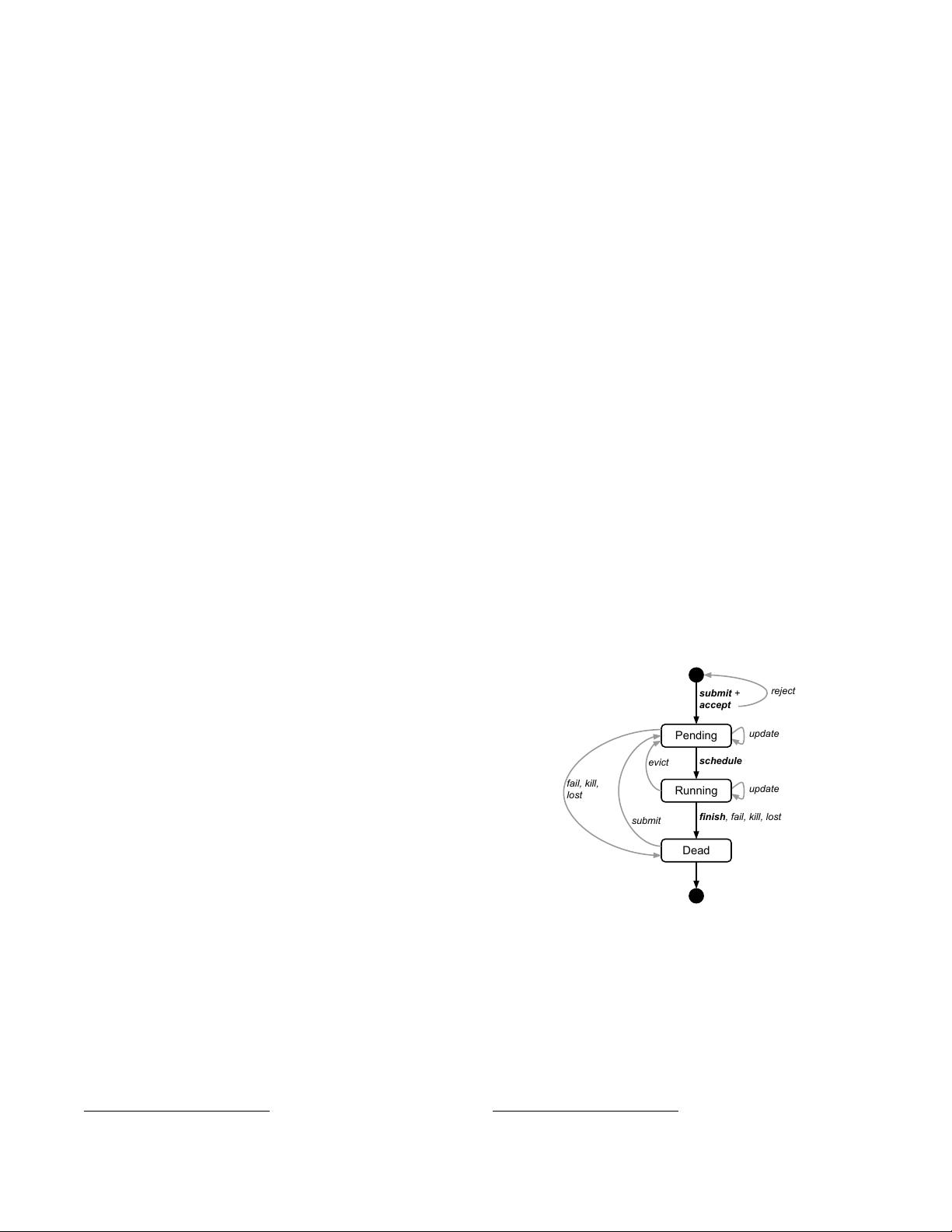

Figure 2 illustrates the states that jobs and tasks go

through during their lifetime.

submit +

accept

Pending

Running

Dead

update

schedule

update

finish, fail, kill, lost

submit

fail, kill,

lost

evict

reject

Figure 2: The state diagram for both jobs and tasks. Users can

trigger submit, kill, and update transitions.

A user can change the properties of some or all of the

tasks in a running job by pushing a new job configuration

to Borg, and then instructing Borg to update the tasks to

the new specification. This acts as a lightweight, non-atomic

transaction that can easily be undone until it is closed (com-

mitted). Updates are generally done in a rolling fashion, and

a limit can be imposed on the number of task disruptions

2

Borg manages the available ports on a machine and allocates them to tasks.

(reschedules or preemptions) an update causes; any changes

that would cause more disruptions are skipped.

Some task updates (e.g., pushing a new binary) will al-

ways require the task to be restarted; some (e.g., increasing

resource requirements or changing constraints) might make

the task no longer fit on the machine, and cause it to be

stopped and rescheduled; and some (e.g., changing priority)

can always be done without restarting or moving the task.

Tasks can ask to be notified via a Unix SIGTERM sig-

nal before they are preempted by a SIGKILL, so they have

time to clean up, save state, finish any currently-executing

requests, and decline new ones. The actual notice may be

less if the preemptor sets a delay bound. In practice, a notice

is delivered about 80% of the time.

2.4 Allocs

A Borg alloc (short for allocation) is a reserved set of re-

sources on a machine in which one or more tasks can be

run; the resources remain assigned whether or not they are

used. Allocs can be used to set resources aside for future

tasks, to retain resources between stopping a task and start-

ing it again, and to gather tasks from different jobs onto the

same machine – e.g., a web server instance and an associ-

ated logsaver task that copies the server’s URL logs from

the local disk to a distributed file system. The resources of

an alloc are treated in a similar way to the resources of a ma-

chine; multiple tasks running inside one share its resources.

If an alloc must be relocated to another machine, its tasks are

rescheduled with it.

An alloc set is like a job: it is a group of allocs that reserve

resources on multiple machines. Once an alloc set has been

created, one or more jobs can be submitted to run in it. For

brevity, we will generally use “task” to refer to an alloc or a

top-level task (one outside an alloc) and “job” to refer to a

job or alloc set.

2.5 Priority, quota, and admission control

What happens when more work shows up than can be ac-

commodated? Our solutions for this are priority and quota.

Every job has a priority, a small positive integer. A high-

priority task can obtain resources at the expense of a lower-

priority one, even if that involves preempting (killing) the

latter. Borg defines non-overlapping priority bands for dif-

ferent uses, including (in decreasing-priority order): moni-

toring, production, batch, and best effort (also known as

testing or free). For this paper, prod jobs are the ones in the

monitoring and production bands.

Although a preempted task will often be rescheduled

elsewhere in the cell, preemption cascades could occur if

a high-priority task bumped out a slightly lower-priority

one, which bumped out another slightly-lower priority task,

and so on. To eliminate most of this, we disallow tasks in

the production priority band to preempt one another. Fine-

grained priorities are still useful in other circumstances –

e.g., MapReduce master tasks run at a slightly higher priority

than the workers they control, to improve their reliability.

Priority expresses relative importance for jobs that are

running or waiting to run in a cell. Quota is used to decide

which jobs to admit for scheduling. Quota is expressed as

a vector of resource quantities (CPU, RAM, disk, etc.) at a

given priority, for a period of time (typically months). The

quantities specify the maximum amount of resources that

a user’s job requests can ask for at a time (e.g., “20 TiB

of RAM at prod priority from now until the end of July

in cell xx”). Quota-checking is part of admission control,

not scheduling: jobs with insufficient quota are immediately

rejected upon submission.

Higher-priority quota costs more than quota at lower-

priority. Production-priority quota is limited to the actual

resources available in the cell, so that a user who submits

a production-priority job that fits in their quota can expect it

to run, modulo fragmentation and constraints. Even though

we encourage users to purchase no more quota than they

need, many users overbuy because it insulates them against

future shortages when their application’s user base grows.

We respond to this by over-selling quota at lower-priority

levels: every user has infinite quota at priority zero, although

this is frequently hard to exercise because resources are over-

subscribed. A low-priority job may be admitted but remain

pending (unscheduled) due to insufficient resources.

Quota allocation is handled outside of Borg, and is inti-

mately tied to our physical capacity planning, whose results

are reflected in the price and availability of quota in differ-

ent datacenters. User jobs are admitted only if they have suf-

ficient quota at the required priority. The use of quota re-

duces the need for policies like Dominant Resource Fairness

(DRF) [29, 35, 36, 66].

Borg has a capability system that gives special privileges

to some users; for example, allowing administrators to delete

or modify any job in the cell, or allowing a user to access

restricted kernel features or Borg behaviors such as disabling

resource estimation (§5.5) on their jobs.

2.6 Naming and monitoring

It’s not enough to create and place tasks: a service’s clients

and other systems need to be able to find them, even after

they are relocated to a new machine. To enable this, Borg

creates a stable “Borg name service” (BNS) name for each

task that includes the cell name, job name, and task number.

Borg writes the task’s hostname and port into a consistent,

highly-available file in Chubby [14] with this name, which

is used by our RPC system to find the task endpoint. The

BNS name also forms the basis of the task’s DNS name,

so the fiftieth task in job jfoo owned by user ubar in cell

cc would be reachable via 50.jfoo.ubar.cc.borg.google.com.

Borg also writes job size and task health information into

Chubby whenever it changes, so load balancers can see

where to route requests to.

Almost every task run under Borg contains a built-in

HTTP server that publishes information about the health of

the task and thousands of performance metrics (e.g., RPC

latencies). Borg monitors the health-check URL and restarts

tasks that do not respond promptly or return an HTTP er-

ror code. Other data is tracked by monitoring tools for dash-

boards and alerts on service level objective (SLO) violations.

A service called Sigma provides a web-based user inter-

face (UI) through which a user can examine the state of all

their jobs, a particular cell, or drill down to individual jobs

and tasks to examine their resource behavior, detailed logs,

execution history, and eventual fate. Our applications gener-

ate voluminous logs; these are automatically rotated to avoid

running out of disk space, and preserved for a while after the

task’s exit to assist with debugging. If a job is not running

Borg provides a “why pending?” annotation, together with

guidance on how to modify the job’s resource requests to

better fit the cell. We publish guidelines for “conforming”

resource shapes that are likely to schedule easily.

Borg records all job submissions and task events, as well

as detailed per-task resource usage information in Infrastore,

a scalable read-only data store with an interactive SQL-like

interface via Dremel [61]. This data is used for usage-based

charging, debugging job and system failures, and long-term

capacity planning. It also provided the data for the Google

cluster workload trace [80].

All of these features help users to understand and debug

the behavior of Borg and their jobs, and help our SREs

manage a few tens of thousands of machines per person.

3. Borg architecture

A Borg cell consists of a set of machines, a logically central-

ized controller called the Borgmaster, and an agent process

called the Borglet that runs on each machine in a cell (see

Figure 1). All components of Borg are written in C++.

3.1 Borgmaster

Each cell’s Borgmaster consists of two processes: the main

Borgmaster process and a separate scheduler (§3.2). The

main Borgmaster process handles client RPCs that either

mutate state (e.g., create job) or provide read-only access

to data (e.g., lookup job). It also manages state machines

for all of the objects in the system (machines, tasks, allocs,

etc.), communicates with the Borglets, and offers a web UI

as a backup to Sigma.

The Borgmaster is logically a single process but is ac-

tually replicated five times. Each replica maintains an in-

memory copy of most of the state of the cell, and this state is

also recorded in a highly-available, distributed, Paxos-based

store [55] on the replicas’ local disks. A single elected mas-

ter per cell serves both as the Paxos leader and the state

mutator, handling all operations that change the cell’s state,

such as submitting a job or terminating a task on a ma-

chine. A master is elected (using Paxos) when the cell is

brought up and whenever the elected master fails; it acquires

a Chubby lock so other systems can find it. Electing a master

and failing-over to the new one typically takes about 10 s, but

can take up to a minute in a big cell because some in-memory

state has to be reconstructed. When a replica recovers from

an outage, it dynamically re-synchronizes its state from other

Paxos replicas that are up-to-date.

The Borgmaster’s state at a point in time is called a

checkpoint, and takes the form of a periodic snapshot plus a

change log kept in the Paxos store. Checkpoints have many

uses, including restoring a Borgmaster’s state to an arbitrary

point in the past (e.g., just before accepting a request that

triggered a software defect in Borg so it can be debugged);

fixing it by hand in extremis; building a persistent log of

events for future queries; and offline simulations.

A high-fidelity Borgmaster simulator called Fauxmaster

can be used to read checkpoint files, and contains a complete

copy of the production Borgmaster code, with stubbed-out

interfaces to the Borglets. It accepts RPCs to make state ma-

chine changes and perform operations, such as “schedule all

pending tasks”, and we use it to debug failures, by interact-

ing with it as if it were a live Borgmaster, with simulated

Borglets replaying real interactions from the checkpoint file.

A user can step through and observe the changes to the sys-

tem state that actually occurred in the past. Fauxmaster is

also useful for capacity planning (“how many new jobs of

this type would fit?”), as well as sanity checks before mak-

ing a change to a cell’s configuration (“will this change evict

any important jobs?”).

3.2 Scheduling

When a job is submitted, the Borgmaster records it persis-

tently in the Paxos store and adds the job’s tasks to the pend-

ing queue. This is scanned asynchronously by the scheduler,

which assigns tasks to machines if there are sufficient avail-

able resources that meet the job’s constraints. (The sched-

uler primarily operates on tasks, not jobs.) The scan pro-

ceeds from high to low priority, modulated by a round-robin

scheme within a priority to ensure fairness across users and

avoid head-of-line blocking behind a large job. The schedul-

ing algorithm has two parts: feasibility checking, to find ma-

chines on which the task could run, and scoring, which picks

one of the feasible machines.

In feasibility checking, the scheduler finds a set of ma-

chines that meet the task’s constraints and also have enough

“available” resources – which includes resources assigned

to lower-priority tasks that can be evicted. In scoring, the

scheduler determines the “goodness” of each feasible ma-

chine. The score takes into account user-specified prefer-

ences, but is mostly driven by built-in criteria such as mini-

mizing the number and priority of preempted tasks, picking

machines that already have a copy of the task’s packages,

spreading tasks across power and failure domains, and pack-

ing quality including putting a mix of high and low priority

剩余16页未读,继续阅读

资源评论

ShaoKaiyang

- 粉丝: 76

- 资源: 2

上传资源 快速赚钱

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

前往需求广场,查看用户热搜

前往需求广场,查看用户热搜最新资源

- docker一键安装包

- Screenshot_20240430_144340_com.ss.android.ugc.live.jpg

- 回到山沟沟.mp3

- 基于matlab实现自适应波束形成RLS及LMS算法仿真源程序1.rar

- 基于matlab实现自己编写的基于卡尔曼滤波的利用加速度传感器的计步器,测试数据是传感器放在腰部和手臂 .rar

- 基于matlab实现阵列信号处理,波束形成.rar

- 111111111111111111

- 基于matlab实现计步器编程;对当前的计步器装置的数值算法模拟 .rar

- Mdb学习查看PW;access;mdb;pw;password;patch

- 基于matlab实现关于语音信号声源定位DOA估计所用的一些传统算法.rar

资源上传下载、课程学习等过程中有任何疑问或建议,欢迎提出宝贵意见哦~我们会及时处理!

点击此处反馈

安全验证

文档复制为VIP权益,开通VIP直接复制

信息提交成功

信息提交成功