Fast Visual Tracking via

Dense Spatio-Temporal Context Learning

Kaihua Zhang

1

, Lei Zhang

2

, Qingshan Liu

1

, David Zhang

2

, and Ming-Hsuan Yang

3

1

S-mart Group, Nanjing University of Information Science & Technology

2

Dept. of Computing, The Hong Kong Polytechnic University

3

Electrical Engineering and Computer Science, University of California at Merced

zhkhua@gmail.com,cslzhang@comp.polyu.edu.hk,qsliu@nuist.edu.cn,

csdzhang@comp.polyu.edu.hk,mhyang@ucmerced.edu

Abstract. In this paper, we present a simple yet fast and robust algorithm which

exploits the dense spatio-temporal context for visual tracking. Our approach for-

mulates the spatio-temporal relationships between the object of interest and its

locally dense contexts in a Bayesian framework, which models the statistical cor-

relation between the simple low-level features (i.e., image intensity and position)

from the target and its surrounding regions. The tracking problem is then posed

by computing a confidence map which takes into account the prior information

of the target location and thereby alleviates target location ambiguity effectively.

We further propose a novel explicit scale adaptation scheme, which is able to deal

with target scale variations efficiently and effectively. The Fast Fourier Trans-

form (FFT) is adopted for fast learning and detection in this work, which only

needs 4 FFT operations. Implemented in MATLAB without code optimization,

the proposed tracker runs at 350 frames per second on an i7 machine. Extensive

experimental results show that the proposed algorithm performs favorably against

state-of-the-art methods in terms of efficiency, accuracy and robustness.

1 Introduction

Visual tracking is one of the most active research topics due to its wide range of applica-

tions such as motion analysis, activity recognition, surveillance, and human-computer

interaction, to name a few [29]. The main challenge for robust visual tracking is to han-

dle large appearance changes of the target object and the background over time due to

occlusion, illumination changes, and pose variation. Numerous algorithms have been

proposed with focus on effective appearance models, which are based on the target ap-

pearance [8,1,28,22,17,18,19,23,21,31] or the difference between appearances of the

target and its local background [11,16,14,2,30,15]. However, if the appearances are de-

graded severely, there does not exist enough information extracted for robustly tracking

the target, whereas its existing scene can provide useful context information to help

localizing it.

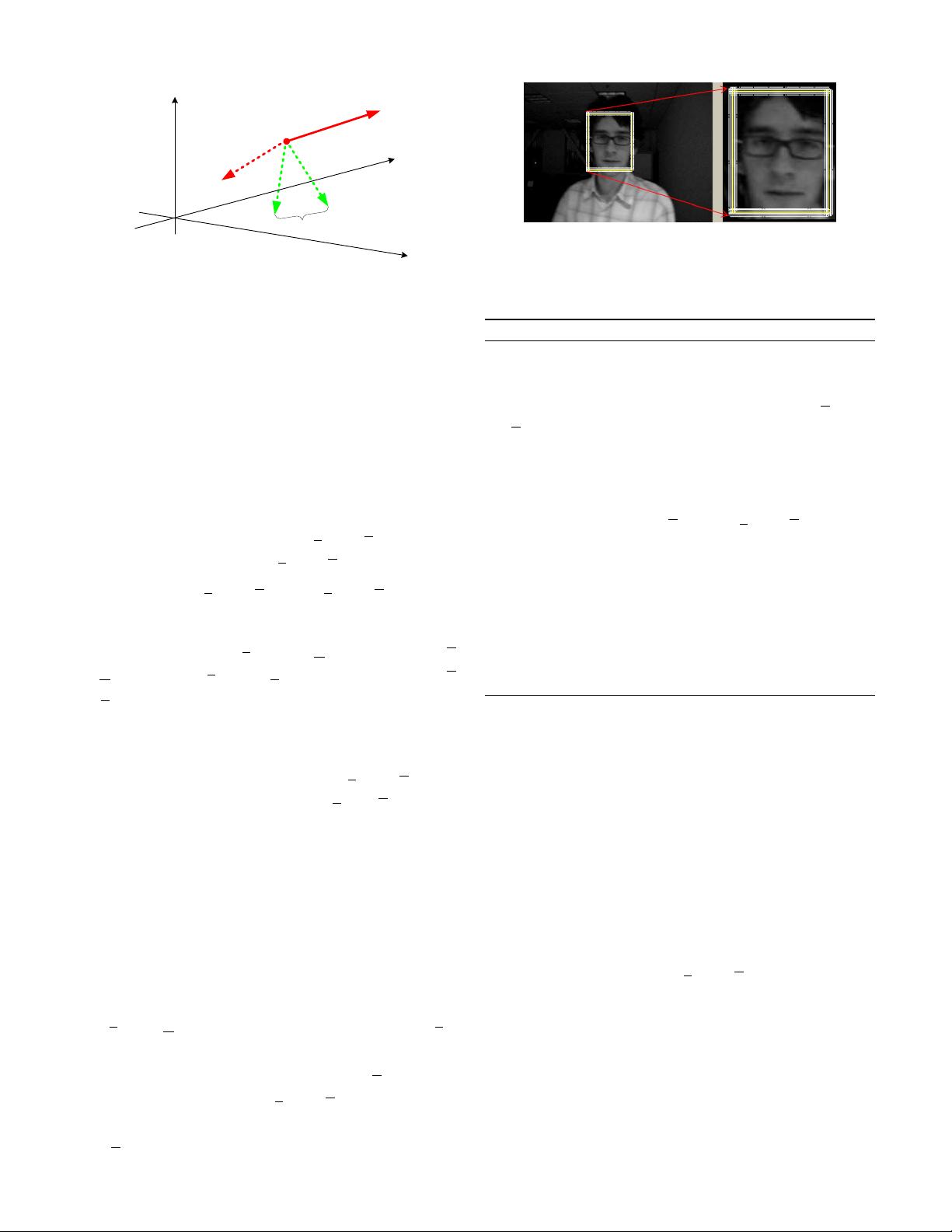

In visual tracking, a local context consists of a target object and its immediate sur-

rounding background within a determined region (see the regions inside the red rect-

angles in Figure 1). Most of local contexts remain unchanged as changes between two

consecutive frames can be reasonably assumed to be smooth as the time interval is usu-

ally small (30 frames per second (FPS)). Therefore, there exists a strong spatio-temporal