没有合适的资源?快使用搜索试试~ 我知道了~

资源推荐

资源详情

资源评论

Chapter 10

CART: Classification and Regression Trees

Dan Steinberg

Contents

10.1 Antecedents ......................................................... 180

10.2 Overview ........................................................... 181

10.3 A Running Example ................................................. 181

10.4 The Algorithm Briefly Stated ........................................ 183

10.5 Splitting Rules ...................................................... 185

10.6 Prior Probabilities and Class Balancing ............................... 187

10.7 Missing Value Handling ............................................. 189

10.8 Attribute Importance ................................................ 190

10.9 Dynamic Feature Construction ....................................... 191

10.10 Cost-Sensitive Learning ............................................. 192

10.11 Stopping Rules, Pruning, Tree Sequences, and Tree Selection ......... 193

10.12 Probability Trees .................................................... 194

10.13 Theoretical Foundations ............................................. 196

10.14 Post-CART Related Research ........................................ 196

10.15 Software Availability ................................................ 198

10.16 Exercises ............................................................ 198

References .................................................................. 199

The 1984 monograph, “CART: Classification and Regression Trees,” coauthored by

Leo Breiman, Jerome Friedman, Richard Olshen, and Charles Stone (BFOS), repre-

sents a major milestone in the evolution of artificial intelligence, machine learning,

nonparametric statistics, and data mining. The work is important for the compre-

hensiveness of its study of decision trees, the technical innovations it introduces, its

sophisticated examplesoftree-structureddata analysis, and its authoritativetreatment

of large sample theory for trees. Since its publication the CART monograph has been

cited some 3000 times according to the science and social science citation indexes;

Google Scholar reports about 8,450 citations. CART citations can be found in almost

any domain, with many appearing in fields such as credit risk, targeted marketing, fi-

nancial markets modeling, electrical engineering, quality control, biology, chemistry,

and clinical medical research. CART has also strongly influenced image compression

179

© 2009 by Taylor & Francis Group, LLC

180 CART: Classification and Regression Trees

via tree-structured vector quantization. This brief account is intended to introduce

CART basics, touching on the major themes treated in the CART monograph, and to

encourage readers to return to the rich original source for technical details, discus-

sions revealing the thought processes of the authors, and examples of their analytical

style.

10.1 Antecedents

CART was not the first decision tree to be introduced to machine learning, although

it is the first to be described with analytical rigor and supported by sophisticated

statistics and probability theory. CART explicitly traces its ancestry to the auto-

matic interaction detection (AID) tree of Morgan and Sonquist (1963), an automated

recursive method for exploring relationships in data intended to mimic the itera-

tive drill-downs typical of practicing survey data analysts. AID was introduced as a

potentially useful tool without any theoretical foundation. This 1960s-era work on

trees was greeted with profound skepticism amidst evidence that AID could radically

overfit the training data and encourage profoundly misleading conclusions (Einhorn,

1972; Doyle, 1973), especially in smaller samples. By 1973 well-read statisticians

were convinced that trees were a dead end; the conventional wisdom held that trees

were dangerous and unreliable tools particularly because of their lack of a theoretical

foundation. Other researchers, however, were not yet prepared to abandon the tree

line of thinking. The work of Cover and Hart (1967) on the large sample properties

of nearest neighbor (NN) classifiers was instrumental in persuading Richard Olshen

and Jerome Friedman that trees had sufficient theoretical merit to be worth pursu-

ing. Olshen reasoned that if NN classifiers could reach the Cover and Hart bound

on misclassification error, then a similar result should be derivable for a suitably

constructed tree because the terminal nodes of trees could be viewed as dynami-

cally constructed NN classifiers. Thus, the Cover and Hart NN research was the

immediate stimulus that persuaded Olshen to investigate the asymptotic properties of

trees. Coincidentally, Friedman’s algorithmic work on fast identification of nearest

neighbors via trees (Friedman, Bentley, and Finkel, 1977) used a recursive partition-

ing mechanism that evolved into CART. One predecessor of CART appears in the

1975 Stanford Linear Accelerator Center (SLAC) discussion paper (Friedman,1975),

subsequently published in a shorter form by Friedman (1977). While Friedman was

working out key elements of CART at SLAC, with Olshen conducting mathemat-

ical research in the same lab, similar independent research was under way in Los

Angeles by Leo Breiman and Charles Stone (Breiman and Stone, 1978). The two

separate strands of research (Friedman and Olshen at Stanford, Breiman and Stone

in Los Angeles) were brought together in 1978 when the four CART authors for-

mally began the process of merging their work and preparing to write the CART

monograph.

© 2009 by Taylor & Francis Group, LLC

10.3 A Running Example 181

10.2 Overview

The CART decision tree is a binary recursive partitioning procedure capable of pro-

cessing continuous and nominal attributes as targets and predictors. Data are handled

in their raw form; no binning is required or recommended. Beginning in the root

node, the data are split into two children, and each of the children is in turn split into

grandchildren. Trees are grown to a maximal size without the use of a stopping rule;

essentially the tree-growing process stops when no further splits are possible due to

lack of data. The maximal-sized tree is then pruned back to the root (essentially split

by split) via the novel method of cost-complexity pruning. The next split to be pruned

is the one contributingleast to the overallperformance of the tree on training data (and

more than one split may be removed at a time). The CART mechanism is intended

to produce not one tree, but a sequence of nested pruned trees, each of which is a

candidate to be the optimal tree. The “right sized” or “honest” tree is identified by

evaluating the predictive performance of every tree in the pruning sequence on inde-

pendent test data. Unlike C4.5, CART does not use an internal (training-data-based)

performance measure for tree selection. Instead, tree performance is alwaysmeasured

on independent test data (or via cross-validation) and tree selection proceeds only af-

ter test-data-based evaluation. If testing or cross-validation has not been performed,

CART remains agnostic regarding which tree in the sequence is best. This is in sharp

contrast to methods such as C4.5 or classical statistics that generate preferred models

on the basis of training data measures.

The CART mechanism includes (optional) automatic class balancing and auto-

matic missing value handling, and allows for cost-sensitive learning, dynamic feature

construction, and probability tree estimation. The final reports include a novel at-

tribute importance ranking. The CART authors also broke new ground in showing

how cross-validation can be used to assess performance for every tree in the pruning

sequence, given that trees in different cross-validation folds may not align on the

number of terminal nodes. It is useful to keep in mind that although BFOS addressed

all these topics in the 1970s, in some cases the BFOS treatment remains the state-of-

the-art. The literature of the 1990s contains a number of articles that rediscover core

insights first introduced in the 1984 CART monograph. Each of these major features

is discussed separately below.

10.3 A Running Example

To help make the details of CART concrete we illustrate some of our points using an

easy-to-understand real-world example. (The data have been altered to mask some of

the original specifics.) In the early 1990s the author assisted a telecommunications

company in understanding the market for mobile phones. Because the mobile phone

© 2009 by Taylor & Francis Group, LLC

182 CART: Classification and Regression Trees

TABLE 10.1

Example Data Summary Statistics

Attribute N N Missing % Missing N Distinct Mean Min Max

AGE 813 18 2.2 9 5.059 1 9

CITY 830 0 0 5 1.769 1 5

HANDPRIC 830 0 0 4 145.3 60 235

MARITAL 822 9 1.1 3 1.9015 1 3

PAGER 825 6 0.72 2 0.076364 0 1

RENTHOUS 830 0 0 3 1.7906 1 3

RESPONSE 830 0 0 2 0.1518 0 1

SEX 819 12 1.4 2 1.4432 1 2

TELEBILC 768 63 7.6 6 54.199 8 116

TRAVTIME 651 180 22 5 2.318 1 5

USEPRICE 830 0 0 4 11.151 10 30

MARITAL = Marital Status (Never Married, Married, Divorced/Widowed)

TRAVTIME = estimated commute time to major center of employment

AGE is recorded as an integer ranging from 1 to 9

was a new technology at that time, we needed to identify the major drivers of adoption

of this then-new technology and to identify demographics that might be related to

price sensitivity. The data consisted of a household’s response (yes/no) to a market

test offer of a mobile phone package; all prospects were offered an identical package

of a handset and service features, with one exception that the pricing for the package

was varied randomly according to an experimental design. The only choice open to

the households was to accept or reject the offer.

A total of 830 households were approached and 126 of the households agreed to

subscribe to the mobile phone service plan. One of our objectives was to learn as

much as possible about the differences between subscribers and nonsubscribers. A

set of summary statistics for select attributes appear in Table 10.1. HANDPRIC is the

price quoted for the mobile handset, USEPRIC is the quoted per-minute charge, and

the other attributes are provided with common names.

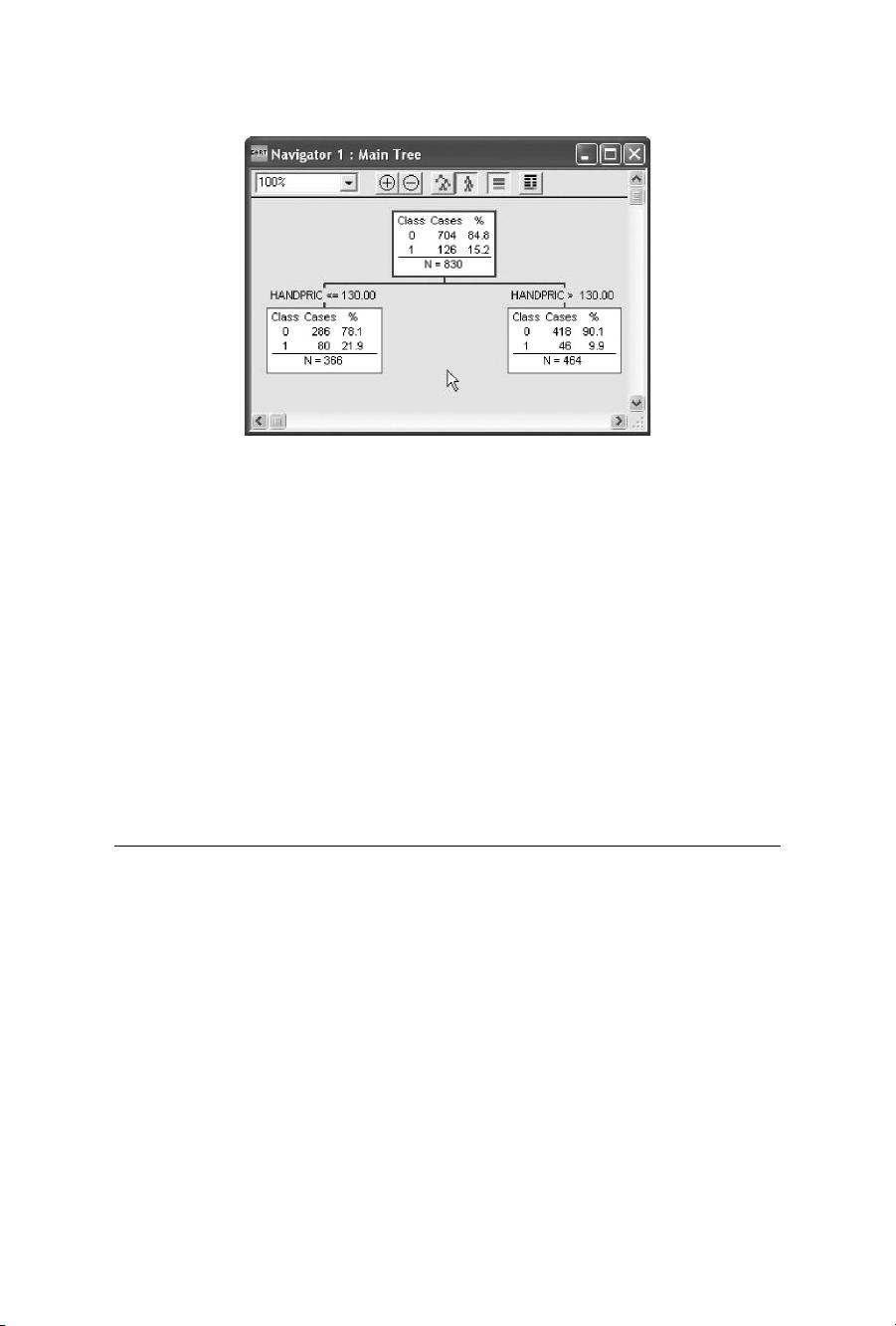

A CART classification tree was grown on these data to predict the RESPONSE

attribute using all the other attributes as predictors. MARITAL and CITY are cate-

gorical (nominal) attributes. A decision tree is grown by recursively partitioning the

training data using a splitting rule to identify the split to use at each node. Figure 10.1

illustrates this process beginning with the root node splitter at the top of the tree.

The root node at the top of the diagram contains all our training data, including 704

nonsubscribers (labeled with a 0) and 126 subscribers (labeled 1). Each of the 830

instances contains data on the 10 predictor attributes, although there are some missing

values. CART begins by searching the data for the best splitter available, testing each

predictor attribute-value pair for its goodness-of-split. In Figure 10.1 we see the

results of this search: HANDPRIC has been determined to be the best splitter using a

threshold of 130 to partition the data. All instances presented with a HANDPRIC less

than or equal to 130 are sent to the left child node and all other instances are sent to

the right. The resulting split yields two subsets of the data with substantially different

© 2009 by Taylor & Francis Group, LLC

10.4 The Algorithm Briefly Stated 183

Figure 10.1 Root node split.

response rates: 21.9% for those quoted lower prices and 9.9% for those quoted the

higher prices. Clearly both the root node splitter and the magnitude of the difference

between the two child nodes are plausible. Observe that the split always results in

two nodes: CART uses only binary splitting.

To generate a complete tree CART simply repeats the splitting process just

described in each of the two child nodes to produce grandchildren of the root. Grand-

children are split to obtain great-grandchildren and so on until further splitting is

impossible due to a lack of data. In our example, this growing process results in a

“maximal tree” consisting of 81 terminal nodes: nodes at the bottom of the tree that

are not split further.

10.4 The Algorithm Briefly Stated

A complete statement of the CART algorithm, including all relevant technical details,

is lengthy and complex; there are multiple splitting rules available for both classifica-

tion and regression, separate handling of continuous and categorical splitters, special

handling for categorical splitters with many levels, and provision for missing value

handling. Following the tree-growing procedure there is another complex procedure

for pruning the tree, and finally, there is tree selection. In Figure 10.2 a simplified

algorithm for tree growing is sketched out. Formal statements of the algorithm are

provided in the CART monograph. Here we offer an informal statement that is highly

simplified.

Observethat this simplified algorithm sketch makes no reference to missing values,

classassignments,orothercoredetailsofCART. Thealgorithmsketchesamechanism

for growing the largest possible (maximal) tree.

© 2009 by Taylor & Francis Group, LLC

剩余22页未读,继续阅读

资源评论

huangyong0223

- 粉丝: 5

- 资源: 14

上传资源 快速赚钱

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

前往需求广场,查看用户热搜

前往需求广场,查看用户热搜安全验证

文档复制为VIP权益,开通VIP直接复制

信息提交成功

信息提交成功