没有合适的资源?快使用搜索试试~ 我知道了~

资源推荐

资源详情

资源评论

Breaking the Limits of Message Passing Graph Neural Networks

Muhammet Balcilar

1 2

Pierre H

´

eroux

1

Benoit Ga

¨

uz

`

ere

3

Pascal Vasseur

1 4

S

´

ebastien Adam

1

Paul Honeine

1

Abstract

Since the Message Passing (Graph) Neural Net-

works (MPNNs) have a linear complexity with

respect to the number of nodes when applied

to sparse graphs, they have been widely imple-

mented and still raise a lot of interest even though

their theoretical expressive power is limited to

the first order Weisfeiler-Lehman test (1-WL). In

this paper, we show that if the graph convolution

supports are designed in spectral-domain by a non-

linear custom function of eigenvalues and masked

with an arbitrary large receptive field, the MPNN

is theoretically more powerful than the 1-WL test

and experimentally as powerful as a 3-WL exist-

ing models, while remaining spatially localized.

Moreover, by designing custom filter functions,

outputs can have various frequency components

that allow the convolution process to learn differ-

ent relationships between a given input graph sig-

nal and its associated properties. So far, the best

3-WL equivalent graph neural networks have a

computational complexity in

O(n

3

)

with memory

usage in

O(n

2

)

, consider non-local update mech-

anism and do not provide the spectral richness of

output profile. The proposed method overcomes

all these aforementioned problems and reaches

state-of-the-art results in many downstream tasks.

1. Introduction

In the past few years, finding the best inductive bias for

relational data represented as graphs has gained a lot of

interest in the machine learning community. Node-based

message passing mechanisms relying on the graph structure

have given rise to the first generation of Graph Neural Net-

works (GNNs) called Message Passing Neural Networks

(MPNNs) (Gilmer et al., 2017). These algorithms spread

each node features to the neighborhood nodes using train-

1

LITIS Lab, University of Rouen Normandy, France

2

InterDigital, France

3

LITIS Lab, INSA Rouen Normandy, France

4

MIS Lab, Universit

´

e de Picardie Jules Verne, France. Correspon-

dence to: Muhammet Balcilar

<

muhammetbalcilar@gmail.com

>

.

Proceedings of the

38

th

International Conference on Machine

Learning, PMLR 139, 2021. Copyright 2021 by the author(s).

able weights. These weights can be shared with respect

to the distance between nodes (Chebnet GNN) (Defferrard

et al., 2016), to the connected nodes features (GAT for graph

attention network) (Veli

ˇ

ckovi

´

c et al., 2018) and/or to edge

features (Bresson & Laurent, 2018). When considering

sparse graphs, the memory and computational complexity

of such approaches are linear with respect to the number of

nodes. As a consequence, these algorithms are feasible for

large sparse graphs and thus have been applied with success

on many downstream tasks (Dwivedi et al., 2020).

Despite these successes and these interesting computational

properties, it has been shown that MPNNs are not pow-

erful enough (Xu et al., 2019). Considering two non-

isomorphic graphs that are not distinguishable by the first

order Weisfeiler-Lehman test (known as the 1-WL test), ex-

isting maximum powerful MPNNs embed them to the same

point. Thus, from a theoretical expressive power point of

view, these algorithms are not more powerful than the 1-WL

test. Beyond the graph isomorphism issue, it has also been

shown that many other combinatorial problems on graph

cannot be solved by MPNNs (Sato et al., 2019).

In (Maron et al., 2019b; Keriven & Peyr

´

e, 2019), it has been

proven that in order to reach universal approximation, higher

order relations are required. In this context, some powerful

models that are equivalent to the 3-WL test were proposed.

For instance, (Maron et al., 2019a) proposed the model

PPGN (Provably Powerful Graph Network) that mimics the

second order Folklore WL test (2-FWL), which is equivalent

to the 3-WL test. In (Morris et al., 2019), they proposed to

use message passing between 1, 2 and 3 order node tuples

hierarchically, thus reaching the 3-WL expressive power.

However, using such relations makes both memory usage

and computational complexities grown exponentially. Thus,

it is not feasible to have universal approximation models in

practice.

In order to increase the theoretical expressive power of

MPNNs by keeping the linear complexity mentioned above,

some researchers proposed to partly randomize node fea-

tures (Abboud et al., 2020; Sato et al., 2020) or to add a

unique label (Murphy et al., 2019) in order to have the

ability to distinguish two non-isomorphic graphs that are

not distinguished by the 1-WL test. These solutions need

massively training samples and involve slow convergence.

arXiv:2106.04319v1 [cs.LG] 8 Jun 2021

Breaking the Limits of Message Passing Graph Neural Networks

(Bouritsas et al., 2020; Dasoulas et al., 2020) proposed to

use a preprocessing step to extract some features that cannot

be extracted by MPNNs. Thus, the expressive power of

their GNN is improved. However, these handcrafted fea-

tures need domain expertise and a feature selection process

among an infinite number of possibilities.

All these studies target more theoretically powerful models,

closer to universal approximation. However, this does not

always induce a better generalization ability. Since most of

the realistic problems are given with many node/edge fea-

tures (which can be either continuous or discrete), there is

almost no pair of graphs that are not distinguishable by the

1-WL test in practice. In addition, theoretically more power-

ful methods use non-local updates, breaking one of the most

important inductive bias in Euclidean learning named local-

ity principle (Battaglia et al., 2018). These may explain why

theoretical powerful methods cannot outperform MPNNs

on many downstream tasks, as reported in (Dwivedi et al.,

2020). On the other hand, it is obvious that 1-WL equivalent

GNNs are not expressive enough since they are not able to

count some simple structural features such as cycles or trian-

gles (Arvind et al., 2020; Chen et al., 2020; Bouritsas et al.,

2020; Vignac et al., 2020), which are informative for some

social or chemical graphs. Finally, another important aspect

mentioned by a recent paper (Balcilar et al., 2021) concerns

the spectral ability of GNN models. It is shown that a vast

majority of the MPNNs actually work as low-pass filters,

thus reducing their expressive power.

In this paper, we propose to design graph convolution in the

spectral domain with custom non-linear functions of eigen-

values and by masking the convolution support with desired

length of receptive field. In this way, we have (i) a spatially

local updates process, (ii) linear memory and computational

complexities (except the eigendecomposition in preprocess-

ing step), (iii) enough spectral ability and (iv) a model that is

theoretically more powerful than the 1-WL test, and experi-

mentally as powerful as PPGN. Experiments show that the

proposed model can distinguish pairs of graphs that cannot

be distinguished by 1-WL equivalent MPNNs. It is also able

to count some substructures that 1-WL equivalent MPNNs

cannot. Its spectral ability enables to produce various kind

of spectral components in the output, while the vast majority

of the GNNs including higher order WL equivalent models

do not. Finally, thanks to the sparse matrix multiplication, it

has linear time complexity except the eigendecomposition

in preprocessing step.

The paper is structured as follows. In Section 2, we set

the notations and the general framework used in the follow-

ing. Section 3 is dedicated to the characterization of WL

test, which is the backbone of our theoretical analysis. It

is followed by our findings in Section 4 on analysing the

expressive power of MPNNs and our solutions to improve

expressive power of MPNNs in Section 5. The experimental

results and conclusion are the last two section of this paper.

2. Generalization of Spectral and Spatial

MPNN

Let

G

be a graph with

n

nodes and an arbitrary number

of edges. Connectivity is given by the adjacency matrix

A ∈ {0, 1}

n×n

and features are defined on nodes by

X ∈

R

n×f

0

, with

f

0

the length of feature vectors. For any matrix

X

, we used

X

i

,

X

:j

and

X

i,j

to refer to its

i

-th column

vector,

j

-th row vector and (

i, j

)-th entry, respectively. A

graph Laplacian is given by

L = D − A

(or

L = I −

D

−1/2

AD

−1/2

) where

D ∈ R

n×n

is the diagonal degree

matrix and

I

is the identity. Through an eigendecomposition,

L

can be written by

L = Udiag(λ)U

T

where each column

of

U ∈ R

n×n

is an eigenvector of

L

,

λ ∈ R

n

gathers

the eigenvalues of L and

diag(·)

creates a diagonal matrix

whose diagonal elements are from a given vector. We use

superscripts to refer to vectors or matrices evolving through

iterations or layers. For instance,

H

(l)

∈ R

n×f

l

refers to

the node representation on layer

l

whose feature dimension

is f

l

.

GNN models rely on a set of layers where each layer takes

the node representation of the previous layer

H

(l−1)

as input

and produces a new representation

H

(l)

, with

H

(0)

= X

.

According to the domain which is considered to design the

layer computations, GNNs are generally classified as either

spectral or spatial (Wu et al., 2019; Chami et al., 2020).

Spectral GNNs rely on the spectral graph theory (Chung,

1997). In this framework, signals on graphs are filtered using

the eigendecomposition of the graph Laplacian (Shuman

et al., 2013). By transposing the convolution theorem to

graphs, the spectral filtering in the frequency domain can

be defined by

x

flt

= Udiag(Ω(λ))U

>

x

, where

Ω(.)

is

the desired filter function which needs to be learnt by back-

propagation. On the other hand, spatial GNNs, such as GCN

(graph convolutional network) (Kipf & Welling, 2017) and

GraphSage (Hamilton et al., 2017), consider two operators,

one that aggregates the connected nodes messages and one

that updates the concerned node representation.

In a recent paper (Balcilar et al., 2021), it was explicitly

shown that both spatial and spectral GNNs are MPNN, tak-

ing the general form

H

(l+1)

= σ

X

s

C

(s)

H

(l)

W

(l,s)

, (1)

where

C

(s)

∈ R

n×n

is the

s

-th convolution support that

defines how the node features are propagated to the neigh-

boring nodes and

W

(l,s)

∈ R

f

l

×f

l+1

is the trainable matrix

for the

l

-th layer and

s

-th support. Within this generalization,

GNNs differ from each other by the design of the convo-

lution supports

C

(s)

. If the supports are designed in the

Breaking the Limits of Message Passing Graph Neural Networks

spectral domain by

Φ

s

(λ)

, the convolution support needs to

be written as C

(s)

= Udiag(Φ

s

(λ))U

>

.

One can see that as long as

C

(s)

matrices are sparse (number

of edges is defined by some constant multiplied by the

number of nodes), MPNN in Eq.1 has linear memory and

computational complexities with respect to the number of

nodes. Because, the valid entries in

C

(s)

that we need to

keep is linear with respect to the number of nodes and thank

to the sparse matrix multiplication

C

(s)

H

(l)

takes linear

time with respect to the number of edges thus nodes as well.

3. Characterization of Weisfeiler-Lehman

The universality of a GNN is based on its ability to embed

two non-isomorphic graphs to distinct points in the target

feature space. A model that can distinguish all pairs of non-

isomorphic graphs is a universal approximator. Since the

graph isomorphism problem is NP-intermediate (Takapoui

& Boyd, 2016), the Weisfeiler-Lehman Test (abbreviated

WL-test), which gives sufficient but not enough evidence

of graph isomorphism, is frequently used for characterizing

GNN expressive power. The classical vertex coloring WL

test can be extended by taking into account higher order of

node tuple within the iterative process. These extensions are

denoted as

k

-WL test, where

k

is equals to the order of the

tuple. These tests are described in Appendix A.

It is shown in (Arvind et al., 2020) that for

k ≥ 2

,

(k + 1)

-

WL

> (k)

-WL, i.e., higher order of tuple leads to a better

ability to distinguish two non-isomorphic graphs. For

k = 1

,

this statement is not true, and 2-WL is not more powerful

than 1-WL (Maron et al., 2019a). To clarify this point,

the Folkore WL (FWL) test has been defined such that

1-WL=1-FWL, but for

k ≥ 2

, we have

(k + 1)

-WL

≈

(k)-FWL (Maron et al., 2019a).

In literature, some confusions occur among the two ver-

sions. Some papers use WL test order (Morris et al., 2019;

Maron et al., 2019a), while others use FWL order under the

name of WL such as in (Abboud et al., 2020; Arvind et al.,

2020; Takapoui & Boyd, 2016). In this paper, we explicitly

mention both WL and FWL equivalent.

In order to better understand the capability of WL tests,

some papers attempt to characterize these tests using a first

order logic (Immerman & Lander, 1990; Barcel

´

o et al.,

2019). Consider two unlabeled and undirected graphs repre-

sented by their adjacency matrices

A

G

and

A

H

. These two

graphs are said

k

-WL (or

k

-FWL) equivalent, and denoted

A

G

≡

k−W L

A

H

, if they are indistinguishable by a

k

-WL

(or k-FWL) test.

Recently (Brijder et al., 2019; Geerts, 2020) proposed a

new Matrix Language called MATLANG. This language

includes different operations on matrices and makes some

explicit connections between specific dictionaries of oper-

ations and the 1-WL and 3-WL tests. Expressive power

varies with the operations included in each dictionnary.

Definition 1. M L(L)

is a matrix language with an al-

lowed operation set

L = {op

1

, . . . op

n

}

, where

op

i

∈

{., +,

>

, diag, tr, 1, , ×, f}

. The possible operations are

matrices multiplication and addition, matrix transpose,

vector diagonalization, matrix trace computation, column

vector full of 1, element-wise matrix multiplication, ma-

trix/scalar multiplication and element-wise custom function

operating on scalars or vectors.

Definition 2. e(X) ∈ R

is a sentence in

ML(L)

if it con-

sists of any possible consecutive operations in

L

, operating

on a given matrix X and resulting in a scalar value.

As an example,

e(X) = 1

>

X

2

1

is a sentence of

ML(L)

with

L = {.,

>

, 1}

, computing the sum of all elements of

square matrix

X

. In the following, we are interested in lan-

guages

L

1

, L

2

and

L

3

that have been used for characterizing

the WL-test in (Geerts, 2020). These results are given next.

Remark 1.

Two adjacency matrices are indistinguishable

by the 1-WL test if and only if

e(A

G

) = e(A

H

)

for all

e ∈ L

1

with

L

1

= {.,

>

, 1, diag}

. Hence, all possible

sentences in

L

1

are the same for 1-WL equivalent adjacency

matrices. Thus,

A

G

≡

1−W L

A

H

↔ A

G

≡

ML(L

1

)

A

H

.

(see Theorem 7.1 in (Geerts, 2020))

Remark 2. ML(L

2

)

with

L

2

= {.,

>

, 1, diag, tr}

is

strictly more powerful than

L

1

, i.e., than the 1-WL test,

but less powerful than the 3-WL test. (see Theorem 7.2 and

Example 7.3 in (Geerts, 2020))

Remark 3.

Two adjacency matrices are indistinguishable

by the 3-WL test if and only if they are indistinguishable by

any sentence in

ML(L

3

)

with

L

3

= {.,

>

, 1, diag, tr, }

.

Thus,

A

G

≡

3−W L

A

H

↔ A

G

≡

ML(L

3

)

A

H

. (see Theo-

rem 9.2 in (Geerts, 2020))

Remark 4.

Enriching the operation set to

L

+

= L ∪

{+, ×, f}

where

L ∈ (L

1

, L

2

, L

3

) does not improve the ex-

pressive power of the language. Thus,

A

G

≡

ML(L)

A

H

↔

A

G

≡

ML(L

+

)

A

H

. (see Proposition 7.5 in (Geerts, 2020))

4. How Powerful are MPNNs?

This section presents some results about the theoretical ex-

pressive power of state-of-the-art MPNNs. Those results are

derived using the MATLANG language (Geerts, 2020) and

more precisely the remarks of the preceding section. Proofs

of the theorems are given in Appendix B.

Theorem 1.

MPNNs such as GCN, GAT, GraphSage, GIN

(defined in Appendix H) cannot go further than operations

in

L

+

1

. Thus, they are not more powerful than the 1-WL test.

This result has already been given in (Xu et al., 2019), which

proposed GIN-

(GIN for Graph Isomorphism Network) and

Breaking the Limits of Message Passing Graph Neural Networks

showed that it is the unique MPNN which is provably exact

the same powerful with the 1-WL test, while the rest of

MPNNs are known to be less powerful than 1-WL test.

Chebnet is also known to be not more powerful than the

1-WL test. However, the next theorem states that it is true if

the maximum eigenvalues are the same for both graphs. For

a pair of graphs whose maximum eigenvalues are not equal,

Chebnet is strictly more powerful than the 1-WL test.

Theorem 2.

Chebnet is more powerful than the 1-WL test

if the Laplacian maximum eigenvalues of the non-regular

graphs to be compared are not the same. Otherwise Chebnet

is not more powerful than 1-WL.

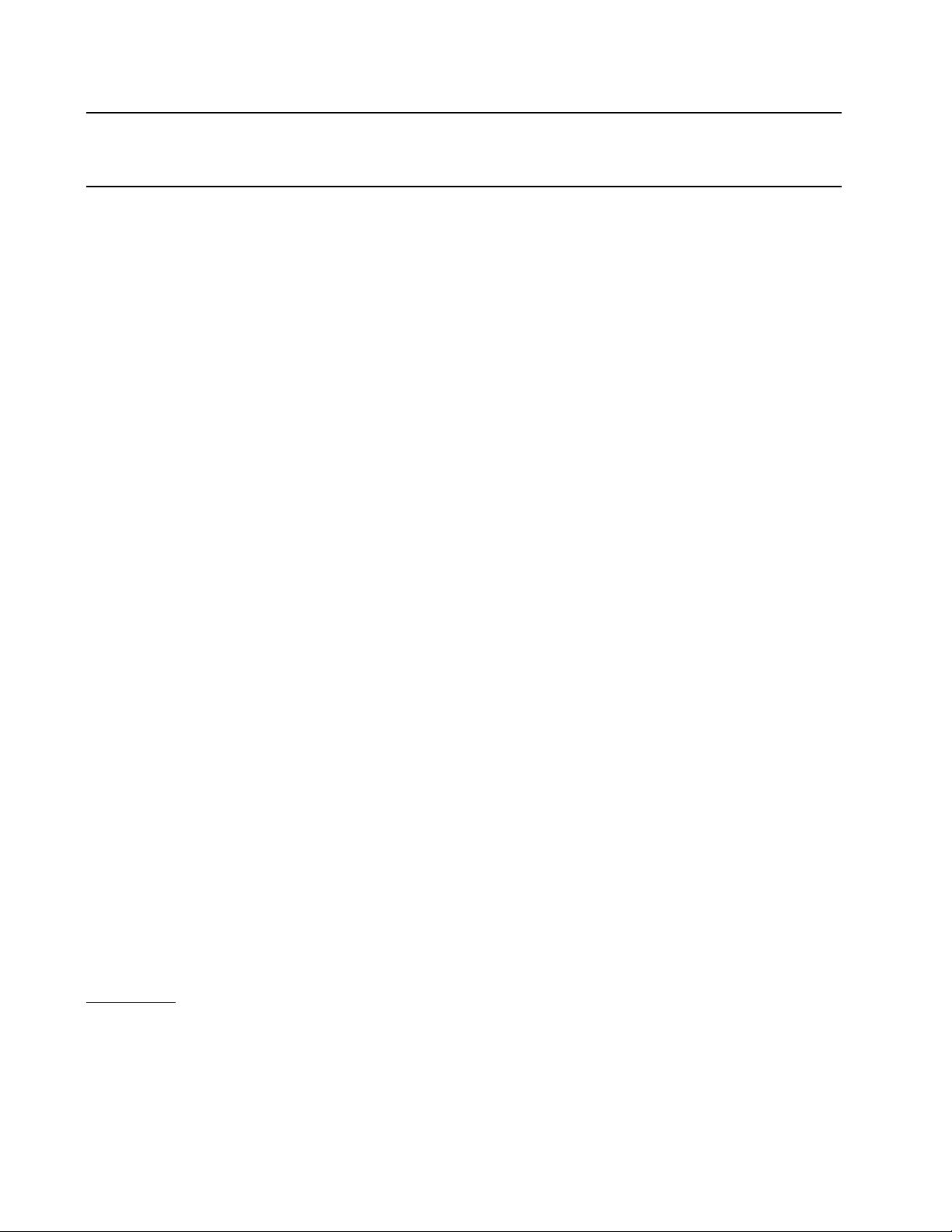

Figure 1.

Decalin (

G

) and Bicyclopentyl (

H

) graphs are

L

1

and

also 1-WL equivalent, but Chebnet can distinguish them.

Figure 1 shows two graphs that are 1-WL equivalent and

are generally used to show how MPNNs fail. However,

their normalized Laplacian’s maximum eigenvalues are not

the same. Thus, Chebnet can project these two graphs to

different points in feature space. Details can be found in

Appendix C.

As stated in the introduction, comparison with the WL-test

is not the only way to characterize the expressive power

of GNNs. Powerful GNNs are also expected to be able to

count relevant substructures in a given graph for specific

problems. The following theorems describe the matrix lan-

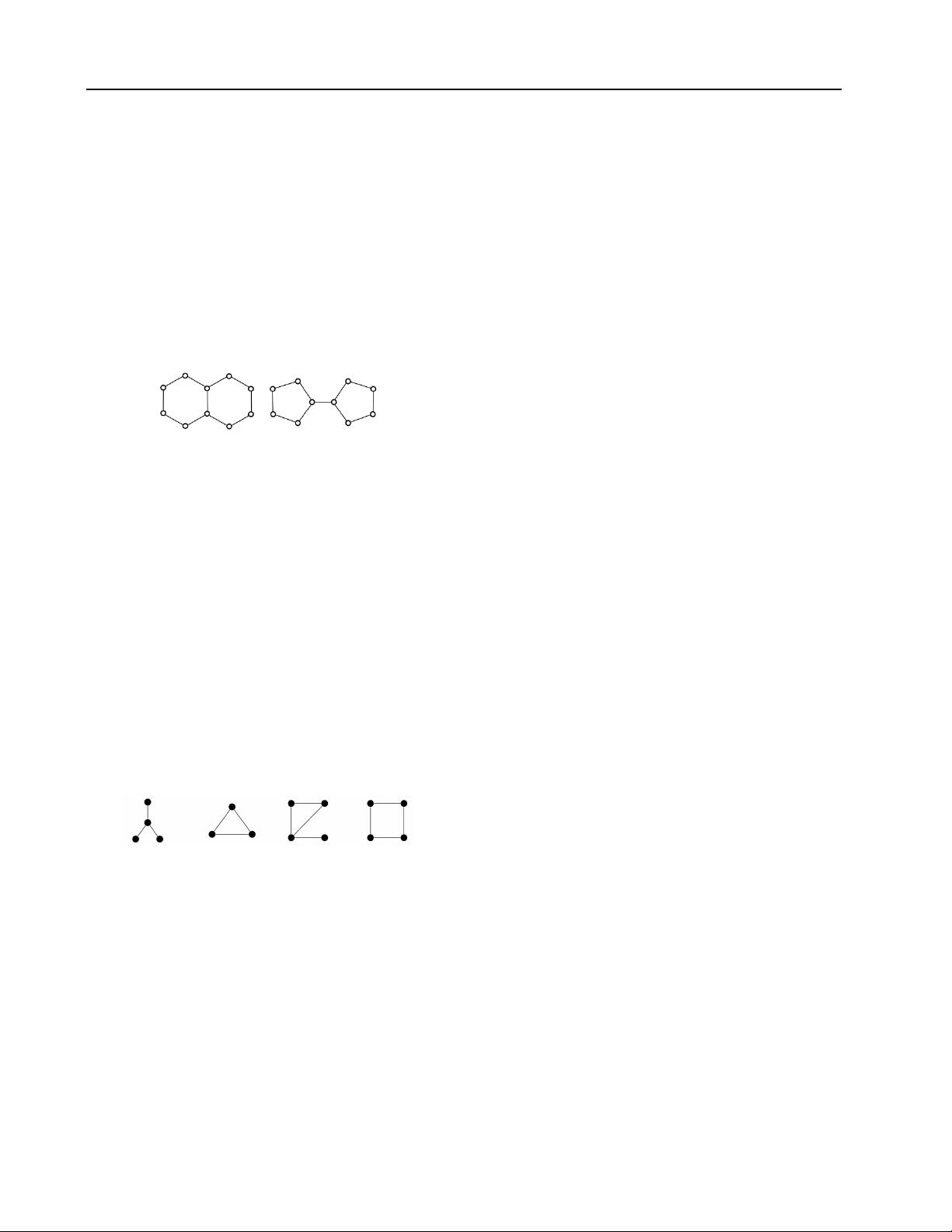

guage required to be able to count the graphlets illustrated

in Figure 2, which are called 3-star, triangle, tailed triangle

and 4-cycle.

Figure 2.

Sample of patterns: 3-star, triangle, tailed triangle and

4-cycle graphlets used in our analysis.

Theorem 3.

3-star graphlets can be counted by sentences

in L

+

1

.

Theorem 4.

Triangle and 4-cycle graphlets can be counted

by sentences in L

+

2

.

Theorem 5.

Tailed triangle graphlets can be counted by

sentences in L

+

3

.

These theorems show that 1-WL equivalent MPNNs can

only count 3-star patterns, while 3-WL equivalent MPNNs

can count all graphlets shown in Figure 2.

(Dehmamy et al., 2019) has shown that a MPNN is not able

to learn node degrees if the MPNN has not an appropriate

convolution support (e.g.

A

). Therefore, to achieve a fair

comparison, we assume that node degrees are included as

a node feature. Note however, that the number of 3-star

graphlets centered on a node can be directly derived from its

degrees (see Appendix B.3). Therefore, any graph agnostic

MLP can count the number of 3-star graphlets given the

node degree.

5. MPNN Beyond 1-WL

In this section, we present two new MPNN models. The

first one, called GNNML1 is shown to be as powerful as the

1-WL test. The second one, called GNNML3 exploits the

theoretical results of (Geerts, 2020) to break the limits of 1-

WL and reach 3-WL equivalence experimentally. GNNML1

relies on the node update schema given by :

H

(l+1)

=σ

(

H

(l)

W

(l,1)

+AH

(l)

W

(l,2)

+H

(l)

W

(l,3)

H

(l)

W

(l,4)

)

(2)

where

W

(l,s)

are trainable parameters. Using this model,

the new representation of a node consists of a sum of three

terms : (i) a linear transformation of the previous layer repre-

sentation of the node, (ii) a linear transformation of the sum

of the previous layer representations of its connected nodes

and (iii) the element-wise multiplication of two different

linear transformations of the previous layer representation

of the node.

The expressive power of GNNML1 is defined by the follow-

ing theorem. Its proof is given in Appendix B:

Theorem 6.

GNNML1 can produce every possible sen-

tences in

ML(L

1

)

for undirected graph adjacency

A

with

monochromatic edges and nodes. Thus, GNNML1 is exactly

as powerful as the 1-WL test.

Hence, this model has the same ability as the 1-WL test to

distinguish two non-isomorphic graphs, i.e., the same as

GIN. This is explained by the third term in the sum of Eq.

(2)

since it can produce feature-wise multiplication on each

layer. Since node representation is richer, we also assume

that it would be more powerful for counting substructures.

This assumption is validated by experiments in Section 6.

To reach more powerful models than 1-WL, theoretical re-

sults (see Remarks 1, 2 and 3 in Section 3) show that a

model that can produce different outputs than

L

+

1

language

is needed. More precisely, according to Remarks 2 and 3,

trace (

tr

) and element-wise multiplication (

) operations

are required to go further than 1-WL.

In order to illustrate the impact of the trace operation, one

can use 1-WL equivalent Decalin and Bicyclopentyl graphs

in Figure 1. It is easy to show that

tr(A

5

G

) = 0

but

tr(A

5

H

) = 20

,

tr(A

5

)

giving the number of 5-length closed

剩余17页未读,继续阅读

资源评论

易小侠

- 粉丝: 6514

- 资源: 9万+

上传资源 快速赚钱

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

前往需求广场,查看用户热搜

前往需求广场,查看用户热搜安全验证

文档复制为VIP权益,开通VIP直接复制

信息提交成功

信息提交成功