没有合适的资源?快使用搜索试试~ 我知道了~

概率模型检验与自治_Probabilistic Model Checking and Autonomy

1.该资源内容由用户上传,如若侵权请联系客服进行举报

2.虚拟产品一经售出概不退款(资源遇到问题,请及时私信上传者)

2.虚拟产品一经售出概不退款(资源遇到问题,请及时私信上传者)

版权申诉

0 下载量 158 浏览量

2022-01-27

03:28:27

上传

评论

收藏 2.46MB PDF 举报

温馨提示

试读

26页

概率模型检验与自治_Probabilistic Model Checking and Autonomy.pdf

资源推荐

资源详情

资源评论

Probabilistic Model

Checking and Autonomy

Marta Kwiatkowska,

1

Gethin Norman

2

and

David Parker

3

1

Department of Computer Science, University of Oxford, UK, OX1 3QD; email:

marta.kwiatkowska@cs.ox.ac.uk

2

School of Computing Science, University of Glasgow, UK, G12 8RZ; email:

gethin.norman@glasgow.ac.uk

3

School of Computer Science, University of Birmingham, UK; email:

d.a.parker@cs.bham.ac.uk

Posted with permission from the Annual

Review of Control, Robotics, and

Autonomous Systems, Volume 5;

copyright 2022 Annual Reviews,

https://www.annualreviews.org/

Keywords

probabilistic modelling, temporal logic, model checking, strategy

synthesis, stochastic games, equilibria

Abstract

Design and control of autonomous systems that operate in uncertain

or adversarial environments can be facilitated by formal modelling and

analysis. Probabilistic model checking is a technique to automatically

verify, for a given temporal logic specification, that a system model

satisfies the specification, as well as to synthesise an optimal strategy

for its control. This method has recently been extended to multi-agent

systems that exhibit competitive or cooperative behaviour modelled via

stochastic games and synthesis of equilibria strategies. In this paper,

we provide an overview of probabilistic model checking, focusing on

models supported by the PRISM and PRISM-games model checkers.

This includes fully observable and partially observable Markov decision

processes, as well as turn-based and concurrent stochastic games, to-

gether with associated probabilistic temporal logics. We demonstrate

the applicability of the framework through illustrative examples from

autonomous systems. Finally, we highlight research challenges and sug-

gest directions for future work in this area.

1

arXiv:2111.10630v1 [cs.LO] 20 Nov 2021

1. INTRODUCTION

As autonomous systems become embedded within computing infrastructure, from informa-

tion systems through to security and robotics, there is a growing need for methodologies

that ensure their safe, secure, reliable, timely and resource efficient execution. Design of

computer systems can be facilitated by formal modelling and verification, and in partic-

ular model checking, which aims to automatically check if a system model satisfies given

requirements typically expressed in temporal logic. Autonomy, however, creates additional

demands of controllability, since autonomous systems operate in uncertain or adversarial

environments, and strategic reasoning, to ensure effective coordination of cooperative or

competitive behaviour of system components (agents).

Probabilistic model checking is a collection of techniques for the modelling of systems

that exhibit probabilistic and non-deterministic behaviour, which supports not only their

model checking against temporal logic, but also synthesis of optimal controllers (strate-

gies) from temporal logic specifications. Probability is used to quantify environmental

uncertainty and stochasticity, while non-determinism represents model decisions. Markov

decision processes (MDPs) are typically employed to model and reason about the strategic

behaviour of an agent against a stochastic environment, where specifications are expressed

in probabilistic extensions of the temporal logics CTL or LTL. Partially observable Markov

decision processes (POMDPs) permit similar modelling and analysis, but for contexts where

the agent has limited power to observe its environment.

MDPs and POMDPs, however, are unable to faithfully represent the behaviour of mul-

tiple players competing or cooperating to achieve their individual goals. To this end, we

employ multi-agent systems modelled via stochastic games and reason about their strate-

gic behaviour for both zero-sum and nonzero-sum (equilibria) properties. For zero-sum

properties, the utilities of an agent are the negation of the utility of its opponent, whereas

for nonzero-sum each agent is pursuing its own quantitative objective. Probabilistic model

checking has been recently extended to encompass both turn-based and concurrent stochas-

tic games, together with an extension of the temporal logic that inherits the coalition op-

erator from ATL, as well as synthesis of optimal Nash equilibria strategies (more precisely,

subgame-perfect social welfare optimal strategies).

In this paper, we provide an overview of recent advances in probabilistic model check-

ing, focusing on the model checking and strategic reasoning methods implemented in the

PRISM (1) and PRISM-games (2) tools for discrete probabilistic models. The review covers

fully observable and partially observable Markov decision processes (Sections 2 and 3 re-

spectively), as well as turn-based and concurrent stochastic games (Sections 4 and 5 respec-

tively), together with associated probabilistic temporal logics. We discuss the core types of

quantitative analyses available for each model, as well as extensions such as multi-objective

analysis and continuous-time, also called real-time in the model checking literature, models

(Section 6). We demonstrate the applicability of the framework through illustrative exam-

ples, with emphasis on the areas of robotics and autonomy. Finally, we highlight challenges

and suggest directions for future work in this area (Section 7).

2. MARKOV DECISION PROCESSES

We begin with Markov decision processes (MDPs) (3), which are a classic model for deci-

sion making under uncertainty. This is a discrete-time model, with discrete sets of states

and actions, that allows both non-determinism, e.g., to represent the choices made by the

2 Kwiatkowska et al.

s

1

s

2

s

0

east

s

3

south

east

0.8

0.6

{goal}

s

5

s

4

{hazard}

0.2

south

0.6

0.4

east

north

west

east

south

east

0.2

0.8

0.2

0.2

0.9

0.1

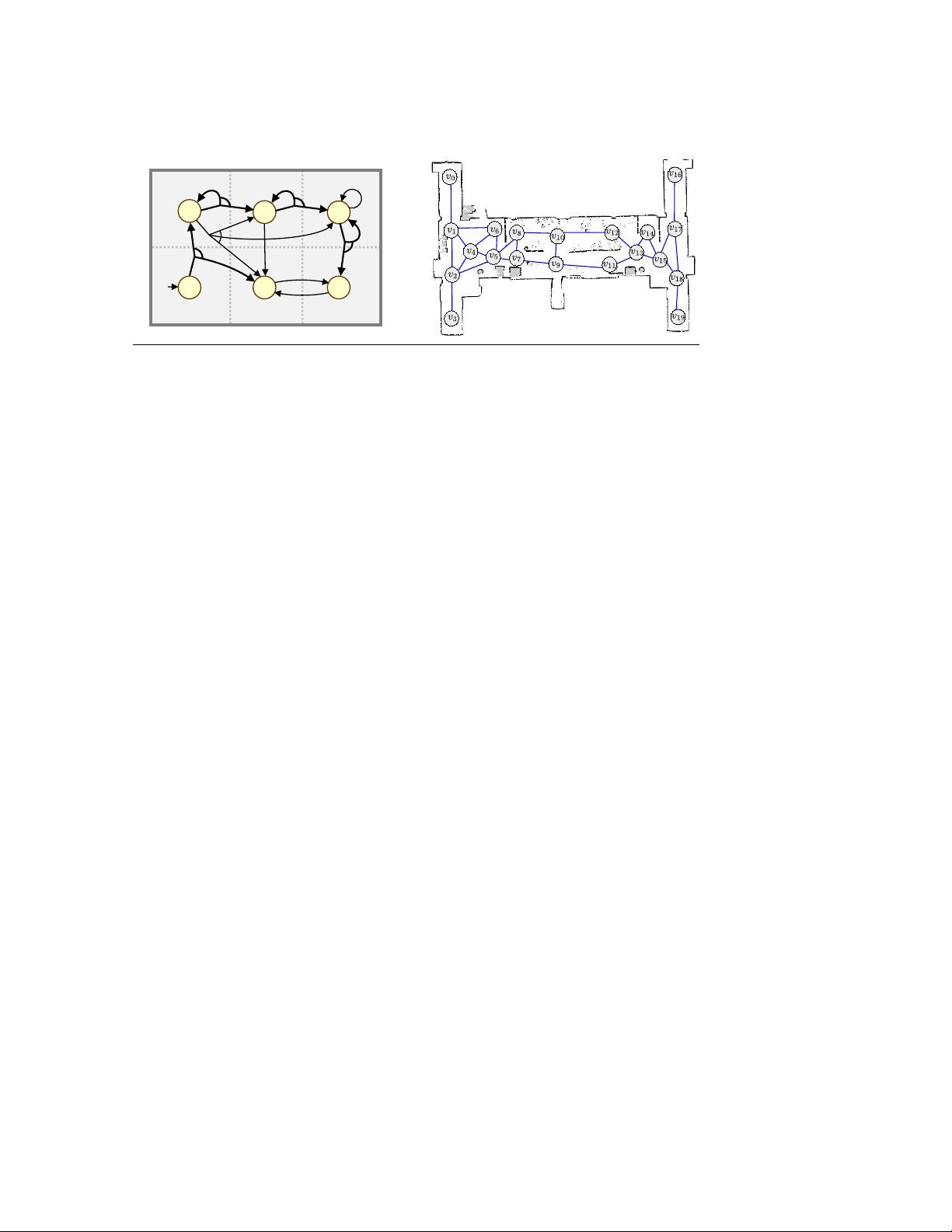

Figure 1

Left: A simple MDP representing a robot navigating through a grid; a (deterministic, memoryless)

optimal policy for the property P

max=?

[ ¬hazard U goal ] is marked in bold. Right: A topological

map from (4) used to build a similar style MDP modelling a mobile robot exploring a building.

controller of a robot or vehicle, and discrete probabilistic choice, to model environmental

uncertainty arising due to, for instance, the presence of humans, noisy sensors, unreliable

communication media or faulty hardware.

We give a formal definition of MDPs below. Here, and in the remainder of the pa-

per, Dist(X) denotes the set of (discrete) probability distributions over a finite set X, i.e.,

functions µ : X → [0, 1] such that

P

x∈X

µ(x) = 1.

Definition 1 (Markov decision process). A Markov decision process (MDP) is a tuple

M = (S, ¯s, A, δ, AP, L) where:

• S is a finite set of states and ¯s ∈ S is an initial state;

• A is a finite set of actions;

• δ : (S×A) → Dist(S) is a (partial) probabilistic transition function, mapping state-

action pairs to probability distributions over S;

• AP is a set of atomic propositions and L : S → 2

AP

is a state labelling function.

The execution of an MDP M proceeds as follows. When in a state s, there is a non-

deterministic choice over the actions that are available in the state, defined as the actions

a ∈ A such that δ(s, a) is defined and denoted A(s). It is assumed that the set of available

actions is non-empty for every state. After an action a ∈ A(s) has been chosen in s, it is

performed and the probability of transitioning to state s

0

∈ S equals δ(s, a)(s

0

).

Example 1. A simple example of an MDP is shown in Figure 1 (left); it models the

movement of a robot through locations in a 3 × 2 grid. Each state (s

i

) represents a location

and actions taken in states result in probabilistic transitions to other locations. For example,

in state s

1

there is a choice between moving east and southeast; if east is chosen, then with

probability 0.6 the robot moves east and with probability 0.4 the robot remains in its

current location. Also shown are atomic propositions (goal and hazard) needed for property

specification. Figure 1 (right) shows a topological map used to build a larger, similar-style

MDP modelling a mobile robot traversing locations within an office building (4).

A path of M is defined by an alternating sequence of action choices and transitions. More

formally, a path is a finite or infinite sequence π = s

0

a

0

−→ s

1

a

1

−→ s

2

a

2

−→ · · · such that s

0

= ¯s,

a

i

∈ A(s

i

) and δ(s

i

, a

i

)(s

i+1

) > 0 for all i > 0. FPaths

M

and IPaths

M

denote the sets of

finite and infinite paths of M, respectively.

www.annualreviews.org

•

Probabilistic Model Checking and Autonomy 3

We next introduce the notion of a strategy (often also called a policy) of an MDP M,

which resolves the non-determinism present in M. In particular, strategies decide which

actions to take in states of the MDP, depending on its execution to date.

Definition 2 (MDP strategy). A strategy of an MDP M is a function σ : FPaths

M

→

Dist(A) such that, if σ(π)(a) > 0, then a ∈ A(last(π)) where last(π) is the final state of π.

The set of all strategies of M is denoted Σ

M

. We classify a strategy σ ∈ Σ

M

in terms of its

use of randomisation and memory.

• Randomisation: σ is deterministic (or pure) if σ(π) picks a single action with

probability 1 for all finite paths π, and randomised otherwise.

• Memory: σ is memoryless if σ(π) depends only on last(π) for all finite paths π, and

finite-memory if there are finitely many modes such that, for any π, σ(π) depends only

on last(π) and the current mode, which is updated each time an action is performed;

otherwise, it is infinite-memory.

Under a particular strategy, the behaviour of MDP M is fully probabilistic and we can reason

about the probability of different events. For a strategy σ of M, we denote by FPaths

σ

M

and

IPaths

σ

M

the set of finite and infinite paths that correspond to the choices of σ. Following (5),

we can definite a probability measure Prob

σ

M

over IPaths

σ

M

that corresponds to the behaviour

of the MDP under σ. Using this probability measure we can then also define, for a random

variable X : IPaths

M

→ R, the expected value E

σ

M

(X) of X under σ.

Random variables can be used to introduce a variety of quantitative properties of MDPs.

This is often achieved by augmenting an MDP with reward structures (these can in some

cases represent costs, but for consistency we will use the term rewards). Example applica-

tions of rewards include: the energy consumption of a device, the number of tasks completed

by a robot or the number of packets lost by a communication protocol.

Definition 3 (MDP reward structure). A reward structure for an MDP M is a tuple

r = (r

S

, r

A

), where r

S

: S → R

>0

is a state reward function and r

A

: (S×A) → R

>0

is an

action reward function.

2.1. Property Specifications for MDPs

In order to formally specify the required behaviour of a system modelled as an MDP, we

use quantitative extensions of temporal logic. Below, we show a fragment of the logic used

as the property specification language for the PRISM model checker (1), which we refer to

here as the PRISM logic. This is based on the logics PCTL (probabilistic computation tree

logic) (6) and LTL (linear temporal logic) (7), and also incorporates operators to specify

expected reward properties (8).

Definition 4 (Property syntax). The syntax for a core fragment of the PRISM logic is:

Φ

:

= P

p

[ ψ ] | R

r

q

[ ρ ]

ψ

:

= φ | ¬ψ | ψ ∧ ψ | X ψ | ψ U

6k

ψ | ψ U ψ

ρ

:

= I

=k

| C

6k

| F φ

φ

:

= true | a | ¬φ | φ ∧ φ

where a ∈ AP is an atomic proposition, ∈{<, 6, >, >}, p ∈ [0, 1], r is a reward structure,

q ∈ R

>0

and k ∈ N.

4 Kwiatkowska et al.

Above, we assume that a property Φ for an MDP comprises a single probabilistic (P) or

reward (R) operator. The syntax also includes path (ψ) and reward (ρ) formulae, both

evaluated over paths, and propositional logic (φ) formulae, evaluated over states. The

intuitive meaning of the P and R operators, from the initial state of an MDP, is:

• P

p

[ ψ ] – the probability of a path satisfying path formula ψ satisfies the bound p;

• R

r

q

[ ρ ] – the expected value of reward formula ρ, under reward structure r, satisfies

the bound q.

A propositional formula φ is satisfied (or holds) in a state s if it evaluates to true in that

state, where an atomic proposition a is true if s is labelled with a (i.e., a ∈ L(s)) and the

logical connectives (¬, ∧) are interpreted in the usual way.

For path formulae ψ, the core temporal operators are:

• X ψ (next) – ψ is satisfied in the next state;

• ψ

1

U

6k

ψ

2

(bounded until) – ψ

2

is satisfied within k steps, and ψ

1

is satisfied until

that point;

• ψ

1

U ψ

2

(until) – ψ

2

is eventually satisfied, and ψ

1

is satisfied until then.

As is standard in model checking, we use the equivalences F ψ ≡ true U ψ (eventually)

and G ψ ≡ ¬F ¬ψ (always). If we restrict the sub-formulae of a path formula to be atomic

propositions, then we get the following common property classes:

• F a (reachability) – eventually a stated labelled with a is reached;

• G a (invariance) – a labels all states;

• F

6k

a (step-bounded reachability) – a labels a state within the first k steps;

• G

6k

a (step-bounded invariance) – a labels states for at least the first k steps.

Without this restriction, path formulae allow temporal operators to be nested. In fact the

syntax of path formulae given in Definition 4 is that of linear temporal logic (LTL) (7).

LTL can express a range of useful property classes, including:

• G F ψ (recurrence) – ψ is satisfied infinitely often;

• F G ψ (persistence) – eventually ψ is always satisfied;

• G (ψ

1

→ X ψ

2

) – whenever ψ

1

is satisfied, ψ

2

is satisfied in the next state;

• G (ψ

1

→ F ψ

2

) – whenever ψ

1

is satisfied, ψ

2

is satisfied in the future.

Finally, considering reward formulae ρ, the three key operators are:

• I

=k

(instantaneous reward) – state reward at time step k;

• C

6k

(bounded cumulative reward) – reward accumulated over k steps;

• F φ (reachability reward ) – reward accumulated until a state satisfying φ is reached.

Although omitted from the syntax here for simplicity, it is also common to generalise the

third case and consider the expected reward accumulated until some co-safe LTL formula

is satisfied. Intuitively, these are path formulae ψ whose satisfaction occurs within finite

time; examples include (F a

1

) ∧ (F a

2

) and F (a

1

∧ F a

2

), which require states labelled with

a

1

and a

2

to be reached, either in any order (first case) or in a specified order (second case).

www.annualreviews.org

•

Probabilistic Model Checking and Autonomy 5

剩余25页未读,继续阅读

资源评论

易小侠

- 粉丝: 6453

- 资源: 9万+

上传资源 快速赚钱

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

前往需求广场,查看用户热搜

前往需求广场,查看用户热搜最新资源

- 论文(最终)_20240430235101.pdf

- 基于python编写的Keras深度学习框架开发,利用卷积神经网络CNN,快速识别图片并进行分类

- 最全空间计量实证方法(空间杜宾模型和检验以及结果解释文档).txt

- 5uonly.apk

- 蓝桥杯Python组的历年真题

- 2023-04-06-项目笔记 - 第一百十九阶段 - 4.4.2.117全局变量的作用域-117 -2024.04.30

- 2023-04-06-项目笔记 - 第一百十九阶段 - 4.4.2.117全局变量的作用域-117 -2024.04.30

- 前端开发技术实验报告:内含4四实验&实验报告

- Highlight Plus v20.0.1

- 林周瑜-论文.docx

资源上传下载、课程学习等过程中有任何疑问或建议,欢迎提出宝贵意见哦~我们会及时处理!

点击此处反馈

安全验证

文档复制为VIP权益,开通VIP直接复制

信息提交成功

信息提交成功