
You Only Cache Once: Decoder-Decoder Architectures for Language Models

Yutao Sun∗†‡, Li Dong∗†, Yi Zhu†, Shaohan Huang†, Wenhui Wang†, Shuming Ma†, Quanlu Zhang†, Jianyong Wang‡, Furu Wei†⋄

† Microsoft Research   ‡ Tsinghua University

https://aka.ms/GeneralAI

Abstract

We introduce a decoder-decoder architecture, YOCO, for large language models, which only caches key-value pairs once. It consists of two components, i.e., a cross-decoder stacked upon a self-decoder. The self-decoder efficiently encodes global key-value (KV) caches that are reused by the cross-decoder via cross-attention. The overall model behaves like a decoder-only Transformer, although YOCO only caches once. The design substantially reduces GPU memory demands, yet retains global attention capability. Additionally, the computation flow enables prefilling to early exit without changing the final output, thereby significantly speeding up the prefill stage. Experimental results demonstrate that YOCO achieves favorable performance compared to Transformer in various settings of scaling up model size and number of training tokens. We also extend YOCO to 1M context length with near-perfect needle retrieval accuracy. The profiling results show that YOCO improves inference memory, prefill latency, and throughput by orders of magnitude across context lengths and model sizes. Code is available at https://aka.ms/YOCO.

[Figure 1 panels: "Inference Cost (@512k)", comparing YOCO with Transformer on GPU Memory ↓ (GB), Throughput ↑ (wps), and Prefilling Latency ↓ (s), with annotated improvement factors 6.4X, 30.3X, and 9.6X; and "Decoder-Decoder LLM", showing a Self-Decoder that produces the KV Cache reused by the Cross-Decoder.]

Figure 1: We propose a decoder-decoder architecture, YOCO, for large language models, which only caches key/value pairs once. YOCO markedly reduces the KV cache memory and the prefilling time, while being scalable in terms of training tokens, model size, and context length. The inference cost is reported at a context length of 512K; Figures 7–10 present more results for different lengths.

∗ Equal contribution. ⋄ Corresponding author.

arXiv:2405.05254v2 [cs.CL] 9 May 2024

1 Introduction

The decoder-only Transformer [VSP+17] has become the de facto architecture for language models. Numerous efforts have continued to develop suitable architectures for language modeling. There have been three main strands of exploration. First, encoder-only language models, such as BERT [DCLT19], bidirectionally encode the input sequence. Second, encoder-decoder models, such as T5 [RSR+20], use a bidirectional encoder to encode the input and a unidirectional decoder to generate the output. Both of the above layouts struggle with autoregressive generation due to bidirectionality. Specifically, encoders have to encode the whole input and output tokens again for the next generation step. Although an encoder-decoder model can generate with the decoder alone, the output tokens do not fully leverage the parameters of the encoder, especially in multi-turn conversation. Third, decoder-only language models, such as GPT [BMR+20], generate tokens autoregressively. By caching the previously computed key/value vectors, the model can reuse them for the current generation step. The key-value (KV) cache avoids encoding the history again for each token, greatly improving inference speed. This compelling feature establishes the decoder-only language model as the standard option.

However, as the number of serving tokens increases, the KV caches occupy a lot of GPU memory, rendering the inference of large language models memory-bound [PDC+22]. For example, for a 65B language model (augmented with grouped-query attention [ALTdJ+23] and 8-bit KV quantization), 512K tokens occupy about 86GB of GPU memory, which exceeds the capacity of a single H100-80GB GPU. In addition, the prefilling latency of long-sequence input is extremely high. For instance, using four H100 GPUs, the 7B language model (augmented with Flash-Decoding [DHMS23] and kernel fusion) requires about 110 seconds to prefill 450K tokens, and 380 seconds for 1M tokens. These bottlenecks make it difficult to deploy long-context language models in practice.
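The 86GB figure can be roughly reproduced with back-of-the-envelope arithmetic. The sketch below assumes a 65B-style shape of 80 layers, 8 KV heads under grouped-query attention, a head dimension of 128, and 1 byte per cached value; these configuration values are illustrative assumptions, not settings stated in the text.

```python
def kv_cache_bytes(num_tokens, num_layers, num_kv_heads, head_dim, bytes_per_value):
    """Bytes needed to cache keys and values in every layer of a decoder-only model."""
    per_token_per_layer = 2 * num_kv_heads * head_dim * bytes_per_value  # 2 = key + value
    return num_tokens * num_layers * per_token_per_layer

# Assumed (hypothetical) 65B-style configuration; not taken from the paper.
ASSUMED_LAYERS = 80
ASSUMED_KV_HEADS = 8
ASSUMED_HEAD_DIM = 128
BYTES_INT8 = 1

total = kv_cache_bytes(512 * 1024, ASSUMED_LAYERS, ASSUMED_KV_HEADS,
                       ASSUMED_HEAD_DIM, BYTES_INT8)
print(f"{total / 1e9:.1f} GB")  # prints "85.9 GB"
```

Under these assumptions the cache alone is larger than the 80GB of a single H100, before counting model weights or activations.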

In this work, we propose a decoder-decoder architecture, YOCO, for large language models, which only caches KV pairs once. Specifically, we stack a cross-decoder upon a self-decoder. Given an input sequence, the self-decoder utilizes efficient self-attention to obtain KV caches. Then the cross-decoder layers employ cross-attention to reuse the shared KV caches. The decoder-decoder architecture is conceptually similar to encoder-decoder, but the whole model behaves more like a decoder-only model from the external view. So, it naturally fits into autoregressive generation tasks, such as language modeling. First, because YOCO only caches once², the GPU memory consumption of KV caches is significantly reduced. Second, the computation flow of the decoder-decoder architecture enables prefilling to early exit before entering the cross-decoder. This property speeds up the prefill stage dramatically, improving user experience for long-context language models. Third, YOCO allows for more efficient system design for distributed long-sequence training. In addition, we propose gated retention for the self-decoder, which augments retention [SDH+23] with a data-controlled gating mechanism.

We conduct extensive experiments to show that YOCO achieves favorable language modeling performance and has many advantages in terms of inference efficiency. Experimental results demonstrate that YOCO can be scaled up with more training tokens, larger model size, and longer context length. Specifically, we scale up the 3B YOCO model to trillions of training tokens, attaining results on par with prominent Transformer language models, such as StableLM [TBMR]. Moreover, the scaling curves ranging from 160M to 13B show that YOCO is competitive with Transformer. We also extend the context length of YOCO to 1M tokens, achieving near-perfect needle retrieval accuracy. In the multi-needle test, YOCO obtains competitive results even compared to larger Transformers.

In addition to good performance on various tasks, the profiling results show that YOCO improves the GPU memory footprint, prefill latency, throughput, and serving capacity. In particular, the memory of KV caches can be reduced by about 80× for 65B models. Even for a 3B model, the overall inference memory consumption can be reduced by two times for 32K tokens and by more than nine times for 1M tokens. The prefill stage is sped up by 71.8× for the 1M context and 2.87× for the 32K input. For example, for a 512K context, YOCO reduces the Transformer prefilling latency from 180 seconds to less than six seconds. The results position YOCO as a strong candidate model architecture for future large language models with native long-sequence support.

² The word “once” refers to the global KV cache. Strictly speaking, the self-decoder also needs to store a certain number of caches. As the self-decoder utilizes an efficient attention module, its cache size is bounded by a constant, which can be ignored compared to the global caches when the sequence length is large.


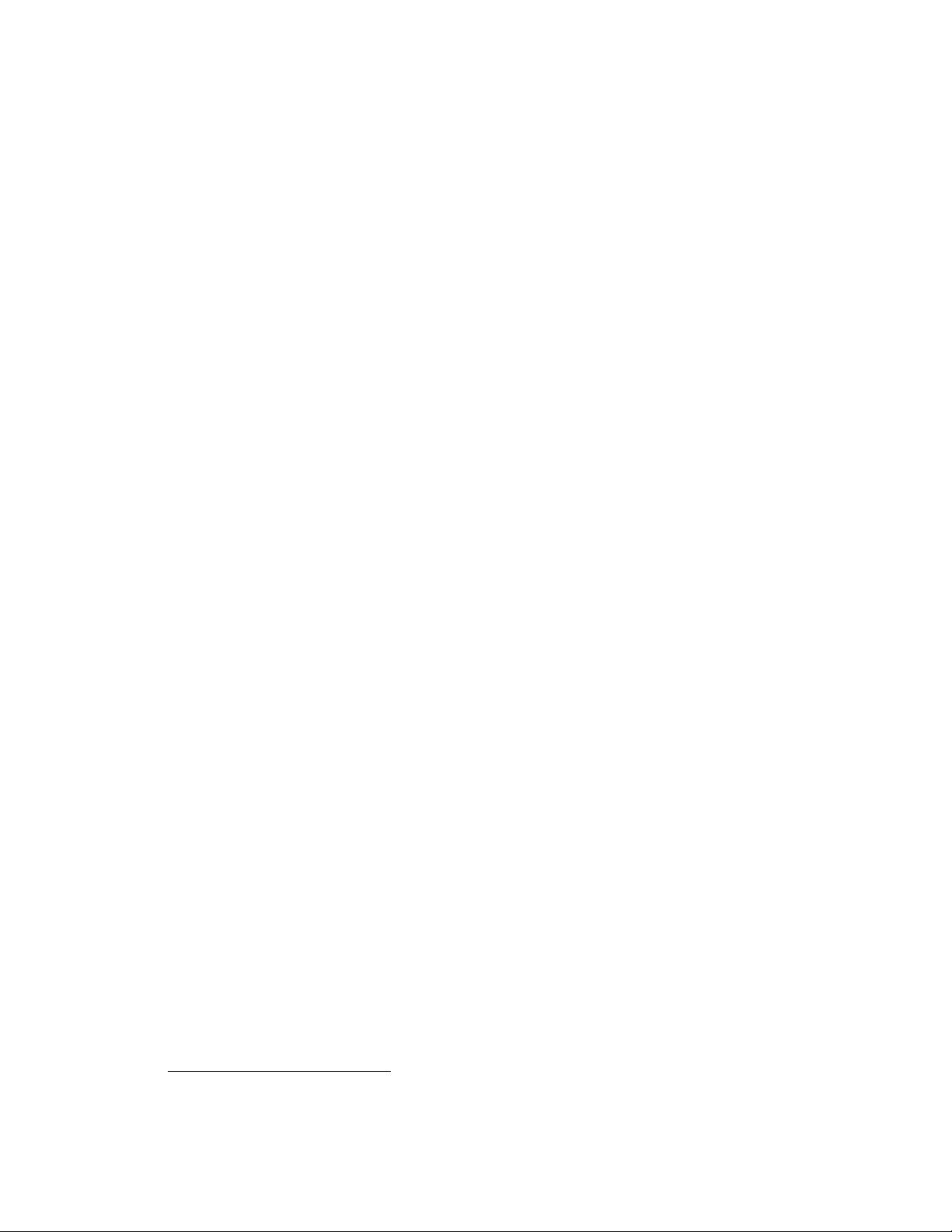

Figure 2: Overview of the decoder-decoder architecture. Self-decoder generates the global KV cache. Then cross-decoder employs cross-attention to reuse the shared KV caches. Both self-decoder and cross-decoder use causal masking. The overall architecture behaves like a decoder-only Transformer, autoregressively generating tokens.

2 You Only Cache Once (YOCO)

The proposed architecture, named YOCO, is designed for autoregressive modeling, such as large language models (LLMs). As shown in Figure 2, the decoder-decoder architecture has two parts, i.e., a self-decoder and a cross-decoder. Specifically, YOCO is a stack of $L$ blocks, where the first $\frac{L}{2}$ layers form the self-decoder and the remaining layers form the cross-decoder. Given an input sequence $x = x_1 \cdots x_{|x|}$, the input embeddings are packed into $X^0 = [x_1, \cdots, x_{|x|}] \in \mathbb{R}^{|x| \times d_{\text{model}}}$, where $d_{\text{model}}$ is the hidden dimension. We first obtain contextualized vector representations $X^l = \text{Self-Decoder}(X^{l-1}),\ l \in [1, \frac{L}{2}]$, where $X^{L/2}$ is used to produce the KV caches $\hat{K}, \hat{V}$ for the cross-decoder. Then we compute $X^l = \text{Cross-Decoder}(X^{l-1}, \hat{K}, \hat{V}),\ l \in [\frac{L}{2} + 1, L]$ to get the output vectors $X^L$.
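The two-stage flow described above can be sketched as a short driver function. This is a minimal illustration in which the layers are passed in as plain callables; the function name `yoco_forward` and the toy layers are hypothetical stand-ins, not the authors' implementation.

```python
def yoco_forward(x0, self_layers, make_kv, cross_layers):
    """Run the self-decoder layers, build the shared KV cache once, then run the cross-decoder."""
    x = x0
    for layer in self_layers:        # l in [1, L/2]
        x = layer(x)
    k_hat, v_hat = make_kv(x)        # built once from X^{L/2}
    for layer in cross_layers:       # l in [L/2 + 1, L]
        x = layer(x, k_hat, v_hat)   # every cross-decoder layer reuses the same cache
    return x

# Toy stand-ins (hypothetical; real layers are attention + feed-forward blocks).
kv_calls = []

def toy_self_layer(x):
    return [v + 1 for v in x]

def toy_make_kv(x):
    kv_calls.append(1)               # record how often the cache is built
    return list(x), list(x)

def toy_cross_layer(x, k_hat, v_hat):
    return [a + b for a, b in zip(x, v_hat)]

out = yoco_forward([0, 0], [toy_self_layer] * 2, toy_make_kv, [toy_cross_layer] * 2)
print(out, len(kv_calls))  # prints [6, 6] 1
```

The point of the sketch is the single call to `make_kv`: however many cross-decoder layers follow, the cache is produced only once.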

Both the self-decoder and the cross-decoder follow a block layout similar to that of Transformer [VSP+17] (i.e., interleaved attention and feed-forward network). We also include pre-RMSNorm [ZS19], SwiGLU [Sha20], and grouped-query attention [ALTdJ+23] as improvements. The difference between the two parts lies in the attention modules. The self-decoder (Section 2.1) uses efficient self-attention (e.g., sliding-window attention). In comparison, the cross-decoder (Section 2.2) uses global cross-attention to attend to the shared KV caches produced from the output of the self-decoder.

2.1 Self-Decoder

The self-decoder takes token embeddings $X^0$ as input and computes the intermediate vector representation $M = X^{L/2}$:

$$
\begin{aligned}
Y^l &= \text{ESA}(\text{LN}(X^l)) + X^l \\
X^{l+1} &= \text{SwiGLU}(\text{LN}(Y^l)) + Y^l
\end{aligned}
\tag{1}
$$

where $\text{ESA}(\cdot)$ represents efficient self-attention, $\text{SwiGLU}(X) = (\text{swish}(X W_G) \odot X W_1) W_2$, and RMSNorm [ZS19] is used for $\text{LN}(\cdot)$. Causal masking is used for efficient self-attention.
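Equation (1) can be sketched in plain Python on nested lists. This is an illustrative sketch, not the authors' code: RMSNorm is written without a learned gain, and `window_mean` is a hypothetical stand-in for ESA (a causal mean over the last few positions) used only to make the block runnable.

```python
import math

def rms_norm(x, eps=1e-6):
    """RMSNorm without a learned gain: each row divided by sqrt(mean(row^2) + eps)."""
    out = []
    for row in x:
        scale = math.sqrt(sum(v * v for v in row) / len(row) + eps)
        out.append([v / scale for v in row])
    return out

def matmul(a, b):
    return [[sum(p * q for p, q in zip(row, col)) for col in zip(*b)] for row in a]

def add(a, b):
    return [[u + v for u, v in zip(ra, rb)] for ra, rb in zip(a, b)]

def swish(v):
    return v / (1.0 + math.exp(-v))  # v * sigmoid(v)

def swiglu(x, w_g, w_1, w_2):
    """SwiGLU(X) = (swish(X W_G) * X W_1) W_2, with an elementwise product."""
    gate, up = matmul(x, w_g), matmul(x, w_1)
    hidden = [[swish(g) * u for g, u in zip(rg, ru)] for rg, ru in zip(gate, up)]
    return matmul(hidden, w_2)

def self_decoder_layer(x, esa, w_g, w_1, w_2):
    """Equation (1): Y = ESA(LN(X)) + X, then X_next = SwiGLU(LN(Y)) + Y."""
    y = add(esa(rms_norm(x)), x)
    return add(swiglu(rms_norm(y), w_g, w_1, w_2), y)

def window_mean(x, window=2):
    """Hypothetical ESA stand-in: causal mean over the last `window` rows."""
    out = []
    for i in range(len(x)):
        rows = x[max(0, i - window + 1): i + 1]
        out.append([sum(col) / len(rows) for col in zip(*rows)])
    return out

x = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]
out = self_decoder_layer(x, window_mean, identity, identity, identity)
print(len(out), len(out[0]))  # prints 3 2
```

Because the stand-in attention is causal and every other operation acts row by row, changing a later token leaves the outputs for earlier positions unchanged.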

[Figure 3 diagram labels: Prefilling (Self-Decoder, KV Cache, Cross-Decoder skipped) and Generation (Self-Decoder, KV Cache, Cross-Decoder); prefill the context, then generate new tokens.]

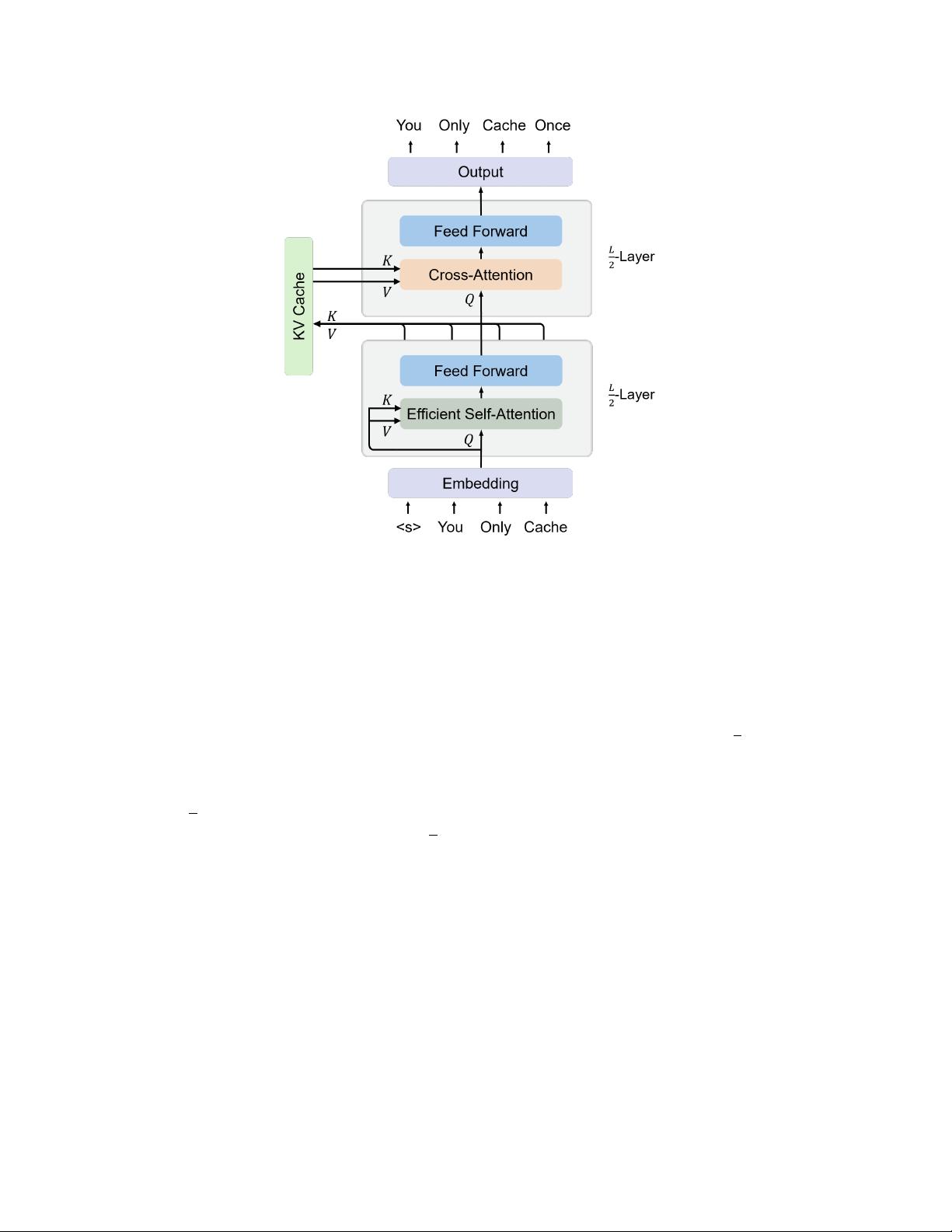

Figure 3: YOCO Inference. Prefill: encode input tokens in parallel. Generation: decode output tokens one by one. The computation flow enables prefilling to early exit without changing the final output, thereby significantly speeding up the prefill stage.

| | KV Cache Memory |
|---|---|
| Transformer | $O(LND)$ |
| YOCO | $O((N + L)D)$ |

Table 1: Inference memory complexity of KV caches. $N, L, D$ are the sequence length, number of layers, and hidden dimension.

| | Prefilling Time |
|---|---|
| Transformer | $O(LN^2D)$ |
| YOCO | $O(LND)$ |

Table 2: Prefilling time complexity of attention modules. $N, L, D$ are the same as above.

The key property of the efficient self-attention module is $O(1)$ inference memory, i.e., a constant number of KV caches. For example, the cache size of sliding-window attention [CGRS19] depends on the window size instead of the input length. More design choices (e.g., gated retention) of the efficient self-attention module are detailed in Section 3.
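The constant-memory property of a sliding-window cache can be illustrated with a bounded queue. This is a minimal sketch for a single layer; the class name `SlidingWindowKVCache` and the tuple placeholders for key and value vectors are assumptions made for illustration.

```python
from collections import deque

class SlidingWindowKVCache:
    """Keeps only the most recent `window` (key, value) pairs for one layer."""

    def __init__(self, window):
        self.keys = deque(maxlen=window)
        self.values = deque(maxlen=window)

    def append(self, key, value):
        # A deque with maxlen drops its oldest entry once it is full.
        self.keys.append(key)
        self.values.append(value)

    def __len__(self):
        return len(self.keys)

cache = SlidingWindowKVCache(window=4)
for t in range(1000):
    cache.append(("k", t), ("v", t))  # placeholders for real key/value vectors

print(len(cache))     # prints 4
print(cache.keys[0])  # prints ('k', 996)
```

After 1,000 tokens the cache still holds only four entries, so its size depends on the window rather than on the input length.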

2.2 Cross-Decoder

First, the output of the self-decoder $X^{L/2}$ generates the global KV caches $\hat{K}, \hat{V}$ for the cross-decoder:

$$
\hat{K} = \text{LN}(X^{L/2}) W_K, \quad \hat{V} = \text{LN}(X^{L/2}) W_V
\tag{2}
$$

where $W_K, W_V \in \mathbb{R}^{d \times d}$ are learnable weights. Then, cross-decoder layers are stacked after the self-decoder to obtain the final output vectors $X^L$. The KV caches $\hat{K}, \hat{V}$ are reused by all the $\frac{L}{2}$ cross-decoder modules:

$$
\begin{aligned}
\hat{Q}^l &= \text{LN}(X^l) W_Q^l \\
Y^l &= \text{Attention}(\hat{Q}^l, \hat{K}, \hat{V}) + X^l \\
X^{l+1} &= \text{SwiGLU}(\text{LN}(Y^l)) + Y^l
\end{aligned}
\tag{3}
$$

where $\text{Attention}(\cdot)$ is standard multi-head attention [VSP+17], and $W_Q^l \in \mathbb{R}^{d \times d}$ is a learnable matrix. Causal masking is also used for cross-attention. Because cross-attention is compatible with grouped-query attention [ALTdJ+23], we can further reduce the memory consumption of KV caches. After obtaining $X^L$, a softmax classifier performs next-token prediction.
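The causal cross-attention over a shared cache can be sketched with a single head and no learned projections. The toy vectors below are made up for illustration, and `causal_attention` is a hypothetical helper rather than the authors' kernel. The last lines also check the property behind the prefill early exit noted in Figure 3: each query row depends only on the shared cache, so computing the final position alone gives the same vector as computing every position and keeping the last.

```python
import math

def causal_attention(queries, keys, values, query_positions):
    """Single-head softmax attention; the query at position p attends to cache entries 0..p."""
    d = len(keys[0])
    outputs = []
    for q, p in zip(queries, query_positions):
        visible_k, visible_v = keys[: p + 1], values[: p + 1]
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in visible_k]
        peak = max(scores)                       # subtract the max for numerical stability
        weights = [math.exp(s - peak) for s in scores]
        total = sum(weights)
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, visible_v)) / total
            for j in range(len(values[0]))
        ])
    return outputs

# Toy shared cache (in YOCO it would be derived from the self-decoder output).
k_hat = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
v_hat = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
q_layer = [[0.5, 0.1], [0.2, 0.3], [0.4, 0.4]]   # hypothetical queries of one layer

full = causal_attention(q_layer, k_hat, v_hat, query_positions=[0, 1, 2])
last_only = causal_attention(q_layer[-1:], k_hat, v_hat, query_positions=[2])
print(full[0])                     # prints [1.0, 2.0]
print(full[-1] == last_only[0])    # prints True
```

The first query sees only the first cache entry, so its output is exactly that entry's value vector.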

2.3 Inference Advantages

In addition to competitive language modeling results, YOCO significantly reduces serving costs and improves inference performance. We report detailed inference comparisons in Section 4.4.

Saving GPU Memory and Serving More Tokens. Table 1 compares the memory complexity between Transformers and YOCO. Specifically, because global KV caches are reused and efficient self-attention needs constant caches, the number of caches is $O(N + CL)$, where $N$ is the input length, $C$ is a constant (e.g., sliding window size), and $L$ is the number of layers. For long sequences, $CL$ is much smaller than $N$, so about $O(N)$ caches are required, i.e., you only cache once.
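A small calculation makes the $O(N + CL)$ count concrete and sets it against the $O(LND)$ row of Table 1 with the hidden dimension factored out. The values of $N$, $L$, and $C$ below are assumptions chosen for illustration, not configurations reported in the text.

```python
def yoco_cache_entries(n, num_layers, window):
    """Global cache stored once (N) plus a constant-size cache per layer (C * L)."""
    return n + window * num_layers

def transformer_cache_entries(n, num_layers):
    """A standard decoder caches every token in every layer (N * L)."""
    return n * num_layers

N, L, C = 1_000_000, 32, 1024  # assumed values for illustration only
yoco = yoco_cache_entries(N, L, C)
transformer = transformer_cache_entries(N, L)
print(yoco, transformer)  # prints 1032768 32000000
print(round(transformer / yoco, 1))
```

With these numbers the $CL$ term adds about 3% to $N$, and the ratio between the two counts is close to the number of layers.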

In comparison, Transformer decoders have to store $N \times L$ keys and values during inference. So YOCO reduces the GPU memory for caches by roughly a factor of $L$ compared to Transformer decoders. Because the inference capacity bottleneck becomes KV caches (Figure 7b), our method enables us to serve
