📚 This guide explains how to use **Weights & Biases** (W&B) with YOLOv5 🚀. UPDATED 29 September 2021.

- [About Weights & Biases](#about-weights--biases)

- [First-Time Setup](#first-time-setup)

- [Viewing runs](#viewing-runs)

- [Disabling wandb](#disabling-wandb)

- [Advanced Usage: Dataset Versioning and Evaluation](#advanced-usage)

- [Reports: Share your work with the world!](#reports)

## About Weights & Biases

Think of [W&B](https://wandb.ai/site?utm_campaign=repo_yolo_wandbtutorial) like GitHub for machine learning models. With a few lines of code, save everything you need to debug, compare and reproduce your models: architecture, hyperparameters, git commits, model weights, GPU usage, and even datasets and predictions.

Used by top researchers including teams at OpenAI, Lyft, GitHub, and MILA, W&B is part of the new standard of best practices for machine learning. Here is how W&B can help you optimize your machine learning workflows:

- [Debug](https://wandb.ai/wandb/getting-started/reports/Visualize-Debug-Machine-Learning-Models--VmlldzoyNzY5MDk#Free-2) model performance in real time

- [GPU usage](https://wandb.ai/wandb/getting-started/reports/Visualize-Debug-Machine-Learning-Models--VmlldzoyNzY5MDk#System-4) visualized automatically

- [Custom charts](https://wandb.ai/wandb/customizable-charts/reports/Powerful-Custom-Charts-To-Debug-Model-Peformance--VmlldzoyNzY4ODI) for powerful, extensible visualization

- [Share insights](https://wandb.ai/wandb/getting-started/reports/Visualize-Debug-Machine-Learning-Models--VmlldzoyNzY5MDk#Share-8) interactively with collaborators

- [Optimize hyperparameters](https://docs.wandb.com/sweeps) efficiently

- [Track](https://docs.wandb.com/artifacts) datasets, pipelines, and production models

## First-Time Setup

<details open>

<summary> Toggle Details </summary>

When you first train, W&B will prompt you to create a new account and will generate an **API key** for you. If you are an existing user you can retrieve your key from https://wandb.ai/authorize. This key is used to tell W&B where to log your data. You only need to supply your key once, and then it is remembered on the same device.

W&B will create a cloud **project** (default is 'YOLOv5') for your training runs, and each new training run will be provided a unique run **name** within that project as project/name. You can also manually set your project and run name as:

```shell

$ python train.py --project ... --name ...

```
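If you launch training from a script rather than by hand, the same command can be assembled programmatically. The following is a minimal sketch, not part of YOLOv5: `build_train_command` and `wandb_env` are hypothetical helper names, and `my-project` and `baseline-run` are placeholder values. It assumes the `WANDB_API_KEY` environment variable is used to supply the key without an interactive prompt.

```python
import os
import shlex


def build_train_command(project, name, script="train.py"):
    """Return the argument list for a training run logged under project/name."""
    return ["python", script, "--project", project, "--name", name]


def wandb_env(api_key=None):
    """Copy the current environment, optionally adding WANDB_API_KEY."""
    env = dict(os.environ)
    if api_key:
        # Assumption: wandb reads this variable instead of prompting for a key.
        env["WANDB_API_KEY"] = api_key
    return env


cmd = build_train_command("my-project", "baseline-run")
print(shlex.join(cmd))
# To actually launch training (not done here):
#   subprocess.run(cmd, env=wandb_env(api_key), check=True)
```

Keeping the key in the environment rather than on the command line avoids writing it into shell history or logged training commands.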

YOLOv5 notebook example: <a href="https://colab.research.google.com/github/ultralytics/yolov5/blob/master/tutorial.ipynb"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"></a> <a href="https://www.kaggle.com/ultralytics/yolov5"><img src="https://kaggle.com/static/images/open-in-kaggle.svg" alt="Open In Kaggle"></a>

<img width="960" alt="Screen Shot 2021-09-29 at 10 23 13 PM" src="https://user-images.githubusercontent.com/26833433/135392431-1ab7920a-c49d-450a-b0b0-0c86ec86100e.png">

</details>

## Viewing Runs

<details open>

<summary> Toggle Details </summary>

Run information streams from your environment to the W&B cloud console as you train. This allows you to monitor and even cancel runs in <b>realtime</b>. All important information is logged:

- Training & Validation losses

- Metrics: Precision, Recall, mAP@0.5, mAP@0.5:0.95

- Learning Rate over time

- A bounding box debugging panel, showing the training progress over time

- GPU: Type, **GPU Utilization**, power, temperature, **CUDA memory usage**

- System: Disk I/O, CPU utilization, RAM memory usage

- Your trained model as a W&B Artifact

- Environment: OS and Python types, Git repository and state, **training command**

<p align="center"><img width="900" alt="Weights & Biases dashboard" src="https://user-images.githubusercontent.com/26833433/135390767-c28b050f-8455-4004-adb0-3b730386e2b2.png"></p>

</details>

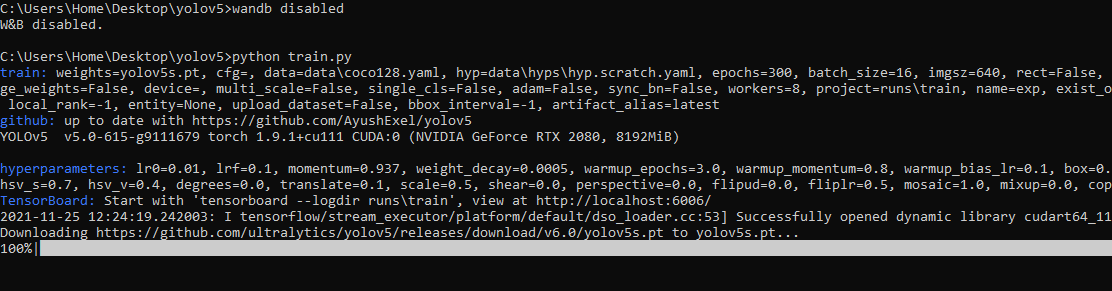

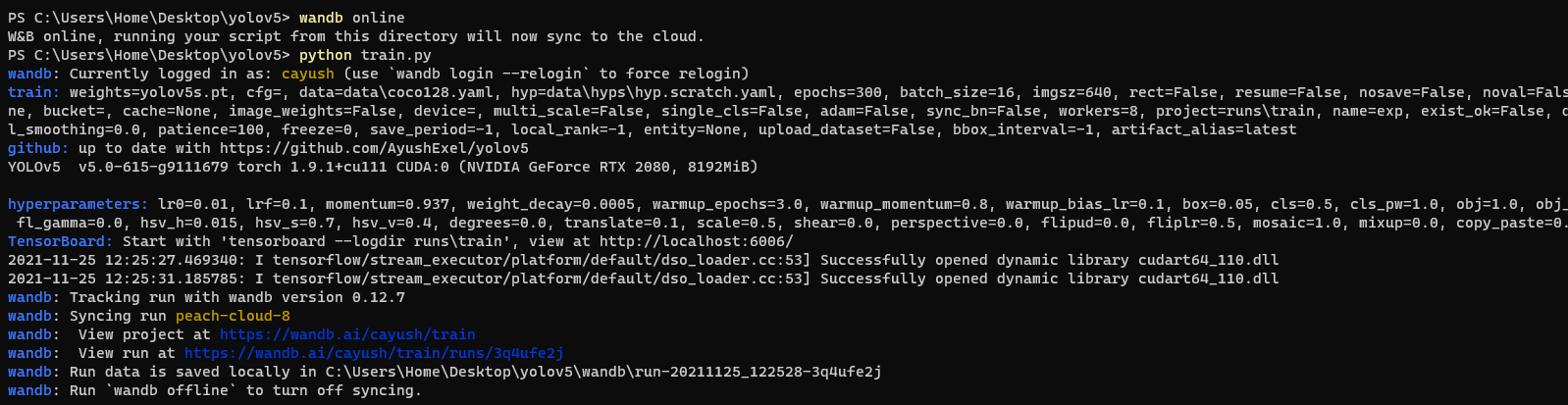

## Disabling wandb

- Training after running `wandb disabled` inside that directory creates no wandb run.

- To enable wandb again, run `wandb online`
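The commands above change the setting for the whole directory. To turn logging off for a single process only, one option is the `WANDB_MODE` environment variable. The sketch below is illustrative: `disabled_wandb_env` is a hypothetical helper, and whether a given YOLOv5 version skips all W&B work under this setting is an assumption you should verify.

```python
import os


def disabled_wandb_env(base_env=None):
    """Return a copy of the environment with W&B logging turned off."""
    env = dict(os.environ if base_env is None else base_env)
    # Assumption: wandb treats WANDB_MODE=disabled as "do not log anything".
    env["WANDB_MODE"] = "disabled"
    return env


env = disabled_wandb_env({"PATH": "/usr/bin"})
print(env["WANDB_MODE"])
# To use it: subprocess.run(["python", "train.py"], env=disabled_wandb_env())
```

Because the helper returns a copy, the calling process keeps its own W&B settings.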

## Advanced Usage

You can leverage W&B artifacts and Tables integration to easily visualize and manage your datasets, models and training evaluations. Here are some quick examples to get you started.

<details open>

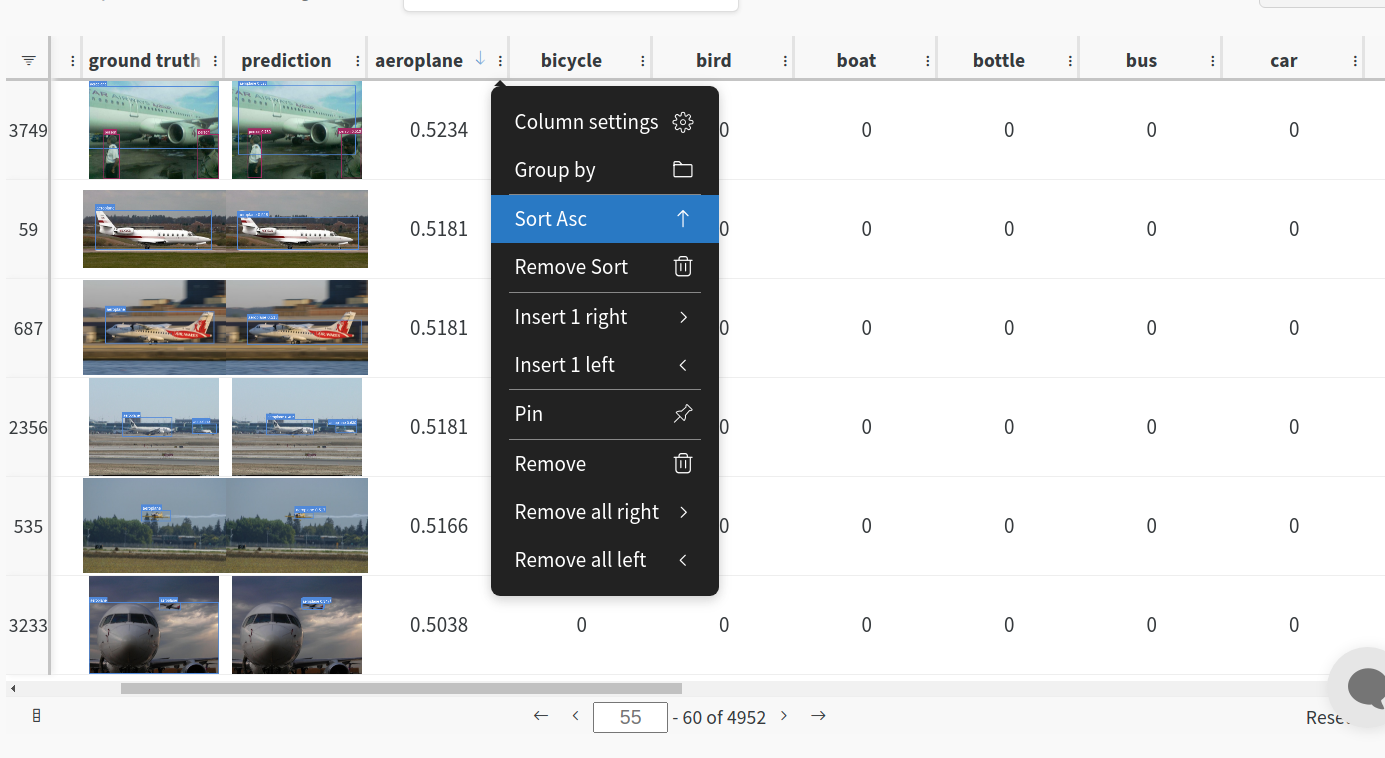

<h3> 1: Train and Log Evaluation simultaneously </h3>

This is an extension of the previous section, but it will also start training after uploading the dataset. <b>This also logs an evaluation Table.</b>

The evaluation Table compares your predictions and ground truths across the validation set for each epoch. It uses references to the already uploaded datasets, so no images are uploaded from your system more than once.

<details open>

<summary> <b>Usage</b> </summary>

<b>Code</b> <code> $ python train.py --upload_data val</code>

</details>

<h3> 2: Visualize and Version Datasets </h3>

Log, visualize, dynamically query, and understand your data with <a href='https://docs.wandb.ai/guides/data-vis/tables'>W&B Tables</a>. You can use the following command to log your dataset as a W&B Table. This generates a <code>{dataset}_wandb.yaml</code> file, which can be used to train from the dataset artifact.

<details>

<summary> <b>Usage</b> </summary>

<b>Code</b> <code> $ python utils/loggers/wandb/log_dataset.py --project ... --name ... --data ... </code>

</details>
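
The `{dataset}_wandb.yaml` naming convention described above can be expressed in a few lines. This is a sketch only: `wandb_config_name` is a hypothetical helper, `data/coco128.yaml` is just an example path, and the directory the generated file is written to is not assumed here.

```python
from pathlib import Path


def wandb_config_name(data_yaml):
    """Return the `_wandb` config filename derived from a dataset YAML path."""
    path = Path(data_yaml)
    # Only the filename is derived; the output directory is left unspecified.
    return f"{path.stem}_wandb{path.suffix}"


print(wandb_config_name("data/coco128.yaml"))  # coco128_wandb.yaml
```
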

<h3> 3: Train using dataset artifact </h3>

When you upload a dataset as described in section 2, you get a new config file with `_wandb` appended to its name. This file contains the information needed to train a model directly from the dataset artifact. <b>This also logs evaluation.</b>

<details>

<summary> <b>Usage</b> </summary>

<b>Code</b> <code> $ python train.py --data {data}_wandb.yaml </code>

</details>

<h3> 4: Save model checkpoints as artifacts </h3>

To enable saving and versioning checkpoints of your experiment, pass `--save_period n` with the base command, where `n` is the checkpoint interval (in epochs). You can also log both the dataset and model checkpoints simultaneously. If `--save_period` is not passed, only the final model is logged.

<details>

<summary> <b>Usage</b> </summary>

<b>Code</b> <code> $ python train.py --save_period 1 </code>

</details>
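
To see which epochs a given interval would cover, the sketch below lists them. It is an illustration, not YOLOv5's code: `checkpoint_epochs` is a hypothetical helper, and it assumes 1-based epoch numbers, a checkpoint on every `n`-th epoch, and that a period below 1 disables periodic saving. How the final epoch is handled is not modeled.

```python
def checkpoint_epochs(total_epochs, save_period):
    """List the 1-based epochs at which a periodic checkpoint would be logged."""
    if save_period < 1:
        return []  # assumption: a period below 1 disables periodic saves
    return [e for e in range(1, total_epochs + 1) if e % save_period == 0]


print(checkpoint_epochs(10, 3))  # [3, 6, 9]
```
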

</details>

<h3> 5: Resume runs from checkpoint artifacts. </h3>

Any run can be resumed using artifacts if the <code>--resume</code> argument starts with the <code>wandb-artifact://</code> prefix followed by the run path, i.e., <code>wandb-artifact://username/project/runid</code>. This doesn't require the model checkpoint to be present on the local system.

<details>

<summary> <b>Usage</b> </summary>

<b>Code</b> <code> $ python train.py --resume wandb-artifact://{run_path} </code>

</details>
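
The structure of the resume argument can be checked before launching a run. The following sketch shows one way to split it into its parts. `parse_resume_uri` is a hypothetical helper written for this guide, not the parser YOLOv5 uses, and the dictionary keys are illustrative names.

```python
WANDB_ARTIFACT_PREFIX = "wandb-artifact://"


def parse_resume_uri(uri):
    """Split wandb-artifact://username/project/runid into its three parts."""
    if not uri.startswith(WANDB_ARTIFACT_PREFIX):
        raise ValueError(f"expected a {WANDB_ARTIFACT_PREFIX} URI, got {uri!r}")
    parts = uri[len(WANDB_ARTIFACT_PREFIX):].strip("/").split("/")
    if len(parts) != 3 or not all(parts):
        raise ValueError(f"expected username/project/runid, got {uri!r}")
    username, project, run_id = parts
    return {"entity": username, "project": project, "run_id": run_id}


print(parse_resume_uri("wandb-artifact://username/project/runid"))
```

Validating the run path up front gives a clear error for a mistyped argument instead of a failure partway through startup.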

<h3> 6: Resume runs from dataset artifact & checkpoint artifacts. </h3>

<b> Local dataset or model checkpoints are not required. This can be used to resume runs directly on a different device </b>

The syntax is the same as the previous section, but you'll need to log both the dataset and model checkpoints as artifacts, i.e., set both <code>--upload_dataset</code> or

train fro
