📚 This guide explains how to use **Weights & Biases** (W&B) with YOLOv5 🚀. UPDATED 29 September 2021.

* [About Weights & Biases](#about-weights--biases)

* [First-Time Setup](#first-time-setup)

* [Viewing runs](#viewing-runs)

* [Disabling wandb](#disabling-wandb)

* [Advanced Usage: Dataset Versioning and Evaluation](#advanced-usage)

* [Reports: Share your work with the world!](#reports)

## About Weights & Biases

Think of [W&B](https://wandb.ai/site?utm_campaign=repo_yolo_wandbtutorial) like GitHub for machine learning models. With a few lines of code, save everything you need to debug, compare and reproduce your models: architecture, hyperparameters, git commits, model weights, GPU usage, and even datasets and predictions.

Used by top researchers including teams at OpenAI, Lyft, GitHub, and MILA, W&B is part of the new standard of best practices for machine learning. Here is how W&B can help you optimize your machine learning workflows:

* [Debug](https://wandb.ai/wandb/getting-started/reports/Visualize-Debug-Machine-Learning-Models--VmlldzoyNzY5MDk#Free-2) model performance in real time

* [GPU usage](https://wandb.ai/wandb/getting-started/reports/Visualize-Debug-Machine-Learning-Models--VmlldzoyNzY5MDk#System-4) visualized automatically

* [Custom charts](https://wandb.ai/wandb/customizable-charts/reports/Powerful-Custom-Charts-To-Debug-Model-Peformance--VmlldzoyNzY4ODI) for powerful, extensible visualization

* [Share insights](https://wandb.ai/wandb/getting-started/reports/Visualize-Debug-Machine-Learning-Models--VmlldzoyNzY5MDk#Share-8) interactively with collaborators

* [Optimize hyperparameters](https://docs.wandb.com/sweeps) efficiently

* [Track](https://docs.wandb.com/artifacts) datasets, pipelines, and production models

## First-Time Setup

<details open>

<summary> Toggle Details </summary>

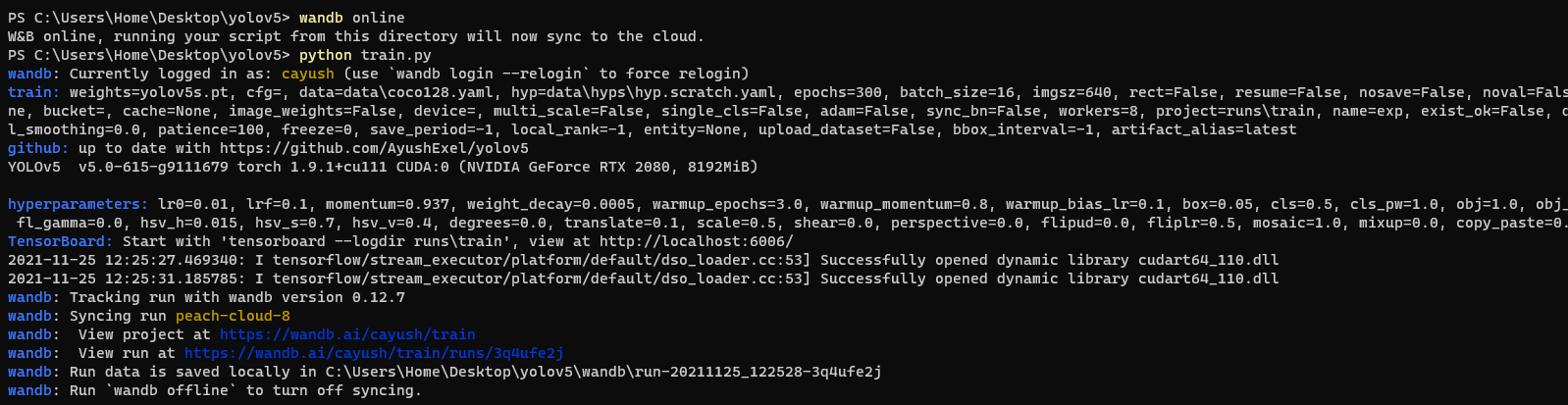

When you first train, W&B will prompt you to create a new account and will generate an **API key** for you. If you are an existing user, you can retrieve your key from https://wandb.ai/authorize. This key tells W&B where to log your data. You only need to supply it once; after that it is remembered on the same device.
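
For notebooks or CI machines where an interactive prompt is inconvenient, the key can also be supplied through the environment. This is a minimal sketch, assuming the standard `wandb` client, which reads the `WANDB_API_KEY` variable. The value shown is a placeholder that you must replace with your own key:

```shell
# Interactive option: run `wandb login` once and paste the key from
# https://wandb.ai/authorize when prompted; it is then stored on this device.

# Non-interactive option: export the key so the wandb client used by
# train.py can authenticate without prompting.
# "your-api-key-here" is a placeholder, not a real key.
export WANDB_API_KEY="your-api-key-here"
```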

W&B will create a cloud **project** (default is 'YOLOv5') for your training runs, and each new training run is given a unique run **name** within that project, identified as project/name. You can also set the project and run name manually:

```shell
$ python train.py --project ... --name ...
```
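
How these two flags map onto W&B depends on YOLOv5's logger. The sketch below assumes the behaviour of the YOLOv5 W&B logger around the time of this guide: the default local `--project runs/train` is reported to W&B as the project `YOLOv5`, any other value is reported by its last path component, and the default `--name exp` lets W&B pick a unique run name. The function names are hypothetical, and the mapping may differ between YOLOv5 versions:

```shell
# Hypothetical helpers (not part of YOLOv5): an approximation of how the
# YOLOv5 W&B logger is assumed to translate --project and --name.

# Print the W&B project that a given --project value would be logged under.
wandb_project_for() {
  local project="${1:-runs/train}"    # train.py's default --project
  if [ "$project" = "runs/train" ]; then
    echo "YOLOv5"                     # default local dir -> default W&B project
  else
    basename "$project"               # otherwise the last path component
  fi
}

# Print the W&B run name for a given --name value (empty = W&B generates one).
wandb_run_name_for() {
  local name="${1:-exp}"              # train.py's default --name
  if [ "$name" = "exp" ]; then
    echo ""                           # let W&B assign a unique run name
  else
    echo "$name"
  fi
}
```

For example, `wandb_project_for runs/my_experiments` prints `my_experiments`.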

YOLOv5 notebook example: <a href="https://colab.research.google.com/github/ultralytics/yolov5/blob/master/tutorial.ipynb"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"></a> <a href="https://www.kaggle.com/ultralytics/yolov5"><img src="https://kaggle.com/static/images/open-in-kaggle.svg" alt="Open In Kaggle"></a>

<img width="960" alt="Screen Shot 2021-09-29 at 10 23 13 PM" src="https://user-images.githubusercontent.com/26833433/135392431-1ab7920a-c49d-450a-b0b0-0c86ec86100e.png">

</details>

## Viewing Runs

<details open>

<summary> Toggle Details </summary>

Run information streams from your environment to the W&B cloud console as you train. This allows you to monitor and even cancel runs in <b>real time</b>. All important information is logged:

* Training & Validation losses

* Metrics: Precision, Recall, mAP@0.5, mAP@0.5:0.95

* Learning Rate over time

* A bounding box debugging panel, showing the training progress over time

* GPU: Type, **GPU Utilization**, power, temperature, **CUDA memory usage**

* System: Disk I/O, CPU utilization, RAM usage

* Your trained model as a W&B Artifact

* Environment: OS and Python types, Git repository and state, **training command**

<p align="center"><img width="900" alt="Weights & Biases dashboard" src="https://user-images.githubusercontent.com/26833433/135390767-c28b050f-8455-4004-adb0-3b730386e2b2.png"></p>

</details>

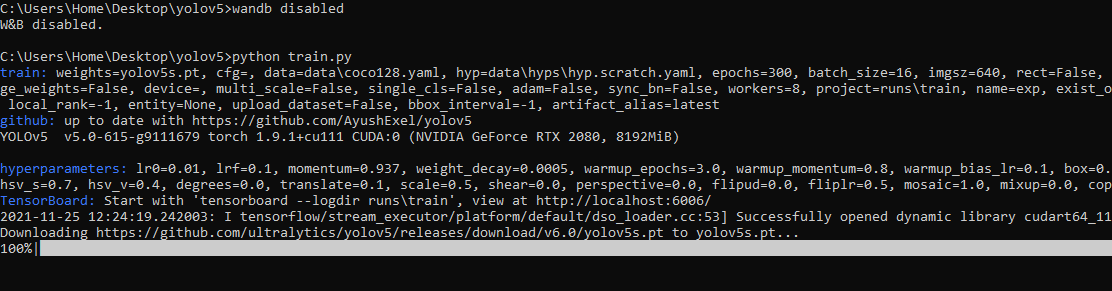

## Disabling wandb

* After you run `wandb disabled` inside a directory, training started from that directory creates no wandb run

* To enable wandb again, run `wandb online`
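
As described above, `wandb disabled` applies to the directory it is run in. To switch W&B off for a single command instead, the `wandb` client also reads the `WANDB_MODE` environment variable (`disabled`, `offline` or `online`). This is a minimal sketch; the wrapper name `without_wandb` is hypothetical:

```shell
# Hypothetical wrapper: run one command with W&B disabled. The assignment
# prefix only affects that command, so the shell's own WANDB_MODE (if any)
# is left untouched for later commands.
without_wandb() {
  WANDB_MODE=disabled "$@"
}

# Example (not run here):
#   without_wandb python train.py --data coco128.yaml
```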

## Advanced Usage

You can leverage W&B artifacts and Tables integration to easily visualize and manage your datasets, models and training evaluations. Here are some quick examples to get you started.

<details open>

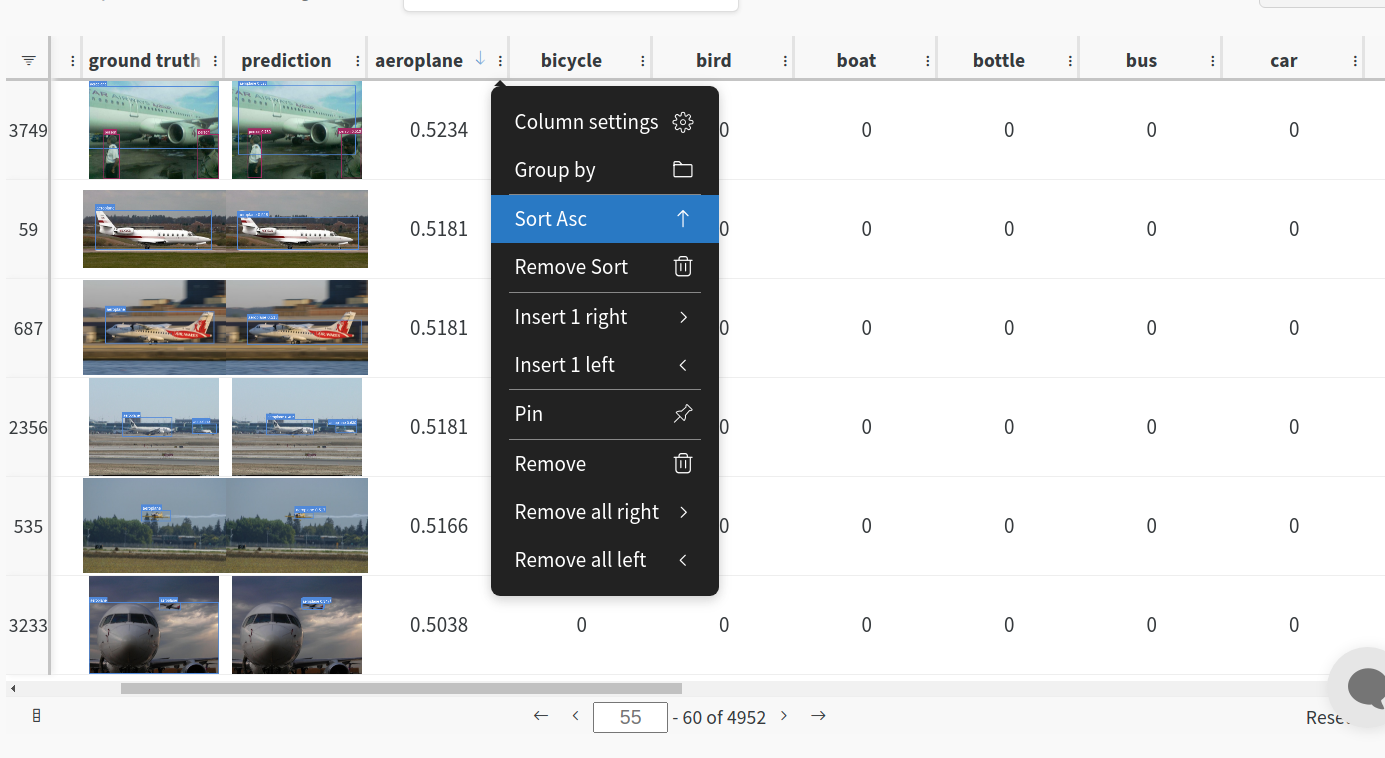

<h3> 1: Train and Log Evaluation simultaneously </h3>

This combines dataset logging (described in section 2) with training: the dataset is uploaded first, and training then starts. <b>This also logs an evaluation Table.</b>

The evaluation Table compares your predictions and ground truths across the validation set for each epoch. It references the already uploaded dataset, so no images are uploaded from your system more than once.

<details open>

<summary> <b>Usage</b> </summary>

<b>Code</b> <code> $ python train.py --upload_data val</code>

</details>
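
The "upload once, then reference" idea behind the evaluation Table can be illustrated with content hashing: an image is uploaded only the first time its hash is seen, and later entries just point at it. The sketch below is a hypothetical illustration of that principle, not W&B's implementation. It assumes GNU `sha256sum` is available and uses a plain text file as the registry of already uploaded images:

```shell
# Hypothetical illustration of upload-once-then-reference (not W&B code).
# Usage: upload_or_reference IMAGE REGISTRY
# Prints "upload <digest>" the first time an image's content is seen and
# "reference <digest>" afterwards. REGISTRY is a text file of known digests.
upload_or_reference() {
  local image="$1" registry="$2" digest
  digest=$(sha256sum "$image" | cut -d' ' -f1)   # content hash of the image
  touch "$registry"                              # create registry if missing
  if grep -qx "$digest" "$registry"; then
    echo "reference $digest"                     # already uploaded: point at it
  else
    echo "$digest" >> "$registry"                # first sighting: record it
    echo "upload $digest"                        # ...and upload it
  fi
}
```

Because the check is on content rather than file name, two identical images under different names are uploaded only once.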

<h3> 2: Visualize and Version Datasets </h3>

Log, visualize, dynamically query, and understand your data with <a href='https://docs.wandb.ai/guides/data-vis/tables'>W&B Tables</a>. You can use the following command to log your dataset as a W&B Table. This will generate a <code>{dataset}_wandb.yaml</code> file, which can be used to train from the dataset artifact.

<details>

<summary> <b>Usage</b> </summary>

<b>Code</b> <code> $ python utils/loggers/wandb/log_dataset.py --project ... --name ... --data ... </code>

</details>
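
The naming convention for the generated file is simple enough to state as code. This is a minimal sketch, assuming the new file takes the dataset file's base name with `_wandb` appended before the extension (for example `coco128.yaml` becomes `coco128_wandb.yaml`). The function name is hypothetical, and where the file is written is not covered here:

```shell
# Hypothetical helper: derive the "{dataset}_wandb.yaml" name that
# log_dataset.py is described as generating for a given --data file.
wandb_yaml_for() {
  local base
  base=$(basename "$1")               # data/coco128.yaml -> coco128.yaml
  echo "${base%.yaml}_wandb.yaml"     # coco128.yaml -> coco128_wandb.yaml
}
```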

<h3> 3: Train using dataset artifact </h3>

When you upload a dataset as described in the first section, you get a new config file with `_wandb` appended to its name. This file contains the information needed to train a model directly from the dataset artifact. <b>This also logs the evaluation Table.</b>

<details>

<summary> <b>Usage</b> </summary>

<b>Code</b> <code> $ python train.py --data {data}_wandb.yaml </code>

</details>
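
One way to see what the `_wandb` file changes is to check whether its dataset entries point at W&B artifacts rather than at local folders. The sketch below assumes those entries use the same `wandb-artifact://` prefix that the resume syntax in section 5 uses. That file layout is an assumption about the generated YAML, and the function name is hypothetical:

```shell
# Hypothetical check: succeed if the train: or val: entry of a data YAML
# refers to a W&B artifact (assumed "wandb-artifact://" prefix), fail otherwise.
uses_dataset_artifact() {
  grep -Eq '^(train|val):[[:space:]]*wandb-artifact://' "$1"
}

# Example (not run here):
#   uses_dataset_artifact coco128_wandb.yaml && echo "trains from artifact"
```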

<h3> 4: Save model checkpoints as artifacts </h3>

To enable saving and versioning checkpoints of your experiment, add `--save_period n` to the base command, where `n` is the checkpoint interval in epochs.

You can also log both the dataset and the model checkpoints at the same time. If `--save_period` is not passed, only the final model is logged.

<details>

<summary> <b>Usage</b> </summary>

<b>Code</b> <code> $ python train.py --save_period 1 </code>

</details>
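
Which epochs end up as artifacts can be sketched as follows. The sketch assumes zero-based epoch indices, a checkpoint logged after every `n`-th completed epoch, the final model always logged, and `n < 1` meaning periodic saving is off. It mirrors the description above rather than YOLOv5's exact code, and the function name is hypothetical:

```shell
# Hypothetical sketch: list the epochs whose checkpoints would be logged.
# Usage: logged_epochs SAVE_PERIOD TOTAL_EPOCHS   (epochs are 0-based)
logged_epochs() {
  local n="$1" total="$2" last=$(( $2 - 1 )) epoch=0 out=""
  while [ "$epoch" -lt "$total" ]; do
    if [ "$epoch" -eq "$last" ]; then
      out="$out $epoch"                        # final model: always logged
    elif [ "$n" -ge 1 ] && [ $(( (epoch + 1) % n )) -eq 0 ]; then
      out="$out $epoch"                        # periodic checkpoint
    fi
    epoch=$((epoch + 1))
  done
  echo "${out# }"                              # drop the leading space
}
```

For example, `logged_epochs 2 5` prints `1 3 4`. With `--save_period 1` every epoch is kept, which makes resuming most flexible but uses the most storage.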

</details>

<h3> 5: Resume runs from checkpoint artifacts. </h3>

Any run can be resumed using artifacts if the <code>--resume</code> argument starts with the <code>wandb-artifact://</code> prefix followed by the run path, e.g., <code>wandb-artifact://username/project/runid</code>. This doesn't require the model checkpoint to be present on the local system.

<details>

<summary> <b>Usage</b> </summary>

<b>Code</b> <code> $ python train.py --resume wandb-artifact://{run_path} </code>

</details>
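
The run path after the prefix has three parts. This is a minimal sketch of splitting it, assuming the `username/project/runid` layout given above. The function name is hypothetical, and YOLOv5's own parsing may differ:

```shell
# Hypothetical helper: split a --resume value of the form
# wandb-artifact://username/project/runid into its three parts.
parse_resume_path() {
  case "$1" in
    wandb-artifact://*) ;;                     # expected prefix present
    *) echo "not a wandb-artifact:// path: $1" >&2; return 1 ;;
  esac
  local rest="${1#wandb-artifact://}"          # username/project/runid
  local user="${rest%%/*}"                     # username
  rest="${rest#*/}"                            # project/runid
  local project="${rest%%/*}"                  # project
  local run_id="${rest#*/}"                    # runid
  echo "$user $project $run_id"
}
```

A plain local checkpoint path such as `runs/train/exp/weights/last.pt` is rejected, which matches the rule that only arguments starting with the prefix are resumed from artifacts.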

<h3> 6: Resume runs from dataset artifact & checkpoint artifacts. </h3>

<b> Local dataset or model checkpoints are not required. This can be used to resume runs directly on a different device. </b>

The syntax is the same as in the previous section, but you'll need to log both the dataset and the model checkpoints as artifacts, i.e., set both <code>--upload_dataset</code> …
