# **Y**ou **O**nly **L**ook **A**t **C**oefficien**T**s

```

██╗ ██╗ ██████╗ ██╗ █████╗ ██████╗████████╗

╚██╗ ██╔╝██╔═══██╗██║ ██╔══██╗██╔════╝╚══██╔══╝

╚████╔╝ ██║ ██║██║ ███████║██║ ██║

╚██╔╝ ██║ ██║██║ ██╔══██║██║ ██║

██║ ╚██████╔╝███████╗██║ ██║╚██████╗ ██║

╚═╝ ╚═════╝ ╚══════╝╚═╝ ╚═╝ ╚═════╝ ╚═╝

```

A simple, fully convolutional model for real-time instance segmentation. This is the code for [our paper](https://arxiv.org/abs/1904.02689).

#### ICCV update (v1.1) released! Check out the ICCV trailer here:

[ICCV trailer](https://www.youtube.com/watch?v=0pMfmo8qfpQ)

Read [the changelog](CHANGELOG.md) for details on, well, what changed. Oh, and the paper got updated too, with Pascal results and an appendix with box mAP.

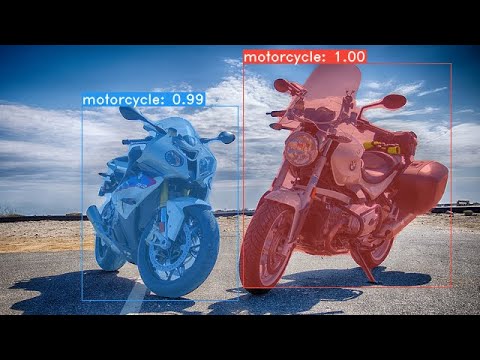

Some examples from our base model (33.5 fps on a Titan Xp and 29.8 mAP on COCO's `test-dev`):

# Installation

- Set up a Python3 environment.

- Install [PyTorch](http://pytorch.org/) 1.0.1 (or higher) and TorchVision.

- Install some other packages:

```Shell

# Cython needs to be installed before pycocotools
pip install cython
pip install opencv-python pillow pycocotools matplotlib

```

- Clone this repository and enter it:

```Shell

git clone https://github.com/dbolya/yolact.git
cd yolact

```

- If you'd like to train YOLACT, download the COCO dataset and the 2014/2017 annotations. Note that this script will take a while and dump 21 GB of files into `./data/coco`.

```Shell

sh data/scripts/COCO.sh

```

- If you'd like to evaluate YOLACT on `test-dev`, download `test-dev` with this script.

```Shell

sh data/scripts/COCO_test.sh

```
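To sanity-check the environment after the steps above, something like the following could be used. It is only a sketch: `parse_version` and `missing_modules` are helper names made up for this example, and the module list simply mirrors the packages named above (`cv2` is the import name of `opencv-python`, `PIL` that of `pillow`).

```python
import importlib.util

# Import names for the packages listed in the installation steps.
# cv2 comes from opencv-python, PIL from pillow.
REQUIRED_MODULES = ["torch", "torchvision", "cv2", "PIL", "pycocotools", "matplotlib"]


def parse_version(text):
    """Turn '1.0.1' or '1.13.1+cu117' into a tuple of ints such as (1, 13, 1)."""
    numbers = []
    # Drop any local build tag after '+', then read leading digits of each piece.
    for piece in text.split("+")[0].split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        if not digits:
            break  # stop at suffixes like 'post2' or 'dev'
        numbers.append(int(digits))
    return tuple(numbers)


def missing_modules(names):
    """Return the names that cannot be found by the import system."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules(REQUIRED_MODULES)
    print("Missing modules:", missing or "none")
    if "torch" not in missing:
        import torch
        ok = parse_version(torch.__version__) >= parse_version("1.0.1")
        print("PyTorch >= 1.0.1:", ok)
```

This only checks that the modules can be located and that the PyTorch version string is high enough; it does not verify that CUDA works.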

# Evaluation

As of April 5th, 2019, here are our latest models along with their FPS on a Titan Xp and mAP on `test-dev`:

| Image Size | Backbone | FPS | mAP | Weights | |
|:----------:|:-------------:|:----:|:----:|----------------------------------------------------------------------------------------------------------------------|--------|
| 550 | Resnet50-FPN | 42.5 | 28.2 | [yolact_resnet50_54_800000.pth](https://drive.google.com/file/d/1yp7ZbbDwvMiFJEq4ptVKTYTI2VeRDXl0/view?usp=sharing) | [Mirror](https://ucdavis365-my.sharepoint.com/:u:/g/personal/yongjaelee_ucdavis_edu/EUVpxoSXaqNIlssoLKOEoCcB1m0RpzGq_Khp5n1VX3zcUw) |
| 550 | Darknet53-FPN | 40.0 | 28.7 | [yolact_darknet53_54_800000.pth](https://drive.google.com/file/d/1dukLrTzZQEuhzitGkHaGjphlmRJOjVnP/view?usp=sharing) | [Mirror](https://ucdavis365-my.sharepoint.com/:u:/g/personal/yongjaelee_ucdavis_edu/ERrao26c8llJn25dIyZPhwMBxUp2GdZTKIMUQA3t0djHLw) |
| 550 | Resnet101-FPN | 33.0 | 29.8 | [yolact_base_54_800000.pth](https://drive.google.com/file/d/1UYy3dMapbH1BnmtZU4WH1zbYgOzzHHf_/view?usp=sharing) | [Mirror](https://ucdavis365-my.sharepoint.com/:u:/g/personal/yongjaelee_ucdavis_edu/EYRWxBEoKU9DiblrWx2M89MBGFkVVB_drlRd_v5sdT3Hgg) |
| 700 | Resnet101-FPN | 23.6 | 31.2 | [yolact_im700_54_800000.pth](https://drive.google.com/file/d/1lE4Lz5p25teiXV-6HdTiOJSnS7u7GBzg/view?usp=sharing) | [Mirror](https://ucdavis365-my.sharepoint.com/:u:/g/personal/yongjaelee_ucdavis_edu/Eagg5RSc5hFEhp7sPtvLNyoBjhlf2feog7t8OQzHKKphjw) |

To evaluate the model, put the corresponding weights file in the `./weights` directory and run one of the following commands.

## Quantitative Results on COCO

```Shell

# Quantitatively evaluate a trained model on the entire validation set. Make sure you have COCO downloaded as above.
# This gave 29.92 validation mask mAP the last time I checked.
python eval.py --trained_model=weights/yolact_base_54_800000.pth

# Output a COCOEval json to submit to the website or to use the run_coco_eval.py script.
# This command will create './results/bbox_detections.json' and './results/mask_detections.json' for detection and instance segmentation respectively.
python eval.py --trained_model=weights/yolact_base_54_800000.pth --output_coco_json

# You can run COCOEval on the files created in the previous command. The performance should match my implementation in eval.py.
python run_coco_eval.py

# To output a coco json file for test-dev, make sure you have test-dev downloaded from above and run
python eval.py --trained_model=weights/yolact_base_54_800000.pth --output_coco_json --dataset=coco2017_testdev_dataset

```
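For a quick look at the JSON files written by `--output_coco_json`, a small summary script may help. This is a sketch that assumes the files follow the standard COCO results format, i.e. a JSON list of records that each carry `image_id`, `category_id` and `score`; the function name `summarize_detections` is made up for this example and is not part of this repository.

```python
import json
from collections import Counter


def summarize_detections(path):
    """Summarize a COCO-style results file (assumed: a JSON list of records)."""
    with open(path) as f:
        detections = json.load(f)

    # Count detections per category and collect the distinct image ids.
    per_category = Counter(det["category_id"] for det in detections)
    images = {det["image_id"] for det in detections}
    scores = [det["score"] for det in detections]

    return {
        "num_detections": len(detections),
        "num_images": len(images),
        "mean_score": sum(scores) / len(scores) if scores else 0.0,
        "per_category": dict(per_category),
    }


if __name__ == "__main__":
    import os

    # Path taken from the comment in the commands above.
    path = "./results/bbox_detections.json"
    if os.path.exists(path):
        print(summarize_detections(path))
```

This does not compute mAP; for that, use `run_coco_eval.py` as shown above.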

## Qualitative Results on COCO

```Shell

# Display qualitative results on COCO. From here on I'll use a confidence threshold of 0.15.
python eval.py --trained_model=weights/yolact_base_54_800000.pth --score_threshold=0.15 --top_k=15 --display

```
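Conceptually, `--score_threshold` and `--top_k` limit which detections get drawn. The sketch below illustrates that idea on plain `(label, score)` tuples; it is not the code in `eval.py`, and both the helper name `filter_detections` and the exact rule (keep scores at or above the threshold, then take the `top_k` highest) are assumptions made for the example.

```python
def filter_detections(detections, score_threshold=0.15, top_k=15):
    """Keep at most top_k detections whose score reaches the threshold.

    detections: list of (label, score) tuples (a simplified stand-in
    for real detections, which also carry boxes and masks).
    """
    # Assumption: a score equal to the threshold is kept.
    kept = [det for det in detections if det[1] >= score_threshold]
    # Highest-confidence detections first.
    kept.sort(key=lambda det: det[1], reverse=True)
    return kept[:top_k]


if __name__ == "__main__":
    sample = [("person", 0.92), ("dog", 0.10), ("car", 0.40), ("cat", 0.15)]
    print(filter_detections(sample, score_threshold=0.15, top_k=2))
```

Lowering the threshold or raising `top_k` shows more, lower-confidence detections.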

## Benchmarking on COCO

```Shell

# Run just the raw model on the first 1k images of the validation set
python eval.py --trained_model=weights/yolact_base_54_800000.pth --benchmark --max_images=1000

```
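If you want to time your own code in the same spirit, a frames-per-second measurement can be sketched as below. This is a generic illustration, not what `--benchmark` does internally; `measure_fps` and its parameters are made-up names, and `step` stands for whatever processes a single image.

```python
import time


def measure_fps(step, num_iterations=1000, warmup=10):
    """Call step() repeatedly and return iterations per second."""
    # Warm-up calls are excluded from timing (caches, lazy initialization).
    for _ in range(warmup):
        step()

    start = time.perf_counter()
    for _ in range(num_iterations):
        step()
    elapsed = time.perf_counter() - start

    # Guard against a zero reading on very coarse clocks.
    return num_iterations / elapsed if elapsed > 0 else float("inf")


if __name__ == "__main__":
    # Stand-in workload; replace with a function that runs one image.
    fps = measure_fps(lambda: sum(range(10000)), num_iterations=200)
    print(f"{fps:.1f} iterations per second")
```

When timing GPU work with PyTorch, CUDA calls are asynchronous, so `step` should end with `torch.cuda.synchronize()` for the numbers to be meaningful.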

## Images

```Shell

# Display qualitative results on the specified image.
python eval.py --trained_model=weights/yolact_base_54_800000.pth --score_threshold=0.15 --top_k=15 --image=my_image.png

# Process an image and save it to another file.
python eval.py --trained_model=weights/yolact_base_54_800000.pth --score_threshold=0.15 --top_k=15 --image=input_image.png:output_image.png

# Process a whole folder of images.
python eval.py --trained_model=weights/yolact_base_54_800000.pth --score_threshold=0.15 --top_k=15 --images=path/to/input/folder:path/to/output/folder

```
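The `input:output` form used by `--image` and `--images` above packs two paths into one argument. If you script around these commands, a value of that shape could be split as sketched here. The helper `split_io_spec` is hypothetical and is not taken from `eval.py`.

```python
def split_io_spec(spec):
    """Split 'input:output' into (input, output).

    Returns (spec, None) when no output part is given, which matches
    the display-only form shown above.
    """
    if ":" in spec:
        # Split on the first colon only.
        source, target = spec.split(":", 1)
        return source, target
    return spec, None


if __name__ == "__main__":
    print(split_io_spec("input_image.png:output_image.png"))
    print(split_io_spec("my_image.png"))
```

Note that this naive split would misread Windows paths with drive letters such as `C:\images\a.png`.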

## Video

```Shell

# Display a video in real-time. "--video_multiframe" will process that many frames at once for improved performance.
# If you want, use "--display_fps" to draw the FPS directly on the frame.
python eval.py --trained_model=weights/yolact_base_54_800000.pth --score_threshold=0.15 --top_k=15 --video_multiframe=4 --video=my_video.mp4

# Display a webcam feed in real-time. If you have multiple webcams pass the index of the webcam you want instead of 0.
python eval.py --trained_model=weights/yolact_base_54_800000.pth --score_threshold=0.15 --top_k=15 --video_multiframe=4 --video=0

# Process a video and save it to another file. This uses the same pipeline as the ones above now, so it's fast!
python eval.py --trained_model=weights/yolact_base_54_800000.pth --score_threshold=0.15 --top_k=15 --video_multiframe=4 --video=input_video.mp4:output_video.mp4

```
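Two ideas from the video commands above can be illustrated in a few lines: `--video` accepts either a file path or a webcam index, and `--video_multiframe` groups frames so several are processed at once. The sketch below is only a rough illustration of those two ideas with made-up helper names (`parse_video_source`, `batched`); the actual pipeline in `eval.py` may work differently.

```python
def parse_video_source(value):
    """Treat an all-digit value as a webcam index, anything else as a path."""
    return int(value) if value.isdigit() else value


def batched(frames, size):
    """Yield lists of up to `size` consecutive frames."""
    batch = []
    for frame in frames:
        batch.append(frame)
        if len(batch) == size:
            yield batch
            batch = []
    # Emit the final, possibly shorter, batch.
    if batch:
        yield batch


if __name__ == "__main__":
    print(parse_video_source("0"))
    print(parse_video_source("my_video.mp4"))
    # Integers stand in for frames here.
    for group in batched(range(10), 4):
        print(group)
```

Larger groups tend to use the GPU more efficiently at the cost of extra latency before the first frame of each group is shown.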

As you can tell, `eval.py` can do a ton of stuff. Run it with the `--help` flag to see everything it can do.

```Shell

python eval.py --help

```

# Training

By default, we train on COCO. Make sure to download the entire dataset using the commands above.

- To train, grab an ImageNet-pretrained model and put it in `./weights`.
  - For Resnet101, download `resnet101_reducedfc.pth` from [here](https://drive.google.com/file/d/1tvqFPd4bJtakOlmn-uIA492g2qurRChj/view?usp=sharing).
  - For Resnet50, download `resnet50-19c8e357.pth` from [here](https://drive.google.com/file/d/1Jy3yCdbatgXa5YYIdTCRrSV0S9V5g1rn/view?usp=sharing).
  - For Darknet53, download `darknet53.pth` from [here](https://drive.google.com/file/d/17Y431j4sagFpSReuPNoFcj9h7azDTZFf/view?usp=sharing).
- Run one of the training commands below.
  - Note that you can press Ctrl+C while training and it will save an `*_interrupt.pth` file at the current iteration.
  - All weights are saved in the `./weights` directory by default with the file name `<conf


