Coping with variability in motion based activity recognition

Matthias Kreil

DFKI GmbH

Kaiserslautern, Germany

matthias.kreil@dfki.de

Bernhard Sick

Intelligent Embedded Systems

University of Kassel, Germany

bsick@uni-kassel.de

Paul Lukowicz

DFKI GmbH / TU

Kaiserslautern, Germany

paul.lukowicz@dfki.de

ABSTRACT

A key issue in the automatic recognition of human activities

with body worn sensors is the variability of human motions

and the huge space of possibilities for executing even fairly

simple actions. In this article we introduce a new algorithm to

address this issue. The core idea is that often even highly vari-

able actions include short more or less invariant parts which

are due to hard physical constraints. The aim is to develop

a method that can identify such invariants and use them to

improve the classification of the respective activities. The

method is meant to be combined with existing classification

approaches in an ensemble-like fashion, being applied only to

the classes for which appropriate invariants can be found and

leaving the other classes to be handled by classical methods.

We compare our method’s results to prior publications on two

well-known data sets and are able to improve the classification

in 5 of 23 and 4 of 19 classes, respectively, in some cases by

a large margin (best case is from 27% to 76% in the first and

from 50% to 64% in the second). In each data set there is

only one class for which we make the recognition worse and

in both cases it is one with poor results to start with and a rel-

atively small decrease (from 54% to 45% in the first and from

65% to 62% in the second). The results are achieved for a

user-independent case.

Author Keywords

Activity Recognition, Motif Detection, Segmentation,

Wearable Computing, IMU

ACM Classification Keywords

I.5.4. Pattern Recognition: Applications: Signal processing

INTRODUCTION

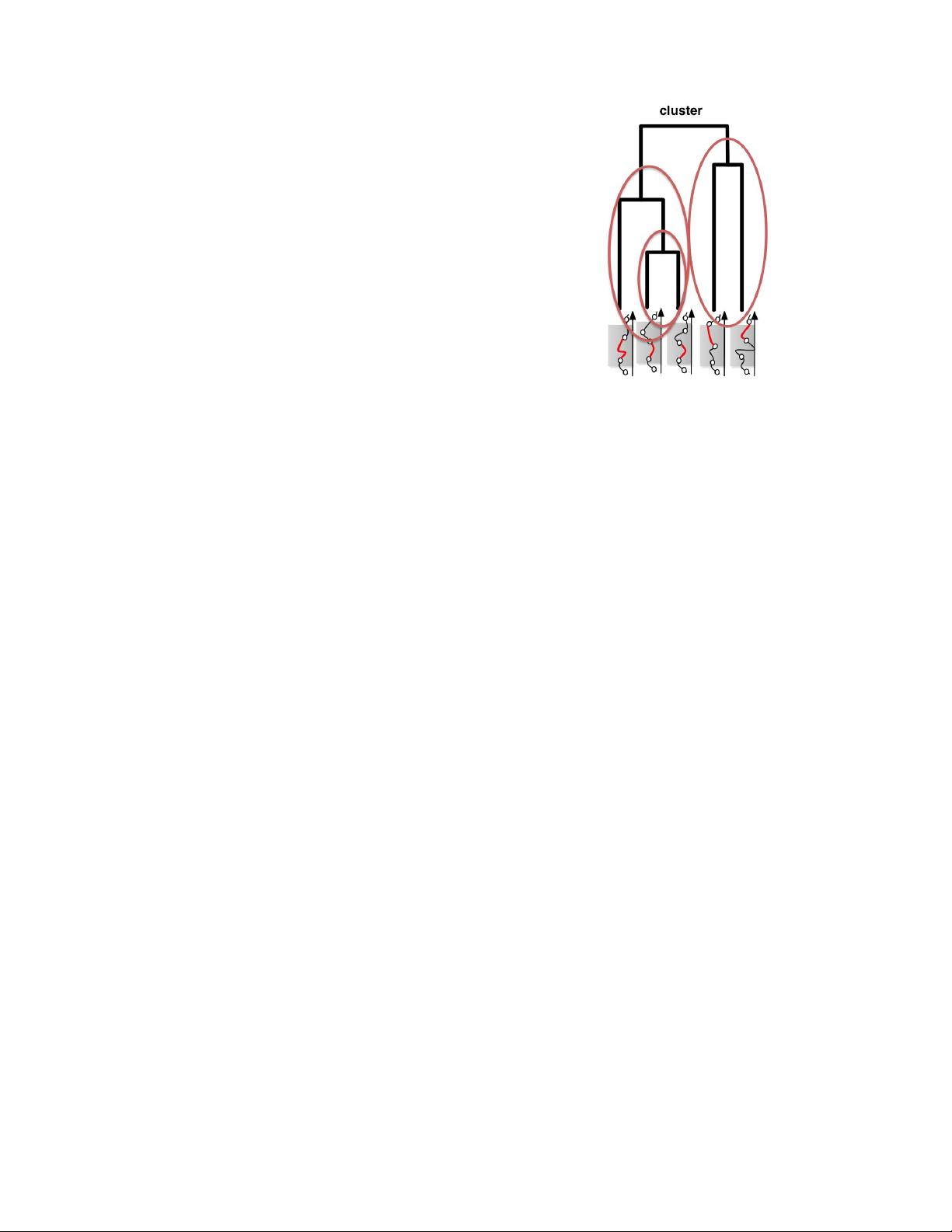

The basic idea behind our work is that many human activities

consist of two parts: one (mostly the larger part) being variable

due to inter- or intra-personal differences and the other being

relatively invariant due to hard physical constraints. Thus,

for example, there are many different ways to hold and insert

a key into a lock, but in the end unlocking involves some sort

of a turning motion (which is the largely invariant part).

Publication rights licensed to ACM. ACM acknowledges that this contribution was

authored or co-authored by an employee, contractor or affiliate of a national govern-

ment. As such, the Government retains a nonexclusive, royalty-free right to publish or

reproduce this article, or to allow others to do so, for Government purposes only.

iWOAR ’16, June 23 - 24, 2016, Rostock, Germany.

Copyright is held by the owner/author(s). Publication rights licensed to ACM.

ACM 978-1-4503-4245-2/16/06....$15.00

DOI: http://dx.doi.org/10.1145/2948963.2948967

Based on this idea we propose a method that can identify such

invariants in motion related human activities and use them to

improve their automatic recognition from body worn sensors.

The method is meant to be combined with existing classification

approaches in an ensemble-like fashion, being applied

only to the classes for which appropriate invariants can be

found and leaving the other classes to be handled by classi-

cal methods. Which class to handle using our methods and

which to leave to other methods is determined automatically

from the training data sets. We compare our results (includ-

ing the selection of the method to be applied) to previously

published results on two well known data sets. These are the

bicycle repair experiment from [14] and the car workshop

experiment from [17]. Our methods are able to improve the

classification in 5 of 23 and 4 of 19 classes, respectively, in some

cases by a large margin. In the bicycle data set the best case

improvement is from 27% to 76%, in the car data set the best

case is from 50% to 84%. In each data set there is only one

class for which we worsen the recognition (which means that

the system wrongly decided to opt for the invariants based

method) and in both cases it is one with poor results to start

with and a relatively small decrease (from 54% to 45% in the

first and from 65% to 62% in the second). The results are

achieved for a user independent classification case (training

on a set of users and then testing on a user that the system

has not seen before), which is the hardest activity recognition

task.

RELATED WORK

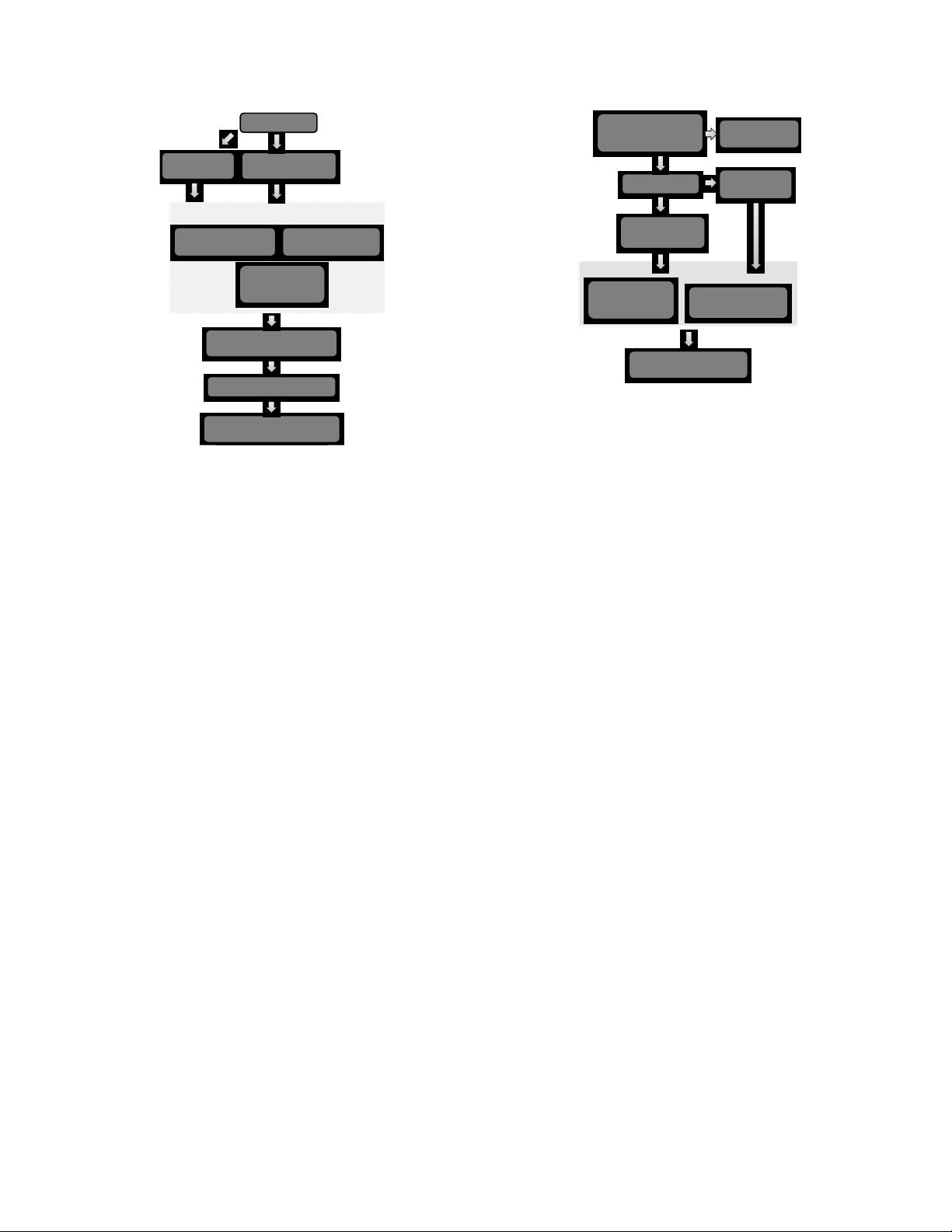

Activity recognition algorithms usually start with a strategy

to separate the signal data into reasonable units for further processing.

A straightforward strategy to segment a data stream

is to use a sliding window of a fixed size. This is done by

pushing the window forward by a fixed number of data points

and using the data of each window frame for the next pro-

cessing step in the classification chain, e.g., the feature cal-

culation. This has been implemented in several publications

in the field of activity recognition [1, 2, 5, 10, 16]. An-

other approach to segment time series is to linearly approxi-

mate the signal piecewise while satisfying a predefined error

condition. [11] introduces such an algorithm called Sliding

Window and Bottom-up (SWAB). The segmentation strategy used in

this publication was inspired by [18]. The authors observed

that the movement of the hand slows down when activities

start and end. As a consequence, the variance signal of the

hand’s acceleration data is calculated with a sliding window

of 10 samples. Whenever a minimum in the variance signal

is reached, a new segmentation point is set.
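
The variance-based segmentation described above can be illustrated with a minimal sketch. It is not the implementation from [18] or from this paper. It assumes a one-dimensional acceleration signal (for example the magnitude of the hand's acceleration), and the function names `sliding_variance`, `local_minima`, and `segmentation_points` are hypothetical. Two further choices are assumptions of this sketch: a flat minimum is reported once, at its first sample, and each window index is mapped to the sample at the centre of the window.

```python
from statistics import pvariance
from typing import List, Sequence


def sliding_variance(signal: Sequence[float], window: int = 10) -> List[float]:
    """Population variance of every full window of `window` consecutive samples."""
    if window < 1:
        raise ValueError("window must be >= 1")
    return [pvariance(signal[i:i + window])
            for i in range(len(signal) - window + 1)]


def local_minima(values: Sequence[float]) -> List[int]:
    """Indices strictly below their left neighbour and not above their right one.

    Assumption of this sketch: a flat minimum is reported once, at its first sample.
    """
    return [i for i in range(1, len(values) - 1)
            if values[i - 1] > values[i] <= values[i + 1]]


def segmentation_points(signal: Sequence[float], window: int = 10) -> List[int]:
    """Sample indices at which the sliding variance reaches a local minimum."""
    variance = sliding_variance(signal, window)
    # Assumption: attribute each window to the sample at its centre.
    return [i + window // 2 for i in local_minima(variance)]


if __name__ == "__main__":
    # Synthetic example: motion, a pause (hand at rest), motion again.
    burst = [1.0 if i % 2 == 0 else -1.0 for i in range(20)]
    signal = burst + [0.0] * 20 + burst
    print(segmentation_points(signal, window=10))  # prints [25]
```

In the synthetic example the variance drops to zero once the window lies entirely inside the pause, so a single segmentation point is placed near the start of the pause. Real sensor data is noisy and would typically yield many shallow minima, so some smoothing or a minimum-depth criterion may be needed; that choice is outside what the text above specifies.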