## PyTorch Implementation of [AnimeGANv2](https://github.com/TachibanaYoshino/AnimeGANv2)

**Updates**

* `2021-10-17` Add weights for [FacePortraitV2](#additional-model-weights)

* `2021-11-07` Thanks to [ak92501](https://twitter.com/ak92501), a web demo is integrated into [Hugging Face Spaces](https://huggingface.co/spaces) with [Gradio](https://github.com/gradio-app/gradio).

See demo: [AnimeGANv2 on Hugging Face Spaces](https://huggingface.co/spaces/akhaliq/AnimeGANv2)

* `2021-11-07` Thanks to [xhlulu](https://github.com/xhlulu), the `torch.hub` model is now available. See [Torch Hub Usage](#torch-hub-usage).

* `2021-11-07` Add FacePortraitV2 style demo to a Telegram bot. See [@face2stickerbot](https://t.me/face2stickerbot) by [sxela](https://github.com/sxela).

## Basic Usage

**Weight Conversion from the Original Repo (Requires TensorFlow 1.x)**

```
git clone https://github.com/TachibanaYoshino/AnimeGANv2
python convert_weights.py
```

**Inference**

```
python test.py --input_dir [image_folder_path] --device [cpu/cuda]
```

**Results from converted [[Paprika]](https://drive.google.com/file/d/1K_xN32uoQKI8XmNYNLTX5gDn1UnQVe5I/view?usp=sharing) style model**

(From left to right: input image, original TensorFlow result, PyTorch result)

<img src="./samples/compare/1.jpg" width="960">

<img src="./samples/compare/2.jpg" width="960">

<img src="./samples/compare/3.jpg" width="960">

**Note:** Training code is not included. Results from the converted weights differ slightly from the original TensorFlow results because of the [bilinear upsample issue](https://github.com/pytorch/pytorch/issues/10604).
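One plausible source of such small differences is that frameworks map output pixel positions back to input coordinates differently when resizing. The sketch below is a pure-Python, one-dimensional illustration of two common conventions (corner-aligned versus half-pixel centers). That this is the relevant discrepancy here is an assumption, and the function name `linear_resize_1d` is made up for illustration.

```python
def linear_resize_1d(values, out_len, align_corners):
    """Linearly resample a 1-D list of numbers to out_len samples.

    align_corners=True  maps the first/last outputs exactly onto the first/last inputs.
    align_corners=False treats samples as pixel centers (half-pixel offset).
    """
    in_len = len(values)
    result = []
    for i in range(out_len):
        if align_corners:
            src = i * (in_len - 1) / (out_len - 1) if out_len > 1 else 0.0
        else:
            src = (i + 0.5) * in_len / out_len - 0.5
        # Clamp to the valid range so edge samples replicate the border value.
        src = min(max(src, 0.0), in_len - 1)
        lo = int(src)
        hi = min(lo + 1, in_len - 1)
        frac = src - lo
        result.append(values[lo] * (1 - frac) + values[hi] * frac)
    return result


row = [0.0, 10.0]
print(linear_resize_1d(row, 4, align_corners=True))
print(linear_resize_1d(row, 4, align_corners=False))
```

The two calls agree at the borders but produce different interior values, which is the kind of mismatch that can accumulate across several upsampling layers.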

## Additional Model Weights

**Webtoon Face** [[ckpt]](https://drive.google.com/file/d/10T6F3-_RFOCJn6lMb-6mRmcISuYWJXGc)

<details>

<summary>samples</summary>

Trained on <b>256x256</b> face images. Distilled from the [webtoon face model](https://github.com/bryandlee/naver-webtoon-faces/blob/master/README.md#face2webtoon) using L2 + VGG + GAN losses on CelebA-HQ images. See `test_faces.ipynb` for details.

<img src="./samples/face_results.jpg" width="512">

</details>

**Face Portrait v1** [[ckpt]](https://drive.google.com/file/d/1WK5Mdt6mwlcsqCZMHkCUSDJxN1UyFi0-)

<details>

<summary>samples</summary>

Trained on <b>512x512</b> face images.

[Open in Colab](https://colab.research.google.com/drive/1jCqcKekdtKzW7cxiw_bjbbfLsPh-dEds?usp=sharing)

[📺](https://youtu.be/CbMfI-HNCzw?t=317)

</details>

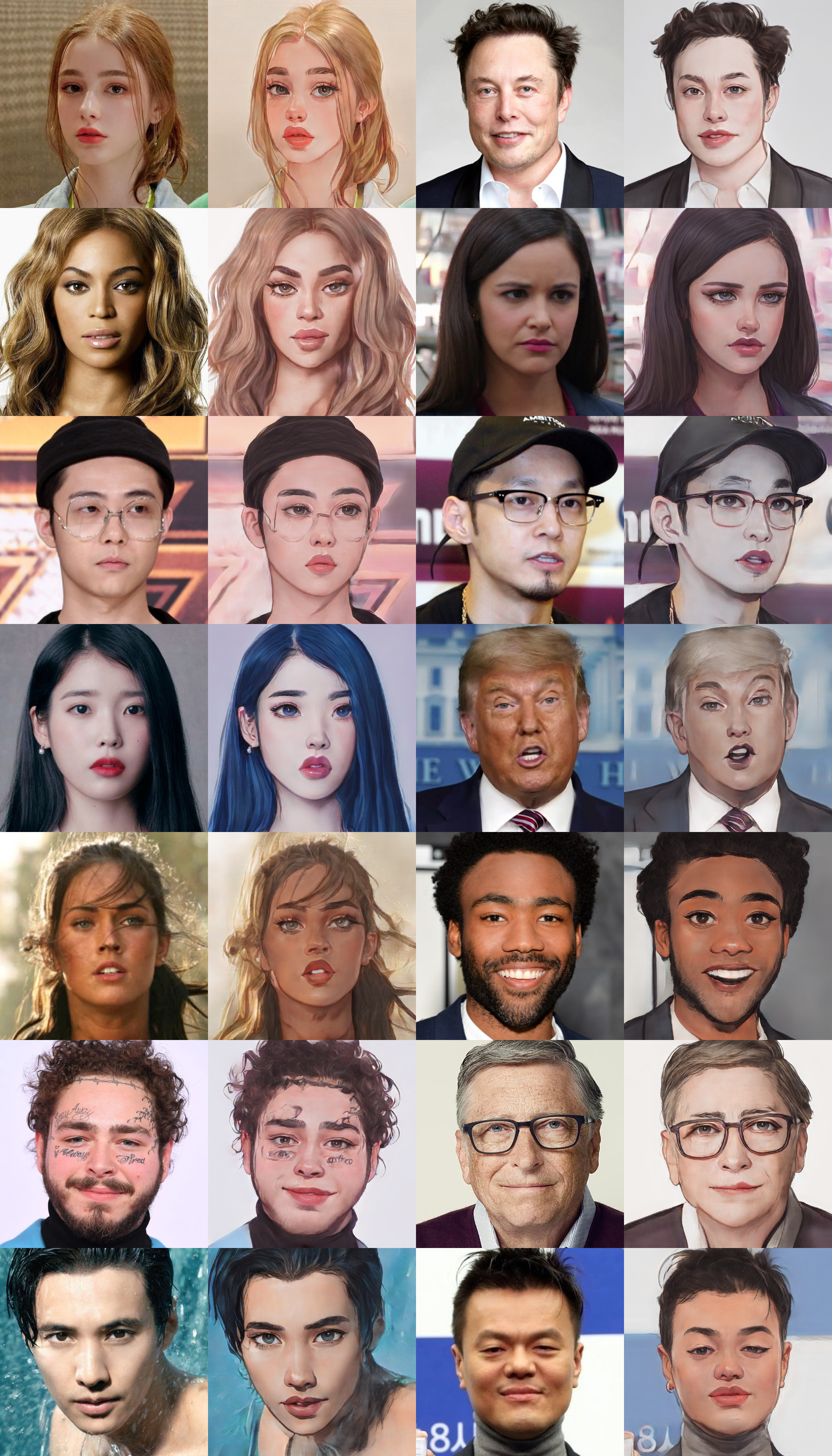

**Face Portrait v2** [[ckpt]](https://drive.google.com/uc?id=18H3iK09_d54qEDoWIc82SyWB2xun4gjU)

<details>

<summary>samples</summary>

Trained on <b>512x512</b> face images. Compared to v1, `🔻beautify` `🔺robustness`

[Open in Colab](https://colab.research.google.com/drive/1jCqcKekdtKzW7cxiw_bjbbfLsPh-dEds?usp=sharing)

🦑 🎮 🔥

</details>

## Torch Hub Usage

You can load AnimeGANv2 via `torch.hub`:

```python
import torch

model = torch.hub.load('bryandlee/animegan2-pytorch', 'generator').eval()
# convert your image into tensor here
out = model(img_tensor)
```
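The "convert your image into tensor" step is left open in the snippet above. A minimal sketch of one way to do it follows, assuming the generator expects a batched `1x3xHxW` float tensor scaled to `[-1, 1]` and returns output in the same range. That scaling is an assumption to verify against the repository code, and the helper names `to_model_input` and `to_uint8_image` are hypothetical. A random image and a pass-through stand in for a real photo and the generator so that the sketch runs without downloading weights.

```python
import torch


def to_model_input(image_uint8: torch.Tensor) -> torch.Tensor:
    """Convert an HxWx3 uint8 image tensor to a 1x3xHxW float tensor in [-1, 1]."""
    x = image_uint8.permute(2, 0, 1).float() / 255.0  # HWC -> CHW, scale to [0, 1]
    return (x * 2.0 - 1.0).unsqueeze(0)               # shift to [-1, 1], add batch dim


def to_uint8_image(output: torch.Tensor) -> torch.Tensor:
    """Convert a 1x3xHxW float tensor in [-1, 1] back to an HxWx3 uint8 image tensor."""
    x = (output.squeeze(0) * 0.5 + 0.5).clamp(0.0, 1.0)  # back to [0, 1]
    return (x * 255.0).round().to(torch.uint8).permute(1, 2, 0)


# Stand-in data so the sketch runs without reading a file.
dummy_image = torch.randint(0, 256, (64, 64, 3), dtype=torch.uint8)
img_tensor = to_model_input(dummy_image)

with torch.no_grad():
    out = img_tensor  # replace with `model(img_tensor)` once the generator is loaded

restored = to_uint8_image(out)
```

With a real photo, `dummy_image` could be replaced by something like `torch.from_numpy(numpy.array(pil_image.convert("RGB")))`, and `restored.numpy()` can be passed to `PIL.Image.fromarray` to save the result.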

You can load with various configs (more details in [the torch docs](https://pytorch.org/docs/stable/hub.html)):

```python
model = torch.hub.load(
    "bryandlee/animegan2-pytorch:main",
    "generator",
    pretrained=True,  # or give URL to a pretrained model
    device="cuda",    # or "cpu" if you don't have a GPU
    progress=True,    # show progress
)
```

Currently, the following `pretrained` shorthands are available:

```python
model = torch.hub.load("bryandlee/animegan2-pytorch:main", "generator", pretrained="celeba_distill")
model = torch.hub.load("bryandlee/animegan2-pytorch:main", "generator", pretrained="face_paint_512_v1")
model = torch.hub.load("bryandlee/animegan2-pytorch:main", "generator", pretrained="face_paint_512_v2")
model = torch.hub.load("bryandlee/animegan2-pytorch:main", "generator", pretrained="paprika")
```
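If the style name comes from user input, it may help to check it before triggering a download. The following is a small sketch of such a wrapper. `load_generator` and `PRETRAINED_STYLES` are hypothetical names that are not part of the repository, and the tuple simply mirrors the shorthands listed above.

```python
# Shorthand names copied from the list above.
PRETRAINED_STYLES = ("celeba_distill", "face_paint_512_v1", "face_paint_512_v2", "paprika")


def load_generator(style: str, device: str = "cpu"):
    """Load the generator for one of the known shorthands (hypothetical helper)."""
    if style not in PRETRAINED_STYLES:
        raise ValueError(f"Unknown style {style!r}; expected one of {PRETRAINED_STYLES}")
    import torch  # imported lazily so the name check works without PyTorch installed

    model = torch.hub.load(
        "bryandlee/animegan2-pytorch:main",
        "generator",
        pretrained=style,
        device=device,
    )
    return model.eval()
```

For example, `load_generator("paprika", device="cuda")` would fetch the Paprika weights, while a misspelled name fails immediately with a `ValueError` instead of a network error.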

You can also load the `face2paint` util function. First, install dependencies:

```
pip install torchvision Pillow numpy
```

Then, import the function using `torch.hub`:

```python
import torch
from PIL import Image

# `model` is a generator loaded with torch.hub as shown earlier
face2paint = torch.hub.load(
    'bryandlee/animegan2-pytorch:main', 'face2paint',
    size=512, device="cpu"
)

img = Image.open(...).convert("RGB")
out = face2paint(model, img)
```
