
A COMPREHENSIVE OVERVIEW AND SURVEY OF RECENT ADVANCES IN META-LEARNING

A PREPRINT

Huimin Peng∗

hpeng2@ncsu.edu

penghuimin@inspur.com

flyingphoen2008@hotmail.com

April 30, 2020

ABSTRACT

This article reviews meta-learning, which seeks rapid and accurate model adaptation to unseen tasks, with applications in image classification, natural language processing and robotics. Unlike deep learning, meta-learning uses few-shot datasets and is concerned with further improving model generalization to obtain higher prediction accuracy. We summarize meta-learning models in three categories: black-box adaptation, similarity-based methods and the meta-learner procedure. Recent applications concentrate upon combining meta-learning with Bayesian deep learning and reinforcement learning to provide feasible integrated problem solutions. We present a performance comparison of recent meta-learning methods and discuss future research directions.

Keywords Meta-Learning · Few-Shot Learning · Meta-Reinforcement · Meta-Imitation · Meta-Learner

1 Introduction

1.1 Background

Meta-learning, also known as learning to learn, refers to models that adapt rapidly and precisely to unseen tasks [1]. In [2], meta-learning and transfer learning are regarded as synonyms. Learning to learn has gained attention under the research topic of continual learning. Similar to lifelong learning, which accumulates knowledge and builds one model applicable to all tasks, meta-learning aims to develop a general framework that can be used in a wide variety of tasks.

One of the most important applications of meta-learning is few-shot learning [3, 4, 5, 6, 7, 8, 9, 10, 11]. In image classification, few-shot learning refers to tasks with fewer than ten images in each category. Humans can grasp new concepts from a few demonstrations and use past knowledge to identify the category of a new object efficiently. The goal of few-shot learning is to develop human-like machines that classify quickly and accurately from only a few images. For ancient languages with only a few observations, and for dialects spoken by only a small group of people, we resort to few-shot meta-learning for fast and accurate predictive modeling.

Meta-learning models how we learn. By learning how to learn, meta-learning introduces a flexible framework that applies to different tasks. For example, Model-Agnostic Meta-Learning (MAML) [12] is applicable to all tasks that can be solved with gradient descent. In addition, starting from pre-trained deep models, meta-learning adapts to new tasks rapidly without loss of precision [13]. In meta-learning, both reinforcement learning [14] and neural networks [15] can be applied to search for an optimizer autonomously. In both cases, a general representation of the optimizer search space should be defined explicitly.

Recent meta-learning research focuses upon integration with other frameworks, such as meta-reinforcement learning [16, 17, 18, 19, 20, 21, 22, 23] and meta-imitation learning [24, 11], which are closely associated with robotics research.

∗ Thank you for all helpful comments! Feel free to leave a message about comments on this manuscript. I will update the manuscript on a weekly basis. In case I did not receive your email, my personal emails are flyingphoen2008@hotmail.com and 974630998@qq.com.

arXiv:2004.11149v2 [cs.LG] 29 Apr 2020


Reinforcement learning estimates optimal actions based upon a given policy and environment [25]. Imitation learning evaluates the reward function by observing the behavior of another agent in the same environment [26]. Few-shot learning helps an agent make predictions from only a few demonstrations by other agents [12]. Recent applications of meta-learning integrate reinforcement learning, imitation learning and few-shot meta-learning so that robots can learn basic skills and react to rare situations [27].

A typical assumption behind meta-learning is that tasks share a similarity structure, so that they can be solved under the same meta-learning framework. To relax this assumption and improve model generalization, one proposal is to integrate statistical models into the meta-learning framework. Statistical models are less prone to over-fitting and are robust to model misspecification. Machine learning models are data-driven, highly integrated and applicable to real-life problems. Under the framework of meta-learning, we can combine statistical models and machine learning for fast and accurate adaptation.

The structure of this article is as follows. Section 1.2 presents the history of meta-learning research. Section 1.3 provides an outline of datasets and formulation. Section 2 summarizes meta-learning models. Section 2.4 surveys Bayesian meta-learning methods. Section 3.1 briefly reviews meta-reinforcement learning and section 3.2 surveys meta-imitation learning. Section 3.3 briefly introduces online meta-learning and section 3.4 reviews methods in unsupervised meta-learning. Section 3 and section 4 summarize the main applications of meta-learning and discuss future research directions.

1.2 History

Earlier research on meta-learning concerns hyper-parameter optimization, as in [28, 29, 30, 31, 32, 33, 34, 35, 36, 37, 38, 39]. In [29], a neural net is trained simultaneously on distinct but related tasks in order to improve generalization. In [30], differentiation of a cross-validation loss function is used to optimize several hyper-parameters simultaneously. The combination of meta-learning and reinforcement learning dates back to [16], in which meta-learning is introduced to tune hyper-parameters such as the learning rate, the exploration-exploitation tradeoff and the discount factor of future reward.

In addition, meta-learning can be applied to autonomous model selection [40, 41, 36, 42], such as neural architecture optimization [32, 34, 43, 17, 44]. In [40], meta-learning is applied to ranking and clustering, where algorithms are trained on meta-samples and the model with the highest prediction accuracy is selected. [41] considers a feedforward neural network, a decision tree or a support vector machine as the learner model, and selects the class of models with the best performance on time series forecasting. In [36], meta-learning is applied to select parameters in a support vector machine (SVM), which demonstrates superior generalization ability in data modeling. Multi-objective particle swarm optimization is integrated with meta-learning to solve parameter selection.

Another research line is based upon learning how to learn by finding the optimal optimizer. [14] considers all first-order and second-order optimization methods under the framework of meta-imitation learning and minimizes the distance between predicted and target actions. Since a neural net can approximate any function, the search space of optimizers is defined to be the set of all neural nets. The policy update in each iteration can be approximated using neural nets, where weight parameters are estimated jointly with step direction and step size. By learning the optimizer autonomously, algorithms converge faster and outperform gradient descent in most iterations.

Meta-learning concentrates upon model adaptation between tasks that share a similarity structure. For out-of-distribution tasks, we can extract the most similar experience from a large memory and build predictive models based upon the few data collected in a new situation. Classification of recent meta-learning methods is not exact, since models have become more flexible and integrated in recent development. Still, a classification lets us roughly outline recent research directions, which can later be combined. Recent meta-learning methodology can be categorized into three classes: model-based, metric-based and optimization-based methods, as in [45]. MANN (memory-augmented neural networks) [46] belongs to the model-based category. It stores all model training history in an external memory and loads the most relevant model parameters from external memory every time a new task is presented. Second, the convolutional Siamese neural network [3] is within the metric-based category. A metric refers to the similarity between tasks. The Siamese network designs a metric that measures the similarity between convolutional features of different images. Matching networks [4], relation network [8] and prototypical network [6] are all metric-based methods.
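As an illustration of the metric-based idea, the following minimal sketch classifies a query by its distance to per-class mean embeddings, in the spirit of prototypical networks. It assumes the embeddings are already given as plain lists of floats; in the cited methods they would be produced by a trained network. The function names and the toy data are hypothetical.

```python
import math


def class_prototypes(support):
    """Average the embedding vectors of each class in the support set.

    `support` maps a class label to a list of embedding vectors.
    """
    prototypes = {}
    for label, vectors in support.items():
        dim = len(vectors[0])
        prototypes[label] = [
            sum(v[d] for v in vectors) / len(vectors) for d in range(dim)
        ]
    return prototypes


def classify(query, prototypes):
    """Return the label whose prototype is closest in Euclidean distance."""
    return min(prototypes, key=lambda label: math.dist(query, prototypes[label]))


# Toy 2-way 2-shot example with hand-made 2-D "embeddings" (assumed data).
support = {
    "cat": [[0.0, 0.0], [0.2, 0.0]],
    "dog": [[1.0, 1.0], [1.0, 0.8]],
}
prototypes = class_prototypes(support)
print(classify([0.1, 0.1], prototypes))
print(classify([0.9, 0.9], prototypes))
```

The metric here is a fixed Euclidean distance; methods such as the relation network learn the similarity function instead.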

Optimization-based techniques include a learner for model estimation at task level and a meta-learner for model generalization across tasks. In [5], a meta-learner updates the parameters of the learner on different batches of training data and validation data. For learners optimized with gradient descent, the meta-learner can correspondingly be specified as a long short-term memory (LSTM) model [47]. MAML (Model-Agnostic Meta-Learning), proposed in [12], does not impose any model assumption and is applicable to any learner model optimized with gradient descent. The first-order meta-learning algorithm in [48] is also optimization-based: each iterative update moves the parameters by the difference between the previous estimate and the new sample average estimate.
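The learner and meta-learner split can be sketched on a toy problem. This is a simplified illustration under stated assumptions, not the exact algorithm of [48]: each task is a scalar quadratic loss (theta - a)^2 with a task-specific optimum a, the learner runs a few gradient steps, and the meta-learner moves theta by a fraction of the difference between the average adapted parameter and the previous estimate. All names and constants are hypothetical.

```python
def inner_adapt(theta, target, steps=5, lr=0.1):
    """Task-level learner: gradient descent on the toy loss (theta - target)**2."""
    for _ in range(steps):
        grad = 2.0 * (theta - target)
        theta -= lr * grad
    return theta


def meta_train(task_targets, theta=0.0, meta_lr=0.5, iterations=50):
    """Meta-learner: first-order update toward the average adapted parameter."""
    for _ in range(iterations):
        adapted = [inner_adapt(theta, target) for target in task_targets]
        average = sum(adapted) / len(adapted)
        # Move theta by a fraction of (new average estimate - previous estimate).
        theta += meta_lr * (average - theta)
    return theta


# Three toy tasks whose optima are 1.0, 2.0 and 3.0 (assumed values).
meta_theta = meta_train([1.0, 2.0, 3.0])
print(meta_theta)
```

On this toy problem the meta-parameter approaches the mean of the task optima, so a few inner steps from it reach any of the three tasks quickly. No second-order derivatives are needed, which is what distinguishes this kind of update from full MAML.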


From another perspective, meta-learning can be formulated under the probabilistic framework of Bayesian inference. In [49], a Bayesian generative model is combined with a deep Siamese convolutional network to classify hand-written characters. In [50], a Bayesian extension of MAML is proposed, where gradient descent in MAML is replaced with Stein variational gradient descent (SVGD). SVGD offers an efficient combination of MCMC and variational inference. In [9], an amortization network is used to map training data onto the weights of a linear classifier. The amortization network is also used to map input data to a task-specific stochastic parameter for further sampling. It uses end-to-end stochastic training to compute approximate posterior distributions of task-specific parameters in the meta-learner and of labels on new tasks.

Recent applications concentrate upon robotics, where meta-imitation learning [26, 24, 11, 27] and meta-reinforcement learning [51, 52, 53, 18, 54, 55, 21, 56] are of primary interest. Human beings can learn basic movements from a few demonstrations, and researchers hope robots can do the same through meta-imitation learning. Imitation of action, reward and policy is achieved by minimizing a regret function that measures the distance between the current state and the imitation target. In [26], MAML for one-shot imitation learning is proposed. Minimizing the cloning loss makes the robot closely mimic the target action. The method estimates a policy function that maps visual inputs to actions. [11] also integrates MAML into one-shot imitation learning. It collects one human demonstration video and one robot demonstration video for robots to imitate. The objective is to minimize the behavioral cloning loss with inner MAML parameter adaptation. It also considers domain adaptation, with generalization to different objects or environments in the imitation task.

Meta-reinforcement learning (meta-RL) is designed for RL tasks, such as reward-driven situations with sparse reward, sequential decisions and a clear task definition [19]. RL considers the interaction between agent and environment through policy and reward. By maximizing reward, robots select an optimal sequence of decisions. In robotics, meta-RL is applied in cases where robots need to react rapidly to rare situations based upon previous experience. [52] provides an overview of meta-RL models in multi-bandit problems. Meta-learned RL models demonstrate better performance than RL models trained from scratch. [21] constructs a highly integrated meta-RL method, PEARL, which combines variational inference and latent context embedding in off-policy meta-RL. In addition, reward-driven neural activity in animals can be explained with meta-RL. In [19], phasic dopamine (DA) release is viewed as reward, and meta-RL explains well how DA regulates animal behavior in response to a changing environment in animal experiments.

Besides meta-RL and meta-imitation learning, meta-learning can be flexibly combined with machine learning models for applications in real-life problems. For example, unsupervised meta-learning conducts rapid model adaptation using unlabelled data. Online meta-learning analyzes streaming data and performs real-time model adaptation. First, unsupervised meta-learning [57, 18, 10, 58, 59, 60] is for modelling unlabelled data. Unsupervised clustering methods such as adversarially constrained autoencoder interpolation (ACAI) [61], bidirectional GAN (BiGAN) [61], DeepCluster [62] and InfoGAN [63] are applied to cluster data and estimate data labels [59]. Afterwards, meta-learning methods are applied to the unlabelled data together with the predicted labels obtained through unsupervised clustering. It is mentioned in [59] that unsupervised meta-learning may perform better than supervised meta-learning. Another combination of unsupervised learning and meta-learning is in [60]. It replaces the supervised parameter update in the inner loop with an unsupervised update using unlabelled data. The meta-learner in the outer loop applies supervised learning on labelled data to update the unsupervised weight update rule. [60] demonstrates that this combination generalizes better.

Second, online meta-learning analyzes streaming data, so the model should respond rapidly to changing conditions using a small batch of data in each model adaptation [64, 55, 65]. [64] proposes a Bayesian online learning model, ALPaCA, where kernel-based Gaussian process (GP) regression is performed on the last layer of a neural network for fast adaptation. It trains an offline model to estimate GP regression parameters, which stay fixed through all online model adaptations. [55] applies MAML to continually update the task-specific parameter in the prior distribution so that the Bayesian online model adapts rapidly to streaming data. [65] integrates MAML into the online algorithm follow the leader (FTL) and creates an online meta-learning method, follow the meta-leader (FTML). MAML updates meta-parameters, which are inputs to FTL, and this integrated online algorithm generalizes better than previously developed methods.

Meta-learning algorithms are hybrid and flexible, and can be combined with machine learning models such as Bayesian deep learning, RL, imitation learning, online algorithms, unsupervised learning and graph models. In these combinations, meta-learning adds a model generalization module to existing machine learning methods.

1.3 Datasets and Formulation

Few-shot datasets used as benchmarks for performance comparison in the meta-learning literature are reviewed in [66]. Many meta-datasets are available at https://github.com/google-research/meta-dataset. Commonly used meta-learning datasets are briefly listed as follows.


• Omniglot [67] is available at https://github.com/brendenlake/omniglot. Omniglot is a large dataset of hand-written characters with 1623 characters and 20 examples for each character. These characters are collected from 50 alphabets from different countries. It contains both image and stroke data. Stroke data are coordinates with time in milliseconds.

• ImageNet [68] is available at http://www.image-net.org/. ImageNet contains 14 million images in 22 thousand classes. The Large Scale Visual Recognition Challenge 2012 (ILSVRC2012) dataset is a subset of ImageNet. It contains 1,281,167 images and labels in training data, 50,000 images and labels in validation data, and 100,000 images in testing data.

• miniImageNet [4, 69] is a subset of ILSVRC2012. It contains 60,000 images of size 84 × 84. There are 100 classes and 600 images within each class. [70] splits 64 classes as training data, 16 as validation data, and 20 as testing data.

• tieredImageNet [70] is also a subset of ILSVRC2012 with 34 classes and 10-30 sub-classes within each. It splits 20 classes as training data, 6 as validation data and 8 as testing data.

• CIFAR-10/CIFAR-100 [71, 72] is available at https://www.cs.toronto.edu/~kriz/cifar.html. CIFAR-10 contains 60,000 colored images of size 32 × 32. There are 10 classes, each containing 6,000 images. CIFAR-100 contains 100 classes, each including 600 images. CIFAR-FS [73] is randomly sampled from CIFAR-100 for few-shot learning by the same mechanism as miniImageNet. FC100 [72] is also a few-shot subset of CIFAR-100. Since CIFAR-100 has 20 superclasses in total, it splits 12 superclasses as training data, 4 superclasses as validation data and 4 superclasses as testing data.

• Penn Treebank (PTB) [74] is available at https://catalog.ldc.upenn.edu/LDC99T42. PTB is a large dataset of over 4.5 million American English words with part-of-speech (POS) annotations. Over half of all words have been given syntactic tags. It is used for sentiment analysis and classification of words, sentences and documents.

• CUB-200 [66, 75] is available at http://www.vision.caltech.edu/visipedia/CUB-200.html. CUB-200 is an annotated image dataset that contains 200 bird species, a rough image segmentation and image attributes.

• CelebA (CelebFaces Attributes Dataset) is available at http://mmlab.ie.cuhk.edu.hk/projects/CelebA.html. CelebA is an open-source facial image dataset that contains 200,000 images, each with 40 attributes including identities, locations and facial expressions.

• YouTube Faces database is available at https://www.cs.tau.ac.il/~wolf/ytfaces/. YouTube Faces contains 3,425 face videos of 1,595 different individuals. The number of frames in each video clip varies from 48 to 6,070.

Among these datasets, miniImageNet [4, 69], tieredImageNet [70] and CelebA are the most difficult few-shot classification datasets. They are used to compare the performance of meta-learning methods.

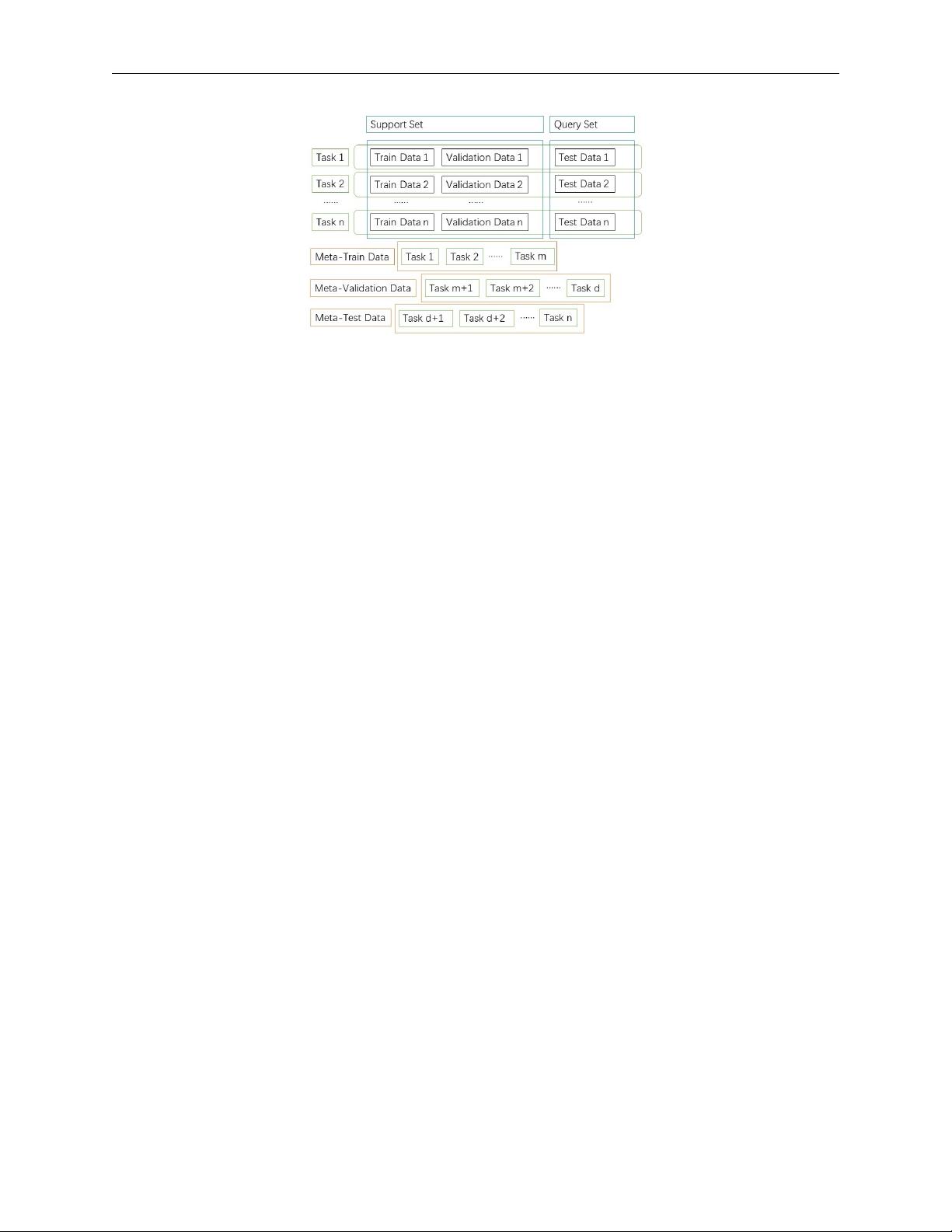

Dataset concepts used in meta-learning are outlined in figure 1. Within each task, there are train data $D_{\mathrm{tr}}$, validation data $D_{\mathrm{val}}$ and test data $D_{\mathrm{test}}$. The support set $S$ is the set of all labelled data. Train data and validation data are randomly sampled from the support set. The query set $Q$ is the set of all unlabelled data, and test data are randomly sampled from the query set. Meta-learning datasets include non-overlapping meta-train data $D_{\mathrm{meta-train}}$, meta-validation data $D_{\mathrm{meta-val}}$ and meta-test data $D_{\mathrm{meta-test}}$, each of which consists of tasks.

In supervised meta-learning, the input is labelled data $(\mathbf{x}, y)$, where $\mathbf{x}$ is an image or a feature embedding vector and $y$ is the label. The data model is $y = h_{\theta}(\mathbf{x})$, parameterized by the meta-parameter $\theta$. As in [25], a task is defined as

$$\mathcal{T} = \{p(\mathbf{x}),\ p(y \mid \mathbf{x}),\ \mathcal{L}\},$$

where $\mathcal{L}$ is a loss function, and $p(\mathbf{x})$ and $p(y \mid \mathbf{x})$ are the data-generating distributions of inputs and labels. A task follows a task distribution $\mathcal{T} \sim p(\mathcal{T})$. K-shot N-class learning is a typical problem setting in few-shot meta-learning, where there are N classes, each with K examples.
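The K-shot N-class setting can be made concrete with a small episode sampler. This is a minimal sketch under stated assumptions: the dataset is a plain dictionary from class label to a list of examples, and the function name `sample_episode` and its parameters are hypothetical. The query examples keep their labels here only so that predictions can be scored; the model would not see them during adaptation.

```python
import random


def sample_episode(data_by_class, n_way, k_shot, n_query, rng):
    """Sample one N-class K-shot task: a support set and a query set."""
    classes = rng.sample(sorted(data_by_class), n_way)
    support, query = [], []
    for label in classes:
        # Draw K support and n_query query examples without overlap.
        examples = rng.sample(data_by_class[label], k_shot + n_query)
        support += [(x, label) for x in examples[:k_shot]]
        query += [(x, label) for x in examples[k_shot:]]
    return support, query


# Hypothetical toy dataset: 10 classes with 20 integer "examples" each.
data = {f"class_{c}": list(range(c * 100, c * 100 + 20)) for c in range(10)}
rng = random.Random(0)
support, query = sample_episode(data, n_way=5, k_shot=1, n_query=3, rng=rng)
print(len(support), len(query))  # 5 15
```

Calling the sampler repeatedly on disjoint sets of classes would yield the tasks that make up the meta-train, meta-validation and meta-test data.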

2 Meta-learning

For fast and accurate adaptation to unseen tasks with meta-learning, we need to balance exploration and exploitation. In exploration, we define a complete model search space which covers all algorithms for the task. In exploitation, we optimize over the search space, identify the optimal learner and estimate the learner parameters. For example, the learning-optimizers method proposed in [14, 76] defines an extensive search space of optimizers for model exploration. In [77], mean average precision, defined as the precision of the predicted similarity, is proposed as a loss function for model exploitation.

Figure 1: Upper part shows data in each task: train data, validation data and test data. Lower part shows meta-train data, meta-validation data and meta-test data, each of which consists of tasks. Support set is the set of all labelled data. Query set is the set of all unlabelled data.

On the other hand, meta-learning models can combine offline deep learning and online algorithms. In offline modeling, we aggregate past experience by training a deep model on large historical datasets. In online algorithms, we continually adapt the deep offline model to conduct predictive analysis on few-shot datasets from novel tasks. For instance, the memory-based meta-learning model in [78] stores offline training results in memory so that they can be retrieved efficiently in online model adaptation. Online Bayesian regression in [64] uses offline training results to initialize task-specific parameters in prior distributions and updates these parameters continually for rapid adaptation to online streaming data. Based upon pre-trained deep learning models, meta-learning methods adapt to new tasks efficiently.

A typical assumption behind meta-learning is that tasks share a similarity structure, so that model generalization between tasks can be performed efficiently. The degree of similarity between tasks depends upon the similarity function, which often constitutes the meta-learning objective function. Reliable adaptation between different tasks relies upon identifying the similarity structure between them. In meta-learning research, primary interest lies in relaxing the requirements upon the degree of similarity between tasks and improving model adaptivity.

In this section, we briefly summarize the meta-learning frameworks that have emerged in recent literature into three categories: black-box adaptation [25], similarity-based approach, and learner and meta-learner procedure. This classification of meta-learning frameworks is not exact, and the boundaries between classes are vague. It roughly points out the research directions of meta-learning methods.

2.1 Black-Box Adaptation

Hyperparameter optimization can be achieved through random grid search or manual search [37]. The model search space is usually indexed by hyperparameters [14]. In adaptation to novel tasks, hyperparameters are re-optimized using data from the novel task. Optimizers can be approximated with neural networks or reinforcement learners [76]. Neural networks can approximate any function with good convergence results. By using neural networks, the optimizer search space represents a wide range of functions and is therefore more likely to contain better optima.
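The simplest search strategy mentioned above, random search over a hyperparameter space, can be sketched as follows. This is an illustrative example rather than the procedure of any cited work: the search space, the toy validation loss and all names are assumptions made for the sketch.

```python
import random


def random_search(loss_fn, space, trials, rng):
    """Sample configurations uniformly from `space` and keep the best one."""
    best_config, best_loss = None, float("inf")
    for _ in range(trials):
        config = {name: rng.uniform(lo, hi) for name, (lo, hi) in space.items()}
        loss = loss_fn(config)
        if loss < best_loss:
            best_config, best_loss = config, loss
    return best_config, best_loss


def toy_validation_loss(config):
    """Stand-in for a validation loss; its minimum is at (0.1, 0.01)."""
    return (config["learning_rate"] - 0.1) ** 2 + (config["weight_decay"] - 0.01) ** 2


# Each hyperparameter is indexed by a (low, high) range (assumed values).
space = {"learning_rate": (0.0, 1.0), "weight_decay": (0.0, 0.1)}
best_config, best_loss = random_search(
    toy_validation_loss, space, trials=200, rng=random.Random(0)
)
print(best_config, best_loss)
```

When adapting to a novel task, the same search would be rerun with the validation loss computed on data from that task.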

In [14], optimization is through guided policy search, and a neural network is used to model the policy, which plays the role of the gradient descent update. The policy update is formulated as

$$\Delta x \leftarrow \pi(f, \{x_0, \cdots, x_{i-1}\}) = -\gamma \sum_{j=0}^{i-1} \alpha^{j} \nabla_{x_j} f(x_j),$$

where $f$ is a neural network, $\gamma$ is the step size and $\alpha$ is the discount factor. In this case, the policy update is approximated with a neural network and is continually adapted using task data.
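To make the update formula concrete, the sketch below evaluates it literally for scalar gradients, reading the discount factor as raised to the power j. It only computes the hand-written form of the update; in [14] the policy is learned by a neural network rather than fixed. The function name and the numbers are hypothetical.

```python
def policy_update(grads, step_size, discount):
    """Return delta_x = -step_size * sum_j discount**j * grads[j].

    `grads[j]` stands for the gradient of f evaluated at the iterate x_j.
    """
    return -step_size * sum(discount ** j * g for j, g in enumerate(grads))


# Gradient history at x_0, x_1, x_2 (assumed values).
delta = policy_update([4.0, 2.0, 1.0], step_size=0.1, discount=0.5)
print(delta)  # -0.1 * (4.0 + 0.5 * 2.0 + 0.25 * 1.0)
```

A learned optimizer replaces this fixed weighted sum with the output of a network that takes the same gradient history as input.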

Another approach is the adaptation of a pre-trained neural network from an offline deep model to unseen tasks, as in figure 2. In a deep neural network, weights and activations are highly correlated, so we can use a few parameters to predict the others. In unseen tasks, we estimate a few parameters rapidly and use the pre-trained predictive mapping to estimate the output directly. [79] proposes a feedforward pass that maps activations to parameters in the last layer of a pre-trained deep neural network. It applies to few-shot learning where the number of categories is large and the sample size per category is small.
