Perceptual Losses for Real-Time Style Transfer and Super-Resolution

Justin Johnson, Alexandre Alahi, Li Fei-Fei

{jcjohns, alahi, feifeili}@cs.stanford.edu

Department of Computer Science, Stanford University

Abstract. We consider image transformation problems, where an input
image is transformed into an output image. Recent methods for such
problems typically train feed-forward convolutional neural networks
using a per-pixel loss between the output and ground-truth images.
Parallel work has shown that high-quality images can be generated by
defining and optimizing perceptual loss functions based on high-level
features extracted from pretrained networks. We combine the benefits of
both approaches and propose the use of perceptual loss functions for
training feed-forward networks for image transformation tasks. We show
results on image style transfer, where a feed-forward network is trained
to solve the optimization problem proposed by Gatys et al. in real time.
Compared to the optimization-based method, our network gives similar
qualitative results but is three orders of magnitude faster. We also
experiment with single-image super-resolution, where replacing a
per-pixel loss with a perceptual loss gives visually pleasing results.

Keywords: Style transfer, super-resolution, deep learning

1 Introduction

Many classic problems can be framed as image transformation tasks, where
a system receives some input image and transforms it into an output
image. Examples from image processing include denoising,
super-resolution, and colorization, where the input is a degraded image
(noisy, low-resolution, or grayscale) and the output is a high-quality
color image. Examples from computer vision include semantic segmentation
and depth estimation, where the input is a color image and the output
image encodes semantic or geometric information about the scene.

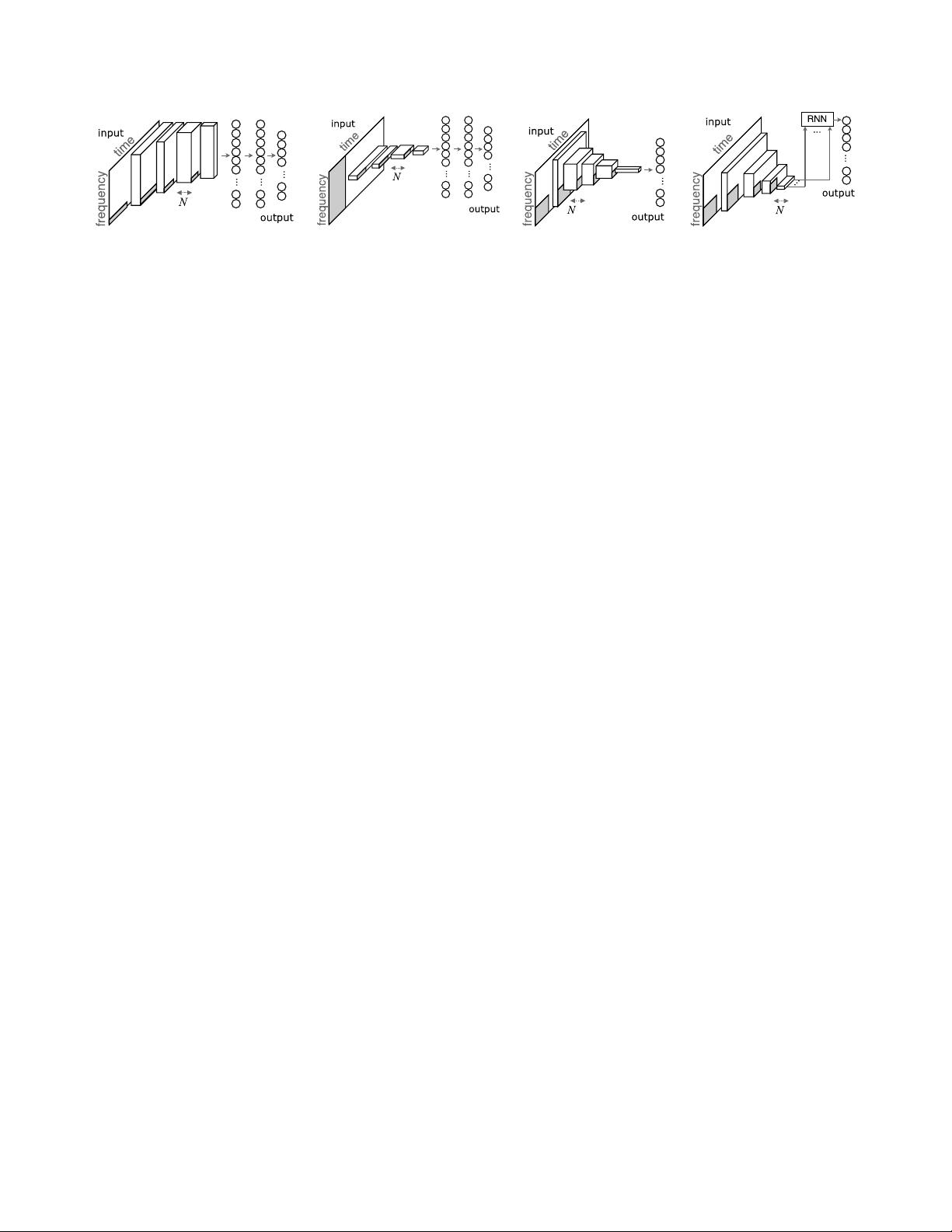

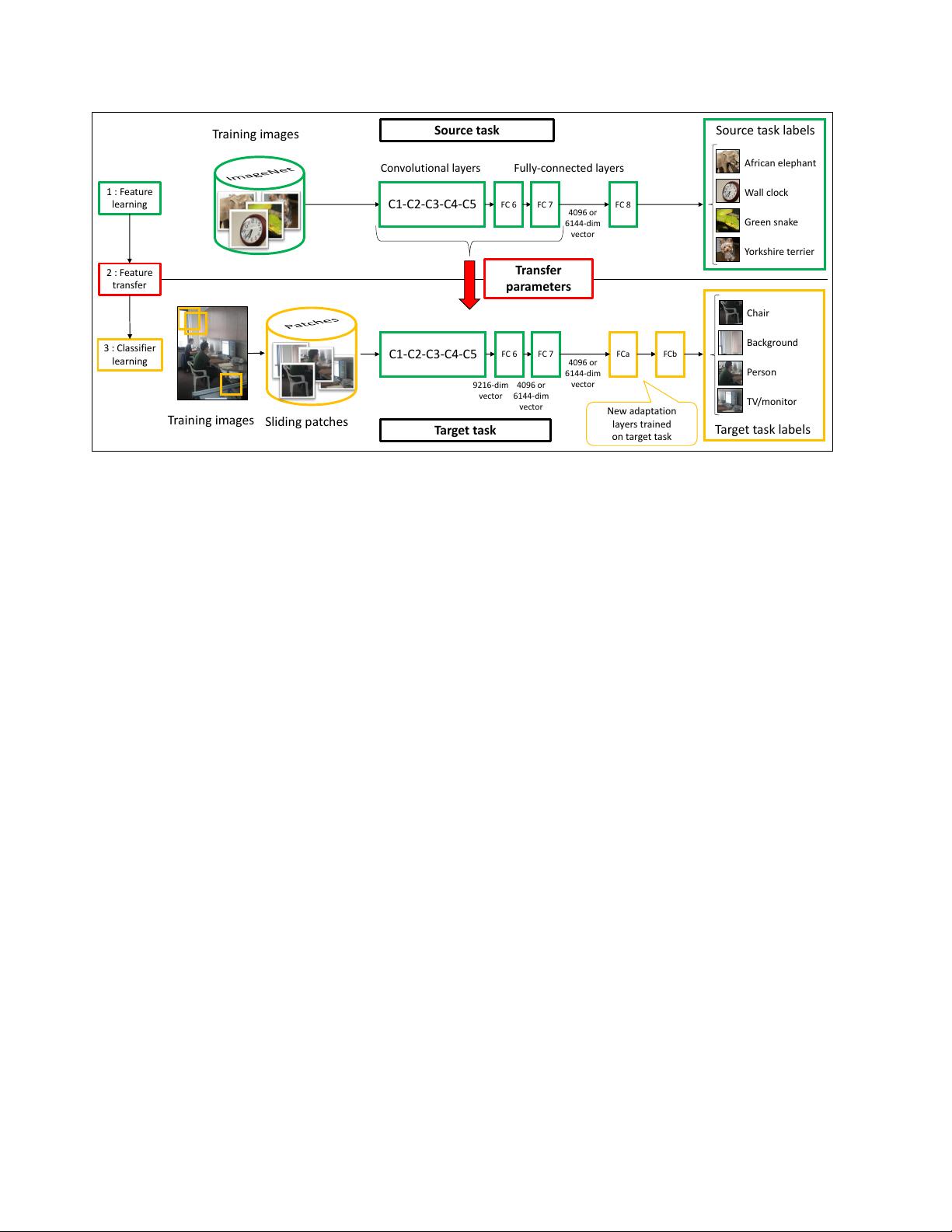

One approach for solving image transformation tasks is to train a feed-

forward convolutional neural network in a supervised manner, using a per-pixel

loss function to measure the difference between output and ground-truth images.

This approach has been used for example by Dong et al for super-resolution [1],

by Cheng et al for colorization [2], by Long et al for segmentation [3], and by

Eigen et al for depth and surface normal prediction [4,5]. Such approaches are

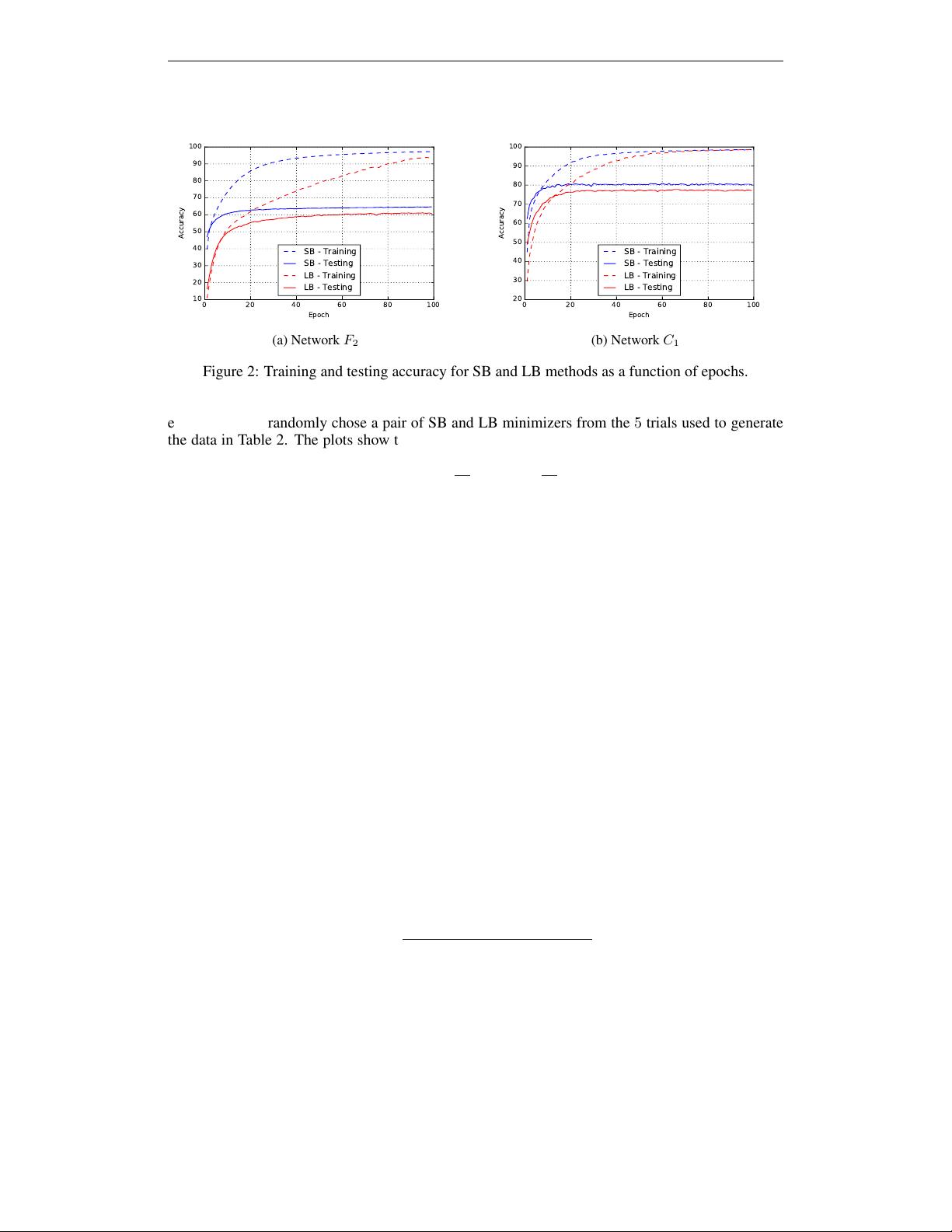

efficient at test-time, requiring only a forward pass through the trained network.
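To make the notion of a per-pixel loss concrete, the following is a minimal sketch in plain Python. It assumes mean squared error as the per-pixel loss, which is one common choice; the cited methods may use other per-pixel losses. The function name `per_pixel_mse` and the tiny 2x2 images are hypothetical and serve only as illustration.

```python
def per_pixel_mse(output, target):
    """Mean squared error averaged over every pixel of two same-sized images.

    Images are nested lists of floats (rows of pixel intensities).
    This is an illustrative sketch, not the training loss of any cited method.
    """
    same_rows = len(output) == len(target)
    if not same_rows or any(len(o) != len(t) for o, t in zip(output, target)):
        raise ValueError("images must have the same shape")

    total = 0.0
    count = 0
    for out_row, tgt_row in zip(output, target):
        for o, t in zip(out_row, tgt_row):
            # Each pixel contributes independently of its neighbors.
            total += (o - t) ** 2
            count += 1

    if count == 0:
        raise ValueError("images must contain at least one pixel")
    return total / count


# Hypothetical 2x2 grayscale images with intensities in [0, 1].
output = [[0.0, 0.5], [1.0, 0.5]]
target = [[0.0, 1.0], [1.0, 0.0]]
loss = per_pixel_mse(output, target)  # (0 + 0.25 + 0 + 0.25) / 4 = 0.125
```

Because every pixel is compared only with the pixel at the same location, the loss carries no information about image structure beyond individual intensity differences.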

However, the per-pixel losses used by these methods do not capture
perceptual differences between output and ground-truth images. For
example, consider two

arXiv:1603.08155v1 [cs.CV] 27 Mar 2016