Good Features to Track

Jianbo Shi

Computer Science Department

Cornell University

Ithaca, NY 14853

Carlo Tomasi

Computer Science Department

Stanford University

Stanford, CA 94305

Abstract

No feature-based vision system can work unless good features can be identified and tracked from frame to frame. Although tracking itself is by and large a solved problem, selecting features that can be tracked well and correspond to physical points in the world is still hard. We propose a feature selection criterion that is optimal by construction because it is based on how the tracker works, and a feature monitoring method that can detect occlusions, disocclusions, and features that do not correspond to points in the world. These methods are based on a new tracking algorithm that extends previous Newton-Raphson style search methods to work under affine image transformations. We test performance with several simulations and experiments.

1 Introduction

Is feature tracking a solved problem? The extensive studies of image correlation [4], [3], [15], [18], [7], [17] and sum-of-squared-difference (SSD) methods [2], [1] show that all the basics are in place. With small inter-frame displacements, a window can be tracked by optimizing some matching criterion with respect to translation [10], [1] and linear image deformation [6], [8], [11], possibly with adaptive window size [14]. Feature windows can be selected based on some measure of texturedness or cornerness, such as a high standard deviation in the spatial intensity profile [13], the presence of zero crossings of the Laplacian of the image intensity [12], and corners [9], [5]. Yet, even a region rich in texture can be poor. For instance, it can straddle a depth discontinuity or the boundary of a reflection highlight on a glossy surface. In either case, the window is not attached to a fixed point in the world, making that feature useless or even harmful to most structure-from-motion algorithms. Furthermore, even good features can become occluded, and trackers often blissfully drift away from their original target when this occurs. No feature-based vision system can be claimed to really work until these issues have been settled.
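To make the idea of tracking a window "by optimizing some matching criterion with respect to translation" concrete, the following minimal sketch scores integer displacements of a square window by SSD and keeps the best one. It uses exhaustive search, not the Newton-Raphson style iteration discussed in this paper. The function names, the list-of-rows image representation, and the `max_shift` parameter are assumptions made for illustration only.

```python
def ssd(image_a, image_b, top, left, dy, dx, size):
    """Sum of squared differences between a window in image_a and the
    same-size window in image_b displaced by (dy, dx)."""
    total = 0.0
    for r in range(size):
        for c in range(size):
            diff = image_b[top + dy + r][left + dx + c] - image_a[top + r][left + c]
            total += diff * diff
    return total


def track_translation(image_a, image_b, top, left, size, max_shift):
    """Return the integer (dy, dx) within +/-max_shift that minimizes SSD.

    Hypothetical helper: exhaustive search, not a Newton-Raphson iteration.
    Displacements that would push the window outside image_b are skipped.
    """
    rows, cols = len(image_b), len(image_b[0])
    best = None
    for dy in range(-max_shift, max_shift + 1):
        for dx in range(-max_shift, max_shift + 1):
            inside = (0 <= top + dy and top + dy + size <= rows
                      and 0 <= left + dx and left + dx + size <= cols)
            if not inside:
                continue
            score = ssd(image_a, image_b, top, left, dy, dx, size)
            if best is None or score < best[0]:
                best = (score, dy, dx)
    if best is None:
        raise ValueError("window does not fit inside image_b for any shift")
    return best[1], best[2]
```

Exhaustive search only recovers whole-pixel displacements and its cost grows quadratically with `max_shift`, which is one reason gradient-based searches are attractive when inter-frame displacements are small.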

In this paper we show how to monitor the quality of

image features during tracking by using

a

measure of

feature

dissimilarity

that quantifies the change of ap-

pearance of a feature between the first and the current

frame. The idea is straightforward: dissimilarity is the

feature’s rms residue between the first and the current

frame, and when dissimilarity grows too large the fea-

ture should be abandoned. However, in this paper we

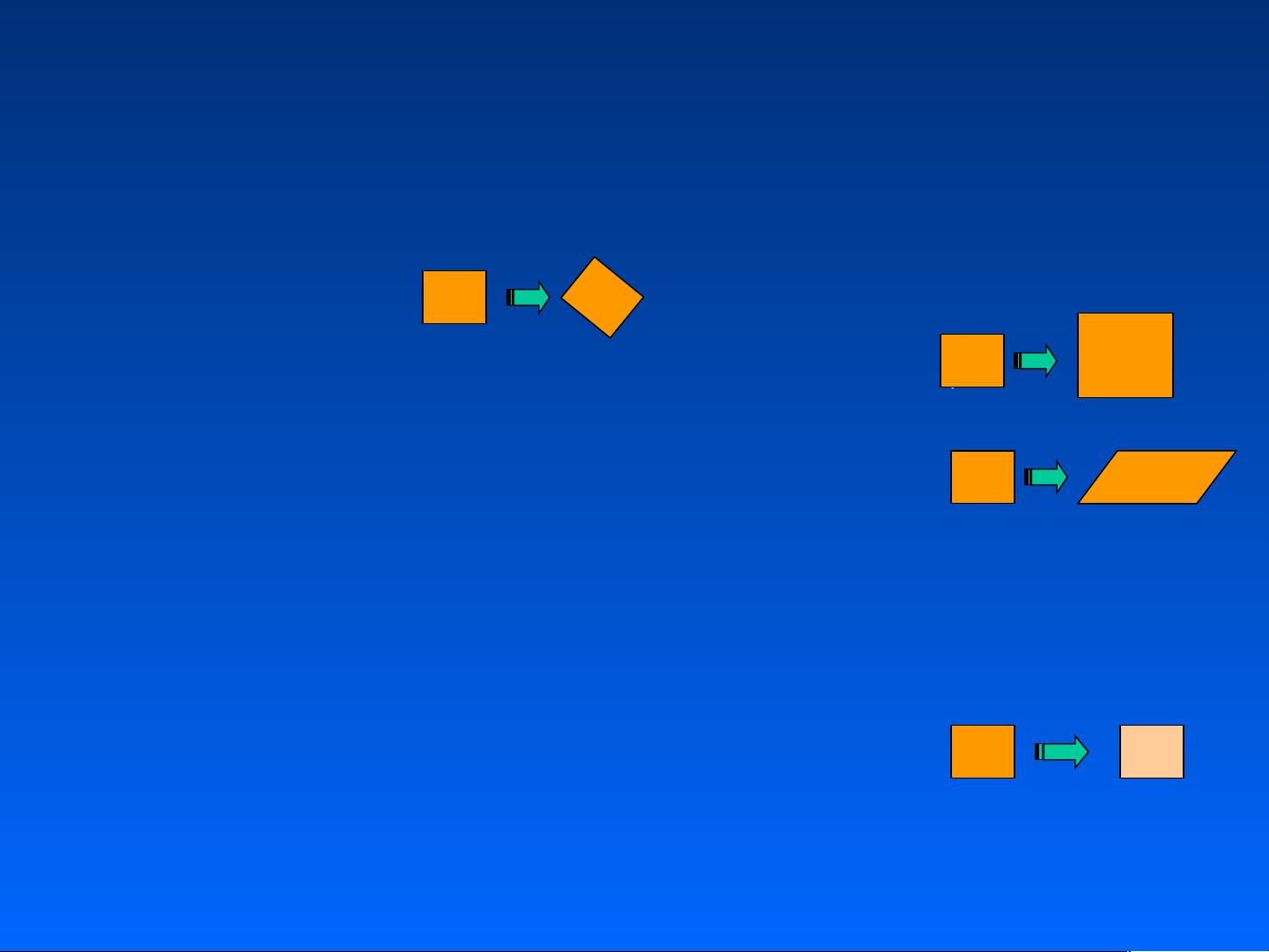

make two main contributions to this problem. First,

we provide experimental evidence that pure transla-

tion is not an adequate model for image motion when

measuring dissimilarity, but affine image changes, that

is, linear warping and translation, are adequate. Sec-

ond, we propose

a

numerically sound and efficient way

of determining affine changes by

a

Newton-Raphson

stile minimization procedure, in the style of what Lu-

cas and Kanade

[lo]

do for the pure translation model.
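The rms residue described above can be sketched as follows. This is a minimal illustration, assuming the two windows have already been brought into registration (for example by an estimated affine warp) and are given as equally sized lists of rows. The function names and the `threshold` parameter are hypothetical, and no particular threshold value is implied by the text.

```python
import math


def rms_residue(first_window, current_window):
    """Root-mean-square intensity difference between two registered windows."""
    if len(first_window) != len(current_window):
        raise ValueError("windows must have the same number of rows")
    total = 0.0
    count = 0
    for row_a, row_b in zip(first_window, current_window):
        if len(row_a) != len(row_b):
            raise ValueError("windows must have the same number of columns")
        for a, b in zip(row_a, row_b):
            total += (a - b) ** 2
            count += 1
    if count == 0:
        raise ValueError("windows must not be empty")
    return math.sqrt(total / count)


def should_abandon(first_window, current_window, threshold):
    """Flag a feature whose dissimilarity exceeds an assumed threshold."""
    return rms_residue(first_window, current_window) > threshold
```

Comparing against the first frame, not the previous one, is what lets slow drift accumulate into a detectable residue.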

In addition, we propose a more principled way to select features than the more traditional “interest” or “cornerness” measures. Specifically, we show that features with good texture properties can be defined by optimizing the tracker’s accuracy. In other words, the right features are exactly those that make the tracker work best. Finally, we submit that using two models of image motion is better than using one. In fact, translation gives more reliable results than affine changes when the inter-frame camera translation is small, but affine changes are necessary to compare distant frames to determine dissimilarity. We define these two models in the next section.
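As a rough preview of the difference between the two models, the sketch below maps a pixel coordinate under pure translation and under an affine change (a linear warp followed by a translation). The names `translate`, `affine`, `A`, and `d` are assumptions chosen for this illustration and need not match the formal definitions that follow.

```python
def translate(point, d):
    """Pure translation: every point of the window moves by the same offset d."""
    x, y = point
    return (x + d[0], y + d[1])


def affine(point, A, d):
    """Affine change: apply the 2x2 linear warp A, then translate by d."""
    x, y = point
    return (A[0][0] * x + A[0][1] * y + d[0],
            A[1][0] * x + A[1][1] * y + d[1])
```

With `A` equal to the identity matrix the affine map reduces to pure translation, so the translation model is the special case with no linear warping.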

2 Two Models of Image Motion

This research was supported by the National Science Foundation under contract IRI-9201751.

1063-6919/94 $3.00 © 1994 IEEE

As the camera moves, the patterns of image intensities change in a complex way. However, away from
