712 IEEE TRANSACTIONS ON EVOLUTIONARY COMPUTATION, VOL. 11, NO. 6, DECEMBER 2007

MOEA/D: A Multiobjective Evolutionary Algorithm

Based on Decomposition

Qingfu Zhang, Senior Member, IEEE, and Hui Li

Abstract—Decomposition is a basic strategy in traditional mul-

tiobjective optimization. However, it has not yet been widely used

in multiobjective evolutionary optimization. This paper proposes

a multiobjective evolutionary algorithm based on decomposition

(MOEA/D). It decomposes a multiobjective optimization problem

into a number of scalar optimization subproblems and optimizes

them simultaneously. Each subproblem is optimized by only

using information from its several neighboring subproblems,

which makes MOEA/D have lower computational complexity at

each generation than MOGLS and nondominated sorting genetic

algorithm II (NSGA-II). Experimental results have demonstrated

that MOEA/D with simple decomposition methods outperforms

or performs similarly to MOGLS and NSGA-II on multiobjective

0–1 knapsack problems and continuous multiobjective optimiza-

tion problems. It has been shown that MOEA/D using objective

normalization can deal with disparately-scaled objectives, and

MOEA/D with an advanced decomposition method can generate

a set of very evenly distributed solutions for 3-objective test

instances. The ability of MOEA/D with small population, the scal-

ability and sensitivity of MOEA/D have also been experimentally

investigated in this paper.

Index Terms—Computational complexity, decomposition, evolu-

tionary algorithm, multiobjective optimization, Pareto optimality.

I. INTRODUCTION

A

multiobjective optimization problem (MOP) can be stated

as follows:

(1)

where

is the decision (variable) space, consists

of

real-valued objective functions and is called the ob-

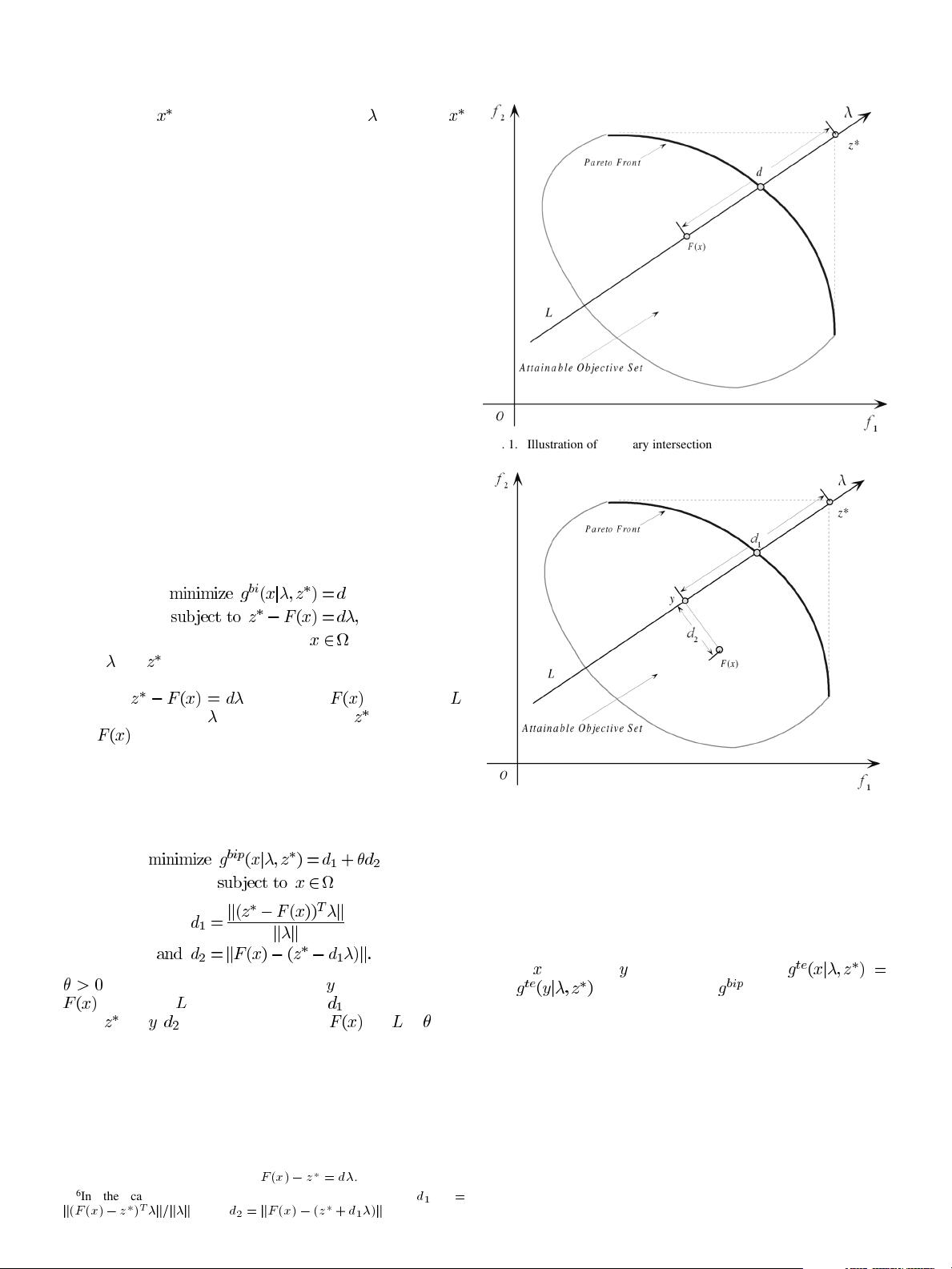

jective space. The attainable objective set is defined as the set

.

If

, all the objectives are continuous and is de-

scribed by

where are continuous functions, we call (1) a continuous

MOP.

Very often, since the objectives in (1) contradict each other,

no point in

maximizes all the objectives simultaneously. One

has to balance them. The best tradeoffs among the objectives

can be defined in terms of Pareto optimality.

Manuscript received September 4, 2006; revised November 9, 2006.

The authors are with the Department of Computer Science, University

of Essex, Wivenhoe Park, Colchester, CO4 3SQ, U.K. (e-mail: qzhang@

essex.ac.uk; hlil@essex.ac.uk).

Digital Object Identifier 10.1109/TEVC.2007.892759

Let

, is said to dominate if and only if

for every and for at least one index

.

1

A point

is Pareto optimal to (1) if

there is no point

such that dominates .

is then called a Pareto optimal (objective) vector. In other words,

any improvement in a Pareto optimal point in one objective must

lead to deterioration in at least one other objective. The set of

all the Pareto optimal points is called the Pareto set (PS) and the

set of all the Pareto optimal objective vectors is the Pareto front

(PF) [1].

In many real-life applications of multiobjective optimization,

an approximation to the PF is required by a decision maker

for selecting a final preferred solution. Most MOPs may have

many or even infinite Pareto optimal vectors. It is very time-con-

suming, if not impossible, to obtain the complete PF. On the

other hand, the decision maker may not be interested in having

an unduly large number of Pareto optimal vectors to deal with

due to overflow of information. Therefore, many multiobjec-

tive optimization algorithms are to find a manageable number

of Pareto optimal vectors which are evenly distributed along the

PF, and thus good representatives of the entire PF [1]–[4]. Some

researchers have also made an effort to approximate the PF by

using a mathematical model [5]–[8].

It is well-known that a Pareto optimal solution to a MOP,

under mild conditions, could be an optimal solution of a scalar

optimization problem in which the objective is an aggregation of

all the

’s. Therefore, approximation of the PF can be decom-

posed into a number of scalar objective optimization subprob-

lems. This is a basic idea behind many traditional mathemat-

ical programming methods for approximating the PF. Several

methods for constructing aggregation functions can be found in

the literature (e.g., [1]). The most popular ones among them in-

clude the weighted sum approach and Tchebycheff approach.

Recently, the boundary intersection methods have also attracted

a lot of attention [9]–[11].

There is no decomposition involved in the majority of the

current state-of-the-art multiobjective evolutionary algorithms

(MOEAs) [2]–[4], [12]–[19]. These algorithms treat a MOP as

a whole. They do not associate each individual solution with

any particular scalar optimization problem. In a scalar objective

optimization problem, all the solutions can be compared based

on their objective function values and the task of a scalar ob-

jective evolutionary algorithm (EA) is often to find one single

optimal solution. In MOPs, however, domination does not

define a complete ordering among the solutions in the objective

space and MOEAs aim at producing a number of Pareto op-

timal solutions as diverse as possible for representing the whole

PF. Therefore, conventional selection operators, which were

1

This definition of domination is for maximization. All the inequalities should

be reversed if the goal is to minimize the objectives in (1). “Dominate” means

“be better than.”

1089-778X/$25.00 © 2007 IEEE